Advanced Outlier Detection in Catalysis Research: A Comprehensive Guide for Data Integrity and Drug Discovery

Abstract

This article provides a detailed guide for researchers, scientists, and drug development professionals on identifying and managing outliers in catalyst performance data. Covering foundational concepts to advanced methodologies, the content explores why outliers occur, demonstrates statistical and machine learning techniques for detection, offers troubleshooting strategies for noisy datasets, and validates methods against synthetic and real-world benchmarks. The aim is to ensure data integrity, improve model accuracy, and accelerate the discovery of robust catalysts for pharmaceutical and biomedical applications.

What Are Outliers in Catalyst Data? Defining Signals, Errors, and Hidden Discoveries

The Critical Importance of Outlier Detection in Catalysis R&D

Technical Support Center: Troubleshooting Outliers in Catalyst Performance Data

Troubleshooting Guides & FAQs

Q1: During high-throughput screening of heterogeneous catalysts, we observe a few data points with conversion rates >99.9% that deviate dramatically from the mean (~70%). Are these breakthrough discoveries or experimental artifacts? A: First, treat these as potential artifacts. Follow this protocol:

- Re-run the experiment for the specific outlier catalyst formulation in triplicate under identical conditions.

- Review synthesis logs: Cross-check the precursor weights and synthesis steps (e.g., calcination temperature/time) for that specific sample against the workflow standard.

- Characterization Check: Perform rapid EDX or XPS on the specific pellet used in the test to confirm its composition (e.g., to rule out contamination or incorrect metal loading).

- Instrument Diagnostic: Run a standard calibration catalyst before and after the outlier sample sequence to confirm reactor GC/MS fidelity.

Q2: Our PCA-based model for catalyst lifetime prediction is heavily skewed by a handful of outliers. How do we diagnose whether they are informative or noise? A: Use a robust, multi-step diagnostic:

- Isolate: Apply an Isolation Forest algorithm to the feature set (e.g., metal dispersion, acidity, reaction conditions).

- Correlate: Manually investigate if all flagged data points share a common, undocumented experimental variable (e.g., a specific reactor port, a new batch of support material).

- Decision Protocol: If a technical root cause is found, correct and re-run. If no cause is found, but the outlier represents a novel, stable intermetallic phase, it may be scientifically informative. Flag it for a dedicated follow-up study.
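The isolate-and-correlate steps above can be sketched with scikit-learn's `IsolationForest`, followed by a per-port flag rate. This is a minimal illustration on synthetic data, not a validated pipeline. The column names, the injected anomalies, and `contamination=0.1` are assumptions chosen for the example.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import IsolationForest


def flag_isolation_forest(df, feature_cols, contamination=0.1, seed=0):
    """Mark rows that an IsolationForest labels as outliers (True = flagged)."""
    model = IsolationForest(contamination=contamination, random_state=seed)
    labels = model.fit_predict(df[feature_cols])  # -1 = outlier, 1 = inlier
    return pd.Series(labels == -1, index=df.index, name="flagged")


def flag_rate_by(df, flagged, meta_col):
    """Fraction of flagged runs for each level of an experimental variable."""
    return flagged.groupby(df[meta_col]).mean().sort_values(ascending=False)


# Hypothetical data: 60 runs, three numeric features, and a reactor-port label.
rng = np.random.default_rng(42)
df = pd.DataFrame({
    "dispersion": rng.normal(0.4, 0.05, 60),
    "acidity": rng.normal(1.0, 0.1, 60),
    "temperature": rng.normal(523.0, 5.0, 60),
    "reactor_port": np.tile(["A", "B", "C"], 20),
})
# Inject three anomalous runs, all on port "C", to mimic an undocumented shared cause.
df.loc[[2, 5, 8], ["dispersion", "acidity"]] = np.array([[0.90, 2.0], [0.95, 2.1], [0.85, 1.9]])

flagged = flag_isolation_forest(df, ["dispersion", "acidity", "temperature"])
print(flag_rate_by(df, flagged, "reactor_port"))
```

If the flag rate is concentrated on one port or one support batch, that points to a technical root cause rather than a discovery. Note that `contamination` fixes the fraction of runs that get flagged, so it should be set from domain knowledge rather than left at an arbitrary value.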

Q3: In operando spectroscopy data, we see sporadic spikes in a by-product signal. How do we determine if this is a real catalytic event or a measurement glitch? A: This requires temporal correlation across data streams.

- Synchronize Data: Align timelines for MS, Raman, and temperature/pressure logs.

- Cross-Reference: Check if the spike correlates with a simultaneous perturbation in another signal (e.g., a temperature fluctuation) or is isolated to one spectrometer.

- Control Check: Review video log (if available) of the reactor window for bubble formation or particle movement at the exact timestamp, which can scatter light.
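The cross-reference step can be sketched as follows, assuming the MS and temperature logs have already been resampled onto a common timebase. Spikes are detected against a rolling median using a MAD-based scale. Each MS spike is then checked for a temperature excursion within a small lag. The window, threshold, and lag values, and the synthetic signals, are illustrative assumptions.

```python
import numpy as np


def robust_spikes(signal, window=11, threshold=5.0):
    """Indices where a signal departs from its rolling median by > threshold robust SDs.

    window should be odd so the rolling median has one value per sample.
    """
    x = np.asarray(signal, dtype=float)
    half = window // 2
    padded = np.pad(x, half, mode="edge")
    windows = np.lib.stride_tricks.sliding_window_view(padded, window)  # one row per sample
    resid = x - np.median(windows, axis=1)
    mad = np.median(np.abs(resid - np.median(resid)))
    scale = 1.4826 * mad if mad > 0 else resid.std()  # 1.4826 * MAD ~ SD for Gaussian noise
    return np.flatnonzero(np.abs(resid) > threshold * scale)


def classify_spikes(ms_signal, temperature, max_lag=2, **kwargs):
    """Label each MS spike by whether a temperature excursion occurs within max_lag samples."""
    ms_idx = robust_spikes(ms_signal, **kwargs)
    t_idx = robust_spikes(temperature, **kwargs)
    labels = {}
    for i in ms_idx:
        near = t_idx.size > 0 and np.any(np.abs(t_idx - i) <= max_lag)
        labels[int(i)] = "co-occurs with T excursion" if near else "isolated to MS"
    return labels


# Hypothetical streams already on one common timebase (the "synchronize" step).
rng = np.random.default_rng(1)
n = 200
ms = 1.0 + 0.01 * rng.standard_normal(n)
temp = 550.0 + 0.1 * rng.standard_normal(n)
ms[50] += 0.5    # by-product spike accompanied by a temperature excursion
temp[51] += 5.0
ms[120] += 0.5   # by-product spike with no matching change in temperature
print(classify_spikes(ms, temp))
```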

Q4: What is the most effective method to establish a statistically valid threshold for identifying outliers in catalyst turnover frequency (TOF) datasets? A: Do not rely solely on standard deviation. Use a tiered approach:

- Visualize: Create a box plot (showing median, quartiles) and a Q-Q plot to assess normality.

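- Calculate: Compute the Median Absolute Deviation, MAD = median(|TOF_i - median(TOF)|), a robust measure of spread that is far less sensitive to outliers than the standard deviation.

- Outlier if the modified Z-score exceeds 3.5:

$$M_i = \frac{0.6745\,\bigl(\mathrm{TOF}_i - \operatorname{median}(\mathrm{TOF})\bigr)}{\mathrm{MAD}}, \qquad |M_i| > 3.5$$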

- Domain-Context: Apply a process knowledge limit. For example, if thermodynamic equilibrium conversion for the reaction is 85%, any data point claiming 95% is a definitive outlier requiring investigation.
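The tiered rule can be sketched in a few lines of NumPy. This sketch uses the standard Iglewicz and Hoaglin form of the modified Z-score. That form scales the deviation by 0.6745 so that the 3.5 cutoff is comparable to a Z-score for normal data. The conversion values and the 85% equilibrium limit are hypothetical, echoing the domain-context example above.

```python
import numpy as np


def modified_z(values):
    """Modified Z-score: 0.6745 * (x - median) / MAD."""
    x = np.asarray(values, dtype=float)
    med = np.median(x)
    mad = np.median(np.abs(x - med))
    if mad == 0:
        raise ValueError("MAD is zero; the modified Z-score is undefined for these data.")
    return 0.6745 * (x - med) / mad


def tiered_flags(values, physical_max=None, z_threshold=3.5):
    """Return (statistical flag, domain-limit flag) as boolean arrays."""
    x = np.asarray(values, dtype=float)
    stat_flag = np.abs(modified_z(x)) > z_threshold
    if physical_max is None:
        domain_flag = np.zeros(x.size, dtype=bool)
    else:
        domain_flag = x > physical_max  # e.g., conversion above thermodynamic equilibrium
    return stat_flag, domain_flag


# Hypothetical conversions (%) for a reaction whose equilibrium conversion is 85%.
conversions = [70, 72, 69, 71, 68, 73, 95]
stat_flag, domain_flag = tiered_flags(conversions, physical_max=85)
print(stat_flag.tolist(), domain_flag.tolist())
```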

Key Experimental Protocols for Outlier Investigation

Protocol 1: Systematic Verification of an Outlier Catalyst Sample

- Resynthesis: Synthesize the catalyst formulation again from scratch, using the same declared protocol.

- Characterization Suite: Perform BET, XRD, CO Chemisorption, and TEM on both the original (if available) and resynthesized samples.

- Performance Re-test: Test both samples in the same reactor, using the same feedstock batch, with an internal standard.

- Compare: Use a pre-defined similarity metric (e.g., TOF within 15%, identical selectivity profile). Divergence confirms an artifact in the original synthesis or testing.
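A pre-defined similarity metric can be encoded so that the comparison criteria are fixed before the re-test results are seen. In this sketch, "identical selectivity profile" is interpreted as every product selectivity agreeing within 2 percentage points. That tolerance, the function name `samples_agree`, and the example values are assumptions.

```python
def samples_agree(tof_orig, tof_resyn, sel_orig, sel_resyn, tof_tol=0.15, sel_tol=2.0):
    """Hypothetical similarity check between the original and resynthesized samples.

    TOF must agree within tof_tol (relative to the resynthesized sample), and every
    product selectivity (in %) must agree within sel_tol percentage points.
    """
    tof_ok = abs(tof_orig - tof_resyn) / tof_resyn <= tof_tol
    products = set(sel_orig) | set(sel_resyn)
    sel_ok = all(abs(sel_orig.get(p, 0.0) - sel_resyn.get(p, 0.0)) <= sel_tol for p in products)
    return tof_ok and sel_ok


# Hypothetical re-test: TOF within 10% and selectivities within 1 point, so the samples agree.
print(samples_agree(1.10, 1.00, {"ketone": 80.0, "alcohol": 20.0}, {"ketone": 79.0, "alcohol": 21.0}))
```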

Protocol 2: Identifying Temporal Drifts in High-Throughput Experimentation (HTE)

- Insert Standards: Include a reference catalyst at fixed positions (e.g., every 10th well) in your HTE library plate.

- Monitor Control Metrics: Track the conversion/selectivity of these reference spots across the entire experimental run sequence.

- Statistical Process Control (SPC): Apply a control chart (individuals-moving range chart) to the reference catalyst performance.

- Action: If a reference point falls outside the SPC control limits (e.g., 3σ), halt and investigate all catalysts tested in that temporal block for instrument-related outliers.
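The control-chart step can be sketched with the textbook I-MR constants for moving ranges of two consecutive points (d₂ = 1.128, D₄ = 3.267). Limits are estimated from a baseline run in which the reference catalyst is assumed to be stable, then applied to later reference measurements. The example values are hypothetical.

```python
import numpy as np


def imr_limits(reference_values):
    """I-MR control limits from a stable baseline run of the reference catalyst."""
    x = np.asarray(reference_values, dtype=float)
    mr = np.abs(np.diff(x))             # moving ranges of span 2
    center, mr_bar = x.mean(), mr.mean()
    sigma_hat = mr_bar / 1.128          # d2 constant for n = 2
    return {
        "center": center,
        "lcl": center - 3 * sigma_hat,  # equivalent to center - 2.66 * mr_bar
        "ucl": center + 3 * sigma_hat,
        "mr_ucl": 3.267 * mr_bar,       # D4 constant, for the companion moving-range chart
    }


def out_of_control(new_values, limits):
    """Run-order positions where the reference catalyst falls outside the I-chart limits."""
    x = np.asarray(new_values, dtype=float)
    return np.flatnonzero((x < limits["lcl"]) | (x > limits["ucl"]))


baseline = [50.1, 49.8, 50.3, 50.0, 49.7, 50.2, 50.1, 49.9]  # reference conversion (%)
limits = imr_limits(baseline)
print(out_of_control([50.0, 49.9, 47.5, 50.2], limits))     # positions to investigate
```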

| Outlier Source Category | Typical Manifestation in Data | Suggested Diagnostic Action |
|---|---|---|
| Synthesis Artifact | Exceptionally high/low activity in one batch; unusual selectivity. | Review synthesis logs, repeat synthesis, characterize with surface probes. |
| Analytical Error | Spurious GC/MS peak; unrealistic mass balance (>101% or <95%). | Run calibration standards, check integrator settings, inspect chromatogram for co-elution. |
| Reactor Malfunction | Sudden step-change in conversion across multiple samples; pressure spikes. | Check thermocouples, mass flow controllers, valve sequencing logs. |
| Data Handling Error | Mislabeled sample leading to incorrect structure-property pairing. | Audit digital lab notebook entries and sample tracking IDs against raw data files. |
| True Scientific Discovery | Isolated, reproducible high-performance data point from a novel composition. | Reproduce, then conduct in-depth characterization and mechanistic study. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Outlier Investigation |
|---|---|
| Certified Reference Catalyst (e.g., EUROCAT Pt/SiO₂) | Provides a benchmark for instrument performance and cross-lab data validation. |
| Internal Standard Gases/Compounds (e.g., Neon in GC, deuterated solvents) | Detects and corrects for fluctuations in flow rates, injection volumes, or ionization efficiency. |
| Stable Isotope-Labeled Reactants (e.g., ¹³CO, CD₃OH) | Helps distinguish real reaction pathways from background contamination or side reactions. |
| High-Purity Calibration Gas Mixtures | Ensures accuracy of online mass spectrometers and gas chromatographs. |
| Digital Lab Notebook with Version Control | Tracks all experimental parameters and changes to enable root-cause analysis of outliers. |

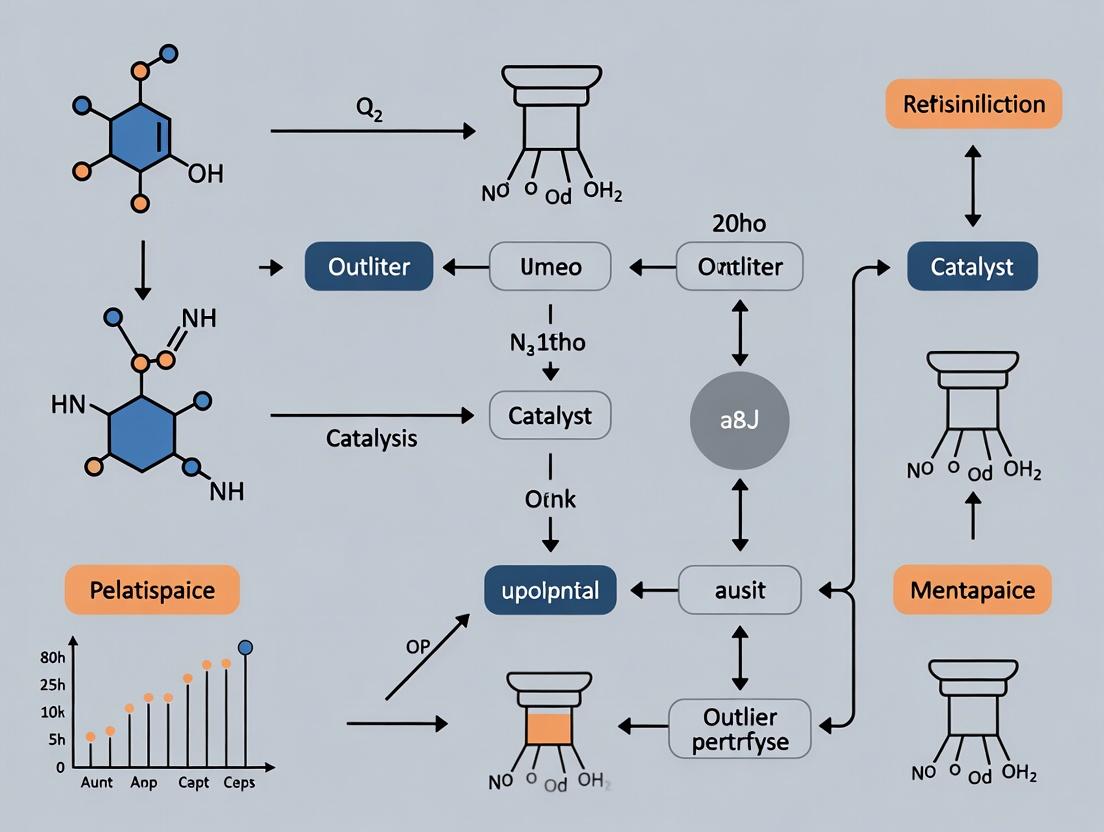

Visualizations

Decision Workflow for Catalyst Data Outliers

Cross-Correlation of Operando Data to Diagnose Spikes

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: Why am I observing a sudden, sharp spike in catalytic turnover frequency (TOF) in only one replicate of my hydrogenation reaction?

- Likely Cause: Experimental Artifact - Microliter-scale pipetting error of the catalyst stock solution.

- Diagnosis: Check your calibration records for the pipette used. Repeat the preparation of the catalyst stock solution and the reaction setup with a freshly calibrated pipette. For colored catalysts, compare the UV-Vis absorbance of the outlier stock solution against the others.

- Solution: Implement a protocol for regular pipette calibration (monthly for heavy use) and use reverse pipetting for viscous liquids. Whenever possible, prepare a single master stock solution of the catalyst.

FAQ 2: My catalyst's selectivity data shows an anomalous product distribution in batch 3 of 5. Is this a new catalytic pathway?

- Likely Cause: Experimental Artifact - Contaminated reactor vessel or impeller.

- Diagnosis: Visually inspect the reactor for scratches or pitting that could harbor contaminants. Run Energy Dispersive X-Ray Spectroscopy (EDS) on a swab of the reactor surface to detect trace metals from previous runs.

- Solution: Adopt a stringent reactor cleaning protocol: aqua regia wash (for glass-lined), followed by multiple solvent rinses (acetone, water) and oven drying. Use dedicated reactors for specific reaction classes.

FAQ 3: High-throughput screening (HTS) data for my catalyst library shows several "superstar" catalysts with performance >5 standard deviations from the mean. Are these genuine breakthroughs?

- Likely Cause: Requires differentiation. Could be artifacts from A) Air/moisture intrusion in specific wells, or B) Genuine discovery.

- Diagnosis:

  - For A: Review the robotic liquid handler logs for seal integrity errors during the plate preparation. Analyze adjacent wells for correlated outlier patterns suggesting a systematic fault.

  - For B: Statistically treat these as candidate outliers. Resynthesize and re-test the identified catalyst compositions manually in triplicate under inert atmosphere.

- Solution: Implement an Outlier Interrogation Protocol (OIP) before deeming a result genuine.

Experimental Protocol: Outlier Interrogation Protocol (OIP) for Catalyst Performance

Purpose: To systematically determine whether a statistical outlier in catalyst performance data (e.g., yield, TOF, selectivity) is an experimental artifact or a genuine phenomenon.

Materials: See the "Research Reagent Solutions" table.

Method:

1. Flag: Identify the outlier using a pre-defined statistical criterion (e.g., Grubbs' test, >3σ).

2. Audit: Immediately freeze all materials (catalyst batch, substrate batch, solvent lot) used in the outlier experiment. Audit all instrument logs and analyst notes.

3. Blind Re-test: A second researcher, blinded to the expected outlier result, repeats the experiment using the reserved materials under strict protocol.

4. Re-synthesis & Re-test: If Step 3 confirms the outlier, a fresh batch of catalyst is independently synthesized from precursor materials and the test is repeated.

5. Mechanistic Probe: If the outlier holds, design a follow-up experiment (e.g., in-situ spectroscopy, kinetic isotope effect) to probe for a distinct mechanistic pathway.

Conclusion: An outlier is classified as a "Genuine Phenomenon" only if it passes Steps 3 and 4 and shows supporting evidence in Step 5.

Data Presentation

Table 1: Frequency of Common Outlier Sources in Heterogeneous Catalyst Testing (Hypothetical Meta-Analysis)

| Source Category | Specific Artifact | Approximate Frequency in Screening Data | Typical Statistical Signature |
|---|---|---|---|
| Experimental Artifact | Micro-pipetting Error | 15-20% | Single, extreme high/low value, no correlation to covariates. |
| Experimental Artifact | Reactor Contamination | 10-15% | Clustered outliers in sequential runs, affects selectivity > activity. |
| Experimental Artifact | Faulty Temperature Probe | ~5% | Affects all samples in a batch, shifts mean, increases variance. |
| Genuine Phenomenon | Unintended Cooperative Catalysis | 1-3% | Correlated with specific catalyst composition combinations in libraries. |
| Genuine Phenomenon | In-situ Catalyst Reconstruction | 2-4% | Observable via paired characterization (e.g., XRD, XPS) on spent catalyst. |

Table 2: Key Statistical Tests for Outlier Classification in Catalyst Data

| Test Name | Data Type Best Suited For | Null Hypothesis (H₀) | Application in Catalyst Research |
|---|---|---|---|
| Grubbs' Test | Univariate, Normally Distributed | There are no outliers in the dataset. | Identifying a single high-TOF outlier in a set of replicate runs. |
| Dixon's Q Test | Small Sample Sizes (3-10) | The suspected point is not an outlier. | Checking a triplicate measurement where one yield is far off. |
| Chauvenet's Criterion | Univariate, Normally Distributed | The point is part of the expected distribution. | Pre-processing of catalyst performance datasets before PCA. |
| Generalized ESD Test | Univariate, Unknown Number of Outliers | There are no outliers in the dataset (alternative: up to k outliers). | Screening large catalyst libraries for multiple potential "hits". |
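The generalized ESD test (Rosner's procedure) can be sketched with SciPy's t-distribution quantiles. It tests H₀ (no outliers) against the alternative of up to k outliers. At each step it removes the most extreme point and computes a test statistic Rᵢ and a critical value λᵢ. The number of outliers is the largest step i at which Rᵢ exceeds λᵢ. The function name and the TOF values are hypothetical.

```python
import numpy as np
from scipy import stats


def generalized_esd(values, max_outliers, alpha=0.05):
    """Rosner's generalized ESD test; returns sorted indices of detected outliers."""
    x = np.asarray(values, dtype=float)
    n = x.size
    if not 1 <= max_outliers <= n - 2:
        raise ValueError("max_outliers must be between 1 and n - 2.")
    remaining = list(range(n))
    removed, n_outliers = [], 0
    for i in range(1, max_outliers + 1):
        sub = x[remaining]
        dev = np.abs(sub - sub.mean())
        j = int(np.argmax(dev))
        r_i = dev[j] / sub.std(ddof=1)                                  # test statistic R_i
        p = 1 - alpha / (2 * (n - i + 1))
        t = stats.t.ppf(p, n - i - 1)
        lam = (n - i) * t / np.sqrt((n - i - 1 + t**2) * (n - i + 1))  # critical value lambda_i
        removed.append(remaining.pop(j))
        if r_i > lam:
            n_outliers = i                                              # largest i with R_i > lambda_i
    return sorted(removed[:n_outliers])


# Hypothetical TOF screen of 20 catalysts with two candidate "hits" at the end.
tof = [10.2, 9.8, 10.1, 9.9, 10.0, 10.3, 9.7, 10.0, 10.1, 9.9,
       10.2, 9.8, 10.0, 10.1, 9.9, 10.0, 10.1, 9.9, 25.0, 31.0]
print(generalized_esd(tof, max_outliers=4))
```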

Visualization

Diagram 1: Outlier Investigation Decision Tree

Diagram 2: Common Artifact Pathways in Catalytic Testing

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Reliable Catalyst Testing

| Item/Category | Function & Importance for Outlier Prevention |
|---|---|
| Internal Standard (e.g., Dodecane for GC) | Added in known quantity before reaction. Normalizes for injection volume errors and sample loss, critical for identifying genuine yield/selectivity outliers. |
| Certified Reference Materials (CRMs) | Pure compounds with certified purity for instrument calibration (GC, HPLC, ICP-MS). Ensures analytical data validity, ruling out instrumental artifact outliers. |
| Deuterated Solvents (in sealed ampules) | For sensitive air/moisture catalyst systems. Prevents deactivation outliers caused by variable solvent quality. |
| Calibrated Microbalance & Pipettes | High-precision tools for accurate catalyst and substrate dispensing. Regular calibration records are essential to troubleshoot mass/volume-based artifacts. |
| In-situ Spectroscopy Cell (e.g., ATR-IR, UV-Vis) | Allows monitoring of catalyst and reaction intermediates in real-time. Provides mechanistic evidence to support a "genuine phenomenon" outlier. |
| Lab Information Management System (LIMS) | Tracks all material lots, instrument IDs, and protocol versions. Enables root-cause audit trails when an outlier is detected. |

Technical Support & Troubleshooting Center

Frequently Asked Questions (FAQs)

Q1: During a continuous flow reaction, my conversion data shows sudden, extreme spikes that then drop back to the baseline. What could cause this? A: This is a classic outlier often linked to catalyst channeling or hotspot formation. Temporary blockages can cause uneven flow, creating localized regions of high reactant concentration and excessive conversion. Check your reactor bed packing homogeneity and monitor system pressure drops. Pre-treat catalyst pellets to minimize fines and use inert diluent beads to improve flow distribution.

Q2: My selectivity metric for a desired product suddenly plummets in one data point, while by-product formation spikes. Is this an experimental error or a real phenomenon? A: It can be real. A primary cause is trace contaminant poisoning. Even ppb-levels of impurities (e.g., S, Cl, heavy metals) in the feed can selectively deactivate specific active sites, altering the product distribution. Implement rigorous feed purification (e.g., adsorbent traps, getters) and include internal standard checks in your GC/MS protocol to rule out analytical injection errors.

Q3: The calculated Turnover Frequency (TOF) for my homogeneous catalyst is orders of magnitude higher than theoretical limits. How should I troubleshoot this? A: Outliers in TOF often stem from incorrect active site quantification. TOF divides the rate by the number of active sites, so any undercount of sites inflates the TOF. For heterogeneous catalysts, the undercount could come from an inaccurate metal dispersion measurement (e.g., via TEM or chemisorption). For homogeneous catalysts, ensure complete catalyst activation before the reaction. Common culprits are incomplete pre-reduction and trace water/oxygen during the site-counting step. Both keep a fraction of the sites from being counted even though those sites are active under reaction conditions, which leads to an undercount of active sites and an inflated TOF.

Q4: My catalyst stability test shows a rapid, severe deactivation event (e.g., >50% activity drop) in a single measurement, but seems to recover partially. How do I diagnose this? A: This "step-change" outlier in stability data may indicate mechanical failure or thermal runaway. Inspect for catalyst attrition or washcoat peeling (in monolithic reactors). Review temperature logger data for any brief, undetected exotherms. Implement real-time, high-frequency monitoring (e.g., online mass spectrometry) to capture transient species that may cause temporary poisoning.

Q5: When analyzing data across multiple catalyst batches, how can I systematically distinguish between a true performance outlier and a batch synthesis defect? A: Establish a Quality Control (QC) protocol for each new batch. Correlate the outlier performance data with characterization data from the same batch. Key metrics to compare in a table include BET surface area, XRD crystallite size, and ICP-MS metal loading. A batch that is an outlier in both characterization and performance is likely a synthesis issue. A performance outlier with nominal characterization suggests a testing or measurement anomaly.
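The batch-level decision rule can be sketched with pandas. Each batch's characterization metrics and performance are compared with the median across batches. The relative tolerances (10% for characterization, 20% for performance), the column names, and the batch values are placeholders, not validated QC limits. The "characterization deviation only" label is an extra category added for completeness.

```python
import pandas as pd


def triage_batches(df, char_cols, perf_col, char_tol=0.10, perf_tol=0.20):
    """Hypothetical QC triage: compare every batch with the cross-batch median."""
    def off(col, tol):
        med = df[col].median()
        return (df[col] - med).abs() / med > tol

    char_off = pd.concat([off(c, char_tol) for c in char_cols], axis=1).any(axis=1)
    perf_off = off(perf_col, perf_tol)
    verdict = pd.Series("nominal", index=df.index)
    verdict.loc[perf_off & char_off] = "likely synthesis defect"
    verdict.loc[perf_off & ~char_off] = "likely testing/measurement anomaly"
    verdict.loc[~perf_off & char_off] = "characterization deviation only"
    return verdict


batches = pd.DataFrame({
    "bet_m2_g": [210, 205, 150, 208, 212],    # BET surface area
    "xrd_nm": [4.1, 4.0, 7.5, 4.2, 4.1],      # XRD crystallite size
    "icp_wt_pct": [1.00, 0.98, 1.01, 0.99, 1.02],
    "tof_s": [0.52, 0.50, 0.21, 0.95, 0.51],
}, index=["B1", "B2", "B3", "B4", "B5"])
print(triage_batches(batches, ["bet_m2_g", "xrd_nm", "icp_wt_pct"], "tof_s"))
```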

Table 1: Typical Outlier Manifestations in Catalyst Metrics

| Metric | Normal Range | Outlier Indicator | Common Physical Cause |
|---|---|---|---|
| Conversion (%) | 10-90% (process dependent) | Spike >2σ from mean, or sudden drop to near-zero. | Flow maldistribution, feed pulsation, analytical sampling error. |
| Selectivity (%) | 70-99% (target product) | Sudden deviation >15% absolute. | Transient impurity in feed, local temperature fluctuation, active site sintering. |
| TOF (s⁻¹) | 10⁻³ to 10³ | Value exceeding theoretical site-limited maximum. | Incorrect active site count (dispersion error), presence of uncounted catalytic species (e.g., leached metal). |
| Stability (TOS/Cycles) | Gradual decay (<5%/hr) | Abrupt activity loss (>30% in one interval). | Catalyst structural collapse, catastrophic poisoning, reactor plugging. |

Table 2: Recommended Diagnostic Experiments for Suspected Outliers

| Suspected Issue | Diagnostic Protocol | Expected Outcome if Issue is Present |
|---|---|---|
| Analytical Error | Repeat analysis of quenched sample; introduce internal standard. | Outlier disappears; internal standard shows recovery variance. |
| Feed Contamination | Switch to fresh, doubly purified feed stock; run blank. | Activity/selectivity returns to baseline; blank shows no conversion. |
| Mass/Heat Transfer Artifact | Vary agitation speed (slurry) or flow rate (fixed bed). | Metric changes significantly with transport conditions. |
| True Catalyst Deactivation | Post-reaction characterization (XPS, TEM, TPO). | Evidence of coke, sintering, or chemical change on catalyst surface. |

Experimental Protocols for Outlier Investigation

Protocol 1: Verification of Conversion Outlier (Flow Reactor)

- Pause the experiment at the point immediately following the anomalous reading.

- Bypass the reactor and analyze the feed directly via online GC to confirm feed composition stability.

- Divert reactor effluent to a dedicated, sealed sampling loop for triplicate offline analysis (e.g., GC-FID, HPLC).

- Resume flow and monitor at an increased frequency (e.g., every 2 minutes instead of 10) for the next 30 minutes.

- Compare online, offline triplicate, and subsequent high-frequency data. A single anomalous online point with consistent offline results suggests an analytical transient.
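The final comparison can be written as an explicit rule so that the same tolerance is applied to every spike. This is a hypothetical sketch. The 5% relative tolerance, the function name, and the readings are assumptions, and only the "analytical transient" branch comes directly from the protocol.

```python
from statistics import mean, median


def triage_online_spike(online_value, offline_triplicate, followup_values, rel_tol=0.05):
    """Hypothetical triage of one anomalous online conversion reading."""
    offline = mean(offline_triplicate)
    baseline = median(followup_values)  # high-frequency readings after flow resumed
    offline_matches_followup = abs(offline - baseline) <= rel_tol * baseline
    online_matches_offline = abs(online_value - offline) <= rel_tol * offline
    if offline_matches_followup and not online_matches_offline:
        return "analytical transient"
    if not offline_matches_followup:
        return "offline and follow-up disagree: investigate further"
    return "online reading confirmed"


print(triage_online_spike(92.0, [61.8, 62.1, 62.0], [62.3, 61.9, 62.0, 62.2]))
```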

Protocol 2: Active Site Re-Count for TOF Validation (Heterogeneous Catalyst)

- Post-reaction, cool the catalyst under inert atmosphere.

- Perform pulse chemisorption (e.g., CO, H₂) on a sub-sample using a Micromeritics ASAP 2920 or equivalent.

- Calculate metal dispersion: D (%) = (Number of surface atoms / Total number of atoms) x 100. Use stoichiometry (e.g., CO:Pt = 1:1).

- Recalculate TOF_corrected = (Moles converted) / (Moles surface metal * time).

- Correlate with HAADF-STEM imaging on a JEOL JEM-ARM200F to visually confirm particle size distribution and dispersion.
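The dispersion and corrected-TOF arithmetic can be sketched as below. The sketch assumes that the chemisorption uptake is reported in µmol of adsorbate per gram of catalyst. It also assumes that `stoich` is the number of adsorbate molecules per surface metal atom (1.0 for CO:Pt = 1:1; 0.5 for H₂ with H:Pt = 1:1). The loading, uptake, and conversion figures are hypothetical.

```python
def dispersion_percent(uptake_umol_per_g, metal_wt_pct, molar_mass_g_mol, stoich=1.0):
    """Metal dispersion D (%) = surface metal atoms / total metal atoms x 100."""
    surface_mol_per_g = uptake_umol_per_g * 1e-6 / stoich
    total_mol_per_g = (metal_wt_pct / 100.0) / molar_mass_g_mol
    return 100.0 * surface_mol_per_g / total_mol_per_g


def tof_corrected(mol_converted, catalyst_mass_g, uptake_umol_per_g, time_s, stoich=1.0):
    """TOF = moles converted / (moles surface metal * time), in s^-1."""
    surface_metal_mol = catalyst_mass_g * uptake_umol_per_g * 1e-6 / stoich
    return mol_converted / (surface_metal_mol * time_s)


PT_MOLAR_MASS = 195.08  # g/mol
d = dispersion_percent(25.6, metal_wt_pct=1.0, molar_mass_g_mol=PT_MOLAR_MASS)
tof = tof_corrected(1.0e-4, catalyst_mass_g=0.05, uptake_umol_per_g=25.6, time_s=3600)
print(f"D = {d:.1f} %, TOF = {tof:.4f} s^-1")
```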

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Outlier-Resilient Catalyst Testing

| Item | Function | Example Product/Catalog # |
|---|---|---|
| On-Line Micro GC | Provides high-frequency, real-time composition data to capture transient events. | INFICON Fusion Micro GC |
| Adsorbent Traps | Removes trace impurities (H₂O, O₂, S-compounds) from feed gases/liquids. | Sigma-Aldrich, Supelco Gas Clean Moisture & Oxygen Traps |
| Certified Standard Gases | Ensures accurate calibration of analytical equipment, ruling out drift. | Air Liquide, CERTAN Calibration Mixtures |
| Inert Catalyst Diluent | Improves flow distribution and heat transfer in fixed-bed reactors. | Sigma-Aldrich, Fused SiO₂ beads (250-500 µm) |
| Chemisorption Analyzer | Accurately quantifies active surface sites for correct TOF calculation. | Micromeritics, AutoChem II |
| Quartz Wool & Micro Reactor Tubes | Provides inert, high-temperature packing material for reactor beds. | ChemGlass, CG-2039-QW & CG-1230-01 |

Workflow & Relationship Diagrams

Title: Outlier Detection and Diagnosis Workflow in Catalyst Testing

Title: Decision Tree for Initial Outlier Triage

Troubleshooting Guides & FAQs

FAQ 1: What are the most critical visual tools for the initial screening of catalyst performance data, and why? The most critical tools are univariate distributions (histograms, box plots) and bivariate relationships (scatter plots). For catalyst data, a box plot of turnover frequency (TOF) across different batches quickly reveals performance outliers and batch-to-batch variability. Scatter plots of yield vs. temperature or pressure can identify nonlinear relationships and anomalous experimental runs that deviate from the expected trend.

FAQ 2: My scatter plot matrix shows no obvious outliers, but my statistical model's performance is poor. What visual tool might I be missing? You may need to visualize interactions or time-series patterns. Use a conditioned (trellis) plot, in which scatter plots are segmented by a categorical variable such as catalyst type or reactor bed, or a pair plot colored by that variable. An outlier may only be apparent within a specific subgroup. For sequential data, a run chart (measurement vs. run order) can reveal process drift or periodicity that corrupts analysis.

FAQ 3: How can I visually distinguish between a true catalytic outlier and a data entry error before applying formal detection algorithms?

Employ a linked highlighting technique across multiple views. For example, select a suspicious point in a yield vs. time scatter plot and see if it synchronously highlights an implausible value (e.g., negative pressure) in a parallel coordinates plot or data table. Tools like plotly or bokeh enable this interactivity. True outliers often form clusters in PCA score plots, while entry errors appear as isolated, extreme points in univariate distributions.

FAQ 4: When visualizing high-dimensional catalyst descriptor data (e.g., elemental properties), what is the best first step to screen for outliers? A Principal Component Analysis (PCA) scores plot (PC1 vs. PC2) is the optimal first visual screening step. It projects the high-dimensional data into the two directions of greatest variance. Outliers will appear as points far from the dense central cluster. Always accompany this with the corresponding loadings plot to interpret which original variables contribute to the outlier's position.
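The following is a minimal scikit-learn sketch of this screening step. It standardizes the descriptors, projects them onto PC1 and PC2, and ranks samples by their distance from the centre of the scores plot, with each PC scaled by its spread. The synthetic descriptor matrix and the injected outlier are assumptions for illustration. The `loadings` array holds the values that the corresponding loadings plot would display.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler


def pca_screen(X, n_components=2):
    """Return PC scores, loadings, and a scaled distance from the scores-plot centre."""
    Z = StandardScaler().fit_transform(X)          # descriptors on a common scale
    pca = PCA(n_components=n_components)
    scores = pca.fit_transform(Z)                  # coordinates for the PC1 vs. PC2 plot
    scaled = scores / scores.std(axis=0, ddof=1)   # put each PC on unit variance
    dist = np.sqrt((scaled ** 2).sum(axis=1))
    loadings = pca.components_.T                   # rows = descriptors, columns = PCs
    return scores, loadings, dist


# Hypothetical descriptor matrix: 40 catalysts x 5 elemental descriptors.
rng = np.random.default_rng(7)
X = rng.standard_normal((40, 5))
X[7, :2] = [10.0, 10.0]                            # one composition far from the rest
scores, loadings, dist = pca_screen(X)
print("most isolated sample:", int(np.argmax(dist)))
print("PC1 loadings:", np.round(loadings[:, 0], 2))
```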

Table 1: Quantitative Summary of Visual Outlier Detection Efficacy in Catalyst Studies

| Visual Tool | Average % of Outliers Detected (Study Range) | Common Catalyst Data Type Applied To | False Positive Rate (Typical) |
|---|---|---|---|
| Box Plot / IQR Fencing | 78% (65-90%) | Univariate Performance Metrics (TOF, Yield, Selectivity) | Low-Moderate |
| Scatter Plot (w/ Manual Inspection) | 65% (50-85%) | Bivariate Relationships (e.g., Yield vs. Temp) | Highly User-Dependent |
| PCA Scores Plot (2D/3D) | 85% (70-95%) | High-Dimensional Descriptor Space | Moderate |
| Parallel Coordinates Plot | 70% (55-80%) | Multi-parameter Reaction Conditions | High without brushing |
| Run Chart / Control Chart | 90% (80-98%) | Time-Series or Sequential Batch Data | Low |

Experimental Protocol: Visual Screening for Outliers in Catalyst Performance Datasets

1. Objective: To perform an initial, visual EDA to identify potential outliers and anomalies in a dataset comprising catalyst performance metrics and reaction conditions.

2. Materials: Dataset (.csv format), Statistical software (R with ggplot2 & plotly, or Python with matplotlib, seaborn, & plotly).

3. Procedure:

- Step 1 – Univariate Distribution Check: For each key performance metric (e.g., Yield %, TOF, Selectivity), generate a histogram with a kernel density overlay and a box plot. Flag any data points beyond 1.5 * IQR (whiskers) in the box plot.

- Step 2 – Bivariate Relationship Mapping: Create a scatter plot matrix for all continuous variables (performance metrics + conditions: T, P, concentration). Use color coding for categorical variables (e.g., catalyst family). Manually inspect for points severely deviating from the correlation cloud.

- Step 3 – Dimensionality Reduction Visualization: Standardize the data. Perform PCA. Generate a 2D scatter plot of scores for the first two principal components (PC1 vs. PC2). Label points with a unique run ID. Investigate points isolated from the main cluster.

- Step 4 – Interactive Cross-Verification: Use an interactive library (e.g., plotly) to create linked views. Selecting an outlier candidate in the PCA plot should highlight its corresponding row in the data table and its position in all other plots.

- Step 5 – Documentation: For each visually flagged point, record the Run ID, the visual tool(s) in which it appeared anomalous, and a preliminary hypothesis (data error, special cause, breakthrough performance).

4. Analysis: Document the count and nature of visually identified outliers. Compare this list with the output of subsequent formal statistical outlier tests (e.g., Grubbs', Mahalanobis distance).
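For the Mahalanobis-distance comparison in the analysis step, a minimal sketch computes squared distances from the classical mean and covariance and compares them with a chi-square cutoff. The synthetic yield vs. temperature data, the α = 0.01 cutoff, and the function name are assumptions. The classical estimates include the outliers themselves, so a robust covariance estimator such as scikit-learn's `MinCovDet` is a common alternative.

```python
import numpy as np
from scipy import stats


def mahalanobis_flags(X, alpha=0.01):
    """Squared Mahalanobis distances and flags against a chi-square(p) cutoff."""
    X = np.asarray(X, dtype=float)
    diff = X - X.mean(axis=0)
    cov_inv = np.linalg.inv(np.cov(X, rowvar=False))
    d2 = np.einsum("ij,jk,ik->i", diff, cov_inv, diff)  # diff_i^T S^-1 diff_i for each row
    cutoff = stats.chi2.ppf(1 - alpha, df=X.shape[1])
    return d2, d2 > cutoff


# Hypothetical runs: yield tracks temperature closely (both standardized here).
rng = np.random.default_rng(3)
temp = rng.standard_normal(50)
yld = temp + 0.1 * rng.standard_normal(50)
# One run whose values are unremarkable on each axis but break the correlation.
X = np.vstack([np.column_stack([temp, yld]), [1.5, -1.5]])
d2, flags = mahalanobis_flags(X)
print("flagged rows:", np.flatnonzero(flags))
```

The last run would pass a univariate box-plot check on both axes. Its large Mahalanobis distance comes entirely from breaking the yield-temperature relationship.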

Diagram: Visual EDA Workflow for Catalyst Data Screening

Diagram: Outlier Identification in a PCA Scores Plot

The Scientist's Toolkit: Research Reagent Solutions for Catalyst EDA

| Item | Function in Visual EDA |
|---|---|
| Python/R Data Stack (pandas, numpy, tidyverse) | Core data manipulation, cleaning, and aggregation for analysis. |
| Visualization Libraries (matplotlib, seaborn, ggplot2, plotly) | Creation of static and interactive plots for distribution and relationship analysis. |
| Dimensionality Reduction (scikit-learn PCA, UMAP) | Projects high-dimensional catalyst descriptor data into 2D/3D for visual outlier screening. |
| Interactive Notebook (Jupyter, RMarkdown) | Environment for reproducible analysis, combining code, visualizations, and documentation. |
| Statistical Summary Functions | Generates mean, median, IQR, and variance to inform axis limits and threshold lines on plots. |

Technical Support & Troubleshooting Hub

FAQ: Addressing Anomalies in Catalyst Performance Data

Q1: My catalyst screening data shows one compound with an order-of-magnitude higher turnover frequency (TOF) than the rest of the library. The synthesis log shows no errors. Is this a measurement artifact or a true breakthrough?

A: This is the core scenario of our thesis. Do not dismiss it prematurely. Follow this protocol:

- Re-measurement Protocol: Re-run the activity assay in triplicate, using fresh aliquots from the same synthesis batch. Include the original "control" catalysts from the same plate for direct comparison.

- Material Characterization Re-run: Perform fresh XPS and TEM analysis on the outlier catalyst material to confirm composition and nanostructure.

- Statistical Test: Apply Grubbs' test for outliers. If the point remains a statistical outlier (p < 0.05), proceed to mechanistic investigation.

Q2: The outlier catalyst's performance is not reproducible in follow-up synthesis batches. What could be causing this?

A: This often indicates a hidden, uncontrolled synthesis variable. Troubleshoot using this guide:

- Check: Reaction atmosphere logs for the original batch. Trace O₂ or H₂O ingress can sometimes create unique surface dopants.

- Check: Solvent bottle source and batch number. Trace impurities (e.g., metal ions in solvents) can act as co-catalysts.

- Protocol: Systematic Replication: Design a DoE (Design of Experiments) that intentionally varies parameters typically held constant (e.g., flask washing method, reagent addition rate, ambient humidity during synthesis).

Q3: How do I determine if the outlier's performance is due to a novel catalytic mechanism versus simply higher surface area?

A: You must decouple intrinsic activity from morphological effects.

- Protocol: BET Isotherm with t-Plot Analysis: Perform N₂ physisorption to determine total surface area and micropore vs. mesopore distribution.

- Protocol: Chemisorption: Use CO or H₂ pulse chemisorption to measure active metal surface area specifically.

- Calculation: Normalize TOF data to active site (from chemisorption) instead of total mass or total surface area. If the outlier remains, the evidence for a novel mechanism strengthens.

Experimental Protocols Cited

Protocol 1: High-Throughput Catalyst Screening for Turnover Frequency (TOF)

- Prepare catalyst library array (50 µL of 1 mg/mL suspension per well in 96-well plate).

- Inject substrate solution (100 µL, 10 mM in appropriate solvent) via automated liquid handler.

- Initiate reaction by injecting co-factor/initiator solution (50 µL).

- Monitor reaction progress via in-plate UV-Vis spectroscopy or HPLC-MS every 30 seconds for 10 minutes.

- Calculate initial rate from the linear slope of product formation (first 5% conversion).

- TOF Calculation: TOF = (moles product formed) / (moles active site * time). Active site moles estimated from ICP-MS data of metal loading.
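The rate and TOF calculation in the last two steps can be sketched with NumPy. The sketch assumes that product amounts are already converted to moles and that every metal atom measured by ICP-MS counts as an active site, as the protocol's estimate implies. It uses the per-well amounts from the protocol (50 µg catalyst, 1 µmol substrate). The product curve, the 2.1 wt% loading, and the Pd molar mass are hypothetical.

```python
import numpy as np


def initial_rate(t_s, product_mol, substrate_mol0, max_conversion=0.05):
    """Slope (mol/s) of product vs. time, fitted only where conversion <= max_conversion."""
    t = np.asarray(t_s, dtype=float)
    p = np.asarray(product_mol, dtype=float)
    mask = p / substrate_mol0 <= max_conversion
    if mask.sum() < 2:
        raise ValueError("Need at least two readings below the conversion cutoff.")
    slope, _intercept = np.polyfit(t[mask], p[mask], 1)
    return slope


def tof_from_icp(rate_mol_s, catalyst_mass_g, metal_wt_pct, molar_mass_g_mol):
    """TOF (s^-1), counting every ICP-MS metal atom as an active site."""
    site_mol = catalyst_mass_g * (metal_wt_pct / 100.0) / molar_mass_g_mol
    return rate_mol_s / site_mol


t = np.arange(0, 601, 30)                    # a reading every 30 s for 10 min
product = np.minimum(2e-10 * t, 1e-7)        # hypothetical: linear, then levelling off
rate = initial_rate(t, product, substrate_mol0=1e-6)
tof = tof_from_icp(rate, catalyst_mass_g=50e-6, metal_wt_pct=2.1, molar_mass_g_mol=106.42)
print(f"rate = {rate:.2e} mol/s, TOF = {tof:.4f} s^-1")
```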

Protocol 2: Grubbs' Test for Statistical Outliers in Performance Datasets

- Calculate the sample mean (x̄) and standard deviation (s) of the TOF dataset.

- For the suspected outlier x* (the value farthest from the mean), calculate the test statistic G = |x* - x̄| / s.

- Compare the calculated G to the critical G value from a Grubbs' table for N samples and α=0.05.

- If G(calculated) > G(critical), the point is a statistical outlier.
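The steps above can be sketched as a two-sided Grubbs' test. Instead of a printed table, the sketch computes the critical value from the t-distribution: G_crit = ((N - 1)/√N)·√(t²/(N - 2 + t²)), where t is the upper α/(2N) quantile of a t-distribution with N - 2 degrees of freedom. The function name is hypothetical, and the test assumes that the non-outlying data are approximately normal.

```python
import numpy as np
from scipy import stats


def grubbs_test(values, alpha=0.05):
    """Two-sided Grubbs' test for one outlier: returns (index, G, G_critical, is_outlier)."""
    x = np.asarray(values, dtype=float)
    n = x.size
    if n < 3:
        raise ValueError("Grubbs' test needs at least three values.")
    mean, sd = x.mean(), x.std(ddof=1)
    idx = int(np.argmax(np.abs(x - mean)))      # value farthest from the mean
    g = abs(x[idx] - mean) / sd
    t = stats.t.ppf(1 - alpha / (2 * n), n - 2)
    g_crit = (n - 1) / np.sqrt(n) * np.sqrt(t**2 / (n - 2 + t**2))
    return idx, g, g_crit, bool(g > g_crit)


tof = [150, 165, 1850, 158, 142]   # the five TOF values (h^-1) in the Alpha-12 table
idx, g, g_crit, is_outlier = grubbs_test(tof)
print(idx, round(g, 2), round(g_crit, 2), is_outlier)
```

On the five Alpha-12 rows alone, the sketch flags A12-Cat07 with G ≈ 1.79 against a critical value of about 1.72. G can never exceed (N - 1)/√N for N values, so the G = 8.7 reported in that table was presumably computed on the full library rather than on these five rows.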

Data Presentation

Table 1: Comparative Performance of Catalyst Library "Alpha-12"

| Catalyst ID | TOF (h⁻¹) | Metal Loading (wt%, ICP-MS) | Active Surface Area (m²/g, Chemisorption) | Normalized TOF (TOF ÷ Active Surface Area) | Grubbs' Test Result (α=0.05) |
|---|---|---|---|---|---|
| A12-Cat01 | 150 | 2.1 | 50 | 3.00 | - |
| A12-Cat02 | 165 | 2.3 | 55 | 3.00 | - |
| A12-Cat07 (Outlier) | 1,850 | 2.2 | 52 | 35.58 | Yes (G=8.7) |
| A12-Cat08 | 158 | 2.1 | 49 | 3.22 | - |
| A12-Cat12 | 142 | 2.0 | 48 | 2.96 | - |

Table 2: Replication Study of Outlier Catalyst A12-Cat07

| Synthesis Batch | Controlled Atmosphere? | TOF (h⁻¹) | Notes |
|---|---|---|---|
| Original | No (Ambient) | 1,850 | Breakthrough result. |
| Batch 2 | Yes (N₂ Glovebox) | 155 | Performance lost. Suggests ambient variable critical. |
| Batch 3 | No (Ambient, 70% RH) | 1,920 | High reproducibility. Suggests atmospheric H₂O may be a key reagent. |
| Batch 4 | No (Ambient, <10% RH) | 160 | Confirms H₂O as critical variable in synthesis. |

The Scientist's Toolkit: Research Reagent Solutions

| Item & Supplier (Example) | Function in Catalyst Outlier Investigation |
|---|---|
| HPLC-MS Grade Solvents (e.g., Sigma-Aldrich) | Ensures no trace metal impurities artificially create catalytic activity. |
| ICP-MS Standard Solutions (e.g., Inorganic Ventures) | Accurate quantification of metal loading for TOF normalization. |
| Chemisorption Gases (e.g., 5% CO/He, Linde) | Precisely measures active metal surface area, not just total porosity. |
| Deuterated Solvents for NMR (e.g., Cambridge Isotopes) | Mechanistic probing of reaction pathways unique to the outlier catalyst. |
| High-Purity Metal Precursors (e.g., Strem Chemicals) | Eliminates precursor batch variation as a cause of performance disparity. |
| Functionalized SiO₂ Supports (e.g., SiliCycle) | Allows testing of the outlier's active species on different support materials. |

Visualizations

Title: Outlier Investigation Workflow

Title: Proposed Novel Pathway for Outlier Catalyst

From Z-Scores to Isolation Forests: A Toolbox for Detecting Catalytic Anomalies

Troubleshooting Guides and FAQs

Q1: In my catalyst turnover frequency (TOF) dataset, the IQR method flags too many points as outliers, skewing my analysis. What am I doing wrong? A: A common error is applying the 1.5*IQR rule without considering the underlying distribution of catalyst performance data, which is often non-normal. For catalyst TOF data, we recommend:

- Verify Distribution: First, test your data for normality (e.g., Shapiro-Wilk test). Catalyst deactivation curves often produce right-skewed data.

- Adjust the Multiplier: For smaller, noisier datasets typical in early-stage catalyst research, use a more conservative multiplier (e.g., 2.5 or 3.0 * IQR) to avoid false positives.

- Protocol: 1) Plot data (histogram & Q-Q plot). 2) Perform normality test (p<0.05 suggests non-normal). 3) If non-normal, calculate IQR (Q3-Q1). 4) Temporarily set bounds at 2.5*IQR from Q1/Q3. 5) Re-assess flagged points chemically (e.g., failed experiment vs. genuine catalyst breakthrough).
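The protocol above can be sketched in Python as follows, assuming NumPy and SciPy. The TOF values are the hypothetical dataset from Table 2 of this section. For small n, the quartiles depend on the interpolation convention, so fences can differ slightly between software packages. NumPy's default linear interpolation is used here.

```python
import numpy as np
from scipy import stats


def iqr_fences(values, k=1.5):
    """Tukey fences [Q1 - k*IQR, Q3 + k*IQR] using NumPy's default (linear) quartiles."""
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def iqr_flags(values, k=1.5):
    """Boolean mask of points outside the fences for multiplier k."""
    x = np.asarray(values, dtype=float)
    lower, upper = iqr_fences(x, k)
    return (x < lower) | (x > upper)


tof = np.array([12.5, 13.1, 12.8, 14.0, 5.1, 12.9, 25.0, 13.5])  # hypothetical TOF values (h^-1)
w, p = stats.shapiro(tof)
print(f"Shapiro-Wilk W = {w:.3f}, p = {p:.3f}")
for k in (1.5, 2.5, 3.0):
    lo, hi = iqr_fences(tof, k)
    print(f"k={k}: fences [{lo:.2f}, {hi:.2f}], flagged {tof[iqr_flags(tof, k)]}")
```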

Q2: When using the Modified Z-Score on my adsorption enthalpy data, some clear outliers are not detected. Why does this happen? A: The Modified Z-Score uses the Median Absolute Deviation (MAD), which is highly resistant to outliers. However, in small datasets (n<20), its robustness can become a weakness.

- Root Cause: Because the MAD is barely affected by a single extreme value, that value normally receives a large Modified Z-Score. A clear outlier can still be missed in a small sample, because the MAD is a noisy estimate of spread when n is small. If the remaining points happen to be widely dispersed, or several outliers sit on the same side of the median, the MAD is inflated and the outlier's score can fall below the typical threshold of |3.5|.

- Solution: For small-n catalyst datasets, use Grubbs' Test as the primary screen, since it is more sensitive to a single outlier in normally distributed data. Use the Modified Z-Score as a secondary check for multiple outliers.

- Protocol: 1) Ensure the data are approximately normal. 2) Perform Grubbs' Test (iteratively). 3) Apply the Modified Z-Score (threshold: |3.5|) to the cleaned data to catch remaining outliers. 4) Cross-reference with experimental logs.
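Below is a minimal implementation of the Modified Z-Score, in the Iglewicz-Hoaglin form with the 0.6745 constant. It is applied to the same hypothetical TOF values as Table 2 below. The sketch uses the raw MAD. Some library functions, for example statsmodels' `mad`, return a MAD already rescaled by 1/0.6745; in that case drop the constant to avoid applying it twice.

```python
import numpy as np


def modified_z_scores(values):
    """Iglewicz-Hoaglin modified Z-score: M_i = 0.6745 * (x_i - median) / MAD."""
    x = np.asarray(values, dtype=float)
    median = np.median(x)
    mad = np.median(np.abs(x - median))  # raw (unscaled) median absolute deviation
    if mad == 0:
        raise ValueError("MAD is zero (most values equal the median); score is undefined")
    return 0.6745 * (x - median) / mad


tof = np.array([12.5, 13.1, 12.8, 14.0, 5.1, 12.9, 25.0, 13.5])  # hypothetical TOF values (h^-1)
m = modified_z_scores(tof)
print(np.round(m, 2))
print("flagged:", tof[np.abs(m) > 3.5])
```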

Q3: Grubbs' Test fails to identify an outlier in my batch of yield data, but my colleague's replicate experiment clearly shows one. What gives? A: Grubbs' Test assumes the data, excluding the potential outlier, is normally distributed. Your batch data may be bimodal due to an unrecorded process variable.

- Troubleshooting Steps:

- Segment Data: Check experimental logs for split conditions (e.g., different catalyst batches, slight temperature fluctuations). Re-apply Grubbs' Test to each segmented group.

- Test Assumption: Perform a normality test (e.g., Anderson-Darling) on the data after removing the suspected outlier. If this subset is non-normal (p<0.05), Grubbs' Test is invalid.

- Alternative Method: Apply the non-parametric IQR method to the entire dataset as a robustness check.

- Experiment Protocol: For yield validation: 1) Re-run the suspected outlier reaction condition in triplicate. 2) Compare the triplicate mean to the original dataset using a two-sample t-test. 3) If the difference is significant (p<0.05), the original point is a valid outlier, likely caused by an uncontrolled variable.

Q4: How do I choose the right outlier detection method for my catalyst screening dataset? A: The choice depends on data distribution, sample size, and research goal. See the decision table below.

Q5: Can I use these methods on time-series data from a continuous flow reactor? A: Direct application is not advised. These methods assume independent, univariate observations, whereas time-series data are autocorrelated. Instead:

- Pre-processing: First, difference the data to remove trend and seasonality.

- Specialized Methods: Monitor the series with control charts (e.g., CUSUM), or fit a time-series model (e.g., ARIMA) and apply IQR or Grubbs' Test to its residuals.
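Below is a minimal two-sided tabular CUSUM in Python. It assumes the in-control target and σ are known from a baseline period of normal reactor operation. The reference value k = 0.5 and decision interval h = 5 (both in units of σ) are common textbook starting points, not values validated for any particular reactor. The ARIMA route would instead fit a time-series model (for example with statsmodels) and pass its residuals to the IQR or Grubbs' checks.

```python
import numpy as np


def tabular_cusum(x, target, sigma, k=0.5, h=5.0):
    """Two-sided tabular CUSUM; k and h are expressed in units of sigma.

    Returns the upper and lower cumulative sums and the indices where either exceeds h*sigma.
    """
    x = np.asarray(x, dtype=float)
    s_hi = np.zeros_like(x)
    s_lo = np.zeros_like(x)
    hi_prev = lo_prev = 0.0
    for t, value in enumerate(x):
        dev = value - target
        hi_prev = max(0.0, hi_prev + dev - k * sigma)   # accumulates upward shifts
        lo_prev = max(0.0, lo_prev - dev - k * sigma)   # accumulates downward shifts
        s_hi[t], s_lo[t] = hi_prev, lo_prev
    alarms = np.flatnonzero((s_hi > h * sigma) | (s_lo > h * sigma))
    return s_hi, s_lo, alarms


# Hypothetical flow-reactor conversion (%): in control near 80 for 30 samples,
# then a slow deactivation drift of -0.3 % per sample.
rng = np.random.default_rng(0)
conversion = 80 + rng.normal(0, 0.5, 60)
conversion[30:] -= 0.3 * np.arange(30)
_, _, alarms = tabular_cusum(conversion, target=80.0, sigma=0.5)
print("first alarm at sample:", alarms[0] if alarms.size else None)
```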

Data Presentation

Table 1: Comparison of Outlier Detection Methods for Catalyst Research

| Method | Key Formula | Typical Threshold | Optimal Use Case in Catalysis | Strengths | Weaknesses |
|---|---|---|---|---|---|
| IQR (Tukey's Fences) | Lower: Q1 - k·IQR; Upper: Q3 + k·IQR | k = 1.5 (standard); k = 2.5-3.0 (conservative) | Initial, non-parametric screening of skewed activity or selectivity data. | Simple, robust to non-normality, good for exploratory analysis. | Arbitrary multiplier; can miss outliers in small datasets. |
| Modified Z-Score | M_i = 0.6745 (x_i - median(x)) / MAD | \|M_i\| > 3.5 | Identifying multiple outliers in medium-sized datasets (n > 20) where the SD is unstable. | Highly resistant to outliers; more reliable than the standard Z-score for real-world data. | Can be too robust for small n (n < 15); less common, so it needs explaining to readers. |
| Grubbs' Test | G = max \|x_i - x̄\| / s | G > ((N-1)/√N)·√(t²/(N-2+t²)), where t is the upper α/(2N) critical value of the t-distribution with N-2 degrees of freedom | Formally testing for a single outlier in approximately normal data (e.g., replicate yield measurements). | Statistical rigor; provides a p-value. Good for small, normal datasets. | Assumes normality; detects only one outlier at a time; repeated application alters the overall error rate and is vulnerable to masking. |

Table 2: Example Outlier Analysis on Catalyst TOF Data (Hypothetical Dataset)

| Catalyst ID | TOF (h⁻¹) | IQR Flag (k=1.5) | IQR Flag (k=3.0) | Modified Z-Score | Grubbs' Test p-value | Final Judgment |
|---|---|---|---|---|---|---|
| Cat-A1 | 12.5 | No | No | -0.67 | 0.62 | Valid |
| Cat-A2 | 13.1 | No | No | 0.13 | 0.85 | Valid |
| Cat-A3 | 12.8 | No | No | -0.27 | 0.92 | Valid |
| Cat-A4 | 14.0 | No | No | 1.35 | 0.42 | Valid |
| Cat-A5 | 5.1 | Yes | Yes | -10.66 | <0.01 | Outlier |
| Cat-A6 | 12.9 | No | No | -0.13 | 0.89 | Valid |
| Cat-A7 | 25.0 | Yes | Yes | 16.19 | <0.01 | Outlier |
| Cat-A8 | 13.5 | No | No | 0.67 | 0.67 | Valid |

Experimental Protocols

Protocol 1: Systematic Outlier Screening for Catalyst Performance Data

Objective: To identify and adjudicate outliers in univariate catalyst performance metrics (e.g., Yield, TOF, Selectivity). Materials: See "The Scientist's Toolkit" below. Procedure:

- Data Compilation & Blind Coding: Compile all data from replicates. Assign a random code to each data point to enable blind statistical analysis.

- Normality Assessment: Create a histogram and Q-Q plot. Perform the Shapiro-Wilk test. Proceed based on result:

- If p ≥ 0.10: Assume approximate normality. Proceed to Step 3A.

- If p < 0.10: Data is non-normal. Proceed to Step 3B.

- Outlier Detection:

- 3A. For Normal Data: a) Perform Grubbs' Test iteratively (α = 0.05) until no further outlier is detected. b) Calculate Modified Z-Scores for the cleaned dataset as a secondary check (flag if |M| > 3.5).

- 3B. For Non-Normal Data: a) Apply the IQR method with a conservative multiplier (k = 3.0), flagging points outside [Q1 - 3·IQR, Q3 + 3·IQR]. b) Apply the Modified Z-Score to the raw data (flag if |M| > 3.5).

- Chemical & Contextual Review: Unblind the flagged outliers. Review original lab notebooks, chromatograms, and catalyst synthesis records for technical errors (e.g., incorrect loading, impure substrate, instrument glitch).

- Adjudication & Documentation: Categorize each flagged point as: a) Technical Error: Remove with detailed justification. b) Genuine Outlier: Retain but note for discussion. c) Ambiguous: Highlight for follow-up experimentation. Document all decisions in a master log.
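Steps 2-3 of the protocol can be sketched as a single routing function. This is an illustrative Python sketch that assumes NumPy and SciPy; the names `screen`, `grubbs_iterative`, and `modified_z` are hypothetical. The chemical review and adjudication steps remain manual.

```python
import numpy as np
from scipy import stats


def modified_z(x):
    """M_i = 0.6745 (x_i - median) / MAD; all zeros if MAD = 0 (score undefined)."""
    med = np.median(x)
    mad = np.median(np.abs(x - med))
    return np.zeros_like(x) if mad == 0 else 0.6745 * (x - med) / mad


def grubbs_iterative(x, alpha=0.05):
    """Repeat the two-sided Grubbs' test, removing one point per pass, until none is found."""
    keep = np.ones(x.size, dtype=bool)
    removed = []
    while keep.sum() > 2:
        sub = x[keep]
        n, s = sub.size, sub.std(ddof=1)
        if s == 0:
            break
        dev = np.abs(sub - sub.mean())
        t = stats.t.ppf(1 - alpha / (2 * n), n - 2)
        g_crit = (n - 1) / np.sqrt(n) * np.sqrt(t**2 / (n - 2 + t**2))
        if dev.max() / s <= g_crit:
            break
        idx = int(np.flatnonzero(keep)[np.argmax(dev)])
        keep[idx] = False
        removed.append(idx)
    return removed, keep


def screen(values, alpha=0.05, normality_p=0.10, k=3.0):
    """Route to Step 3A (Grubbs' + Modified Z) or Step 3B (IQR + Modified Z) by Shapiro-Wilk p."""
    x = np.asarray(values, dtype=float)
    _, p = stats.shapiro(x)
    flags = np.zeros(x.size, dtype=bool)
    if p >= normality_p:                                   # Step 3A: approximately normal
        removed, keep = grubbs_iterative(x, alpha)
        flags[removed] = True
        kept_idx = np.flatnonzero(keep)
        flags[kept_idx[np.abs(modified_z(x[keep])) > 3.5]] = True
    else:                                                  # Step 3B: non-normal
        q1, q3 = np.percentile(x, [25, 75])
        iqr = q3 - q1
        flags |= (x < q1 - k * iqr) | (x > q3 + k * iqr)
        flags |= np.abs(modified_z(x)) > 3.5
    return p, flags


tof = [12.5, 13.1, 12.8, 14.0, 5.1, 12.9, 25.0, 13.5]   # hypothetical TOF values (h^-1)
p, flags = screen(tof)
print(f"Shapiro-Wilk p = {p:.3f}; flagged indices: {np.flatnonzero(flags)}")
```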

Protocol 2: Validation of a Suspected Catalytic Outlier via Replication

Objective: To confirm whether a statistically identified outlier represents a true chemical phenomenon or an experimental artifact. Procedure:

- Replicate Exact Conditions: Using the same catalyst batch, reagents, and equipment, repeat the reaction experiment for the suspected outlier condition in a minimum of n=3 independent trials.

- Control Experiment: Also run n=3 replicates of a neighboring "normal" data point condition for comparison.

- Statistical Comparison: Perform a two-sample t-test (or Mann-Whitney U test if non-normal) comparing the new replicate set of the suspected outlier to the replicate set of the control condition.

- Interpretation: If the means are not significantly different (p ≥ 0.05), the original outlier was likely an artifact and can be excluded. With n = 3 per group the test has low power, so a non-significant result is only weak evidence that the two conditions behave alike. If the means are significantly different (p < 0.05), the original point may represent a real catalytic effect, warranting further investigation.
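Below is a sketch of the statistical comparison step, assuming SciPy. It uses Welch's t-test, which does not assume equal variances, when both replicate sets pass a Shapiro-Wilk check, and falls back to the Mann-Whitney U test otherwise. The replicate values are hypothetical.

```python
import numpy as np
from scipy import stats


def compare_replicates(suspect, control, alpha=0.05, normality_p=0.05):
    """Welch t-test if both groups pass Shapiro-Wilk, otherwise a two-sided Mann-Whitney U test."""
    suspect = np.asarray(suspect, dtype=float)
    control = np.asarray(control, dtype=float)
    normal = (stats.shapiro(suspect)[1] >= normality_p
              and stats.shapiro(control)[1] >= normality_p)
    if normal:
        result = stats.ttest_ind(suspect, control, equal_var=False)
        test = "Welch t-test"
    else:
        result = stats.mannwhitneyu(suspect, control, alternative="two-sided")
        test = "Mann-Whitney U"
    return test, result.pvalue, result.pvalue < alpha


# Hypothetical yields (%): triplicate of the suspected-outlier condition vs. a neighbouring control.
test, p_value, significant = compare_replicates([24.1, 25.3, 24.8], [13.0, 12.6, 13.4])
print(f"{test}: p = {p_value:.2g}, significant: {significant}")
```

With only three replicates per group, the exact two-sided Mann-Whitney U test cannot produce a p-value below 0.10. The non-parametric branch therefore cannot reach p < 0.05 at this sample size. If that branch is likely to be used, more replicates are needed.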

Visualizations

Outlier Detection Workflow for Catalyst Data

Statistical Tools for Outlier Detection

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Reagent | Function in Outlier Analysis Context |
|---|---|
| Statistical Software (R/Python) | Essential for executing Shapiro-Wilk tests, calculating IQR/MAD, and performing Grubbs' Test accurately with p-values. |
| Electronic Lab Notebook (ELN) | Critical for tracing flagged data points back to original experimental conditions, catalyst batch numbers, and operator notes for contextual review. |
| Reference Catalyst Material | A well-characterized catalyst sample run as an internal control across experiments to help distinguish systemic error from unique outliers. |
| High-Purity Analytical Standards | For validating GC/HPLC instrument response before attributing an outlier yield/selectivity value to catalyst performance. |
| Certified Reference Material (CRM) | Used in calibration curves for spectroscopic or adsorption measurements to identify outliers caused by instrumental drift. |
| Robust Statistical Library (e.g., statsmodels in Python, robustbase in R) | Provides robust estimators such as the Median Absolute Deviation (MAD), from which Modified Z-Scores are computed. |

Technical Support Center: Troubleshooting Outlier Detection in Catalyst Data

FAQs & Troubleshooting Guides

Q1: My PCA model is dominated by a single principal component (PC1 variance >95%). Is this normal for catalyst performance datasets, and how does it affect outlier detection?

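A: This is common in catalyst datasets where one variable (e.g., conversion rate) is measured on a much larger scale than others (e.g., trace impurity levels). PCA on the covariance matrix is scale-dependent, so PC1 then largely reproduces the large-scale variable. Any outlier screen built on the PCA scores inherits this distortion, including score plots, Hotelling's T², and Mahalanobis distance computed in the reduced PC space. Mahalanobis distance computed with the full inverse covariance matrix is itself scale-invariant, but standardizing can still improve its numerical conditioning.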

Solution: Standardize your data before analysis.

- Protocol: For each variable $x$, calculate the z-score $z = (x - \mu)/\sigma$, where $\mu$ is the variable's mean and $\sigma$ its standard deviation. Perform PCA and compute the Mahalanobis distance on the standardized matrix.

Q2: When using Mahalanobis distance, I get a "covariance matrix is singular" error. What causes this and how can I fix it?

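A: The sample covariance matrix is singular in two cases. The first is when some variables are perfectly correlated (linearly dependent). The second is when the number of features (p) equals or exceeds the number of observations (n), since a covariance matrix estimated from n observations has rank at most n - 1. This is frequent in catalyst research with high-dimensional characterization data (e.g., numerous spectroscopic peaks).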

Solution:

- Apply PCA first: Use PCA for dimensionality reduction.

- Protocol: Standardize the data, perform PCA, and retain the PCs that explain >95% of the cumulative variance. Compute the Mahalanobis distance in the reduced PC space using the diagonal matrix of retained eigenvalues, which is non-singular as long as every retained eigenvalue is positive.

- Regularization: Add a small constant $\lambda$ to the diagonal of the covariance matrix: $C_{reg} = C + \lambda I$.
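Below is a minimal NumPy sketch of the regularization option, using a hypothetical p > n matrix (10 catalysts, 15 standardized features). The value of λ is a tuning choice, not a recommended constant. On standardized data the diagonal entries are about 1, so λ = 0.1 is a moderate ridge.

```python
import numpy as np


def mahalanobis_regularized(X, lam=1e-3):
    """Mahalanobis distance of each row of X to the column means, using C_reg = C + lam * I."""
    X = np.asarray(X, dtype=float)
    centered = X - X.mean(axis=0)
    cov_reg = np.cov(centered, rowvar=False) + lam * np.eye(X.shape[1])
    # Solve C_reg y = x for each row instead of forming the inverse explicitly.
    sol = np.linalg.solve(cov_reg, centered.T).T
    return np.sqrt(np.einsum("ij,ij->i", centered, sol))


rng = np.random.default_rng(0)
# Hypothetical p > n case: 10 catalysts x 15 standardized spectroscopic features.
X = rng.standard_normal((10, 15))
rank = np.linalg.matrix_rank(np.cov(X, rowvar=False))
print("rank of the 15 x 15 sample covariance:", rank)   # at most n - 1 = 9, so it is singular
d = mahalanobis_regularized(X, lam=0.1)
print(np.round(d, 2))
```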

Q3: How do I choose a threshold to flag outliers using Mahalanobis distance?

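A: Under multivariate normality, the squared distance $D_m^2$ follows a $\chi^2$ distribution with p degrees of freedom (p = number of variables). This theoretical threshold can be unreliable when real catalyst data deviate from normality.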

Solution: Use a robust, data-driven threshold.

- Protocol:

- Calculate Mahalanobis distances (Dm) for your dataset.

- Estimate the distribution of the $D_m$ values nonparametrically (e.g., with kernel density estimation) or with a heavy-tailed parametric fit.

- Set the threshold at the 95th or 97.5th percentile of the fitted distribution. This adapts to your specific data structure.
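Below is a sketch comparing the theoretical χ² cutoff, which applies to the squared distance, with a data-driven 97.5th-percentile cutoff from a Gaussian KDE. It assumes NumPy and SciPy. The heavy-tailed data are simulated from a multivariate t-distribution purely for illustration. `kde_quantile` is a hypothetical helper that approximates the KDE's CDF on a grid.

```python
import numpy as np
from scipy import stats


def mahalanobis_sq(X):
    """Squared Mahalanobis distance of each row to the sample mean (sample covariance)."""
    Xc = X - X.mean(axis=0)
    inv_cov = np.linalg.inv(np.cov(Xc, rowvar=False))
    return np.einsum("ij,jk,ik->i", Xc, inv_cov, Xc)


def kde_quantile(samples, q=0.975, grid_size=2000):
    """q-quantile of a Gaussian KDE fitted to samples, via a normalized cumulative sum on a grid."""
    kde = stats.gaussian_kde(samples)
    span = samples.max() - samples.min()
    grid = np.linspace(samples.min() - 0.5 * span, samples.max() + 0.5 * span, grid_size)
    cdf = np.cumsum(kde(grid))
    cdf /= cdf[-1]
    return float(np.interp(q, cdf, grid))


rng = np.random.default_rng(1)
n, p = 300, 4
# Simulated heavy-tailed data: multivariate t with 3 degrees of freedom.
X = rng.standard_normal((n, p)) / np.sqrt(rng.chisquare(3, size=(n, 1)) / 3)
d = np.sqrt(mahalanobis_sq(X))

chi2_cut = np.sqrt(stats.chi2.ppf(0.975, df=p))   # chi-square applies to D^2, hence the sqrt
kde_cut = kde_quantile(d, 0.975)                  # the KDE also puts a little mass below 0
print(f"chi-square cutoff {chi2_cut:.2f}: {np.mean(d > chi2_cut):.1%} flagged")
print(f"KDE cutoff        {kde_cut:.2f}: {np.mean(d > kde_cut):.1%} flagged")
```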

Q4: PCA loadings for my catalyst data are difficult to interpret chemically. How can I improve interpretability?

A: Standard PCA finds mathematically optimal axes, not chemically meaningful ones.

Solution: Apply Varimax rotation.

- Protocol:

- Perform PCA and retain k components.

- Apply Varimax rotation to the k x p loading matrix.

- Rotated loadings tend to have values near 0 or ±1, making it clearer which original variables (e.g., specific elemental compositions, reaction conditions) contribute to each component.
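Scikit-learn's `PCA` does not rotate its components, so a small varimax routine is sketched below using the standard SVD-based algorithm. It expects loadings as a p x k matrix (variables in rows), the transpose of the k x p layout mentioned above. The two-factor dataset is simulated for illustration.

```python
import numpy as np


def varimax(loadings, gamma=1.0, max_iter=100, tol=1e-6):
    """Orthogonal varimax rotation of a p x k loading matrix (variables in rows).

    Returns the rotated loadings and the k x k orthogonal rotation matrix.
    """
    L = np.asarray(loadings, dtype=float)
    p, k = L.shape
    R = np.eye(k)
    crit_old = 0.0
    for _ in range(max_iter):
        LR = L @ R
        # Varimax update target; the SVD of L^T @ target gives the next orthogonal rotation.
        target = LR**3 - (gamma / p) * LR @ np.diag(np.sum(LR**2, axis=0))
        u, s, vt = np.linalg.svd(L.T @ target)
        R = u @ vt
        crit = s.sum()
        if crit_old != 0 and crit < crit_old * (1 + tol):
            break
        crit_old = crit
    return L @ R, R


rng = np.random.default_rng(0)
# Simulated 50 x 6 data driven by two latent factors (three variables each).
f = rng.standard_normal((50, 2))
X = np.hstack([f[:, [0]] + 0.3 * rng.standard_normal((50, 3)),
               f[:, [1]] + 0.3 * rng.standard_normal((50, 3))])
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
eigvals, eigvecs = np.linalg.eigh(np.cov(Z, rowvar=False))
top = np.argsort(eigvals)[::-1][:2]
loadings = eigvecs[:, top] * np.sqrt(eigvals[top])   # p x k, correlation-scale loadings
rotated, R = varimax(loadings)
print(np.round(rotated, 2))
```

Because the rotation is orthogonal, each variable's communality (row sum of squared loadings) and the total variance explained are unchanged. Only the distribution of the loadings across components changes.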

Q5: An observation is flagged as an outlier by Mahalanobis distance but not in the PCA score plot (e.g., within Hotelling's T² ellipse). Why the discrepancy?

A: The discrepancy tells you where in the multivariate space the observation deviates:

| Detection Method | What it Captures | Reason for Discrepancy |
|---|---|---|
| Mahalanobis Distance | Global outliers: deviate in the full multivariate space. | The observation may have an unusual combination of variables not apparent in the first 2-3 PCs. |
| PCA Score Plot (2D) | Outliers in the dominant variance structure. | The outlier's deviation lies in lower-variance PCs (e.g., PC4 or PC5) that are not visualized. |
| PCA Residual (Q-statistic) | Structural outliers: poorly modeled by the PCA model. | Captures variance not explained by the retained PCs. |

- Protocol: Always compute both the Hotelling's T² (variation within PCA model) and the Q-statistic (distance to PCA model) for comprehensive outlier diagnosis.
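Below is a minimal sketch of both statistics from one PCA model, assuming NumPy and SciPy. The limits shown are simple choices for illustration: a large-sample χ² approximation for T² and an empirical 95th percentile for Q. The Jackson-Mudholkar approximation is a common analytical alternative for Q. In the simulation, observation 0 has every value within the normal range but breaks the correlation between features. That is exactly the case that shows up in Q rather than T².

```python
import numpy as np
from scipy import stats


def pca_t2_q(X, k):
    """Hotelling's T^2 (variation inside the PCA model) and Q/SPE (distance to the model).

    X is standardized internally; the PCA model keeps the first k components.
    """
    X = np.asarray(X, dtype=float)
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    _, s, Vt = np.linalg.svd(Z, full_matrices=False)   # rows of Vt are the PCA loadings
    P = Vt[:k].T                                         # p x k loading matrix
    T = Z @ P                                            # n x k score matrix
    score_var = s[:k] ** 2 / (Z.shape[0] - 1)            # eigenvalues of the correlation matrix
    t2 = np.sum(T**2 / score_var, axis=1)
    residual = Z - T @ P.T
    q = np.sum(residual**2, axis=1)
    return t2, q


rng = np.random.default_rng(0)
latent = rng.standard_normal((100, 1))
X = latent + 0.1 * rng.standard_normal((100, 4))   # four strongly correlated features
X[0] = [1.0, -1.0, 1.0, -1.0]                       # each value in range, correlation broken

t2, q = pca_t2_q(X, k=1)
t2_limit = stats.chi2.ppf(0.95, df=1)   # large-sample approximation for T^2
q_limit = np.percentile(q, 95)          # simple empirical limit for Q
print(f"row 0: T2 = {t2[0]:.2f} (limit {t2_limit:.2f}), Q = {q[0]:.2f} (limit {q_limit:.2f})")
```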

Experimental Protocol: Integrated PCA-Mahalanobis Outlier Detection

Objective: Identify aberrant experiments in a dataset of 50 catalyst formulations evaluated for yield, selectivity, and 5 physicochemical properties.

Data Preparation:

- Assemble a 50 × 7 data matrix.

- Log-transform skewed variables (e.g., impurity counts).

- Standardize all columns to mean=0, variance=1.

PCA Modeling:

- Perform PCA on the standardized matrix.

- Retain PCs with eigenvalue >1 (Kaiser criterion), explaining 88% variance in this example.

- Save scores and loadings matrices.

Mahalanobis Distance in PC Space:

- Use the retained k PC scores.

- Calculate the covariance matrix of the k scores (diagonal matrix of eigenvalues).

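- Compute the Mahalanobis distance for each observation: $D_m = \sqrt{\mathbf{t}\,\Lambda_k^{-1}\,\mathbf{t}^\top}$, where $\mathbf{t}$ is the observation's row vector of k scores and $\Lambda_k$ is the diagonal matrix of the retained eigenvalues.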

Thresholding & Validation:

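- Calculate the theoretical threshold: under multivariate normality $D_m^2$ follows a $\chi^2$ distribution with k degrees of freedom, so flag observations with $D_m^2 > \chi^2_{k,\,0.95}$.

- Visually inspect outliers in a T² vs. Q-residual plot, and examine contribution plots for the flagged samples.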

- Correlate flagged samples with experimental logs (e.g., atypical reagent batch, reactor calibration event).
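The protocol can be sketched end to end as follows, assuming NumPy and SciPy. The 50 x 7 matrix is simulated: two latent factors, one skewed count column, and one planted aberrant run in row 7. The number of retained components and the explained variance will therefore differ from the 88% quoted above. `pca_mahalanobis_screen` is a hypothetical name.

```python
import numpy as np
from scipy import stats


def pca_mahalanobis_screen(X, log_cols=(), alpha=0.05):
    """Log-transform, standardize, PCA with Kaiser criterion, D_m in PC space, chi-square cutoff."""
    X = np.array(X, dtype=float)                       # copy, so the caller's array is untouched
    for j in log_cols:
        X[:, j] = np.log1p(X[:, j])                    # log(1 + x) tolerates zero counts
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    eigvals, eigvecs = np.linalg.eigh(np.cov(Z, rowvar=False))
    order = np.argsort(eigvals)[::-1]                  # eigh returns ascending order
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    k = max(1, int(np.sum(eigvals > 1.0)))             # Kaiser criterion
    scores = Z @ eigvecs[:, :k]
    d2 = np.sum(scores**2 / eigvals[:k], axis=1)       # D_m^2 = t Lambda_k^{-1} t^T
    cutoff = np.sqrt(stats.chi2.ppf(1 - alpha, df=k))  # compare D_m with sqrt of the chi-square quantile
    explained = eigvals[:k].sum() / eigvals.sum()
    return np.sqrt(d2), cutoff, k, explained


rng = np.random.default_rng(42)
n = 50
f = rng.standard_normal((n, 2))
X = np.column_stack(
    [f[:, 0] + 0.3 * rng.standard_normal(n) for _ in range(3)]     # yield, selectivity, property 1
    + [f[:, 1] + 0.3 * rng.standard_normal(n) for _ in range(3)]   # properties 2-4
    + [rng.poisson(3, n)]                                          # impurity count (skewed)
)
X[7, :3] = 6.0   # planted aberrant experiment

d, cutoff, k, explained = pca_mahalanobis_screen(X, log_cols=[6])
print(f"k = {k} PCs ({explained:.0%} variance), cutoff D_m = {cutoff:.2f}")
print("flagged rows:", np.flatnonzero(d > cutoff))
```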

Visualization: Outlier Detection Workflow

Title: Workflow for Outlier Detection in Catalyst Data

Title: Interpreting Outliers via T² and Q-Statistics

The Scientist's Toolkit: Research Reagent & Software Solutions

| Item / Solution | Function in Outlier Analysis |
|---|---|
| Standard Scaling Library (e.g., Scikit-learn StandardScaler) | Centers and scales variables to unit variance, a critical pre-processing step for PCA/Mahalanobis. |
| Robust Covariance Estimation (e.g., Minimum Covariance Determinant) | Calculates a covariance matrix less influenced by outliers, improving Mahalanobis distance reliability. |
| Chemical Data Standardizer (e.g., RDKit) | Standardizes catalyst structural representations (SMILES) to ensure consistent variable generation. |
| High-Performance Computing (HPC) Cluster Access | Enables bootstrapping and Monte Carlo simulations for determining robust statistical thresholds. |
| Electronic Lab Notebook (ELN) Integration | Links statistical outliers back to raw experimental metadata (lot numbers, instrument IDs) for root-cause analysis. |

Technical Support Center: Troubleshooting & FAQs for Outlier Detection in Catalyst Research

This support center addresses common issues encountered when applying Isolation Forests, LOF, and One-Class SVM to catalyst performance datasets within pharmaceutical and materials science research.

Frequently Asked Questions (FAQs)

Q1: My Isolation Forest identifies all high-activity catalyst data points as outliers. What is the cause and how can I fix this?

A: Isolation Forest scores points by how easily they can be isolated, so genuinely rare high-activity catalysts can legitimately receive high anomaly scores. The contamination parameter only sets the cutoff that converts these scores into labels. If it is set higher than the true fraction of anomalies, high performers end up above the cutoff. Set contamination explicitly to a small float (e.g., 0.01) that reflects the expected proportion of true anomalies (e.g., failed synthesis batches, not high performers), or use 'auto' and inspect the scores directly.
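Below is a small scikit-learn illustration using simulated data in which five genuinely high-performing catalysts sit far from the bulk. It shows that the contamination value changes how many points are labeled -1, while `score_samples` (the continuous score) is the same for both fits. The data and settings are assumptions for demonstration only.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(0)
# Hypothetical standardized features (e.g., TOF and selectivity) for 200 catalysts:
# 195 typical runs plus 5 genuinely high performers clustered far from the bulk.
X = np.vstack([rng.normal(0.0, 1.0, size=(195, 2)), rng.normal(4.0, 0.3, size=(5, 2))])

models, counts = {}, {}
for contamination in (0.10, 0.01):
    model = IsolationForest(contamination=contamination, random_state=0).fit(X)
    models[contamination] = model
    counts[contamination] = int(np.sum(model.predict(X) == -1))   # predict: -1 = anomaly, 1 = inlier
    print(f"contamination={contamination}: {counts[contamination]} of {len(X)} flagged")

# The continuous score is identical for both fits; only the cutoff differs.
scores = -models[0.01].score_samples(X)   # higher = more anomalous
print("five highest-scoring rows:", np.argsort(scores)[-5:])
```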

Q2: When using LOF on my multi-batch catalyst dataset, the outlier scores vary dramatically each time I run the algorithm. Why is this happening?

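A: Scikit-learn's LOF is deterministic for a fixed dataset and fixed parameters. Large run-to-run differences therefore usually mean the input itself changes between runs (e.g., a different subset of batches, or a randomized preprocessing step). Strong sensitivity to such changes points to the n_neighbors parameter. Catalyst data often contain clusters from different experimental batches, and a small neighborhood makes the density estimate depend on local cluster structure. Increase n_neighbors (e.g., from 20 to 50 or more) to make the density estimate more robust to local cluster variations. Always apply feature scaling (e.g., StandardScaler) before using LOF, as it is distance-based.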
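Below is a scikit-learn sketch on simulated two-batch data: one diffuse batch, one tight batch, and a single odd run between them. Refitting with the same data and parameters gives identical scores, while changing `n_neighbors` changes the odd run's LOF value. The batch geometry is an assumption for illustration.

```python
import numpy as np
from sklearn.neighbors import LocalOutlierFactor
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical two-batch data: one diffuse batch, one tight batch, one odd run in between.
batch_a = rng.normal([0.0, 0.0], [1.0, 1.0], size=(60, 2))
batch_b = rng.normal([6.0, 6.0], [0.3, 0.3], size=(60, 2))
odd_run = np.array([[3.0, 3.0]])
X = StandardScaler().fit_transform(np.vstack([batch_a, batch_b, odd_run]))

for k in (5, 20, 50):
    lof = LocalOutlierFactor(n_neighbors=k)
    labels = lof.fit_predict(X)                 # -1 = outlier under the default threshold
    factor = -lof.negative_outlier_factor_      # about 1 for inliers, larger for outliers
    print(f"n_neighbors={k}: odd-run LOF = {factor[-1]:.2f}, {np.sum(labels == -1)} points flagged")
```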

Q3: My One-Class SVM model trained on "normal" catalyst yield data fails to detect known faulty samples in testing. What should I check?

A: Focus on feature scaling, the kernel parameters, and nu. The RBF kernel is distance-based, so scale the features first and tune gamma (the kernel width) together with nu. nu is an upper bound on the fraction of training points treated as outliers; set it slightly higher than the proportion of anomalies you expect in your "normal" training set. A linear kernel is rarely a good fallback for this task. A linear One-Class SVM separates the data from the origin with a single hyperplane, so it cannot enclose a region of normal operation in centered, standardized feature space.
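Below is a scikit-learn sketch. The "normal" runs are simulated and described by temperature, pressure, and precursor concentration, and two known faulty runs each deviate in one variable. Scaling is done inside a pipeline so the same transform is applied at prediction time. `gamma='scale'` is scikit-learn's data-dependent default and serves here as a starting point before tuning.

```python
import numpy as np
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import OneClassSVM

rng = np.random.default_rng(0)
# Hypothetical normal runs: temperature (C), pressure (bar), precursor concentration (M).
X_train = rng.normal([350.0, 10.0, 0.50], [5.0, 0.5, 0.02], size=(300, 3))
# Known faulty runs, each about 6 standard deviations off in one variable.
X_faulty = np.array([[380.0, 10.0, 0.50], [350.0, 13.0, 0.50]])

results = {}
for nu in (0.01, 0.05, 0.10):
    model = make_pipeline(StandardScaler(), OneClassSVM(kernel="rbf", nu=nu, gamma="scale"))
    model.fit(X_train)
    train_flagged = float(np.mean(model.predict(X_train) == -1))   # roughly tracks nu
    faulty_pred = model.predict(X_faulty)                          # -1 = anomaly
    results[nu] = (train_flagged, faulty_pred)
    print(f"nu={nu}: {train_flagged:.1%} of training runs flagged, faulty runs -> {faulty_pred}")
```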

Q4: How do I handle categorical features (e.g., catalyst support type, dopant identity) when preprocessing data for these algorithms? A: None of these algorithms natively handles categorical data, so these features must be encoded. For Isolation Forest, One-Hot Encoding is often sufficient. For LOF and One-Class SVM, which rely on distance metrics, consider Target Encoding (which requires a target variable such as yield) or similar techniques that give categories meaningful numeric values, followed by robust scaling. Avoid simple Label Encoding, which creates false ordinal relationships.
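Below is a preprocessing sketch with scikit-learn's `ColumnTransformer`: it one-hot encodes the categorical columns and robust-scales the numeric ones. The records are hypothetical. scikit-learn 1.3 and later also provide a `TargetEncoder`, which needs a target column such as yield. The 0/1 indicator columns are left unscaled here, which sets how strongly a category change counts in distance-based methods relative to the numeric features. Revisit that weighting for each dataset.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.preprocessing import OneHotEncoder, RobustScaler

# Hypothetical catalyst records.
df = pd.DataFrame({
    "support": ["SiO2", "Al2O3", "TiO2", "SiO2", "Al2O3"],
    "dopant": ["Ce", "none", "Ce", "La", "none"],
    "temperature_C": [350, 360, 355, 420, 352],
    "loading_wt_pct": [2.1, 2.3, 2.2, 2.0, 2.1],
})

preprocess = ColumnTransformer(
    [
        ("categorical", OneHotEncoder(handle_unknown="ignore"), ["support", "dopant"]),
        ("numeric", RobustScaler(), ["temperature_C", "loading_wt_pct"]),
    ],
    sparse_threshold=0,   # always return a dense array
)
X = preprocess.fit_transform(df)
print(X.shape)  # 3 support + 3 dopant indicator columns, then 2 robust-scaled numeric columns
```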

Q5: For validating outlier detection in my catalyst research, what quantitative metrics are most appropriate beyond precision/recall? A: Since labeled outlier data is often scarce, use metrics that evaluate the scoring ranking:

- Area Under the Receiver Operating Characteristic Curve (AUC-ROC): Measures the model's ability to rank anomalies higher than normal points.

- Average Precision (AP): More informative than AUC-ROC when the class imbalance (normal vs. anomaly) is extreme. Create a ground truth set from known synthesis failures or characterization faults to calculate these.

Comparative Performance Data

The following table summarizes a benchmark experiment on a simulated catalyst dataset containing 5% injected anomalies (simulated instrument errors and failed synthesis conditions).

Table 1: Algorithm Performance Comparison on Simulated Catalyst Dataset

| Metric | Isolation Forest | Local Outlier Factor (LOF) | One-Class SVM (RBF Kernel) |
|---|---|---|---|
| Average Precision (AP) | 0.89 | 0.92 | 0.85 |
| Training Time (s) | 0.45 | 0.12 | 5.73 |
| Inference Time (ms/sample) | 0.08 | 1.15 | 0.21 |
| Key Hyperparameter | contamination=0.05 | n_neighbors=35 | nu=0.05, gamma=0.1 |
| Sensitivity to Scaling | No | Yes | Yes |

Experimental Protocol: Benchmarking Outlier Detectors

Objective: To evaluate and select the optimal outlier detection model for identifying failed catalyst synthesis runs from process data.

Materials: Historical dataset of catalyst synthesis parameters (temperature, time, precursor concentrations) and corresponding performance metric (e.g., surface area).

Method:

- Data Preparation: Isolate data from successful syntheses (performance metric within 3σ of target). Scale all features with RobustScaler (median/IQR scaling).

- Ground Truth Creation: Inject synthetic anomalies (5% of data) by:

- Randomly shifting a process parameter beyond operational limits (simulating error).

- Adding random noise to all parameters of select samples (simulating corrupted data entry).

- Model Training (use an 80% split of the normal data for training):

- Isolation Forest: train with `n_estimators=150`, `contamination='auto'`.

- LOF: train with `n_neighbors=35`, `metric='euclidean'`, and `novelty=True` (required in scikit-learn to score data other than the training set).

- One-Class SVM: train with `kernel='rbf'`, `nu=0.05`.

- Evaluation: Predict on the full dataset (including injected anomalies). Calculate Average Precision (AP) using the injected anomaly labels.
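The benchmarking protocol can be sketched with scikit-learn as below. Everything about the data is an assumption: 950 simulated successful syntheses with four process parameters, plus 50 anomalies injected by the two mechanisms above. The resulting scores will therefore not match Table 1. The anomaly score is the negated `decision_function` of each model, so higher means more anomalous. ROC-AUC is reported alongside AP for comparison.

```python
import numpy as np
from sklearn.ensemble import IsolationForest
from sklearn.metrics import average_precision_score, roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import LocalOutlierFactor
from sklearn.preprocessing import RobustScaler
from sklearn.svm import OneClassSVM

rng = np.random.default_rng(0)
# Hypothetical successful-synthesis records: temperature, time, two precursor concentrations.
normal = rng.normal([450, 4.0, 0.50, 0.20], [10, 0.3, 0.03, 0.02], size=(950, 4))

# Inject 5% anomalies (50 rows) using the two mechanisms in the protocol.
anomalies = normal[rng.choice(len(normal), 50, replace=False)].copy()
anomalies[:25, 0] += rng.choice([-1, 1], 25) * 80                   # parameter beyond limits
anomalies[25:] += rng.normal(0, 1, (25, 4)) * [60, 2.0, 0.3, 0.1]   # corrupted data entry
X = np.vstack([normal, anomalies])
y = np.r_[np.zeros(len(normal)), np.ones(len(anomalies))]           # 1 = injected anomaly

train, _ = train_test_split(normal, train_size=0.8, random_state=0)
scaler = RobustScaler().fit(train)
X_train, X_all = scaler.transform(train), scaler.transform(X)

models = {
    "Isolation Forest": IsolationForest(n_estimators=150, contamination="auto", random_state=0),
    "LOF": LocalOutlierFactor(n_neighbors=35, metric="euclidean", novelty=True),
    "One-Class SVM": OneClassSVM(kernel="rbf", nu=0.05, gamma="scale"),
}
results = {}
for name, model in models.items():
    model.fit(X_train)
    score = -model.decision_function(X_all)   # higher = more anomalous
    results[name] = (average_precision_score(y, score), roc_auc_score(y, score))
    print(f"{name:16s} AP={results[name][0]:.3f}  ROC-AUC={results[name][1]:.3f}")
```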

Research Workflow Diagram

Outlier Detection Model Development Workflow for Catalyst Data

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Computational Tools for Outlier Detection Research

| Item | Function in Research | Example/Note |
|---|---|---|
| Scikit-learn Library | Primary Python library implementing Isolation Forest (IF), LOF, and One-Class SVM. | Ensure version >= 1.0 for stable LOF implementation. |
| RobustScaler | Preprocessing scaler that uses median/IQR, robust to outliers in the training data itself. | Critical step before LOF & One-Class SVM. |
| PyOD Library | Specialized Python library for outlier detection with unified APIs and additional algorithms. | Useful for advanced benchmarking. |
| Matplotlib/Seaborn | Visualization libraries for creating score distribution plots and decision boundary visualizations (for 2D projections). | Essential for explaining results to interdisciplinary teams. |
| Ground Truth Dataset | A small, meticulously labeled set of known catalyst synthesis failures or data corruption events. | Used for final model validation; often constructed manually from lab notes. |

Technical Support Center

Troubleshooting Guides

Issue 1: Pipeline Fails During Data Ingestion from High-Throughput Experiment (HTE) Reactors

- Symptoms: Script halts with "ConnectionError" or "DataFormatError"; no data processed.

- Diagnosis: This is typically a source connectivity or schema mismatch issue.

- Resolution:

- Verify network permissions and API key validity for the HTE database.

- Run the `validate_schema()` function in the ingestion module on a single test file to check column names and data types.

- Ensure timestamps are in ISO 8601 format. Use the provided `preprocess/date_parser.py` utility.

- Prevention: Run the `ingestion_test.py` unit test as part of your CI/CD schedule.
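The pipeline's own `validate_schema()` is not shown in this document. The following is a hypothetical stand-in that illustrates the same two checks (required columns with expected types, and ISO 8601 timestamps) using only the Python standard library. The expected schema is invented for illustration. Before Python 3.11, `datetime.fromisoformat` accepts only a subset of ISO 8601, so a trailing "Z" is rewritten as "+00:00" first.

```python
from datetime import datetime

# Hypothetical expected schema (column -> Python type); not the pipeline's actual input_schema.json.
EXPECTED = {"Catalyst_ID": str, "timestamp": str, "TOF": float, "Yield": float}


def is_iso8601(value: str) -> bool:
    """True if value parses as an ISO 8601 date or datetime (a trailing 'Z' is accepted)."""
    try:
        datetime.fromisoformat(value.replace("Z", "+00:00"))
        return True
    except ValueError:
        return False


def check_record(record: dict) -> list:
    """Return human-readable problems found in one ingested record (empty list = OK)."""
    problems = [f"missing column: {col}" for col in EXPECTED if col not in record]
    for col, expected_type in EXPECTED.items():
        if col in record and not isinstance(record[col], expected_type):
            problems.append(f"{col}: expected {expected_type.__name__}, "
                            f"got {type(record[col]).__name__}")
    ts = record.get("timestamp")
    if isinstance(ts, str) and not is_iso8601(ts):
        problems.append(f"timestamp not ISO 8601: {ts!r}")
    return problems


good = {"Catalyst_ID": "A12-Cat07", "timestamp": "2024-05-01T13:45:00Z", "TOF": 1850.0, "Yield": 82.0}
bad = {"Catalyst_ID": "A12-Cat07", "timestamp": "05/01/2024", "TOF": "1850"}
print(check_record(good))
print(check_record(bad))
```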

Issue 2: High False Positive Rate in Catalyst Turnover Frequency (TOF) Outliers

- Symptoms: Pipeline flags an unrealistic number of active catalysts as outliers.

- Diagnosis: Likely due to inappropriate choice of detection algorithm or poorly set sensitivity parameters for your data distribution.

- Resolution:

- Check the distribution of your TOF data using the `visualize_distribution()` function. If it is non-normal, switch from Z-score to IQR or Isolation Forest.

- Adjust the `contamination` parameter in the `config.yaml` file. Start with 0.01 (1%) for stringent detection.

- Check the reaction yield data for flagged catalysts. A low yield may explain a low TOF, making it a valid data point rather than a technical outlier.

- Prevention: Calibrate parameters on a small, validated historical dataset before full deployment.

Issue 3: Automated Report Generation Excludes Key Figures

- Symptoms: PDF report is generated but missing the critical scatter plot of selectivity vs. activity.

- Diagnosis: Path error for the figure file or matplotlib backend conflict in the virtual environment.

- Resolution:

- Check the `output/figures/` directory for `selectivity_vs_activity.png`.

- If it is missing, re-run the `plotting_module.py` script directly. Make sure the non-interactive `Agg` backend is selected before `matplotlib.pyplot` is imported: `import matplotlib; matplotlib.use('Agg')`.

- Verify that the file path in the report template `report_template.j2` is correct.

- Prevention: Use absolute paths defined in the central configuration file.
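Below is a minimal headless-plotting sketch with matplotlib and pathlib that uses the figure name above. It is not the contents of `plotting_module.py`. Resolving the output directory to an absolute path makes the saved location independent of the working directory the report job runs from.

```python
import tempfile
from pathlib import Path

import matplotlib
matplotlib.use("Agg")            # non-interactive backend; select it before importing pyplot
import matplotlib.pyplot as plt


def save_selectivity_vs_activity(activity, selectivity, out_dir: Path) -> Path:
    """Save the selectivity-vs-activity scatter plot and return its absolute path."""
    out_dir = out_dir.resolve()                  # absolute path, independent of the working directory
    out_dir.mkdir(parents=True, exist_ok=True)
    path = out_dir / "selectivity_vs_activity.png"
    fig, ax = plt.subplots()
    ax.scatter(activity, selectivity)
    ax.set_xlabel("Activity (TOF, h$^{-1}$)")
    ax.set_ylabel("Selectivity (%)")
    fig.savefig(path, dpi=150)
    plt.close(fig)                               # release memory in long batch runs
    return path


out = save_selectivity_vs_activity([150, 165, 158, 142], [88, 91, 86, 90],
                                   Path(tempfile.mkdtemp()) / "output" / "figures")
print(out, out.exists())
```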

Frequently Asked Questions (FAQs)

Q1: What is the recommended outlier detection method for initial catalyst screening data? A1: For high-dimensional catalyst performance data (e.g., combining TOF, yield, selectivity, reaction conditions), we recommend starting with an unsupervised ensemble approach. Use Isolation Forest for its efficiency with large datasets and ability to handle complex distributions, followed by DBSCAN to account for local density variations. This combination effectively flags both global and contextual anomalies, such as a promising catalyst under atypical conditions.
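Below is a sketch of the two-stage screen with scikit-learn on simulated data: four standardized features, plus one catalyst with typical performance at an atypical temperature. The `contamination`, `eps`, and `min_samples` values are illustrative. `eps` in particular must be tuned per dataset, for example from a k-distance plot.

```python
import numpy as np
from sklearn.cluster import DBSCAN
from sklearn.ensemble import IsolationForest
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical columns: TOF (h^-1), yield (%), selectivity (%), temperature (C).
typical = rng.normal([150, 70, 85, 350], [10, 5, 3, 5], size=(200, 4))
atypical_conditions = np.array([[150, 70, 85, 420]])   # normal performance, unusual temperature
X = StandardScaler().fit_transform(np.vstack([typical, atypical_conditions]))

if_flag = IsolationForest(contamination=0.02, random_state=0).fit_predict(X) == -1
db_flag = DBSCAN(eps=1.5, min_samples=5).fit_predict(X) == -1   # DBSCAN label -1 = noise

union = np.flatnonzero(if_flag | db_flag)   # send all of these to expert review
both = np.flatnonzero(if_flag & db_flag)    # highest-priority candidates
print("flagged by either:", union)
print("flagged by both:", both)
```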

Q2: How should we handle missing ligand or solvent identifiers in the input data? A2: Do not impute categorical identifiers. The pipeline is configured to flag rows with missing critical identifiers (Catalyst_ID, Ligand, Solvent) in a separate "data quality" report. These rows should be reviewed manually before analysis. For performance metrics (e.g., missing Yield), use median imputation based on the catalyst subclass, but ensure this is documented in the report's methodology section.
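Below is a pandas sketch of both rules on a hypothetical table. Rows with a missing critical identifier go to a separate data-quality table. Missing yields are median-imputed within each catalyst subclass, and an indicator column records which values were imputed, for the methodology section. A subclass with no observed yields stays missing, since there is no median to borrow.

```python
import numpy as np
import pandas as pd

CRITICAL_IDS = ["Catalyst_ID", "Ligand", "Solvent"]

df = pd.DataFrame({
    "Catalyst_ID": ["C1", "C2", None, "C4", "C5", "C6"],
    "Ligand": ["L1", "L2", "L1", None, "L3", "L2"],
    "Solvent": ["THF", "THF", "DMF", "DMF", "THF", "DMF"],
    "subclass": ["phosphine", "phosphine", "NHC", "NHC", "phosphine", "NHC"],
    "Yield": [72.0, np.nan, 55.0, 60.0, 68.0, np.nan],
})

# 1) Route rows with missing identifiers to a data-quality report; never impute identifiers.
missing_id = df[CRITICAL_IDS].isna().any(axis=1)
quality_report = df[missing_id]
clean = df[~missing_id].copy()

# 2) Median-impute Yield within each subclass and record which values were imputed.
clean["Yield_imputed"] = clean["Yield"].isna()
clean["Yield"] = clean["Yield"].fillna(clean.groupby("subclass")["Yield"].transform("median"))
print(quality_report[CRITICAL_IDS])
print(clean[["Catalyst_ID", "subclass", "Yield", "Yield_imputed"]])
```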

Q3: Can the pipeline integrate with our electronic lab notebook (ELN) system?

A3: Yes, but it requires configuration. A standard API connector module is provided for LabArchives and Bruker SampleBook. For other ELNs (e.g., Dotmatics, Benchling), you will need to map the data export schema to our standard input format (data/schemas/input_schema.json). Authentication is handled via OAuth 2.0.

Q4: The pipeline flagged a catalyst as an outlier, but it was a genuine breakthrough. What went wrong? A4: Nothing went wrong statistically, but in a research context this is a critical "false positive". Outlier detection is statistical, not causal: a genuine high-performing catalyst is a scientific outlier but a valid observation. The pipeline includes an "Expert Review Module" for this purpose. Route all algorithmically flagged outliers there for a researcher's final assessment, which can override the flag. If labeled data are available, this feedback can also be used to retrain supervised models.

Supporting Data & Protocols

Table 1: Comparison of Outlier Detection Algorithms on Catalyst Datasets

| Algorithm | Key Principle | Pros for Catalyst Data | Cons for Catalyst Data | Recommended Use Case |
|---|---|---|---|---|
| Z-Score (>3σ) | Distance from the mean in standard deviations. | Simple, fast. | Assumes a normal distribution; detects only global outliers. | Initial scan of a single, normally distributed metric (e.g., yield under standard conditions). |
| Interquartile Range (IQR) | Uses data quartiles; flags points below Q1 - 1.5·IQR or above Q3 + 1.5·IQR. | Robust to non-normal data. | Univariate; ignores feature relationships. | Identifying outliers in individual reaction parameters (e.g., temperature, pressure). |
| Isolation Forest | Randomly partitions the data; anomalies are isolated in fewer splits. | Handles high dimensions and non-normal data. | May miss local anomalies and dense clusters of anomalies. | Primary screening tool for multi-parameter performance data. |
| DBSCAN | Density-based; flags points in low-density regions. | Finds local outliers; no need to specify the number of clusters. | Sensitive to the distance metric and parameters (eps, min_samples). | Finding atypical catalysts within a specific ligand class or condition subset. |

Experimental Protocol: Validating an Automated Outlier Pipeline

Title: Protocol for Benchmarking an Outlier Detection Pipeline on Historical Catalyst Performance Data. Objective: To quantify the precision and recall of the automated pipeline against a manually curated dataset of known anomalies. Materials: See "The Scientist's Toolkit" below. Procedure:

- Data Preparation: Isolate a historical dataset of 500-1000 catalyst experiments where outliers (e.g., failed reactions, instrumental errors, genuine breakthroughs) have been manually labeled.

- Pipeline Execution: Run the raw, unlabeled data through the configured automated pipeline. Export its flagged outlier list with unique identifiers.

- Validation: Compare the pipeline's output against the manual labels using a confusion matrix.

- Metric Calculation:

- Precision = (True Positives) / (All Pipeline-Flagged Points).

- Recall = (True Positives) / (All Manually-Labeled Anomalies).

- F1-Score = 2 * (Precision * Recall) / (Precision + Recall).

- Iteration: Adjust algorithm parameters in `config.yaml` to maximize the F1-Score. Retrain any supervised models if used.
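The metric calculation can be sketched in plain Python from sets of experiment IDs; the IDs below are hypothetical. If parameters are tuned to maximize F1 on the same labeled set, the resulting score is optimistic. Holding out part of the labeled data for a final check is therefore advisable.

```python
def confusion_metrics(flagged_ids, labeled_ids, all_ids):
    """Confusion-matrix counts plus precision, recall, and F1 from sets of experiment IDs."""
    flagged, labeled, universe = set(flagged_ids), set(labeled_ids), set(all_ids)
    tp = len(flagged & labeled)
    fp = len(flagged - labeled)
    fn = len(labeled - flagged)
    tn = len(universe - flagged - labeled)
    precision = tp / (tp + fp) if flagged else 0.0
    recall = tp / (tp + fn) if labeled else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"TP": tp, "FP": fp, "FN": fn, "TN": tn,
            "precision": precision, "recall": recall, "F1": f1}


# Hypothetical benchmark: 500 historical experiments, 4 manually labeled anomalies.
all_ids = [f"EXP{i:04d}" for i in range(1, 501)]
manual_labels = {"EXP0007", "EXP0042", "EXP0100", "EXP0250"}
pipeline_flags = {"EXP0007", "EXP0042", "EXP0300"}
metrics = confusion_metrics(pipeline_flags, manual_labels, all_ids)
print(metrics)
```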

Visualizations

Diagram 1: Automated Outlier Detection Workflow

Diagram 2: Key Catalyst Performance Metrics & Relationships

The Scientist's Toolkit

| Research Reagent / Solution | Function in Pipeline Context |
|---|---|
| Scikit-learn Library (v1.3+) | Core Python library providing the IsolationForest, DBSCAN, and preprocessing algorithms for outlier detection. |
| Catalyst Performance Dataset (Historical) | Labeled dataset of past experiments, essential for training, validating, and calibrating the detection algorithms. |
| Standardized Data Schema (JSON/YAML) | A predefined template dictating required fields (Catalyst_ID, Conditions, Metrics) to ensure consistent data ingestion. |
| Jupyter Notebook / Python Scripts | Environment for developing, testing, and iterating on individual pipeline modules before full automation. |
| Configuration File (config.yaml) | Central file to adjust algorithm parameters, file paths, and sensitivity thresholds without altering code. |
| Virtual Environment (e.g., conda, venv) | Isolated Python environment to manage specific library versions (pandas, numpy, scikit-learn, matplotlib) and ensure reproducibility. |

Frequently Asked Questions (FAQs)

Q1: Our high-throughput catalyst screening data shows unusually high variance in replicate measurements for a specific library plate. What are the primary causes and how do we isolate them?

A: High inter-replicate variance is a common anomaly. Follow this systematic troubleshooting protocol.

Protocol: Instrument & Contamination Check

- Step 1: Run the calibration standard and blank solvent wells on the suspected plate reader or GC/HPLC system. Compare results to historical calibration data (see Table 1).

- Step 2: Visually inspect the microplate for condensation, evaporation at the edges, or particulate matter. Check the liquid handler tips for clogs or wear.

- Step 3: Re-prepare and re-run a control catalyst formulation from the anomalous plate on a fresh microplate.

Protocol: Data Artifact Identification

- Step 1: Generate a plate heatmap of the coefficient of variation (CV%) for your key performance metric (e.g., turnover frequency, yield).

- Step 2: Apply a spatial filter (e.g., median filter) to distinguish random outliers from systematic edge or row/column effects.
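Below is a sketch of both steps with NumPy and SciPy on a simulated 96-well plate (three replicate readings per well), with an added noisy first column and one erratic interior well. A 3 x 3 median filter estimates the local CV level, and the residual highlights isolated wells. The column profile of CV% exposes systematic edge or column effects. Filter size and plate layout are assumptions.

```python
import numpy as np
from scipy.ndimage import median_filter

rng = np.random.default_rng(0)
# Hypothetical 96-well plate (8 rows x 12 columns) with 3 replicate yield readings per well.
replicates = rng.normal(60.0, 1.5, size=(3, 8, 12))
replicates[:, :, 0] += rng.normal(0.0, 6.0, size=(3, 8))   # noisy first column (edge effect)
replicates[:, 4, 6] = [60.0, 30.0, 90.0]                    # one erratic interior well

# Step 1: CV% map (sample standard deviation / mean of the replicates, per well).
cv_pct = 100 * replicates.std(axis=0, ddof=1) / replicates.mean(axis=0)

# Step 2: a 3x3 median filter estimates the local CV level; the residual isolates
# single wells that stand out from their neighbourhood.
local_cv = median_filter(cv_pct, size=3, mode="nearest")
residual = cv_pct - local_cv

# Systematic effects show up in row/column profiles rather than in single wells.
col_median = np.median(cv_pct, axis=0)
print("most isolated well (row, col):", np.unravel_index(np.argmax(residual), residual.shape))
print("column median CV%:", np.round(col_median, 1))
```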

Q2: When applying statistical outlier detection (e.g., Z-score, Grubbs' test) to our catalyst library data, we get too many false positives from inherently active catalysts. How should we adjust our model?

A: This indicates your model is not accounting for the underlying distribution of "high performers" vs. "true anomalies."

- Protocol: Distribution-Sensitive Modeling

- Step 1: Do not apply a global model. First, segment your library by catalyst core structure (e.g., ligand class, metal center).

- Step 2: Within each structural cluster, transform your activity data (e.g., log transformation) to approximate a normal distribution before applying Z-score. Alternatively, use the Median Absolute Deviation (MAD) method, which is more robust to a non-normal distribution.

- Step 3: Set adaptive thresholds per cluster. For skewed data, a Modified Z-Score threshold of |M| > 3.5 is often more effective than a standard Z-score threshold of |Z| > 3.
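Below is a pandas sketch of Steps 1-3 on hypothetical data for two ligand classes whose activities differ by an order of magnitude. It log-transforms TOF, computes Modified Z-Scores within each class, and flags |M| > 3.5.

```python
import numpy as np
import pandas as pd


def modified_z(s: pd.Series) -> pd.Series:
    """Modified Z-Score within one group; zeros if the group's MAD is 0 (score undefined)."""
    med = s.median()
    mad = (s - med).abs().median()
    return 0.6745 * (s - med) / mad if mad > 0 else s * 0.0


# Hypothetical TOF values (h^-1) for two ligand classes with very different activity levels.
df = pd.DataFrame({
    "ligand_class": ["phosphine"] * 6 + ["NHC"] * 6,
    "TOF": [110, 125, 118, 131, 122, 15, 980, 1040, 1010, 1100, 995, 1020],
})
df["log_TOF"] = np.log10(df["TOF"])
df["M"] = df.groupby("ligand_class")["log_TOF"].transform(modified_z)
df["flag"] = df["M"].abs() > 3.5
print(df)
```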

Q3: We suspect some "high-performing" catalyst hits are actually the result of synthetic or purification errors (e.g., residual solvent, incorrect stoichiometry). How can we detect these synthesis-stage anomalies?

A: This requires correlating HTS performance data with analytical data from the synthesis step.

- Protocol: Cross-Modal Anomaly Detection

- Step 1: Integrate QC data (e.g., LC-MS purity, NMR yield) for each catalyst precursor into your analysis database.

- Step 2: Create a bivariate model. For example, plot catalytic yield against LC-MS purity. True high performers will cluster in the high-purity, high-yield quadrant.

- Step 3: Flag catalysts showing high HTS activity but low synthesis purity as "suspicious anomalies" for re-synthesis and validation.
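The bivariate flag can be sketched in a few lines of pandas. The column names, the "high activity" cut at the 90th percentile, and the 95% purity floor are hypothetical placeholders to be replaced with your own QC specifications.

```python
import pandas as pd


def flag_cross_modal(df: pd.DataFrame, activity_col: str = "yield_pct",
                     purity_col: str = "lcms_purity_pct",
                     activity_quantile: float = 0.90, min_purity: float = 95.0) -> pd.DataFrame:
    """Mark catalysts in the high-activity / low-purity quadrant as suspicious anomalies."""
    high_activity = df[activity_col] >= df[activity_col].quantile(activity_quantile)
    low_purity = df[purity_col] < min_purity
    out = df.copy()
    out["suspicious"] = high_activity & low_purity  # candidates for re-synthesis and validation
    return out
```

High-activity catalysts that also pass the purity floor stay in the "true high performer" quadrant and are not flagged.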

Quantitative Data Summary

Table 1: Calibration Standard Tolerances for Common HTS Readouts

| Analytical Method | Target Metric | Acceptable Range | Anomaly Threshold |
|---|---|---|---|
| UV-Vis Plate Reader | Absorbance (450 nm) | 0.990 - 1.010 AU | <0.980 or >1.020 AU |
| GC-FID | Area Count (Standard) | 95,000 - 105,000 | <90,000 or >110,000 |
| HPLC-UV | Retention Time | ± 0.1 min drift | > ± 0.2 min drift |
| Luminescence | RLU (Control) | CV < 5% | CV > 15% |

Table 2: Common Outlier Detection Methods for Catalyst HTS

| Method | Best For | Key Parameter | Weakness |
|---|---|---|---|
| Z-Score | Normally distributed activity data. | Threshold (typically ±3σ) | Assumes normality; the mean and SD are themselves distorted by extreme values, which can mask outliers. |
| MAD (Median Abs. Deviation) | Non-normal, skewed data distributions. | Modified Z-score threshold (typically 3.5) | Less efficient for very small sample sizes. |
| Isolation Forest | High-dim. data, multi-variate anomalies. | Number of trees, sub-sample size. | Can struggle with very dense anomaly clusters. |
| DBSCAN | Spatial anomalies (e.g., plate effects). | Epsilon (ε), min samples. | Requires parameter tuning for each dataset. |

Experimental Protocols

Protocol: Implementing the MAD-Based Outlier Filter for Catalyst Turnover Frequency (TOF)

- Objective: To robustly identify anomalous TOF values within a defined catalyst sub-library.

- Materials: See "The Scientist's Toolkit" below.

- Procedure:

- For a selected catalyst family (N > 30), compile all TOF values into a vector X.

- Calculate the median of X: M = median(X).

- Calculate the absolute deviations: AD = |X_i - M|.

- Calculate the median of these deviations: MAD = median(AD).

- Compute the modified Z-score for each data point: M_i = 0.6745 * (X_i - M) / MAD. (Because MAD / 0.6745 is a consistent estimator of the standard deviation of a normal distribution, M_i is on the same scale as an ordinary Z-score.)

- Flag any catalyst where |M_i| > 3.5 as a statistical outlier for further investigation.
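The procedure translates directly into NumPy. This is a minimal sketch; the function name, the small-sample warning, and raising an error when MAD is zero (which happens when more than half the TOF values are identical) are assumptions added for illustration.

```python
import warnings

import numpy as np


def mad_outlier_filter(tof, threshold: float = 3.5, min_n: int = 30):
    """Return (modified Z-scores, boolean outlier mask) for one catalyst family."""
    x = np.asarray(tof, dtype=float)
    if x.size <= min_n:
        warnings.warn(f"Protocol assumes N > {min_n}; got N = {x.size}.")
    m = np.median(x)                     # M = median(X)
    mad = np.median(np.abs(x - m))       # MAD = median(|X_i - M|)
    if mad == 0:
        raise ValueError("MAD is zero; the modified Z-score is undefined.")
    modified_z = 0.6745 * (x - m) / mad  # M_i
    return modified_z, np.abs(modified_z) > threshold
```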

Protocol: Detecting Spatial Plate Effects in HTS

- Objective: Identify systematic errors based on a catalyst's location on a microplate.

- Procedure:

- Arrange the primary activity data (e.g., conversion %) in its 96- or 384-well plate layout.

- Calculate the median activity for all wells, for each row, and for each column.

- Generate two heatmaps: (i) raw activity, (ii) row- and column-normalized activity (activity / (row median * column median / overall median)).

- Apply a spatial clustering algorithm (like DBSCAN) to the normalized heatmap. Wells clustered away from the main dense region (e.g., all edge wells forming a separate cluster) indicate a spatial artifact.
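The normalization and clustering steps can be sketched with NumPy and scikit-learn's `DBSCAN`. Clustering on (row, column, weighted normalized activity) is one possible reading of "clustering the heatmap", and `value_weight`, `eps`, and `min_samples` are hypothetical defaults that need tuning per plate format.

```python
import numpy as np
from sklearn.cluster import DBSCAN


def normalize_plate(plate: np.ndarray) -> np.ndarray:
    """activity / (row median * column median / overall median), well by well."""
    row_med = np.median(plate, axis=1, keepdims=True)
    col_med = np.median(plate, axis=0, keepdims=True)
    expected = row_med * col_med / np.median(plate)
    return plate / expected


def spatial_dbscan(norm: np.ndarray, value_weight: float = 10.0,
                   eps: float = 1.5, min_samples: int = 4) -> np.ndarray:
    """Cluster wells on (row, column, weighted normalized activity).

    With eps = 1.5, adjacent wells (including diagonals) can link; value_weight
    (hypothetical) controls how large an activity jump breaks that link.
    Label -1 is DBSCAN noise; wells outside the main cluster are candidate artifacts.
    """
    rows, cols = np.indices(norm.shape)
    features = np.column_stack([rows.ravel(), cols.ravel(), value_weight * norm.ravel()])
    labels = DBSCAN(eps=eps, min_samples=min_samples).fit_predict(features)
    return labels.reshape(norm.shape)
```

A purely multiplicative row-by-column pattern normalizes to a constant, so any structure left in the normalized heatmap is not explained by row and column medians alone.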


The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Function in HTS Anomaly Detection |
|---|---|
| Internal Standard Kits | Contain stable isotope-labeled analogs of reaction products. Added before analysis to correct for instrument drift and injection-volume errors, isolating true catalytic performance anomalies. |
| Calibration Standard Plates | Pre-formulated microplates with known concentrations of reactants/products. Used to validate the accuracy and precision of the analytical readout before each screening batch. |
| Metal Scavenger Resins | Used in post-reaction quenching to selectively remove residual catalyst metals. Help determine whether high activity comes from the intended catalyst or from leached, homogeneous metal species (an artifact). |
| Automated Liquid Handlers | Precision robots for nanoliter-scale reagent dispensing. Critical for minimizing volume-based variance, a major source of false-positive outliers in replicate wells. |
| High-Throughput LC-MS Systems | Provide rapid purity and identity confirmation for catalyst libraries pre- and post-screening. Essential data for cross-modal anomaly detection (FAQ Q3). |
| Statistical Software (e.g., JMP, Spotfire) | Platforms with built-in spatial statistics and multivariate outlier detection algorithms (like PCA-based models) specifically designed for plate-based HTS data analysis. |

Handling Noisy and Complex Data: Strategies for Robust Outlier Management

Technical Support Center: Troubleshooting Guides & FAQs

FAQs on Hyperparameter Sensitivity Analysis in Catalyst Research

Q1: During a sensitivity analysis for my Random Forest model on catalyst outlier detection, the model performance (F1-score) varies wildly with small changes to max_depth. What is the primary cause and how can I stabilize it?

A: High sensitivity to max_depth often indicates your model is overfitting to noise in the training data, which is common in catalyst datasets with inherent high variance. To stabilize:

- Increase min_samples_leaf (e.g., from 1 to 5 or 10) to force the tree to learn more robust rules.

- Increase n_estimators (e.g., to 500 or 1000) to leverage the ensemble's averaging effect.

- Apply more aggressive outlier pre-filtering before the hyperparameter tuning step to reduce noise.

Protocol:

- Retrain with max_depth fixed at a moderate value (e.g., 10).

- Perform a grid search on min_samples_leaf: [1, 3, 5, 10] and n_estimators: [100, 300, 500].

- Use 10-fold cross-validation, ensuring each fold maintains the same class distribution of inliers/outliers.
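This protocol maps onto scikit-learn's `GridSearchCV` with a `StratifiedKFold` splitter, which preserves the inlier/outlier ratio in every fold. A minimal sketch, assuming a labeled dataset where y = 1 marks an outlier so that `scoring="f1"` scores the outlier class; the function name is hypothetical.

```python
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold


def tune_rf_for_stability(X, y, param_grid=None, n_splits=10, random_state=0):
    """Grid-search min_samples_leaf / n_estimators with max_depth fixed at 10."""
    if param_grid is None:
        param_grid = {"min_samples_leaf": [1, 3, 5, 10], "n_estimators": [100, 300, 500]}
    model = RandomForestClassifier(max_depth=10, random_state=random_state)
    # Stratified folds keep the inlier/outlier class ratio identical across folds.
    cv = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=random_state)
    search = GridSearchCV(model, param_grid, scoring="f1", cv=cv)
    return search.fit(X, y)
```

Inspecting `search.cv_results_["std_test_score"]` alongside the mean shows whether larger leaves actually reduce fold-to-fold variance.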

Q2: When tuning a One-Class SVM for novel catalyst failure detection, the ROC-AUC plateaus across a wide range of nu values. How do I interpret this and select the best value?

A: A plateau suggests the model is capturing the core data distribution robustly, which is positive. Selection should then be guided by operational context from your drug development pipeline:

- Prioritize a higher nu (e.g., 0.1) if missing a potential outlier (false negative) is very costly (e.g., it would lead to a failed clinical batch).

- Prioritize a lower nu (e.g., 0.01) if false alarms (false positives) waste significant lab resources on re-testing.

Protocol:

- Calculate precision and recall for outlier detection at each nu value on a held-out validation set containing known anomaly types.

- Choose the nu that best aligns with your predefined cost-benefit ratio for errors.
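A minimal sketch of the nu sweep, assuming the training set contains mostly normal catalysts and the validation labels use 1 for a known anomaly. The per-error costs `cost_fn` and `cost_fp` are hypothetical placeholders for your pipeline's actual cost-benefit ratio.

```python
import numpy as np
from sklearn.metrics import precision_score, recall_score
from sklearn.svm import OneClassSVM


def select_nu(X_train, X_val, y_val, nus=(0.001, 0.01, 0.05, 0.1),
              cost_fn: float = 10.0, cost_fp: float = 1.0):
    """Sweep nu, score the outlier class on labeled validation data, pick the lowest-cost nu."""
    y_val = np.asarray(y_val)
    results = []
    for nu in nus:
        model = OneClassSVM(kernel="rbf", gamma="scale", nu=nu).fit(X_train)
        pred = (model.predict(X_val) == -1).astype(int)  # OneClassSVM returns -1 for outliers
        fn = int(np.sum((y_val == 1) & (pred == 0)))     # missed anomalies
        fp = int(np.sum((y_val == 0) & (pred == 1)))     # false alarms
        results.append({
            "nu": nu,
            "precision": precision_score(y_val, pred, zero_division=0),
            "recall": recall_score(y_val, pred, zero_division=0),
            "cost": cost_fn * fn + cost_fp * fp,
        })
    best = min(results, key=lambda r: r["cost"])
    return best, results
```

Raising `cost_fn` relative to `cost_fp` pushes the selection toward higher nu, matching the first guideline above.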

Q3: My gradient boosting model (XGBoost) for predicting catalyst degradation shows excellent cross-validation scores but fails completely on new experimental batches. What is the likely hyperparameter-related issue?

A: This is a classic sign of data leakage or over-tuning to the specific validation split, causing failure to generalize. Key hyperparameters to check:

- subsample and colsample_bytree: Set them below 1.0 (e.g., 0.8) so that each tree is trained on a different random subset of rows and features, which improves generalization.

- learning_rate (eta): If it is too high (e.g., >0.1) with a high n_estimators, the model may overfit. Reduce the learning rate and increase n_estimators proportionally.

Protocol:

- Implement nested cross-validation: an outer loop for generalizability assessment and an inner loop purely for hyperparameter tuning.

- Use a time-series or batch-aware cross-validation split to prevent leakage between old and new experimental data.
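Both split styles exist in scikit-learn as `GroupKFold` (no batch in both train and test) and `TimeSeriesSplit` (test rows always later than training rows). A minimal sketch, assuming one batch ID per row and, for the temporal variant, rows sorted oldest to newest; the helper names are hypothetical. The returned lists of (train, test) index pairs can be passed directly as the `cv=` argument of a scikit-learn search object.

```python
import numpy as np
from sklearn.model_selection import GroupKFold, TimeSeriesSplit


def batch_splits(batch_ids, n_splits=3):
    """Folds in which no experimental batch appears in both train and test."""
    batch_ids = np.asarray(batch_ids)
    X_dummy = np.zeros((len(batch_ids), 1))  # only the group labels matter for splitting
    return list(GroupKFold(n_splits=n_splits).split(X_dummy, groups=batch_ids))


def temporal_splits(n_samples, n_splits=3):
    """Forward-chaining folds; rows must be sorted oldest to newest."""
    X_dummy = np.zeros((n_samples, 1))
    return list(TimeSeriesSplit(n_splits=n_splits).split(X_dummy))
```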

Table 1: Sensitivity of Model Performance to Key Hyperparameters (Benchmark on Catalyst Dataset CIF-2023)

| Model | Hyperparameter | Tested Range | Avg. F1-Score per Tested Value | Sensitivity (ΔF1/ΔParam) |
|---|---|---|---|---|
| Random Forest | max_depth | [5, 10, 15, 20, None] | [0.78, 0.89, 0.91, 0.90, 0.87] | High (0.013/unit) |
| Isolation Forest | contamination | [0.01, 0.05, 0.1] | [0.65, 0.82, 0.88] | Very High (1.15/0.01) |
| One-Class SVM | nu | [0.01, 0.05, 0.1] | [0.72, 0.85, 0.83] | Medium (0.72/0.01) |
| XGBoost | learning_rate | [0.01, 0.1, 0.3] | [0.90, 0.93, 0.89] | Low (0.02/0.1) |

Table 2: Recommended Hyperparameter Search Spaces for Catalyst Outlier Detection

| Model | Hyperparameter | Recommended Search Space | Optimization Priority |
|---|---|---|---|
| Random Forest | max_depth | [5, 8, 10, 12, 15] | High |
| | min_samples_leaf | [1, 3, 5, 10] | High |
| Isolation Forest | n_estimators | [100, 200, 500] | Medium |
| | max_samples | [0.5, 0.8, 1.0] | High |
| One-Class SVM | nu | Log-scale: [0.001, 0.01, 0.05, 0.1] | Critical |
| | gamma | Scale-based: ['scale', 'auto'] or [0.001, 0.01] | Medium |

Experimental Protocol: Nested CV for Hyperparameter Sensitivity

Objective: To reliably assess hyperparameter sensitivity and select optimal values for an outlier detection model without data leakage.

Materials: Labeled catalyst performance dataset (features: reaction yield, selectivity, TON; label: inlier/outlier).

Method:

- Outer Loop (Generalization Assessment): Split data into 5 temporal folds (F1-F5).

- Inner Loop (Parameter Tuning): For each outer training set (e.g., F1-F4): a. Perform a 4-fold cross-validation grid/random search over the hyperparameter space (see Table 2). b. Select the parameter set yielding the highest average precision for the outlier class.

- Evaluation: Train a model on the full outer training set with the selected parameters. Evaluate it on the held-out outer test fold (e.g., F5). Record the F1-score for outliers.

- Iteration & Final Model: Repeat the inner-loop and evaluation steps for each outer fold. The final model is trained on all data using the hyperparameter set that achieved the median performance across outer folds.
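The nested procedure can be sketched with scikit-learn. Assumptions: rows are already sorted by time, so an unshuffled `KFold` yields contiguous temporal folds; a `RandomForestClassifier` stands in for whichever detector you tune; and y = 1 marks an outlier. With an integer `cv`, `GridSearchCV` uses stratified folds for classifiers in the inner loop.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import f1_score
from sklearn.model_selection import GridSearchCV, KFold


def nested_cv_select(X, y, param_grid, n_outer=5, n_inner=4, random_state=0):
    """Nested CV: inner grid search on average precision, outer evaluation on outlier F1."""
    X, y = np.asarray(X), np.asarray(y)
    records = []
    # shuffle=False keeps folds contiguous, i.e. temporal when rows are time-sorted.
    for train_idx, test_idx in KFold(n_splits=n_outer, shuffle=False).split(X):
        inner = GridSearchCV(RandomForestClassifier(random_state=random_state),
                             param_grid, scoring="average_precision", cv=n_inner)
        inner.fit(X[train_idx], y[train_idx])  # refit=True retrains on the full outer training set
        y_pred = inner.best_estimator_.predict(X[test_idx])
        records.append({"params": inner.best_params_,
                        "f1": f1_score(y[test_idx], y_pred, zero_division=0)})
    # Final model: hyperparameters from the outer fold with the median outlier F1.
    ranked = sorted(records, key=lambda r: r["f1"])
    chosen = ranked[len(ranked) // 2]["params"]
    final_model = RandomForestClassifier(random_state=random_state, **chosen).fit(X, y)
    return records, chosen, final_model
```

The spread of `f1` values in `records` is itself a sensitivity estimate: a wide spread across outer folds suggests the chosen hyperparameters will not transfer reliably to new batches.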

Visualizations

Nested CV for Reliable Hyperparameter Tuning

How Hyperparameters Affect Model Generalization

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Hyperparameter Tuning for Catalyst ML |
|---|---|
| Scikit-learn | Primary Python library providing consistent APIs for models (Isolation Forest, One-Class SVM) and tuning tools (GridSearchCV). |
| Optuna or Hyperopt | Frameworks for Bayesian hyperparameter optimization, more efficient than grid search for high-dimensional spaces. |
| MLflow | Tracks hyperparameter combinations, resulting metrics, and model artifacts to manage the experimental lifecycle. |
| Catalyst-Specific Validation Splits | Custom data splitting strategies (e.g., by experimental batch or time) to prevent data leakage and ensure realistic performance estimates. |
| Domain-defined Performance Metrics | Metrics like Batch-wise Recall of Critical Failures are often more relevant than standard F1 for prioritizing tuning. |

Dealing with High-Dimensional and Sparse Catalyst Datasets

Troubleshooting Guides & FAQs

Data Preprocessing & Cleaning

Q1: My dataset has over 10,000 features but only 200 samples. Many features have over 95% zero values. What is the first critical step I should take before applying any outlier detection model?

A: Immediately apply dimensionality reduction. For such extreme sparsity, use Truncated Singular Value Decomposition (t-SVD) or Non-Negative Matrix Factorization (NMF) as they handle sparse matrices efficiently. Do not use standard PCA. Follow this protocol:

- Create a binary presence/absence matrix from your sparse data.

- Apply t-SVD from scikit-learn (sklearn.decomposition.TruncatedSVD).

- Retain components explaining ~80-85% of variance initially.

- Validate by checking reconstruction error on a held-out subset of non-zero entries.
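A minimal sketch of the first three steps, assuming the input is a SciPy sparse matrix or a dense NumPy array. Keeping the leading k columns from one larger fit is a shortcut that approximates refitting with exactly k components. The reconstruction-error check on held-out entries is not shown, and the function name and defaults are hypothetical.

```python
import numpy as np
from sklearn.decomposition import TruncatedSVD


def reduce_sparse_binary(X, target_variance=0.80, max_components=100, random_state=0):
    """Binary presence/absence matrix -> t-SVD, keeping enough components for target_variance."""
    X_bin = (X != 0).astype(np.float64)                # works for SciPy sparse and dense arrays
    n_fit = min(max_components, min(X_bin.shape) - 1)  # keep n_components below matrix rank limits
    svd = TruncatedSVD(n_components=n_fit, random_state=random_state).fit(X_bin)
    cumulative = np.cumsum(svd.explained_variance_ratio_)
    # Smallest k whose cumulative ratio reaches the target (all fitted components if never reached).
    k = min(int(np.searchsorted(cumulative, target_variance)) + 1, n_fit)
    Z = svd.transform(X_bin)[:, :k]
    return Z, k, cumulative
```

If `cumulative[-1]` stays well below the target, raise `max_components` before trusting the reduced representation.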

Q2: After cleaning, my catalyst performance metrics (e.g., turnover frequency) contain extreme values. How do I determine if they are true experimental outliers or valid, high-performing catalysts?

A: Implement a two-step statistical and contextual verification protocol. Step 1 (Statistical): Use the Median Absolute Deviation (MAD) method for robust univariate detection on each performance metric. Use a conservative threshold (e.g., 5 MADs). Step 2 (Contextual): Correlate the extreme performance value with the catalyst's descriptor space. Use a Local Outlier Factor (LOF) algorithm on the reduced-dimensionality feature set. If a sample is an outlier in performance but not in descriptor space, it may be a true high-performer. Flag only samples that are outliers in both.
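The two-step check can be sketched with NumPy and scikit-learn's `LocalOutlierFactor`. Assumptions: "5 MADs" is read as five unscaled MADs from the median, `descriptors_reduced` is the output of the dimensionality-reduction step, and `n_neighbors=20` (scikit-learn's default) suits your library size. If more than half the values are identical, MAD is zero and every non-median value is flagged in step 1.

```python
import numpy as np
from sklearn.neighbors import LocalOutlierFactor


def two_step_flags(performance, descriptors_reduced, mad_threshold=5.0, n_neighbors=20):
    """Flag catalysts that are outliers both in performance and in descriptor space."""
    x = np.asarray(performance, dtype=float)
    med = np.median(x)
    mad = np.median(np.abs(x - med))
    # Step 1 (statistical): more than mad_threshold raw MADs from the median.
    perf_outlier = np.abs(x - med) > mad_threshold * mad
    # Step 2 (contextual): LOF in descriptor space; fit_predict returns -1 for outliers.
    desc_outlier = LocalOutlierFactor(n_neighbors=n_neighbors).fit_predict(descriptors_reduced) == -1
    flagged = perf_outlier & desc_outlier  # outlier in both spaces
    return flagged, perf_outlier, desc_outlier
```

A catalyst with `perf_outlier` set but `desc_outlier` clear is the "possible true high-performer" case and is deliberately left unflagged.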

Q3: What is the most common mistake in handling missing values in sparse catalyst datasets, and how should I correct it?