Bayesian Optimization for Catalytic Experimental Design: A Data-Driven Approach to Accelerating Catalyst Discovery and Development

This article provides a comprehensive guide for researchers and drug development professionals on applying Bayesian Optimization (BO) to experimental design in catalysis.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying Bayesian Optimization (BO) to experimental design in catalysis. We explore the foundational principles of BO, contrasting it with traditional high-throughput and one-factor-at-a-time methods. The core focus is on a practical, methodological walkthrough for implementing BO in catalysis workflows—from surrogate model selection to acquisition function tuning. We address common pitfalls, optimization strategies for complex multi-objective goals, and methods for validating BO performance against established techniques. By synthesizing current literature and applications, this article serves as a roadmap for integrating this powerful machine learning tool to drastically reduce experimental cost and time in catalyst discovery, formulation, and process optimization.

What is Bayesian Optimization? Core Principles and Why It's Revolutionizing Catalyst Discovery

Technical Support Center

Troubleshooting Guide & FAQs

Q1: Our OFAT (One-Factor-At-a-Time) catalyst screening is taking too long and consuming excessive reagents. How can we design a more efficient initial experiment set within a Bayesian optimization framework?

A: The inefficiency stems from OFAT's inability to capture factor interactions. Implement a Bayesian-optimization-guided Design of Experiments (DoE).

- Define your search space: Specify ranges for each factor (e.g., temperature: 50-150°C, pressure: 1-10 bar, metal loading: 0.5-2.0 wt%).

- Choose an initial sampling strategy: Use a space-filling design like Latin Hypercube Sampling (LHS) to gather a small, informative initial dataset (e.g., 10-20 experiments).

- Model with Gaussian Process (GP): The GP model uses your initial data to predict catalyst performance across the entire search space and quantifies its own uncertainty.

- Select next experiments via Acquisition Function: Use the Upper Confidence Bound (UCB) or Expected Improvement (EI) function to propose the next batch of experiments that balance exploring uncertain regions and exploiting predicted high-performance areas.

- Iterate: Run the proposed experiments, update the GP model, and repeat until performance target is met or budget exhausted.

Experimental Protocol: Initial Design via Latin Hypercube Sampling

- Objective: Generate an initial set of `n` experiment points for `k` factors.

- Method:

  - Divide the plausible range for each factor into `n` equally probable intervals.

  - Randomly select one value from each interval for each factor.

  - Randomly permute the order of these values for each factor to ensure non-correlation between factors.

  - The `i`-th experiment consists of the `i`-th value from each permuted factor list.

- Tools: Implement in Python (`skopt.sampler.Lhs`), MATLAB (`lhsdesign`), or commercial DoE software.
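The sampling steps above can be sketched in a few lines of standard-library Python. This is a minimal illustration rather than a replacement for a DoE package: it assumes every factor is uniformly distributed over its range (so "equally probable" intervals are equal-width), and the function name `latin_hypercube` and the seed are illustrative choices.

```python
import random

def latin_hypercube(n, bounds, seed=None):
    """Return n points; bounds is a list of (low, high) pairs, one per factor."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        width = (high - low) / n
        # One uniformly random value inside each of the n equal-width intervals.
        values = [low + (i + rng.random()) * width for i in range(n)]
        # Shuffle so the interval order is independent from factor to factor.
        rng.shuffle(values)
        columns.append(values)
    # The i-th experiment takes the i-th value from every shuffled column.
    return [tuple(col[i] for col in columns) for i in range(n)]

# Ranges from the search-space example above:
# temperature (°C), pressure (bar), metal loading (wt%).
bounds = [(50.0, 150.0), (1.0, 10.0), (0.5, 2.0)]
design = latin_hypercube(10, bounds, seed=0)
```

Each row of `design` is one experiment. Projected onto any single factor, the ten points occupy ten different intervals, which is the property that makes the design space-filling.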

Q2: When using high-throughput screening (HTS) for catalyst discovery, how do we handle noisy or inconsistent performance data that degrades the Bayesian optimization model's accuracy?

A: Noisy data is common in HTS due to micro-reactor variations or analytical limits. Address this by:

- Replicate critical points: Identify experiments with high acquisition function value or high uncertainty. Perform technical replicates (2-3) to obtain a robust mean and standard deviation.

- Incorporate noise explicitly in the GP model: Use a Gaussian Process model that includes a noise term (often referred to as the `alpha` or noise level parameter). This prevents the model from overfitting to noisy data points.

- Adjust the acquisition function: Use a noise-aware version, such as Noisy Expected Improvement, which integrates over the posterior distribution of the GP to account for measurement uncertainty.

Experimental Protocol: Replication for Noise Reduction

- Objective: Obtain a reliable performance metric (e.g., Yield, TOF) for a given catalyst formulation under HTS conditions.

- Method:

- Prepare the catalyst library identically across the designated wells/positions for replicates.

- Run the reaction simultaneously in parallel reactors under nominally identical conditions.

- Analyze effluent from each replicate independently using the same analytical protocol (e.g., GC-MS, HPLC).

- Calculate the mean (µ) and standard error (σ) of the performance metric.

- Report µ as the observed value and feed σ into the noise-aware GP model.
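As a minimal sketch of the last two steps, the replicate summary can be computed with the standard library. The yields below are hypothetical, and the helper name `summarize_replicates` is an illustrative choice. The squared standard error is used as the noise variance of the averaged observation, on the assumption that the GP is trained on the replicate mean.

```python
import math
import statistics

def summarize_replicates(values):
    """Return (mean, standard error of the mean) for replicate measurements."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)  # sample standard deviation (n - 1 denominator)
    return mean, sd / math.sqrt(len(values))

yields = [71.0, 74.0, 77.0]  # hypothetical yields (%) from three replicate wells
mu, se = summarize_replicates(yields)
noise_variance = se ** 2  # variance of the averaged observation fed to the GP
```

If the target is standardized before modeling, the noise variance has to be rescaled by the same factor.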

Q3: Our Bayesian optimization loop seems stuck in a local performance maximum. How can we encourage more exploration to find potentially better catalysts?

A: This is an exploration-exploitation trade-off issue.

- Tune the acquisition function parameter: For the Upper Confidence Bound (UCB) function `UCB(x) = µ(x) + κ*σ(x)`, increase the `κ` parameter to weight uncertainty (σ) more heavily, forcing exploration of less-tested regions.

- Periodically inject random or space-filling points: Every 3-5 iterations, ignore the acquisition function's top recommendation and instead run 1-2 experiments chosen randomly from the remaining search space.

- Restart the optimization: If stagnation persists, take the best-found catalyst as a new center point, re-define a smaller, refined search space around it, and restart the BO process with a new initial LHS design.
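To make the effect of κ concrete, the sketch below scores three candidates with the UCB formula given above. The predicted means and standard deviations are invented for illustration, and the candidate names are hypothetical.

```python
def ucb(mu, sigma, kappa):
    """Upper Confidence Bound: predicted mean plus kappa times predicted std."""
    return mu + kappa * sigma

# Hypothetical GP predictions (yield %, predictive std) for three candidates.
candidates = {
    "near_current_best": (82.0, 1.0),
    "moderately_explored": (78.0, 4.0),
    "unexplored_corner": (70.0, 9.0),
}

def pick(kappa):
    """Return the candidate name with the highest UCB score."""
    return max(candidates, key=lambda name: ucb(*candidates[name], kappa))

choice_low_kappa = pick(0.5)   # the mean dominates the score
choice_high_kappa = pick(2.0)  # the uncertainty dominates the score
```

With κ = 0.5 the scores are 82.5, 80.0, and 74.5, so the optimizer stays near the current best. With κ = 2.0 they become 84.0, 86.0, and 88.0, and the least-explored candidate is chosen instead.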

Table 1: Comparative Efficiency of Experimentation Strategies for a 3-Factor Catalyst Optimization

| Strategy | Avg. Experiments to Reach 90% Optimum | Avg. Material Consumed (relative units) | Key Limitation |

|---|---|---|---|

| OFAT | 45 - 60 | 100 | Cannot detect interactions; highly inefficient. |

| Classical HTS (Full Grid) | 125 (full factorial) | 125 | Exponentially costly as factors increase. |

| Bayesian Optimization | 15 - 25 | 25 | Requires well-defined search space; sensitive to initial data. |

Table 2: Common Noise Sources in Catalysis HTS & Mitigations

| Noise Source | Impact on Data | Mitigation Strategy |

|---|---|---|

| Micro-reactor flow variation | ±5-10% conversion | Pre-screening reactors; use internal standards. |

| Catalyst loading inconsistency | ±8-15% activity | Automated, calibrated dispensing systems. |

| Analytical sampling error | ±3-7% yield | Multiple injections; replicate analyses. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Bayesian-Optimized Catalyst Screening

| Item | Function | Example/Notes |

|---|---|---|

| Precursor Library | Provides diverse elemental combinations for catalyst synthesis. | Metal salt solutions (e.g., H₂PtCl₆, Ni(NO₃)₂), ligand stocks, support suspensions (Al₂O₃, SiO₂). |

| Automated Liquid Handler | Enables precise, high-throughput preparation of catalyst libraries in microtiter plates or reactor arrays. | Must be compatible with solvents and slurries. |

| Parallel Pressure Reactor System | Allows simultaneous testing of multiple catalysts under defined temperature/pressure. | Systems from vendors like Unchained Labs, AMTEC. |

| Online GC/MS or HPLC | Provides rapid, quantitative analysis of reaction products for immediate feedback. | Critical for fast iteration in a BO loop. |

| DoE/BO Software Platform | Designs experiments, builds surrogate models, and suggests next experiments. | Python (scikit-optimize, GPyTorch), Siemens STAN, or custom code. |

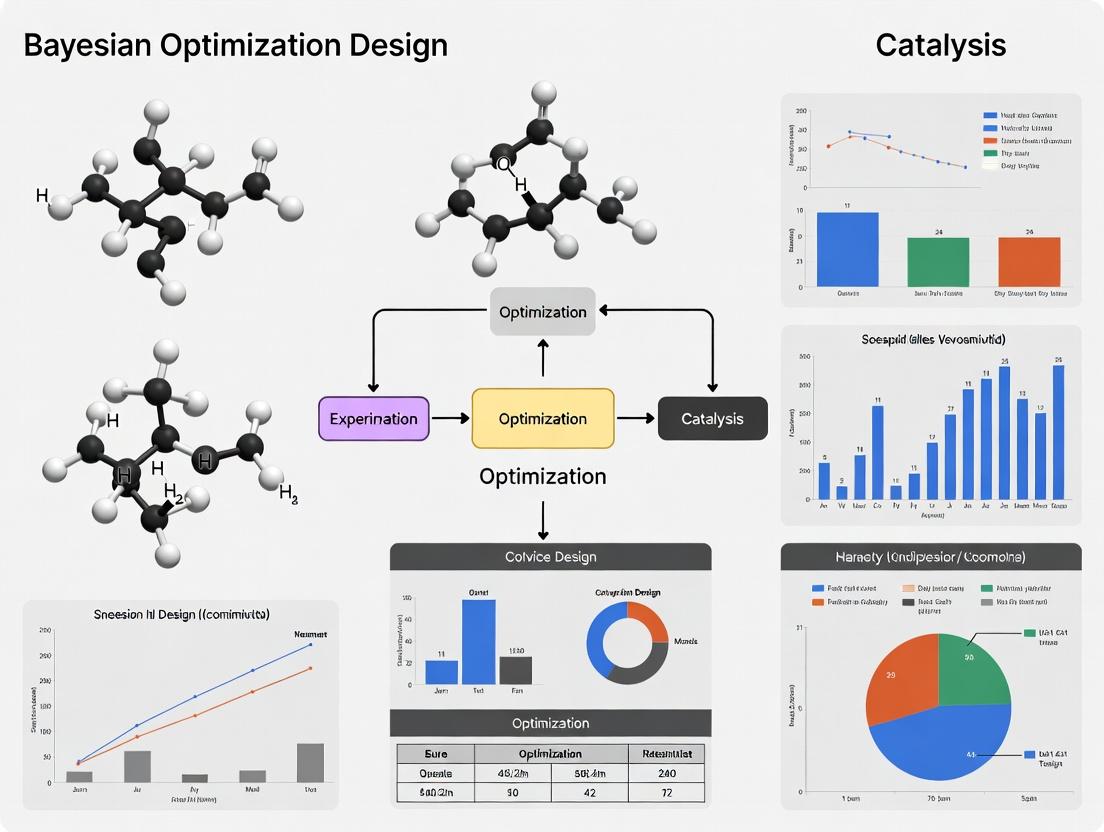

Visualization: Experimental Workflows

Title: Bayesian Optimization Loop for Catalysis

Title: OFAT vs Bayesian Optimization Strategy

Technical Support & Troubleshooting Center

Frequently Asked Questions (FAQs)

Q1: My Bayesian Optimization (BO) loop seems to get stuck, repeatedly sampling points in a similar region without exploring new areas. How can I resolve this?

A: This is a common symptom of an acquisition function that is over-exploiting. To encourage more exploration:

- Increase the weight parameter (kappa) if using Upper Confidence Bound (UCB).

- Increase the trade-off parameter (xi) if using Expected Improvement (EI) or Probability of Improvement (PI). A larger xi demands a bigger predicted gain over the current best, which shifts sampling toward uncertain regions.

- Consider switching to an acquisition function with more inherent exploration, such as UCB, or a mixed strategy like adding random points periodically.

- Re-evaluate the kernel length scales of your Gaussian Process (GP) surrogate model; they may be too long, causing the model to be overly confident far from the points it has actually observed.
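The role of xi can be checked with the closed-form Expected Improvement for a maximization problem. The sketch below is a minimal illustration using only the standard library; the two candidates and the best observed value are hypothetical numbers chosen to show the switch from exploitation to exploration.

```python
import math

def expected_improvement(mu, sigma, best, xi=0.01):
    """Closed-form EI for maximization under a Gaussian posterior."""
    gain = mu - best - xi
    if sigma <= 0.0:
        return max(gain, 0.0)
    z = gain / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return gain * cdf + sigma * pdf

best = 0.80             # best observed yield (as a fraction)
exploit = (0.81, 0.01)  # just above the incumbent, low uncertainty
explore = (0.70, 0.08)  # lower predicted mean, high uncertainty

def preferred(xi):
    """Name of the candidate with the larger EI at the given xi."""
    ei_exploit = expected_improvement(*exploit, best, xi)
    ei_explore = expected_improvement(*explore, best, xi)
    return "exploit" if ei_exploit > ei_explore else "explore"
```

With xi = 0.01 the low-uncertainty candidate next to the incumbent has the higher EI. Raising xi to 0.05 makes its small predicted gain worthless, and the uncertain candidate wins.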

Q2: The optimization performance is poor, and the surrogate model predictions do not match my experimental validation results. What could be wrong?

A: This typically indicates a model misfit. Follow this diagnostic checklist:

- Noise Level: Check if your `alpha` or `noise` parameter in the GP is correctly set for your experimental noise.

- Kernel Choice: The default Squared Exponential (RBF) kernel may not suit your response surface. For catalytic systems, consider adding a Matérn kernel (e.g., Matérn 5/2) to model less smooth functions or a linear kernel for trend components.

- Input Scaling: Always standardize (zero mean, unit variance) or normalize your input parameters (e.g., temperature, pressure, catalyst loadings). The GP is sensitive to input scales.

- Initial Design: Ensure your initial set of points (e.g., from Latin Hypercube Sampling) is sufficiently large (typically 5-10 times the number of dimensions) to provide a basic map of the space.
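The input-scaling item can be illustrated with a small standard-library sketch. The helper names `fit_scaler` and `transform` are illustrative, and the three rows are hypothetical (temperature, pressure, loading) settings.

```python
import statistics

def fit_scaler(rows):
    """Compute per-column mean and population standard deviation."""
    cols = list(zip(*rows))
    means = [statistics.mean(c) for c in cols]
    # Fall back to 1.0 for constant columns to avoid dividing by zero.
    stds = [statistics.pstdev(c) or 1.0 for c in cols]
    return means, stds

def transform(rows, means, stds):
    """Standardize each column to zero mean and unit variance."""
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]

# Hypothetical conditions: temperature (°C), pressure (bar), loading (wt%).
X = [[50.0, 1.0, 0.5], [100.0, 5.0, 1.0], [150.0, 10.0, 2.0]]
means, stds = fit_scaler(X)
X_scaled = transform(X, means, stds)
```

The same `means` and `stds` should be reused when scaling candidate points proposed later, so that training data and candidates live on the same scale.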

Q3: The optimization process is becoming computationally very slow as I collect more data. How can I improve the speed?

A: GP regression scales cubically (O(n³)) with the number of observations (n). For larger datasets (>1000 points), consider:

- Using sparse GP approximations (e.g., variational free energy, inducing points).

- Switching to a different surrogate model like Random Forests or Bayesian Neural Networks for very large datasets.

- Implementing a "forgetting" mechanism to down-weight or remove older, less relevant data points if the system is non-stationary.

Q4: How do I handle categorical or discrete parameters (e.g., catalyst type, solvent class) within a Bayesian Optimization framework?

A: Standard GP kernels operate on continuous spaces. For categorical parameters:

- Use a dedicated kernel, such as the Hamming kernel, which measures similarity based on the number of matching categories.

- Employ a one-hot encoding scheme and use a kernel that operates on this representation (note: this may not capture complex relationships).

- Consider a hierarchical or multi-task BO approach if categories represent different but related experimental conditions.

Troubleshooting Guides

Issue: Convergence Failure or Erratic Performance in High-Throughput Catalyst Screening

Symptoms: The recommended catalyst formulations show no improvement over multiple iterations, or the performance metric (e.g., yield, turnover frequency) jumps erratically.

| Probable Cause | Diagnostic Steps | Corrective Action |

|---|---|---|

| High Experimental Noise | Re-run control points. Calculate the standard deviation of repeated measurements. | Increase the GP's alpha parameter to model the noise. Use an acquisition function less sensitive to noise, like UCB. |

| Inadequate Initial Design | Check if the initial data covers all parameter bounds. Visualize the initial surrogate model. | Increase the number of initial design points using a space-filling algorithm (e.g., Latin Hypercube). |

| Incorrect Parameter Bounds | Check if the best point is consistently on the boundary of the search space. | Widen the search space for key parameters, if experimentally feasible. |

| Poor Surrogate Model Choice | Examine leave-one-out cross-validation error of the GP. Plot predicted vs. actual values. | Change the kernel function (e.g., to Matérn 5/2). Apply appropriate input transformations (e.g., log for concentration). |

Protocol: Diagnostic Check for Surrogate Model Fit

- Reserve Validation Data: Hold back 20% of your existing experimental data from the GP training set.

- Train Model: Train the GP surrogate model on the remaining 80% of data.

- Predict & Calculate: Predict the held-out data points and calculate the root mean square error (RMSE) and the mean absolute error (MAE).

- Benchmark: If RMSE/MAE is larger than the known experimental error, the model is not fitting well. Proceed to kernel and hyperparameter tuning.
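The error metrics in this check are straightforward to compute. In the sketch below the held-out yields, the GP predictions, and the experimental error of 2 yield points are hypothetical values; only the two formulas are standard.

```python
import math

def rmse(y_true, y_pred):
    """Root mean square error between observations and predictions."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def mae(y_true, y_pred):
    """Mean absolute error between observations and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

held_out = [62.0, 75.0, 81.0, 90.0]   # hypothetical measured yields (%)
predicted = [60.0, 78.0, 80.0, 94.0]  # hypothetical GP predictions (%)
experimental_error = 2.0              # assumed replicate standard deviation (%)

model_rmse = rmse(held_out, predicted)
model_mae = mae(held_out, predicted)
needs_tuning = model_rmse > experimental_error or model_mae > experimental_error
```

Here the RMSE is about 2.74 and the MAE is 2.5, both above the assumed experimental error, so the benchmark step would send this model to kernel and hyperparameter tuning.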

Experimental Protocols & Data

Protocol: Standard Bayesian Optimization Loop for Catalytic Reaction Optimization

- Define Search Space: Specify ranges for continuous variables (temperature, pressure, time) and list options for categorical variables (ligand type).

- Generate Initial Design: Use Latin Hypercube Sampling (LHS) to generate `n_init` points (e.g., 10-20). Execute these experiments.

- Loop (`n_iterations`):

  a. Model Fitting: Fit a Gaussian Process surrogate model to all data collected so far. Use a Matérn 5/2 kernel and optimize hyperparameters via maximum likelihood estimation.

  b. Acquisition Maximization: Using an optimizer (e.g., L-BFGS-B), find the point `x*` that maximizes the chosen acquisition function (e.g., Expected Improvement).

  c. Parallel Querying (Optional): If using batch BO, generate a batch of `q` points that maximize a multi-point acquisition function (e.g., q-EI).

  d. Experiment Execution: Conduct the experiment(s) at the proposed point(s) `x*` to obtain the objective value `y`.

  e. Data Augmentation: Append the new observation `(x*, y)` to the dataset.

- Termination: Stop after a fixed budget of iterations or when improvement falls below a threshold.
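The loop can be sketched end to end in plain Python for a single scaled variable. This is a deliberately simplified illustration, not a production implementation. The kernel length scale and noise variance are fixed instead of being fitted by maximum likelihood, the acquisition function is maximized over a grid instead of with L-BFGS-B, the optional batch step is omitted, and `run_experiment` is a hypothetical stand-in for the laboratory measurement.

```python
import math
import random

def matern52(a, b, length_scale=0.2):
    """Matérn 5/2 covariance between two scalar inputs (unit prior variance)."""
    s = math.sqrt(5.0) * abs(a - b) / length_scale
    return (1.0 + s + s * s / 3.0) * math.exp(-s)

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= factor * M[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(M[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (M[r][n] - tail) / M[r][r]
    return x

def fit_gp(X, y, noise_var=1e-4):
    """Precompute the parts of the GP posterior that do not depend on x_new."""
    K = [[matern52(a, b) + (noise_var if i == j else 0.0)
          for j, b in enumerate(X)] for i, a in enumerate(X)]
    mean_y = sum(y) / len(y)
    alpha = solve(K, [v - mean_y for v in y])
    return K, mean_y, alpha

def predict(X, gp, x_new):
    """Posterior mean and standard deviation at a single point."""
    K, mean_y, alpha = gp
    k_star = [matern52(a, x_new) for a in X]
    mu = mean_y + sum(k * a for k, a in zip(k_star, alpha))
    v = solve(K, k_star)
    var = max(1.0 - sum(k * w for k, w in zip(k_star, v)), 1e-12)
    return mu, math.sqrt(var)

def expected_improvement(mu, sigma, best, xi=0.01):
    """Closed-form EI for maximization."""
    gain = mu - best - xi
    z = gain / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return gain * cdf + sigma * pdf

def run_experiment(x):
    """Hypothetical stand-in for a lab measurement; the optimum sits at x = 0.7."""
    return math.exp(-((x - 0.7) / 0.15) ** 2)

def bo_loop(n_init=4, n_iterations=10, seed=0):
    rng = random.Random(seed)
    # Initial design: one random point per equal-width stratum (1-D LHS).
    X = [(i + rng.random()) / n_init for i in range(n_init)]
    y = [run_experiment(x) for x in X]
    grid = [i / 100 for i in range(101)]  # candidate conditions scaled to [0, 1]
    for _ in range(n_iterations):
        gp = fit_gp(X, y)                           # a. model fitting
        best = max(y)
        scores = [expected_improvement(*predict(X, gp, g), best) for g in grid]
        x_next = grid[scores.index(max(scores))]    # b. acquisition maximization
        X.append(x_next)                            # d. run the experiment and
        y.append(run_experiment(x_next))            # e. append the observation
    i_best = y.index(max(y))
    return X[i_best], y[i_best]

x_best, y_best = bo_loop()
```

For real campaigns a library such as scikit-optimize or BoTorch would handle hyperparameter fitting, multi-dimensional inputs, and batch acquisition; the sketch only shows how the steps connect.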

Quantitative Comparison of Common Acquisition Functions

| Acquisition Function | Key Formula Parameter | Best For | Risk of Stalling |

|---|---|---|---|

| Probability of Improvement (PI) | xi (exploration weight) | Pure exploitation, finding the peak quickly | High |

| Expected Improvement (EI) | xi (exploration weight) | Balanced search, most common default | Medium |

| Upper Confidence Bound (UCB) | kappa (confidence weight) | Systematic exploration, theoretical guarantees | Low |

Visualizations

Diagram: The Sequential Bayesian Optimization Loop

Diagram: Gaussian Process Surrogate Model Components

The Scientist's Toolkit: Research Reagent Solutions for Catalytic BO

| Item/Category | Function in Bayesian Optimization Experiments | Example/Note |

|---|---|---|

| High-Throughput Experimentation (HTE) Robotic Platform | Enables automated, parallel execution of catalytic reactions proposed by the BO loop, essential for fast iteration. | Liquid handling robots, parallel pressure reactors. |

| In-line or At-line Analytics | Provides rapid, quantitative measurement of the objective function (e.g., yield, conversion) to feed back into the BO loop. | HPLC, GC, UV-Vis spectroscopy. |

| Chemical Libraries | Well-curated sets of diverse catalysts, ligands, and substrates that define the categorical or continuous search space. | Commercial ligand libraries, in-house catalyst arrays. |

| Statistical Software/Libraries | Core computational engines for building surrogate models and optimizing acquisition functions. | scikit-optimize, BoTorch, GPyOpt, Dragonfly. |

| Laboratory Information Management System (LIMS) | Tracks all experimental metadata, conditions, and results, ensuring data integrity for the sequential dataset. | Critical for reproducibility and model training. |

Troubleshooting Guide & FAQ

Q1: During Bayesian optimization (BO) for catalyst discovery, my algorithm stalls and suggests similar experiments repeatedly. What could be the cause?

A: This is often due to over-exploitation from an incorrectly balanced acquisition function or a miscalibrated surrogate model. Ensure your Gaussian Process (GP) kernel and hyperpriors are appropriate for your chemical space (e.g., Matérn 5/2 for continuous variables, scaled appropriately). Implement a noise model to account for experimental reproducibility. Consider switching from Expected Improvement (EI) to a phased approach using Upper Confidence Bound (UCB) with a dynamically adjusted β parameter to force exploration.

Q2: How do I effectively encode mixed categorical (e.g., ligand type) and continuous (e.g., temperature, concentration) variables in a BO workflow?

A: Use a composite kernel. For categorical variables, apply a discrete kernel (e.g., Hamming, OHE Kernel). For continuous variables, use radial basis function (RBF) or Matérn kernels. Standardize all continuous inputs. A recommended protocol is to use scikit-learn's StandardScaler on continuous features and one-hot encoding for categoricals, then apply GPyTorch or scikit-optimize with a kernel structure like: K_total = K_categorical + K_continuous.

Q3: My high-throughput experimentation (HTE) data for catalytic reactions shows high variance, confounding the BO surrogate model. How to proceed?

A: Implement a heteroscedastic GP model that learns input-dependent noise. Alternatively, pre-process with replicate experiments. Protocol for Replicate-Based Noise Estimation: 1) For 10% of your initial Design of Experiments (DoE) points, run 3 experimental replicates. 2) Calculate the variance per point. 3) Use this as a fixed noise level (alpha parameter) for those points in the GP, or model noise as a function of descriptors. This prevents the BO from overfitting to noisy high-performance outliers.

Q4: How can I integrate known physical constraints (e.g., mass balance, Arrhenius equation trends) into the BO search to avoid unrealistic suggestions?

A: Use constrained BO. Embed constraints directly into the acquisition function. For a known inequality constraint (e.g., total pressure < 100 bar), use a penalty method. For complex process constraints, train a separate classifier GP to model the probability of constraint satisfaction. The suggestion is only considered if the probability exceeds a threshold (e.g., 0.95).

Q5: When navigating a >20-dimensional parameter space, BO becomes computationally slow. What are practical dimensionality reduction strategies without losing critical chemical information?

A: Employ a two-stage approach. First, use a screening design (Plackett-Burman or Fractional Factorial) to identify the top 5-7 most influential factors. Alternatively, use unsupervised learning on catalyst descriptors (e.g., principal component analysis (PCA) on molecular fingerprints) to create a lower-dimensional latent space. BO is then performed in this latent space. Protocol for PCA-BO: 1) Compute RDKit fingerprints for all ligand candidates. 2) Perform PCA, retain PCs explaining 95% variance. 3) Use PC scores as new, continuous inputs for the BO loop.

Table 1: Comparison of Acquisition Functions for Catalytic Yield Optimization

| Acquisition Function | Average Regret (Lower is Better) | Iterations to Find Optimum | Handles Noise Well? | Recommended Use Case |

|---|---|---|---|---|

| Expected Improvement (EI) | 0.12 ± 0.05 | 45 ± 8 | Moderate | Well-behaved, low-noise spaces |

| Upper Confidence Bound (UCB, β=0.5) | 0.08 ± 0.03 | 38 ± 6 | Good | Balanced exploration/exploitation |

| Probability of Improvement (PI) | 0.21 ± 0.07 | >60 | Poor | Fast, initial screening |

| Noisy Expected Improvement (qNEI) | 0.05 ± 0.02 | 32 ± 5 | Excellent (Best) | High-throughput, noisy data |

Table 2: Impact of Initial DoE Size on BO Performance in a 15-Dimensional Cross-Coupling Space

| Initial DoE Size (Points) | Final Yield Achieved (%) | Total Experiments Needed | Probability of Finding >90% Yield |

|---|---|---|---|

| 10 (0.7x Dim) | 82 ± 6 | 85 | 0.45 |

| 30 (2x Dim) | 91 ± 3 | 70 | 0.92 |

| 60 (4x Dim) | 93 ± 2 | 90 | 0.98 |

| 90 (6x Dim) | 94 ± 1 | 115 | 1.00 |

Experimental Protocols

Protocol 1: Standard Bayesian Optimization Loop for Homogeneous Catalysis Screening

- Define Search Space: List all variables (e.g., catalyst mol% (0.1-5.0), ligand (L1-L20), temperature (25-120°C), solvent (S1-S8), base (B1-B5)).

- Initial DoE: Generate 20-30 points using Latin Hypercube Sampling (LHS) for continuous variables and random selection for categoricals.

- High-Throughput Experimentation: Execute reactions in an automated parallel reactor. Analyze yields via UPLC.

- Surrogate Model Training: Train a GP model with a composite kernel using the experimental data (inputs: reaction conditions, output: yield).

- Acquisition Function Maximization: Compute the next best point(s) using the EI function. Apply any process constraints at this step.

- Iterate: Run the suggested experiment(s), add data to the training set, and repeat steps 4-5 until convergence (e.g., <2% yield improvement over 10 iterations).
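The stopping rule in the last step can be written as a small check on the yield history. The sketch assumes that "<2% yield improvement" means fewer than 2 absolute percentage points gained by the running best over the last 10 experiments; the function name and the example histories are hypothetical.

```python
def has_converged(yields, window=10, min_gain=2.0):
    """True if the best yield rose by less than min_gain over the last `window` runs."""
    if len(yields) <= window:
        return False  # not enough history to judge
    best_before = max(yields[:-window])
    best_now = max(yields)
    return best_now - best_before < min_gain

# Hypothetical yield histories (%), in the order the experiments were run.
stalled = [40.0, 55.0, 70.0, 85.0] + [84.0] * 10
still_improving = [40.0, 55.0, 70.0] + [72.0] * 9 + [90.0]
```

`has_converged(stalled)` is true because the best value of 85% was reached before the last ten runs, while `has_converged(still_improving)` is false because the final run raised the best yield from 70% to 90%.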

Protocol 2: Constrained BO for Preventing Hazardous Conditions

- Define Primary Objective (e.g., Yield) and Constraint (e.g., Pressure < 50 bar).

- Collect Initial Data: Run initial DoE, recording both yield and maximum pressure.

- Train Dual Surrogate Models: GP1 models yield. GP2 models pressure, using a logistic likelihood to classify conditions as "safe" (P<50 bar) or "unsafe".

- Constrained Acquisition: Modify EI to EI_C = EI(x) * p(Safe | x), where p(Safe | x) is the probability from GP2.

- Select & Run: Choose the point with maximum EI_C. This inherently avoids high-pressure suggestions.
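The constrained acquisition step reduces to a product once both surrogate models have produced their predictions. In the sketch below the EI values and safety probabilities are hypothetical outputs of GP1 and GP2, and the optional `min_p_safe` cut-off is an added assumption that mirrors the probability threshold idea mentioned in the FAQ.

```python
def constrained_ei(ei, p_safe, min_p_safe=0.0):
    """EI weighted by the probability of satisfying the constraint."""
    if p_safe < min_p_safe:
        return 0.0  # reject candidates below the safety cut-off outright
    return ei * p_safe

# Hypothetical model outputs for three candidate conditions.
candidates = [
    {"name": "A", "ei": 0.30, "p_safe": 0.20},  # promising but likely over-pressure
    {"name": "B", "ei": 0.18, "p_safe": 0.97},
    {"name": "C", "ei": 0.10, "p_safe": 0.99},
]

def select(min_p_safe=0.0):
    """Return the name of the candidate with the highest constrained EI."""
    best = max(candidates, key=lambda c: constrained_ei(c["ei"], c["p_safe"], min_p_safe))
    return best["name"]
```

Without a cut-off, candidate B wins (0.18 × 0.97 ≈ 0.175 against 0.06 for A and 0.099 for C). With `min_p_safe=0.98`, only C remains eligible.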

Visualizations

Title: Bayesian Optimization Loop for Catalysis

Title: BO Navigating Constrained Chemical Space

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Relevance to Catalysis BO |

|---|---|

| Automated Parallel Pressure Reactors (e.g., Endeavor, Unchained Labs) | Enables rapid, reproducible execution of the candidate experiments suggested by the BO algorithm under controlled conditions (temp, pressure, stirring). |

| Liquid Handling Robots | Automates the preparation of complex reaction mixtures with precise volumetric accuracy, essential for reliable high-dimensional DoE. |

| High-Throughput UPLC/MS | Provides rapid quantitative analysis (yield, conversion) and qualitative data (byproducts, degradation) as the response variable for the BO model. |

| Chemical Descriptor Software (e.g., RDKit, Dragon) | Generates numerical descriptors (molecular fingerprints, physicochemical properties) for catalysts/ligands, enabling their representation in the continuous search space of the surrogate model. |

| BO Software Libraries (e.g., BoTorch, GPyTorch, scikit-optimize) | Provides the core algorithms for building flexible GP models, defining custom kernels and acquisition functions, and handling constrained optimization. |

| Chemspeed or HEL Auto-MATE Systems | Fully integrated robotic platforms that combine synthesis, work-up, and analysis, allowing for closed-loop, autonomous optimization campaigns. |

Technical Support Center & FAQs

General Troubleshooting for Bayesian Optimization in Catalysis

Q1: The Bayesian optimization loop appears to be stuck, suggesting the same or very similar reaction conditions repeatedly. What are the primary causes and fixes?

A: This is often caused by an inaccurate surrogate model (typically a Gaussian Process) due to:

- Noisy or Inconsistent Data: Ensure your experimental measurement protocol has high reproducibility. Recommended fix: Re-run a suggested condition in triplicate to confirm the yield/selectivity and feed the average into the model.

- Poorly Chosen Kernel Function: The Matérn 5/2 kernel is a robust default for chemical spaces. If using categorical variables (e.g., ligand type), use a compound kernel (e.g., Matérn for continuous + Hamming for categorical).

- Over-Exploitation: The acquisition function (e.g., Expected Improvement) may be over-penalizing exploration. Increase the `xi` parameter (e.g., from 0.01 to 0.05) to encourage testing of more uncertain regions.

Protocol for Data Validation:

- Select the last 3 suggested experiments from the optimizer.

- Perform each reaction condition in three separate, randomized batches.

- Calculate the mean and standard deviation of the output metric (e.g., yield).

- If the standard deviation exceeds 5% of the mean, investigate experimental consistency (weighing, purging, heating homogeneity) before proceeding.

- Input the mean value back into the Bayesian optimization algorithm.

Q2: How do I effectively integrate categorical variables (e.g., solvent, ligand class) with continuous variables (e.g., temperature, concentration) in the model?

A: Use a dedicated approach for mixed spaces. One effective method is the "one-hot" encoding combined with a specific kernel.

- Encoding: Convert each categorical variable (e.g., Solvent: A, B, C) into a one-hot vector [1,0,0], [0,1,0], [0,0,1].

- Kernel Selection: Use a combined kernel: `K_total = K_cont + K_cat`, where `K_cont` is a Matérn kernel for continuous variables and `K_cat` is a Hamming kernel for the one-hot encoded vectors. This allows the model to learn similarities between different categories.
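A minimal sketch of the additive kernel is shown below. It assumes the continuous inputs are already standardized, and it applies the Hamming-style kernel to the category labels directly, which carries the same match/mismatch information as comparing the one-hot vectors. The function names and the two example conditions are illustrative.

```python
import math

def matern52(x, y, length_scale=1.0):
    """Matérn 5/2 kernel on continuous (standardized) inputs."""
    r = math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y))) / length_scale
    s = math.sqrt(5.0) * r
    return (1.0 + s + s * s / 3.0) * math.exp(-s)

def hamming_kernel(c1, c2):
    """Fraction of categorical variables on which two conditions agree."""
    return sum(a == b for a, b in zip(c1, c2)) / len(c1)

def k_total(p, q):
    """Additive kernel: continuous similarity plus categorical similarity."""
    return matern52(p["cont"], q["cont"]) + hamming_kernel(p["cat"], q["cat"])

# Two hypothetical conditions: same scaled temperature and concentration,
# same solvent, different ligand.
p = {"cont": (0.0, 0.5), "cat": ("toluene", "XPhos")}
q = {"cont": (0.0, 0.5), "cat": ("toluene", "SPhos")}
```

Here `k_total(p, p)` is 2.0, and `k_total(p, q)` is 1.5 because one of the two categorical variables differs. A product kernel is a common alternative when categorical and continuous effects are expected to interact.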

Q3: After 20 iterations, my model performance plateaus. How can I diagnose if I've found the global optimum or if the model has failed?

A: Perform the following diagnostic protocol:

- Posterior Uncertainty Check: Examine the surrogate model's prediction uncertainty across the defined search space. Large, unexplored regions with high uncertainty indicate premature convergence.

- Random Seed Test: Restart the optimization from 3-5 different random initial designs (DoE). If all converge to a similar high-performance region, it's likely a robust optimum.

- Exploratory Batch: Manually design 5 experiments in the highest-uncertainty region identified in step 1. If any yield significantly better results, your optimizer was over-exploiting. Consider adding a periodic "pure exploration" step in your workflow.

FAQs on Implementation & Practical Concerns

Q4: What is a reasonable number of initial Design of Experiment (DoE) points before starting the Bayesian loop for a heterogeneous catalyst synthesis problem?

A: The number depends on the dimensionality (d) of your search space. A common heuristic is 5*d. For a synthesis space with 4 variables (e.g., precursor ratio, pH, calcination temperature, time), start with 20 carefully chosen DoE points. Use a space-filling design like Latin Hypercube Sampling (LHS) to maximize initial coverage.

Q5: We have some prior historical data from failed projects. Can we use it to "pre-train" the Bayesian optimizer and save trials?

A: Yes, this is a major advantage. However, you must critically assess the data's relevance.

- Protocol for Integrating Historical Data:

- Relevance Filtering: Only include data where at least 3 of the key independent variables overlap with your new search space.

- Batch Effect Correction: If the data was collected under different conditions (different analyst, equipment), consider adding a binary variable (historical vs. new) to the model or applying simple scaling normalization based on control experiments.

- Weighted Initialization: You can seed the initial DoE with these historical points, but label them with a slightly higher noise parameter to account for potential systematic bias.
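One way to express the weighted initialization is to carry a per-observation noise variance alongside the data. In the sketch below the inflation factor of 4 for historical points and all data values are assumptions for illustration; the right factor depends on how far the old conditions are from the new campaign.

```python
def build_training_set(new_runs, historical_runs, base_noise_var, inflation=4.0):
    """Merge new and historical runs, giving historical rows a larger noise variance."""
    X, y, noise_var = [], [], []
    for conditions, value in new_runs:
        X.append(conditions)
        y.append(value)
        noise_var.append(base_noise_var)
    for conditions, value in historical_runs:
        X.append(conditions)
        y.append(value)
        noise_var.append(base_noise_var * inflation)  # trusted less by the GP
    return X, y, noise_var

# Hypothetical data: (temperature in °C, catalyst mol%) -> yield (%).
new_runs = [((100.0, 1.0), 62.0)]
historical_runs = [((90.0, 0.8), 48.0), ((110.0, 1.2), 55.0)]
X, y, noise_var = build_training_set(new_runs, historical_runs, base_noise_var=1.0)
```

The resulting `noise_var` list can then be supplied as per-point noise to GP implementations that accept it, for example the array form of the `alpha` argument in scikit-learn's `GaussianProcessRegressor`.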

Q6: For high-throughput reaction screening in flow, how do I manage the trade-off between parallel experimentation and sequential Bayesian guidance?

A: Use a batch-sequential approach.

- In each iteration, the acquisition function suggests not one, but a batch of 4-8 promising and diverse conditions.

- These conditions are all run in parallel in your high-throughput platform.

- All results are fed back into the model simultaneously before generating the next batch.

- This maximizes the use of parallel infrastructure while maintaining adaptive, model-driven learning.
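A simple way to obtain a promising and diverse batch is to rank candidates by acquisition score and skip any that lie too close to one already chosen. The sketch below is a greedy heuristic under that assumption, not a true multi-point acquisition function such as q-EI. The candidate coordinates (scaled to [0, 1]), the scores, and the minimum distance are hypothetical.

```python
import math

def select_batch(candidates, scores, batch_size, min_distance):
    """Greedily pick high-scoring candidates that are at least min_distance apart."""
    order = sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)
    chosen = []
    for i in order:
        if all(math.dist(candidates[i], candidates[j]) >= min_distance for j in chosen):
            chosen.append(i)
        if len(chosen) == batch_size:
            break
    return [candidates[i] for i in chosen]

# Hypothetical scaled conditions and their acquisition scores.
candidates = [(0.50, 0.50), (0.52, 0.50), (0.51, 0.49),
              (0.10, 0.90), (0.90, 0.10), (0.30, 0.30)]
scores = [0.90, 0.85, 0.80, 0.60, 0.50, 0.40]
batch = select_batch(candidates, scores, batch_size=4, min_distance=0.15)
```

The second and third candidates are near-duplicates of the top-ranked point and are skipped, so the batch spreads across four distinct regions instead of spending three reactor slots on one.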

Quantitative Data from Case Studies

Table 1: Reduction in Experimental Trials via Bayesian Optimization

| Study & Target | Traditional Approach (Trials) | Bayesian Optimization (Trials) | Reduction | Key Catalyst/Reaction Optimum Found |

|---|---|---|---|---|

| Homogeneous Cross-Coupling Catalyst (2023) | Estimated >200 | 48 | 76% | A novel phosphine-phosphite ligand with specific steric bulk |

| Heterogeneous CO2 Hydrogenation Catalyst (2024) | 155 (Full Factorial) | 35 | 77% | Co/CeO2 with optimal Co loading & calcination temperature |

| Asymmetric Organocatalysis (2023) | 96 (One-factor-at-a-time) | 22 | 77% | Optimal combination of solvent, additive, and temperature for >99% ee |

| Photoredox Catalyst Discovery (2024) | ~150 | 40 | 73% | A donor-acceptor organic polymer with defined band gap |

Table 2: Key Algorithmic Parameters from Successful Studies

| Parameter | Typical Range for Catalysis | Recommended Starting Point |

|---|---|---|

| Initial DoE Points | 4d to 6d | 5*d (LHS Sampling) |

| Acquisition Function | Expected Improvement (EI), Upper Confidence Bound (UCB) | EI with xi=0.01 |

| Surrogate Model Kernel | Matérn 5/2, Radial Basis Function (RBF) | Matérn 5/2 |

| Optimizer for Acquisition | L-BFGS-B, DIRECT | L-BFGS-B |

| Batch Size (Sequential) | 1 | 1 |

| Batch Size (Parallel) | 4-8 | 4 |

Detailed Experimental Protocols

Protocol 1: Bayesian-Optimized Synthesis of a Bimetallic Catalyst (Example)

Objective: Optimize the activity (TOF) of a Pd-Au/TiO2 catalyst for selective oxidation.

Search Space: 4 Variables: Pd loading (0.1-2.0 wt%), Au:Pd molar ratio (0.1-5), calcination temperature (300-600°C), reduction time (1-5 h).

Initial Design:

- Generate 20 initial conditions using Latin Hypercube Sampling across the 4D space.

- Synthesize catalysts via incipient wetness co-impregnation on TiO2, dry (120°C, 12h), calcine and reduce in flowing H2 according to suggested parameters.

Testing & Feedback:

- Evaluate catalyst performance in a fixed-bed microreactor under standardized conditions (e.g., 150°C, 2 bar O2).

- Measure Turnover Frequency (TOF) as the primary objective for the Bayesian model.

Iterative Loop:

- Input all results (conditions + TOF) into the Gaussian Process model.

- Let the Expected Improvement acquisition function propose the single next best experiment.

- Synthesize and test the proposed catalyst.

- Repeat the three steps above until convergence (e.g., no improvement in predicted EI over 5 sequential iterations).

Protocol 2: Optimizing a Pd-Catalyzed C-N Coupling Reaction

Objective: Maximize yield of a pharmaceutically relevant intermediate.

Search Space: 5 Variables: Catalyst loading (mol%), ligand type (4 categories), base equiv., temperature (°C), residence time (min) in flow.

Model Setup:

- Use a mixed-variable kernel (Matérn for continuous + Hamming for ligand type).

- Initialize with 25 DoE points, including 5 historical data points from similar reactions.

High-Throughput Batch Execution:

- Configure a segmented flow reactor system with on-line HPLC.

- In each Bayesian iteration, request a batch of 6 suggested reaction conditions.

- Run these conditions in parallel using an automated scheduler.

- Feed all 6 yields back into the model simultaneously.

Convergence Criteria:

- Stop when the predicted mean yield at the suggested point is within 2% of the best observed yield over two consecutive batches.

Visualizations

Bayesian Optimization Workflow for Catalysis

BO Catalyst Research Toolkit Components

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Catalyst Optimization Experiments

| Item/Category | Example & Function |

|---|---|

| Precursor Salts | Pd(OAc)₂, H₂PtCl₆, Co(NO₃)₂: Metal sources for impregnation or co-precipitation catalyst synthesis. |

| Ligand Library | Phosphines (XPhos, SPhos), N-Heterocyclic Carbenes (NHCs): Systematic variation of steric/electronic properties in homogeneous catalysis. |

| Solid Supports | TiO₂ (P25), SiO₂, Al₂O₃, Carbon: High-surface-area supports for dispersing active metal sites. |

| Automated Synthesis Platform | Unchained Labs Big Kahuna, Chemspeed Technologies: For reproducible, high-throughput catalyst preparation. |

| High-Pressure Reaction Systems | Series 5000 Multiple Reactor System (Parr): For testing catalysts under industrially relevant pressures. |

| In-situ Characterization Cells | Linkam CCR1000, Harrick Reactor Cells: Allows Raman/IR spectroscopy during reaction to monitor intermediates. |

| Process Analytical Technology (PAT) | Mettler Toledo ReactIR, EasyMax HFCal: Real-time reaction monitoring for kinetic data collection. |

| BO Software Suite | BoTorch (PyTorch-based), GPyOpt: Open-source frameworks for building custom optimization loops. |

Troubleshooting Guide & FAQ

Q1: My Gaussian Process (GP) model predictions are poor despite having data. What could be wrong? A: Common issues and solutions:

- Incorrect Kernel Choice: The kernel defines the GP's assumptions about function smoothness. For catalysis data, which can have complex trends, the default Squared Exponential kernel may fail.

- Solution: Experiment with Matérn kernels (e.g., Matérn 5/2 for less smooth functions) or combine kernels (e.g., Linear + RBF to capture a global trend with smooth local deviations, or an added Periodic term for oscillatory behavior).

- Hyperparameter Pitfalls: Kernel length scales and noise parameters are often poorly initialized.

- Solution: Always optimize hyperparameters by maximizing the log marginal likelihood. Use bounds (e.g., Bounds([1e-5, 1e5])) to prevent unrealistic values.

- Data Scaling: GP performance degrades with unscaled features.

- Solution: Standardize both input variables (catalyst composition, temperature, pressure) and the target output (e.g., yield) to have zero mean and unit variance before modeling.

Q2: The Expected Improvement (EI) acquisition function keeps sampling the same point. How do I escape this local optimum?

A: This indicates over-exploitation. EI balances exploration and exploitation via its trade-off parameter xi.

- Low xi (e.g., 0.01): Favors exploitation. Can get stuck.

- High xi (e.g., 0.1): Encourages more exploration.

- Solution: Schedule xi dynamically. Start with a higher value (0.1) for early exploration, then reduce it (to 0.01) for fine-tuning near promising optima.
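The effect of xi can be illustrated with the closed-form EI for a maximization problem. This is a sketch under assumptions: the linear decay in `xi_schedule` is just one possible schedule, and the two candidate points are made-up values on a standardized scale.

```python
import math

def expected_improvement(mu, sigma, best, xi=0.01):
    """Closed-form EI (maximization) at one candidate with GP mean mu and std sigma."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mu - best - xi) * cdf + sigma * pdf

def xi_schedule(iteration, n_iterations, xi_start=0.1, xi_end=0.01):
    """Linear decay from xi_start to xi_end (an assumed schedule)."""
    frac = min(max(iteration / max(n_iterations - 1, 1), 0.0), 1.0)
    return xi_start + frac * (xi_end - xi_start)

best = 1.00                      # incumbent (best observed value)
exploit = (1.05, 0.02)           # slightly better mean, low uncertainty
explore = (0.90, 0.15)           # worse mean, high uncertainty

for xi in (0.01, 0.1):
    ei_exploit = expected_improvement(*exploit, best, xi)
    ei_explore = expected_improvement(*explore, best, xi)
    winner = "exploit" if ei_exploit > ei_explore else "explore"
    print(f"xi={xi}: EI(exploit)={ei_exploit:.5f}, EI(explore)={ei_explore:.5f} -> {winner}")
```

With these illustrative numbers, the low-uncertainty candidate wins at xi = 0.01 and the high-uncertainty candidate wins at xi = 0.1, which is the behavior the schedule exploits.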

Q3: The posterior distribution from my GP is too narrow/overconfident and doesn't encompass new validation data. A: This is a sign of underestimated noise, often due to an inappropriate likelihood model.

- Problem: Using a standard Gaussian likelihood assumes homoscedastic (constant) observation noise.

- Solution: For catalytic experiments where noise often scales with signal, model heteroscedastic noise explicitly or use a Student-t likelihood for more robust inference against outliers.

Q4: Bayesian Optimization (BO) is slow with my high-dimensional catalyst design space (>10 variables). How can I speed it up? A: Standard BO scales poorly with dimensions. Implement dimensionality reduction.

- Protocol: Before BO, use Principal Component Analysis (PCA) on the initial design dataset (e.g., from a space-filling design). Use the first n principal components (explaining >95% variance) as the new input space for the GP. Propose experiments in this latent space and map back to the original catalyst descriptors for validation.
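The projection and back-mapping can be sketched with a plain SVD-based PCA. The descriptor matrix below is synthetic (12 correlated descriptors generated from 3 hidden factors), and the helper names are assumptions made for illustration.

```python
import numpy as np

def fit_pca(X, var_target=0.95):
    """Standardize X and keep the fewest components reaching var_target."""
    mean, scale = X.mean(axis=0), X.std(axis=0)
    scale = np.where(scale == 0.0, 1.0, scale)
    Z = (X - mean) / scale
    _, s, Vt = np.linalg.svd(Z, full_matrices=False)
    explained = np.cumsum(s**2) / np.sum(s**2)
    k = min(int(np.searchsorted(explained, var_target)) + 1, len(s))
    return {"mean": mean, "scale": scale,
            "components": Vt[:k], "explained": explained[k - 1]}

def to_latent(X, pca):
    """Project descriptors into the reduced space used by the GP."""
    return ((X - pca["mean"]) / pca["scale"]) @ pca["components"].T

def from_latent(Z, pca):
    """Map a latent-space proposal back to approximate original descriptors."""
    return (Z @ pca["components"]) * pca["scale"] + pca["mean"]

# Synthetic example: 20 catalysts, 12 correlated descriptors
rng = np.random.default_rng(0)
factors = rng.normal(size=(20, 3))
mixing = rng.normal(size=(3, 12))
descriptors = factors @ mixing + 0.01 * rng.normal(size=(20, 12))

pca = fit_pca(descriptors)
latent = to_latent(descriptors, pca)
reconstructed = from_latent(latent, pca)
print(latent.shape, round(float(pca["explained"]), 4))
```

Note that a proposal mapped back from latent space is only an approximation of a physical descriptor vector, so it usually needs to be snapped to the nearest synthesizable catalyst before testing.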

Experimental Protocols

Protocol 1: Initial Data Collection for GP Prior

- Design: Perform a Latin Hypercube Sample (LHS) design across your experimental parameter space (e.g., metal loading %, promoter concentration, calcination temperature).

- Execution: Synthesize and test catalysts according to the LHS design points. Measure primary performance metric (e.g., conversion rate at fixed T,P).

- Data Preparation: Log all parameters and results. Standardize data as described in FAQ Q1.
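A Latin Hypercube design can be generated in a few lines. The sketch below is one simple implementation; the three parameter ranges and the choice of 15 points are hypothetical.

```python
import numpy as np

def latin_hypercube(n_points, bounds, seed=None):
    """Return an (n_points, dim) LHS design within the given (low, high) bounds."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)
    dim = len(bounds)
    # One stratum per point in every dimension, jittered inside the stratum
    u = (rng.random((n_points, dim)) + np.arange(n_points)[:, None]) / n_points
    # Shuffle each column independently so strata are paired at random
    for j in range(dim):
        u[:, j] = rng.permutation(u[:, j])
    return bounds[:, 0] + u * (bounds[:, 1] - bounds[:, 0])

# Hypothetical ranges: metal loading (wt%), promoter conc. (wt%), calcination T (°C)
bounds = [(0.5, 5.0), (0.0, 2.0), (300.0, 600.0)]
design = latin_hypercube(15, bounds, seed=42)
print(design.round(2))
```

Each parameter range is split into 15 equal intervals and every interval is sampled exactly once, which is what distinguishes LHS from plain random sampling.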

Protocol 2: Single BO Iteration for Catalyst Optimization

- Model Update: Fit the GP model to all available data (standardized) by optimizing kernel hyperparameters.

- Proposal Generation: Maximize the Expected Improvement acquisition function over the bounded parameter space.

- Experimental Validation: Synthesize and test the catalyst at the proposed conditions.

- Data Augmentation: Add the new result to the dataset. Repeat from step 1 until a performance threshold or iteration limit is reached.

Data Presentation

Table 1: Comparison of Common Kernels for Catalysis GP Models

| Kernel | Mathematical Form | Best For | Hyperparameters to Optimize |

|---|---|---|---|

| Squared Exp. (RBF) | $k(r) = \sigma^2 \exp(-\frac{r^2}{2l^2})$ | Smooth, continuous trends | Length scale (l), variance ($\sigma^2$) |

| Matérn 3/2 | $k(r) = \sigma^2 (1 + \sqrt{3}r/l) \exp(-\sqrt{3}r/l)$ | Less smooth, jagged functions | Length scale (l), variance ($\sigma^2$) |

| Periodic | $k(r) = \sigma^2 \exp(-\frac{2\sin^2(\pi r / p)}{l^2})$ | Oscillatory behavior (e.g., pH cycles) | Period (p), length scale (l) |

| Linear | $k(\mathbf{x}, \mathbf{x}') = \sigma^2 \mathbf{x} \cdot \mathbf{x}'$ | Capturing linear trends/ramps | Variance ($\sigma^2$) |
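The kernel formulas in Table 1 translate directly into code. This sketch evaluates them as functions of the distance r (or of the input vectors, for the linear kernel); the default hyperparameter values are placeholders.

```python
import numpy as np

def rbf(r, l=1.0, sigma2=1.0):
    """Squared exponential kernel as a function of distance r."""
    return sigma2 * np.exp(-r**2 / (2.0 * l**2))

def matern32(r, l=1.0, sigma2=1.0):
    """Matérn 3/2 kernel as a function of distance r."""
    a = np.sqrt(3.0) * r / l
    return sigma2 * (1.0 + a) * np.exp(-a)

def periodic(r, l=1.0, p=1.0, sigma2=1.0):
    """Periodic kernel with period p."""
    return sigma2 * np.exp(-2.0 * np.sin(np.pi * r / p) ** 2 / l**2)

def linear(x, x_prime, sigma2=1.0):
    """Linear kernel on input vectors."""
    return sigma2 * float(np.dot(x, x_prime))

r = np.linspace(0.0, 2.0, 5)
print("RBF       ", rbf(r).round(4))
print("Matérn 3/2", matern32(r).round(4))
print("Periodic  ", periodic(r, p=2.0).round(4))
```

All three stationary kernels equal the variance at r = 0, and the periodic kernel returns to that value whenever r is a multiple of the period.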

Table 2: Effect of EI xi Parameter on Optimization Performance

| xi Value | Behavior | Avg. Iterations to Find Optimum* | Recommended Phase |

|---|---|---|---|

| 0.00 | Pure exploitation | 42 | Final refinement |

| 0.01 | Balanced (default) | 38 | General use |

| 0.10 | High exploration | 31 | Initial exploration (<20% budget) |

*Simulated results for a benchmark Branin function.

Visualizations

Bayesian Optimization Workflow for Catalysis

GP Posterior Update with New Data

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Catalysis BO Experiments

| Item | Function in Catalysis BO | Example/Supplier Note |

|---|---|---|

| Precursor Salts | Source of active metal components (e.g., Pt, Pd, Ni). | Chloroplatinic acid, Palladium nitrate. Use high-purity (>99.99%) for reproducibility. |

| Support Materials | High-surface-area carriers (e.g., Al2O3, TiO2, Zeolites). | Ensure consistent particle size and pore volume between batches. |

| Automated Synthesis Robot | Enables precise, high-throughput preparation of catalyst libraries from BO proposals. | Enables rapid iteration. |

| Plug-Flow Reactor System | Bench-scale testing unit for evaluating catalyst performance under proposed conditions. | Must have precise control over T, P, and gas flow rates. |

| Gas Chromatograph (GC) | Analytical instrument for quantifying reaction products and calculating yields/conversion. | Essential for generating the objective function data for the GP. |

| BO Software Library | Codebase for implementing GP, EI, and optimization loops. | Common choices: GPyTorch, scikit-optimize, or BoTorch. |

Implementing Bayesian Optimization in Your Catalysis Lab: A Step-by-Step Workflow and Best Practices

Technical Support & FAQs for Bayesian Optimization in Catalysis Research

Q1: How do I choose between a single-objective and a multi-objective optimization for my catalytic reaction system? A: The choice depends on your research's primary bottleneck and end-goal. Use single-objective optimization (e.g., maximizing yield) when one key performance indicator (KPI) is overwhelmingly critical for a proof-of-concept or when other targets are already acceptable. Use multi-objective optimization (e.g., simultaneously optimizing yield, selectivity, and stability) when developing a catalyst for practical deployment, as trade-offs between these objectives are inevitable. In Bayesian optimization, a single-objective problem uses an acquisition function like Expected Improvement (EI), while multi-objective approaches use Pareto-front-based methods like EHVI (Expected Hypervolume Improvement).

Q2: My Bayesian optimization algorithm seems to get "stuck" in a local optimum for yield, severely compromising selectivity. What troubleshooting steps should I take? A: This is a common issue when the optimization goal is poorly defined.

- Check Your Objective Function: For a single-objective run, ensure your objective (e.g., yield) isn't implicitly rewarding poor selectivity. Consider a composite objective like Yield × Selectivity.

- Switch to Multi-Objective Formalism: If running single-objective, re-frame as a multi-objective problem. This explicitly maps the trade-off surface (Pareto front) between yield and selectivity, preventing the algorithm from ignoring selectivity entirely.

- Adjust Acquisition Function: For multi-objective, verify you are using a proper metric like EHVI. Check its hyperparameters (e.g., reference point).

- Review Experimental Noise: High variance in stability measurements can confuse the model. Increase replicate counts for stability assays to reduce noise.

Q3: What are the best practices for quantitatively defining "catalyst stability" as an objective for Bayesian optimization? A: Stability must be a quantifiable metric. Common measures include:

- Turnover Number (TON): Total moles of product per mole of catalyst before deactivation.

- Decay Constant (k_d): Fitted from activity vs. time data.

- Cycle Number: For batch processes, the number of cycles until yield drops below a threshold (e.g., <80% of initial).

You must choose a metric that is:

- Measurable in-line or in situ: Allows for frequent data points.

- Integratable into the Optimization Loop: The metric should be available within a reasonable time frame to inform the next experiment. For long-term stability, you may need to use a short-term proxy (e.g., initial deactivation rate from the first 3 cycles).

Q4: How do I handle conflicting data when yield and selectivity have different optimal reaction conditions? A: This is the core challenge addressed by multi-objective Bayesian optimization (MOBO). The algorithm does not return a single "best" condition but a set of non-dominated solutions (the Pareto front). Your task is to analyze this front post-optimization. The choice from the Pareto set is a strategic decision based on downstream costs (e.g., if product separation is expensive, you might choose a high-selectivity condition even with slightly lower yield).
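To make the idea of a non-dominated set concrete, here is a small sketch that filters a list of (yield, selectivity) results down to its Pareto front. The data points are invented for illustration, and both objectives are assumed to be maximized.

```python
def pareto_front(points):
    """Return the non-dominated points; every objective is maximized."""
    front = []
    for i, p in enumerate(points):
        dominated = any(
            all(qk >= pk for qk, pk in zip(q, p))
            and any(qk > pk for qk, pk in zip(q, p))
            for j, q in enumerate(points)
            if j != i
        )
        if not dominated:
            front.append(p)
    return front

# Hypothetical (yield %, selectivity %) results from six experiments
results = [(98, 72), (65, 99), (95, 95), (90, 90), (80, 97), (70, 80)]
front = pareto_front(results)
print(front)
```

Here (90, 90) and (70, 80) drop out because (95, 95) is at least as good on both objectives; the remaining four conditions are the trade-off set from which the final choice is made.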

Data Presentation: Key Optimization Metrics & Trade-offs

Table 1: Quantitative Comparison of Single vs. Multi-Objective Bayesian Optimization Outcomes for a Model C–N Cross-Coupling Reaction

| Optimization Goal | Best Yield (%) | Best Selectivity (%) | Stability (TON) | Number of Experiments to Convergence | Key Insight |

|---|---|---|---|---|---|

| Single-Objective: Maximize Yield | 98.5 | 72.3 | 1,200 | 28 | Selectivity sacrificed; catalyst loading driven very high, lowering TON. |

| Single-Objective: Maximize Selectivity | 65.4 | 99.8 | 15,000 | 32 | Yield plateaus at moderate level; high stability achieved. |

| Multi-Objective: Yield & Selectivity | 95.1 | 95.7 | 8,500 | 35 | Identified Pareto front; selected balanced condition from optimal trade-off set. |

| Multi-Objective: Yield, Selectivity, & Stability | 92.3 | 94.2 | 12,100 | 45 | More complex trade-off; convergence slower but solution is more industrially relevant. |

Experimental Protocols

Protocol 1: High-Throughput Screening for Multi-Objective Bayesian Optimization (Yield, Selectivity, Stability Proxy)

- Define Search Space: Identify key continuous (temperature, concentration, pressure) and categorical (ligand type, base) variables.

- Initial Design: Use a space-filling design (e.g., Sobol sequence) to run 10-15 initial experiments.

- Parallel Analysis: For each reaction condition:

- Quench and Analyze: Use UPLC/GC at reaction endpoint to determine conversion and yield.

- Calculate Selectivity: Selectivity = (Yield of Desired Product / Total Conversion) × 100%.

- Stability Proxy: Measure yield in the same reaction over 3 consecutive cycles (simple filtration/recycle for heterogeneous catalysis) or sample reaction aliquots over time for homogeneous catalysis to fit an initial decay rate.

- Model Training: Fit separate Gaussian Process (GP) surrogate models for each objective (Yield, Selectivity, Stability Proxy) using the experimental data.

- Acquisition & Next Experiment Selection: Use the EHVI acquisition function to calculate the condition that promises the greatest gain in the multi-dimensional objective space. Execute the top 3-4 suggested experiments in parallel.

- Iterate: Repeat steps 4-5 for 30-50 iterations or until the Pareto front ceases to improve significantly.
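The selectivity formula and the stability proxy from the parallel-analysis step can be computed as follows. This is a sketch that assumes first-order (exponential) deactivation, so the decay rate is the negative slope of ln(yield) against cycle index; the function names and example numbers are hypothetical.

```python
import math

def selectivity(yield_pct, conversion_pct):
    """Selectivity (%) = yield of desired product / total conversion x 100."""
    if conversion_pct <= 0:
        raise ValueError("conversion must be positive")
    return yield_pct / conversion_pct * 100.0

def initial_decay_rate(cycle_yields):
    """Least-squares slope of ln(yield) vs. cycle index, sign-flipped.

    Larger values mean faster deactivation (assumes exponential decay).
    """
    n = len(cycle_yields)
    xs = list(range(n))
    ys = [math.log(y) for y in cycle_yields]
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return -sxy / sxx

# Hypothetical measurements for one reaction condition
print(selectivity(yield_pct=81.0, conversion_pct=90.0))
print(initial_decay_rate([90.0, 84.0, 79.0]))
```

Because the optimizer maximizes its objectives, the decay rate would be passed in negated (or converted to a half-life) when used as the stability proxy.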

Protocol 2: Measuring Long-Term Stability (TON) for Final Catalyst Validation

- Scale-Up: Perform the reaction at the candidate optimal condition(s) identified from Bayesian optimization on a preparative scale.

- Continuous Monitoring: Use in situ spectroscopy (e.g., FTIR, Raman) or periodic sampling with chromatography to track product formation over time.

- Endpoint Determination: Run the reaction until catalyst activity is negligible (e.g., conversion rate < 5% of initial rate).

- Quantification: Calculate the total moles of product generated. Calculate TON = (Total moles of product) / (Total moles of catalyst). This final, accurate TON can be used to validate the stability proxy used during the optimization loop.

Visualizations

Title: Decision Flowchart: Single vs Multi-Objective Optimization

Title: Multi-Objective Bayesian Optimization Experimental Loop

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Catalytic Optimization Studies

| Item & Example | Function in Optimization | Key Consideration for BO |

|---|---|---|

| Precatalyst Libraries (e.g., Pd(II) salts, Ru pincer complexes) | Source of catalytic activity; a categorical variable for optimization. | Use one-hot encoding or a dedicated categorical kernel (e.g., Hamming) in the GP model to handle these discrete choices. |

| Ligand Libraries (e.g., phosphines, NHC precursors, organic ligands) | Modulate catalyst activity, selectivity, and stability. Often the most impactful variable. | Treat as categorical. Screen in combination with precatalysts. Consider substrate-specific libraries. |

| High-Throughput Reactor Blocks (e.g., 24-well parallel pressure reactors) | Enables rapid, parallel execution of experiments suggested by the BO algorithm. | Integration with automated liquid handlers is ideal for minimizing human error and increasing throughput. |

| In-Situ/Online Analytics (e.g., ReactIR, GC/MS autosamplers) | Provides near-real-time kinetic data (conversion, selectivity) for faster iteration. | Critical for defining stability proxies. Data must be formatted for automatic ingestion by the BO software. |

| Internal Standard (e.g., dodecane for GC, mesitylene for NMR) | Enables accurate and precise quantitative analysis of yield and selectivity from chromatographic/spectroscopic data. | Consistency is key for reducing measurement noise, which improves GP model accuracy. |

| Deactivation Agents (e.g., Mercury, CS2, P(V) additives) | Used in mechanistic poisoning studies to validate hypothesized active species and inform stability objectives. | Experiments can be added to the BO loop to explicitly probe stability, though they may be time-consuming. |

Troubleshooting Guide & FAQs for Bayesian Optimization in Catalysis Research

Q1: How do I define the bounds of my parameter search space effectively to avoid excluding the optimum? A: Improper bounding is a common pitfall. Use prior knowledge from literature or preliminary scouting experiments to set initial bounds. For a heterogeneous catalyst composition with three metals (e.g., Pt, Pd, Ni), your parameter space for molar ratios might be [0-1] for each, constrained to sum to 1. A Bayesian optimizer can handle this simplex constraint. If initial optimization runs suggest the optimum is at a boundary (e.g., Ni consistently at its upper limit), iteratively expand that bound in subsequent optimization rounds.
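The simplex constraint and the boundary check can be sketched as below. Sampling from a flat Dirichlet distribution is one simple way to draw compositions that sum to 1; the helper names and the 2% boundary tolerance are assumptions for illustration.

```python
import numpy as np

def sample_compositions(n_points, n_metals=3, seed=None):
    """Draw molar-ratio vectors uniformly from the simplex (rows sum to 1)."""
    rng = np.random.default_rng(seed)
    return rng.dirichlet(np.ones(n_metals), size=n_points)

def at_bound(value, lower, upper, rel_tol=0.02):
    """True if value lies within rel_tol of either end of [lower, upper]."""
    span = upper - lower
    return (value - lower) <= rel_tol * span or (upper - value) <= rel_tol * span

compositions = sample_compositions(8, seed=1)   # columns: e.g., Pt, Pd, Ni fractions
print(compositions.round(3))

# Hypothetical check: best temperature found so far vs. its search bounds
print(at_bound(149.0, lower=50.0, upper=150.0))   # optimum pressed against the upper limit
```

If `at_bound` keeps returning True for the best conditions over several rounds, that is the signal to widen the corresponding bound before continuing.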

Q2: My Bayesian optimization loop appears to be "stuck" exploring a suboptimal region. What could be wrong? A: This often relates to the acquisition function's balance between exploration and exploitation. If using the common Expected Improvement (EI) function, check the trade-off parameter (ξ). A default of ξ=0.01 favors exploitation. Try increasing it (e.g., to 0.1 or 0.3) to force more exploration of uncertain regions. Also, re-examine your kernel choice; a Matérn 5/2 kernel assumes a less smooth response than a squared exponential (RBF) kernel, so it is less prone to overconfident predictions away from the data and tends to leave more room for exploration.

Q3: How do I incorporate categorical variables, like catalyst support type (Al2O3, SiO2, TiO2), into a continuous parameter optimization? A: Bayesian optimization frameworks like GPyOpt or BoTorch support mixed parameter spaces. Categorical variables must be explicitly defined as such. The underlying Gaussian Process model uses a specific kernel (e.g., Hamming kernel) to handle categorical dimensions. Do not one-hot encode them as continuous variables without using a corresponding kernel, as this will mislead the model.

Q4: Reaction yield fluctuates significantly under seemingly identical conditions, adding noise. How can I make the optimization robust? A: You must account for experimental noise. Use a Gaussian Process model that includes a noise parameter (alpha or Gaussian likelihood). Specify an appropriate noise level based on your replicate experiments. Consider using an acquisition function like Noisy Expected Improvement. Protocol: Run at least 3 replicates for your initial design points (e.g., Latin Hypercube Sample) to estimate inherent noise variance before starting the iterative BO loop.
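The noise variance from replicated design points can be estimated with a pooled variance, as in this sketch. The replicate yields are invented, and the function name is an assumption.

```python
from statistics import variance

def pooled_noise_variance(replicate_groups):
    """Pooled sample variance across groups of replicates at identical conditions."""
    num = sum((len(g) - 1) * variance(g) for g in replicate_groups)
    den = sum(len(g) - 1 for g in replicate_groups)
    return num / den

# Hypothetical yields (%) from three design points, three replicates each
replicates = [
    [80.0, 82.0, 84.0],
    [50.0, 50.0, 50.0],
    [60.0, 61.0, 62.0],
]
noise_var = pooled_noise_variance(replicates)
print(noise_var)
```

The result is in squared yield units. If the targets are standardized before fitting, divide it by the squared standard deviation of the targets before passing it to the GP as a noise level (for example, the `alpha` argument of scikit-learn's `GaussianProcessRegressor`).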

Q5: What is the minimum number of initial data points needed before starting the iterative Bayesian optimization cycle? A: A rule of thumb is at least 4-5 points per dimension of your parameter space. For a 5-dimensional space (e.g., temperature, pressure, and three composition ratios), start with 20-25 well-designed initial points using space-filling design (e.g., Latin Hypercube) to build a reasonable prior model.

Key Experimental Protocols

Protocol 1: Initial Design of Experiments (DoE) for Space Characterization

- Define all parameters (e.g., Catalyst: Metal A %, Metal B %, Support Type; Reaction: Temperature, Pressure).

- Set plausible min/max bounds for each continuous parameter.

- Use a Latin Hypercube Sampling (LHS) algorithm to generate n points (where n = 5 × number of parameters) that evenly fill the multidimensional space.

- Execute experiments in randomized order to avoid systematic bias.

- Measure primary objective (e.g., Yield, TOF) and key secondary metrics (e.g., Selectivity).

Protocol 2: Iterative Bayesian Optimization Loop

- Model Training: Fit a Gaussian Process (GP) surrogate model to all accumulated data (initial + previous iterations). Use a Matérn 5/2 kernel.

- Acquisition Maximization: Calculate the Expected Improvement (EI) across the parameter space using the trained GP. Find the parameter set that maximizes EI.

- Experiment & Update: Run the experiment at the proposed conditions. Add the new (parameters, outcome) pair to the dataset.

- Convergence Check: Stop after a set number of iterations (e.g., 50) or when EI falls below a threshold (e.g., <1% improvement expected) for 3 consecutive iterations.
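The four steps above can be sketched end to end with NumPy alone. This is a deliberately simplified illustration, not a production loop: the GP uses a Matérn 5/2 kernel with fixed (not optimized) hyperparameters, EI is maximized over a random candidate set rather than with a gradient-based optimizer, and `toy_yield` is a hypothetical stand-in for running a real experiment.

```python
import math
import numpy as np

def matern52(A, B, ls=0.3):
    d = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)) / ls
    s = np.sqrt(5.0) * d
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)

def gp_posterior(X, y, Xs, noise=1e-4):
    """Posterior mean and std of a zero-mean, unit-variance GP (fixed hyperparameters)."""
    K = matern52(X, X) + noise * np.eye(len(X))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    Ks = matern52(X, Xs)
    mu = Ks.T @ alpha
    v = np.linalg.solve(L, Ks)
    var = np.clip(1.0 - (v**2).sum(axis=0), 1e-12, None)
    return mu, np.sqrt(var)

def expected_improvement(mu, sd, best, xi=0.01):
    z = (mu - best - xi) / sd
    cdf = 0.5 * (1.0 + np.array([math.erf(t / math.sqrt(2.0)) for t in z]))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mu - best - xi) * cdf + sd * pdf

def toy_yield(x):
    """Hypothetical experiment: inputs scaled to [0, 1], optimum near (0.7, 0.3)."""
    return float(np.exp(-8.0 * ((x[0] - 0.7) ** 2 + (x[1] - 0.3) ** 2)))

def run_bo(objective, X_init, max_iter=30, ei_tol=0.01, patience=3, seed=0):
    rng = np.random.default_rng(seed)
    X = np.array(X_init, dtype=float)
    y = np.array([objective(x) for x in X])
    low_ei_streak = 0
    for _ in range(max_iter):
        # Model training: standardize targets, then condition the GP on all data
        y_mean, y_std = y.mean(), y.std() + 1e-12
        y_s = (y - y_mean) / y_std
        # Acquisition maximization over a random candidate set
        cand = rng.random((2000, X.shape[1]))
        mu, sd = gp_posterior(X, y_s, cand)
        ei = expected_improvement(mu, sd, y_s.max())
        x_next = cand[np.argmax(ei)]
        # Experiment and update
        X = np.vstack([X, x_next])
        y = np.append(y, objective(x_next))
        # Convergence check: max EI (original units) below 1% of the best value
        small = ei.max() * y_std < ei_tol * abs(y.max())
        low_ei_streak = low_ei_streak + 1 if small else 0
        if low_ei_streak >= patience:
            break
    return X, y

X_init = np.random.default_rng(1).random((10, 2))
X, y = run_bo(toy_yield, X_init)
print(f"{len(y)} experiments, best toy yield = {y.max():.3f}")
```

In practice the hyperparameters would be re-fitted at every iteration by maximizing the log marginal likelihood, and a library such as BoTorch or GPyOpt would handle both the model and the acquisition optimization.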

Table 1: Impact of Acquisition Function Hyperparameter (ξ) on Optimization Outcome

| ξ Value | Exploration Emphasis | Trials to Find Optimum* | Risk of Stagnation | Best Use Case |

|---|---|---|---|---|

| 0.01 | Low (Exploit) | 45 | High | Refined search near a known good region |

| 0.10 | Moderate | 28 | Medium | General-purpose balance (recommended start) |

| 0.30 | High | 35 | Low | Noisy systems or when the optimum is unknown |

*Hypothetical results for a 5D problem with 25 initial points.

Table 2: Comparison of Common GP Kernels for Catalysis Parameter Spaces

| Kernel | Smoothness Assumption | Extrapolation Behavior | Typical Use in Catalysis |

|---|---|---|---|

| Squared Exp. | Very Smooth | Over-confident | Rarely recommended; for very well-behaved systems |

| Matérn 3/2 | Less Smooth | Cautious | Systems with moderate, expected fluctuations |

| Matérn 5/2 | Moderately Smooth | Reasonable | Default choice for most chemical reaction data |

| Periodic | Cyclic Patterns | Periodic | Reactions with suspected oscillatory behavior |

Visualizations

Bayesian Optimization Workflow for Catalyst Screening

Catalyst Optimization Parameter Hierarchy

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Catalyst Optimization Studies

| Item/Reagent | Typical Specification | Function in Experiment |

|---|---|---|

| Metal Precursors | Chlorides, nitrates, or acetylacetonates of Pt, Pd, Ni, etc. (≥99.9%) | Source of active metal component for catalyst synthesis. |

| Catalyst Supports | High-purity γ-Al₂O₃, SiO₂, TiO₂ (specific surface area >100 m²/g) | Provide high surface area for metal dispersion and can influence reaction pathways. |

| Reducing Agents | Hydrogen gas (H₂, 5% in Ar), Sodium borohydride (NaBH₄) | Reduce metal precursors to their active metallic state during catalyst activation. |

| Reactants & Substrates | e.g., Nitrobenzene, Alkynes, Carbon monoxide (CO) | Target molecules for the catalytic reaction being optimized (e.g., hydrogenation, coupling). |

| Internal Standard | e.g., Dodecane for GC analysis (Chromatographic grade) | Quantifies reaction conversion and yield accurately via Gas Chromatography (GC). |

| Bayesian Opt. Software | GPyOpt, BoTorch, or custom Python with scikit-learn & GPflow | Core platform for building the surrogate model and executing the optimization algorithm. |

Troubleshooting Guides & FAQs

Q1: During a catalyst screening BO loop, my Gaussian Process (GP) model predictions are poor and the optimizer stalls. What could be wrong?

A: This is often due to an inappropriate kernel choice or hyperparameters. For catalytic reaction data, length scales can vary dramatically across the feature space (e.g., metal identity vs. ligand concentration).

- Action 1: Switch from a standard Radial Basis Function (RBF) kernel to a Matérn 5/2 kernel, which is less smooth and better for capturing sharper, physical phenomena. Re-optimize kernel hyperparameters (length scale, variance) by maximizing the log marginal likelihood.

- Action 2: If your dataset grows beyond ~2000 points, consider sparse GP approximations (e.g., SVGP) to combat cubic computational scaling. For high-dimensional catalyst descriptors (e.g., >20), use an Automatic Relevance Determination (ARD) kernel to identify irrelevant features.

- Protocol: Standardize all input features (mean=0, std=1) and, if applicable, transform your target (e.g., yield, TOF) using a log or Box-Cox transformation to better satisfy GP's implicit normality assumption.

Q2: My Random Forest (RF) surrogate provides fast predictions but the Bayesian optimizer seems excessively exploitative, missing global optima. How can I fix this?

A: RFs can produce non-smooth, piecewise constant prediction surfaces. The default acquisition function (e.g., Expected Improvement) may get stuck in a local region.

- Action 1: Increase the number of trees (n_estimators) to 500 or more and the minimum samples per leaf to 5. This smooths the mean prediction and improves uncertainty quantification.

- Action 2: Modify the acquisition function. Use the Upper Confidence Bound (UCB) with an increased exploration weight (kappa or beta). Alternatively, use Thompson Sampling by drawing predictions from the forest's posterior.

- Protocol: Implement a noise-aware configuration. Set bootstrap=True and ensure max_samples is less than 1.0 to generate the jackknife-based uncertainty estimates critical for BO.

Q3: When using a Neural Network (NN) surrogate, the model's epistemic uncertainty is poorly calibrated, leading to overconfident exploration. How do I improve it?

A: Standard NNs do not natively provide predictive uncertainty. You must use specific architectures designed for uncertainty quantification.

- Action 1: Implement a Bayesian Neural Network (BNN) using variational inference or Monte Carlo Dropout (MC Dropout). For MC Dropout, ensure dropout layers are active during both training and prediction to generate stochastic predictions.

- Action 2: Use an ensemble of NNs (5-10 models) with randomized weight initializations. The mean prediction is the ensemble average, and the standard deviation across models provides the uncertainty estimate.

- Protocol: Use the Negative Log Likelihood (NLL) as the loss function, which trains the network to output both a mean and a variance, leading to better-calibrated uncertainties crucial for acquisition function guidance.
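The two ingredients mentioned above, the Gaussian negative log likelihood and the ensemble mean and spread, are shown here as plain functions. This is a framework-free sketch with made-up numbers; in a real network the same NLL expression would be written with the framework's tensor operations.

```python
import math
from statistics import mean, stdev

def gaussian_nll(y, mu, var):
    """Negative log likelihood of y under N(mu, var) for one observation."""
    return 0.5 * (math.log(2.0 * math.pi * var) + (y - mu) ** 2 / var)

def ensemble_prediction(member_predictions):
    """Ensemble mean and sample standard deviation across member networks."""
    return mean(member_predictions), stdev(member_predictions)

# A wrong prediction is penalized far more when it claims a small variance
print(gaussian_nll(y=1.0, mu=0.0, var=0.01))   # overconfident
print(gaussian_nll(y=1.0, mu=0.0, var=1.0))    # honest about its uncertainty

# Hypothetical yield predictions (%) from a 5-member ensemble at one condition
mu_ens, sd_ens = ensemble_prediction([70.0, 72.0, 74.0, 76.0, 78.0])
print(mu_ens, sd_ens)
```

The NLL comparison is the mechanism behind better calibration: the loss only rewards a small predicted variance when the predicted mean is actually close to the observation.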

Q4: For my multi-objective optimization (e.g., maximizing catalyst activity while minimizing cost), which surrogate model is most suitable?

A: All three can be extended, but GPs are often preferred for their well-defined multi-output extensions.

- Action: For 2-4 objectives, use independent GPs with a shared kernel or a coregionalized GP model if outputs are correlated. For RFs or NNs, train separate models per objective and compute a joint acquisition function like Expected Hypervolume Improvement (EHVI).

- Protocol: When using GPyTorch or BoTorch, utilize the MultiTaskGP model. Scale each objective function to unit variance before modeling to ensure equal weighting in the hypervolume calculation.

Quantitative Model Comparison Table

| Feature | Gaussian Process (GP) | Random Forest (RF) | Neural Network (NN) |

|---|---|---|---|

| Native Uncertainty | Excellent (posterior variance) | Good (jackknife/ensemble) | Requires modification (BNN/Ensemble) |

| Sample Efficiency | High (< 200 data points) | Medium | Low (> 1000 data points) |

| Scalability to Big Data | Poor (O(n³)) | Good (O(n log n)) | Excellent (O(n)) |

| Handling High Dimensions | Medium (requires ARD) | Good | Excellent (with architecture) |

| Model Interpretability | Medium (kernel choice) | High (feature importance) | Low (black box) |

| Typical Library | GPyTorch, Scikit-learn | Scikit-learn, SMAC3 | PyTorch, TensorFlow |

| Best For (Catalysis) | Initial, data-scarce campaigns | Mixed data types, categorical vars. | High-throughput data, complex descriptors |

Experimental Protocol: Benchmarking Surrogate Models for BO in Catalyst Discovery

Objective: To empirically evaluate GP, RF, and NN surrogate models within a BO loop for optimizing a catalytic reaction yield.

1. Dataset Generation:

- Use a known catalytic dataset (e.g., Buchwald-Hartwig amination from literature) with 5-10 continuous/categorical variables (catalyst, ligand, base, solvent, temperature, time).

- Start with an initial Design of Experiments (DoE) set of 20 points (e.g., Latin Hypercube).

- Define a held-out test set of 50 points representing the full design space.

2. Surrogate Model Configuration:

- GP: Use a Matérn 5/2 kernel. Optimize hyperparameters via L-BFGS-B, maximizing the marginal likelihood every 5 BO iterations.

- RF: Set n_estimators=500, min_samples_leaf=5, bootstrap=True. Use the spread of the individual trees' predictions as the uncertainty estimate.

- NN Ensemble: Use a 3-layer MLP (256, 128, 64 nodes) with ReLU. Train an ensemble of 5 networks with Adam (lr=1e-3). Uncertainty = standard deviation of ensemble predictions.

3. BO Loop Execution:

- Acquisition Function: Expected Improvement (EI) for all models.

- Run 50 sequential BO iterations. Each iteration: fit surrogate on all available data, maximize EI via multi-start optimization, evaluate the proposed condition in silico (or via the test set), and add the new (x, y) pair to the data.

- Record the Best Found Yield after each iteration.

4. Evaluation Metrics:

- Plot Best Found Yield vs. Number of Iterations for each model.

- Compute the Area Under the Curve (AUC) for each model's performance trajectory.

- Calculate final Regret (difference between global optimum and model's best found point).
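The three evaluation metrics can be computed from the sequence of observed yields, as in this sketch. The function names, the example trajectory, and the assumed global optimum are hypothetical; the AUC uses the trapezoidal rule with unit spacing between iterations.

```python
def best_so_far(yields):
    """Running maximum: best found yield after each iteration."""
    curve, best = [], float("-inf")
    for y in yields:
        best = max(best, y)
        curve.append(best)
    return curve

def trajectory_auc(best_curve):
    """Trapezoidal area under the best-found curve (unit iteration spacing)."""
    return sum((a + b) / 2.0 for a, b in zip(best_curve, best_curve[1:]))

def final_regret(best_curve, global_optimum):
    """Gap between the known global optimum and the best point found."""
    return global_optimum - best_curve[-1]

observed = [10.0, 30.0, 20.0, 50.0]      # hypothetical yields in evaluation order
curve = best_so_far(observed)
print(curve, trajectory_auc(curve), final_regret(curve, global_optimum=60.0))
```

A higher AUC indicates that a surrogate found good conditions earlier, which the final regret alone does not capture.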

Surrogate Model Decision Workflow

Bayesian Optimization Loop for Catalysis

The Scientist's Toolkit: Key Reagent Solutions for Catalytic BO Experiments

| Item | Function in BO-Driven Catalysis Research |

|---|---|

| Commercial Catalyst Libraries (e.g., from Sigma-Aldrich, Strem) | Provides a well-defined, purchasable search space of pre-characterized metal complexes and ligands for high-throughput experimentation. |

| HTE Reaction Blocks & Microplates | Enables parallel synthesis and screening of up to 96 catalytic reactions at once, generating the batch data required for efficient BO iteration. |

| Automated Liquid Handling Systems | Removes human error and ensures precise, reproducible dispensing of catalysts, substrates, and solvents for reliable data generation. |

| GC/MS or UPLC-MS with Autosamplers | Allows for rapid, quantitative analysis of reaction yields and selectivities, turning physical experiments into digital data for the surrogate model. |

| Chemical Descriptor Software (e.g., RDKit, Dragon) | Generates quantitative numerical features (e.g., steric/electronic parameters, molecular fingerprints) from catalyst structures for the model's input space. |

| BO Software Platform (e.g., BoTorch, AX Platform, custom Python) | The core engine that integrates surrogate modeling, acquisition function optimization, and manages the iterative experiment-design loop. |

Troubleshooting Guides and FAQs

Q1: During my catalysis Bayesian optimization (BO) loop, my algorithm seems to get stuck, repeatedly evaluating points in a similar region. What might be wrong with my acquisition function (AF) choice? A1: This is a classic sign of over-exploitation at the expense of exploration. Check your AF parameters:

- For Expected Improvement (EI): Ensure you are not using a very small or zero xi (exploration parameter). A default of 0.01 is common. Setting xi=0 removes the improvement margin and makes EI markedly greedier.

- For Upper Confidence Bound (UCB): The kappa parameter controls exploration. If kappa is set too low, the algorithm becomes overly greedy. Increase kappa (e.g., from 2.0 to 3.0 or higher) to force exploration of uncertain regions. A decaying schedule for kappa over iterations can also help.

- General Check: Verify your surrogate model (Gaussian Process) hyperparameters. Poor length-scales can lead to inaccurate uncertainty estimates, misleading any AF.

Q2: My Probability of Improvement (PI) function keeps selecting points very close to my current best observation, ignoring potentially better but more uncertain regions. How can I fix this? A2: PI is inherently exploitative. To mitigate this:

- Increase the trade-off parameter: The xi parameter in PI defines a "margin of improvement." Increasing xi (e.g., from 0.01 to 0.05 or 0.1) makes the algorithm consider points that are at least xi better than the current best, pushing it slightly into more uncertain regions.

- Consider switching AFs: If your experimental budget allows for more exploration, EI or UCB are often better defaults than PI. EI balances improvement magnitude with probability, naturally exploring more.

- Protocol: Run a short test (e.g., 10 BO iterations) comparing PI with xi=0.01 vs xi=0.1 on a benchmark function like the Branin-Hoo. Observe the coverage of the search space.

Q3: For optimizing catalyst yield, how do I choose between EI, PI, and UCB when each experiment is very expensive? A3: With high experimental cost, you want to maximize information gain per experiment.

- EI is often the recommended default, as it provides a good balance, weighting both the probability of improvement and the potential magnitude of improvement.

- UCB can be advantageous if you have a clear, fixed experimental budget and can tune kappa aggressively for a final best result. A protocol is to set kappa to decrease with iterations (e.g., kappa = initial_value / sqrt(iteration)).

- PI is generally less recommended for expensive experiments unless you are very close to a suspected optimum and want fine-grained local search.

- Protocol: Perform an initial design of experiments (DoE), fit your GP model, and plot the acquisition function surfaces for EI, PI, and UCB. The one that highlights the most informative and diverse candidate points (balancing known high-performance regions and unexplored spaces) may be best for your specific yield surface.
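The decaying-kappa schedule for UCB can be sketched in a few lines. The initial value of 3.0 and the two candidate points are illustrative assumptions, and iterations are counted from 1 so the square root is well defined.

```python
import math

def kappa_schedule(iteration, initial_value=3.0):
    """kappa = initial_value / sqrt(iteration), with iteration starting at 1."""
    return initial_value / math.sqrt(iteration)

def ucb(mu, sigma, kappa):
    """Upper Confidence Bound for a maximization problem."""
    return mu + kappa * sigma

# Two hypothetical candidates on a standardized scale: (posterior mean, posterior std)
known_good = (0.9, 0.05)
uncertain = (0.6, 0.30)

for iteration in (1, 25):
    kappa = kappa_schedule(iteration)
    scores = {"known_good": ucb(*known_good, kappa), "uncertain": ucb(*uncertain, kappa)}
    print(iteration, round(kappa, 2), max(scores, key=scores.get))
```

Early in the campaign the large kappa favors the uncertain candidate; by iteration 25 the same pair of candidates is ranked the other way, shifting the remaining budget toward refinement.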

Q4: I'm using UCB, but the scale of my objective function (e.g., turnover frequency) seems to affect the recommendations dramatically. What should I do?

A4: UCB is sensitive to the scale of the mean and standard deviation predictions. You must standardize your objective function (y) before modeling.

- Standardization Protocol: For `n` observations, compute the mean (μ_y) and standard deviation (σ_y) of your target values. Transform your training targets: `y_scaled = (y - μ_y) / σ_y`.

- Train the Gaussian Process on the scaled `y_scaled`.

- The acquisition function operates on this scaled space, and the `kappa` parameter is applied to the scaled uncertainty.

- Remember to inversely transform the GP's predictions back to the original scale for reporting.
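The standardization steps above can be sketched with the standard library alone. The turnover-frequency values are made up, and the helper names are hypothetical; note that the inverse transform shifts and scales the predicted mean but only scales the predicted standard deviation.

```python
import statistics

def fit_standardizer(y):
    """Return (mu_y, sigma_y) computed from the training targets."""
    mu_y = statistics.fmean(y)
    sigma_y = statistics.pstdev(y)  # population std; the sample std is also common
    if sigma_y == 0.0:
        sigma_y = 1.0  # avoid division by zero when all targets are identical
    return mu_y, sigma_y

def standardize(y, mu_y, sigma_y):
    return [(v - mu_y) / sigma_y for v in y]

def unstandardize_prediction(mean_scaled, std_scaled, mu_y, sigma_y):
    """Map a GP prediction from the scaled space back to original units."""
    return mean_scaled * sigma_y + mu_y, std_scaled * sigma_y

# Made-up turnover frequencies (h^-1) from five experiments.
tof = [120.0, 340.0, 95.0, 410.0, 260.0]
mu_y, sigma_y = fit_standardizer(tof)
y_scaled = standardize(tof, mu_y, sigma_y)

# A hypothetical scaled GP prediction, reported back in original units.
mean_orig, std_orig = unstandardize_prediction(0.8, 0.25, mu_y, sigma_y)
print(f"predicted TOF = {mean_orig:.1f} +/- {std_orig:.1f} h^-1")
```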

Data Presentation: Acquisition Function Comparison

Table 1: Key Characteristics of Common Acquisition Functions

| Feature | Expected Improvement (EI) | Probability of Improvement (PI) | Upper Confidence Bound (UCB) |
|---|---|---|---|
| Core Formula | EI(x) = E[max(f(x) - f(x*), 0)] | PI(x) = P(f(x) ≥ f(x*) + ξ) | UCB(x) = μ(x) + κ * σ(x) |
| Exploration Parameter | ξ (exploration) | ξ (trade-off/margin) | κ (exploration weight) |
| Exploitation Bias | Moderate | High | Tunable (Low to High) |
| Exploration Bias | Moderate | Low | Tunable (Low to High) |
| Response to Noise | Moderately Robust | Sensitive (can be misled) | Robust if κ is tuned |
| Typical Default Parameter | ξ = 0.01 | ξ = 0.01 | κ = 2.0 |
| Best Use Case in Catalysis | General-purpose optimization of yield/activity. | Fine-tuning near a known high-performance region. | When a clear budget exists and aggressive exploration is needed early. |

Table 2: Example Results from a BO Run on a Simulated Catalytic Activity Surface

| Iteration | Selected Condition (X) | Observed Activity | AF Used (κ=2.0, ξ=0.01) | GP Posterior Mean (μ) | GP Posterior Std (σ) |
|---|---|---|---|---|---|
| 6 (Initial Best) | [0.5, 0.5] | 78.2 | N/A | 75.4 | 4.1 |
| 7 | [0.7, 0.3] | 65.1 | UCB (Value: 83.6) | 71.2 | 6.2 |
| 8 | [0.2, 0.8] | 82.5 | EI (Value: 8.9) | 74.8 | 5.5 |
| 9 | [0.9, 0.9] | 55.3 | UCB (Value: 81.9) | 68.5 | 6.7 |
| 10 | [0.3, 0.6] | 80.1 | PI (Value: 0.65) | 78.9 | 3.2 |
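The UCB entries in Table 2 follow directly from the tabulated posterior mean and standard deviation, since UCB(x) = μ(x) + κ * σ(x) with κ = 2.0. A quick check of that arithmetic, using only the numbers from the table:

```python
kappa = 2.0

# iteration -> (posterior mean, posterior std, UCB value reported in Table 2)
ucb_rows = {
    7: (71.2, 6.2, 83.6),
    9: (68.5, 6.7, 81.9),
}

for iteration, (mu, sigma, reported) in ucb_rows.items():
    ucb = mu + kappa * sigma
    print(f"iteration {iteration}: computed {ucb:.1f}, reported {reported}")
```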

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Bayesian Optimization in Catalysis Research

| Item | Function in BO Experimental Loop |
|---|---|
| High-Throughput Experimentation (HTE) Rig | Enables rapid, automated synthesis and screening of catalyst candidates as dictated by BO suggestions. |
| Gaussian Process Software (e.g., GPyTorch, scikit-learn) | Core library for building the probabilistic surrogate model that predicts catalyst performance and uncertainty. |
| Bayesian Optimization Library (e.g., BoTorch, Ax, scikit-optimize) | Provides implementations of acquisition functions (EI, PI, UCB) and manages the optimization loop. |
| Design of Experiments (DoE) Software | Used to generate the initial, space-filling set of catalyst compositions/conditions to seed the BO model. |
| Standardized Performance Metric Assay | A reliable, reproducible activity/selectivity/stability measurement (e.g., GC-MS yield, turnover frequency) to serve as the objective f(x). |

Visualizations

[Figure: Decision Flowchart for Selecting an Acquisition Function]

[Figure: BO Workflow for Catalyst Optimization]

FAQs & Troubleshooting Guide

Q1: During the iterative loop, my acquisition function (e.g., Expected Improvement) suggests new experiment points that are extremely close to previous ones. Is this a sign of convergence or a problem with my model?

A: This is a common issue, often indicating one of two things:

- Over-exploitation: Your Gaussian Process (GP) model may be overconfident in a local region due to an inappropriate kernel length scale or noise estimate.

- Numerical Instability: Covariance matrices can become ill-conditioned after many iterations.

First, add a small "nugget" term (e.g., 1e-6) to your kernel's diagonal for numerical stability. Re-examine your kernel choice; a Matérn 5/2 kernel is often more robust than the RBF kernel. Consider switching to a different acquisition function like Upper Confidence Bound (UCB) with a dynamic `kappa` parameter to encourage exploration.

Q2: How do I handle experimental results that are clear outliers or failures in the Bayesian optimization loop?

A: Do not simply discard the data point, as it contains information. Model the failure explicitly. Two primary approaches are:

- Imputation with High Uncertainty: Assign the failed experiment a poor objective value (e.g., low yield) but significantly increase the noise parameter (alpha) for that specific data point in the GP model. This tells the model the observation is unreliable.

- Use a Two-Stage Model: Implement a classifier GP to model the probability of success (e.g., reaction worked/failed), and a regressor GP for the objective only on successful experiments. The joint acquisition function then balances the probability of success with the expected performance.
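One common way to combine the two models is to weight EI by the predicted probability of success. The sketch below assumes that formulation (the source does not specify the joint acquisition function), and it takes the classifier probability and the regressor posterior as plain numbers; the candidate names and values are hypothetical.

```python
import math

def expected_improvement(mu, sigma, best):
    """Closed-form EI for a Gaussian posterior (maximization)."""
    if sigma <= 0.0:
        return max(mu - best, 0.0)
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf

def success_weighted_ei(p_success, mu, sigma, best):
    """Assumed joint acquisition: P(reaction works) * EI(yield | it works)."""
    return p_success * expected_improvement(mu, sigma, best)

best_yield = 78.2
# Made-up outputs of the classifier GP (p_success) and regressor GP (mu, sigma).
candidates = [
    {"name": "mild_conditions", "p_success": 0.95, "mu": 76.0, "sigma": 3.0},
    {"name": "harsh_conditions", "p_success": 0.10, "mu": 80.0, "sigma": 4.0},
]

by_ei = max(candidates,
            key=lambda c: expected_improvement(c["mu"], c["sigma"], best_yield))
by_joint = max(candidates,
               key=lambda c: success_weighted_ei(c["p_success"], c["mu"],
                                                 c["sigma"], best_yield))
print("plain EI picks:", by_ei["name"])
print("success-weighted EI picks:", by_joint["name"])
```

Here plain EI is drawn to the harsh conditions with the higher predicted yield, while the weighted score prefers the conditions under which the reaction is likely to work at all.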

Q3: My objective function evaluation is very noisy (e.g., catalytic yield has high variance between technical replicates). How should I adjust the BO loop?

A: You must explicitly account for observation noise, including heteroscedastic noise if the variance differs between conditions.

- Kernel Modification: Use a kernel that includes a white noise term (`WhiteKernel` in scikit-optimize) whose magnitude can be learned or set based on your known replicate variance.

- Replicate Strategy: For points the acquisition function deems highly promising, allocate budget for 3-5 experimental replicates. Use the mean and standard error of these replicates to update the GP. The model's `alpha` parameter should reflect this aggregated noise.

- Acquisition Function: Noisy Expected Improvement (NEI) is specifically designed for this scenario; it integrates over the posterior uncertainty in the noisy observations instead of trusting a single observed best value.
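The replicate strategy can be reduced to a small aggregation step: each condition contributes its replicate mean as the GP target and the squared standard error of that mean as its noise variance. The sketch below uses made-up yields and a hypothetical helper name; the resulting per-point variances are the kind of values that could be passed as a per-sample `alpha` array to a GP implementation that accepts one (scikit-learn's `GaussianProcessRegressor` does). If the targets are standardized, the variances need to be divided by σ_y² as well.

```python
import math
import statistics

def summarize_replicates(values):
    """Return (mean, standard error, noise variance of the mean)."""
    if len(values) < 2:
        raise ValueError("need at least two replicates to estimate noise")
    mean = statistics.fmean(values)
    sem = statistics.stdev(values) / math.sqrt(len(values))
    return mean, sem, sem ** 2

# Made-up replicate yields (%) for two promising conditions.
replicates = {
    (0.2, 0.8): [81.9, 83.4, 82.2],
    (0.3, 0.6): [79.0, 80.6, 80.7, 80.1],
}

gp_targets, gp_noise = [], []
for condition, yields in replicates.items():
    mean, sem, noise_var = summarize_replicates(yields)
    gp_targets.append(mean)
    gp_noise.append(noise_var)
    print(f"{condition}: mean={mean:.2f}, SEM={sem:.2f}, alpha={noise_var:.3f}")
```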

Q4: What are concrete, quantitative stopping criteria for the iterative BO loop in catalysis research?

A: Relying solely on a fixed iteration count is inefficient. Implement a multi-faceted stopping rule as summarized in the table below.

| Stopping Criterion | Quantitative Threshold | Rationale |
|---|---|---|
| Objective Improvement | Max Expected Improvement < 0.01 * (Current Best Value) | Further expected gains are negligible relative to scale. |
| Parameter Space Convergence | All proposed points in last 5 iterations are within 5% (normalized) of a previous point. | The algorithm is no longer exploring new regions. |
| Uncertainty Reduction | Average posterior standard deviation across design space has decreased by <1% over last 10 iterations. | The model is no longer learning significantly. |
| Resource Exhaustion | Pre-defined budget (e.g., 100 experiments, 6 months) is reached. | Practical project constraint. |
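The table translates fairly directly into a check that runs once per iteration. The sketch below is one possible reading of the thresholds: it assumes the caller tracks, for each proposal, the normalized distance to its nearest earlier point, and the mean posterior standard deviation per iteration. How the triggered criteria are combined (any one, or several together) is left to the caller, since the table does not specify it.

```python
def check_stopping(max_ei, best_value, recent_min_distances,
                   mean_std_history, n_experiments, budget=100):
    """Return the names of the stopping criteria that are currently met."""
    triggered = []

    # 1) Objective improvement: max EI below 1% of the current best value.
    if max_ei < 0.01 * abs(best_value):
        triggered.append("objective_improvement")

    # 2) Parameter-space convergence: each of the last 5 proposals lies
    #    within 5% (normalized distance) of some earlier point.
    last5 = recent_min_distances[-5:]
    if len(last5) == 5 and all(d <= 0.05 for d in last5):
        triggered.append("parameter_convergence")

    # 3) Uncertainty reduction: mean posterior std fell by less than 1%
    #    over the last 10 iterations.
    if len(mean_std_history) > 10:
        old, new = mean_std_history[-11], mean_std_history[-1]
        if old > 0 and (old - new) / old < 0.01:
            triggered.append("uncertainty_reduction")

    # 4) Resource exhaustion: the experiment budget is used up.
    if n_experiments >= budget:
        triggered.append("budget_exhausted")

    return triggered

# Made-up loop state after 42 experiments.
reasons = check_stopping(
    max_ei=0.5,
    best_value=82.5,
    recent_min_distances=[0.20, 0.04, 0.03, 0.02, 0.04, 0.01],
    mean_std_history=[5.0 - 0.3 * i for i in range(12)],
    n_experiments=42,
)
print(reasons)
```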

Q5: After updating my GP model with new data, the predicted optimum shifts dramatically. Is this normal?

A: Significant shifts early in the loop (e.g., <20 experiments) are normal as the model learns the response surface. Large shifts late in the loop are a red flag. This is often caused by non-stationarity—the underlying function's properties change across the parameter space. Solution: Use a composite kernel, such as the sum of a Matérn kernel and a linear kernel (Matérn() + Linear()), to capture both smooth variations and global trends. Re-initialize the hyperparameter optimization when updating the model.

Key Experimental Protocol: High-Throughput Catalyst Screening Validation

Purpose: To validate a candidate catalyst identified by the Bayesian Optimization (BO) loop through rigorous, statistically robust testing.

Methodology:

- Replicate Testing: Perform the reaction with the candidate catalyst formulation (e.g., 1% Pd/ZnO) and conditions (Temperature, Pressure, Residence Time) a minimum of 6 times in a randomized block design.

- Control Inclusion: Include the previously best-known catalyst and a negative control in each experimental block.

- Analytical Calibration: Use internal standards for GC/MS or HPLC analysis to quantify yield/selectivity. Generate a calibration curve for the primary product daily.

- Statistical Analysis: Perform a one-way ANOVA comparing the candidate to the historical best. A p-value < 0.01, coupled with a yield improvement of >5% absolute, is considered a successful validation.

- Model Update: Feed the full statistical summary (mean, variance, n) of the validation experiments back into the GP dataset as a single, high-precision point.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Bayesian Optimization for Catalysis |
|---|---|
| Precatalyst Libraries (e.g., Metal Salt Sets, Ligand Kits) | Provides a discrete, combinatorial search space for the BO algorithm to propose new combinations. |
| Automated Liquid Handling / Microfluidic Reactors | Enables precise, high-throughput execution of the small-volume experiment proposals from the BO loop. |
| In-line/On-line Analytics (FTIR, GC) | Provides rapid objective function evaluation (e.g., conversion, selectivity) for immediate model updating. |
| Standardized Substrate Solutions | Ensures consistency in reactant concentration across dozens of automated experiments, reducing noise. |
| Internal Standard Kits | Critical for accurate quantitative analysis in high-throughput screening, providing the reliable data the GP model requires. |
| Hyperparameter Optimization Software (e.g., scikit-optimize, BoTorch) | The computational engine that fits the GP model and maximizes the acquisition function to propose the next experiment. |

Visualizations

[Figure: Bayesian Optimization Iterative Loop Workflow]

[Figure: Multi-Criteria Stopping Logic for BO Loop]

Technical Support Center: Troubleshooting & FAQs

FAQ 1: High-Level API Integration

- Q: When integrating BoTorch with my lab's robotic liquid handler (e.g., via a Python API), the optimization loop fails after the first batch with a `DeviceError` or timeout. What should I check?

- A: This is commonly a synchronization issue. The lab hardware operates on "wall-clock" time, while the optimization script proceeds without explicit waiting. Implement a robust polling loop with a status check.

- Protocol: After sending the experiment instructions (e.g., `robot_api.run_experiment(params)`), do not call `candidate = optimizer.get_next_candidate()` immediately. Instead, enter a loop that queries the hardware status every 30 seconds (`status = robot_api.get_status()`). Only when the status returns `"IDLE"` or `"COMPLETE"` and you have successfully loaded the new experimental results (`new_y = load_data()`), should you proceed to generate the next batch. Always include a timeout (e.g., 24 hours) and error flagging logic.
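A polling loop of this kind might look like the sketch below. The `get_status` callable stands in for the hypothetical `robot_api.get_status` from the protocol, the `"ERROR"` status is an assumption about what a failing device might report, and the sleep and clock functions are injectable so the loop can be exercised without real hardware or real waiting.

```python
import time

class ExperimentTimeout(RuntimeError):
    """Raised when the hardware does not finish within the allowed time."""

def wait_for_completion(get_status, poll_seconds=30.0,
                        timeout_seconds=24 * 3600,
                        sleep=time.sleep, clock=time.monotonic):
    """Block until get_status() reports IDLE/COMPLETE, an error, or a timeout."""
    deadline = clock() + timeout_seconds
    while True:
        status = get_status()
        if status in ("IDLE", "COMPLETE"):
            return status
        if status == "ERROR":  # assumed failure status; adapt to the real API
            raise RuntimeError("hardware reported an error; flag this run")
        if clock() >= deadline:
            raise ExperimentTimeout(f"no completion after {timeout_seconds} s")
        sleep(poll_seconds)

# Dry run with a fake device that finishes on the third status query.
fake_statuses = iter(["RUNNING", "RUNNING", "COMPLETE"])
final_status = wait_for_completion(lambda: next(fake_statuses),
                                   sleep=lambda seconds: None)
print("device finished with status:", final_status)
```

Only after this function returns, and the new results have been loaded and validated, would the script ask the optimizer for the next batch.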

FAQ 2: Numerical Instability in Surrogate Models

- Q: My Gaussian Process (GP) model in GPyOpt or BoTorch throws a `LinAlgError` (non-positive definite matrix) or a warning during fitting, especially after many iterations. How can I stabilize this?

- A: This is due to ill-conditioned covariance matrices from near-duplicate data points or noisy measurements.

- Protocol: Implement a two-step stabilization protocol.

  - Pre-processing: Before fitting the GP, add a small jitter (e.g., `jitter=1e-6`) to the diagonal of the kernel matrix. In BoTorch/GPyTorch, the jitter applied during Cholesky factorization can be raised (e.g., to `1e-4`) with the `gpytorch.settings.cholesky_jitter` context manager wrapped around the model fit.

  - Kernel Selection: Use a `Matérn 5/2` kernel instead of the `RBF` for more robustness. Explicitly model observation noise if your experimental noise is significant; in current GPyTorch this is handled by the likelihood (e.g., `GaussianLikelihood`) rather than by a separate white-noise kernel. Consider standardizing your input data (X) and output data (y) to have zero mean and unit variance.
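The failure mode and the jitter fix can be reproduced in a few lines without any GP library. The sketch below builds a Matérn 5/2 kernel matrix for a design that contains an exact duplicate point, shows that a plain Cholesky factorization fails, and retries with increasing jitter. It is a pure-Python illustration with a fixed length scale and hypothetical helper names; production libraries perform the same kind of retry internally.

```python
import math

def matern52(x1, x2, length_scale=1.0):
    """Matérn 5/2 kernel with unit variance."""
    r = math.sqrt(sum((a - b) ** 2 for a, b in zip(x1, x2))) / length_scale
    s = math.sqrt(5.0) * r
    return (1.0 + s + s * s / 3.0) * math.exp(-s)

def kernel_matrix(X, jitter=0.0):
    n = len(X)
    return [[matern52(X[i], X[j]) + (jitter if i == j else 0.0)
             for j in range(n)] for i in range(n)]

def cholesky(A):
    """Lower-triangular Cholesky factor; raises if A is not positive definite."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                if s <= 0.0:
                    raise ValueError("matrix is not positive definite")
                L[i][j] = math.sqrt(s)
            else:
                L[i][j] = s / L[j][j]
    return L

def cholesky_with_jitter(X, jitters=(0.0, 1e-8, 1e-6, 1e-4)):
    """Try increasing jitter values until the factorization succeeds."""
    for jitter in jitters:
        try:
            return cholesky(kernel_matrix(X, jitter)), jitter
        except ValueError:
            continue
    raise ValueError("factorization failed even with the largest jitter")

# The first two conditions are exact duplicates (e.g., a repeated experiment).
X = [[0.5, 0.5], [0.5, 0.5], [0.2, 0.8]]
L, used_jitter = cholesky_with_jitter(X)
print("factorization succeeded with jitter =", used_jitter)
```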


FAQ 3: Failed Automation Data Parsing

- Q: My automated HPLC or plate reader outputs `.csv` files, but my BO script fails to parse them, throwing `ValueError: could not convert string to float`.

- A: The file format or structure has likely changed mid-run. Raw instrument data is often messy.

- Protocol: Create a dedicated, robust parsing function with explicit error handling.
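A parsing function along these lines could collect unparseable cells instead of crashing the loop, so that bad rows are flagged for review while valid results still reach the optimizer. The sketch below is a minimal version using only the standard library; the column name `yield` and the sample export are assumptions for illustration and would need to match the actual instrument format.

```python
import csv
import io
import math

def parse_yield_csv(text, value_column="yield"):
    """Parse instrument CSV text; return (values, problems).

    problems is a list of (line_number, raw_cell) for cells that could not
    be converted to a finite float.
    """
    reader = csv.DictReader(io.StringIO(text))
    if reader.fieldnames is None or value_column not in reader.fieldnames:
        raise ValueError(f"column {value_column!r} not found in header: "
                         f"{reader.fieldnames}")
    values, problems = [], []
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        raw = (row.get(value_column) or "").strip()
        try:
            value = float(raw)
        except ValueError:
            problems.append((line_no, raw))
            continue
        if not math.isfinite(value):  # reject "nan" and "inf" readings
            problems.append((line_no, raw))
            continue
        values.append(value)
    return values, problems

# Made-up export with a text flag, stray whitespace, and an empty cell.
sample = "well,yield\nA1,78.2\nA2,N/A\nA3, 82.5 \nA4,\n"
values, problems = parse_yield_csv(sample)
print("parsed:", values)
print("flagged for review:", problems)
```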


Troubleshooting Guide: Common Error Codes & Resolutions

| Error Code / Message (Library) | Likely Cause | Immediate Action | Long-Term Fix |
|---|---|---|---|
| `RuntimeError: CUDA out of memory` (BoTorch) | Too many candidates or training points in batch mode. | Reduce `batch_size` or `num_samples`. Restart kernel. |