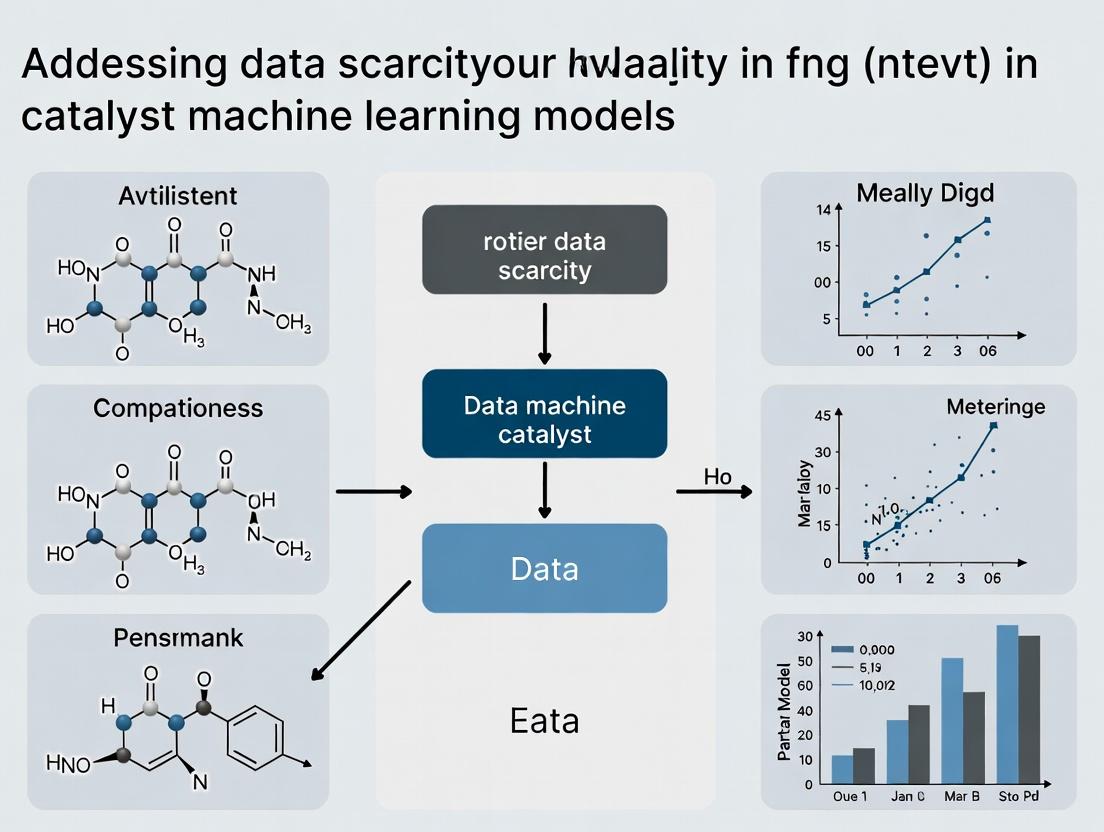

Beyond Small Data: Overcoming Data Scarcity in Catalysis with Advanced Machine Learning

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on addressing the critical challenge of data scarcity in catalytic machine learning.


Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on addressing the critical challenge of data scarcity in catalytic machine learning. We explore the fundamental causes and impacts of limited datasets in catalysis, detail innovative methodologies for data augmentation, generation, and transfer learning, address common pitfalls and optimization strategies, and present rigorous frameworks for model validation and performance comparison. The scope equips professionals with actionable knowledge to build robust, predictive models that accelerate catalyst discovery and optimization despite inherent data limitations.

The Data Desert in Catalysis: Understanding the Scarcity Problem

This technical support center provides troubleshooting guidance for researchers working with machine learning (ML) in catalysis, a field characterized by inherent data scarcity. The following FAQs and guides address common experimental and computational challenges within the broader context of building robust models with limited datasets.

Troubleshooting Guides & FAQs

FAQ 1: Why is high-throughput experimental data generation so limited in heterogeneous catalysis?

- Answer: The synthesis and characterization of catalyst libraries (e.g., mixed metal oxides, supported nanoparticles) are time- and resource-intensive. Key bottlenecks include:

- Synthesis Complexity: Reproducible, automated synthesis of solid-state materials with precise atomic-level control is non-trivial.

- Characterization Limits: In situ or operando techniques (like XAS, AP-XPS) required to understand active sites under reaction conditions are costly and have limited throughput.

- Reactivity Testing: Standardized, parallelized reactor systems for rigorous kinetic data (rates, activation energies) are complex to build and maintain.

FAQ 2: What are common pitfalls when cleaning catalytic datasets for ML training?

- Answer: The primary issues are inconsistent experimental conditions and missing metadata, which introduce noise.

- Issue: Inconsistent Reporting. Turnover frequency (TOF) is often calculated without a standardized active-site counting method (e.g., metallic surface area vs. total metal loading), so values from different sources are not directly comparable.

- Troubleshooting Guide:

- Audit Source Data: Flag entries lacking essential metadata (e.g., pretreatment protocol, exact temperature/pressure, time-on-stream data).

- Normalize Conditions: Create a standard conversion protocol to report all activity data (conversion, rate, TOF) at a common set of reference conditions (T, P, conversion level).

- Handle Outliers: Use domain knowledge (e.g., known thermodynamic limits, catalyst deactivation) to identify and annotate erroneous data points instead of automatic deletion.
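The "Audit Source Data" step can be sketched as a simple metadata check. This is a minimal illustration, not a prescribed schema: the field names in `REQUIRED_FIELDS` and the example entries are hypothetical placeholders.

```python
# Hypothetical metadata fields; adapt to your own data schema.
REQUIRED_FIELDS = ("pretreatment", "temperature_K", "pressure_bar", "time_on_stream_h")


def audit_entries(entries, required=REQUIRED_FIELDS):
    """Split entries into complete ones and a map of entry id -> missing fields."""
    complete, flagged = [], {}
    for entry in entries:
        # A field counts as missing if it is absent, None, or an empty string.
        missing = [f for f in required if entry.get(f) in (None, "")]
        if missing:
            flagged[entry["id"]] = missing  # annotate rather than delete
        else:
            complete.append(entry)
    return complete, flagged


# Illustrative entries only (not real measurements).
entries = [
    {"id": "cat-001", "pretreatment": "H2, 500 C, 1 h", "temperature_K": 523.0,
     "pressure_bar": 1.0, "time_on_stream_h": 2.0},
    {"id": "cat-002", "pretreatment": "", "temperature_K": 523.0,
     "pressure_bar": None, "time_on_stream_h": 2.0},
]
complete, flagged = audit_entries(entries)
print(flagged)
```

Flagged entries are kept and annotated rather than dropped, in line with the outlier-handling advice above.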

FAQ 3: My ML model performs well on validation split but fails to predict the activity of a new catalyst composition. Why?

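- Answer: This usually indicates overfitting combined with poor feature representation, or extrapolation beyond the training distribution. A random validation split drawn from the same narrow chemical space can look good even when the model has memorized training patterns instead of learning generalizable structure-property relationships.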

- Troubleshooting Guide:

- Feature Evaluation: Check if your descriptors (e.g., elemental properties, bulk crystal features) are relevant to the actual catalytic mechanism (e.g., surface adsorption energies).

- Apply Constraints: Use simpler models (e.g., ridge regression over deep neural networks) or integrate physical laws (e.g., scaling relations, kinetic equations) as regularization.

- Test Domain Shift: Ensure your new catalyst composition falls within the "chemical space" covered by your training data (use principal component analysis (PCA) on features to visualize).
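As a quick numerical complement to the PCA visualization, one can check how far a new candidate lies from the training data in each feature. The sketch below uses a per-feature z-score rather than PCA, which is a simpler swapped-in technique; the feature values and the threshold of 3 are illustrative assumptions.

```python
from statistics import mean, stdev


def max_abs_z(train_rows, candidate):
    """Largest per-feature |z-score| of a candidate relative to the training set."""
    z_scores = []
    for j, value in enumerate(candidate):
        column = [row[j] for row in train_rows]
        z_scores.append(abs(value - mean(column)) / stdev(column))
    return max(z_scores)


# Placeholder descriptors: [d-band center (eV), coordination number]
train = [[-2.1, 8.0], [-2.4, 9.0], [-1.9, 8.5], [-2.6, 9.5]]
inside = [-2.2, 8.8]    # resembles the training catalysts
outside = [-0.5, 6.0]   # far from anything in the training set

for name, candidate in (("inside", inside), ("outside", outside)):
    z = max_abs_z(train, candidate)
    status = "extrapolation risk" if z > 3.0 else "within training range"
    print(f"{name}: max |z| = {z:.2f} ({status})")
```

A large z-score on any feature suggests the model is being asked to extrapolate, so its prediction for that candidate deserves extra scrutiny.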


FAQ 4: How can I augment a small catalytic dataset effectively?

- Answer: Use domain-informed techniques, not random perturbation.

- Experimental Protocol for Leveraging Scaling Relations:

- Identify Key Descriptors: From literature or DFT calculations, establish a linear scaling relation between two adsorption energies (e.g., *CH3 and *OH) on a subset of relevant metal surfaces.

- Generate Virtual Data Points: Use the scaling relation equation (e.g., E_OH = a * E_CH3 + b) to calculate one adsorption energy from the other for new metal alloys, creating consistent, physically plausible descriptor pairs.

- Validate Sparingly: Use these generated features only as input for a model trained on real experimental activity data. The augmentation expands feature space without fabricating target output values.
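The scaling-relation protocol above can be sketched as a least-squares fit followed by descriptor generation. The adsorption energies below are placeholder numbers chosen for illustration, not real DFT results, and the alloy names are hypothetical.

```python
def fit_scaling_relation(e_x, e_y):
    """Least-squares fit of e_y = a * e_x + b; returns (a, b)."""
    n = len(e_x)
    mean_x, mean_y = sum(e_x) / n, sum(e_y) / n
    s_xx = sum((x - mean_x) ** 2 for x in e_x)
    s_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(e_x, e_y))
    a = s_xy / s_xx
    return a, mean_y - a * mean_x


# Placeholder adsorption energies (eV) on reference surfaces; NOT real DFT values.
e_ch3 = [-2.0, -1.5, -1.0, -0.5]
e_oh = [-1.2, -0.95, -0.7, -0.45]
a, b = fit_scaling_relation(e_ch3, e_oh)

# Virtual descriptor pairs for new alloys where only E_CH3 is available.
new_e_ch3 = {"alloy_A": -1.2, "alloy_B": -0.8}
virtual_pairs = {name: (e, a * e + b) for name, e in new_e_ch3.items()}

print(f"E_OH = {a:.2f} * E_CH3 + {b:.2f}")
print(virtual_pairs)
```

Only the input descriptors are generated this way; the target activities used for training still come from real measurements.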


Table 1: Representative Scale of Public Catalytic Data vs. Other ML Domains

| Domain | Typical Public Dataset Size | Key Data Type | Primary Scarcity Cause |

|---|---|---|---|

| Heterogeneous Catalysis | 10² - 10⁴ data points | Reaction yield, TOF, selectivity | High-cost experimentation, characterization limits |

| Computer Vision | 10⁵ - 10⁷ images | Pixel arrays, labels | (Not scarce) |

| Drug Discovery (Bioactivity) | 10⁴ - 10⁶ compounds | IC50, Ki values | Experimental cost lower than catalysis, but rising |

Table 2: Data Output from Standard Catalyst Characterization Techniques

| Technique | Data Type | Time per Sample | Key Limitation for ML |

|---|---|---|---|

| Bench-top Reactor | Conversion, Selectivity vs. Time | Hours to Days | Low throughput; measures bulk performance, not intrinsic activity. |

| X-ray Absorption Spectroscopy (XAS) | Local structure, oxidation state | 0.5-2 hours | Requires synchrotron; complex analysis to extract features. |

| Temperature-Programmed Reduction (TPR) | Reducibility profile | 1-3 hours | Qualitative; difficult to standardize across labs. |

Experimental Protocols

Protocol: Active Site Normalization for Turnover Frequency (TOF) Calculation

Objective: To standardize catalytic rate data for ML by reporting TOF based on quantified active sites.

Materials: Reduced catalyst sample, chemisorption apparatus, calibrated gas mixtures.

Method:

- Pretreatment: Reduce catalyst in flowing H₂ at specified temperature (e.g., 500°C) for 1 hour. Purge with inert gas (He, Ar).

- Chemisorption: Expose catalyst to a known pressure of probe molecule (e.g., CO for metals, N₂O for surface Cu) at room temperature.

- Quantification: Using the uptake of the probe molecule and an assumed stoichiometry (e.g., 1 CO:1 surface metal atom), calculate the number of surface active sites.

- TOF Calculation: Calculate TOF as (molecules reacted per second) / (number of active sites determined in Step 3). Crucial: Report the probe molecule and stoichiometry assumption alongside the TOF value.
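The quantification and TOF steps reduce to a short calculation. The sketch below assumes the probe uptake is reported in mol per gram of catalyst; the function names and all numerical inputs are illustrative.

```python
AVOGADRO = 6.02214076e23  # 1/mol


def surface_sites(uptake_mol_per_g, mass_g, metal_atoms_per_probe=1.0):
    """Number of surface sites from probe uptake and an assumed stoichiometry."""
    return uptake_mol_per_g * mass_g * metal_atoms_per_probe * AVOGADRO


def turnover_frequency(rate_mol_per_s, n_sites):
    """TOF (1/s) = molecules reacted per second / number of active sites."""
    return rate_mol_per_s * AVOGADRO / n_sites


# Illustrative inputs: 50 umol CO per gram, 50 mg catalyst, 1 CO : 1 surface atom.
n_sites = surface_sites(uptake_mol_per_g=50e-6, mass_g=0.050)
tof = turnover_frequency(rate_mol_per_s=2.5e-7, n_sites=n_sites)

# Store the assumptions next to the value so the TOF stays interpretable.
record = {"tof_per_s": tof, "probe": "CO", "stoichiometry": "1 CO : 1 surface metal atom"}
print(record)
```

Keeping the probe molecule and stoichiometry in the same record as the TOF makes it possible to re-normalize data later if a different site-counting convention is adopted.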

Visualizations

Title: Catalysis ML Data Generation Bottleneck Workflow

Title: Active Learning Loop for Small Data in Catalysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Catalyst Synthesis & Testing

| Item | Function | Key Consideration for Data Quality |

|---|---|---|

| Precursor Salts (e.g., Metal Nitrates, Chlorides) | Source of active metal components for catalyst synthesis. | Purity (>99%) and consistent anion content are critical for reproducibility. |

| High-Surface-Area Support (e.g., γ-Al₂O₃, SiO₂, TiO₂) | Provides a stable, dispersing medium for active phases. | Batch-to-batch variation in porosity and surface chemistry must be characterized. |

| Standard Gas Mixtures (e.g., 5% H₂/Ar, 1% CO/He) | Used for catalyst pretreatment, chemisorption, and reactivity tests. | Certified calibrations from suppliers are essential for quantitative measurements. |

| Probe Molecules (e.g., CO, NH₃, N₂O) | Used in chemisorption to count active sites or in TPD to measure acid/base strength. | Must be ultra-high purity. Stoichiometry of adsorption must be assumed/validated. |

| Fixed-Bed Microreactor (Quartz/Stainless Steel) | Bench-scale system for testing catalyst activity and stability under flow. | Must ensure ideal plug-flow conditions (minimize wall effects, channeling). |

Troubleshooting Guides & FAQs for Catalyst Discovery

Frequently Asked Questions

Q1: Our high-throughput screening (HTS) for catalyst candidates is yielding inconsistent activity data between batches. What are the primary root causes? A: Inconsistent HTS data often stems from (1) catalyst precursor decomposition under screening conditions, (2) subtle variations in impurity profiles in solvents or gases between batches, and (3) reactor fouling or clogging in parallelized systems. To mitigate, implement a standardized pre-screening catalyst conditioning protocol and use internal standards in each reactor well. Quantitative data on common failure points is summarized below.

Table 1: Common Sources of HTS Data Variance

| Source of Variance | Typical Impact on Activity Measurement | Mitigation Strategy |

|---|---|---|

| Precursor Stability | Up to ±300% turnover number (TON) variation | Pre-reduce/activate all candidates prior to main screen. |

| Solvent/O₂ Impurity | Can suppress activity by 50-90% for sensitive catalysts | Use on-column purification for all reagents; employ oxygen/moisture sensors. |

| Microreactor Clogging | Leads to false negatives (0 activity) for 5-15% of array. | Incorporate periodic back-flush cycles and use wider bore fluidics. |

Q2: Density Functional Theory (DFT) calculations for transition states are prohibitively expensive for our large candidate libraries. How can we reduce costs? A: The computational cost of DFT scales approximately with the cube of the electron count. Employ a tiered screening approach:

- Use semi-empirical methods (e.g., GFN-xTB) or small-basis-set DFT (e.g., B3LYP/3-21G) for initial geometry optimization.

- Apply machine-learned surrogates (e.g., graph neural networks) trained on simpler electronic features to predict high-level DFT energies.

- Reserve high-accuracy methods (e.g., hybrid DFT or the coupled-cluster method DLPNO-CCSD(T)/def2-TZVP) only for the final <1% of promising candidates. The protocol below details this workflow.

Experimental Protocol: Tiered Computational Screening for Catalysts

- Objective: To identify catalyst candidates with high predicted activity at ~10% of the computational cost of full DFT screening.

- Step 1 - Initial Filter: Optimize all structures in the candidate library using the GFN-xTB semi-empirical method. Filter out candidates with unstable geometries or extreme orbital energies.

- Step 2 - Surrogate Model Prediction: Compute cheap, informative descriptors (e.g., Hirshfeld charges, Mendeleev numbers, orbital occupation) for the filtered set. Input these into a pre-trained graph neural network model (e.g., SchNet, MEGNet) to predict the target property (e.g., adsorption energy, activation barrier).

- Step 3 - High-Fidelity Validation: Select the top 50-100 candidates from the surrogate ranking. Perform single-point energy calculations using high-level DFT (e.g., ωB97X-D/def2-SVP) on the GFN-xTB geometries. For the final top 10, perform full transition-state search and frequency calculation at this high level.

- Key Validation: Correlate surrogate predictions with high-level DFT results for a held-out test set (target R² > 0.85).
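The key validation step amounts to computing the coefficient of determination between surrogate predictions and high-level reference values on a held-out set. A minimal sketch follows; the energy values are placeholders, not computed results.

```python
def r_squared(reference, predicted):
    """Coefficient of determination of predictions against reference values."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return 1.0 - ss_res / ss_tot


# Placeholder activation barriers (eV) for a held-out test set.
dft_barriers = [0.50, 0.80, 1.10, 1.40, 0.65]
surrogate_barriers = [0.55, 0.75, 1.15, 1.30, 0.70]

r2 = r_squared(dft_barriers, surrogate_barriers)
print(f"R^2 = {r2:.3f}; surrogate accepted: {r2 > 0.85}")
```

With only a handful of held-out points R² is itself noisy, so it is worth reporting alongside the mean absolute error in eV.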

Q3: How can we generate reliable data for catalyst machine learning when both HTS and DFT are limited? A: Focus on creating small, high-quality "seed" datasets. Use targeted HTS informed by descriptor-based clustering to maximize diversity, not size. Augment with transfer learning from larger, related computational datasets (e.g., metal-organic framework properties). Employ active learning loops where the ML model suggests the next most informative experiment to run, optimizing the data generation process.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Integrated Computational/Experimental Catalysis Research

| Item | Function | Example Product/Chemical |

|---|---|---|

| High-Purity, Deoxygenated Solvents | Eliminates catalyst poisoning by peroxides and O₂, ensuring reproducible HTS. | Sigma-Aldrich Sure/Seal anhydrous solvents (THF, toluene). |

| Solid-Dose Catalyst Precursor Libraries | Enables precise, parallel dispensing of microgram quantities for HTS arrays. | Arryx Precious Metal Catalyst Libraries on 96-well plates. |

| Microplate-Scale Gas-Liquid Reactors | Allows parallel testing of catalytic reactions under pressurized conditions. | Unchained Labs Little Brain or HEL CAT Series. |

| DFT-Calculated Descriptor Databases | Provides pre-computed electronic/geometric features for ML model training, reducing computational overhead. | The Materials Project API, CatApp, or NOMAD repository. |

| Automated Workflow Software | Manages the pipeline from DFT job submission to result parsing and descriptor extraction. | Atomate, AiiDA, or ASE workflows. |

Visualizations

Diagram 1: Active Learning Loop for Catalyst ML

Diagram 2: Tiered DFT Screening Workflow

Technical Support & Troubleshooting Center

FAQ 1: My model achieves near-perfect accuracy on the training set but fails on the validation/hold-out test set. What specific steps should I take to diagnose and address overfitting in a data-scarce drug discovery context?

Answer: This is a classic sign of overfitting, a critical risk in data-scarce research. Follow this diagnostic and mitigation protocol:

Diagnose: Calculate and compare key metrics on training vs. validation splits.

Table 1: Key Metric Comparison for Overfitting Diagnosis

| Metric | Training Set | Validation Set | Indicator of Overfitting |

|---|---|---|---|

| Accuracy/Loss | 0.98 / 0.05 | 0.65 / 1.20 | Large performance gap |

| AUC-ROC | 0.995 | 0.71 | Significant drop in discrimination |

| Per-Class Precision/Recall | Consistently High | Highly Variable | Model fails on specific chemistries |

Mitigation Protocol for Sparse Data:

- Implement Rigorous Regularization: Apply L1/L2 regularization with a systematic grid search for the lambda parameter. For neural networks, add Dropout layers (start with 0.2-0.5 rate).

- Employ Data Augmentation: For molecular data, use validated SMILES enumeration or realistic in-silico molecular perturbations (e.g., adding small functional groups, ring variations) that preserve likely bioactivity.

- Switch to Simpler Models: Start with Random Forest or Gradient Boosting, which can generalize better on small data than deep neural networks.

- Utilize Transfer Learning: Leverage pre-trained models on large chemical corpora (e.g., PubChem, ChEMBL) and fine-tune the top layers on your proprietary small dataset.

FAQ 2: How can I formally measure and improve model generalization, particularly when I only have one small dataset for a novel target?

Answer: Improving generalization with a single small dataset requires robust evaluation and specialized techniques.

Measurement Protocol:

- Use Nested Cross-Validation: This is the gold standard for small datasets. An outer loop estimates generalization error, and an inner loop performs hyperparameter tuning, preventing data leakage and optimistic bias.

- Report Confidence Intervals: Use bootstrapping (e.g., 1000 iterations) on your test set predictions to report performance metrics with 95% confidence intervals (e.g., AUC = 0.75 ± 0.08).

- External Validation: If possible, use a temporal or orthogonal assay-based hold-out set that was never used during any training/validation cycle.
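The confidence-interval step can be sketched with a percentile bootstrap. For simplicity this example uses accuracy rather than AUC (AUC is undefined for resamples that contain a single class and would need extra handling); the labels and predictions are synthetic.

```python
import random


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def bootstrap_ci(y_true, y_pred, metric, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for a test-set metric."""
    rng = random.Random(seed)
    n = len(y_true)
    stats = []
    for _ in range(n_boot):
        # Resample test-set indices with replacement.
        idx = [rng.randrange(n) for _ in range(n)]
        stats.append(metric([y_true[i] for i in idx], [y_pred[i] for i in idx]))
    stats.sort()
    lower = stats[int((alpha / 2) * n_boot)]
    upper = stats[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Synthetic test set: 40 labels, with every fifth prediction wrong.
y_true = [1, 0] * 20
y_pred = [1 - t if i % 5 == 0 else t for i, t in enumerate(y_true)]

point = accuracy(y_true, y_pred)
lower, upper = bootstrap_ci(y_true, y_pred, accuracy)
print(f"accuracy = {point:.2f}, 95% CI = [{lower:.2f}, {upper:.2f}]")
```

On a test set this small the interval is wide, which is exactly the information a single point estimate hides.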

Improvement Protocol (Generalization-Focused):

- Integrate Domain Knowledge: Use feature engineering informed by pharmacology (e.g., Lipinski's rules, molecular fingerprints like ECFP6, physicochemical descriptors).

- Apply Ensemble Methods: Combine predictions from multiple models trained with different algorithms or data subsamples (bagging) to reduce variance.

- Adopt Bayesian Neural Networks (BNNs): BNNs provide a principled framework for uncertainty estimation, which directly informs generalization capability.

FAQ 3: What are the best practices for quantifying and reporting predictive uncertainty in early-stage hit identification models, and how should this uncertainty guide experimental prioritization?

Answer: Quantifying uncertainty is essential for prioritizing costly wet-lab experiments.

Quantification Practices:

- For Standard Models: Use ensemble-based methods. Train 10-50 models with different seeds or data bootstraps. The mean prediction is the final score; the standard deviation or variance is the epistemic (model) uncertainty.

- For Probabilistic Models: Use models that natively output variance (e.g., Gaussian Processes, Bayesian Models). The predictive variance combines aleatoric (data noise) and epistemic uncertainty.

Table 2: Uncertainty Quantification Methods

| Method | Model Type | Uncertainty Output | Computational Cost |

|---|---|---|---|

| Deep Ensembles | Deep Neural Networks | Predictive Variance | High |

| Monte Carlo Dropout | Neural Networks with Dropout | Predictive Variance | Medium |

| Gaussian Process | Kernel-Based Models | Predictive Variance & Confidence Intervals | High (for large N) |

| Conformal Prediction | Any | Prediction Sets with Guaranteed Coverage | Low |

Experimental Prioritization Workflow: Prioritize compounds with high predicted activity but moderate predictive uncertainty for immediate testing. Compounds with high uncertainty (regardless of prediction) are candidates for active learning cycles to improve the model.

Experimental Protocols

Protocol 1: Nested Cross-Validation for Reliable Performance Estimation (Small Dataset)

- Define your small dataset (e.g., 150 compounds with activity labels).

- Outer Loop (Generalization Error): Split data into 5 outer folds. For each fold: a. Hold out one fold as the test set. b. Use the remaining 4 folds for the Inner Loop.

- Inner Loop (Model Selection/Tuning): On the 4-fold data, perform a 3-fold cross-validation grid search over predefined hyperparameters (e.g., learning rate, regularization strength).

- Train a new model on all 4 folds using the best inner-loop hyperparameters.

- Evaluate this model on the held-out outer test fold (from step 2a).

- Repeat for all 5 outer folds. The average performance across all 5 outer test folds is your final, nearly unbiased generalization estimate.
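The structure of Protocol 1 can be sketched in plain Python. To keep the example self-contained it tunes a single regularization strength for a one-feature ridge model without intercept; the model, the synthetic data, and the candidate `lambdas` are illustrative assumptions, and in practice a library implementation would normally be used.

```python
import random


def k_folds(indices, k):
    """Split a list of indices into k roughly equal folds."""
    return [indices[i::k] for i in range(k)]


def fit_ridge_1d(xs, ys, lam):
    """Closed-form ridge slope for y ~ w * x (no intercept)."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)


def mse(w, xs, ys):
    """Mean squared error of the one-parameter model."""
    return sum((y - w * x) ** 2 for x, y in zip(xs, ys)) / len(xs)


def take(values, idx):
    """Select the entries of values at the given indices."""
    return [values[i] for i in idx]


def nested_cv(xs, ys, lambdas, outer_k=5, inner_k=3, seed=0):
    idx = list(range(len(xs)))
    random.Random(seed).shuffle(idx)
    outer_scores = []
    for test_idx in k_folds(idx, outer_k):           # outer loop: generalization
        held_out = set(test_idx)
        train_idx = [i for i in idx if i not in held_out]

        def inner_score(lam):                         # inner loop: tuning
            errors = []
            for val_idx in k_folds(train_idx, inner_k):
                val = set(val_idx)
                fit_idx = [i for i in train_idx if i not in val]
                w = fit_ridge_1d(take(xs, fit_idx), take(ys, fit_idx), lam)
                errors.append(mse(w, take(xs, val_idx), take(ys, val_idx)))
            return sum(errors) / len(errors)

        best_lam = min(lambdas, key=inner_score)
        # Refit on all outer-training data, then score on the untouched test fold.
        w = fit_ridge_1d(take(xs, train_idx), take(ys, train_idx), best_lam)
        outer_scores.append(mse(w, take(xs, test_idx), take(ys, test_idx)))
    return sum(outer_scores) / len(outer_scores), outer_scores


# Synthetic data: y = 2x plus Gaussian noise.
rng = random.Random(1)
xs = [rng.uniform(-1, 1) for _ in range(30)]
ys = [2 * x + rng.gauss(0, 0.1) for x in xs]
mean_mse, fold_mse = nested_cv(xs, ys, lambdas=[0.01, 0.1, 1.0])
print(f"nested-CV MSE = {mean_mse:.4f}")
```

The test fold of each outer split never influences the choice of `best_lam`, which is what keeps the final estimate free of tuning bias.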

Protocol 2: Implementing an Ensemble for Uncertainty Estimation

- Prepare your training data (D).

- Generate B bootstrap samples (D₁, D₂, ..., D_B) by random sampling with replacement (typically B = 10-50).

- Train independent model instances (M₁, M₂, ..., M_B), one on each bootstrap sample. Use different random seeds for each.

- For a new input x, obtain predictions (ŷ₁, ŷ₂, ..., ŷ_B) from all B models.

- Calculate the ensemble mean as the final prediction: μ = (1/B) * Σ ŷ_i.

- Calculate the predictive variance (epistemic uncertainty): σ² = (1/(B-1)) * Σ (ŷ_i - μ)².
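Protocol 2 can be sketched with a bootstrap ensemble of simple linear fits. The base model (a straight-line fit) and the data points are illustrative assumptions; any regressor could take its place.

```python
import random
from statistics import mean, variance


def fit_line(points):
    """Least-squares slope and intercept for a list of (x, y) points."""
    xs, ys = zip(*points)
    mx, my = mean(xs), mean(ys)
    s_xx = sum((x - mx) ** 2 for x in xs)
    s_xy = sum((x - mx) * (y - my) for x, y in points)
    a = s_xy / s_xx
    return a, my - a * mx


def bootstrap_ensemble(data, n_models=20, seed=0):
    """Train one model per bootstrap sample D_1 ... D_B."""
    rng = random.Random(seed)
    models = []
    while len(models) < n_models:
        sample = [rng.choice(data) for _ in data]  # sampling with replacement
        if len({x for x, _ in sample}) < 2:
            continue  # degenerate sample (single x value): draw again
        models.append(fit_line(sample))
    return models


def predict_with_uncertainty(models, x):
    """Ensemble mean and sample variance (1/(B-1) normalization)."""
    preds = [a * x + b for a, b in models]
    return mean(preds), variance(preds)


# Placeholder data scattered around y = 1.5 x + 0.5.
data = list(zip(range(8), [0.4, 2.1, 3.4, 5.2, 6.3, 8.1, 9.6, 10.9]))
models = bootstrap_ensemble(data)
mu_near, var_near = predict_with_uncertainty(models, 3.5)   # inside the data range
mu_far, var_far = predict_with_uncertainty(models, 20.0)    # extrapolation
print(f"x=3.5: {mu_near:.2f} (var {var_near:.4f}); x=20: {mu_far:.2f} (var {var_far:.4f})")
```

The variance grows for inputs far from the training range, which is the signal used to flag low-confidence predictions.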

Visualizations

Model Development & Validation Workflow for Small Data

Ensemble-Based Prediction & Uncertainty-Guided Prioritization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Tools for Data-Scarce ML in Drug Discovery

| Item/Resource | Function & Relevance to Small Data |

|---|---|

| Pre-trained Chemical Language Models (e.g., ChemBERTa, GROVER) | Provide rich, contextual molecular representations that can be fine-tuned with minimal task-specific data, improving generalization. |

| Public Bioactivity Databases (ChEMBL, PubChem, BindingDB) | Source for transfer learning pre-training and for generating auxiliary training tasks (e.g., multi-task learning) to combat overfitting. |

| Conformal Prediction Library (e.g., nonconformist in Python) | Provides a framework for generating prediction sets with guaranteed coverage, making uncertainty actionable for researchers. |

| Bayesian Optimization Tool (e.g., scikit-optimize, Ax) | Efficiently navigates hyperparameter space with fewer trials, crucial when model training is expensive and data is limited. |

| RDKit | Enables cheminformatic feature engineering (descriptors, fingerprints) and realistic molecular augmentation (SMILES manipulation, stereo-isomer generation) to expand training data. |

| Active Learning Framework (e.g., modAL, LibAct) | Systematically selects the most informative compounds for experimental testing, optimizing the use of limited assay budgets to reduce uncertainty. |

Technical Support Center: Troubleshooting Catalyst Data Generation & Machine Learning Integration

This support center addresses common experimental and computational challenges faced when generating key catalytic data types for machine learning model training, within the context of overcoming data scarcity in catalyst research.

FAQs & Troubleshooting Guides

Q1: During Density Functional Theory (DFT) calculation of a reaction energy profile, my geometry optimization for an adsorbed intermediate fails to converge. What are the primary troubleshooting steps?

A: This is often related to the complexity of the potential energy surface.

- Check Initial Geometry: Ensure your initial guess for the adsorbate on the catalyst surface is physically reasonable. Use known bond lengths and angles from literature.

- Relax Constraints: If you initially fixed several substrate layers, try relaxing the top 1-2 layers of the catalyst to allow for surface reconstruction.

- Modify Convergence Parameters: Gradually increase the maximum number of optimization steps (e.g., from 100 to 500; NSW in VASP, MaxCycles in Gaussian). Adjust the step size (POTIM in VASP, the MaxStep option of Opt in Gaussian): a slightly larger step can help cross small barriers, while a smaller step helps when the optimizer overshoots.

- Change Optimizer: Switch from a quasi-Newton (e.g., BFGS) to a damped molecular dynamics (e.g., Damped MD in VASP) or conjugate gradient algorithm.

- Verify Functional & Basis Set: For the specific metal/adsorbate system, confirm that your chosen DFT functional (e.g., RPBE for adsorption) and basis set/pseudopotential are appropriate and do not have known instabilities.

Q2: My microkinetic model, built from calculated reaction barriers and energies, predicts reaction rates that are orders of magnitude off from experimental measurements. What descriptors should I re-examine?

A: Systematically audit your input data and model assumptions.

- Descriptor Sensitivity Analysis: Use your model to perform a local sensitivity analysis on key input descriptors. The table below ranks common descriptors by typical sensitivity for a simple A→B reaction.

| Descriptor (Data Type) | Typical Uncertainty Impact on Rate (log scale) | Primary Source of Error |

|---|---|---|

| Rate-Determining Step Barrier (eV) | High (≈ linear) | DFT functional error, missing configurational sampling |

| Pre-exponential Factor (s⁻¹) | Medium (≈ linear) | Harmonic transition state theory approximation |

| Adsorption Energy of Key Intermediate (eV) | High (non-linear) | DFT error, coverage effects, solvent/field effects |

| Surface Site Density (sites/cm²) | Low (≈ linear) | Uncertainty in catalyst dispersion |

- Protocol for Descriptor Re-evaluation:

- Barriers: Re-calculate the transition state using a nudged elastic band (NEB) or dimer method with a tighter force convergence criterion (< 0.01 eV/Å).

- Energies: Check for systematic error cancellation by calculating a known benchmark reaction (e.g., water-gas shift on a reference metal).

- Coverage Effects: Perform a single-point calculation on your optimized adsorbed structures with a representative co-adsorbate present to test stability.
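To see why the rate-determining barrier dominates the sensitivity table, it helps to work out how a barrier error propagates through an Arrhenius-type rate constant, k ∝ exp(-Ea / (kB T)). The sketch below assumes a simple Arrhenius form with an unchanged prefactor; the 0.2 eV error is an illustrative magnitude.

```python
import math

K_B_EV = 8.617333262e-5  # Boltzmann constant in eV/K


def rate_error_factor(barrier_error_ev, temperature_k):
    """Multiplicative error in the rate constant caused by a barrier error."""
    return math.exp(barrier_error_ev / (K_B_EV * temperature_k))


for temperature in (300.0, 500.0):
    factor = rate_error_factor(0.2, temperature)
    print(f"T = {temperature:.0f} K: a 0.2 eV barrier error changes the rate "
          f"by a factor of {factor:.0f} ({math.log10(factor):.1f} orders of magnitude)")
```

At 500 K a 0.2 eV error already shifts the rate by roughly two orders of magnitude, so barrier errors of this size alone can account for large model-experiment discrepancies.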

Q3: When generating feature descriptors for a solid catalyst, which structural and electronic features are non-negotiable for basic predictive models, and how can I compute them efficiently?

A: For a minimal viable descriptor set, focus on properties accessible via routine DFT. The following protocol outlines a streamlined calculation workflow.

Diagram Title: Workflow for Core Catalyst Descriptor Calculation

Protocol: Core Descriptor Generation

- System: Perform a full geometry optimization of your catalytic system (e.g., slab, cluster) using a GGA-PBE functional.

- Electronic Density: Run a single, static calculation on the optimized structure with a higher accuracy cutoff and k-point grid.

- Descriptor Extraction:

- d-Band Center: From the PDOS of the relevant metal atoms, calculate the first moment of the d-band projected DOS.

- Charge Transfer: Use the Bader AIM method (e.g., the bader code) on the charge density file to get atomic charges.

- Geometric: Calculate the average nearest-neighbor distance (from the POSCAR/CONTCAR) and the coordination numbers of active sites.

- Output: Compile into a vector: [d-band_center (eV), Bader_charge_active_site (|e|), Avg_site_distance (Å), Coordination_number].
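The d-band center is the first moment of the d-projected DOS, i.e. the DOS-weighted mean energy, usually with energies referenced to the Fermi level. A minimal sketch using trapezoidal integration follows; the PDOS arrays and the other descriptor values are synthetic placeholders, and parsing of real DFT output is not shown.

```python
def d_band_center(energies, pdos):
    """First moment of the d-projected DOS (energies in eV relative to E_F)."""
    numerator = denominator = 0.0
    for i in range(len(energies) - 1):
        de = energies[i + 1] - energies[i]
        # Trapezoidal rule for the integrals of E * rho(E) and rho(E).
        numerator += 0.5 * (energies[i] * pdos[i] + energies[i + 1] * pdos[i + 1]) * de
        denominator += 0.5 * (pdos[i] + pdos[i + 1]) * de
    return numerator / denominator


# Synthetic triangular d-band centered at -2 eV (placeholder, not real PDOS).
energies = [-4.0, -3.0, -2.0, -1.0, 0.0]
pdos = [0.0, 1.0, 2.0, 1.0, 0.0]

descriptor = [
    d_band_center(energies, pdos),  # d-band center (eV)
    0.15,                           # Bader charge of active site (|e|), placeholder
    2.77,                           # average site distance (Angstrom), placeholder
    9,                              # coordination number, placeholder
]
print(descriptor)
```

Whether the integration runs over the full d-band or only the occupied states is a convention that should be fixed once and applied to every catalyst in the dataset.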

Q4: I have sparse experimental data for a catalytic series (e.g., turnover frequency for 5 catalysts). How can I strategically select the next catalysts to test to maximize information gain for my ML model?

A: Use an active learning loop to bridge computational and experimental data.

Diagram Title: Active Learning Loop for Targeted Experimentation

Protocol: Active Learning for Targeted Synthesis

- Initial Model: Train a simple model (Gaussian Process Regression) on your existing 5 data points, using computed descriptors.

- Predict & Score: Use the model to predict the target property (e.g., log(TOF)) for a large virtual library of candidate catalysts. The model will provide a mean prediction and a standard deviation (uncertainty) for each.

- Acquisition: Rank candidates by the largest uncertainty (uncertainty sampling) or by an improvement-based criterion such as probability of improvement or expected improvement.

- Synthesis Priority: Select the top 1-2 catalysts ranked by the acquisition function for experimental synthesis and testing.

- Iterate: Add the new experimental results to your training set and retrain the model. Repeat until performance plateaus or resource budget is spent.
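The acquisition step can be sketched once a model has supplied a mean and standard deviation per candidate. The example below compares uncertainty sampling with expected improvement for a maximization target; the candidate names and the (mean, std) values are hypothetical stand-ins for Gaussian process outputs.

```python
import math


def expected_improvement(mu, sigma, best, xi=0.0):
    """Expected improvement over the best observed value (maximization)."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mu - best - xi) * cdf + sigma * pdf


# Hypothetical predictions of log(TOF): candidate -> (mean, standard deviation).
predictions = {"cand_A": (0.4, 0.05), "cand_B": (0.3, 0.6), "cand_C": (0.9, 0.1)}
best_observed = 0.5  # best log(TOF) measured so far (placeholder)

by_uncertainty = sorted(predictions, key=lambda c: predictions[c][1], reverse=True)
by_ei = sorted(
    predictions,
    key=lambda c: expected_improvement(*predictions[c], best_observed),
    reverse=True,
)
print("uncertainty sampling:", by_uncertainty)
print("expected improvement:", by_ei)
```

The two criteria can disagree: uncertainty sampling favors the least-known candidate (exploration), while expected improvement also rewards a high predicted mean (exploitation).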

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function in Catalysis Data Generation | Example Product / Specification |

|---|---|---|

| Standard Redox Catalysts | Experimental benchmarking of activity (TOF, TON) to calibrate computational models. | Ferrocene/Ferrocenium (for electrochemical); [Ru(bpy)₃]²⁺ (for photoredox). |

| Reference Catalysts (Heterogeneous) | Provides standardized data points (e.g., Pt/C for HER, Au/TiO2 for CO oxidation) for model validation. | 20 wt% Pt on Vulcan XC-72R (Fuel Cell Store); 1 wt% Au on TiO2 (P25) (Sigma-Aldrich). |

| Calibration Gas Mixtures | Essential for accurate measurement of reaction rates and selectivity in flow reactors. | CO/Ar, H2/Ar, CO2/H2/Ar at various concentrations (e.g., 1%, 5%, 10%) for GC calibration. |

| Computational Catalyst Libraries | Pre-optimized structures for high-throughput descriptor calculation, mitigating data scarcity. | Catalysis-Hub.org surfaces; Materials Project slabs; OCP database of relaxed adsorbates. |

| Descriptor Calculation Software | Automates extraction of consistent feature sets from DFT output for ML readiness. | CatLearn; Dragon (for molecular); pymatgen.analysis.local_env. |

| Active Learning Platforms | Integrates data, ML models, and acquisition functions to guide next experiment. | ChemML; MAST-ML; scikit-learn with custom acquisition scripts. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our catalyst dataset is small (<100 entries), leading to poor model generalizability. What is the most cost-effective strategy to augment it? A: Implement a multi-fidelity data acquisition strategy. Prioritize generating low-cost, lower-accuracy computational (DFT) data to pre-train your model, then fine-tune with a smaller set of high-accuracy experimental data. Use active learning loops to identify which high-fidelity experiments will most reduce model uncertainty.

Q2: During model validation, performance plummets on unseen catalyst classes (e.g., moving from oxides to sulfides). What does this indicate about our dataset? A: This indicates a high domain gap and insufficient coverage of the catalyst chemical space in your training data. Your dataset likely suffers from "selection bias." The solution is not simply more data, but more diverse data across relevant descriptors (e.g., elemental composition, coordination number, bonding environment).

Q3: We are encountering inconsistent experimental measurements for the same catalyst (e.g., turnover frequency varies between labs). How should we clean this data? A: Inconsistent data is a major source of noise. Implement a protocol to establish a "gold-standard" reference for key metrics.

- Step 1: For your target reaction, identify a well-known benchmark catalyst (e.g., Pt/C for HER).

- Step 2: Re-measure its performance in your lab using the standardized protocol below.

- Step 3: Calibrate all incoming external data by normalizing it against your measured benchmark value, applying a correction factor if necessary. Flag and investigate outliers that deviate beyond a set threshold (e.g., > 1 order of magnitude).

Q4: What are the critical metadata fields we must capture for each catalyst data entry to ensure it is usable for ML? A: Beyond core performance metrics (activity, selectivity, stability), essential metadata includes:

- Synthesis: Precursors, method, temperature, time, atmosphere.

- Characterization: Surface area (BET), particle size (TEM/XRD), oxidation state (XPS), crystallographic phase.

- Testing Conditions: Reactant partial pressures, temperature, flow rate, conversion level (to avoid mass-transfer effects), electrode potential (for electrocatalysts).

- Post-mortem Analysis: Any characterization repeated after testing.

Standardized Experimental Protocol: Benchmark Catalyst Performance Validation

Objective: To obtain consistent, reproducible activity data (Turnover Frequency - TOF) for a heterogeneous catalyst.

Materials:

- Continuous-flow fixed-bed reactor system with mass flow controllers.

- Online Gas Chromatograph (GC) or Mass Spectrometer (MS).

- Benchmark catalyst (e.g., 5 wt% Pt on carbon for hydrogenation).

- High-purity reactant gases.

Methodology:

- Catalyst Pretreatment: Load 50 mg of catalyst (sieved to 250-355 µm). Activate in-situ under 5% H₂/Ar at 400°C for 2 hours (ramp: 5°C/min).

- Reaction Condition Stabilization: Cool to reaction temperature (e.g., 150°C) under inert flow. Introduce reactant mixture (e.g., 5% CO, 10% H₂, balance Ar) at a total flow of 50 mL/min.

- Steady-State Measurement: Allow system to stabilize for 1 hour. Take at least three consecutive gas samples via the online GC at 15-minute intervals.

- Data Recording: Record conversion, selectivity, and effluent flow rate. Calculate TOF using the formula: TOF = (Moles of product formed per second) / (Total moles of active sites). Note: Active site quantification (e.g., via H₂ chemisorption) must be performed on a separate, identically prepared sample.

- Reproducibility Check: Repeat steps 1-4 (including pretreatment) with a fresh catalyst sample from the same batch. The TOF values should agree within ±15%.
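The data-recording and reproducibility steps can be sketched as follows. The example assumes that flow rates are given in standard mL/min (ideal gas at 273.15 K and 1 atm), that one product molecule forms per reactant molecule converted, and that the ±15% criterion is evaluated relative to the mean of the two runs; all three are assumptions to adapt to your setup, and the numbers are illustrative.

```python
MOLAR_VOLUME_ML = 22414.0  # mL/mol, ideal gas at 273.15 K and 1 atm (assumed basis)


def conversion_rate(total_flow_ml_min, reactant_fraction, conversion):
    """Moles of reactant converted per second in a flow reactor."""
    molar_flow = total_flow_ml_min / 60.0 / MOLAR_VOLUME_ML  # total mol/s
    return molar_flow * reactant_fraction * conversion


def tof_from_flow(total_flow_ml_min, reactant_fraction, conversion, site_moles):
    """TOF (1/s) = moles converted per second / moles of active sites."""
    return conversion_rate(total_flow_ml_min, reactant_fraction, conversion) / site_moles


def within_tolerance(tof_a, tof_b, tolerance=0.15):
    """True if two TOF values differ by at most `tolerance` of their mean."""
    return abs(tof_a - tof_b) / (0.5 * (tof_a + tof_b)) <= tolerance


# Illustrative run: 50 mL/min total flow, 5% CO, 10% conversion, 2.5 umol sites.
tof_run1 = tof_from_flow(50.0, 0.05, 0.10, site_moles=2.5e-6)
tof_run2 = tof_from_flow(50.0, 0.05, 0.11, site_moles=2.5e-6)  # repeat measurement
print(f"TOF run 1 = {tof_run1:.4f} 1/s, run 2 = {tof_run2:.4f} 1/s, "
      f"reproducible: {within_tolerance(tof_run1, tof_run2)}")
```

If the reproducibility check fails, the likely causes (pretreatment drift, bed channeling, GC calibration) should be investigated before the data point enters a training set.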

Data on Catalyst Dataset Curation Costs

Table 1: Comparative Cost and Time for Data Generation Methods

| Data Generation Method | Approx. Cost per Data Point (USD) | Time per Data Point | Key Fidelity Limitation | Best Use Case |

|---|---|---|---|---|

| High-Throughput Experimentation (HTE) | 500 - 2,000 | 1-4 hours | Limited characterization depth; may overlook stability. | Initial screening of broad composition spaces. |

| Traditional Lab-Scale Experiment | 2,000 - 10,000+ | 1-3 days | Human throughput; consistency between researchers. | Deep mechanistic studies & model validation points. |

| Density Functional Theory (DFT) Calculation | 50 - 500 (Cloud compute) | Hours-Days (Compute time) | Functional choice error; neglects dynamics/solvent effects. | Generating features (descriptors) and pre-training models. |

| Literature/DB Extraction (Manual) | 100 - 500 (Researcher time) | 1-2 hours per paper | Inconsistent reporting; missing metadata. | Building initial foundational datasets. |

| Automated Text Mining | 10 - 50 (Compute) | Minutes (per paper) | Interpretation errors; cannot extract unreported data. | Large-scale data collection from published literature. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Catalyst Data Generation

| Item | Function & Rationale |

|---|---|

| Standardized Catalyst Library (e.g., from a commercial supplier) | Provides a consistent, reproducible baseline for comparing results across different experiments and labs, reducing synthesis variability noise. |

| Certified Reference Gas Mixtures | Ensures reactant stream composition is precise and reproducible, a critical factor in catalytic activity measurements. |

| Internal Standard for GC/MS Calibration | A known quantity of an inert gas (e.g., Ar) or compound added to the product stream to enable accurate quantification of reaction products. |

| Porous Ceramic Ballast (SiC, Al₂O₃) | Used to dilute catalyst beds in fixed-bed reactors, ensuring isothermal conditions and preventing hot spots. |

| In-situ Cell for X-ray Absorption Spectroscopy (XAS) | Allows for characterization of catalyst oxidation state and local structure under operating conditions, providing high-value mechanistic data. |

Visualizations

Diagram 1: ML for Catalyst Development Workflow

Diagram 2: Data Scarcity Mitigation Pathways

Bridging the Data Gap: Modern ML Techniques for Sparse Catalysis Data

Troubleshooting Guides & FAQs

Q1: My model's performance degrades after applying geometric transformations (rotation, scaling) to my microscopy cell images. It fails to recognize the same cell phenotype in different orientations. What is wrong?

A: This indicates that your model may not have learned the physical invariance you intended to instill. Common issues and solutions:

- Insufficient Variation in Training Data: The transformations may be too extreme or not varied enough. Use a controlled range (e.g., rotation: -15° to +15°) that reflects real-world biological variation.

- Loss of Critical Information: Scaling or cropping may remove key structural features. Implement center-preserving crops and validate that post-transformation images retain annotatable features.

- Protocol: To diagnose, create a test set with known transformations. Apply the same transformations during training and evaluate performance separately on the original and transformed validation sets. Monitor the performance gap.

Q2: When injecting Gaussian noise into my protein sequence embeddings to simulate measurement uncertainty, the model becomes unstable and fails to converge. How can I fix this?

A: Uncontrolled noise injection can destroy the signal. Implement a structured approach:

- Scale Noise Appropriately: The noise magnitude (standard deviation, σ) must be proportional to the embedding vector's norm. Start with σ = 0.01 * average_vector_norm.

- Use Scheduled Noise: Begin training with low noise (σ=0.005) and gradually increase it over epochs to allow the model to adapt.

- Protocol: For embedding vectors v, generate noise ϵ ~ N(0, σ²I). Use v' = v + ϵ. Implement in your training loop with a noise scheduler. The table below summarizes recommended starting parameters:

| Data Type | Recommended Noise (σ) | Scheduling |

|---|---|---|

| Protein Sequence Embedding | 0.01 * norm(v) | Linear increase to 2x over 50 epochs |

| Spectra (MS/NMR) | 0.02 * signal STD | Constant after epoch 20 |

| Assay Readout Values | 0.05 * value | Exponential decay from epoch 1 |
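
As a rough sketch of the embedding row in the table, the snippet below adds Gaussian noise scaled to the average embedding norm and ramps σ linearly to 2x over 50 epochs. The function names and the NumPy-based setup are assumptions made for illustration; in practice this logic would sit inside your own data loader or training loop.

```python
import numpy as np


def scheduled_sigma(epoch, base_sigma, final_factor=2.0, ramp_epochs=50):
    """Linearly ramp sigma from base_sigma to final_factor * base_sigma."""
    frac = min(max(epoch, 0), ramp_epochs) / ramp_epochs
    return base_sigma * (1.0 + (final_factor - 1.0) * frac)


def add_gaussian_noise(embeddings, epoch, rel_sigma=0.01, rng=None):
    """Return v' = v + eps, with eps ~ N(0, sigma^2 I).

    sigma starts at rel_sigma * (average L2 norm of the batch) and follows
    the linear schedule above.
    """
    rng = np.random.default_rng() if rng is None else rng
    avg_norm = np.linalg.norm(embeddings, axis=1).mean()
    sigma = scheduled_sigma(epoch, rel_sigma * avg_norm)
    return embeddings + rng.normal(0.0, sigma, size=embeddings.shape)
```

Noise should be applied to training batches only; validation and test embeddings stay untouched so that reported metrics reflect the clean data.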

Q3: How do I choose between physics-based augmentation (e.g., simulating binding affinities) and simple noise injection for my molecular property prediction model?

A: The choice depends on data scarcity and available domain knowledge. Follow this diagnostic workflow:

Decision Workflow for Augmentation Strategy

Q4: My augmented dataset leads to model overfitting despite increased sample size. Why?

A: This paradox occurs when augmentations are not diverse or are too "easy," failing to teach the model robust features.

- Solution: Introduce adversarial augmentation. Use a generator network to create challenging transformations that maximize model loss, then train the main model on these hard examples.

- Protocol:

- For a batch of real images x, apply a parametric transformation Tθ(x).

- Update parameters θ to increase the loss of the target model M.

- Then, update M to minimize loss on Tθ(x).

- This encourages learning of features invariant to the worst-case perturbations.
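
The alternating updates in this protocol can be illustrated with a deliberately small toy problem. The sketch below is not an image pipeline: it assumes a linear regressor as the target model M and a bounded additive shift, Tθ(x) = x + θ with |θᵢ| ≤ eps, as the transformation. Both choices, and all names, are simplifications made so that the gradients can be written by hand.

```python
import numpy as np


def adversarial_augmentation_fit(X, y, eps=0.1, steps=200, lr_w=0.05, lr_theta=0.05):
    """Toy min-max loop with T_theta(x) = x + theta and |theta_i| <= eps (assumed form)."""
    n, d = X.shape
    w = np.zeros(d)      # parameters of the target model M (linear regressor)
    theta = np.zeros(d)  # parameters of the transformation T_theta
    losses = []
    for _ in range(steps):
        # Step 1: gradient ASCENT on theta to increase the loss of M.
        resid = (X + theta) @ w - y
        grad_theta = 2.0 * resid.mean() * w
        theta = np.clip(theta + lr_theta * grad_theta, -eps, eps)
        # Step 2: gradient DESCENT on M using the transformed batch.
        Xt = X + theta
        resid = Xt @ w - y
        w = w - lr_w * (2.0 / n) * (Xt.T @ resid)
        losses.append(float(np.mean((Xt @ w - y) ** 2)))
    return w, theta, losses
```

The clipping step keeps the transformation within a plausible range, which plays the same role as restricting rotations or crops to realistic values.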

Q5: Are there standardized libraries for implementing these techniques in drug discovery pipelines?

A: Yes. Below is a toolkit of key libraries and their primary functions for implementing augmentation in a life sciences context.

| Research Reagent Solution | Function in Augmentation | Typical Use Case |

|---|---|---|

| TorchVision / Albumentations | Provides optimized geometric & color transform functions. | Augmenting high-content screening images, tissue histology. |

| SpecAugment (TensorFlow/PyTorch) | Applies masking to spectro-temporal data. | Augmenting spectral data (Raman, Mass Spec). |

| RDKit & DeepChem | Generates valid molecular conformers, SMILES variations. | Creating augmented molecular datasets for QSAR. |

| ChemAugment | Domain-aware noise injection for molecular graphs. | Simulating assay noise or stochastic atomic features. |

| Custom PyTorch Dataset class | Allows implementation of custom invariance logic & noise injection. | Tailored workflows combining multiple techniques. |

Q6: Can you provide a concrete experimental protocol for evaluating augmentation efficacy in a binding affinity prediction task?

A: Protocol: Evaluating Augmentation for a Binding Affinity (pIC50) Model

1. Objective: Quantify the impact of noise injection and physics-based invariance on model generalization with limited data.

2. Base Dataset: PDBBind refined set (or similar). Use only a subset (e.g., 30%) to simulate scarcity.

3. Experimental Arms:

- Control: Train on raw subset.

- Arm A (Noise): Inject Gaussian noise into atomic coordinate features (σ=0.1 Å).

- Arm B (Invariance): Apply random small rotations (±10°) to the entire molecular conformer.

- Arm C (Combined): Apply rotation then inject noise.

4. Model: Graph Neural Network (e.g., SchNet, DimeNet).

5. Metrics: Record Root Mean Square Error (RMSE) and Pearson's R on a fixed, held-out test set.

6. Quantitative Results Table: (Simulated based on common research outcomes)

| Experimental Arm | Training Data Size | Validation RMSE (↓) | Test RMSE (↓) | Pearson's R (↑) |

|---|---|---|---|---|

| Control (No Augmentation) | 1000 complexes | 1.45 ± 0.08 | 1.62 ± 0.12 | 0.71 ± 0.04 |

| Arm A: Noise Injection Only | 1000 (+ augmented) | 1.52 ± 0.07 | 1.58 ± 0.10 | 0.73 ± 0.03 |

| Arm B: Physical Invariance Only | 1000 (+ augmented) | 1.49 ± 0.09 | 1.53 ± 0.09 | 0.76 ± 0.03 |

| Arm C: Combined Approach | 1000 (+ augmented) | 1.55 ± 0.06 | 1.51 ± 0.08 | 0.77 ± 0.02 |

7. Workflow Diagram:

Augmentation Experiment Protocol Workflow
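
The coordinate transformations used in Arms A to C can be sketched with NumPy as follows. This is an illustrative helper under stated assumptions: coordinates are given as an (N, 3) array in Å, rotations are taken about a random axis through the centroid, and the function names are hypothetical.

```python
import numpy as np


def random_rotation_matrix(max_angle_deg, rng):
    """Rotation by a random angle in [-max, +max] about a random axis (Rodrigues formula)."""
    axis = rng.normal(size=3)
    axis /= np.linalg.norm(axis)
    angle = np.deg2rad(rng.uniform(-max_angle_deg, max_angle_deg))
    K = np.array([[0.0, -axis[2], axis[1]],
                  [axis[2], 0.0, -axis[0]],
                  [-axis[1], axis[0], 0.0]])
    return np.eye(3) + np.sin(angle) * K + (1.0 - np.cos(angle)) * (K @ K)


def augment_coordinates(coords, rng, max_angle_deg=10.0, sigma=0.1,
                        rotate=True, add_noise=True):
    """Arm A: add_noise only. Arm B: rotate only. Arm C: rotate, then add noise."""
    out = np.asarray(coords, dtype=float).copy()
    if rotate:
        center = out.mean(axis=0)
        R = random_rotation_matrix(max_angle_deg, rng)
        out = (out - center) @ R.T + center  # rigid rotation about the centroid
    if add_noise:
        out = out + rng.normal(0.0, sigma, size=out.shape)  # sigma in Angstrom
    return out
```

One caveat worth keeping in mind: models built purely on interatomic distances, such as SchNet, are already invariant to rigid rotations, so the rotation arm is expected to matter mainly for architectures or input features that depend on absolute coordinates.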

Technical Support Center

Troubleshooting Guides

Issue 1: Poor Model Performance Despite Bayesian Optimization (BO) Loop

Q: My Bayesian Optimization loop is running, but the model's performance is not improving beyond random search. What could be wrong?

A: This is often due to an incorrectly specified acquisition function or kernel. First, verify your kernel choice (e.g., Matérn 5/2) is appropriate for your parameter space. If using Expected Improvement (EI), check for numerical overflows. Ensure your initial design (e.g., Latin Hypercube Sampling) has sufficient points (5-10 per dimension) to build a meaningful surrogate model. Scale your input parameters to a common range (e.g., [0,1]) to improve kernel conditioning.

Issue 2: Active Learning Stagnation in High-Dimensional Spaces

Q: My active learning model for catalyst screening keeps selecting very similar experiments. How can I encourage exploration?

A: This is a classic exploitation vs. exploration imbalance. For query-by-committee methods, increase the diversity of your committee models. For uncertainty sampling, consider switching to a combined metric like "Expected Model Change" or adding a diversity term. In high-dimensional spaces, consider using a sparse Gaussian Process or performing dimensionality reduction (e.g., UMAP, PCA) on your catalyst descriptors before the active learning cycle.

Issue 3: Handling Noisy or Failed Experiments

Q: How should I update my BO model when an experiment fails or returns an extremely noisy measurement?

A: Do not simply discard the point. For a failed experiment, treat it as a constraint violation and use a constrained BO approach, updating a separate surrogate model for the probability of failure. For high noise, increase the noise level parameter (alpha) in your Gaussian Process regressor. Consider using a Student-t process for more robust likelihood modeling if noise is non-Gaussian. Implement an automatic re-try protocol for borderline failures.

Issue 4: Surrogate Model Failure for Discontinuous Responses

Q: My catalyst property (e.g., turnover frequency) seems to change abruptly with composition. My Gaussian Process surrogate is performing poorly. What are my options?

A: Standard GP kernels assume smoothness. You have three main options: 1) Switch to a composite kernel (e.g., a combination of a linear kernel and a periodic kernel) if you suspect specific discontinuities. 2) Use a Random Forest or XGBoost as your surrogate model within the BO loop, as they can handle discontinuities better. 3) Employ a two-stage model: a classifier to predict the "regime" and a separate GP regressor within each regime.

Frequently Asked Questions (FAQs)

Q1: What is the minimum viable dataset size to start an Active Learning or Bayesian Optimization campaign for catalyst discovery?

A: A robust starting point is between 20 and 50 well-characterized data points, ideally generated via a space-filling design like Latin Hypercube. This allows the initial surrogate model to learn basic trends. For very high-dimensional feature spaces (>100 descriptors), consider starting with a larger initial set or using feature selection first.

Q2: How do I choose between different acquisition functions (EI, UCB, PI)? A: The choice depends on your goal:

- Expected Improvement (EI): Best for general-purpose optimization, balancing exploration and exploitation. Default recommendation.

- Upper Confidence Bound (UCB): Excellent when you need explicit control via the kappa parameter. High kappa forces exploration.

- Probability of Improvement (PI): Tends to be more exploitative; can get stuck in local optima. Use cautiously.

We recommend starting with EI and switching to UCB if you need to mandate broader exploration.
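
For reference, the three acquisition functions can be written in closed form for a maximization problem, given the surrogate's posterior mean and standard deviation at a candidate point. The sketch below uses only the standard library; the `xi` margin is an optional exploration offset added here for illustration, and the default `kappa=2.5` is simply a commonly used starting value.

```python
import math


def _norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def _norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu, sigma, best, xi=0.0):
    """EI for maximization: E[max(f - best - xi, 0)] under N(mu, sigma^2)."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * _norm_cdf(z) + sigma * _norm_pdf(z)


def upper_confidence_bound(mu, sigma, kappa=2.5):
    """UCB: larger kappa weights uncertainty more, forcing exploration."""
    return mu + kappa * sigma


def probability_of_improvement(mu, sigma, best, xi=0.0):
    """PI: probability that the candidate beats the incumbent by at least xi."""
    if sigma <= 0.0:
        return float(mu - best - xi > 0.0)
    return _norm_cdf((mu - best - xi) / sigma)
```

Because PI ignores the size of the improvement, it rewards points that are only marginally better than the incumbent, which is one way to see why it tends to exploit.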

Q3: Can I integrate prior physical knowledge or simulations into the BO framework? A: Yes, this is a key strength. You can:

- Use the simulation output as the mean function of the Gaussian Process.

- Build a multi-fidelity model, where cheap simulations guide expensive real experiments.

- Incorporate known constraints (e.g., stability rules) directly into the surrogate model to avoid sampling invalid regions.

Q4: How many BO iterations should I plan for a typical catalyst screening project? A: Budget is usually the limiting factor. A practical approach is to allocate 10-20% of your total experimental budget for the initial space-filling design, and the remaining 80-90% for the BO loop. Typically, significant improvements are seen in the first 30-50 iterations. Plan for at least 5 iterations per active optimization dimension.

Q5: My experimental parameters are a mix of continuous (temperature), categorical (solvent type), and integer (doping percentage) variables. Can BO handle this?

A: Yes, but it requires special kernels. Use a combination kernel: a continuous kernel (Matérn) for temperature, a Hamming kernel for the categorical solvent variable, and a transformation of the integer variable to continuous. Libraries like BoTorch and Dragonfly are designed for such mixed spaces.

Table 1: Comparison of Acquisition Functions for Catalyst Yield Optimization

| Acquisition Function | Average Iterations to Find >90% Yield | Best Yield Found (%) | Exploitation Score (1-10) | Exploration Score (1-10) |

|---|---|---|---|---|

| Expected Improvement (EI) | 24 | 98.5 | 7 | 7 |

| Upper Confidence Bound (UCB, κ=2.5) | 31 | 97.8 | 5 | 9 |

| Probability of Improvement (PI) | 19 | 95.2 | 9 | 4 |

| Random Search (Baseline) | 58 | 92.1 | 1 | 10 |

Table 2: Impact of Initial Dataset Size on Bayesian Optimization Performance

| Initial Dataset Size | Success Rate (≥95% yield) after 50 BO iterations | Final Model RMSE (Yield %) | Average Optimality Gap (%) |

|---|---|---|---|

| 10 points | 60% | 8.7 | 6.2 |

| 25 points | 85% | 4.1 | 2.8 |

| 50 points | 95% | 2.3 | 1.5 |

| 100 points | 98% | 1.8 | 0.9 |

Experimental Protocols

Protocol 1: Standard Bayesian Optimization Loop for Catalyst Screening

Objective: To maximize catalytic yield (continuous response) by optimizing three continuous parameters: Precursor Ratio (0-1), Temperature (50-150 °C), and Reaction Time (1-24 hrs).

Methodology:

- Initial Design: Generate 30 initial data points using Latin Hypercube Sampling (LHS) across the 3D parameter space. Perform experiments and record yields.

- Surrogate Model Training: Train a Gaussian Process (GP) regressor using a Matérn 5/2 kernel on the accumulated data. Standardize input features and target variable.

- Acquisition Function Maximization: Compute the Expected Improvement (EI) across a dense grid (or using a gradient-based optimizer) of the parameter space. The point maximizing EI is selected.

- Experiment & Update: Perform the wet-lab experiment at the suggested conditions. Measure the yield.

- Iteration: Append the new (parameters, yield) pair to the dataset. Retrain the GP model. Repeat steps 3-5 for a predetermined budget (e.g., 70 iterations) or until convergence (e.g., no improvement in last 10 iterations).

- Validation: Perform triplicate experiments at the final recommended optimal conditions to confirm performance.
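
The loop in Protocol 1 can be sketched end to end with a small NumPy Gaussian Process. Several things here are assumptions made for the sake of a self-contained example: the three parameters are pre-scaled to [0, 1], the kernel length scale is fixed rather than fitted, EI is maximized over random candidates instead of a dense grid, and `toy_yield` is a hypothetical stand-in for the wet-lab experiment.

```python
import math
import numpy as np


def matern52(A, B, length_scale=0.3):
    """Matern 5/2 kernel with unit variance."""
    d = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)) / length_scale
    s = math.sqrt(5.0) * d
    return (1.0 + s + s ** 2 / 3.0) * np.exp(-s)


def gp_posterior(X, y, Xs, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP at points Xs."""
    L = np.linalg.cholesky(matern52(X, X) + noise * np.eye(len(X)))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    Ks = matern52(X, Xs)
    v = np.linalg.solve(L, Ks)
    var = np.clip(1.0 - (v ** 2).sum(0), 1e-12, None)
    return Ks.T @ alpha, np.sqrt(var)


def expected_improvement(mu, sd, best):
    z = (mu - best) / sd
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sd * pdf


def latin_hypercube(n, dim, rng):
    """One sample per stratum in every dimension, shuffled independently."""
    pts = (np.arange(n)[:, None] + rng.random((n, dim))) / n
    for j in range(dim):
        pts[:, j] = rng.permutation(pts[:, j])
    return pts


def run_bo(objective, dim=3, n_init=30, n_iter=20, n_cand=2000, seed=0):
    rng = np.random.default_rng(seed)
    X = latin_hypercube(n_init, dim, rng)              # initial design
    y = np.array([objective(x) for x in X])
    for _ in range(n_iter):
        y_std = (y - y.mean()) / (y.std() + 1e-12)     # standardize the target
        cand = rng.random((n_cand, dim))               # random candidate set
        mu, sd = gp_posterior(X, y_std, cand)          # surrogate prediction
        x_next = cand[np.argmax(expected_improvement(mu, sd, y_std.max()))]
        X = np.vstack([X, x_next])                     # run experiment and append
        y = np.append(y, objective(x_next))
    return X, y


def toy_yield(x):
    """Hypothetical smooth yield surface peaking at (0.6, 0.6, 0.6)."""
    return float(100.0 * np.exp(-np.sum((x - 0.6) ** 2) / 0.1))
```

Calling `run_bo(toy_yield)` returns the full history of scaled parameters and yields; mapping a scaled point back to physical units (ratio, °C, hours) is a linear rescale per dimension. In a real campaign a maintained library such as scikit-learn or BoTorch would normally replace the hand-written GP.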

Protocol 2: Pool-Based Active Learning for Catalyst Classification

Objective: To efficiently identify catalysts with "High" or "Low" stability from a large virtual library of 10,000 candidates using a minimal number of experiments.

Methodology:

- Feature Representation: Compute a set of 200 material descriptors (e.g., composition-based, electronic structure features) for all 10,000 candidates.

- Initial Labeled Pool: Randomly select 50 candidates, synthesize, test for stability, and label as "High" or "Low".

- Model Training: Train a probabilistic classifier (e.g., Gaussian Process Classifier or Random Forest with probability calibration) on the labeled set.

- Query Strategy: For all remaining unlabeled candidates, predict the stability class probability. Select the next candidate where the model's predictive entropy is highest (i.e., where it is most uncertain).

- Experiment & Update: Synthesize and test the selected candidate. Add it with its true label to the training set.

- Iteration: Retrain the classifier. Repeat steps 4-6 until a target performance (e.g., 95% classification accuracy on a held-out test set) is achieved or the experimental budget is exhausted.
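
The query strategy in step 4 reduces to computing the predictive entropy of each candidate and picking the maximum among the unlabeled ones. The sketch below assumes the classifier's class probabilities are available as an (n_candidates, n_classes) array, as returned by a typical `predict_proba` method; the function names are illustrative.

```python
import numpy as np


def predictive_entropy(proba):
    """Shannon entropy (in nats) of each row of class probabilities."""
    p = np.clip(np.asarray(proba, dtype=float), 1e-12, 1.0)
    return -(p * np.log(p)).sum(axis=1)


def select_query(proba, labeled_mask):
    """Index of the unlabeled candidate with the highest predictive entropy."""
    ent = predictive_entropy(proba)
    ent[np.asarray(labeled_mask, dtype=bool)] = -np.inf  # never re-query labeled items
    return int(np.argmax(ent))
```

For a binary "High"/"Low" task the entropy is maximal at a predicted probability of 0.5, so this rule selects the candidate closest to the decision boundary.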

Visualizations

Title: Bayesian Optimization Loop for Catalyst Discovery

Title: Pool-Based Active Learning Cycle

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Experiment | Key Consideration for AL/BO |

|---|---|---|

| Precursor Libraries | Provides a diverse set of starting materials for catalyst synthesis (e.g., metal salts, ligands). | Critical for Exploration. Use a well-defined, feature-rich library (e.g., varied sterics/electronics) to enable effective search in chemical space. |

| High-Throughput Screening (HTS) Kits | Allows parallel synthesis and testing of catalyst candidates in microtiter plates or reactor blocks. | Enables Iteration Speed. The throughput must match the AL/BO suggestion rate. Automation compatibility is key. |

| Standardized Substrates | A consistent test molecule for evaluating catalytic performance (e.g., a specific cross-coupling reaction). | Ensures Data Consistency. Noise from variable substrates can corrupt the surrogate model. Use high-purity, consistent batches. |

| Internal Analytical Standards | For quantitative analysis (e.g., GC, HPLC) to measure yield, conversion, or selectivity. | Reduces Measurement Noise. High noise inflates model uncertainty and slows convergence. |

| Digital Lab Notebook (ELN) with API | Software for recording experimental conditions, outcomes, and metadata. | Core Infrastructure. Must allow programmatic data retrieval (via API) to automatically update the AL/BO data pool. |

| BO/AL Software Platform | (e.g., BoTorch, GPyOpt, custom scripts) The algorithm driving experiment selection. | Integration is Key. Must connect to ELN and handle your data types (continuous, categorical). |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: I pre-trained a Graph Neural Network (GNN) on the Materials Project (MP) dataset, but performance is poor when fine-tuning on my small experimental catalyst dataset. What could be wrong? A: This is often a domain shift issue. The MP contains ideal, pristine crystal structures, while your experimental data may include defects, surfaces, or amorphous phases. Solution: Implement a two-step fine-tuning protocol. First, fine-tune the MP-pretrained model on a larger, more relevant auxiliary dataset like OQMD (Open Quantum Materials Database) which includes disordered structures, before final fine-tuning on your small dataset. Ensure your data preprocessing (e.g., graph representation, featurization) is consistent between pre-training and fine-tuning stages.

Q2: When using QM9-pretrained models for molecular catalyst property prediction, how do I handle elements not present in QM9 (e.g., transition metals)? A: QM9 contains only C, H, O, N, F. For missing elements, the model lacks learned atomic embeddings. Solution: Use a modular embedding approach. For new elements, initialize their feature vectors using known periodic properties (e.g., electronegativity, atomic radius, group) and allow these embeddings to update during fine-tuning. Alternatively, pre-train on a dataset that contains transition metals, such as OC20. Note that ANI-1x, like QM9, covers only a few light elements (H, C, N, O) and therefore does not solve this problem.

Q3: Training collapses during fine-tuning—the loss diverges or becomes NaN. How do I stabilize it? A: This is typically caused by aggressive learning rates or drastic feature distribution shifts. Solution: Use a discriminative learning rate strategy. Apply a very small learning rate (e.g., 1e-5) to the pre-trained backbone layers and a higher rate (e.g., 1e-4) to the newly added head layers. Implement gradient clipping (max norm = 1.0) and monitor activation statistics with batch normalization or layer normalization in the fine-tuning layers.

Q4: My fine-tuned model shows severe overfitting after only a few epochs on my small dataset. What regularization techniques are most effective? A: Overfitting is the core challenge in data-scarce catalyst ML. Solution:

- Feature Extraction Freeze: Freeze 70-90% of the pre-trained layers initially, training only the final layers.

- Data Augmentation: For molecular/crystal graphs, apply stochastic rotations, translations, or atomic site perturbations (within physically reasonable limits).

- Consistency Training: Use a Mean Teacher approach where a teacher model's exponential moving average (EMA) predictions regularize the student model on augmented inputs.
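
The Mean Teacher idea in the last bullet rests on two small operations: an exponential moving average of the student weights, and a consistency penalty between student and teacher predictions on augmented inputs. The sketch below shows both on plain NumPy arrays; representing model weights as a dictionary of arrays and using a mean-squared consistency term are assumptions made for illustration.

```python
import numpy as np


def ema_update(teacher, student, decay=0.99):
    """Teacher weights become an exponential moving average of student weights."""
    return {k: decay * teacher[k] + (1.0 - decay) * student[k] for k in teacher}


def consistency_loss(student_pred, teacher_pred):
    """Mean squared difference between student and teacher predictions."""
    diff = np.asarray(student_pred, dtype=float) - np.asarray(teacher_pred, dtype=float)
    return float(np.mean(diff ** 2))
```

During fine-tuning, the consistency term is added to the supervised loss with a weighting factor, and `ema_update` is called after each optimizer step; the teacher itself receives no gradient updates.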

Q5: How do I quantify whether transfer learning from a large auxiliary dataset provided any benefit for my specific problem? A: You must establish a controlled baseline. Solution: Conduct the following experiment:

- Train your model architecture from random initialization on your target dataset using k-fold cross-validation.

- Train the same architecture, initialized with pre-trained weights, on the same target dataset with identical folds.

- Compare performance metrics (MAE, RMSE) statistically using a paired t-test. A significant improvement (p < 0.05) confirms benefit.

Experimental Protocols

Protocol 1: Standard Pre-training and Fine-tuning Workflow for Catalyst Property Prediction

Pre-training Phase:

- Dataset: Download materials (e.g., from Materials Project via the mprester API) or molecules (e.g., QM9 from torch_geometric.datasets).

- Input Representation: Convert crystals to graphs (using e.g., pymatgen and dgl/pyg) with nodes as atoms and edges as bonds/neighbor connections. Use a consistent featurization scheme (e.g., atomic number, orbital field matrix).

- Model: Initialize a GNN (e.g., CGCNN, MEGNet, SchNet).

- Pre-training Task: Train via supervised learning on a diverse target property (e.g., formation energy, band gap for MP; internal energy at 298 K for QM9). Use an 80/10/10 train/val/test split.

- Optimization: Use AdamW optimizer (lr=1e-3), ReduceLROnPlateau scheduler, and MSE loss.

Fine-tuning Phase:

- Target Data: Load your small catalyst dataset (< 1000 samples). Apply standardization using statistics from the pre-training dataset.

- Model Modification: Replace the final pre-training regression head with a new randomly initialized head matching your target property output dimension.

- Training: Unfreeze the entire network. Use a much smaller learning rate (lr=1e-4 to 1e-5). Employ early stopping with patience on the validation loss. Monitor for overfitting.

Protocol 2: Benchmarking Transfer Learning Efficacy

- Baseline Model (No Transfer): Train Model A from scratch on your target dataset using 5-fold cross-validation. Use a Bayesian hyperparameter optimizer to find the best learning rate and weight decay.

- Transfer Model: Initialize Model B (identical architecture to A) with weights pre-trained on the large auxiliary dataset (e.g., MP). Fine-tune Model B on the same 5 folds, using the same hyperparameter search.

- Analysis: For each fold, record the test set performance (e.g., MAE). Perform a paired t-test on the 5 paired MAE differences (Baseline MAE - Transfer MAE). Report mean improvement and statistical significance.
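
The paired analysis in the last step can be sketched with the standard library alone. The helper below returns the t statistic and degrees of freedom for the per-fold differences (Baseline MAE - Transfer MAE); the constant is the standard two-sided 5% critical value of the t distribution with 4 degrees of freedom, which applies to the 5-fold setup described above. The function name is illustrative.

```python
import math
from statistics import mean, stdev

# Two-sided critical value of Student's t for alpha = 0.05 and df = 4 (5 folds).
T_CRIT_DF4_TWO_SIDED_05 = 2.776


def paired_t_statistic(baseline_mae, transfer_mae):
    """Return (t, df) for the paired differences baseline - transfer."""
    if len(baseline_mae) != len(transfer_mae) or len(baseline_mae) < 2:
        raise ValueError("need two equally long sequences with at least 2 folds")
    diffs = [b - t for b, t in zip(baseline_mae, transfer_mae)]
    n = len(diffs)
    t_stat = mean(diffs) / (stdev(diffs) / math.sqrt(n))
    return t_stat, n - 1
```

With 5 folds, transfer learning is judged significantly better at the 5% level when `t` exceeds `T_CRIT_DF4_TWO_SIDED_05`. If SciPy is available, `scipy.stats.ttest_rel` returns the exact p-value for any number of folds. Keep in mind that folds share training data, so the test is somewhat optimistic.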

Data Presentation

Table 1: Common Large-Scale Auxiliary Datasets for Catalyst-Relevant Pre-training

| Dataset | Domain | Size | Key Properties | Access |

|---|---|---|---|---|

| Materials Project (MP) | Inorganic Crystals | ~150,000 materials | Formation Energy, Band Gap, Elasticity | REST API (mprester) |

| QM9 | Small Organic Molecules | ~134,000 molecules | U₀, H, G, Dipole Moment, HOMO/LUMO | torch_geometric.datasets.QM9 |

| Open Catalyst 2020 (OC20) | Catalytic Surfaces | ~1.3M relaxations | Adsorption Energy, Relaxation Trajectories | ocp Python Package |

| ANI-1x | DFT-Quality Molecules | ~5M conformations | Conformational Energies, Atomic Forces | torchani |

| OQMD | Inorganic Materials | ~1,000,000 entries | Thermodynamic Stability (DFT) | oqmd Python Package |

Table 2: Example Performance Gain from Pre-training on Small Target Datasets

| Target Dataset (Catalyst Property) | Target Size | Pre-training Source | MAE (No Transfer) | MAE (With Transfer) | % Improvement |

|---|---|---|---|---|---|

| Experimental CO2 Reduction Overpotential | 312 samples | Materials Project (Formation Energy) | 0.24 V | 0.18 V | 25% |

| Organic Photocatalyst HOMO-LUMO Gap | 587 molecules | QM9 (Internal Energy U₀) | 0.41 eV | 0.29 eV | 29% |

| Heterogeneous Catalyst Activation Energy | 104 reactions | OC20 (Adsorption Energy) | 0.52 eV | 0.45 eV | 13% |

Visualizations

Title: Transfer Learning Workflow for Data-Scarce Catalyst ML

Title: Fine-tuning with Frozen Layers Architecture

The Scientist's Toolkit: Research Reagent Solutions

| Item/Resource | Function in Experiment | Key Consideration |

|---|---|---|

| MATERIALS PROJECT REST API | Programmatic access to crystal structures and computed properties for pre-training data. | Rate limits apply; bulk data downloads are recommended for large-scale pre-training. |

| PYMATGEN | Python library for materials analysis. Converts CIF files into graph representations (Structure -> Graph). | Essential for creating consistent graph inputs from both auxiliary and target datasets. |

| DGL-LIFE SCI / PYG | Graph neural network libraries with pre-built models (GCN, GIN, AttentiveFP) and datasets (QM9). | Simplifies model prototyping. Ensure version compatibility with your chosen dataset loaders. |

| OCP (OPEN CATALYST PROJECT) MODELS | Pre-trained models (e.g., GemNet, SchNet) on OC20 dataset. Provides strong starting points for surface catalysis. | Models are large; require significant GPU memory for fine-tuning. |

| ROBOFLOW FOR MATERIALS (CONCEPT) | Platform for material dataset versioning, augmentation (e.g., stochastic supercell generation), and preprocessing. | Maintains reproducibility and enables systematic data augmentation to combat overfitting. |

| WEIGHTS & BIASES (WANDB) | Experiment tracking tool. Logs loss curves, hyperparameters, and model predictions during fine-tuning. | Critical for comparing transfer learning strategies and diagnosing instability. |

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions (FAQs)

Q1: When mitigating data scarcity for catalyst ML, why should I use a Generative Adversarial Network (GAN) over a Variational Autoencoder (VAE) for generating adsorption energy data? A1: The choice depends on your data characteristics and goal. GANs often produce sharper, more realistic single data points (e.g., a specific energy value for a surface), which is crucial for downstream predictive tasks. However, they are prone to mode collapse and can be unstable to train. VAEs provide a structured latent space, enabling meaningful interpolation and the generation of diverse data variants, which is valuable for exploring catalyst composition spaces. For catalyst research, VAEs are often preferred for initial exploration of novel compositions, while GANs may be used to refine and expand datasets for specific, well-defined property predictions.

Q2: My VAE generates blurry or non-physical synthetic catalyst descriptors (e.g., unrealistic bond lengths or coordination numbers). How can I improve output fidelity? A2: This is a common symptom of an imbalance between the reconstruction loss and the KL divergence loss. Try the following steps:

- Increase Model Capacity: Gradually increase the number of layers or neurons in the encoder/decoder.

- Adjust the Beta Parameter: Implement a β-VAE framework. Start with a beta value <1 (e.g., 0.1) to prioritize reconstruction, then slowly increase it to enforce a more organized latent space.

- Architectural Change: Consider using a Vector Quantized-VAE (VQ-VAE), which discretizes the latent space and often generates higher-fidelity outputs.

- Data Preprocessing: Ensure your input descriptors are normalized and that physically impossible value ranges are clipped or removed from the training set.
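
The β adjustment described above amounts to weighting the KL term in the VAE objective and raising that weight gradually. The sketch below shows the loss arithmetic only, on NumPy arrays; it assumes a diagonal Gaussian encoder, a squared-error reconstruction term, and a linear ramp from 0.1 to 1.0 over 100 epochs, all of which are illustrative choices rather than recommended settings.

```python
import numpy as np


def kl_divergence(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), averaged over the batch."""
    per_sample = -0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var), axis=1)
    return float(np.mean(per_sample))


def beta_schedule(epoch, beta_start=0.1, beta_end=1.0, warmup_epochs=100):
    """Linearly increase beta from beta_start to beta_end, then hold it."""
    frac = min(max(epoch, 0), warmup_epochs) / warmup_epochs
    return beta_start + (beta_end - beta_start) * frac


def beta_vae_loss(x, x_recon, mu, log_var, beta):
    """Reconstruction error plus beta-weighted KL divergence."""
    recon = float(np.mean(np.sum((x - x_recon) ** 2, axis=1)))
    return recon + beta * kl_divergence(mu, log_var)
```

A small β early in training lets the decoder learn sharp reconstructions first; as β grows, the latent space is pulled toward the prior, which is what makes sampling new descriptors meaningful.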

Q3: During GAN training for generating reaction pathway profiles, the generator loss drops to near zero while the discriminator loss remains high. What is happening and how do I fix it? A3: This indicates mode collapse, where the generator finds a single synthetic output that fools the discriminator and stops learning. Mitigation strategies include:

- Apply Gradient Penalty: Use a WGAN-GP (Wasserstein GAN with Gradient Penalty) architecture, which provides more stable training dynamics.

- Modify Training Ratio: Temporarily switch to training the discriminator more frequently than the generator (e.g., 5:1 ratio) until balance is restored.

- Mini-batch Discrimination: Implement a mini-batch discrimination layer in the discriminator to allow it to assess multiple samples simultaneously, helping it identify lack of diversity.

Q4: How can I quantitatively validate that my synthetic catalytic data is useful for improving my target property prediction model? A4: Follow this structured validation protocol:

- Train Baseline Model: Train your target model (e.g., a CNN for activity prediction) on your limited real dataset only. Record performance (MAE, RMSE, R²) on a held-out real test set.

- Augment Dataset: Create an augmented training set by combining the real data with your synthetic data from the VAE/GAN.

- Train Augmented Model: Retrain the same model architecture on the augmented dataset. Evaluate on the same held-out real test set.

- Compare Performance: A significant improvement in metrics for the augmented model indicates useful synthetic data. Use the table below to structure your results.

Table 1: Framework for Validating Synthetic Catalytic Data Utility

| Model Training Dataset | Test Set (Real Data) | Primary Metric (e.g., MAE on ΔG [eV]) | R² Score | Conclusion |

|---|---|---|---|---|

| Real Data Only (Baseline) | Held-out Real Catalyst Set | 0.45 eV | 0.72 | Baseline performance |

| Real + VAE-generated Data | Same Held-out Set | 0.28 eV | 0.86 | Synthetic data provides utility |

| Real + GAN-generated Data | Same Held-out Set | 0.31 eV | 0.83 | Synthetic data provides utility |
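
To fill in a table like this consistently, the same metric function should be applied to every model's predictions on the identical held-out real test set. A minimal NumPy sketch follows; the function name and dictionary keys are illustrative.

```python
import numpy as np


def regression_metrics(y_true, y_pred):
    """MAE, RMSE and R^2 of predictions against held-out real measurements."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    err = y_pred - y_true
    ss_res = float(np.sum(err ** 2))
    ss_tot = float(np.sum((y_true - y_true.mean()) ** 2))
    return {
        "MAE": float(np.mean(np.abs(err))),
        "RMSE": float(np.sqrt(np.mean(err ** 2))),
        "R2": 1.0 - ss_res / ss_tot,
    }
```

Synthetic samples belong only in the training set. If any generated point leaks into the test set, the comparison no longer measures performance on real catalysts.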

Experimental Protocol: Training a β-VAE for Generating Transition Metal Oxide Compositions

Objective: To generate descriptors of plausible, novel transition metal oxide compositions (e.g., ABO₃ perovskites) in order to augment a scarce dataset for catalytic activity screening.

Materials & Workflow:

Diagram Title: β-VAE Workflow for Catalyst Composition Generation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Generative Modeling in Catalytic Research

| Tool / Solution | Function in Experiment | Example / Note |

|---|---|---|

| Framework (PyTorch/TensorFlow) | Provides flexible automatic differentiation and neural network modules. | PyTorch is often preferred for rapid prototyping of novel architectures. |

| Chemical Descriptor Library (matminer, RDKit) | Converts catalyst structures into feature vectors for model input. | matminer is essential for generating compositional/structural features for inorganic catalysts. |

| Hyperparameter Optimization | Systematically searches for optimal model parameters. | Optuna or Ray Tune to optimize learning rate, β, layer sizes, etc. |

| High-Performance Computing (HPC) GPU Cluster | Accelerates the training of deep generative models. | Required for training on large or complex descriptor sets in a feasible time. |

| Physics-Informed Loss Functions | Constrains generative models to obey fundamental rules. | Adding penalty terms for positive formation energies or unrealistic oxidation states. |

Troubleshooting Guide: GAN Training Instability

Problem: Discriminator becomes too powerful too quickly, providing no useful gradient to the generator. Solution Pathway:

Diagram Title: GAN Stabilization Troubleshooting Protocol

Experimental Protocol: Implementing a cGAN for Conditioned Transition State Generation

Objective: Use a Conditional GAN (cGAN) to generate synthetic 3D geometry descriptors of a reaction's transition state, conditioned on reactant and product descriptors.

Methodology:

- Data Preparation: Assemble a small set of known transition state geometries (e.g., from DFT calculations). Each sample is a pair: `(Conditioning vector C, Transition state descriptor T)`. `C` is a concatenated vector of reactant and product features.

- Model Architecture:

- Generator (G): Input: Random noise vector `z` + conditioning vector `C`. Output: Synthetic transition state descriptor `T_synth`.

- Discriminator (D): Input: Either a `(C, T_real)` or `(C, T_synth)` pair. Output: A score for how real `T` is given the condition `C` (a probability in a standard GAN; an unbounded critic score when a Wasserstein loss is used).

- Training: Use a Wasserstein loss with gradient penalty. The discriminator's goal is to maximize the difference between its output for real and fake pairs. The generator aims to maximize the discriminator's output for its fakes, which shrinks that difference.

- Validation: Use the generated `T_synth` as input to a downstream surrogate model (e.g., a neural network) that predicts activation barriers. Compare the distribution of predicted barriers from synthetic data to those from the limited real data for physical plausibility.
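For reference, the Wasserstein loss with gradient penalty named in the Training step is commonly written as below. This is a sketch under the convention that the critic `D` assigns higher scores to real pairs; the interpolate `\hat{T}` and the penalty weight `\lambda` (often set to 10) are notation introduced here, not taken from the protocol.

```latex
\begin{aligned}
T_{\text{synth}} &= G(z, C), \qquad
\hat{T} = \epsilon\, T_{\text{real}} + (1-\epsilon)\, T_{\text{synth}}, \qquad \epsilon \sim \mathcal{U}[0,1] \\
\mathcal{L}_D &= \mathbb{E}\big[D(C, T_{\text{synth}})\big] - \mathbb{E}\big[D(C, T_{\text{real}})\big]
  + \lambda\, \mathbb{E}\Big[\big(\lVert \nabla_{\hat{T}} D(C, \hat{T}) \rVert_2 - 1\big)^2\Big] \\
\mathcal{L}_G &= -\,\mathbb{E}\big[D(C, T_{\text{synth}})\big]
\end{aligned}
```

Minimizing `L_D` widens the gap between real and synthetic scores while keeping the critic's gradient norm close to 1; minimizing `L_G` raises the score of synthetic pairs.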

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During a multi-fidelity Gaussian Process (MF-GP) experiment, my predictions from the high-fidelity model are no better than using the low-fidelity data alone. What could be wrong?

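A: This is often a model mis-specification issue: the kernel may not be capturing the cross-correlation between fidelities. Note that automatic relevance determination (ARD) only assigns a separate length scale to each input dimension; it does not by itself model correlation across fidelity levels. First, check your kernel function. For a separable (intrinsic coregionalization) model, the single-term special case of the linear model of coregionalization, ensure your kernel is of the form: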

k_total([x, t], [x', t']) = k_x(x, x') * k_t(t, t')

where t denotes the fidelity level. Second, verify the hyperparameter optimization. The optimization may be stuck in a local minimum. Use a multi-start optimization strategy (e.g., 10 random restarts) for the maximum likelihood estimation. Third, scale your inputs and outputs per fidelity level before training to stabilize optimization.
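The product kernel above can be sketched in NumPy as follows. This is a minimal illustration, not a full MF-GP: the RBF input kernel, the rank-1 factor `W`, and the per-fidelity variances `kappa` are assumptions chosen for the example, and in practice they would be learned by maximizing the marginal likelihood.

```python
import numpy as np

def rbf(X1, X2, length_scale=1.0):
    """Input kernel k_x(x, x'): squared-exponential on the feature vectors."""
    sq_dist = ((X1[:, None, :] - X2[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)

def fidelity_kernel(t1, t2, W, kappa):
    """Fidelity kernel k_t(t, t') = B[t, t'] with B = W W^T + diag(kappa) (positive semi-definite)."""
    B = W @ W.T + np.diag(kappa)
    return B[np.ix_(t1, t2)]

def k_total(X1, t1, X2, t2, W, kappa, length_scale=1.0):
    """Separable multi-fidelity kernel: k_x(x, x') * k_t(t, t')."""
    return rbf(X1, X2, length_scale) * fidelity_kernel(t1, t2, W, kappa)

# Toy data: four low-fidelity (t=0) and two high-fidelity (t=1) points.
rng = np.random.default_rng(0)
X = rng.normal(size=(6, 3))
t = np.array([0, 0, 0, 0, 1, 1])
W = np.array([[1.0], [0.8]])      # hypothetical values; off-diagonal of B sets the cross-fidelity covariance
kappa = np.array([0.05, 0.2])     # hypothetical fidelity-specific variances
K = k_total(X, t, X, t, W, kappa)
```

If the learned off-diagonal entry of `B` is close to zero, the model treats the fidelities as unrelated, which is one way the symptom in Q1 can arise.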

Q2: When integrating biochemical assay data (expensive high-fidelity) with computational docking scores (cheap low-fidelity), the multi-fidelity model output is physically implausible (e.g., predicts positive binding affinity for known non-binders). How do I correct this?

A: This indicates a violation of the modeling assumption that the fidelities are linearly correlated. Implement a non-linear auto-regressive framework. Instead of the standard f_high(x) = ρ * f_low(x) + δ(x), use a Gaussian process for the scaling term: f_high(x) = g(x) * f_low(x) + δ(x), where g(x) is a separate GP. This accounts for cases where the correlation ρ varies across the chemical space. Additionally, introduce a constraint or prior based on domain knowledge (e.g., binding affinity must be negative) via a transformed output or a penalized likelihood.
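One way to realize the "transformed output" constraint is to train on a log-transformed target so that back-transformed predictions are negative by construction. The sketch below assumes binding affinities are expressed as strictly negative free energies; the function names are hypothetical.

```python
import numpy as np

def to_latent(delta_g):
    """Map strictly negative affinities to an unconstrained scale: u = log(-dG)."""
    delta_g = np.asarray(delta_g, dtype=float)
    if np.any(delta_g >= 0):
        raise ValueError("to_latent expects strictly negative values")
    return np.log(-delta_g)

def from_latent(u):
    """Inverse map: dG = -exp(u), which is negative for every real u."""
    return -np.exp(np.asarray(u, dtype=float))

affinities = np.array([-12.0, -7.5, -0.3])
latent_targets = to_latent(affinities)      # train the multi-fidelity model on these
recovered = from_latent(latent_targets)     # back-transform model outputs the same way
```

Uncertainty intervals should be transformed through `from_latent` as well, since they are no longer symmetric on the original scale.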

Q3: My multi-fidelity deep learning model is severely overfitting to the small set of high-fidelity experimental data. How can I improve generalization?

A: This is a common challenge. Implement a fidelity-embedding layer with strong regularization. Use the following architecture adjustment and protocol:

- Create a trainable fidelity embedding vector for each data source (e.g., docking, MD simulation, wet-lab assay).

- Concatenate this embedding to the primary input features.

- Apply Dropout with a high rate (0.5-0.7) specifically on the path from the high-fidelity branch immediately before the final fusion layer.

- Use auxiliary task learning: Add a secondary output head that predicts the fidelity source of the data, trained simultaneously with the main loss. This forces the shared layers to learn more robust, transferable features.

- Apply gradient clipping during training to prevent exploding gradients from the small high-fidelity batches.
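The gradient clipping step in the list above can be illustrated framework-independently. The sketch below clips by global norm, which is one common variant; the function name and the example gradients are made up for illustration, and deep learning frameworks provide their own equivalents.

```python
import numpy as np

def clip_by_global_norm(grads, max_norm):
    """Rescale a list of gradient arrays so their joint L2 norm is at most max_norm."""
    total_norm = np.sqrt(sum(float(np.sum(g ** 2)) for g in grads))
    scale = min(1.0, max_norm / (total_norm + 1e-12))
    return [g * scale for g in grads], total_norm

# Example: two parameter tensors whose joint gradient norm is 5.
grads = [np.array([3.0, 0.0]), np.array([[0.0, 4.0]])]
clipped, norm_before = clip_by_global_norm(grads, max_norm=1.0)
norm_after = np.sqrt(sum(float(np.sum(g ** 2)) for g in clipped))
```

Clipping the joint norm preserves the direction of the update, which matters when a single noisy high-fidelity batch would otherwise dominate a step.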

Q4: What is the most efficient experimental design for sequentially acquiring new high-fidelity data points to maximize model improvement in drug discovery?

A: Use a multi-fidelity Bayesian optimization (MFBO) loop with a cost-aware acquisition function such as entropy search, Multi-fidelity Expected Improvement (MF-EI), or Knowledge Gradient. A practical protocol is:

- Initial Phase: Train the initial multi-fidelity model on all available cheap (e.g., virtual screening) and expensive (e.g., HTS hit validation) data.

- Acquisition: Calculate the Multi-fidelity Expected Improvement (MF-EI) or Knowledge Gradient for both the candidate compound and the proposed fidelity level (e.g., "Should I run a medium-throughput assay or a full confirmatory assay for this compound?").

- Selection: Choose the next (compound, fidelity) pair that maximizes information gain per unit cost.

- Update & Iterate: Run the experiment, add the new data point to the respective dataset, retrain the model, and repeat from the Acquisition step.

This table summarizes a simulated comparison of acquisition functions:

| Acquisition Function | Avg. Regret after 20 Iterations | Cost Units Spent | Top-5 Candidate Success Rate |
|---|---|---|---|
| High-Fidelity EI Only | 12.4 ± 3.1 | 200 | 40% |
| Random Multi-fidelity | 8.7 ± 2.5 | 120 | 55% |
| MF-Knowledge Gradient | 4.2 ± 1.8 | 100 | 82% |
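The Acquisition and Selection steps can be sketched as follows. This is a simplified cost-weighted heuristic, not the full MF-EI or Knowledge Gradient: it scores each (compound, fidelity) pair as high-fidelity EI times an assumed informativeness weight for that fidelity, divided by its cost. The weights `fidelity_corr`, the costs, and the predictions are hypothetical inputs.

```python
import math
import numpy as np

def expected_improvement(mu, sigma, best):
    """EI for maximization under a Gaussian predictive distribution."""
    mu = np.asarray(mu, dtype=float)
    sigma = np.maximum(np.asarray(sigma, dtype=float), 1e-12)
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf

def select_next(ei_high, fidelity_corr, costs):
    """Pick the (candidate, fidelity) pair with the largest weighted EI per unit cost."""
    weight = np.asarray(fidelity_corr, dtype=float) / np.asarray(costs, dtype=float)
    score = np.outer(ei_high, weight)          # shape: (n_candidates, n_fidelities)
    i, f = np.unravel_index(np.argmax(score), score.shape)
    return int(i), int(f), score

# Hypothetical high-fidelity predictions for three compounds.
ei = expected_improvement(mu=[0.5, 1.2, 0.9], sigma=[0.1, 0.3, 0.5], best=1.0)
candidate, fidelity, score = select_next(ei, fidelity_corr=[0.6, 1.0], costs=[1.0, 10.0])
```

With these numbers the cheap assay wins because its assumed informativeness per unit cost is higher; raising `fidelity_corr` for the expensive assay or lowering its cost shifts the choice.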

Q5: How do I handle inconsistent or contradictory measurements between different fidelity sources for the same input?

A: Do not average the data. Model the discrepancy explicitly. Structure your data and model as follows:

- Label each data point with an input `x`, a fidelity level `t`, and a data source identifier `s` (e.g., lab A, computational method B).

- Use a hierarchical model: `y_i(x) = f_t(x) + g_s(t) + ε`, where `f_t` is the global fidelity mean, and `g_s` is a source-specific bias term (modeled as a GP or a simple random effect).

- This allows the model to learn systematic biases of certain cheap sources and down-weight their influence on the high-fidelity prediction, reducing "contamination" from unreliable low-fidelity data.
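For the "simple random effect" option, the source bias can be estimated as a shrunken mean of the residuals `y - f_t(x)` for each source. The sketch below assumes those residuals have already been computed from a fitted fidelity mean; the `shrinkage` value plays the role of the ratio of noise variance to bias variance and is an assumption to be tuned.

```python
import numpy as np

def estimate_source_bias(residuals, sources, shrinkage=1.0):
    """Random-intercept estimate per source: sum of residuals / (count + shrinkage)."""
    residuals = np.asarray(residuals, dtype=float)
    sources = np.asarray(sources)
    bias = {}
    for s in np.unique(sources):
        mask = sources == s
        bias[s.item()] = residuals[mask].sum() / (mask.sum() + shrinkage)
    return bias

# Hypothetical residuals: lab A reads systematically high, lab B does not.
residuals = [1.0, 1.2, 0.8, -0.1, 0.1]
sources = ["A", "A", "A", "B", "B"]
bias = estimate_source_bias(residuals, sources)
```

Sources with few measurements are pulled toward zero bias, so a single outlying point does not get treated as a systematic offset.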

Experimental Protocols

Protocol 1: Establishing a Two-Fidelity Gaussian Process for Compound Activity Prediction

Objective: Integrate computational docking scores (low-fidelity) and experimental IC50 values (high-fidelity) to predict bioactivity.

Materials: See "Research Reagent Solutions" table.

Method:

- Data Curation: Assemble two datasets. LF: 10,000 compounds with docking scores (ΔG, kcal/mol). HF: 250 compounds with experimentally measured IC50 (nM). Ensure a shared subset of 50 compounds exists for correlation learning.

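- Preprocessing: Convert IC50 to pIC50, defined as -log10(IC50) with IC50 expressed in mol/L (equivalently, pIC50 = 9 - log10(IC50 in nM)). Standardize the input features (descriptors/fingerprints) with a single scaler shared by both datasets, so that a compound has the same representation at each fidelity, and standardize the output values (pIC50, docking score) to zero mean and unit variance separately for each dataset.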

- Model Specification: Implement an auto-regressive GP model: `f_H(x) = ρ * f_L(x) + δ(x)`. Use a Matérn 5/2 kernel for `k_L(x, x')` (the LF GP) and a separate Matérn 5/2 kernel for `k_δ(x, x')` (the discrepancy GP). The scaling parameter `ρ` and all kernel hyperparameters (length scales, variances) are learned.

- Training: Optimize the combined marginal likelihood using the L-BFGS-B algorithm with 10 random initializations to avoid local optima. Use the 50 overlapping compounds to inform `ρ`.

- Validation: Perform 5-fold cross-validation on the high-fidelity data only. Report Root Mean Square Error (RMSE) and Mean Absolute Error (MAE) on the pIC50 scale.
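The auto-regressive structure `f_H(x) = ρ * f_L(x) + δ(x)` can be sketched in NumPy as below. This is a deliberately simplified two-step fit with fixed kernel hyperparameters and a least-squares `ρ`, not the joint marginal-likelihood optimization the protocol describes; the toy 1-D functions stand in for docking scores and pIC50 values.

```python
import numpy as np

def matern52(A, B, length_scale=1.0, variance=1.0):
    """Matern 5/2 kernel between the rows of A and B."""
    d = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)) / length_scale
    s = np.sqrt(5.0) * d
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)

def fit_gp_mean(X, y, noise=1e-4):
    """Return the GP posterior-mean function for fixed hyperparameters."""
    K = matern52(X, X) + noise * np.eye(len(X))
    alpha = np.linalg.solve(K, y)
    return lambda X_new: matern52(X_new, X) @ alpha

def fit_two_fidelity(X_lf, y_lf, X_hf, y_hf):
    f_L = fit_gp_mean(X_lf, y_lf)                      # low-fidelity GP
    low_at_hf = f_L(X_hf)                              # LF prediction at HF inputs
    rho = float(low_at_hf @ y_hf / (low_at_hf @ low_at_hf))   # least-squares scaling
    delta = fit_gp_mean(X_hf, y_hf - rho * low_at_hf)  # discrepancy GP on the residual
    return (lambda X_new: rho * f_L(X_new) + delta(X_new)), rho

# Toy data: many cheap evaluations, a handful of expensive ones.
X_lf = np.linspace(0.0, 3.0, 30)[:, None]
y_lf = np.sin(2.0 * X_lf[:, 0])
X_hf = np.linspace(0.2, 2.8, 6)[:, None]
y_hf = 1.5 * np.sin(2.0 * X_hf[:, 0]) + 0.2 * X_hf[:, 0]
predict_hf, rho = fit_two_fidelity(X_lf, y_lf, X_hf, y_hf)
```

Because `ρ` is fit before the discrepancy term here, it absorbs part of the trend in `δ(x)`; a joint fit, as in the protocol, separates the two more cleanly.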

Protocol 2: Multi-Fidelity Deep Neural Network for Protein-Ligand Binding Affinity

Objective: Leverage large-scale molecular dynamics (MD) simulation data (medium-fidelity) to enhance prediction from limited experimental binding free energy data (high-fidelity).

Materials: See "Research Reagent Solutions" table.

Method:

- Architecture: Build a neural network with two input branches. Branch A: Processes molecular graph (via GNN) or fingerprint. Branch B: A fidelity indicator layer (one-hot vector for MD data or Exp. data).

- Fusion: Concatenate the output of Branch A and Branch B after several hidden layers. Pass through 3 fully connected fusion layers with ReLU activation and Batch Normalization.

- Training Regime:

- Phase 1 (Pre-training): Train the network on the large MD dataset (medium-fidelity) using Mean Squared Error (MSE) loss. Freeze Branch B weights for the experimental fidelity indicator.

- Phase 2 (Fine-tuning): Unfreeze all weights. Train on the combined dataset, but apply a gradient scaling factor (e.g., 0.1) to the loss from the MD data, and full gradient to the experimental data. This prevents catastrophic forgetting while prioritizing HF data accuracy.

- Evaluation: Use leave-one-cluster-out cross-validation on the experimental set, clustering compounds by scaffold, so that each test fold contains only chemotypes the model has not seen during training.
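The leave-one-cluster-out evaluation can be sketched as a small split generator. It assumes each compound already carries a scaffold cluster label (for example, assigned beforehand with a cheminformatics toolkit); the labels below are placeholders.

```python
import numpy as np

def leave_one_cluster_out(cluster_ids):
    """Yield (held-out cluster, train indices, test indices), one fold per cluster."""
    cluster_ids = np.asarray(cluster_ids)
    for cluster in np.unique(cluster_ids):
        test_idx = np.flatnonzero(cluster_ids == cluster)
        train_idx = np.flatnonzero(cluster_ids != cluster)
        yield cluster.item(), train_idx, test_idx

# Hypothetical scaffold labels for six compounds.
scaffolds = ["s1", "s1", "s2", "s3", "s3", "s3"]
folds = list(leave_one_cluster_out(scaffolds))
```

Each fold trains on all other scaffolds and tests on the held-out one, so the reported error reflects extrapolation to a new chemotype rather than interpolation within a known series.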

Visualizations

Diagram Title: Multi-fidelity GP Training and Prediction Workflow

Diagram Title: Multi-fidelity Neural Network Architecture

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-fidelity Experiment | Example Vendor/Resource |
|---|---|---|
| ChEMBL Database | Source of high-fidelity experimental bioactivity data (IC50, Ki, etc.) for model training and validation. | EMBL-EBI |
| ZINC20 Library | Source of purchasable compound structures for generating low-fidelity virtual screening data. | UCSF |
| RDKit | Open-source cheminformatics toolkit for generating molecular descriptors, fingerprints, and standardizing structures across datasets. | RDKit.org |
| AutoDock Vina/GPU | Widely-used docking software for generating low-fidelity binding affinity estimates. | Scripps Research |
| GPyTorch / GPflow | Python libraries for flexible and scalable implementation of Gaussian Process models, including multi-fidelity variants. | PyTorch / TensorFlow |
| Schrödinger Suite | Commercial platform providing integrated tools for high-quality molecular docking (Glide) and MD simulation (Desmond) as medium-fidelity sources. | Schrödinger |
| OpenMM | Open-source, high-performance toolkit for molecular dynamics simulation, useful for generating custom medium-fidelity data. | Stanford University |
| PyMOL / Maestro | Visualization software for analyzing and interpreting the structural predictions from the multi-fidelity model. | Schrödinger |

Welcome to the Technical Support Center. This resource is designed to support researchers in implementing machine learning models for catalyst discovery, specifically under the constraint of limited experimental data, a core challenge in modern catalytic informatics.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My model trained on a small dataset (≤50 points) is severely overfitting. What are the primary regularization techniques I should prioritize?

A: With scarce data, preventing overfitting is critical. Prioritize these methods:

- Bayesian Regularization: Encode prior knowledge (e.g., physical bounds on parameters) as prior distributions over the model parameters, so that the small dataset only has to refine what the priors leave uncertain.

- Dropout: Randomly "drop" neurons during training to prevent co-adaptation, especially effective in neural networks.

- Early Stopping: Monitor validation loss during training and halt when performance plateaus or degrades.

- Feature Selection: Use domain knowledge to reduce input dimensionality (e.g., using only confirmed catalytic descriptors) before modeling.
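Of the techniques listed above, early stopping is the simplest to add to an existing training loop. The sketch below is framework-independent; the class name, the `patience` value, and the validation losses are illustrative assumptions.

```python
class EarlyStopping:
    """Stop when the validation loss has not improved for `patience` consecutive checks."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.epoch = -1
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one validation loss; return True when training should stop."""
        self.epoch += 1
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_loss, self.epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Hypothetical validation curve: improves, then plateaus.
stopper = EarlyStopping(patience=3)
val_losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73, 0.69]
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(loss):
        stopped_at = epoch
        break
```

With ≤50 points the validation split is itself tiny and noisy, so a larger `patience` or a cross-validated stopping epoch may be more stable than a single hold-out curve.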

Q2: Which model architectures are most robust for very small datasets in catalysis?

A: Simpler, uncertainty-aware models often outperform complex deep learning on tiny datasets.

- Gaussian Process Regression (GPR): Returns a predictive variance alongside each prediction, which is crucial for guiding future experiments.

- Bayesian Neural Networks (BNNs): Provide a probabilistic interpretation of weights, offering uncertainty estimates.

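- Random Forests (with heavy regularization): Use very shallow trees and a large minimum number of samples per leaf. Limiting the number of trees does not regularize the model; adding trees only reduces the variance of the ensemble average.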

Q3: How can I validate my model's performance reliably when I have so few data points?

A: Traditional train/test splits are unreliable. Use:

- Nested Cross-Validation: An outer loop for performance estimation and an inner loop for hyperparameter tuning. This avoids the optimistic bias that arises when the same folds are used for both tuning and evaluation.

- Leave-One-Out Cross-Validation (LOOCV): Suitable for datasets as small as 20-30 points. It requires one model fit per data point, which becomes computationally intensive only as the dataset grows.

- Bootstrapping: Repeated random sampling with replacement to create many training sets and assess stability.
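Nested cross-validation from the list above can be sketched in plain NumPy. Ridge regression is used here only as a stand-in model with a single hyperparameter; the data, the candidate `alphas`, and the fold counts are assumptions for illustration.

```python
import numpy as np

def ridge_fit_predict(X_tr, y_tr, X_te, alpha):
    """Closed-form ridge regression on centred data."""
    x_mean, y_mean = X_tr.mean(axis=0), y_tr.mean()
    Xc = X_tr - x_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X_tr.shape[1]), Xc.T @ (y_tr - y_mean))
    return (X_te - x_mean) @ w + y_mean

def cv_rmse(X, y, alpha, folds):
    """RMSE of out-of-fold predictions for a fixed set of folds."""
    sq_err = []
    for test in folds:
        train = np.setdiff1d(np.arange(len(y)), test)
        sq_err.append((ridge_fit_predict(X[train], y[train], X[test], alpha) - y[test]) ** 2)
    return float(np.sqrt(np.concatenate(sq_err).mean()))

def nested_cv(X, y, alphas, outer_k=5, inner_k=4, seed=0):
    rng = np.random.default_rng(seed)
    sq_err, chosen = [], []
    for test in np.array_split(rng.permutation(len(y)), outer_k):
        train = np.setdiff1d(np.arange(len(y)), test)
        # Inner loop: tune alpha using the outer-training data only.
        inner_folds = np.array_split(rng.permutation(len(train)), inner_k)
        best = min(alphas, key=lambda a: cv_rmse(X[train], y[train], a, inner_folds))
        chosen.append(best)
        # Outer loop: score the tuned model on data it has never seen.
        sq_err.append((ridge_fit_predict(X[train], y[train], X[test], best) - y[test]) ** 2)
    return float(np.sqrt(np.concatenate(sq_err).mean())), chosen

# Hypothetical dataset: 30 catalysts, 5 descriptors.
rng = np.random.default_rng(1)
X = rng.normal(size=(30, 5))
y = X @ np.array([1.5, -2.0, 0.0, 0.0, 0.5]) + 0.1 * rng.normal(size=30)
alphas = [0.01, 0.1, 1.0, 10.0, 100.0]
rmse, chosen_alphas = nested_cv(X, y, alphas)
```

The hyperparameter chosen can differ between outer folds; that spread is itself a useful indicator of how stable the tuning is on so few points.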

Q4: My active learning loop seems stuck, repeatedly selecting similar candidates. How can I improve exploration?

A: This is a common issue with pure uncertainty sampling. Modify your acquisition function:

- Use a hybrid query strategy: Combine uncertainty sampling with diversity sampling (e.g., maximize Euclidean distance in feature space from existing points).

- Implement Expected Improvement (EI): Balances probing uncertain regions and exploiting known high-performance areas.

- Add a random component: Select a small percentage (e.g., 10%) of queries randomly to explore uncharted space.
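The hybrid strategy and the random component can be combined in one greedy batch selector. The sketch below is one possible scoring rule, not a standard named algorithm: it adds normalized uncertainty to normalized distance from already-covered points, and the weights and example data are assumptions.

```python
import numpy as np

def select_batch(X_pool, sigma, X_labeled, batch_size=2,
                 diversity_weight=1.0, epsilon=0.1, rng=None):
    """Greedy batch selection: uncertainty + distance to covered points, with epsilon-random picks."""
    rng = np.random.default_rng() if rng is None else rng
    X_pool = np.asarray(X_pool, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    covered = np.asarray(X_labeled, dtype=float)
    available = np.ones(len(X_pool), dtype=bool)
    chosen = []
    for _ in range(batch_size):
        idx = np.flatnonzero(available)
        if rng.random() < epsilon:
            pick = int(rng.choice(idx))        # occasional purely random query
        else:
            # Distance from each remaining candidate to its nearest covered point.
            dist = np.linalg.norm(X_pool[idx, None, :] - covered[None, :, :], axis=-1).min(axis=1)
            score = sigma[idx] / (sigma.max() + 1e-12) + diversity_weight * dist / (dist.max() + 1e-12)
            pick = int(idx[np.argmax(score)])
        chosen.append(pick)
        available[pick] = False
        covered = np.vstack([covered, X_pool[pick]])   # treat the pick as covered
    return chosen

# Candidates 0 and 1 are near-duplicates with the highest uncertainty.
X_pool = [[1.0, 0.0], [1.05, 0.0], [-1.0, 0.0], [0.0, 0.1]]
sigma = [1.0, 0.98, 0.9, 0.3]
batch = select_batch(X_pool, sigma, X_labeled=[[0.0, 0.0]], epsilon=0.0)
```

Pure uncertainty sampling would pick candidates 0 and 1 here; the hybrid score picks one of them and then moves to the opposite side of feature space.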

Key Experimental Protocols

Protocol 1: Implementing a Gaussian Process Regression (GPR) Model with Limited Data

- Feature Engineering: Compose a feature vector for each catalyst (max 20-30 descriptors). Common descriptors include: adsorption energies, d-band center, coordination number, elemental properties.

- Data Normalization: Standardize all feature columns to have zero mean and unit variance.

- Kernel Selection: Initialize with a Matern kernel (e.g., Matern 5/2), which is less smooth than RBF and often better for physical data. Combine with a WhiteKernel to model noise.

- Model Training: Use a library like `scikit-learn` or `GPyTorch`. Optimize kernel hyperparameters by maximizing the log-marginal-likelihood.

- Prediction & Uncertainty: For a new candidate, the model outputs a mean predicted activity and a standard deviation (σ). The σ is your quantitative uncertainty.
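The protocol steps above map onto scikit-learn roughly as follows. This is a minimal sketch on synthetic data: the four descriptors, the target function, the initial kernel values, and the number of optimizer restarts are placeholders, not recommendations.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern, WhiteKernel
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for 30 catalysts described by 4 descriptors.
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 4))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] + 0.05 * rng.normal(size=30)

# Data normalization: zero mean, unit variance per feature column.
scaler = StandardScaler().fit(X)

# Kernel selection: Matern 5/2 with one length scale per descriptor, plus a noise term.
kernel = (ConstantKernel(1.0) * Matern(length_scale=np.ones(4), nu=2.5)
          + WhiteKernel(noise_level=0.1))

# Model training: hyperparameters are set by maximizing the log-marginal-likelihood.
gpr = GaussianProcessRegressor(kernel=kernel, normalize_y=True,
                               n_restarts_optimizer=5, random_state=0)
gpr.fit(scaler.transform(X), y)

# Prediction and uncertainty for new candidates.
X_new = rng.normal(size=(3, 4))
mean, std = gpr.predict(scaler.transform(X_new), return_std=True)
```

After fitting, `gpr.kernel_` shows the optimized hyperparameters; a very large learned length scale on a descriptor suggests that descriptor contributes little to the prediction.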

Protocol 2: Setting Up an Active Learning Loop for Catalyst Screening