Beyond Trial-and-Error: Optimizing Catalyst Selection with Expected Improvement in Bayesian Optimization

This article provides a comprehensive guide for researchers and drug development professionals on applying the Expected Improvement (EI) acquisition function to accelerate the discovery and optimization of catalytic systems.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying the Expected Improvement (EI) acquisition function to accelerate the discovery and optimization of catalytic systems. We explore the foundational mathematics of EI, detail its methodological implementation for high-throughput catalyst screening, address common pitfalls in real-world deployment, and compare its performance against other acquisition strategies. Throughout, we show how EI balances exploration and exploitation to navigate large chemical spaces efficiently, reducing the experimental cost and time of developing novel catalysts for pharmaceutical synthesis and other applications.

Expected Improvement 101: A Primer for Materials Scientists on Bayesian Search

Application Notes

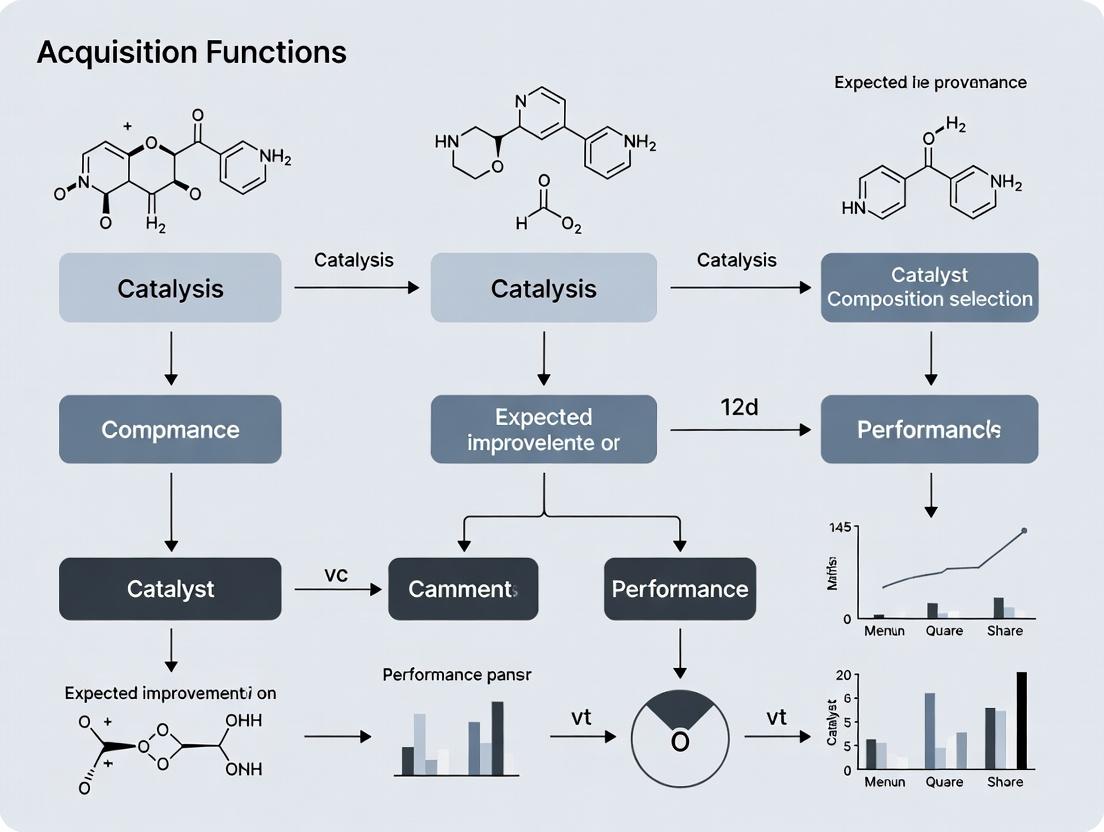

Within the broader thesis on acquisition functions for expected improvement in catalyst composition selection, these notes address the application of Bayesian optimization (BO) for high-throughput experimentation (HTE) in heterogeneous catalyst discovery. The primary challenge is the astronomical size of the compositional space when considering multi-metallic nanoparticles (e.g., quinary alloys) on diverse supports with variable promoters.

Key Application: Accelerating the discovery of novel bimetallic and trimetallic catalysts for the electrochemical oxygen reduction reaction (ORR), a critical process for fuel cells.

Quantitative Performance Data: Table 1: Comparison of Acquisition Functions for Catalyst Optimization

| Acquisition Function | Iterations to 90% Peak Activity | Avg. Improvement per Cycle (mA/cm²) | Exploitation vs. Exploration Balance |

|---|---|---|---|

| Expected Improvement (EI) | 14 | 1.23 | Balanced |

| Probability of Improvement (PI) | 22 | 0.87 | High Exploitation |

| Upper Confidence Bound (UCB) | 18 | 1.05 | High Exploration (tunable) |

| Random Sampling | 45+ | 0.45 | None |

Table 2: Top Catalyst Compositions Identified via BO-EI for ORR

| Catalyst Composition (Pt:X:Y) | Support | Mass Activity @ 0.9V (A/mgₚₜ) | Stability (% activity retained) |

|---|---|---|---|

| Pt₃Co | Carbon | 0.56 | 78% |

| Pt₃Ni | Nitrogen-doped Carbon | 0.71 | 65% |

| Pt₅₈Cu₁₅Ni₂₇ | Carbon | 0.82 | 72% |

| Pt₇₅Pd₁₅Fe₁₀ | Carbon | 0.48 | 92% |

Experimental Protocols

Protocol 1: High-Throughput Synthesis of Alloy Catalyst Libraries via Incipient Wetness Impregnation

Objective: To prepare a spatially addressed library of bimetallic catalysts on a multi-well substrate.

- Substrate Preparation: Load a 96-well ceramic plate with a pre-weighed mass (e.g., 10 mg) of high-surface-area carbon support in each well.

- Precursor Solution Preparation: Calculate the total metal loading (e.g., 2 wt%). Prepare stock solutions of hexachloroplatinic acid (H₂PtCl₆), cobalt nitrate (Co(NO₃)₂), nickel chloride (NiCl₂), etc., in dilute hydrochloric acid (0.1M).

- Automated Dispensing: Use a liquid handling robot to dispense precise volumetric mixtures of the precursor stock solutions into each well to achieve the desired compositional gradients (e.g., Pt₁₀₀₋ₓCoₓ, where x varies from 0 to 100 in 5% increments).

- Drying & Reduction: Dry the plate at 80°C for 2 hours, then transfer to a tubular furnace. Reduce the catalysts under a 5% H₂/Ar flow at 300°C for 3 hours with a ramp rate of 5°C/min.

- Passivation: Cool to room temperature under inert Ar and expose to a 1% O₂/Ar flow for 1 hour to passivate surfaces.
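
The automated dispensing step reduces to a short stoichiometry calculation. The sketch below is a minimal illustration, assuming 0.05 M stock solutions for both metals (a hypothetical value that the protocol does not specify) and reading the 2 wt% loading as metal mass over total catalyst mass. The names `precursor_volumes_uL` and `library` are illustrative.

```python
# Molar masses in g/mol (standard atomic weights).
MOLAR_MASS = {"Pt": 195.084, "Co": 58.933}


def precursor_volumes_uL(x_co_at_pct, support_mg=10.0, loading_wt_frac=0.02,
                         stock_molar=None):
    """Return stock-solution volumes (µL) for one Pt(100-x)Co(x) well.

    loading_wt_frac is metal mass / (metal + support) mass.
    stock_molar maps metal -> stock concentration in mol/L (assumed values).
    """
    if stock_molar is None:
        stock_molar = {"Pt": 0.05, "Co": 0.05}  # hypothetical stock concentrations
    # Metal mass that yields the requested weight fraction on this support mass.
    metal_mg = support_mg * loading_wt_frac / (1.0 - loading_wt_frac)
    atom_frac = {"Pt": 1.0 - x_co_at_pct / 100.0, "Co": x_co_at_pct / 100.0}
    # The mean molar mass converts total metal mass to total moles.
    mean_molar_mass = sum(atom_frac[m] * MOLAR_MASS[m] for m in atom_frac)
    total_mmol = metal_mg / mean_molar_mass  # mg / (g/mol) = mmol
    # mmol / (mol/L) = mL, then x1000 for µL.
    return {m: 1000.0 * total_mmol * atom_frac[m] / stock_molar[m]
            for m in atom_frac}


# Composition gradient from the protocol: x = 0, 5, ..., 100 at% Co.
library = {x: precursor_volumes_uL(x) for x in range(0, 101, 5)}
```

Each entry of `library` could be exported as a dispense list for the liquid handler. Incipient wetness also requires that the total dispensed volume match the pore volume of the support, which this sketch does not check.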

Protocol 2: Parallel Electrochemical Screening for ORR Activity

Objective: To measure the electrochemical activity of catalyst libraries in parallel.

- Ink Formulation: To each well, add 1 mL of a solution containing 0.5% Nafion and isopropanol. Sonicate the entire plate for 30 minutes to form homogeneous inks.

- Working Electrode Preparation: Using a microarrayer, spot 2 µL of each catalyst ink onto a polished glassy carbon electrode array (16-well format).

- Electrochemical Cell Setup: Employ a multi-channel potentiostat. Use a common Pt mesh counter electrode and a common reversible hydrogen electrode (RHE) as the reference, in O₂-saturated 0.1 M HClO₄ electrolyte.

- Activity Measurement: For each channel, perform cyclic voltammetry (CV) from 0.05 to 1.0 V vs. RHE at 50 mV/s to clean the surface. Then, perform linear sweep voltammetry (LSV) from 1.0 to 0.05 V vs. RHE at 10 mV/s and 1600 RPM. Record the kinetic current at 0.9 V vs. RHE.

- Data Processing: Normalize kinetic currents to the mass of precious metal (Pt) loaded to calculate mass activity (A/mgₚₜ).
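
The normalization in the final step is simple arithmetic. The sketch below assumes the kinetic current is obtained from the measured current through the Koutecky-Levich mass-transport correction, which the protocol does not state explicitly, and it works with current magnitudes. The example numbers are invented for illustration only.

```python
def kinetic_current_mA(i_measured_mA, i_limiting_mA):
    """Koutecky-Levich correction: 1/i = 1/i_k + 1/i_lim, solved for i_k.

    Both arguments are current magnitudes, with i_measured < i_limiting.
    """
    if not 0 < i_measured_mA < i_limiting_mA:
        raise ValueError("need 0 < measured current < limiting current")
    return i_measured_mA * i_limiting_mA / (i_limiting_mA - i_measured_mA)


def mass_activity_A_per_mg(i_k_mA, pt_mass_ug):
    """Kinetic current divided by the Pt mass on the electrode, in A/mg_Pt."""
    return (i_k_mA * 1e-3) / (pt_mass_ug * 1e-3)


# Illustrative values: 0.50 mA at 0.9 V, 1.10 mA limiting current, 1.5 µg Pt.
i_k = kinetic_current_mA(0.50, 1.10)
activity = mass_activity_A_per_mg(i_k, 1.5)
```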

Visualizations

Diagram 1: Bayesian Optimization Loop for Catalyst Discovery

Diagram 2: 4e⁻ Oxygen Reduction Reaction (ORR) Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for High-Throughput Catalyst Discovery

| Item/Reagent | Function & Application Notes |

|---|---|

| Multi-Well Ceramic/Glass Plates | Inert substrate for parallel synthesis of catalyst libraries; enables high-temperature treatments. |

| Liquid Handling Robot (e.g., Positive Displacement) | Enables precise, reproducible dispensing of precursor solutions for combinatorial synthesis. |

| Metal Salt Precursors (e.g., H₂PtCl₆, Ni(NO₃)₂) | Source of active metal components. Must be high-purity and soluble for accurate formulation. |

| High-Surface-Area Carbon Supports (e.g., Vulcan XC-72) | Conductive support material to maximize catalyst dispersion and electronic conductivity. |

| Multi-Channel Potentiostat/Galvanostat | Allows simultaneous electrochemical characterization of multiple catalyst samples. |

| Glassy Carbon Electrode (GCE) Arrays | Provides standardized, reusable substrates for drop-casting catalyst inks for screening. |

| Rotating Disk Electrode (RDE) Setups | Controls mass transport of O₂ to the catalyst surface, allowing measurement of intrinsic activity. |

| Nafion Perfluorinated Resin Solution | Binder for catalyst inks; provides proton conductivity and adhesion to the electrode. |

| High-Purity Gases (O₂, N₂, H₂/Ar mix) | For electrolyte saturation (O₂), inert atmospheres (N₂/Ar), and catalyst reduction (H₂/Ar). |

This Application Note details the methodology of Bayesian Optimization (BO) as applied to the research thesis: "Advancing Acquisition Functions for Expected Improvement in Catalyst Composition Selection for Drug Development." The selection of optimal heterogeneous catalyst compositions for key pharmaceutical synthesis steps is a high-dimensional, expensive, and data-scarce challenge. BO provides a principled framework to navigate this complex design space efficiently, minimizing the number of required experimental trials by iteratively suggesting the most promising compositions based on probabilistic models and strategic acquisition functions.

Foundational Concepts: Gaussian Processes (GPs)

A Gaussian Process is a non-parametric probabilistic model used as a surrogate for the unknown objective function (e.g., catalyst yield or selectivity). It defines a distribution over functions and is fully specified by a mean function m(x) and a covariance (kernel) function k(x, x').

Key Kernel Functions:

| Kernel Name | Mathematical Form | Hyperparameters | Best For |

|---|---|---|---|

| Radial Basis (RBF) | $k(x_i, x_j) = \sigma_f^2 \exp(-\frac{r^2}{2l^2})$ | Length-scale (l), Signal variance ($\sigma_f^2$) | Smooth, continuous functions. |

| Matérn 5/2 | $k(x_i, x_j) = \sigma_f^2 (1 + \frac{\sqrt{5}r}{l} + \frac{5r^2}{3l^2}) \exp(-\frac{\sqrt{5}r}{l})$ | Length-scale (l), Signal variance ($\sigma_f^2$) | Less smooth than RBF; suits rougher response surfaces. |

| Constant | $k(x_i, x_j) = \sigma_c^2$ | Constant ($\sigma_c^2$) | Capturing a constant bias. |

Where $r = \|x_i - x_j\|$
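
The two distance-based kernels in the table can be written in a few lines of NumPy. This is a minimal sketch rather than a library implementation; the function names and default hyperparameters are illustrative choices.

```python
import numpy as np


def pairwise_distance(A, B):
    """Euclidean distance r between every row of A and every row of B."""
    A, B = np.atleast_2d(A), np.atleast_2d(B)
    return np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1))


def rbf_kernel(A, B, length=1.0, sigma_f=1.0):
    """sigma_f^2 * exp(-r^2 / (2 l^2))."""
    r = pairwise_distance(A, B)
    return sigma_f**2 * np.exp(-0.5 * (r / length) ** 2)


def matern52_kernel(A, B, length=1.0, sigma_f=1.0):
    """sigma_f^2 * (1 + sqrt(5) r / l + 5 r^2 / (3 l^2)) * exp(-sqrt(5) r / l)."""
    s = np.sqrt(5.0) * pairwise_distance(A, B) / length
    return sigma_f**2 * (1.0 + s + s**2 / 3.0) * np.exp(-s)


# Two hypothetical compositions at unit distance in scaled coordinates.
X_demo = np.array([[0.0, 0.0], [1.0, 0.0]])
K_rbf = rbf_kernel(X_demo, X_demo)
K_matern = matern52_kernel(X_demo, X_demo)
```

Both matrices have ones on the diagonal (the signal variance) and a smaller off-diagonal entry that shrinks as the length-scale decreases.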

GP Prior to Posterior Update Workflow:

Title: GP Posterior Formation from Prior and Data
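
The prior-to-posterior update can be sketched directly from the standard GP regression equations. The example below assumes a zero-mean prior, a Matérn 5/2 kernel with fixed (not optimized) hyperparameters, and three invented observations; all names and values are illustrative.

```python
import numpy as np


def matern52(A, B, length=0.3, sigma_f=1.0):
    """Matérn 5/2 covariance between the rows of A and the rows of B."""
    r = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1))
    s = np.sqrt(5.0) * r / length
    return sigma_f**2 * (1.0 + s + s**2 / 3.0) * np.exp(-s)


def gp_posterior(X, y, X_new, noise_var=1e-6, length=0.3, sigma_f=1.0):
    """Posterior mean and standard deviation of a zero-mean GP at X_new."""
    K = matern52(X, X, length, sigma_f) + noise_var * np.eye(len(X))
    K_s = matern52(X, X_new, length, sigma_f)
    L = np.linalg.cholesky(K)                       # K = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    v = np.linalg.solve(L, K_s)
    mean = K_s.T @ alpha                            # k_*^T K^{-1} y
    var = sigma_f**2 - (v**2).sum(axis=0)           # k_** - k_*^T K^{-1} k_*
    return mean, np.sqrt(np.maximum(var, 0.0))


# Three hypothetical observations: scaled composition -> scaled yield.
X = np.array([[0.1], [0.5], [0.9]])
y = np.array([0.2, 0.8, 0.4])
mean, std = gp_posterior(X, y, np.array([[0.5], [0.7], [5.0]]))
```

At an observed composition the posterior collapses onto the data (small `std`), while far from any observation it reverts to the prior (mean near zero, `std` near `sigma_f`).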

Core Component: Acquisition Functions

Acquisition functions balance exploration and exploitation to propose the next experiment. They use the GP posterior (mean $\mu(x)$ and variance $\sigma^2(x)$) to quantify the utility of evaluating a candidate point.

Quantitative Comparison of Common Acquisition Functions:

| Function | Formula | Key Characteristic | Tunable Parameter |

|---|---|---|---|

| Probability of Improvement (PI) | $\alpha_{PI}(x) = \Phi(\frac{\mu(x) - f(x^+) - \xi}{\sigma(x)})$ | Exploitative; seeks immediate gain. | $\xi$ (jitter) |

| Expected Improvement (EI) | $\alpha_{EI}(x) = (\mu(x) - f(x^+) - \xi)\Phi(Z) + \sigma(x)\phi(Z)$ where $Z = \frac{\mu(x) - f(x^+) - \xi}{\sigma(x)}$ | Balances exploration/exploitation. | $\xi$ (jitter) |

| Upper Confidence Bound (UCB) | $\alpha_{UCB}(x) = \mu(x) + \kappa \sigma(x)$ | Explicit balance parameter. | $\kappa$ |

| Predictive Entropy Search | Complex, based on information gain. | Information-theoretic; global search. | -- |

Where $\Phi$ and $\phi$ are the CDF and PDF of the standard normal distribution, $f(x^+)$ is the best observed value, and $\xi$ and $\kappa$ are tunable parameters.
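
The three closed-form acquisition functions in the table translate almost line for line into code. The following is a minimal scalar sketch using only the standard library; the default values of `xi` and `kappa` are illustrative, and returning zero when `sigma` is zero is a common convention adopted here rather than something the table specifies.

```python
from math import erf, exp, pi, sqrt


def norm_cdf(z):
    """Standard normal CDF, Phi(z)."""
    return 0.5 * (1.0 + erf(z / sqrt(2.0)))


def norm_pdf(z):
    """Standard normal PDF, phi(z)."""
    return exp(-0.5 * z * z) / sqrt(2.0 * pi)


def probability_of_improvement(mu, sigma, f_best, xi=0.01):
    if sigma <= 0.0:
        return 0.0  # convention: no uncertainty, no credited improvement
    return norm_cdf((mu - f_best - xi) / sigma)


def expected_improvement(mu, sigma, f_best, xi=0.01):
    if sigma <= 0.0:
        return 0.0  # convention: no uncertainty, no credited improvement
    gap = mu - f_best - xi
    z = gap / sigma
    return gap * norm_cdf(z) + sigma * norm_pdf(z)


def upper_confidence_bound(mu, sigma, kappa=2.0):
    return mu + kappa * sigma


# Hypothetical candidate: predicted yield 0.78 +/- 0.10, best observed 0.80.
scores = {
    "PI": probability_of_improvement(0.78, 0.10, 0.80),
    "EI": expected_improvement(0.78, 0.10, 0.80),
    "UCB": upper_confidence_bound(0.78, 0.10),
}
```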

Acquisition Function Decision Logic:

Title: Selecting Next Experiment via Acquisition Function Maximization

Detailed Experimental Protocol: BO for Catalyst Selection

Protocol 1: High-Throughput Initialization and Iterative BO Loop

Objective: To identify a catalyst composition (e.g., Pd-Au-Ce/ZrO2 ratios, dopant level) maximizing yield for a Suzuki-Miyaura coupling relevant to API synthesis.

Materials & Reagents: See "The Scientist's Toolkit" below.

Procedure:

- Design Space Definition: Define bounds for each compositional element (e.g., 0-5 wt% Pd, 0-3 wt% Au, 0-10 wt% Ce, balance ZrO2). Include process variables (calcination temperature: 300-600°C).

- Initial Design: Perform a space-filling design (e.g., Latin Hypercube Sampling) for n=10 initial catalyst formulations. Synthesize and test these catalysts in the target reaction (see Protocol 2).

- BO Loop: a. Surrogate Modeling: Construct a GP model using the accumulated data (composition -> yield). Use a Matérn 5/2 kernel. b. Acquisition Optimization: Maximize the Expected Improvement (EI) acquisition function across the defined compositional space using a global search strategy (e.g., DIRECT, or L-BFGS-B restarted from many random starting points, since L-BFGS-B on its own is a local optimizer). c. Candidate Selection: The point maximizing EI is selected as the next catalyst composition to test. d. Experimental Evaluation: Synthesize and test the proposed catalyst (Protocol 2). e. Data Augmentation: Add the new (composition, yield) data pair to the dataset. f. Iteration: Repeat steps a-e for a predetermined budget (e.g., 30 total experiments) or until a performance threshold is met.
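
The procedure above can be sketched end to end in NumPy. This is an illustration under several assumptions, not the protocol's implementation: `run_experiment` is a hypothetical smooth function standing in for synthesis and HPLC yield, GP hyperparameters are fixed rather than fitted, and EI is maximized by scoring random candidates instead of using DIRECT or multi-start L-BFGS-B.

```python
import math
import numpy as np

# Pd, Au, Ce loadings (wt%) and calcination temperature (°C) from the design space.
BOUNDS = np.array([[0.0, 5.0], [0.0, 3.0], [0.0, 10.0], [300.0, 600.0]])


def latin_hypercube(n, d, rng):
    """One sample per stratum in every dimension, on the unit cube."""
    strata = np.array([rng.permutation(n) for _ in range(d)]).T
    return (strata + rng.random((n, d))) / n


def matern52(A, B, length=0.3):
    r = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1))
    s = math.sqrt(5.0) * r / length
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)


def gp_predict(X, z, X_new, noise_var=1e-4):
    """Zero-mean, unit-variance GP posterior (z must be standardized)."""
    K = matern52(X, X) + noise_var * np.eye(len(X))
    K_s = matern52(X, X_new)
    mean = K_s.T @ np.linalg.solve(K, z)
    var = 1.0 - np.einsum("ij,ij->j", K_s, np.linalg.solve(K, K_s))
    return mean, np.sqrt(np.maximum(var, 1e-12))


def expected_improvement(mean, std, f_best, xi=0.01):
    gap = mean - f_best - xi
    z = gap / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return gap * cdf + std * pdf


def run_experiment(u):
    """Hypothetical stand-in for Protocol 2: yield as a function of scaled inputs."""
    target = np.array([0.6, 0.3, 0.5, 0.7])
    return float(np.exp(-4.0 * ((u - target) ** 2).sum()))


def bo_loop(n_init=10, budget=30, n_candidates=2000, seed=0):
    rng = np.random.default_rng(seed)
    U = latin_hypercube(n_init, len(BOUNDS), rng)       # initial design
    y = np.array([run_experiment(u) for u in U])
    while len(y) < budget:
        z = (y - y.mean()) / (y.std() + 1e-12)          # standardize yields
        candidates = rng.random((n_candidates, len(BOUNDS)))
        mean, std = gp_predict(U, z, candidates)
        ei = expected_improvement(mean, std, z.max())
        u_next = candidates[np.argmax(ei)]              # EI maximizer
        U = np.vstack([U, u_next])
        y = np.append(y, run_experiment(u_next))
    best = int(np.argmax(y))
    x_best = BOUNDS[:, 0] + U[best] * (BOUNDS[:, 1] - BOUNDS[:, 0])
    return x_best, y[best], y


x_best, y_best, history = bo_loop()
```

In a real campaign, `run_experiment` would be replaced by a queue to the synthesis and testing workflow, and a library such as scikit-optimize or BoTorch would handle hyperparameter fitting and acquisition optimization.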

Protocol 2: Standardized Catalyst Synthesis & Testing (Key Cited Experiment)

Objective: To evaluate the performance of a single catalyst composition proposed by the BO loop.

Procedure:

- Wet Impregnation Synthesis:

- Calculate required volumes of precursor solutions (e.g., Pd(NO3)2, HAuCl4, Ce(NO3)3) to achieve target loadings on ZrO2 support.

- Add the support to the mixed precursor solution. Stir for 2 hours at room temperature.

- Dry the slurry overnight at 120°C.

- Calcine the dried powder in a muffle furnace at the specified temperature (from design space) for 4 hours in static air.

- Catalytic Testing (Suzuki-Miyaura Coupling):

- Charge a parallel reaction vessel with aryl halide (1.0 mmol), phenylboronic acid (1.5 mmol), base (K2CO3, 2.0 mmol), and catalyst (50 mg).

- Add solvent (water:ethanol 3:1, 5 mL).

- Heat the reaction block to 80°C with stirring for 2 hours.

- Cool, filter to remove catalyst, and analyze reaction mixture via quantitative HPLC against a calibrated standard curve to determine yield.

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Catalyst BO Research | Example Product/Specification |

|---|---|---|

| Metal Precursors | Source of active catalytic components for precise impregnation. | Pd(NO3)2•xH2O (99.9%), HAuCl4•3H2O (ACS grade), Ce(NO3)3•6H2O (99%). |

| High-Surface Area Support | Provides a stable, dispersive matrix for active metals. | ZrO2 powder, BET surface area >80 m²/g, pore volume >0.3 cm³/g. |

| High-Throughput Reactor | Enables parallel synthesis or testing of multiple catalyst candidates. | 16-parallel glass reactor block with individual temperature control. |

| Quantitative HPLC | Essential for accurate, high-throughput yield determination of reaction products. | System with C18 column, PDA detector, and autosampler. |

| BO Software Library | Implements GP regression and acquisition function optimization. | Python libraries: scikit-optimize, BoTorch, or GPyOpt. |

Advanced Considerations for Drug Development

In pharmaceutical applications, BO can be extended to multi-objective optimization (e.g., maximizing yield while minimizing costly metal loading or impurity formation). Adaptive acquisition functions, which dynamically adjust their balance parameter (e.g., $\kappa$ in UCB) based on iteration progress, are a key focus of the broader thesis. This aims to accelerate the discovery of sustainable, cost-effective catalysts for green pharmaceutical manufacturing.

Within the broader thesis on acquisition function-driven catalyst composition selection for drug development, Expected Improvement (EI) serves as a critical Bayesian optimization component. It formalizes the search for optimal catalyst formulations by balancing exploration of uncertain regions and exploitation of known high-performance areas. This protocol details its mathematical formulation, application workflow, and implementation for high-throughput experimentation.

Core Mathematical Formulation

The Expected Improvement acquisition function quantifies the potential gain over the current best-observed function value, $f^*$, at a candidate point $\mathbf{x}$, given a Gaussian process (GP) surrogate model providing a predictive mean $\mu(\mathbf{x})$ and standard deviation $\sigma(\mathbf{x})$.

The improvement is defined as:

$$I(\mathbf{x}) = \max(0, f(\mathbf{x}) - f^*)$$

Since $f(\mathbf{x})$ is modeled as a Gaussian distribution $\mathcal{N}(\mu(\mathbf{x}), \sigma^2(\mathbf{x}))$, the expected value of this improvement is:

$$EI(\mathbf{x}) = \mathbb{E}[I(\mathbf{x})] = \begin{cases} (\mu(\mathbf{x}) - f^*)\Phi(Z) + \sigma(\mathbf{x})\phi(Z) & \text{if } \sigma(\mathbf{x}) > 0 \\ 0 & \text{if } \sigma(\mathbf{x}) = 0 \end{cases}$$

where:

$$Z = \frac{\mu(\mathbf{x}) - f^*}{\sigma(\mathbf{x})}$$

Here, $\Phi(\cdot)$ and $\phi(\cdot)$ are the cumulative distribution function (CDF) and probability density function (PDF) of the standard normal distribution, respectively.

Table 1: EI Equation Components and Interpretation

| Symbol | Term | Role in Catalyst Selection |

|---|---|---|

| $\mu(\mathbf{x})$ | Predictive Mean | Estimated performance (e.g., yield, selectivity) of catalyst composition $\mathbf{x}$. |

| $\sigma(\mathbf{x})$ | Predictive Uncertainty | Uncertainty in the performance estimate at $\mathbf{x}$. |

| $f^*$ | Incumbent Best | Best currently observed performance from prior experiments. |

| $Z$ | Standardized Improvement | Measures how many standard deviations the mean is above $f^*$. |

| $\Phi(Z)$ | CDF term | Exploitation weight: probability of improvement. |

| $\sigma(\mathbf{x})\phi(Z)$ | PDF term | Exploration weight: rewards high uncertainty. |
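
A small numerical example makes the roles of the two terms concrete. The sketch below splits EI into its mean-driven and uncertainty-driven parts for two invented candidates: A has a slightly better predicted mean and low uncertainty, B has a worse mean and high uncertainty. The function name and all values are illustrative.

```python
from math import erf, exp, pi, sqrt


def ei_terms(mu, sigma, f_best):
    """Split EI into its mean-driven and uncertainty-driven terms (sigma > 0)."""
    z = (mu - f_best) / sigma
    cdf = 0.5 * (1.0 + erf(z / sqrt(2.0)))
    pdf = exp(-0.5 * z * z) / sqrt(2.0 * pi)
    mean_term = (mu - f_best) * cdf        # (mu - f*) * Phi(Z)
    uncertainty_term = sigma * pdf         # sigma * phi(Z)
    return mean_term, uncertainty_term, mean_term + uncertainty_term


f_best = 0.80
for name, mu, sigma in [("A", 0.85, 0.02), ("B", 0.75, 0.15)]:
    mean_term, unc_term, ei = ei_terms(mu, sigma, f_best)
    print(f"{name}: mean term {mean_term:+.4f}, "
          f"uncertainty term {unc_term:+.4f}, EI {ei:.4f}")
```

For candidate A nearly all of the EI comes from the mean term. For candidate B the mean term is negative, and the positive EI comes entirely from the uncertainty term, which is how EI credits exploration.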

EI-Driven Catalyst Selection Workflow

A standardized protocol for applying EI in high-throughput catalyst screening.

Protocol 3.1: Iterative Optimization Cycle Using EI

Objective: Identify the catalyst composition maximizing reaction yield within a defined chemical space.

Materials: High-throughput robotic synthesis platform, parallel pressure reactors, GC-MS/HPLC for analysis, computational server for GP modeling.

Procedure:

- Initial Design: Perform a space-filling design (e.g., Latin Hypercube) of 20-30 catalyst compositions varying metal ratios, ligand structures, and support types. Synthesize and test.

- Surrogate Modeling: Fit a Gaussian Process model with a Matérn kernel to the experimental data (yield vs. composition descriptors).

- EI Calculation & Optimization: a. Compute $f^*$, the maximum yield observed so far. b. For many candidate points $\mathbf{x}$ in the composition space, calculate $\mu(\mathbf{x})$ and $\sigma(\mathbf{x})$ from the GP. c. Compute $EI(\mathbf{x})$ using the formula under Core Mathematical Formulation. d. Rank the candidates by EI; the top-ranked point is $\mathbf{x}_{next} = \arg\max_{\mathbf{x}} EI(\mathbf{x})$.

- Experimental Validation: Synthesize and test the 3-5 highest-ranked proposals from Step 3d.

- Update & Iterate: Append new results to the dataset. Return to Step 2 until performance plateaus or budget is exhausted. Validation: Compare final optimized catalyst performance against a baseline identified through traditional one-variable-at-a-time (OVAT) screening.
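
When several proposals are tested per cycle, taking the highest EI values directly tends to return near-identical compositions clustered around one peak. The sketch below shows one simple workaround, a greedy selection with a minimum spacing between chosen points. This spacing rule is an assumption added for illustration and is not part of the protocol; `select_batch` and `min_dist` are hypothetical names.

```python
import numpy as np


def select_batch(candidates, ei, k=4, min_dist=0.1):
    """Greedily pick up to k high-EI candidates at least min_dist apart.

    candidates: (n, d) array of scaled compositions; ei: length-n EI values.
    Returns the chosen row indices in order of decreasing EI.
    """
    chosen = []
    for i in np.argsort(ei)[::-1]:                      # best EI first
        far_enough = all(
            np.linalg.norm(candidates[i] - candidates[j]) >= min_dist
            for j in chosen
        )
        if far_enough:
            chosen.append(int(i))
        if len(chosen) == k:
            break
    return chosen


# Invented one-dimensional example: the two best points are near-duplicates.
demo_candidates = np.array([[0.50], [0.51], [0.90], [0.10]])
demo_ei = np.array([0.9, 0.8, 0.5, 0.2])
batch = select_batch(demo_candidates, demo_ei, k=3)
```

Batch-aware acquisition functions (for example the q-EI variants available in BoTorch) address the same issue more rigorously.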

Diagram 1: EI-Driven Catalyst Optimization Loop

Comparative Analysis of Acquisition Functions

Table 2: Quantitative Comparison of Key Acquisition Functions

| Function | Formula | Exploration vs. Exploitation | Typical Performance in Catalyst Search |

|---|---|---|---|

| Expected Improvement (EI) | $(\mu - f^*)\Phi(Z) + \sigma\phi(Z)$ | Balanced adaptive trade-off. | Consistently strong; typically approaches the optimum in few iterations. |

| Upper Confidence Bound (UCB) | $\mu(\mathbf{x}) + \kappa \sigma(\mathbf{x})$ | Explicitly tuned by $\kappa$. | Good but sensitive to $\kappa$ choice; can over-explore. |

| Probability of Improvement (PI) | $\Phi(Z)$ | Strong exploitation bias. | Faster initial gains, but often gets stuck in local optima. |

| Thompson Sampling | Draw a sample function from the GP posterior and maximize it. | Stochastic, inherent balance. | Very effective in practice; requires sampling. |

Performance data synthesized from benchmark studies in materials informatics (2023-2024).

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for EI-Guided Catalyst Experimentation

| Item / Reagent | Function in Protocol |

|---|---|

| Precursor Salt Libraries (e.g., metal acetates, nitrates) | Provides modular building blocks for high-throughput synthesis of varied catalyst compositions. |

| Ligand Arrays (e.g., phosphine, amine, carbene libraries) | Systematically modulates electronic and steric properties of the catalytic center. |

| Porous Support Particles (e.g., Al2O3, SiO2, C, MOFs) | Standardized supports for immobilizing active components, testing dispersion effects. |

| Internal Standard Kits (for GC-MS/HPLC) | Enables accurate, reproducible quantification of reaction yield and selectivity in parallel. |

| Bayesian Optimization Software (e.g., BoTorch, GPyOpt, scikit-optimize) | Provides computational backend for GP modeling and EI calculation/optimization. |

| HTE Reactor Blocks (e.g., 48- or 96-well plates with pressure/temperature control) | Enables parallel synthesis and testing under consistent, automated conditions. |

Diagram 2: EI Calculation Logical Flow

Why EI for Catalysis? Addressing the Exploration-Exploitation Dilemma

In catalyst composition research, the Exploration-Exploitation Dilemma is central: should one explore new, uncertain regions of the compositional space or exploit known high-performing regions? Expected Improvement (EI), a prominent Bayesian optimization acquisition function, provides a mathematically principled balance. This Application Note details protocols for employing EI to accelerate the discovery of novel heterogeneous catalysts, framed within a thesis on advanced acquisition functions for materials selection.

Table 1: Comparison of Key Acquisition Functions for Catalyst Search

| Acquisition Function | Primary Objective | Risk Preference | Best for Phase |

|---|---|---|---|

| Expected Improvement (EI) | Maximizes the expected magnitude of improvement over the best-known value | Balanced | General-purpose optimization |

| Probability of Improvement (PI) | Maximizes chance of improvement, regardless of magnitude | Risk-averse (exploitative) | Late-stage refinement near a known optimum |

| Upper Confidence Bound (UCB) | Maximizes an optimistic estimate, $\mu + \kappa\sigma$ | Tunable (via κ parameter) | Systematic exploration |

| Entropy Search (ES) | Maximizes information gain about optimum | Information-driven | Global mapping |

Table 2: Illustrative EI Performance Metrics in Catalysis Studies

| Study Focus (Catalyst System) | Search Dimension | Initial Data Points | EI-Guided Experiments to Find Optimum | Performance Gain Over Baseline |

|---|---|---|---|---|

| Pt-Pd-Au Ternary Nanoparticles | 3 (compositions) | 20 | 15 | 2.1x activity |

| Mixed Metal Oxide (5 elements) | 5 | 30 | 22 | 3.4x selectivity |

| Zeolite-supported Co/Mo | 4 (Co/Mo ratio, temp, pressure) | 15 | 18 | 1.8x yield |

Experimental Protocols

Protocol 1: Setting Up the Bayesian Optimization Loop for Catalyst Screening

Objective: To iteratively select catalyst compositions for testing using an EI-driven workflow.

Materials & Computational Setup:

- High-throughput catalyst synthesis platform (e.g., automated liquid handler, sputter system).

- Characterization suite (e.g., XRD, XPS, automated reaction screening).

- Bayesian Optimization software (e.g., GPyTorch, Scikit-optimize, Ax Platform).

- Defined compositional search space (ranges for each element or synthesis parameter).

Procedure:

- Initial Design: Perform a space-filling initial design (e.g., Latin Hypercube Sampling) to synthesize and test N initial catalyst candidates (typically N = 10-30).

- Model Training: After each batch of experiments, train a Gaussian Process (GP) surrogate model. The model uses compositional features as input and maps them to the target performance metric (e.g., turnover frequency, yield).

- EI Calculation: Compute the EI acquisition function across a dense grid of candidate compositions. EI is defined as EI(x) = E[max(f(x) - f(x*), 0)], where f(x) is the predicted performance at point x and f(x*) is the current best observed performance.

- Candidate Selection: Select the next batch of compositions where EI is maximized.

- Iteration: Synthesize, test, and characterize the selected candidates. Append the new data to the training set.

- Termination: Repeat steps 2-5 until a performance threshold is met or the experimental budget is exhausted.
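
For compositional variables, the "dense grid" in the EI step has to respect the constraint that fractions sum to 100%. The sketch below enumerates such a grid for a ternary system and picks the point with the largest acquisition value. The 5 at% step and the function names are illustrative assumptions, and the acquisition callable is a placeholder for EI computed from a fitted GP.

```python
from itertools import product


def composition_grid(n_elements=3, step_pct=5):
    """All grid compositions whose atomic percentages sum to exactly 100."""
    if 100 % step_pct:
        raise ValueError("step_pct must divide 100")
    levels = range(0, 101, step_pct)
    return [c for c in product(levels, repeat=n_elements) if sum(c) == 100]


def best_on_grid(grid, acquisition):
    """Return the grid point with the largest acquisition value."""
    return max(grid, key=acquisition)


grid = composition_grid()  # e.g. (Pt, Pd, Au) in at%

# Placeholder acquisition for illustration; in practice this would be EI(x).
proposal = best_on_grid(grid, lambda c: -abs(c[0] - 60) - abs(c[1] - 30))
```

Exhaustive enumeration is practical for three or four elements at this resolution; for higher-dimensional spaces the grid grows quickly and a sampled candidate set or a continuous optimizer becomes preferable.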

Protocol 2: High-Throughput Synthesis & Screening for Validation

Objective: To experimentally validate the top candidate catalysts identified by the EI-guided search.

Synthesis Workflow (for supported metal catalysts):

- Precursor Deposition: Using an automated dispenser, deposit aqueous metal precursor solutions onto a high-surface-area support (e.g., Al2O3, SiO2) arrayed in a well plate.

- Drying & Calcination: Dry the plate at 120°C for 2 hours, followed by calcination in a muffle furnace under static air at 500°C for 4 hours.

- Reduction: Activate the catalysts in a parallel flow reactor under H2/N2 (5%/95%) at 300°C for 2 hours.

Performance Testing:

- Transfer catalyst samples to a parallel, fixed-bed microreactor system.

- Conduct catalytic testing (e.g., CO oxidation at 250°C, 1 atm) with online GC analysis.

- Record key metrics: Conversion (%), Selectivity (%), and Turnover Frequency (TOF, s⁻¹).

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for EI-Guided Catalyst Discovery

| Item | Function in Workflow | Example/Supplier Note |

|---|---|---|

| Multi-Element Metal Precursors | Enables precise composition control in high-throughput synthesis. | e.g., Tetraamminepalladium(II) nitrate, Chloroplatinic acid, Gold(III) chloride. |

| High-Throughput Support Wafers | Provides uniform, arrayed substrate for catalyst library. | e.g., Alumina-coated quartz wafers (5mm x 5mm wells). |

| Automated Liquid Handling System | Ensures reproducible, micro-scale dispensing of precursor solutions. | e.g., Hamilton Microlab STAR. |

| Parallel Flow Reactor System | Allows simultaneous activity/selectivity testing of multiple catalysts. | e.g., Symyx Technologies / Freeslate screening tools. |

| Gaussian Process Modeling Software | Core engine for building surrogate models and calculating EI. | e.g., GPyTorch (Python library). |

| Bayesian Optimization Platform | Integrates modeling, acquisition function, and experiment management. | e.g., Meta's Ax Platform. |

Visualizations

Diagram Title: EI-Guided Catalyst Discovery Workflow

Diagram Title: EI Balances Exploration and Exploitation

Application Notes

In the context of optimizing acquisition functions for Expected Improvement (EI) in catalyst composition selection for drug development, three key concepts form the computational backbone. These are integral to Bayesian optimization (BO) frameworks used to efficiently navigate high-dimensional composition spaces, minimizing expensive experimental cycles.

- Surrogate Model: A probabilistic, computationally inexpensive model that approximates the relationship between catalyst composition variables (e.g., ratios of metals, ligands, dopants) and the target performance metric (e.g., reaction yield, enantiomeric excess). In BO for catalyst research, a Gaussian Process (GP) is the standard surrogate, as it provides uncertainty estimates alongside predictions.

- Posterior Distribution: The updated probabilistic belief about the objective function after incorporating observed experimental data. For a GP surrogate, the posterior at a new composition point is a full probability distribution (characterized by a mean and variance), quantifying both the predicted performance and the prediction uncertainty.

- The Incumbent: The best-observed catalyst composition found so far in the optimization process, based on its evaluated performance metric. In the EI acquisition function, the incumbent (often denoted as $f^*$ or $f_{best}$) serves as the benchmark for calculating potential improvement.

The synergy is as follows: A surrogate model (GP), conditioned on all experimental data, provides a posterior distribution over the entire search space. An acquisition function (EI) uses this posterior and the current incumbent value to quantify the utility of evaluating any untested composition. EI is mathematically defined as the expected value of the improvement $I(x) = \max(0, f(x) - f^*)$ under the posterior distribution, where $f^*$ is the incumbent.
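
The definition of EI as an expectation under the posterior can be checked numerically. The sketch below compares the standard closed-form expression for a Gaussian posterior with a Monte Carlo average of the improvement over posterior samples; the candidate values (mean 0.75, standard deviation 0.15, incumbent 0.80) are invented for illustration.

```python
import random
from math import erf, exp, pi, sqrt


def ei_closed_form(mu, sigma, f_best):
    """Closed-form EI for a Gaussian posterior N(mu, sigma^2), sigma > 0."""
    z = (mu - f_best) / sigma
    cdf = 0.5 * (1.0 + erf(z / sqrt(2.0)))
    pdf = exp(-0.5 * z * z) / sqrt(2.0 * pi)
    return (mu - f_best) * cdf + sigma * pdf


def ei_monte_carlo(mu, sigma, f_best, n_samples=200_000, seed=0):
    """Average of max(0, f - f_best) over draws f ~ N(mu, sigma^2)."""
    rng = random.Random(seed)
    total = sum(max(0.0, rng.gauss(mu, sigma) - f_best)
                for _ in range(n_samples))
    return total / n_samples


exact = ei_closed_form(0.75, 0.15, 0.80)
sampled = ei_monte_carlo(0.75, 0.15, 0.80)
```

The two values agree to within sampling error, which confirms that the closed form is just the posterior expectation of the improvement.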

Data Presentation

Table 1: Performance Comparison of Surrogate Models in Simulated Catalyst Optimization

| Surrogate Model Type | Average Regret after 50 Iterations (Lower is Better) | Mean Prediction Time (ms) | Handles High-Dim (>10) Compositions? | Key Advantage for Catalyst Screening |

|---|---|---|---|---|

| Gaussian Process (RBF Kernel) | 0.12 ± 0.03 | 245 | Moderate | Excellent uncertainty quantification |

| Random Forest | 0.18 ± 0.05 | 45 | Yes | Handles discrete/categorical variables well |

| Bayesian Neural Network | 0.15 ± 0.04 | 120 | Yes | Scalability to very high dimensions |

| Sparse Gaussian Process | 0.14 ± 0.04 | 85 | Moderate | Reduced compute for large datasets |

Table 2: Impact of Incumbent Selection Strategy on EI Performance

| Selection Strategy | Description | Convergence Rate (Iterations to 95% Optimum) | Robustness to Noisy Experimental Data |

|---|---|---|---|

| Best Observed | Simple max/min of evaluated samples | 22 | Low (overfits to outliers) |

| Posterior Mean Maximizer | Point with highest posterior mean | 25 | Medium |

| Penalized Best (Recommended) | Best observed, penalized by its posterior uncertainty | 19 | High |

Experimental Protocols

Protocol 1: Establishing the Gaussian Process Surrogate for Catalyst Composition Space

- Design Initial Library: Use space-filling design (e.g., Sobol sequence) to select 5-10 initial catalyst compositions (e.g., varying Pd:Pt ratio, ligand loading, solvent dielectric constant).

- High-Throughput Experimentation: Synthesize and test initial library in parallel via automated flow/plate reactors. Record primary performance metric (e.g., turnover number).

- Data Preprocessing: Normalize all compositional variables to the [0,1] range. Apply a log-transform to the performance metric if its noise variance grows with its magnitude.

- Model Training: Optimize GP hyperparameters (length scales, noise) by maximizing the log marginal likelihood on the initial data.

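- Model Validation: Use leave-one-out cross-validation. Calculate the mean squared standardized residual (each held-out error divided by its predictive standard deviation); a value near 1.0 indicates well-calibrated uncertainty. The standardized mean squared error (SMSE) should be read differently: it should be well below 1.0, since 1.0 corresponds to always predicting the mean of the training data.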
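
The preprocessing and leave-one-out check can be sketched as follows. Each held-out observation is compared with the GP prediction made without it, and the error is divided by the predictive standard deviation; if the uncertainty estimates are well calibrated, the mean square of these standardized residuals should be close to 1. The sketch assumes fixed kernel hyperparameters instead of marginal-likelihood optimization, and the data are randomly generated placeholders.

```python
import numpy as np


def minmax_scale(X, lower, upper):
    """Map each column of X from [lower, upper] to [0, 1]."""
    lower, upper = np.asarray(lower, float), np.asarray(upper, float)
    return (np.asarray(X, float) - lower) / (upper - lower)


def matern52(A, B, length):
    r = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1))
    s = np.sqrt(5.0) * r / length
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)


def loo_standardized_residuals(X, y, length=0.3, noise_var=1e-2):
    """Leave-one-out (y_i - mu_i) / sd_i for a zero-mean, unit-variance GP."""
    z = (y - y.mean()) / y.std()            # standardize the metric
    residuals = []
    for i in range(len(z)):
        keep = np.arange(len(z)) != i
        K = matern52(X[keep], X[keep], length) + noise_var * np.eye(keep.sum())
        k_s = matern52(X[keep], X[i:i + 1], length)[:, 0]
        w = np.linalg.solve(K, k_s)
        mu = w @ z[keep]
        var = 1.0 + noise_var - w @ k_s     # predictive variance incl. noise
        residuals.append((z[i] - mu) / np.sqrt(var))
    return np.array(residuals)


# Placeholder library: 8 compositions in 2 variables with invented ranges.
rng = np.random.default_rng(0)
X_raw = rng.uniform([1.0, 10.0], [5.0, 30.0], size=(8, 2))
X = minmax_scale(X_raw, lower=[1.0, 10.0], upper=[5.0, 30.0])
y = np.sin(3.0 * X[:, 0]) + X[:, 1] + 0.05 * rng.standard_normal(8)
res = loo_standardized_residuals(X, y)
mean_sq_std_residual = float(np.mean(res**2))
```

A value far above 1 suggests the surrogate is overconfident (for example, the noise or length-scale is too small); a value far below 1 suggests it is underconfident.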

Protocol 2: Iterative Optimization Loop using Expected Improvement

- Identify Incumbent: From the current dataset $D_{1:t}$, select the composition with the best observed performance as the incumbent $f^*_t$. Apply a penalty if the data are noisy (see Table 2).

- Compute Posterior: Using the trained GP surrogate, compute the posterior mean $\mu_t(x)$ and variance $\sigma^2_t(x)$ for all candidate compositions in a discretized search space.

- Calculate EI: For each candidate $x$, compute $EI_t(x) = \mathbb{E}[\max(0, f(x) - f^*_t)]$. Under the GP posterior, and with a small exploration offset $\xi$ (e.g., 0.01) subtracted from the improvement, this has the closed form $EI_t(x) = (\mu_t(x) - f^*_t - \xi)\Phi(Z) + \sigma_t(x)\phi(Z)$, where $Z = \frac{\mu_t(x) - f^*_t - \xi}{\sigma_t(x)}$.

- Select Next Experiment: Choose the composition $x_{t+1} = \arg\max_x EI_t(x)$.

- Execute Experiment & Update: Synthesize and test $x_{t+1}$ and record the result $y_{t+1}$. Augment the dataset: $D_{1:t+1} = D_{1:t} \cup \{(x_{t+1}, y_{t+1})\}$.

- Iterate: Re-train the GP surrogate on $D_{1:t+1}$. Repeat from Step 1 until performance improvement plateaus or budget is exhausted.
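
The incumbent choice in the first step can be made explicit in code. The sketch below implements the three strategies listed in Table 2 under stated assumptions: the posterior-mean strategy is restricted to already-evaluated compositions, and the penalized strategy subtracts `penalty` posterior standard deviations from each observation. The exact penalty form is not given in the text, so this rule and the `penalty` parameter are hypothetical.

```python
def incumbent(y_obs, mu_obs, sigma_obs, strategy="best_observed", penalty=1.0):
    """Return the incumbent value f* under one of three strategies.

    y_obs: measured performance at the evaluated compositions.
    mu_obs, sigma_obs: GP posterior mean and standard deviation at those
    same compositions.
    """
    if strategy == "best_observed":
        return max(y_obs)
    if strategy == "posterior_mean":
        return max(mu_obs)
    if strategy == "penalized_best":
        # Assumed form: discount each observation by its posterior uncertainty.
        return max(y - penalty * s for y, s in zip(y_obs, sigma_obs))
    raise ValueError(f"unknown strategy: {strategy}")


# Invented data: the second measurement looks like a noisy outlier.
y_obs = [0.70, 0.95, 0.80]
mu_obs = [0.71, 0.82, 0.80]
sigma_obs = [0.02, 0.10, 0.01]
f_star = {s: incumbent(y_obs, mu_obs, sigma_obs, s)
          for s in ("best_observed", "posterior_mean", "penalized_best")}
```

With noisy data, a lower and more trustworthy incumbent keeps EI from being suppressed everywhere by a single lucky measurement.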

Visualizations

Bayesian Optimization Workflow for Catalysis

EI Calculation from Posterior and Incumbent

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for High-Throughput Catalyst Optimization

| Item / Reagent | Function in Protocol | Key Consideration for BO |

|---|---|---|

| Pre-catalyst Libraries (e.g., metal salt mixtures, ligand sets) | Provides the variable compositional space for the surrogate model to explore. | Ensure broad, well-defined chemical space coverage for initial design. |

| Automated Liquid Handling/Synthesis Robot (e.g., Chemspeed, Unchained Labs) | Enables precise, reproducible preparation of catalyst compositions from digital designs generated by the EI algorithm. | Integration with lab informatics system for direct data transfer to the model is critical. |

| High-Throughput Screening Reactor (e.g., plate-based parallel reactors, flow microreactors) | Generates the performance data (yield, selectivity) required to update the posterior distribution. | Data quality (noise level) must be characterized as it directly impacts GP hyperparameter training. |

| GPy/BoTorch/scikit-learn Software | Provides the computational implementation for building the Gaussian Process surrogate, calculating the posterior, and optimizing the EI acquisition function. | Choice of kernel (e.g., Matérn 5/2 for continuous variables) and optimizer significantly affects performance. |

| Lab Information Management System (LIMS) | Acts as the central data hub, linking experimental composition variables (inputs) with analytical results (outputs) for model training. | Must maintain strict metadata association for accurate model interpretation. |

A Step-by-Step Guide: Implementing EI for High-Throughput Catalyst Screening

This document details an integrated workflow architecture designed to accelerate the discovery of heterogeneous catalysts. The protocols are framed within a broader thesis on using Expected Improvement (EI)—a core Bayesian optimization acquisition function—to guide the selection of catalyst compositions. The workflow synergistically combines autonomous robotic experimentation for synthesis and testing with high-throughput Density Functional Theory (DFT) calculations to provide atomic-scale insights. This closed-loop system iteratively proposes optimal experiments, minimizing the number of trials required to identify high-performance catalysts.

Application Notes: Integrated Workflow Architecture

The architecture is a data-centric pipeline where each module feeds information to the next, creating a cycle of hypothesis, experimentation, and learning.

EI as the Decision Engine: The Expected Improvement acquisition function balances exploration of uncertain regions of the composition space with exploitation of known high-performance areas. It quantifies the potential utility of testing a new candidate, mathematically expressed as:

EI(x) = E[max(0, f(x) - f(x*))]

where `f(x)` is the predicted performance of candidate `x`, and `f(x*)` is the current best observed performance.

Role of Robotic Experimentation: Automated platforms execute the physical synthesis (e.g., via inkjet printing, spin coating) and characterization (e.g., catalytic activity screening via mass spectrometry) of the candidates proposed by the EI algorithm. This generates rapid, reproducible, and quantitative experimental data.

Role of DFT Calculations: Parallel to experimentation, DFT calculations model the electronic structure and surface adsorption energies for proposed or synthesized compositions. This provides explanatory power and identifies descriptors (e.g., d-band center, oxygen vacancy formation energy) that can be fed back into the machine learning model to improve its predictive accuracy.

Closed-Loop Integration: The key innovation is the feedback of both experimental and computational results into a unified database. A machine learning model (e.g., Gaussian Process) is trained on this combined dataset. The EI function then queries this model to propose the next most informative set of compositions for both robotic synthesis and DFT investigation.

Core Protocols

Protocol 3.1: Expected Improvement-Driven Candidate Proposal

Objective: To select the next batch of catalyst compositions for experimental testing using Bayesian optimization.

Materials: Computing workstation, Python environment with libraries (scikit-optimize, GPyTorch, numpy).

Procedure:

- Initialize: Start with a small, space-filling initial dataset (`D`) of `n` compositions (e.g., 10-20) and their measured performance metrics (e.g., turnover frequency, yield).

- Train Model: Fit a Gaussian Process (GP) surrogate model to dataset `D`, specifying a kernel (e.g., Matérn 5/2) appropriate for compositional data.

- Calculate EI: For a large set of candidate compositions in the search space (e.g., 10,000 random points), compute the Expected Improvement at each point using the trained GP model and the current best performance `f(x*)`.

- Select & Output: Identify the candidate composition `x` that maximizes EI(x). Output this composition, along with a user-defined number of next-best candidates, for the robotic experimentation queue.

- Update: After experimental results are obtained, add the new `(x, f(x))` pair to dataset `D` and repeat from Step 2 (Train Model).
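The proposal steps above can be sketched in a few dozen lines. This is a minimal from-scratch illustration using only NumPy, not the scikit-optimize or GPyTorch API: the kernel length scale, the noise level, the Dirichlet sampling of ternary compositions, and the helper names (`matern52`, `gp_posterior`, `propose_batch`) are all assumptions made for the example. In practice the hyperparameters would be fitted by maximizing the marginal likelihood.

```python
import math
import numpy as np


def matern52(A, B, length_scale):
    """Matern 5/2 kernel matrix between rows of A (n, d) and B (m, d)."""
    dist = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1))
    s = math.sqrt(5.0) * dist / length_scale
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)


def gp_posterior(X, y, X_cand, length_scale=0.3, noise=1e-3):
    """Posterior mean and std of a zero-mean GP fitted to standardized targets."""
    y_mean, y_scale = y.mean(), y.std()
    y_z = (y - y_mean) / y_scale
    K = matern52(X, X, length_scale) + noise * np.eye(len(X))
    K_s = matern52(X, X_cand, length_scale)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_z))
    v = np.linalg.solve(L, K_s)
    mu = K_s.T @ alpha
    var = np.clip(1.0 - (v**2).sum(axis=0), 1e-12, None)  # prior variance is 1
    return mu * y_scale + y_mean, np.sqrt(var) * y_scale


def expected_improvement(mu, sigma, best):
    """Closed-form EI for maximization: E[max(0, f(x) - best)]."""
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return np.maximum((mu - best) * cdf + sigma * pdf, 0.0)  # guard round-off


def propose_batch(X, y, n_candidates=10_000, batch_size=4, seed=0):
    """Score random compositions by EI and return the top `batch_size`."""
    rng = np.random.default_rng(seed)
    # Random points on the composition simplex (fractions sum to 1).
    X_cand = rng.dirichlet(np.ones(X.shape[1]), size=n_candidates)
    mu, sigma = gp_posterior(X, y, X_cand)
    ei = expected_improvement(mu, sigma, best=y.max())
    top = np.argsort(ei)[::-1][:batch_size]
    return X_cand[top], ei[top]


# Hypothetical seed data: (Co, Fe, Ni) fractions and measured TOF values.
X0 = np.array([[0.6, 0.2, 0.2], [0.2, 0.6, 0.2], [0.2, 0.2, 0.6],
               [0.4, 0.4, 0.2], [0.34, 0.33, 0.33]])
y0 = np.array([120.0, 85.0, 60.0, 150.0, 110.0])
batch, batch_ei = propose_batch(X0, y0)
```

Ranking candidates by their individual EI, as done here, tends to return neighbouring compositions. Joint batch criteria avoid that clustering at a higher computational cost.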

Protocol 3.2: High-Throughput Robotic Synthesis & Screening

Objective: To autonomously synthesize and test solid-state catalyst libraries.

Materials: Automated liquid handler or inkjet printer, multi-well substrate (e.g., alumina wafer), precursor solutions, robotic arm, integrated gas chromatograph/mass spectrometer (GC-MS) flow reactor.

Procedure:

- Substrate Preparation: Load substrate into the robotic platform. Execute a standard cleaning protocol (e.g., UV-ozone treatment).

- Precision Dispensing: Translate the digital composition list from Protocol 3.1 into dispensing commands. Use the liquid handler to mix and deposit precursor solutions onto designated locations on the substrate.

- Automated Processing: Transfer the substrate to integrated furnaces for calcination and reduction under programmed temperature ramps and gas flows (e.g., 400°C in air, then 500°C in H₂/Ar).

- Activity Screening: The robotic arm sequentially positions each catalyst spot under the inlet of a packed-bed microreactor connected to GC-MS. Measure catalytic performance (e.g., CO₂ conversion for methanation) under standardized conditions (e.g., 300°C, 1 bar, CO₂:H₂ = 1:4).

- Data Logging: Automatically record performance metrics (conversion, selectivity, rate) and associate them with the precise composition and synthesis parameters in the master database.

Protocol 3.3: High-Throughput DFT Workflow for Descriptor Calculation

Objective: To compute electronic structure descriptors for candidate compositions.

Materials: High-performance computing cluster, DFT software (VASP, Quantum ESPRESSO), workflow manager (Fireworks, AiiDA).

Procedure:

- Structure Generation: For each proposed composition, generate likely surface slab models (e.g., (111) facet of a ternary alloy).

- Job Submission: Launch DFT calculations using a standardized input set: PBE functional, plane-wave basis set with defined cutoff, PAW pseudopotentials, and k-point mesh. First perform geometry relaxation.

- Property Calculation: On relaxed structures, run single-point calculations to extract the density of states (DOS). Compute key descriptors:

a. d-band center: Calculate as the first moment of the projected d-band DOS.

b. Adsorption Energy (E_ads): For key intermediates (e.g., *CO, *O), calculate `E_ads = E(slab+adsorbate) - E(slab) - E(adsorbate_gas)`.

c. Formation Energy: For defects like oxygen vacancies.

- Data Parsing: Automatically parse output files to populate a computational database with the calculated descriptors for each composition.
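The two descriptor formulas in this protocol are short enough to write out directly. The sketch below assumes the projected DOS has already been parsed into plain lists of energies (in eV, relative to the Fermi level) and d-projected DOS values. The function names are illustrative and do not come from any DFT package. It integrates over the full energy window supplied, whereas some studies truncate the integral at the Fermi level.

```python
def trapezoid(y, x):
    """Trapezoidal integral of samples y over grid x."""
    return sum(0.5 * (y[i] + y[i + 1]) * (x[i + 1] - x[i])
               for i in range(len(x) - 1))


def d_band_center(energies, d_dos):
    """First moment of the d-projected DOS: int(E * rho_d) / int(rho_d)."""
    weighted = [e * g for e, g in zip(energies, d_dos)]
    return trapezoid(weighted, energies) / trapezoid(d_dos, energies)


def adsorption_energy(e_slab_adsorbate, e_slab, e_adsorbate_gas):
    """E_ads = E(slab+adsorbate) - E(slab) - E(adsorbate_gas), all in eV."""
    return e_slab_adsorbate - e_slab - e_adsorbate_gas
```

With this sign convention, a more negative `E_ads` corresponds to stronger binding of the intermediate.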

Data Presentation

Table 1: Performance Data from an Iterative EI-Driven Catalyst Screening Cycle for CO₂ Hydrogenation

| Iteration | Proposed Composition (A-B-C) | Experimental TOF (h⁻¹) | DFT d-band center (eV) | EI Value (Normalized) |

|---|---|---|---|---|

| 0 (Seed) | Co₆₀Fe₂₀Ni₂₀ | 120 | -1.85 | N/A |

| 0 (Seed) | Co₂₀Fe₆₀Ni₂₀ | 85 | -1.92 | N/A |

| 1 | Co₅₀Fe₄₀Ni₁₀ | 210 | -1.78 | 0.65 |

| 1 | Co₄₅Fe₁₅Ni₄₀ | 95 | -1.95 | 0.21 |

| 2 | Co₅₅Fe₃₅Ni₁₀ | 380 | -1.72 | 0.89 |

| 3 | Co₆₀Fe₃₀Ni₁₀ | 350 | -1.70 | 0.15 |

Table 2: Essential Research Reagent Solutions & Materials

| Item | Function in Workflow |

|---|---|

| Metal Nitrate Precursor Solutions (0.1M) | Standardized stock solutions for precise robotic dispensing of active metal components. |

| Alumina-coated Si Wafer Substrate | High-surface-area, inert support for creating catalyst libraries via printing. |

| Calibration Gas Mixture (e.g., 5% CO₂, 20% H₂, balance Ar) | Standard reactant stream for reproducible catalytic activity screening. |

| PAW Pseudopotential Library | Essential for accurate and efficient DFT calculations of transition metal systems. |

| Gaussian Process Kernel (Matérn 5/2) | Core mathematical function defining similarity between compositions in the surrogate model. |

Mandatory Visualizations

Diagram Title: Closed-Loop Catalyst Discovery Workflow Architecture

Diagram Title: Expected Improvement Iteration Protocol

1. Introduction

Within the context of Bayesian optimization for catalyst discovery, the acquisition function (e.g., Expected Improvement) guides the selection of the next candidate for experimental testing. The efficacy of this process is fundamentally constrained by how the multidimensional search space of catalyst formulations is defined and encoded. This protocol details the systematic encoding of catalyst compositions, supports, and dopants into numerical feature vectors, forming the critical input space for machine learning models in acquisition function-driven research.

2. Encoding Schemes and Quantitative Data

A practical encoding strategy combines categorical, compositional, and structural descriptors. The following tables summarize key encoding approaches and their quantitative impact on search space dimensionality.

Table 1: Primary Encoding Schemes for Catalyst Components

| Encoding Scheme | Application Example | Description | Dimensionality per Element |

|---|---|---|---|

| One-Hot / Label | Support Type (Al2O3, SiO2, TiO2, Carbon) | Binary vector for each distinct category. | 1 (expands to N categories) |

| Atomic Fraction | Active Metal (Ni, Co, Fe) in a bimetallic catalyst | Molar ratio of each element in the active phase. | 1 (sums to 1 for the phase) |

| Weight Loading | 1 wt%, 5 wt% Pt on support | Mass percentage of active component. | 1 |

| Physical Descriptor | Support Surface Area, Pore Volume | Measured scalar property of the material. | 1 |

| Crystallographic | Dopant Ionic Radius, Dopant Electronegativity | Elemental property of a dopant atom. | 1 |

Table 2: Example Encoded Catalyst Formulation Vector

| Feature Category | Specific Feature | Encoding Method | Example Value (Catalyst: 2%Ni-0.5%Cu/SBA-15) |

|---|---|---|---|

| Support | Support Type: SBA-15 | One-Hot (vs. Al2O3, TiO2) | [1, 0, 0] |

| | Support Surface Area (m²/g) | Physical Descriptor | 600 |

| Active Metals | Ni Weight Loading (wt%) | Weight Loading | 2.0 |

| | Cu Weight Loading (wt%) | Weight Loading | 0.5 |

| | Ni Atomic Fraction in Metal Phase | Atomic Fraction | 0.81 |

| | Cu Atomic Fraction in Metal Phase | Atomic Fraction | 0.19 |

| Dopant | Presence of K Dopant | Binary (0/1) | 0 |

| Preparation | Calcination Temp (°C) | Physical Descriptor | 500 |

| Total Vector Dimensionality | | | 10 (8 features; the one-hot support type expands to 3 entries) |

3. Experimental Protocol: Generating the Encoded Dataset for Bayesian Optimization

Protocol 3.1: Systematic Feature Vector Construction

Objective: To translate a library of synthesized catalyst formulations into a standardized numerical matrix.

Materials: Catalyst synthesis records, characterization data (e.g., BET, ICP-OES), elemental property tables (e.g., Pauling electronegativity, ionic radius).

Procedure:

- Define the Universal Feature Set: List every unique feature relevant to the catalyst space (e.g., Support_Al2O3, Support_SiO2, Ni_wt%, Cu_wt%, Calcination_Temp). This defines the columns of your design matrix.

- Populate Feature Vectors per Catalyst:

a. For categorical features (support, dopant presence), assign `1` if true, `0` otherwise.

b. For compositional features, input the measured or target weight loading or atomic fraction.

c. For physical and elemental descriptors, input the measured or tabulated value.

- Handle Missing Data: For unreported features, use imputation (e.g., median value for physical descriptors) or a dedicated null indicator (e.g., `-999`), ensuring the model is aware of the imputation. Sentinel values such as `-999` suit tree-based models; for distance-based models such as GPs, a separate binary missing-value column alongside the imputed value is the safer choice.

- Normalize Features: Apply standard scaling (z-score) or min-max scaling to all continuous features to ensure equal weighting during model training.

- Associate with Target Property: Align each catalyst's feature vector with its corresponding performance metric (e.g., yield, TOF) from activity testing. This forms the complete dataset for the surrogate model.
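As a concrete illustration of the procedure above, the following sketch encodes a single Ni-Cu catalyst record into a flat feature vector and min-max scales a small design matrix. The record layout, the support list, and the function names are hypothetical choices for this example. Atomic fractions are derived from the weight loadings using standard atomic masses.

```python
ATOMIC_MASS = {"Ni": 58.693, "Cu": 63.546}  # g/mol
SUPPORTS = ["SBA-15", "Al2O3", "TiO2"]      # assumed category order


def one_hot(value, categories):
    """Binary indicator vector with one entry per category."""
    if value not in categories:
        raise ValueError(f"unknown category: {value}")
    return [1.0 if value == c else 0.0 for c in categories]


def atomic_fractions(wt_loadings):
    """Convert weight loadings (wt%) into atomic fractions of the metal phase."""
    moles = {el: wt / ATOMIC_MASS[el] for el, wt in wt_loadings.items()}
    total = sum(moles.values())
    return {el: n / total for el, n in moles.items()}


def encode(record):
    """Flatten one catalyst record into a numeric feature vector."""
    frac = atomic_fractions(record["wt"])
    return (one_hot(record["support"], SUPPORTS)
            + [record["surface_area"]]
            + [record["wt"]["Ni"], record["wt"]["Cu"]]
            + [frac["Ni"], frac["Cu"]]
            + [1.0 if record["k_dopant"] else 0.0]
            + [record["calcination_temp"]])


def min_max_scale(rows):
    """Scale each column to [0, 1]; constant columns map to 0."""
    cols = list(zip(*rows))
    lo = [min(c) for c in cols]
    span = [max(c) - min(c) for c in cols]
    return [[(v - l) / s if s > 0 else 0.0 for v, l, s in zip(row, lo, span)]
            for row in rows]


# Example record for a 2%Ni-0.5%Cu/SBA-15 catalyst.
example = {"support": "SBA-15", "surface_area": 600.0,
           "wt": {"Ni": 2.0, "Cu": 0.5}, "k_dopant": False,
           "calcination_temp": 500.0}
vector = encode(example)
```

The scaler should be fitted on the training library only and then reused for new candidates, so that proposed compositions are mapped with the same minimum and range.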

Protocol 3.2: Iterative Search Space Expansion via Acquisition Function

Objective: To integrate the encoded search space into the Bayesian optimization loop for candidate selection.

Workflow Diagram:

Title: Bayesian Optimization Loop with Search Space Encoding

4. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Catalyst Synthesis & Encoding

| Item / Reagent | Function in Search Space Definition |

|---|---|

| High-Throughput Impregnation Robot | Enables precise, automated synthesis of catalyst libraries with varying compositions/dopant levels, generating consistent data for encoding. |

| Inductively Coupled Plasma Optical Emission Spectrometry (ICP-OES) | Provides quantitative elemental analysis for accurate encoding of weight loading and atomic fraction features. |

| Surface Area & Porosimetry Analyzer (BET) | Measures critical physical descriptor features (surface area, pore volume) of catalyst supports. |

| Crystallographic Database (ICSD, COD) | Source for ionic radius and structural descriptors for dopant and active phase encoding. |

| Elemental Property Table (e.g., CRC Handbook) | Source for electronegativity, valence electron count used as dopant/site descriptors. |

| Data Curation Software (e.g., CATKit, custom Python/R scripts) | Essential for automating the transformation of synthesis records into standardized, encoded feature vectors. |

5. Logical Framework for Search Space Definition

The following diagram illustrates the hierarchical and combinatorial nature of defining the catalyst search space.

Title: From Catalyst Components to Acquisition Function Input

Choosing and Training the Surrogate Model for Catalytic Performance Prediction

Within the broader thesis on using acquisition functions, specifically Expected Improvement (EI), for catalyst composition selection, the surrogate model is the cornerstone. It acts as a computationally cheap proxy for expensive experimental or high-fidelity computational (e.g., DFT) evaluations of catalytic performance (e.g., activity, selectivity). This document details the application notes and protocols for selecting, training, and validating surrogate models to enable efficient Bayesian optimization (BO) loops for catalyst discovery.

Surrogate Model Options: Comparison and Selection

The choice of model depends on dataset size, dimensionality, and noise characteristics. Below is a comparative analysis of commonly used models in catalyst informatics.

Table 1: Comparison of Surrogate Model Candidates for Catalytic Performance Prediction

| Model Type | Key Advantages | Key Limitations | Recommended Use Case | Key Hyperparameters to Tune |

|---|---|---|---|---|

| Gaussian Process (GP) | Provides uncertainty estimates natively, well-suited for BO. Strong theoretical foundation. | Poor scalability with data (O(n³)). Kernel choice is critical. | Small to medium datasets (<10k samples). High-value experiments where uncertainty quantification is critical. | Kernel type (RBF, Matern), length scales, noise level. |

| Random Forest (RF) | Handles high dimensions, robust to outliers and irrelevant features. Lower computational cost for training. | Uncertainty estimates are less reliable than GP. Extrapolation performance can be poor. | Medium to large datasets. Mixed feature types (compositional, structural). | Number of trees, max depth, min samples split. |

| Gradient Boosting Machines (GBM) | Often higher predictive accuracy than RF. Handles mixed data types well. | More prone to overfitting. Requires careful tuning. Sequential training is slower. | Medium to large datasets where predictive accuracy is paramount. | Learning rate, number of estimators, max depth. |

| Neural Networks (NN) | Extremely flexible, can model complex non-linear interactions. Scalable to very large datasets. | Requires large data. Uncertainty estimation not inherent (requires techniques like dropout or ensemble). | Very large datasets (>50k samples). Complex descriptor spaces (e.g., graph representations of catalysts). | Network architecture, learning rate, dropout rate. |

| Sparse Gaussian Process | Retains GP benefits (uncertainty) with improved scalability. | Approximation introduces error. More complex implementation. | Medium-sized datasets where GP is ideal but computationally prohibitive. | Inducing point number and initialization. |

Application Note 2.1: For a typical catalyst discovery BO loop with an expensive-to-evaluate function (e.g., experimental turnover frequency) and a dataset size of a few hundred points, the Gaussian Process with a Matern 5/2 kernel is often the default recommendation due to its balanced performance and native uncertainty quantification essential for EI.

Detailed Protocol: Training and Validating a Gaussian Process Surrogate Model

Protocol 3.1: Data Preprocessing for Catalyst Features

- Feature Engineering: Generate a unified feature vector for each catalyst candidate. This may include:

  - Compositional Features: Elemental fractions, statistical moments (mean, variance) of atomic properties (electronegativity, radius).

  - Structural/Conditional Features: Surface coverage, reaction temperature, pressure descriptors.

  - Descriptors: Use libraries like `matminer` or `dscribe` to compute oxidation states, bond length distributions, etc.

- Feature Scaling: Standardize all features to have zero mean and unit variance using `StandardScaler` from scikit-learn. Fit the scaler on the training set only, then transform both training and test sets.

- Target Variable Handling: For regression (predicting a continuous performance metric), check for outliers. Consider log-transformation if the target values span several orders of magnitude.

Protocol 3.2: Model Training, Validation, and Uncertainty Calibration

- Train-Test Split: Perform a stratified split (e.g., 80/20) based on key compositional families or use spatial splitting (e.g., Kennard-Stone) to ensure the test set is representative of the chemical space.

- Kernel Selection & Initialization:

  - Use a `Matern(length_scale=1.0, nu=2.5)` kernel as a robust default for modeling catalytic landscapes.

  - Add a `WhiteKernel(noise_level=0.1)` to account for experimental noise.

  - The final kernel is often the sum: `Matern() + WhiteKernel()`.

- Model Fitting: Use `GaussianProcessRegressor` (scikit-learn) or GPyTorch/GPflow for more flexibility. Optimize the kernel hyperparameters by maximizing the log-marginal likelihood.

- Validation: Predict on the held-out test set. Calculate key metrics: Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and Coefficient of Determination (R²).

- Uncertainty Calibration (Critical for EI): Ensure the model's predicted standard deviation is meaningful. A well-calibrated model's uncertainty should correlate with prediction error. Use calibration plots (predicted std. dev. vs. actual absolute error).
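Alongside a calibration plot, two summary numbers give a quick read on whether the predicted standard deviations are trustworthy. The sketch below assumes three equal-length lists from the held-out test set (true values, predicted means, predicted standard deviations). The function name and the choice of a 95% interval are illustrative.

```python
def calibration_summary(y_true, y_pred, y_std, z=1.96):
    """Return (interval coverage, mean squared standardized residual).

    For well-calibrated Gaussian predictions, coverage at z = 1.96 should be
    close to 0.95 and the mean squared standardized residual close to 1.
    """
    n = len(y_true)
    errors = [abs(t - p) for t, p in zip(y_true, y_pred)]
    coverage = sum(e <= z * s for e, s in zip(errors, y_std)) / n
    mean_sq_z = sum((e / s) ** 2 for e, s in zip(errors, y_std)) / n
    return coverage, mean_sq_z
```

A mean squared standardized residual well above 1 indicates overconfident uncertainties, which makes EI exploit too aggressively. A value well below 1 indicates inflated uncertainties and excessive exploration.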

Table 2: Example Performance Metrics for a GP Model on a Bimetallic Catalyst Dataset (n=420)

| Data Split | Sample Size | R² | MAE (TOF, s⁻¹) | RMSE (TOF, s⁻¹) | Avg. Predictive Std. Dev. |

|---|---|---|---|---|---|

| Training | 336 | 0.89 | 0.18 | 0.25 | 0.21 |

| Test | 84 | 0.82 | 0.25 | 0.34 | 0.29 |

Integration with Expected Improvement Acquisition Function

Once trained and validated, the surrogate model is integrated into the BO loop. The Expected Improvement (EI) for a candidate catalyst x is calculated as:

EI(x) = E[max( f(x) - f(x*), 0 )]

where f(x) is the surrogate model's prediction (a Gaussian distribution: N(μ(x), σ²(x))), and f(x*) is the best performance observed so far.

Implementation Note: Use a library like BoTorch or scikit-optimize, which provide efficient, numerically stable implementations of EI.

Visualization of the Workflow

Title: Surrogate Model Training and BO Loop for Catalysts

Title: Thesis Context Model Hierarchy

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Catalyst Surrogate Modeling

| Item | Function & Application Note |

|---|---|

| scikit-learn | Core library for ML models (GP, RF, GBM), preprocessing, and validation. Use GaussianProcessRegressor for basic GP implementations. |

| GPyTorch / GPflow | Advanced libraries for scalable, flexible Gaussian Process modeling, essential for larger datasets or custom kernels. |

| matminer / dscribe | Libraries for generating feature descriptors from material compositions and structures (e.g., elemental property statistics, SOAP descriptors). |

| BoTorch | A Bayesian optimization library built on PyTorch. Provides state-of-the-art implementations of acquisition functions like EI and supports compositional spaces. |

| pymatgen | Python materials analysis library for parsing, analyzing, and representing catalyst structures and compositions. |

| Catalysis-Hub.org | A public repository for surface reaction energies and barriers from DFT, a potential source of training data for surrogate models. |

| StandardScaler | The default tool for feature standardization (zero mean, unit variance). Critical for distance-based models like GP and NN. |

| Matern Kernel (ν=2.5) | The recommended default kernel for GPs in this domain, offering a good balance of smoothness and flexibility to model catalytic response surfaces. |

Application Notes

Expected Improvement (EI) is one of the most widely used acquisition functions for Bayesian optimization (BO), a sequential design strategy for global optimization of expensive-to-evaluate black-box functions. In catalyst composition selection research, EI enables efficient navigation of high-dimensional, combinatorial search spaces (e.g., multi-metallic ratios, dopants, supports) by quantifying the potential utility of evaluating a candidate composition based on a probabilistic surrogate model, typically a Gaussian Process (GP).

Core Algorithmic Implementation:

The EI acquisition function for a maximization problem (the convention used throughout this article, e.g., maximizing yield or TOF) at a candidate point x is defined as:

EI(x) = (μ(x) - f(x*) - ξ) * Φ(Z) + σ(x) * φ(Z), if σ(x) > 0, else 0.

Where: Z = (μ(x) - f(x*) - ξ) / σ(x).

Here, μ(x) and σ(x) are the GP posterior mean and standard deviation, f(x*) is the current best observed function value (incumbent), ξ is a user-defined trade-off parameter balancing exploration and exploitation, and Φ and φ are the CDF and PDF of the standard normal distribution, respectively. Maximizing EI selects the next point for experimental synthesis and testing.
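For readers who want to see where the closed form comes from, it follows from the expectation definition in a few lines. The derivation below is a standard sketch for the maximization case, writing the improvement as I(x) and using the same symbols as above.

```latex
\begin{aligned}
I(x) &= \max\bigl(0,\; f(x) - f(x^*) - \xi\bigr),
  \qquad f(x) \sim \mathcal{N}\bigl(\mu(x), \sigma^2(x)\bigr) \\
f(x) &= \mu(x) + \sigma(x)\,\varepsilon, \quad \varepsilon \sim \mathcal{N}(0, 1)
  \;\Rightarrow\; I(x) > 0 \iff \varepsilon > -Z \\
\mathrm{EI}(x) &= \int_{-Z}^{\infty} \sigma(x)\,(Z + \varepsilon)\,\phi(\varepsilon)\,d\varepsilon \\
  &= \sigma(x)\,Z\,\bigl[1 - \Phi(-Z)\bigr] + \sigma(x)\,\phi(-Z) \\
  &= \bigl(\mu(x) - f(x^*) - \xi\bigr)\,\Phi(Z) + \sigma(x)\,\phi(Z)
\end{aligned}
```

The first term rewards candidates whose predicted mean already exceeds the incumbent (exploitation); the second rewards large predictive uncertainty (exploration). For a minimization problem, replace μ(x) - f(x*) - ξ with f(x*) - μ(x) - ξ in both the formula and Z.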

Key Software Libraries: Modern libraries implement robust, scalable EI optimization, handling gradients, constraints, and parallel evaluation.

Table 1: Comparison of Primary Software Libraries for EI

| Library | Primary Language | Key Features for EI & Catalyst Research | License |

|---|---|---|---|

| BoTorch | Python (PyTorch) | High-dimensional optimization, compositional/one-hot encoding for categorical variables (e.g., support type), batch (parallel) EI, analytic gradients. | MIT |

| GPyOpt | Python (GPy) | Easy-to-use interface, basic sequential and batch EI. | BSD 3-Clause |

| Dragonfly | Python | Handles variables of mixed types (continuous, discrete, categorical), suitable for complex catalyst parameter spaces. | MIT |

| scikit-optimize | Python | Simple "ask-and-tell" interface, supports expected improvement for numerical spaces. | BSD 3-Clause |

Experimental Protocols

Protocol 2.1: High-Throughput Virtual Screening of Bimetallic Catalysts Using EI

This protocol outlines a computational workflow for optimizing the composition and strain of a bimetallic alloy catalyst for oxygen reduction reaction (ORR) activity.

Objective: Maximize predicted ORR activity descriptor (e.g., ΔG_O - ΔG_OH) via Density Functional Theory (DFT) calculations guided by EI.

Design Space: Two continuous variables: Composition (A_xB_{1-x}, x ∈ [0,1]) and Biaxial Strain (ε ∈ [-5%, +5%]).

Surrogate Model: Gaussian Process with Matérn 5/2 kernel.

Acquisition Function: Expected Improvement (ξ = 0.01).

Procedure:

- Initial Design: Select 10 points via Latin Hypercube Sampling (LHS) across the 2D space.

- Initial Evaluation: Perform DFT calculations at these 10 points to obtain the activity descriptor values. This forms the initial dataset `D`.

- BO Loop (Iterate for 30 cycles):

a. Model Training: Fit a GP to the current dataset `D`.

b. EI Maximization: Using BoTorch's `qEI` with L-BFGS-B, find the point `x_next` that maximizes EI. Incorporate known physical constraints via penalty functions if needed.

c. Parallel Evaluation: For batch mode (e.g., 4 candidates per batch), use `qEI` to select a batch of points that jointly maximize expected improvement.

d. Expensive Evaluation: Run DFT calculation for `x_next` (or batch).

e. Data Augmentation: Append `{x_next, y_next}` to dataset `D`.

- Termination & Validation: After 30 iterations, validate the top 3 predicted optimal compositions with higher-fidelity DFT calculations or literature comparison.

Protocol 2.2: Experimental Optimization of Zeolite Catalyst Synthesis via Batch EI

This protocol guides the lab-scale optimization of zeolite synthesis conditions for maximizing yield.

Objective: Maximize zeolite product yield (wt%).

Design Space: Four continuous variables: Hydrothermal Temperature (140-180°C), Time (12-72 hr), SiO2/Al2O3 Ratio (20-50), and OH-/SiO2 Ratio (0.2-0.5).

Surrogate Model: Gaussian Process with Matérn 5/2 kernel with Automatic Relevance Determination (ARD).

Acquisition Function: Expected Improvement (ξ = 0.1) with a noisy observations assumption.

Procedure:

- Initial Design: Select 15 experimental conditions via LHS.

- Initial Synthesis & Characterization: Execute syntheses in parallel autoclaves, filter, dry, and weigh products to determine yields. Record in dataset `D`.

- BO Loop (Iterate for 20 batches):

a. GP Training: Fit a GP model to `D`, using a noise likelihood to account for experimental variability.

b. Batch EI Optimization: Using BoTorch's `qNoisyExpectedImprovement` (`qNEI`), select a batch of 4 synthesis conditions that maximize joint EI, accounting for pending experiments.

c. Experimental Execution: A technician carries out the 4 synthesis and characterization protocols in parallel.

d. Data Update: Append the new results to `D`.

- Analysis: Identify the optimal synthesis condition from the final dataset. Characterize the resultant zeolite material via XRD and BET surface area analysis.
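The initial design step of this protocol can be sketched with the standard library alone. The example below draws a 15-point Latin Hypercube sample over the four synthesis variables listed in the design space. The dictionary keys and the function name are illustrative, and a dedicated sampler from an established library would normally be preferred in production.

```python
import random

# Design space from the protocol: (lower bound, upper bound) per variable.
BOUNDS = {
    "temperature_C": (140.0, 180.0),
    "time_h": (12.0, 72.0),
    "sio2_al2o3_ratio": (20.0, 50.0),
    "oh_sio2_ratio": (0.2, 0.5),
}


def latin_hypercube(bounds, n_samples, seed=0):
    """Draw n_samples points so each variable hits every stratum exactly once."""
    rng = random.Random(seed)
    columns = {}
    for name, (lo, hi) in bounds.items():
        # One uniform draw inside each of n equal-width strata, then shuffle
        # so strata are paired randomly across variables.
        points = [lo + (hi - lo) * (i + rng.random()) / n_samples
                  for i in range(n_samples)]
        rng.shuffle(points)
        columns[name] = points
    return [{name: columns[name][i] for name in bounds}
            for i in range(n_samples)]


initial_design = latin_hypercube(BOUNDS, n_samples=15)
```

Compared with purely random sampling, this guarantees that each variable's range is covered evenly even with only 15 syntheses.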

Visualizations

EI-Driven Catalyst Optimization Workflow

EI Calculation for Candidate Selection

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for EI-Guided Catalyst Discovery

| Item / Solution | Function in Protocol |

|---|---|

| BoTorch / GPyOpt Library | Core software for implementing the Bayesian optimization loop, including GP fitting and EI maximization. |

| High-Performance Computing (HPC) Cluster | Executes parallel DFT calculations (Protocol 2.1) or manages computational jobs for surrogate modeling. |

| Parallel Synthesis Reactor Array | Enables high-throughput experimental batch evaluation (e.g., 4-8 simultaneous hydrothermal syntheses in Protocol 2.2). |

| Automated Characterization Suite | Provides rapid feedback on catalyst properties (e.g., yield, selectivity, surface area) to feed the BO data loop. |

| Domain-Specific Descriptor Calculator | Translates catalyst composition/structure into quantitative features for the GP model if not using raw variables. |

1. Introduction and Thesis Context

This application note details a case study demonstrating the efficacy of Expected Improvement (EI) as an acquisition function within a Bayesian optimization (BO) framework for the discovery of novel heterogeneous bimetallic catalysts. The work is situated within a broader thesis positing that EI, which balances exploration of uncertain regions and exploitation of known high-performance areas, is uniquely suited for navigating the high-dimensional, costly-to-evaluate composition spaces typical in catalyst discovery. The target reaction is the Suzuki-Miyaura cross-coupling, a pivotal C-C bond-forming reaction in pharmaceutical synthesis, where improving catalyst activity, selectivity, and stability under mild conditions remains a key industrial objective.

2. Experimental Design and Bayesian Optimization Workflow

The experimental space was defined by two continuous variables: the atomic ratio of Palladium (Pd) to a second, earth-abundant metal (M), and the calcination temperature of the catalyst support. A Gaussian Process (GP) surrogate model was trained on an initial dataset of 12 randomly selected compositions. The EI acquisition function was then used to sequentially select the next candidate catalyst for synthesis and testing, maximizing the expected gain over the current best performance (here, yield %).

2.1. Detailed Experimental Protocol: Catalyst Synthesis (Impregnation & Calcination)

- Objective: To prepare a series of Pd-M bimetallic catalysts on a mesoporous carbon support.

- Materials: See "Research Reagent Solutions" table.

- Procedure:

- Solution Preparation: Calculate the required masses of Pd(NO₃)₂·xH₂O and M(NO₃)ₙ·xH₂O to achieve the target Pd:M atomic ratio (e.g., 1:1, 3:1, 1:3) for a total metal loading of 2 wt.% on 1.0 g of support.

- Wet Impregnation: Dissolve the calculated metal precursors in 10 mL of deionized water. Add 1.0 g of mesoporous carbon powder to the solution. Stir the slurry at room temperature for 4 hours.

- Drying: Remove water via rotary evaporation at 60°C under reduced pressure until a dry powder is obtained.

- Calcination: Transfer the dry powder to a quartz boat. Place in a tube furnace under a flowing N₂ atmosphere (50 mL/min). Heat to the target temperature (range: 300°C–600°C) at a ramp rate of 5°C/min, hold for 3 hours, then allow to cool to room temperature under N₂.

- Reduction (Optional, in situ): For testing, the catalyst is reduced in situ in the reaction vessel under H₂ flow prior to reaction commencement, unless otherwise specified by the calcination protocol.

- Characterization: Perform X-ray diffraction (XRD) and X-ray photoelectron spectroscopy (XPS) on select samples to confirm alloy formation and metal oxidation states.
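The solution-preparation arithmetic can be sketched as follows. The example computes the mass of each metal (not of the hydrated precursor salts, whose water content x is unspecified here) for a target Pd:M atomic ratio. It assumes that "2 wt.%" means metal mass divided by the combined mass of metal and support; the function name and this convention are assumptions for illustration.

```python
ATOMIC_MASS = {"Pd": 106.42, "Co": 58.933, "Ni": 58.693, "Cu": 63.546}  # g/mol


def metal_masses(pd_to_m_ratio, second_metal, support_mass_g=1.0,
                 total_loading_wt=2.0):
    """Return (mass of Pd, mass of M) in grams.

    pd_to_m_ratio is the atomic ratio n(Pd)/n(M), e.g. 1/3 for Pd:M = 1:3.
    Assumes loading = metal mass / (metal mass + support mass).
    """
    w = total_loading_wt / 100.0
    total_metal = support_mass_g * w / (1.0 - w)
    pd_part = pd_to_m_ratio * ATOMIC_MASS["Pd"]   # mass of Pd per mole of M
    m_part = ATOMIC_MASS[second_metal]            # mass of M per mole of M
    mass_pd = total_metal * pd_part / (pd_part + m_part)
    return mass_pd, total_metal - mass_pd


# Example: Pd:Cu = 1:3 on 1.0 g of support.
mass_pd, mass_cu = metal_masses(1.0 / 3.0, "Cu")
```

To obtain the precursor mass to weigh out, multiply each metal mass by the ratio of the precursor's formula mass to the metal's atomic mass, using the hydration state stated on the supplier's certificate of analysis.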

2.2. Detailed Experimental Protocol: Suzuki-Miyaura Coupling Reaction Screening

- Objective: To evaluate catalyst performance in the coupling of 4-bromotoluene with phenylboronic acid.

- Materials: See table.

- Procedure:

- In a dried 10 mL Schlenk tube under N₂ atmosphere, combine 4-bromotoluene (1.0 mmol, 171 mg), phenylboronic acid (1.5 mmol, 183 mg), and K₂CO₃ (2.0 mmol, 277 mg).

- Add a solvent mixture of toluene/water (4:1 v/v, 5 mL total).

- Add the synthesized bimetallic catalyst (25 mg, 0.5 mol% Pd relative to aryl halide).

- Seal the tube and heat the reaction mixture to 80°C with vigorous stirring (800 rpm).

- Monitor reaction progress by thin-layer chromatography (TLC) or withdraw aliquots at 1, 2, 4, and 8 hours for GC-MS analysis.

- After 8 hours, cool the reaction to room temperature. Dilute with ethyl acetate (10 mL) and filter through a Celite pad to recover the catalyst.

- Analyze the organic phase by gas chromatography with flame ionization detection (GC-FID) using dodecane as an internal standard to determine yield.

3. Data Presentation and Optimization Results

Table 1: Representative Experimental Data from the EI-Guided Campaign

| Experiment | Pd:M Ratio | Calcination Temp. (°C) | Yield (%) @ 8h | EI Selection Rank |

|---|---|---|---|---|

| Initial-01 | 1:1 (Co) | 400 | 45 | N/A |

| Initial-02 | 3:1 (Ni) | 500 | 62 | N/A |

| ... | ... | ... | ... | ... |

| EI-01 | 1:2 (Cu) | 350 | 78 | 1 |

| EI-02 | 2:1 (Co) | 450 | 65 | 2 |

| ... | ... | ... | ... | ... |

| EI-07 | 1:3 (Cu) | 375 | >99 | 1 |

| Final Best | 1:3 (Cu) | 375 | >99 | - |

Table 2: Comparison of Optimal Catalyst vs. Benchmarks

| Catalyst | Pd Loading (mol%) | Yield (%) | Turnover Number (TON) | Selectivity (%) |

|---|---|---|---|---|

| Commercial Pd/C | 0.5 | 85 | 170 | >99 |

| Pd-Ni (Initial Best) | 0.5 | 62 | 124 | >99 |

| Pd-Cu (EI-Optimized) | 0.5 | >99 | >198 | >99 |

| Monometallic Pd | 0.5 | 70 | 140 | >99 |
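The turnover numbers in this table follow directly from yield and Pd loading: TON is moles of product formed per mole of Pd. A minimal sketch, with an average turnover frequency over the 8-hour reaction time added as an illustrative extra (the function names are hypothetical):

```python
def turnover_number(yield_percent, pd_loading_mol_percent):
    """Moles of product per mole of Pd, with both inputs in percent."""
    return yield_percent / pd_loading_mol_percent


def average_tof_per_hour(yield_percent, pd_loading_mol_percent, time_h=8.0):
    """Average turnover frequency over the whole reaction time (h^-1)."""
    return turnover_number(yield_percent, pd_loading_mol_percent) / time_h


# Commercial Pd/C benchmark: 85% yield at 0.5 mol% Pd.
ton_pd_c = turnover_number(85.0, 0.5)
```

Because the average is taken over the full 8 hours, it understates the initial rate of a catalyst that reaches full conversion early, so comparing catalysts at equal, partial conversion is more informative.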

4. Visualization of Workflows and Relationships

Title: Bayesian Optimization Loop for Catalyst Discovery

Title: Suzuki-Miyaura Catalytic Cycle on Pd-Cu Site

5. The Scientist's Toolkit: Research Reagent Solutions

| Item | Function / Role in Experiment |

|---|---|

| Pd(NO₃)₂·xH₂O | Palladium precursor for catalyst synthesis. |

| Cu(NO₃)₂·3H₂O | Copper precursor; co-metal in optimal bimetallic catalyst. |

| Mesoporous Carbon Support | High-surface-area support for dispersing metal nanoparticles. |

| 4-Bromotoluene | Model aryl halide coupling partner. |

| Phenylboronic Acid | Model boronic acid coupling partner. |

| Potassium Carbonate (K₂CO₃) | Base, activates boronic acid and facilitates transmetalation. |

| Toluene/Water (4:1) Solvent | Biphasic solvent system common for Suzuki reactions. |

| GC-MS & GC-FID System | For reaction monitoring and quantitative yield analysis. |

| Schlenk Line/Tube | For conducting air-sensitive reactions under inert (N₂) atmosphere. |

| Bayesian Optimization Software | (e.g., GPyOpt, BoTorch) To implement the GP and EI algorithm. |

Overcoming Practical Hurdles: Tuning EI for Noisy, Constrained, and Multi-Objective Catalyst Data

Handling Experimental Noise and Replicability in Catalytic Activity Measurements

1. Introduction and Context

Within catalyst discovery driven by Bayesian optimization and acquisition functions like Expected Improvement (EI), the fidelity of the catalytic activity measurement is the critical bottleneck. Noisy or irreproducible data misdirects the composition search, wasting iterations and resources. This document provides protocols to quantify, mitigate, and account for experimental noise, ensuring that the "improvement" sought by the EI function is statistically significant and replicable.

2. Quantifying Measurement Noise: A Pre-Optimization Requirement

Before initiating any high-throughput experimentation (HTE) or optimization loop, baseline noise for the primary activity assay must be established.

Protocol 2.1: Determining Assay Signal-to-Noise Ratio (SNR) and Z'-Factor

- Plate Design: On a single microtiter plate, prepare two sets of control wells: high-control (catalyst known to give strong signal) and low-control (no catalyst or deactivated catalyst). Use a minimum of n=16 replicates for each control type, distributed across the plate.

- Assay Execution: Run the standard catalytic activity assay under identical conditions.

- Data Analysis: Calculate the mean (μ) and standard deviation (σ) for both high and low controls.

- SNR: (μ_high - μ_low) / σ_high

- Z'-Factor: 1 - [ (3σ_high + 3σ_low) / |μ_high - μ_low| ]

- Interpretation: A Z'-Factor > 0.5 indicates an excellent assay suitable for screening. SNR >10 is typically desirable. These values must be re-checked periodically.
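The two metrics in Protocol 2.1 can be computed directly from the replicate control readings. The following is a minimal sketch, assuming sample standard deviations (n-1 denominator) and using hypothetical conversion values for the 16 high-control and 16 low-control wells; the function name `assay_quality` is illustrative only.

```python
from statistics import mean, stdev


def assay_quality(high, low):
    """Return (SNR, Z'-factor) from replicate high- and low-control readings."""
    mu_high, mu_low = mean(high), mean(low)
    sd_high, sd_low = stdev(high), stdev(low)  # sample standard deviations
    snr = (mu_high - mu_low) / sd_high
    z_prime = 1.0 - (3.0 * sd_high + 3.0 * sd_low) / abs(mu_high - mu_low)
    return snr, z_prime


# Hypothetical conversions (%) from one control plate, n=16 per control type.
high_controls = [95.1, 93.0, 97.4, 96.2, 92.8, 95.9, 94.4, 98.0,
                 93.5, 96.8, 95.0, 94.1, 97.1, 92.2, 96.0, 95.6]
low_controls = [1.2, 2.0, 0.8, 1.9, 1.4, 2.3, 0.9, 1.6,
                1.1, 2.4, 1.5, 0.7, 1.8, 1.3, 2.1, 1.0]

snr, z_prime = assay_quality(high_controls, low_controls)
print(f"SNR = {snr:.1f}, Z' = {z_prime:.2f}")
print("Suitable for screening" if z_prime > 0.5 and snr > 10 else "Re-optimize assay")
```

Running the same function on each periodic control plate gives a simple trend record for the re-checks mentioned above.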

Table 1: Example Baseline Noise Metrics for a Model Hydrogenation Reaction

| Control Type | Mean Conversion (%) | Std Dev (σ) | N | SNR (vs. Low) | Z'-Factor |

|---|---|---|---|---|---|

| High (5% Pd/C) | 95.2 | 2.1 | 16 | 44.6 | 0.91 |

| Low (No Catalyst) | 1.5 | 0.7 | 16 | - | - |

3. Core Protocol: Replicable Catalyst Activity Measurement

This protocol is designed for solid heterogeneous catalysts in liquid-phase batch reactions, with conversion measured by GC.

Protocol 3.1: Standardized Catalyst Testing Workflow

- Catalyst Synthesis & Loading: Precisely control precursor concentrations, deposition sequences, and calcination/reduction temperature ramps (±2°C). For supported catalysts, use an analytical balance (±0.01 mg) to load a precise mass (e.g., 10.0 mg) into the reaction vessel.

- Reaction Setup (Inert Atmosphere): Perform all transfers in a glovebox or using Schlenk-line techniques. Use septum-sealed vials/reactors.

- Pre-treatment: Activate catalysts in situ under a flow of relevant gas (e.g., H₂, 20 mL/min) at specified temperature for 1 hour, then cool under inert gas.

- Reaction Initiation: Inject a degassed, precise volume of substrate solution via syringe pump to ensure consistent start time and mixing.

- Sampling: At defined timepoints (e.g., 5, 15, 30, 60 min), withdraw a small, consistent aliquot (e.g., 50 µL) via syringe, immediately quench, and dilute for analysis.

- Analysis: Use gas chromatography (GC) or high-performance liquid chromatography (HPLC) with internal standard calibration. Each sample is analyzed in triplicate injections.

- Data Processing: Report conversion, turnover frequency (TOF), and selectivity. TOF should be calculated from the initial slope (first 10-15% conversion) to minimize mass-transfer artifacts.
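The initial-slope TOF calculation in the Data Processing step can be sketched as follows. This is an illustrative example, not a prescribed implementation: it assumes the number of active sites is known independently (e.g., from chemisorption), that a (t = 0, 0% conversion) point is included, and that a plain least-squares line through the early points is adequate. The function name, the 15% cutoff default, and all numbers are hypothetical.

```python
def initial_rate_tof(times_min, conversions_pct, n_substrate_mol, n_sites_mol,
                     max_conv_pct=15.0):
    """TOF in h^-1 from the least-squares slope of the low-conversion points."""
    pts = [(t, c) for t, c in zip(times_min, conversions_pct) if c <= max_conv_pct]
    if len(pts) < 2:
        raise ValueError("Need at least two points below the conversion cutoff")
    t_mean = sum(t for t, _ in pts) / len(pts)
    c_mean = sum(c for _, c in pts) / len(pts)
    s_tc = sum((t - t_mean) * (c - c_mean) for t, c in pts)
    s_tt = sum((t - t_mean) ** 2 for t, _ in pts)
    slope_pct_per_min = s_tc / s_tt
    # Convert % conversion per minute to mol substrate per mol site per hour.
    mol_per_min = slope_pct_per_min / 100.0 * n_substrate_mol
    return mol_per_min / n_sites_mol * 60.0


# Hypothetical time course: only the points at or below 15% conversion are fitted.
times = [0, 5, 15, 30, 60]
conversions = [0.0, 4.0, 12.0, 22.0, 38.0]
tof = initial_rate_tof(times, conversions, n_substrate_mol=1e-3, n_sites_mol=1e-6)
print(f"TOF = {tof:.0f} h^-1")
```

Restricting the fit to early points means later samples, where the rate has already slowed, do not drag the slope down.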

4. Noise Mitigation Through Experimental Design

- Blocking: When testing a library of compositions across multiple plates or batches, include a shared reference catalyst in each block to correct for inter-batch variability.

- Randomization: Test compositions in a randomized order to avoid systematic bias from instrument drift or reagent degradation.

- Replication Strategy: Use a nested replication model. Technical replicates (multiple aliquots from the same reaction mixture) quantify analytical noise. Independent experimental replicates (separately synthesized and tested catalysts) quantify synthesis and holistic experimental noise. For EI, prioritize independent replicates for promising compositions.
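As one way to apply the blocking idea, the sketch below rescales every block so that its shared reference catalyst matches the grand mean of the reference across blocks. The multiplicative correction is an assumption (an additive offset is an equally plausible choice for some assays), and the block contents and the `"REF"` key are hypothetical.

```python
from statistics import mean


def correct_blocks(blocks, reference="REF"):
    """Rescale each block so its reference reading equals the cross-block reference mean."""
    grand_ref = mean(block[reference] for block in blocks)
    corrected = []
    for block in blocks:
        factor = grand_ref / block[reference]  # multiplicative correction (assumption)
        corrected.append({name: value * factor for name, value in block.items()})
    return corrected


# Hypothetical conversions (%) from two plates that share one reference catalyst.
plates = [
    {"REF": 50.0, "Pd1Cu2": 40.0},
    {"REF": 55.0, "Pd1Cu3": 44.0},
]
for plate in correct_blocks(plates):
    print({name: round(value, 1) for name, value in plate.items()})
```

In this example the two candidates look different in the raw data (40 vs. 44) but coincide once the plate-to-plate offset seen in the reference is removed.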

Table 2: Replication Strategy for Different Optimization Phases

| Phase | Goal | Independent Replicates (Synthesis) | Technical Replicates (Analysis) | Primary Output |

|---|---|---|---|---|

| Initial Screening | Identify hits | n=1 | n=3 (injection) | Conversion ± SD |

| EI Candidate Evaluation | Reliable ranking for EI | n=2 | n=3 | Mean TOF & 95% CI |

| Validation | Confirm final leads | n=3 | n=5 | Full kinetics ± Error |

5. Integrating Noise into the Acquisition Function

The EI acquisition function can be modified to account for noise by using a noisy expected improvement criterion, which uses the posterior predictive distribution from a Gaussian Process (GP) model that incorporates a noise term (σ²_n).

Protocol 5.1: Configuring a Noise-Aware GP Model for Catalyst Data

- Model Definition: Use a GP prior with a Matérn kernel (e.g., ν=5/2) to model the catalyst composition-activity landscape.

- Likelihood: Employ a Gaussian likelihood function where the total variance is the sum of the GP variance and the observed noise variance (σ²_obs) for each data point.

- Input Data: For each tested composition i, input the mean activity (y_i) and the standard error of the mean (SEM_i) derived from replicates.

- Hyperparameter Optimization: Optimize kernel hyperparameters (length scale, variance) and the global noise hyperparameter by maximizing the marginal log-likelihood.

- Noisy EI Calculation: The acquisition function α_EI(x) is computed using the posterior mean μ(x) and the predictive variance σ²(x) + σ²_n, where σ²_n is the estimated noise variance at the candidate point x.
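The steps of Protocol 5.1 can be sketched in NumPy as below. Several simplifications are assumptions rather than part of the protocol: kernel hyperparameters are fixed instead of being optimized by marginal likelihood, per-point noise is set to SEM_i², the noise at a candidate point is taken as the median observed SEM², and the incumbent is the best posterior mean at the tested compositions rather than the best raw observation. All data values and function names are hypothetical; in practice a library such as GPyOpt or BoTorch would handle these steps.

```python
from math import erfc, pi, sqrt

import numpy as np


def matern52(A, B, length_scale, variance):
    """Matern (nu = 5/2) covariance between the rows of A and B."""
    r = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)) / length_scale
    s = np.sqrt(5.0) * r
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)


def gp_posterior(X, y, sem, X_new, length_scale=0.3):
    """Posterior mean and latent variance with heteroscedastic noise SEM_i^2."""
    y_mean, variance = y.mean(), y.var()  # fixed hyperparameters (assumption)
    K = matern52(X, X, length_scale, variance) + np.diag(sem**2)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y - y_mean))
    K_s = matern52(X, X_new, length_scale, variance)
    mu = y_mean + K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.clip(variance - (v**2).sum(axis=0), 0.0, None)
    return mu, var


_norm_cdf = np.vectorize(lambda z: 0.5 * erfc(-z / sqrt(2.0)))


def noisy_ei(mu, var, noise_var, best):
    """EI for maximization using the predictive variance var + noise_var."""
    sd = np.maximum(np.sqrt(var + noise_var), 1e-12)
    z = (mu - best) / sd
    pdf = np.exp(-0.5 * z**2) / sqrt(2.0 * pi)
    return np.maximum((mu - best) * _norm_cdf(z) + sd * pdf, 0.0)


# Hypothetical data: Cu fraction vs. mean activity and SEM from replicates.
X = np.array([[0.1], [0.3], [0.5], [0.7], [0.9]])
y = np.array([42.0, 61.0, 78.0, 70.0, 48.0])
sem = np.array([2.0, 3.0, 2.5, 4.0, 2.0])
grid = np.linspace(0.0, 1.0, 101)[:, None]

mu_obs, _ = gp_posterior(X, y, sem, X)
best = mu_obs.max()  # incumbent = best posterior mean (assumption)
mu, var = gp_posterior(X, y, sem, grid)
ei = noisy_ei(mu, var, np.median(sem**2), best)
x_next = float(grid[np.argmax(ei), 0])
print(f"Next composition to test: Cu fraction = {x_next:.2f}")
```

Using the posterior mean as the incumbent keeps a single lucky noisy measurement from setting an unrealistically high bar for improvement.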

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Noise-Reduced Catalytic Testing

| Item | Function & Rationale |

|---|---|

| Automated Liquid Handling Robot | Enables precise, sub-microliter dispensing of catalyst precursors and reagents, eliminating pipetting variability in library synthesis. |

| High-Pressure Parallel Reactor System | Provides consistent temperature (±0.5°C) and agitation control across multiple catalyst tests, removing environmental noise. |

| Online GC/MS or HPLC with Autosampler | Allows for automated, timed sampling and analysis, ensuring consistent quenching and injection volumes, critical for kinetic profiles. |

| Deuterated Internal Standards | Added to reaction aliquots before analysis to correct for variations in sample preparation and injection volume in quantitative GC/LC-MS. |

| Certified Reference Catalyst (e.g., EUROPT-1) | A well-characterized, commercial silica-supported Pt catalyst used as a benchmark to validate reactor performance and analytical protocols across labs. |

| Degassed, HPLC-Grade Solvents in Sealed Bottles | Minimizes variability in solvent purity and dissolved oxygen content, which can poison or alter catalyst performance. |

6. Visualization of Workflows and Concepts

Title: Bayesian Optimization Loop with Noise Handling

Title: Noise Sources and Corresponding Mitigation Protocols

Within the broader thesis on "Advanced Acquisition Functions for Catalyst Composition Selection in Drug Development," the Expected Improvement (EI) criterion serves as a cornerstone for Bayesian optimization (BO) of high-value, multi-property catalytic materials. Traditional EI solely maximizes an objective function (e.g., reaction yield), often leading to proposals that are chemically intractable, prohibitively expensive, or unstable. This document details protocols for integrating cost, stability, and synthetic feasibility as explicit constraints into the EI framework, enabling the efficient navigation of complex composition spaces towards viable, developable catalysts.

Mathematical Formulation of Constrained EI

The constrained Expected Improvement (cEI) modifies the standard EI by multiplying it with a probability of feasibility. For multiple constraints, the acquisition function becomes:

cEI(x) = EI( f(x) ) × ∏_i P( C_i(x) ≤ threshold_i )

Where:

- EI(f(x)) is the standard Expected Improvement on the primary objective (e.g., yield, selectivity).

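- P( C_i(x) ≤ threshold_i ) is the probability that the i-th constraint metric, predicted by its own Gaussian Process, lies within its acceptable limit. For metrics where larger is better (e.g., a feasibility score with a lower bound), the inequality is reversed, or equivalently the metric is negated.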
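The constrained EI product can be sketched for a single candidate composition as follows, assuming each constraint GP returns a Gaussian prediction (mean and standard deviation greater than zero) and that constraints are independent. The predicted values, the `upper` flag for distinguishing "≤" from "≥" constraints, and all function names are illustrative assumptions.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def expected_improvement(mu, sd, best):
    """Standard EI for maximization at one candidate point."""
    if sd <= 0.0:
        return max(mu - best, 0.0)
    z = (mu - best) / sd
    return (mu - best) * _STD_NORMAL.cdf(z) + sd * _STD_NORMAL.pdf(z)


def prob_feasible(mu_c, sd_c, threshold, upper=True):
    """P(C <= threshold) if upper, else P(C >= threshold), for C ~ N(mu_c, sd_c^2)."""
    p_below = NormalDist(mu_c, sd_c).cdf(threshold)  # requires sd_c > 0
    return p_below if upper else 1.0 - p_below


def constrained_ei(mu, sd, best, constraints):
    """EI multiplied by the feasibility probability of every constraint."""
    cei = expected_improvement(mu, sd, best)
    for mu_c, sd_c, threshold, upper in constraints:
        cei *= prob_feasible(mu_c, sd_c, threshold, upper)
    return cei


# Hypothetical GP predictions for one candidate: (mean, sd, threshold, upper bound?)
constraints = [
    (4200.0, 600.0, 5000.0, True),   # cost in $/kg, must be <= 5,000
    (8.0, 3.0, 10.0, True),          # % activity loss after 24 h, must be <= 10
    (0.75, 0.10, 0.70, False),       # feasibility score, must be >= 0.7
]
cei = constrained_ei(mu=88.0, sd=5.0, best=85.0, constraints=constraints)
print(f"cEI = {cei:.3f}")
```

Because the feasibility probabilities multiply, a candidate that is very likely to violate any one constraint is suppressed even when its yield prediction is attractive.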

Table 1: Quantitative Metrics and Their Corresponding Constraint Thresholds

| Constraint Dimension | Representative Metric (C_i) | Typical Threshold (for P = 0.95) | GP Kernel Common Choice |

|---|---|---|---|

| Cost | Estimated $/kg of Catalyst | ≤ $5,000 | Matérn 5/2 |

| Stability | % Activity Loss after 24h | ≤ 10% | Matérn 3/2 |

| Synthetic Feasibility | Feasibility Score (0-1, Protocol 3.3) | ≥ 0.7 | Radial Basis Function (RBF) |

Experimental Protocols for Constraint Modeling

Protocol 3.1: Data Generation for Cost Modeling

Objective: To create a dataset linking catalyst composition (e.g., %Pt, %Pd, support identity) to a normalized cost metric.

Procedure:

- Define Basis: List all precursor salts, ligands, and supports for the target catalyst library.

- Price Aggregation: Query current bulk prices (≥100g) from Sigma-Aldrich, Fisher Scientific, and Strem Chemicals for each component. Record date and source.

- Calculate Composition Cost: For each hypothetical catalyst Cat_{A_x,B_y}, compute: Cost = Σ (molar_frac_i * MW_i * price_$_per_g_i) / Target_MW_Catalyst.

- Normalize: Scale all costs from 0-1 relative to the most expensive plausible composition in the design space.

Key Output: A lookup table or a trained surrogate model GP_cost = f(composition).
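The cost calculation and normalization steps can be sketched as a small lookup-table builder. The molar fractions, molar masses, and prices below are placeholders for illustration only, not quoted supplier data, and the function names are hypothetical.

```python
def composition_cost(components, target_mw):
    """Cost per gram: sum(molar_frac * MW * price_per_g) / target catalyst MW."""
    return sum(frac * mw * price for frac, mw, price in components) / target_mw


def normalize_costs(costs):
    """Scale costs to 0-1 relative to the most expensive composition."""
    top = max(costs.values())
    return {name: cost / top for name, cost in costs.items()}


# Placeholder inputs: (molar fraction, MW in g/mol, price in $/g) per component.
library = {
    "A0.75_B0.25": ([(0.75, 200.0, 60.0), (0.25, 180.0, 0.5)], 195.0),
    "A0.50_B0.50": ([(0.50, 200.0, 60.0), (0.50, 180.0, 0.5)], 190.0),
    "A0.25_B0.75": ([(0.25, 200.0, 60.0), (0.75, 180.0, 0.5)], 185.0),
}

raw = {name: composition_cost(parts, mw) for name, (parts, mw) in library.items()}
for name, score in normalize_costs(raw).items():
    print(f"{name}: normalized cost = {score:.2f}")
```

The resulting dictionary can serve directly as the lookup table, or as training data for the GP_cost surrogate.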

Protocol 3.2: Accelerated Stability Screening Protocol

Objective: To rapidly assess catalyst stability under simulated reaction conditions.

Procedure:

- Material: 10 mg of each candidate catalyst (synthesized via Protocol 3.3).

- Equipment: High-throughput parallel pressure reactor array (e.g., from Unchained Labs).

- Process:

a. Charge each reactor with catalyst, substrate, and solvent under inert atmosphere.

b. Run the main reaction at standard conditions (T, P) for 1 hour. Take an aliquot for initial activity A_0 (e.g., via UPLC yield analysis).

c. Without catalyst removal, maintain the reaction mixture at an elevated temperature (e.g., T + 20°C) for 24 hours.

d. Cool, take a final aliquot, and measure final activity A_f.

- Calculation: Stability Metric = [1 - (A_0 - A_f)/A_0] * 100%.

Key Output: % Activity retained for each tested composition. The % activity loss used as the stability constraint (Table 1) is 100% minus this value.
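A short sketch of the stability calculation, including the conversion from % activity retained to the % activity loss used as the constraint metric. The 10% loss limit is taken from the constraint table; the function names and activity values are hypothetical.

```python
def activity_retained_pct(a_0, a_f):
    """Stability metric: [1 - (A_0 - A_f) / A_0] * 100."""
    if a_0 <= 0:
        raise ValueError("Initial activity A_0 must be positive")
    return (1.0 - (a_0 - a_f) / a_0) * 100.0


def passes_stability(a_0, a_f, max_loss_pct=10.0):
    """True if the activity loss (100 - % retained) is within the allowed limit."""
    return 100.0 - activity_retained_pct(a_0, a_f) <= max_loss_pct


# Hypothetical initial and final yields (%) for two candidates.
for name, a_0, a_f in [("Cat-1", 80.0, 76.0), ("Cat-2", 80.0, 60.0)]:
    retained = activity_retained_pct(a_0, a_f)
    print(f"{name}: {retained:.0f}% retained, stable = {passes_stability(a_0, a_f)}")
```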

Protocol 3.3: Synthesis Feasibility Scoring

Objective: To assign a quantitative feasibility score (0-1) to a proposed catalyst composition.

Procedure:

- Retrosynthetic Analysis: Decompose target composition into plausible synthetic steps (e.g., co-impregnation, sequential deposition, co-precipitation).

- Rule-Based Scoring: Apply the following scoring rubric (weights are tunable):

- Step Complexity (w=0.4): 1.0 for one-pot, 0.6 for two-step, 0.3 for >2 steps.

- Condition Severity (w=0.3): 1.0 for ambient T/P, 0.7 for T<100°C, 0.4 for T≥100°C or P>10 bar.

- Literature Precedence (w=0.3): 1.0 for >5 analogous reported syntheses, 0.5 for 1-5, 0.1 for none.

- Calculate Score: Feasibility_Score = Σ (weight_i * rule_score_i).

Key Output: A scalar score for each composition; a threshold (e.g., 0.7) defines the feasible region.
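The scoring rubric can be encoded as a small rule-based function. This is a sketch under stated assumptions: "ambient" is interpreted here as T ≤ 30°C and P ≤ 1.1 bar, exactly 100°C is treated as severe, and intermediate pressures at low temperature fall into the 0.7 tier. None of these boundary choices are specified by the rubric, and the function names are hypothetical.

```python
WEIGHTS = {"steps": 0.4, "severity": 0.3, "precedence": 0.3}  # tunable


def step_score(n_steps):
    return 1.0 if n_steps <= 1 else 0.6 if n_steps == 2 else 0.3


def severity_score(temp_c, pressure_bar):
    if temp_c >= 100.0 or pressure_bar > 10.0:
        return 0.4
    if temp_c <= 30.0 and pressure_bar <= 1.1:  # "ambient" cutoffs are assumptions
        return 1.0
    return 0.7


def precedence_score(n_reports):
    return 1.0 if n_reports > 5 else 0.5 if n_reports >= 1 else 0.1


def feasibility_score(n_steps, temp_c, pressure_bar, n_reports):
    """Weighted sum of the three rule scores."""
    return (WEIGHTS["steps"] * step_score(n_steps)
            + WEIGHTS["severity"] * severity_score(temp_c, pressure_bar)
            + WEIGHTS["precedence"] * precedence_score(n_reports))


# Hypothetical routes: (steps, max T in °C, max P in bar, literature reports)
routes = {"co-impregnation": (1, 25.0, 1.0, 8), "sequential deposition": (2, 80.0, 1.0, 3)}
for name, args in routes.items():
    score = feasibility_score(*args)
    print(f"{name}: score = {score:.2f}, feasible = {score >= 0.7}")
```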

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Constrained Catalyst Optimization Studies

| Item | Function/Description | Example Supplier |

|---|---|---|

| Parallel Pressure Reactors | Enables high-throughput activity & stability testing under inert/reactive atmospheres. | Unchained Labs, AMTEC |

| Precursor Chemical Libraries | Pre-curated sets of metal salts, ligands, and supports for rapid catalyst formulation. | Strem Chemicals, Sigma-Aldrich Custom Kit |

| Automated Liquid Handling Robot | For precise, reproducible catalyst synthesis via impregnation or slurry preparation. | Hamilton, Opentrons |

| Bench-top UPLC-MS | Provides rapid, quantitative analysis of reaction yields and selectivity for EI objective. | Waters, Agilent |

| Thermogravimetric Analysis (TGA) | Critical for stability assessment, measuring catalyst decomposition under programmed heating. | Mettler Toledo, TA Instruments |

| Chemical Cost Database Access | Subscription service for up-to-date bulk pricing of chemicals and materials. | Sigma-Aldrich Quote, Knowde |

Workflow for Constrained Catalyst Selection

Diagram 1: Constrained Bayesian Optimization Workflow

Visualization of the Constrained EI Decision Surface