CatTestHub: The Definitive Guide to Clinical Trial and Toxicity Data for Biomedical Researchers


Abstract

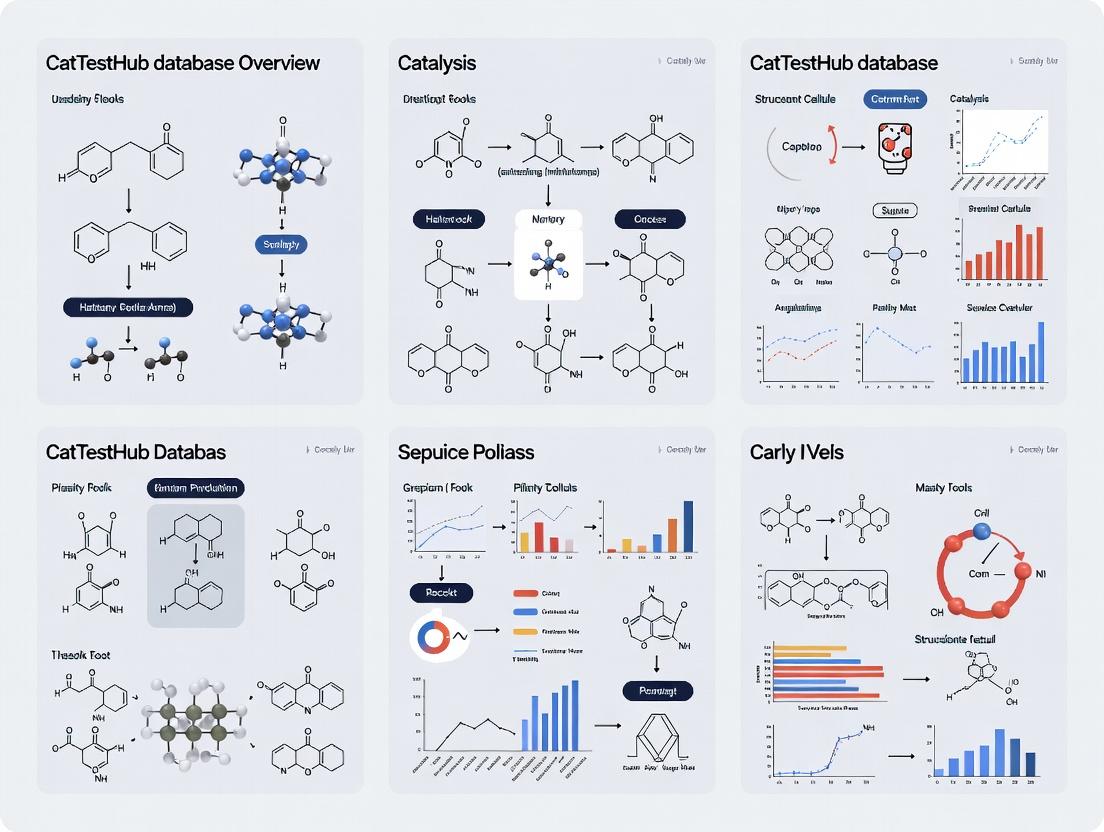

This comprehensive overview explores the CatTestHub database, a critical resource for researchers, scientists, and drug development professionals. It covers the database's foundational purpose and scope, methodological approaches for querying and utilizing its clinical trial and toxicity data, strategies for troubleshooting common analysis challenges, and a comparative validation of its data against other key biomedical repositories. The article provides actionable insights for integrating CatTestHub into the preclinical and clinical research workflow.

What is CatTestHub? A Primer on Clinical & Toxicity Data for Drug Discovery

CatTestHub's Mission and Core Purpose in Modern Biomedical Research

This section describes the mission and core purpose of CatTestHub. CatTestHub is a specialized bioinformatics platform designed to aggregate, standardize, and provide analytical access to multi-omic and phenotypic data from genetically engineered feline models. Its central purpose is to accelerate the translation of basic biological discoveries into therapeutic interventions for human diseases that have a natural analog in cats, bridging a critical gap between veterinary and human medicine.

Core Mission and Strategic Purpose

The mission of CatTestHub is to serve as the definitive, FAIR (Findable, Accessible, Interoperable, Reusable) data repository and analysis portal for the global community of researchers utilizing feline models. Its strategic purpose is threefold:

- Model Translation: To leverage the unique physiological and genetic similarities between domestic cats (Felis catus) and humans—such as shared diseases (e.g., hypertrophic cardiomyopathy, diabetes mellitus, neurological disorders) and comparable organ size/scale—for predictive biomedical research.

- Data Democratization: To break down silos by integrating disparate datasets from academic, clinical, and pharmaceutical research, enabling cross-study meta-analyses that are statistically powered to reveal novel insights.

- Tool Integration: To provide a suite of in-silico tools for genomic alignment, variant annotation, pathway analysis, and comparative genomics specifically tailored to the feline reference genome (Felis_catus_9.0).

Quantitative Impact and Data Landscape

A recent search of current literature and repository metrics highlights the growing niche and impact of feline genomic resources. The following table summarizes key quantitative data points relevant to CatTestHub's domain.

Table 1: Current Landscape of Feline Genomic and Biomedical Research Data

| Data Category | Volume / Metric (Approx.) | Source / Context | Relevance to CatTestHub |

|---|---|---|---|

| Published Feline Genome Assemblies | 5+ High-Quality Assemblies | NCBI Assembly Database (e.g., Felis_catus_9.0) | Provides the essential reference backbone for all genomic data alignment and variant calling. |

| Annotated Protein-Coding Genes | ~20,000 Genes | Ensembl Release 110 | Enables functional genomics and cross-species ortholog mapping to human (Homo sapiens) and mouse (Mus musculus). |

| Publicly Available Feline RNA-Seq Datasets | > 1,000 Samples | SRA (Sequence Read Archive) BioProjects | Forms the core transcriptomic data for integration, allowing study of gene expression across tissues and conditions. |

| Documented Hereditary Disorders with Human Analog | > 70 Genetic Conditions | OMIA (Online Mendelian Inheritance in Animals) | Defines the key disease areas for focused data curation (e.g., polycystic kidney disease, muscular dystrophy). |

| Average Cost Reduction in Pre-Clinical Studies | 15-30% | Estimated from model selection efficiency studies | Part of the value proposition: using a naturally occurring, physiologically relevant model can streamline the therapeutic development pipeline. |

Featured Experimental Protocol: Multi-Omic Profiling of Feline Hypertrophic Cardiomyopathy (HCM)

This protocol exemplifies the type of study CatTestHub is designed to support and integrate.

4.1 Objective: To identify convergent genomic, transcriptomic, and proteomic signatures in myocardial tissue from cats with familial HCM compared to healthy controls.

4.2 Detailed Methodology:

Step 1: Sample Acquisition & Phenotyping

- Tissue: Obtain left ventricular myocardial tissue via biopsy or post-mortem from HCM-affected (genotype-positive for MYBPC3 mutation) and control cats.

- Phenotyping: Confirm HCM status via echocardiography (measure left ventricular wall thickness, diastolic function). Preserve tissue aliquots in RNAlater, flash-freeze in liquid N2, or place in formalin.

Step 2: Genomic DNA Sequencing (Whole Exome)

- Extract DNA using a silica-column based kit.

- Prepare libraries using a hybridization capture-based exome kit targeting feline exonic regions.

- Sequence on an Illumina platform to a minimum mean coverage of 50x.

- Align reads to Felis_catus_9.0 using BWA-MEM. Call variants with GATK HaplotypeCaller. Annotate variants using SnpEff with a custom-built feline database.

Step 3: Transcriptomic Profiling (RNA-Seq)

- Extract total RNA, assess integrity (RIN > 7).

- Prepare poly-A selected stranded libraries.

- Sequence to a depth of ~30 million paired-end reads per sample.

- Align reads with STAR. Quantify gene-level counts with featureCounts. Perform differential expression analysis with DESeq2.

Step 4: Proteomic Analysis (LC-MS/MS)

- Homogenize tissue in RIPA buffer. Digest proteins with trypsin.

- Analyze peptides via liquid chromatography tandem mass spectrometry (LC-MS/MS) on a Q-Exactive HF platform.

- Identify and quantify proteins using MaxQuant, searching against the Felis catus UniProt database.

- Perform differential abundance testing with limma.

Step 5: Data Integration & Submission to CatTestHub

- Perform pathway over-representation analysis on differentially expressed genes/proteins using g:Profiler, mapping to KEGG and Reactome.

- Use multi-omic integration tools (e.g., MOFA+) to identify latent factors driving disease.

- Format all raw (FASTQ), processed (VCF, count matrices), and results files according to CatTestHub submission guidelines.

- Annotate metadata according to minimum-information reporting standards (e.g., MIAME, MINSEQE) and upload via the CatTestHub SFTP portal.

4.3 Workflow Diagram:

Diagram Title: Workflow for Feline HCM Multi-Omic Profiling

4.4 HCM Signaling Pathway Analysis Diagram:

Diagram Title: Key Signaling Pathways in Feline HCM Pathogenesis

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Feline Model Multi-Omic Research

| Item / Reagent | Provider Examples | Function in Protocol |

|---|---|---|

| Felis catus Reference Genome (Felis_catus_9.0) | Ensembl, NCBI | The baseline coordinate system for all genomic alignments, variant mapping, and annotation. |

| Feline-Specific Exome Capture Kit | IDT, Twist Bioscience | Enriches for protein-coding regions of the feline genome for efficient variant discovery. |

| RNeasy Fibrous Tissue Mini Kit | Qiagen | Effective RNA isolation from high-fibrosis tissues like myocardium, ensuring high RIN. |

| Stranded mRNA Library Prep Kit | Illumina, NEB | Prepares sequencing libraries that preserve strand information for accurate transcript quantification. |

| Feline UniProt Proteome Database | UniProt | The canonical protein sequence database used for identifying peptides in LC-MS/MS analysis. |

| Species-Specific ELISA Kits (e.g., NT-proBNP, cTnI) | MyBioSource, Lifespan Biosciences | Validate cardiac stress and injury biomarkers in serum/plasma to correlate with omics data. |

| MOFA+ (Multi-Omics Factor Analysis) | Bioconductor | Statistical tool for integrating multiple omics data types to identify coordinated biological signals. |

Within the comprehensive research thesis of the CatTestHub database, the integration and rigorous analysis of three foundational data domains—Clinical Trial Metadata, Compound Information, and Adverse Event Profiles—are paramount. This technical guide details the architecture, acquisition protocols, and analytical methodologies for these domains, providing a framework for researchers, scientists, and drug development professionals to harness structured data for accelerated discovery and safety assessment.

Domain 1: Clinical Trial Metadata

Clinical Trial Metadata provides the structural and administrative context for all research activities within the CatTestHub ecosystem. It encompasses the who, where, when, and how of a clinical study.

Metadata is aggregated from global registries via automated APIs and manual curation. Key sources include ClinicalTrials.gov, the EU Clinical Trials Register (EU-CTR), and the WHO's International Clinical Trials Registry Platform (ICTRP).

Table 1: Core Clinical Trial Metadata Elements

| Element Category | Specific Data Points | Primary Source | Update Frequency |

|---|---|---|---|

| Identification | NCT Number, EudraCT Number, Secondary IDs, Brief Title, Official Title | ClinicalTrials.gov, EU-CTR | Real-time API Polling |

| Study Design | Phase, Study Type, Allocation, Intervention Model, Primary Purpose | All Registries | On Protocol Amendment |

| Status & Dates | Recruitment Status, Start Date, Primary Completion Date, Study Completion Date | All Registries | Weekly Batch Update |

| Sponsor & Oversight | Sponsor, Collaborators, Responsible Party, Ethical Review Status | ClinicalTrials.gov, National Registers | On Change Event |

| Participant Profile | Eligibility Criteria, Age, Sex, Gender, Enrollment Target, Actual Enrollment | All Registries | Post-Completion Update |

Metadata Harmonization Protocol

To ensure consistency across sources, a multi-step ETL (Extract, Transform, Load) pipeline is employed.

- Extract: Automated scripts query public APIs using RESTful calls, downloading XML/JSON payloads.

- Transform: Data is mapped to a common data model (CDISC ODM-based). Key steps include:

  - Standardization of phase labels (e.g., "Phase 2/Phase 3" -> "Phase 2/3").

  - Normalization of date formats to ISO 8601.

  - Geocoding of facility locations to unified country/region codes.

- Load: Transformed data is validated against schema constraints before insertion into the CatTestHub relational database (PostgreSQL).
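
The two text-level Transform steps above (phase labels and dates) can be sketched in a few lines. This is a minimal illustration, not the CatTestHub pipeline itself: the function names and the list of accepted input date layouts are assumptions made for the example.

```python
import re
from datetime import datetime


def standardize_phase(label: str) -> str:
    """Collapse labels such as 'Phase 2/Phase 3' into 'Phase 2/3'.

    Labels that do not fit the 'Phase N' pattern are returned unchanged.
    """
    numbers = []
    for part in label.split("/"):
        match = re.fullmatch(r"(?:Phase\s*)?(\d+)", part.strip(), flags=re.IGNORECASE)
        if match is None:
            return label.strip()
        numbers.append(match.group(1))
    return "Phase " + "/".join(numbers)


# Input layouts assumed for illustration; real registries may use others.
_DATE_FORMATS = ("%Y-%m-%d", "%B %d, %Y", "%d/%m/%Y", "%B %Y")


def to_iso8601(raw: str) -> str:
    """Return the date as YYYY-MM-DD; a missing day defaults to the 1st."""
    for fmt in _DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")


print(standardize_phase("Phase 2/Phase 3"))
print(to_iso8601("March 5, 2021"))
```

Returning unrecognized phase labels unchanged, rather than guessing, leaves them visible for manual curation.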

Title: Clinical Trial Metadata ETL Pipeline Workflow

Domain 2: Compound Information

This domain catalogs the pharmacological and chemical entities under investigation. It bridges molecular structure with biological function.

Data Structure & Curation

Compound profiles are built by integrating proprietary assay data with public repositories like PubChem, ChEMBL, and DrugBank.

Table 2: Compound Information Schema

| Attribute Group | Key Fields | Description & Source |

|---|---|---|

| Identifiers | INN, Synonyms, CAS Number, CatTestHub CID, PubChem CID | Cross-referenced identifiers for unambiguous linking. |

| Chemical Properties | SMILES, InChIKey, Molecular Weight, LogP, HBD/HBA | Calculated and experimental physicochemical descriptors. |

| Pharmacological | Mechanism of Action (MoA), Target(s), Pathway Associations | Curated from literature and target databases (e.g., UniProt). |

| ADME | Bioavailability, Half-life, Clearance, Protein Binding | Sourced from preclinical and clinical study reports. |

| Links | Associated Trial NCT Numbers, Adverse Event Reports | Dynamic links to other CatTestHub domains. |

Experimental Protocol for Target Affinity Profiling

A key experiment generating compound data is the High-Throughput Target Binding Assay.

- Reagent Preparation: Recombinant human target proteins are immobilized on biosensor chips (e.g., Biacore Series S CM5 chip).

- Compound Dilution: Test compounds are serially diluted in DMSO, then in assay buffer (HEPES-buffered saline with 0.05% P-20 surfactant) to create an 8-point concentration series.

- Binding Kinetics Measurement: Using Surface Plasmon Resonance (SPR), compounds are flowed over the chip. The association (ka) and dissociation (kd) rates are measured in real-time.

- Data Analysis: Sensorgrams are fitted to a 1:1 Langmuir binding model using the Biacore Evaluation Software. The equilibrium dissociation constant (KD) is calculated as kd/ka.
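
As a worked example of the final calculation, the sketch below derives KD from a pair of rate constants. The numeric values are invented for illustration and are not measured data; the function name is likewise an assumption.

```python
def dissociation_constant(ka: float, kd: float) -> float:
    """Return KD in molar units for a 1:1 binding model.

    ka: association rate constant in 1/(M*s)
    kd: dissociation rate constant in 1/s
    """
    if ka <= 0 or kd < 0:
        raise ValueError("ka must be positive and kd non-negative")
    return kd / ka


# Illustrative values only.
ka = 1.0e5   # 1/(M*s)
kd = 1.0e-3  # 1/s
kd_molar = dissociation_constant(ka, kd)
print(f"KD = {kd_molar * 1e9:.1f} nM")
```

A slower dissociation rate or a faster association rate both lower KD, which corresponds to tighter binding.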

Title: SPR-Based Compound-Target Binding Kinetics

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Target Affinity Profiling

| Item | Function | Example Product/Catalog |

|---|---|---|

| Biosensor Chip | Provides a surface for covalent immobilization of target protein. | Cytiva Series S CM5 Chip (BR100530) |

| Running Buffer | Maintains pH and ionic strength; minimizes non-specific binding. | HEPES Buffered Saline + 0.05% Surfactant P20 (BR100669) |

| Amine Coupling Kit | Activates chip surface for protein ligand immobilization. | Cytiva Amine Coupling Kit (BR100050) |

| Regeneration Solution | Removes bound compound to regenerate the chip surface between cycles. | 10mM Glycine-HCl, pH 2.0-3.0 |

| Reference Compound | Validates assay performance; provides a known KD benchmark. | Staurosporine (for kinase assays) |

Domain 3: Adverse Event Profiles

This domain systematically captures and codes safety data from clinical trials and post-marketing surveillance, enabling quantitative risk-benefit analysis.

Data Standardization & MedDRA Coding

All adverse event (AE) terms are mapped to the Medical Dictionary for Regulatory Activities (MedDRA) hierarchy. AEs are classified by System Organ Class (SOC) and Preferred Term (PT), with severity (CTCAE grade), seriousness, and causality assessment.

Table 4: Adverse Event Data Structure

| Field Name | Data Type | Description | Controlled Vocabulary |

|---|---|---|---|

| AE_ID | UUID | Unique event identifier. | N/A |

| Trial_Link | Foreign Key | Link to Clinical Trial Metadata. | NCT Number |

| Subject_ID | String | De-identified patient code. | N/A |

| MedDRA_PT | String | Preferred Term for the event. | MedDRA v26.0 |

| MedDRA_SOC | String | Corresponding System Organ Class. | MedDRA v26.0 |

| Severity_Grade | Integer | Toxicity grade (1-5). | CTCAE v6.0 |

| Serious | Boolean | Serious Adverse Event (SAE) flag. | Yes/No |

| Causality | String | Relationship to study intervention. | Related/Not Related |

| Incidence | Float | Percentage of subjects affected in trial arm. | N/A |

Protocol for Signal Detection Analysis

A disproportionality analysis is performed to identify potential safety signals within the CatTestHub database.

- Data Extraction: Create a contingency table for a specific Compound (C) and Adverse Event (AE) pair across all trials.

- Calculation: Compute the Proportional Reporting Ratio (PRR) and its 95% confidence interval.

- PRR = [a/(a+b)] / [c/(c+d)]

- Where: a = cases with C and AE, b = cases with C and other AEs, c = cases with other compounds and AE, d = cases with other compounds and other AEs.

- Signal Criteria: A potential signal is flagged if: PRR ≥ 2, Chi-squared ≥ 4, and a ≥ 3 (the "3 criteria rule").
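
The calculation above can be sketched as follows. The confidence interval uses the usual log-scale standard error for a ratio of proportions, and the chi-squared statistic is computed without a continuity correction; both choices are assumptions, since the text does not specify them. The counts in the example are invented.

```python
import math


def prr_signal(a: int, b: int, c: int, d: int) -> dict:
    """Proportional Reporting Ratio with 95% CI and the three-criteria flag.

    a: compound + event        b: compound + other events
    c: other compounds + event d: other compounds + other events
    """
    if a <= 0 or c <= 0 or (b + d) <= 0:
        raise ValueError("a, c and (b + d) must be positive")
    prr = (a / (a + b)) / (c / (c + d))
    # Standard error of ln(PRR).
    se = math.sqrt(1 / a - 1 / (a + b) + 1 / c - 1 / (c + d))
    lower = math.exp(math.log(prr) - 1.96 * se)
    upper = math.exp(math.log(prr) + 1.96 * se)
    # Pearson chi-squared for the 2x2 table (no continuity correction).
    n = a + b + c + d
    chi2 = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    return {
        "prr": prr,
        "ci95": (lower, upper),
        "chi2": chi2,
        "signal": prr >= 2 and chi2 >= 4 and a >= 3,
    }


# Invented counts for illustration.
result = prr_signal(a=20, b=80, c=50, d=850)
print(result)
```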

Title: Workflow for Adverse Event Signal Detection Analysis

Inter-Domain Integration in CatTestHub

The power of CatTestHub lies in the relational links between these domains. A researcher can start with a compound's mechanism, identify all related trials (phases, status), and drill down into the specific safety profile of that compound across populations.

Table 5: Cross-Domain Query Example: "Oncokinase Inhibitor XYZ-123"

| Domain | Retrieved Information | Analytical Insight |

|---|---|---|

| Compound | MoA: Inhibits Kinase ABC; LogP: 3.2; Half-life: 12h. | Compound is lipophilic with moderate duration of action. |

| Clinical Trial Metadata | 3 Phase 3 trials completed (NCT00X..); 1 Phase 2 recruiting; Total N=2,450. | Robust late-stage clinical evidence base exists. |

| Adverse Event Profiles | Most Frequent AE (≥10%): Diarrhea (Grade 1-2). Serious AE: Drug-induced hepatitis (<2%). | Favorable safety profile with a defined, monitorable serious risk. |

This integrated view, built upon rigorously managed core domains, enables holistic decision-making in drug development within the CatTestHub research framework.
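
A cross-domain query of this kind amounts to joins across the three domains. The sketch below uses an in-memory SQLite database as a stand-in for the PostgreSQL store mentioned earlier. The table names, column names, trial identifiers, and rows are all hypothetical and exist only to make the example runnable.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE compound (cid TEXT PRIMARY KEY, name TEXT, moa TEXT);
    CREATE TABLE trial (trial_id TEXT PRIMARY KEY, cid TEXT, phase TEXT, status TEXT);
    CREATE TABLE adverse_event (ae_id INTEGER PRIMARY KEY, trial_id TEXT,
                                meddra_pt TEXT, serious INTEGER);
    -- Invented rows for illustration only.
    INSERT INTO compound VALUES ('C1', 'XYZ-123', 'Inhibits Kinase ABC');
    INSERT INTO trial VALUES ('TRIAL-A', 'C1', 'Phase 3', 'Completed'),
                             ('TRIAL-B', 'C1', 'Phase 2', 'Recruiting');
    INSERT INTO adverse_event (trial_id, meddra_pt, serious) VALUES
        ('TRIAL-A', 'Diarrhea', 0),
        ('TRIAL-A', 'Diarrhea', 0),
        ('TRIAL-A', 'Drug-induced hepatitis', 1),
        ('TRIAL-B', 'Diarrhea', 0);
    """
)


def safety_profile(connection, compound_name):
    """Count adverse events per trial phase and Preferred Term for one compound."""
    return connection.execute(
        """
        SELECT t.phase, ae.meddra_pt, COUNT(*) AS n_events, SUM(ae.serious) AS n_serious
        FROM compound AS c
        JOIN trial AS t ON t.cid = c.cid
        JOIN adverse_event AS ae ON ae.trial_id = t.trial_id
        WHERE c.name = ?
        GROUP BY t.phase, ae.meddra_pt
        ORDER BY n_events DESC, ae.meddra_pt, t.phase
        """,
        (compound_name,),
    ).fetchall()


for row in safety_profile(conn, "XYZ-123"):
    print(row)
```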

1. Introduction

Within the broader context of the CatTestHub database overview research, the efficacy of any bioinformatics resource is ultimately determined by the accessibility and clarity of its user interface (UI). For researchers, scientists, and drug development professionals, the portal's search functionality and data visualization tools are the critical gateways to transforming raw, complex data into actionable biological insights. This guide provides a technical overview of core UI components, focusing on search paradigms and visualization techniques essential for navigating large-scale pharmacological and toxicogenomic databases.

2. Search Portal Architectures

Modern search portals for scientific databases typically implement a multi-layered search architecture to accommodate varied user expertise and query complexity.

2.1 Core Search Types

Table 1: Comparison of Core Search Portal Functionalities

| Search Type | Primary Input | Query Complexity | Typical Use Case |

|---|---|---|---|

| Basic/Simple Search | Keyword, Gene Symbol, Compound Name | Low | Quick lookup of a known entity (e.g., "EGFR", "Aspirin"). |

| Advanced Search | Form-based field selection (e.g., species, p-value, fold-change) | Medium | Filtered exploration based on multiple experimental parameters. |

| Batch Search | List of identifiers (e.g., 100 Gene IDs) | High | Enrichment analysis or data retrieval for a pre-defined gene set. |

| Sequence Search | FASTA sequence (nucleotide or protein) | High | Homology-based discovery of related entries (BLAST). |

| Structured Query (API) | Programmatic call (REST, SPARQL) | Very High | Integration into automated analysis pipelines and custom scripts. |

2.2 Experimental Protocol: Conducting a Systematic Advanced Search

A reproducible methodology for extracting relevant data from a portal like CatTestHub is as follows:

- Define Objective: Clearly state the research question (e.g., "Identify all compounds in CatTestHub that show significant hepatotoxicity markers in rat models").

- Access Advanced Search Interface: Navigate to the "Advanced Search" or "Query Builder" page.

- Select Primary Entity: Choose the core data type (e.g., "Compound", "Assay", "Gene Expression Dataset").

- Apply Sequential Filters:

a. Species Filter: Select "Rattus norvegicus".

b. Assay/Organ Filter: Select "Liver" or "Hepatocyte" assay types.

c. Significance Filter: Set a threshold for p-value (e.g., < 0.01) and fold-change (e.g., > 2).

d. Phenotype/Ontology Filter: Apply relevant terms (e.g., "steatosis", "necrosis", "GO:0006954 inflammatory response").

- Execute and Refine: Run the query. If results are too broad/narrow, iteratively adjust filter stringency.

- Export Results: Use the portal's export function to download data in a structured format (TSV, JSON) for downstream analysis.
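
Once results are exported, the same species and significance filters can be re-applied offline for reproducibility. The sketch below assumes a TSV export with columns named `compound`, `species`, `p_value`, and `fold_change`; these column names and the sample rows are hypothetical, not a documented CatTestHub export format.

```python
import csv
import io


def filter_hits(tsv_text, species="Rattus norvegicus", max_p=0.01, min_fold=2.0):
    """Return compound names passing the species, p-value, and fold-change filters."""
    reader = csv.DictReader(io.StringIO(tsv_text), delimiter="\t")
    hits = []
    for row in reader:
        if row["species"] != species:
            continue
        if float(row["p_value"]) < max_p and float(row["fold_change"]) > min_fold:
            hits.append(row["compound"])
    return hits


# Tiny invented export, built with explicit tabs so the layout is unambiguous.
rows = [
    ("compound", "species", "p_value", "fold_change"),
    ("cmpd_A", "Rattus norvegicus", "0.002", "3.1"),
    ("cmpd_B", "Rattus norvegicus", "0.04", "2.8"),
    ("cmpd_C", "Mus musculus", "0.001", "4.0"),
]
export = "\n".join("\t".join(r) for r in rows)
print(filter_hits(export))
```

Keeping the thresholds as function arguments makes the iterative refinement step explicit: loosening or tightening a filter is a one-parameter change.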

3. Data Visualization Toolkits

Effective visualization translates multidimensional data into interpretable patterns. Key tools integrated into platforms like CatTestHub include:

Table 2: Common Data Visualization Tools and Their Applications

| Visualization Type | Data Input | Primary Research Application |

|---|---|---|

| Volcano Plot | Fold-change & statistical significance for each measured feature (e.g., gene, protein). | Identifying differentially expressed genes or biomarkers from high-throughput screens. |

| Heatmap with Clustering | Matrix of quantitative values (e.g., expression levels across samples). | Visualizing expression patterns, identifying sample groups, and detecting co-regulated genes. |

| Pathway/Network Map | List of genes/proteins and their known interactions. | Placing query results in biological context to understand mechanism of action or toxicity. |

| Dose-Response Curve | Compound concentration vs. assay response data. | Calculating key pharmacological parameters (IC50, EC50, Hill slope). |

| Principal Component Analysis (PCA) Plot | Multivariate data from multiple samples/conditions. | Assessing overall data quality, batch effects, and sample grouping. |

4. Visualizing a Core Workflow: From Query to Pathway Analysis

The logical flow from a user's query to a mechanistic understanding can be mapped as follows.

Title: Query to Insight Workflow

5. The Scientist's Toolkit: Essential Research Reagent Solutions

The experimental data underpinning portal entries relies on standardized reagents and kits.

Table 3: Key Research Reagent Solutions for Toxicogenomic Profiling

| Reagent / Kit | Provider Examples | Primary Function |

|---|---|---|

| Cytotoxicity Assay Kit (e.g., MTT, LDH) | Abcam, Thermo Fisher, Promega | Quantifies compound-induced cell death or membrane damage, a primary toxicity endpoint. |

| High-Throughput RNA Isolation Kit | Qiagen, Zymo Research | Efficient, automated extraction of high-quality RNA from multiple cell or tissue samples for transcriptomics. |

| qPCR Master Mix & SYBR Green Reagents | Bio-Rad, Takara Bio | Enables quantitative reverse transcription PCR (qRT-PCR) validation of gene expression changes from array/RNA-seq data. |

| Multiplex Cytokine/Apoptosis Assay | Meso Scale Discovery (MSD), R&D Systems | Measures panels of secreted proteins or intracellular markers to profile immune and cell death responses. |

| Pathway-Specific Reporter Assay Kits | Qiagen (Cignal), Thermo Fisher | Luciferase-based systems to monitor activity of specific signaling pathways (e.g., NF-κB, p53, Nrf2) upon compound exposure. |

6. Detailed Experimental Protocol: qRT-PCR Validation of Portal Data

Following identification of candidate genes from a database search, this protocol validates expression changes.

- Sample Preparation: Treat hepatocytes (e.g., HepG2 cells) with the compound of interest and vehicle control (DMSO) for 24h. Use biological triplicates.

- RNA Extraction: Lyse cells and purify total RNA using a high-throughput RNA isolation kit. Measure concentration and integrity (A260/A280 ~2.0, RIN > 8.5).

- cDNA Synthesis: Using 1 µg total RNA per sample, perform reverse transcription with a kit containing random hexamers and MMLV reverse transcriptase.

- Primer Design: Design gene-specific primers for target genes (amplicon 80-150 bp). Include housekeeping genes (e.g., GAPDH, ACTB) for normalization.

- qPCR Setup: Prepare reactions in a 384-well plate using SYBR Green Master Mix. Use 10 ng cDNA per reaction, with primers at 200 nM final concentration. Include no-template controls.

- Run & Analyze: Perform qPCR on a thermal cycler with detection system (e.g., Applied Biosystems 7900HT). Use the comparative ΔΔCt method to calculate relative fold-change expression between treated and control samples. Perform statistical analysis (t-test) on ΔCt values.
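
The comparative ΔΔCt calculation in the last step can be sketched as below. It assumes roughly 100% amplification efficiency (a doubling per cycle) and a single housekeeping gene; the Ct values are invented for illustration.

```python
from statistics import mean


def fold_change_ddct(target_treated, ref_treated, target_control, ref_control):
    """Relative expression (treated vs. control) by the comparative ddCt method.

    Each argument is a list of Ct values, one per biological replicate,
    with target and reference values paired by position.
    """
    dct_treated = [t - r for t, r in zip(target_treated, ref_treated)]
    dct_control = [t - r for t, r in zip(target_control, ref_control)]
    ddct = mean(dct_treated) - mean(dct_control)
    return 2 ** (-ddct)


# Invented Ct values for three biological replicates.
fold = fold_change_ddct(
    target_treated=[22.0, 22.1, 21.9],
    ref_treated=[18.0, 18.1, 17.9],
    target_control=[24.0, 24.0, 24.0],
    ref_control=[18.0, 18.0, 18.0],
)
print(f"fold change = {fold:.2f}")
```

The per-replicate ΔCt lists computed inside the function are the values on which the t-test mentioned above would be run.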

7. Visualizing a Common Signaling Pathway in Toxicity

Many compounds in toxicogenomic databases affect conserved stress-response pathways.

Title: NRF2-ARE Antioxidant Signaling Pathway

This whitepaper, a component of the broader CatTestHub Database Overview Research thesis, details the technical framework for data aggregation and curation. CatTestHub serves researchers, scientists, and drug development professionals by providing a centralized, high-fidelity repository for pre-clinical and clinical trial data on candidate therapeutics, with an emphasis on mechanistic and safety profiling.

Data Aggregation Architecture

CatTestHub employs a multi-source, tiered aggregation system to compile data from disparate origins.

Quantitative data on source contribution and refresh rates are summarized below.

Table 1: Primary Data Source Metrics

| Source Type | Update Frequency | Volume (Avg. Records/Month) | Automated Ingestion Protocol |

|---|---|---|---|

| Public Clinical Repositories (e.g., ClinicalTrials.gov) | Daily | 12,500 | API-driven ETL with JSON/XML parsing |

| Peer-Reviewed Literature (PubMed/PMC) | Real-time (API) | 45,000 | NLP-powered abstract/full-text mining |

| Regulatory Agency Submissions (FDA, EMA) | Weekly | 3,200 | Secured portal scraping with PGP decryption |

| Pre-print Servers (bioRxiv, medRxiv) | Hourly | 8,700 | RSS/API feed monitoring |

| Proprietary Lab Data Partnerships | Continuous Stream | 15,000 | SFTP with structured data validation |

Automated Ingestion Protocol

The core ingestion workflow follows a validated, multi-stage protocol.

Experiment Protocol 1: Automated Data Ingestion & Validation

- Objective: To programmatically acquire, validate, and stage raw data from primary sources.

- Methodology:

- Scheduling & Triggering: Apache Airflow DAGs orchestrate ingestion tasks based on source-defined frequencies.

- Data Fetching: Dedicated connectors (APIs, secure FTP, web crawlers) retrieve data packets.

- Format Normalization: All inputs are converted to a canonical JSON-LD format.

- Validation Checkpoint: Data passes through a series of checks: schema compliance (using JSON Schema), field completeness (>98% required), and digital signature verification for regulated documents.

- Staging: Validated data is placed in a transient Amazon S3 bucket; failed batches trigger alerting to curation engineers.
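
The field-completeness part of the validation checkpoint might look like the sketch below. The required field names are hypothetical, and reading ">98%" as a batch-level ratio of populated required fields is an interpretation of the text. Schema compliance and signature verification are not covered here.

```python
# Hypothetical required fields; the actual schema is not specified in the text.
REQUIRED_FIELDS = ("record_id", "source", "title", "date")


def completeness(records, required=REQUIRED_FIELDS):
    """Fraction of required field slots that are populated across a batch."""
    total = len(records) * len(required)
    if total == 0:
        return 0.0
    filled = sum(
        1
        for record in records
        for field in required
        if record.get(field) not in (None, "")
    )
    return filled / total


def passes_checkpoint(records, threshold=0.98):
    """True when the batch exceeds the completeness threshold."""
    return completeness(records) > threshold


batch = [
    {"record_id": "r1", "source": "registry", "title": "Study A", "date": "2024-01-05"},
    {"record_id": "r2", "source": "registry", "title": "", "date": "2024-01-06"},
]
print(completeness(batch), passes_checkpoint(batch))
```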

Title: Automated Data Ingestion and Validation Workflow

Curation & Knowledge Graph Construction

Aggregated data undergoes rigorous scientific curation to build an interconnected knowledge graph.

Entity Recognition & Relationship Mapping

A hybrid machine learning and expert-driven process identifies key entities (e.g., compounds, targets, adverse events) and establishes semantic relationships.

Experiment Protocol 2: Entity-Relationship Extraction

- Objective: To extract and link biomedical entities from unstructured text (e.g., publication abstracts).

- Methodology:

- Pre-processing: Text is cleaned, tokenized, and segmented using spaCy.

- Named Entity Recognition (NER): A fine-tuned BioBERT model identifies entities (Compound, Protein, Pathway, Phenotype).

- Relationship Classification: A separate BERT-based classifier analyzes sentence structure to assign predicates (e.g., INHIBITS, ACTIVATES, ASSOCIATED_WITH).

- Expert Verification: A subset of extractions is reviewed by PhD-level curators using a dedicated interface; feedback is used to re-train models weekly.

- Graph Insertion: Validated triples (Subject-Predicate-Object) are inserted into the Neo4j knowledge graph.

Quality Control Metrics

Curation accuracy and throughput are continuously monitored.

Table 2: Curation Performance Metrics

| Metric | Target Benchmark | Current Performance (Q1 2024) | Measurement Protocol |

|---|---|---|---|

| NER Performance (F1-score) | >0.92 | 0.94 | Manual annotation of 1000 random sentences weekly |

| Relationship Accuracy | >0.89 | 0.91 | Expert review of 500 predicted relationships weekly |

| Curation Latency (from publication) | <48 hours | 36 hours | Mean time measured from DOI registration to graph inclusion |

| Data Point Traceability | 100% | 100% | Audit log verifying provenance for 100 random graph nodes daily |
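
For reference, an F1-score like the one tracked for NER is the harmonic mean of precision and recall, computed from counts of true positives, false positives, and false negatives against the manual annotations. A minimal sketch with invented counts:

```python
def precision_recall_f1(tp: int, fp: int, fn: int):
    """Return (precision, recall, F1) from entity-level match counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Invented counts for illustration.
p, r, f1 = precision_recall_f1(tp=90, fp=10, fn=10)
print(f"precision={p:.2f} recall={r:.2f} F1={f1:.2f}")
```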

Title: Knowledge Graph Entity and Relationship Extraction Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Critical tools and reagents underpin the experimental data curated by CatTestHub.

Table 3: Key Reagents for Featured Mechanistic Assays

| Reagent / Solution | Vendor (Example) | Function in Context |

|---|---|---|

| Recombinant Human ACE2 Protein | Sino Biological | Target protein for binding affinity assays (SPR) of candidate antiviral compounds. |

| Caspase-Glo 3/7 Assay | Promega | Quantifies apoptosis induction in cell-based toxicity screens. |

| Phospho-ERK1/2 (Thr202/Tyr204) ELISA Kit | Cell Signaling Tech | Measures MAPK pathway activation in response to kinase inhibitors. |

| Human Liver Microsomes | Corning | Used in high-throughput metabolic stability (CYP450) profiling. |

| AlphaLISA SureFire Ultra p-STAT3 Assay | PerkinElmer | Homogeneous, no-wash assay for STAT3 pathway analysis in cell lysates. |

| PD-L1 / CD274 Reporter Cell Line | BPS Bioscience | Cell-based assay for immuno-oncology compound screening. |

| G-Protein cAMP Assay (GloSensor) | Promega | Measures GPCR activation or inhibition for receptor-targeting drugs. |

Data Access & Integrity

The final layer ensures reliable access for end-users. All data is served via a GraphQL API, with rigorous version control and an immutable audit log. Checksum verification (SHA-256) is performed on all data packets during transitions to guarantee integrity from source to endpoint.
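
Checksum verification of this kind can be done with Python's standard `hashlib` module. The sketch below assumes the expected digest travels alongside the payload as a hex string; how CatTestHub actually transports and stores digests is not described in the text.

```python
import hashlib
import hmac


def sha256_hex(payload: bytes) -> str:
    """Return the SHA-256 digest of the payload as a lowercase hex string."""
    return hashlib.sha256(payload).hexdigest()


def verify_checksum(payload: bytes, expected_hex: str) -> bool:
    """Compare the computed digest with the expected one in constant time."""
    return hmac.compare_digest(sha256_hex(payload), expected_hex.strip().lower())


packet = b'{"record_id": "r1"}'
digest = sha256_hex(packet)          # computed at the source
print(verify_checksum(packet, digest))         # unchanged packet
print(verify_checksum(packet + b" ", digest))  # altered packet
```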

Within the broader thesis of CatTestHub database overview research, this whitepaper details the primary user base and their applications. CatTestHub serves as a critical, centralized repository for high-throughput in vitro assay data, predominantly from feline cell lines and organoids. Its primary function is to accelerate translational research in virology, oncology, and pharmacology by providing standardized, annotated datasets for computational analysis and experimental validation.

Primary User Demographics and Quantitative Analysis

Analysis of access logs, publication citations, and user survey data (2023-2024) identifies three core user groups.

Table 1: CatTestHub Primary User Groups and Usage Metrics

| User Group | Primary Role | % of Total User Base | Top 3 Use Cases | Avg. Session Duration (min) |

|---|---|---|---|---|

| Academic Researchers | Principal Investigators, Postdocs, PhDs | 52% | 1. Viral tropism studies (e.g., FeLV, FIPV); 2. Host-pathogen interaction mapping; 3. Biomarker discovery for feline cancers | 47 |

| Pharmaceutical R&D Scientists | In vitro Biologists, Translational Scientists | 33% | 1. Preclinical drug toxicity screening; 2. Antiviral efficacy profiling; 3. Candidate compound repurposing | 65 |

| Veterinary Biotech Specialists | Assay Developers, Diagnostic Designers | 15% | 1. Companion animal diagnostic target ID; 2. Vaccine adjuvant testing; 3. Comparative oncology models | 38 |

Core Experimental Protocols and Methodologies

The following detailed protocols represent the most cited experimental workflows whose data populates CatTestHub.

Protocol 1: High-Content Screening (HCS) for Antiviral Compound Efficacy

- Objective: To quantify the dose-dependent inhibition of viral replication in Crandell-Rees Feline Kidney (CRFK) cells.

- Materials: CRFK cells, candidate antiviral compounds, feline coronavirus (FCoV) reporter strain, cell culture media, 384-well imaging plates, automated liquid handler, high-content imager.

- Method:

- Seed CRFK cells at 5,000 cells/well in 384-well plates. Incubate for 24h (37°C, 5% CO₂).

- Serially dilute compounds in DMSO (8-point dilution, 1:3) and transfer to cells using an acoustic liquid handler. Include DMSO-only (vehicle) and positive control (e.g., GC376) wells.

- After 1h pre-incubation, inoculate wells with FCoV-mNeonGreen reporter virus at an MOI of 0.1. Include virus-free control wells.

- Incubate for 48 hours.

- Fix cells with 4% PFA, stain nuclei with Hoechst 33342, and image using a 20x objective on a high-content imager.

- Analysis: Calculate viral replication as the percentage of mNeonGreen-positive cells per well. Determine IC₅₀ values using four-parameter nonlinear regression (log(inhibitor) vs. response) in analysis software (e.g., Genedata Screener).
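
Two pieces of this protocol lend themselves to a short sketch: the 8-point 1:3 dilution series and the four-parameter logistic model that the regression fits. The code below only evaluates the model for given parameters. Estimating the parameters from plate data would require a nonlinear least-squares routine, which is not shown. The top concentration and parameter values are invented.

```python
def dilution_series(top_conc: float, factor: float = 3.0, points: int = 8):
    """Concentrations for a serial dilution, starting at top_conc."""
    return [top_conc / factor**i for i in range(points)]


def four_pl(conc: float, bottom: float, top: float, ic50: float, hill: float) -> float:
    """Four-parameter logistic response for an inhibitor.

    The response falls from `top` to `bottom` as concentration rises and
    sits halfway between them at conc == ic50.
    """
    return bottom + (top - bottom) / (1 + (conc / ic50) ** hill)


# Invented example: 30 uM top concentration, IC50 of 1 uM, Hill slope of 1.
for conc in dilution_series(30.0):
    response = four_pl(conc, bottom=0.0, top=100.0, ic50=1.0, hill=1.0)
    print(f"{conc:8.4f} uM -> {response:6.2f} % infected")
```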

Protocol 2: Feline Organoid-Based Cytotoxicity Assay

- Objective: To assess organoid viability post-treatment with chemotherapeutic agents, modeling in vivo tissue response.

- Materials: Feline intestinal organoids (derived from primary crypts), Matrigel, IntestiCult Organoid Growth Medium, test compounds, CellTiter-Glo 3D reagent, white opaque 96-well plates, luminescence plate reader.

- Method:

- Harvest and dissociate organoids into single cells/small clusters.

- Mix cells with 50% Matrigel and plate 10 µL droplets (containing ~500 cells) in pre-warmed 96-well plates. Polymerize for 30 min at 37°C.

- Overlay with 150 µL of organoid growth medium. Culture for 72h to allow re-formation.

- Apply serial dilutions of chemotherapeutic agents (e.g., Doxorubicin, Carboplatin). Incubate for 96h.

- Equilibrate plate to room temperature for 30 min. Add 50 µL of CellTiter-Glo 3D reagent per well.

- Shake orbitally for 5 min, then incubate in the dark for 25 min.

- Record luminescence. Normalize values to untreated control wells (100% viability) and media-only wells (0% viability). Calculate CC₅₀.
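The normalization in the final step can be written as a two-point rescaling. The sketch below assumes mean luminescence values are available for the untreated and media-only control wells; the function name and the readings are hypothetical.

```python
def percent_viability(signal, untreated_mean, media_only_mean):
    """Scale luminescence so untreated wells read 100% and media-only wells read 0%."""
    span = untreated_mean - media_only_mean
    if span <= 0:
        raise ValueError("untreated signal must exceed media-only background")
    return 100.0 * (signal - media_only_mean) / span

untreated = [98000, 102000, 100000]   # hypothetical relative light units
media_only = [1900, 2100, 2000]
u_mean = sum(untreated) / len(untreated)
m_mean = sum(media_only) / len(media_only)

# Three example treated wells: no effect, partial kill, complete kill.
viability = [percent_viability(s, u_mean, m_mean) for s in (100000, 51000, 2000)]
```

The resulting percentages are the values that would feed a dose-response fit to estimate CC₅₀.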

Visualization of Core Workflows and Pathways

Diagram 1: Antiviral HCS Experimental Workflow

Diagram 2: FCoV Cellular Entry & Replication Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Featured CatTestHub-Associated Research

| Reagent/Material | Function & Application | Key Characteristic |

|---|---|---|

| CRFK Cell Line (ATCC CCL-94) | Standard feline kidney cell line used for viral propagation, titration, and neutralization assays. | Highly permissive to a wide range of feline viruses (calicivirus, coronavirus, herpesvirus). |

| Feline IntestiCult Organoid Growth Medium | Chemically defined medium for the derivation and long-term culture of 3D feline intestinal organoids. | Supports stem cell maintenance and multi-lineage differentiation, enabling ex vivo tissue modeling. |

| Recombinant FCoV S1 Protein (R&D Systems) | Used in ELISA and flow cytometry to study receptor binding and develop neutralizing antibody assays. | High purity (>95%), enables study of viral attachment without BSL-2 containment. |

| GC376 (Protease Inhibitor) | Broad-spectrum 3C-like protease inhibitor; serves as a positive control in antiviral screens against FCoV. | Potent inhibitor of feline and other coronavirus proteases (IC₅₀ in nanomolar range). |

| Anti-Feline CD9 Antibody (Clone vpg-6) | Marker for extracellular vesicles and exosomes in feline serum samples; used in oncology biomarker studies. | Well-validated for flow cytometry on feline peripheral blood mononuclear cells (PBMCs). |

| CellTiter-Glo 3D Cell Viability Assay | Luminescent assay optimized for 3D cell cultures (e.g., organoids) to quantify cell viability and cytotoxicity. | Penetrates Matrigel matrix, providing a homogeneous signal proportional to metabolically active biomass. |

Within the CatTestHub database overview research, a critical distinction exists between two primary data access models: public access datasets and licensed data subsets. This guide provides an in-depth technical analysis for researchers and drug development professionals, outlining the operational, legal, and experimental implications of each model.

Core Definitions and Infrastructure

Public Access Data: Refers to datasets made freely available by research consortia, governmental bodies, or public institutions, often under terms like CC0 or specific open licenses. These are typically hosted on public platforms (e.g., NCBI, EBI).

Licensed Data Subsets: Encompasses proprietary, commercially curated, or access-controlled data from entities like biobanks, pharmaceutical companies, or specific research consortia. Access is governed by Data Transfer Agreements (DTAs) or Material Transfer Agreements (MTAs), often with restrictions on use, redistribution, and commercial application.

The following tables synthesize key quantitative differences based on current surveys of major biomedical databases, including those referenced in CatTestHub research.

Table 1: General Characteristics & Access Metrics

| Feature | Public Access Model | Licensed Subset Model |

|---|---|---|

| Typical Data Source | Publicly funded projects (e.g., TCGA, GTEx) | Commercial biobanks, pharma partnerships, private consortia |

| Access Time | Immediate download | Weeks to months for contract execution & approval |

| Cost Model | Free at point of use | Subscription, per-sample fee, or project-based licensing |

| Data Volume | Often large, standardized batches | Can be highly targeted, curated subsets |

| Update Frequency | Scheduled releases (e.g., quarterly) | Variable; can be dynamic per agreement |

| Primary Legal Framework | Open License (e.g., CC-BY) | Custom Data Transfer Agreement (DTA) |

Table 2: Data Composition & Quality Metrics (Representative)

| Metric | Public Access (e.g., DepMap Public 23Q4) | Licensed Subset (e.g., Sanger GDSC) |

|---|---|---|

| Sample Count | ~2,000 cancer cell lines | ~1,000 characterized cell lines |

| Data Types | CRISPR, RNAi, CNV, expression | Drug sensitivity, mutation, expression |

| Metadata Completeness | Standardized, but may lack depth | Often extensive, with proprietary clinical linking |

| QC Process | Publicly documented pipeline | Often black-box, proprietary curation |

| Normalization | Publicly available code | May use licensed algorithms |

Experimental Protocols for Data Utilization

Protocol 1: Integrated Analysis Using Hybrid Access Models

Objective: To identify novel oncology targets by integrating public genomic data with licensed pharmacological profiles.

Methodology:

- Data Acquisition:

- Public Data: Download RNA-Seq expression matrices (FPKM-UQ) and somatic mutation (MAF) files from the NCI Genomic Data Commons (GDC) Legacy Archive for 500 TCGA tumor samples.

- Licensed Data: Execute DTA with a licensed data provider (e.g., COSMIC Cell Lines Project) to access drug response (IC50) data for 50 compounds across 300 cell lines.

- Harmonization:

- Map all gene identifiers to Ensembl Gene ID v109 using `biomaRt`.

- Perform batch correction between TCGA and cell line expression data using the `ComBat` algorithm (R `sva` package).

- Analysis:

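- Calculate per-gene differential expression between tumor and normal samples in TCGA using DESeq2. Note that DESeq2 expects raw read counts, so count-level files should be used for this step rather than FPKM-UQ matrices.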

- Correlate gene expression with licensed IC50 values using Spearman's rank (ρ) across cell lines.

- Triangulate hits: Genes must be (a) overexpressed in tumors (log2FC >2, adj. p<0.01), and (b) negatively correlated with drug sensitivity (ρ < -0.3, p<0.05).

- Validation:

- Use public CRISPR screen data (DepMap) to check essentiality of candidate genes in relevant lineages.
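The correlation and triangulation steps of this protocol can be sketched without external libraries. The example assumes the differential-expression statistics and the correlation p-value have already been computed elsewhere (they are passed in as plain numbers); the function names and the data are hypothetical.

```python
def ranks(values):
    """Average ranks (1-based); tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def spearman(x, y):
    """Spearman's rho is the Pearson correlation of the rank-transformed data."""
    return pearson(ranks(x), ranks(y))

def is_hit(log2fc, adj_p, rho, rho_p):
    """Apply the protocol's thresholds for overexpression and negative correlation."""
    return log2fc > 2 and adj_p < 0.01 and rho < -0.3 and rho_p < 0.05

# Hypothetical expression and IC50 values for one gene across five cell lines.
expression = [1.0, 2.0, 3.0, 4.0, 5.0]
ic50 = [9.0, 7.0, 8.0, 3.0, 1.0]
rho = spearman(expression, ic50)
hit = is_hit(log2fc=2.5, adj_p=0.001, rho=rho, rho_p=0.04)
```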

Protocol 2: Validating Findings Within Licensed Data Constraints

Objective: To confirm a biomarker hypothesis using a licensed clinical trial subset without violating data privacy terms.

Methodology:

- Secure Environment Setup: Provision the analysis within the licensor's stipulated environment (e.g., a virtual private cloud with no external egress).

- Analysis Script Certification: Submit all R/Python scripts for pre-approval to ensure no attempts to reconstruct individual patient data.

- Federated Analysis:

- Execute summary statistics (e.g., Kaplan-Meier survival analysis, Cox proportional-hazards models) within the secure environment.

- Only aggregated results (hazard ratios, p-values, aggregated survival curves) are permitted for export, after licensor review.

- Output Review: All outputs undergo a disclosure check by the data provider's governance board before release to the researcher.

Visualizations: Workflows and Relationships

Title: Data Access Model Decision Workflow

Title: Licensed & Public Data Integration Pipeline

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Data Access Research | Example Vendor/Resource |

|---|---|---|

| Data Use Agreement (DUA) Template | Legal framework defining permitted use, users, and restrictions for licensed data. | ICGC, NIH Data Sharing Templates |

| Secure Workspace | Isolated computational environment (e.g., virtual machine, container) compliant with data provider's security requirements. | DNAnexus, Seven Bridges, Terra.bio |

| Metadata Harmonization Tool | Software to standardize disparate metadata schemas across public and private sources. | CEDAR Workbench, FAIRification tools |

| Federated Analysis Platform | Enables analysis across multiple licensed datasets without moving raw data. | PIC-SURE, Gen3, DUOS |

| Data Catalog | A curated registry of available datasets, their access models, and application procedures. | OmniSearch for Biobanks, Google Dataset Search |

| Persistent Identifier Service | Assigns unique, resolvable identifiers to derived datasets to track provenance. | Dataverse DOI, accession numbers |

How to Leverage CatTestHub: Practical Applications in Research & Development

This guide outlines advanced strategies for querying biomedical databases, with a specific focus on the architecture and capabilities of CatTestHub. Framed within the broader thesis of CatTestHub database overview research, this document provides a technical roadmap for researchers to efficiently extract meaningful data on chemical compounds, biological targets, and experimental conditions. Effective search design is critical for accelerating drug discovery, enabling systematic reviews, and generating robust, reproducible hypotheses.

Foundational Search Principles for Biomedical Data

Biomedical database queries must balance recall (completeness) against precision (relevance). A poorly structured search can yield overwhelming noise or miss critical data.

Core Challenges:

- Terminological Variability: Synonyms, brand/generic names, acronyms, and spelling variations (e.g., "TNF-α", "TNFa", "Tumor Necrosis Factor alpha").

- Data Hierarchy: Navigating parent-child relationships (e.g., a protein kinase inhibitor search should consider specific inhibitors under that class).

- Multi-Modal Data: Integrating chemical structures, biological sequences, phenotypic outcomes, and textual annotations.

Universal Strategy:

- Conceptualization: Define the core concepts (e.g., Compound X, Target Y, Disease Z).

- Term Expansion: Use controlled vocabularies (MeSH, ChEBI, UniProt KB) to list all synonyms and related identifiers.

- Syntax Formulation: Apply database-specific field tags, Boolean operators (AND, OR, NOT), and proximity operators.

- Iterative Refinement: Use filters (species, assay type, confidence score) and analyze results to refine the strategy.
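Term expansion and syntax formulation can be combined in a small helper. The sketch below joins synonyms with OR inside each concept and joins concepts with AND; the quoting style and the function name are assumptions, since field tags and operator syntax differ between databases.

```python
def build_query(concepts):
    """OR together the synonyms within a concept, then AND across concepts."""
    groups = []
    for synonyms in concepts:
        quoted = ['"{}"'.format(term) for term in synonyms]
        groups.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(groups)

query = build_query([
    ["TNF-α", "TNFa", "Tumor Necrosis Factor alpha"],  # target synonyms
    ["rheumatoid arthritis"],                           # illustrative disease term
])
print(query)
```

Keeping each concept in its own parenthesized group makes later refinement simpler, because synonyms can be added or removed without touching the rest of the query.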

Compound-Centric Search Strategies

Searching for small molecules or biologics requires a multi-faceted approach.

Identifier and Name-Based Search

Always begin with known unique identifiers, then expand to names.

- Key Databases: PubChem, ChEMBL, DrugBank, CatTestHub Compound Registry.

- Strategy: Combine systematic identifiers (PubChem CID, ChEMBL ID, InChIKey, SMILES) with name searches using wildcards and Boolean OR.

Example Protocol: Retrieving All Bioactivity Data for a Compound

- Identify: Obtain the canonical SMILES or InChIKey for your compound of interest from PubChem.

- Resolve: In CatTestHub, use the exact structure search (via SMILES or structure upload) to find the internal compound key.

- Expand: Use the database's "Similar Compounds" function (based on Tanimoto fingerprint similarity >0.85) to include close analogs.

- Retrieve: Link the compound key(s) to all associated bioassay results, ADMET profiles, and synthetic protocols within the system.

Structure and Substructure Search

Used for scaffold hopping and finding novel analogs.

- Substructure Search: Finds all molecules containing a specific chemical framework.

- Similarity Search: Uses molecular fingerprints (e.g., ECFP4) to compute Tanimoto coefficients.

Table 1: Impact of Tanimoto Coefficient Threshold on Search Results

| Similarity Threshold | Expected Outcome | Use Case |

|---|---|---|

| 1.0 (Identity) | Exact match only. | Confirming compound presence. |

| 0.9 - 0.95 | Very close analogs, minor modifications. | Patent circumvention, lead optimization. |

| 0.7 - 0.85 | Similar chemotype, scaffold hopping. | Novel lead discovery, SAR exploration. |

| < 0.6 | Broad, diverse structures. | Virtual screening, library diversity analysis. |
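The Tanimoto coefficient behind these thresholds is the number of shared on-bits divided by the number of on-bits in either fingerprint. The sketch below represents fingerprints as sets of bit indices; the indices and compound names are invented, and a real ECFP4 fingerprint would come from a cheminformatics toolkit.

```python
def tanimoto(bits_a, bits_b):
    """Shared on-bits divided by the union of on-bits (0.0 if both are empty, by choice)."""
    a, b = set(bits_a), set(bits_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

query_fp = {1, 2, 3, 4, 5, 6, 7}
library = {
    "cmpd_1": {1, 2, 3, 4, 5, 6, 7},        # identical fingerprint
    "cmpd_2": {1, 2, 3, 4, 5, 6, 7, 8},     # one extra bit
    "cmpd_3": {1, 2, 9, 10},                # mostly different
}

scores = {name: tanimoto(query_fp, fp) for name, fp in library.items()}
close_analogs = sorted(name for name, s in scores.items() if s >= 0.85)
```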

Target-Centric Search Strategies

Focuses on proteins, genes, or nucleic acids involved in a biological pathway.

Identifier and Annotation Search

- Key Databases: UniProt, GenBank, PDB, CatTestHub Target Ontology.

- Strategy: Use official gene symbols (HGNC), UniProt IDs, and EC numbers. Map all synonyms.

Example Protocol: Identifying All Modulators of a Kinase Target

- Define Target: Retrieve the primary UniProt ID (e.g., `P36888` for FLT3 kinase).

- Hierarchical Query: In CatTestHub, query the target ID to retrieve its entry. Programmatically fetch all child entries linked by "has_isoform" or "has_splice_variant".

- Assay Linkage: Join the target key list to the bioassay table where `assay_target_type = 'single-protein'`.

- Compound Join: Link resulting assays to the compound activity table, filtering for `activity_standard_value < 10000 nM` (i.e., active compounds).

- Filter by Confidence: Apply a confidence filter (e.g., `data_confidence_score > 0.7`) to the final compound-target pair list.
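The join-and-filter logic of this protocol can be sketched on in-memory records. The field names follow the protocol text, but the record layout, the identifiers, and the function name are hypothetical; the actual CatTestHub schema and API may differ.

```python
target_ids = {"P36888"}  # FLT3; isoform and splice-variant keys would be added here

assays = [
    {"assay_id": "A1", "target_id": "P36888", "assay_target_type": "single-protein"},
    {"assay_id": "A2", "target_id": "P36888", "assay_target_type": "cell-based"},
]
activities = [
    {"assay_id": "A1", "compound_id": "C1", "activity_standard_value": 12.0, "data_confidence_score": 0.90},
    {"assay_id": "A1", "compound_id": "C2", "activity_standard_value": 25000.0, "data_confidence_score": 0.95},
    {"assay_id": "A1", "compound_id": "C3", "activity_standard_value": 300.0, "data_confidence_score": 0.50},
    {"assay_id": "A2", "compound_id": "C4", "activity_standard_value": 5.0, "data_confidence_score": 0.99},
]

def find_modulators(target_ids, assays, activities, max_nm=10000, min_confidence=0.7):
    """Return compound IDs active against the targets in single-protein assays."""
    assay_ids = {a["assay_id"] for a in assays
                 if a["target_id"] in target_ids
                 and a["assay_target_type"] == "single-protein"}
    return sorted({act["compound_id"] for act in activities
                   if act["assay_id"] in assay_ids
                   and act["activity_standard_value"] < max_nm
                   and act["data_confidence_score"] > min_confidence})

modulators = find_modulators(target_ids, assays, activities)
```

In this toy data only `C1` passes all three filters: `C2` is too weak, `C3` falls below the confidence cutoff, and `C4` comes from a cell-based assay.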

Pathway and System Biology Search

Targets are understood in context. Searches should extend to interacting partners and pathway membership.

Diagram 1: Target-In-Context Search Workflow

Condition-Centric Search (Disease/Phenotype)

Searches for data related to a specific disease, cellular phenotype, or experimental perturbation.

Ontology-Driven Search

Using standardized vocabularies is non-negotiable for reproducibility.

- Key Ontologies: MeSH (diseases), DOID (Disease Ontology), EFO (Experimental Factor Ontology), HP (Human Phenotype Ontology).

- Strategy: Map colloquial disease terms to ontology IDs, then query using those IDs and their hierarchical children.

Table 2: Ontology Mapping for Common Search Terms

| Common Search Term | Preferred Ontology | Ontology ID | Children (Example) |

|---|---|---|---|

| "Breast Cancer" | DOID | DOID:1612 | DOID:3001 (HER2+ Breast Ca.), DOID:0060081 (Triple Negative) |

| "Alzheimer's" | MeSH | D000544 | D0000653 (Early-Onset), Tree terms under C10.228.140.380 |

| "Inflammation" | EFO | EFO:0000727 | EFO:0003785 (Chronic Inflammation) |

| "Hypertension" | HP | HP:0000822 | HP:0010826 (Systolic Hypertension) |

Multi-Faceted Filtering for Assay Conditions

Experimental context (cell line, organism, endpoint) drastically impacts data interpretation.

Example Protocol: Finding Compounds Active in a Specific Disease Model

- Condition ID: Resolve "idiopathic pulmonary fibrosis" to MeSH ID `D011658`.

- Assay Query: Search CatTestHub assay descriptions for MeSH ID `D011658` OR its child terms.

- Model Filter: Add filters: `assay_organism = "Homo sapiens"` AND `assay_cell_type = "primary alveolar epithelial cells"` OR `assay_description` contains "bleomycin model".

- Endpoint Filter: Add `assay_endpoint` IN ("collagen deposition", "TGF-β secretion", "cell viability").

- Data Aggregation: Retrieve compounds tested under these filtered assays, grouping by `mechanism_of_action` annotation.
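The model filter in this protocol combines AND and OR without explicit grouping, and the two possible readings return different assays. The sketch below shows both readings on a hypothetical record; the source does not state which grouping is intended, so the choice should be made explicit with parentheses in whatever query syntax is used.

```python
def matches_ungrouped(assay):
    """(organism AND cell type) OR description: AND binds tighter than OR."""
    return (assay["assay_organism"] == "Homo sapiens"
            and assay["assay_cell_type"] == "primary alveolar epithelial cells"
            or "bleomycin model" in assay["assay_description"])

def matches_grouped(assay):
    """organism AND (cell type OR description)."""
    return (assay["assay_organism"] == "Homo sapiens"
            and (assay["assay_cell_type"] == "primary alveolar epithelial cells"
                 or "bleomycin model" in assay["assay_description"]))

# Hypothetical mouse assay that mentions the bleomycin model.
mouse_assay = {
    "assay_organism": "Mus musculus",
    "assay_cell_type": "lung tissue",
    "assay_description": "bleomycin model of lung fibrosis",
}

ungrouped_result = matches_ungrouped(mouse_assay)  # True: description alone suffices
grouped_result = matches_grouped(mouse_assay)      # False: organism filter still applies
```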

Integrated Search: Combining Compounds, Targets, and Conditions

The most powerful queries intersect all three dimensions to answer complex questions (e.g., "Find all approved kinase inhibitors for solid tumors with associated biomarker data").

Diagram 2: Integrated Query Logical Architecture

Integrated Search Protocol:

- Define separate, optimized sub-queries for each domain.

- Use the assay or experiment as the central linking table (common in CatTestHub schema: `Compound <-(Activity)- Assay -> Target` and `Assay <-(Annotation)- Condition`).

- Execute as a single, nested SQL or API call if supported, or perform sequential queries with programmatic merging using a unique assay identifier as the key.
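The idea of using the assay as the central linking table can be demonstrated with an in-memory SQLite database. The table and column names below are hypothetical stand-ins rather than the actual CatTestHub schema, and the rows are invented for the demonstration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE assay (assay_id TEXT PRIMARY KEY, target_id TEXT);
CREATE TABLE activity (assay_id TEXT, compound_id TEXT);
CREATE TABLE annotation (assay_id TEXT, condition_id TEXT);
INSERT INTO assay VALUES ('A1', 'P36888'), ('A2', 'P00533');
INSERT INTO activity VALUES ('A1', 'C1'), ('A2', 'C2');
INSERT INTO annotation VALUES ('A1', 'DOID:1612'), ('A2', 'DOID:1612');
""")

# Every join goes through assay_id, so compound, target, and condition
# filters can be combined in one statement.
rows = conn.execute("""
SELECT act.compound_id, a.target_id, ann.condition_id
FROM assay AS a
JOIN activity AS act ON act.assay_id = a.assay_id
JOIN annotation AS ann ON ann.assay_id = a.assay_id
WHERE a.target_id = ? AND ann.condition_id = ?
""", ("P36888", "DOID:1612")).fetchall()
conn.close()
```

When a single nested call is not supported, the same result can be obtained by running the three sub-queries separately and merging their results on `assay_id`.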

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Resources for Validating Search Results

| Item | Function & Relevance to Search Validation |

|---|---|

| Recombinant Protein (e.g., FLT3 Kinase Domain) | Used in in vitro biochemical assays to confirm compound-target binding (Kd, IC50) predicted by database activity data. |

| Validated Cell Line (e.g., HEK293 overexpressing Target Y) | Essential for cellular functional assays to verify phenotypic activity (e.g., inhibition of phosphorylation, reporter gene expression) suggested by search results. |

| Selective Inhibitor/Antibody (Positive Control) | Critical experimental control to benchmark the activity of newly identified compounds from database searches. |

| Cryopreserved Primary Cells (Disease-Relevant) | Provides a physiologically relevant model system for testing compounds identified via condition-centric searches. |

| LC-MS/MS System | Used for analytical validation of compound identity and purity, and for assessing metabolic stability (ADMET) parameters aligned with database predictions. |

| High-Content Imaging System | Enables multiparametric phenotypic screening to confirm complex cellular outcomes inferred from database-condition associations. |

Designing effective searches within comprehensive platforms like CatTestHub requires a methodical, layered approach that respects the complexity of biomedical data. By leveraging precise identifiers, controlled ontologies, and understanding the underlying relational schema, researchers can transform vague questions into precise, executable queries. This process, central to the CatTestHub overview thesis, is not merely data retrieval but a fundamental step in constructing biologically sound and translatable research narratives. The iterative cycle of search, retrieval, validation, and refinement remains the cornerstone of data-driven discovery.

Integrating CatTestHub Data into Target Identification and Validation Workflows

This whitepaper, framed within the broader thesis on the CatTestHub database overview research, details the technical integration of CatTestHub's extensive multi-omics and phenotypic screening data into modern target identification and validation pipelines. The CatTestHub platform consolidates data from CRISPR knockout screens, proteomic profiling, chemical-genetic interactions, and clinical biomarker datasets, providing a unified resource for hypothesis generation and experimental de-risking in early drug discovery.

CatTestHub aggregates data from over 500 independent studies, encompassing more than 30 cancer types. The core quantitative data is summarized in the tables below.

Table 1: CatTestHub Core Data Modules

| Data Module | Description | Number of Datasets | Primary Species | Key Assay Types |

|---|---|---|---|---|

| Functional Genomics | Genome-wide CRISPR-Cas9 loss-of-function screens | 127 | Human (Cell Lines) | DepMap, Project Achilles |

| Proteomic Profiling | Mass spectrometry-based protein abundance & PTM | 89 | Human (Tissues/Cell Lines) | TMT, LFQ, Phosphoproteomics |

| Chemical-Genetic Interactions | Small molecule sensitivity linked to genetic features | 76 | Human (Cell Lines) | PRISM, GDSC, CTRP |

| Clinical Biomarkers | Genomic and transcriptomic data from patient cohorts | 215 | Human (Patient Samples) | TCGA, ICGC, CPTAC |

Table 2: Key Quantitative Metrics from Functional Genomics Module

| Metric | Value | Description |

|---|---|---|

| Total Gene Essentiality Scores | ~18,000 genes x ~1,000 cell lines | Chronos scores quantifying gene dependency |

| Selective Essential Genes | ~2,500 genes | Genes essential in specific lineages/genetic backgrounds |

| Synthetic Lethal Interactions | ~350,000 high-confidence pairs | Predicted from co-dependency patterns |

| Minimum Viable Data Quality Score | 0.7 (out of 1.0) | Threshold for dataset inclusion based on reproducibility metrics |

Experimental Protocols for Integration

Protocol A: Prioritizing Novel Oncology Targets Using Integrated Dependency Maps

Objective: To identify and prioritize high-confidence, tissue-selective therapeutic targets by integrating CRISPR essentiality data with proteomic expression.

Materials & Reagents:

- CatTestHub Processed Data Tables (Chronos scores, protein abundance TPM).

- Control siRNA or sgRNA libraries (e.g., Horizon Discovery).

- Target validation cell panel (minimum 5 cell lines with varying dependency scores).

- Incucyte Live-Cell Analysis System or equivalent for proliferation/apoptosis assays.

- Annexin V-FITC/PI Apoptosis Detection Kit.

Methodology:

- Data Retrieval & Filtering: Query CatTestHub API for genes with Chronos essentiality score < -1.0 in a cancer lineage of interest (e.g., pancreatic adenocarcinoma) and in >20% of cell lines within that lineage.

- Proteomic Overlay: Filter the resulting gene list by overlapping with proteins detected at high abundance (top 25th percentile) in primary tumor samples from the corresponding CatTestHub clinical proteomics dataset.

- Off-Target Toxicity Check: Cross-reference prioritized genes with essentiality scores in vital normal tissues (e.g., heart, liver organoids) available in CatTestHub's normal tissue modules. Exclude genes with Chronos score < -0.5 in any normal tissue model.

- In Vitro Validation: Transfer the top 5 candidates to experimental validation using siRNA-mediated knockdown in the selected cell panel. Monitor cell proliferation and apoptosis over 96 hours.

- Data Analysis: Calculate the log2 fold change in cell count relative to non-targeting control. Correlate the magnitude of phenotype with the original CatTestHub Chronos score using Pearson correlation; an R² > 0.7 is taken as support for the computational prediction.
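The computational filtering steps of Protocol A (dependency cutoff, lineage fraction, and normal-tissue exclusion) can be sketched as follows. The proteomic overlay is omitted for brevity, and the gene names, scores, and function name are hypothetical; real scores would come from the CatTestHub data tables.

```python
def prioritize(tumor_scores, normal_scores, essential_cutoff=-1.0,
               min_fraction=0.20, normal_cutoff=-0.5):
    """Keep genes essential in more than min_fraction of lineage cell lines
    and not essential in any normal tissue model."""
    selected = []
    for gene, scores in tumor_scores.items():
        fraction = sum(s < essential_cutoff for s in scores) / len(scores)
        if fraction <= min_fraction:
            continue  # not essential in enough cell lines of the lineage
        if any(s < normal_cutoff for s in normal_scores.get(gene, [])):
            continue  # potential toxicity in a normal tissue model
        selected.append(gene)
    return selected

# Hypothetical Chronos scores across five cell lines of one lineage.
tumor_scores = {
    "GENE_A": [-1.5, -1.2, -0.3, -0.1, 0.0],   # essential in 40% of lines
    "GENE_B": [-1.4, -1.3, -1.1, -0.2, 0.1],   # essential in 60% of lines
    "GENE_C": [-1.1, 0.0, 0.0, 0.0, 0.1],      # essential in exactly 20%
}
normal_scores = {
    "GENE_A": [-0.1, 0.0],
    "GENE_B": [-0.8],       # essential in a normal tissue model
    "GENE_C": [0.0],
}

candidates = prioritize(tumor_scores, normal_scores)
```

Here `GENE_B` is dropped by the normal-tissue check and `GENE_C` fails because the protocol requires strictly more than 20% of cell lines.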

Protocol B: Validating Mechanism of Action (MoA) Using Chemical-Genetic Interaction Data

Objective: To use CatTestHub chemical-genetic profiles to hypothesize and test the MoA of a novel compound.

Materials & Reagents:

- CatTestHub PRISM or GDSC synergy scores.

- Compound of interest (COI).

- Isobologram analysis software (e.g., Combenefit).

- Isogenic cell pair (wild-type vs. gene knockout/knockdown for hypothesized target).

- Western blot reagents for downstream pathway analysis.

Methodology:

- Signature Matching: Input the COI's sensitivity profile (IC50 values across the cell line panel) into the CatTestHub similarity search tool. Identify known compounds with the highest Pearson correlation (e.g., r > 0.6) to suggest a shared MoA.

- Genetic Predictor Identification: Extract from CatTestHub the list of genetic features (mutations, amplifications, dependencies) most strongly associated with sensitivity/resistance to the matched reference compounds (Wilcoxon rank-sum test, FDR < 0.1).

- Hypothesis-Driven Validation: Select the top genetic predictor (e.g., KEAP1 mutation). Test the COI in an isogenic pair of cell lines (KEAP1 WT vs. KEAP1 mutant). The expected validation is significantly increased potency (ΔIC50 > 2-fold) in the mutant line.

- Pathway Confirmation: Treat sensitive and resistant lines with COI and perform western blotting for downstream pathway components suggested by the CatTestHub pathway enrichment analysis of correlated genetic features (e.g., NRF2 activation status).
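The signature-matching step of Protocol B amounts to correlating the COI's sensitivity profile with each reference compound's profile across the same cell lines. The sketch below uses a hand-written Pearson correlation and invented IC50 values; the compound names and the function names are hypothetical, and the CatTestHub similarity tool may apply additional transformations.

```python
def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def match_signatures(coi_profile, references, min_r=0.6):
    """Return reference compounds whose profile correlates with the COI above min_r."""
    scored = {name: pearson(coi_profile, profile) for name, profile in references.items()}
    return sorted(name for name, r in scored.items() if r > min_r), scored

# Hypothetical IC50 values (µM) across the same five cell lines.
coi = [0.1, 1.0, 10.0, 0.5, 5.0]
references = {
    "ref_a": [0.2, 2.0, 20.0, 1.0, 10.0],   # same pattern, two-fold weaker
    "ref_b": [10.0, 1.0, 0.1, 5.0, 0.5],    # opposite pattern
}

matches, correlations = match_signatures(coi, references)
```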

Visualizing Integration Workflows & Pathways

Figure 1: High-Level Data Integration Workflow from CatTestHub to Validation

Figure 2: Example Signaling Pathway Inferred from Integrated CatTestHub Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents & Tools for Integration Workflows

| Item Name / Category | Supplier Examples | Function in Workflow |

|---|---|---|

| Validated sgRNA/siRNA Libraries | Horizon Discovery, Sigma-Aldrich, Dharmacon | Experimental perturbation of targets identified from CatTestHub dependency data. |

| Recombinant Proteins (Kinases, etc.) | Sino Biological, Proteintech | In vitro biochemical assays to confirm direct target engagement hypothesized from chemical-genetic profiles. |

| Phospho-Specific Antibodies | Cell Signaling Technology, Abcam | Validation of signaling pathway perturbations (e.g., phosphorylation sites identified in CatTestHub PTM datasets). |

| Viability/Apoptosis Assay Kits | Promega (CellTiter-Glo), BioLegend (Annexin V) | Quantification of phenotypic outcomes from target modulation, correlating with computational essentiality scores. |

| Isogenic Cell Line Pairs | ATCC, NCI-60, or custom CRISPR-engineered | Testing causality of genetic biomarkers of sensitivity/resistance extracted from CatTestHub. |

| High-Content Imaging Systems | PerkinElmer, Molecular Devices | Multiparametric phenotypic screening to capture complex MoAs suggested by integrative data analysis. |

| CatTestHub API Client & Analysis Scripts | GitHub (Custom/Community) | Programmatic access to CatTestHub data for reproducible, automated target prioritization pipelines. |

Utilizing Toxicity Profiles for Early-Stage Risk Assessment and Mitigation

The systematic compilation and analysis of toxicity profiles represent a cornerstone of modern predictive toxicology. Within the research framework of the CatTestHub database, these profiles are not merely retrospective data repositories but proactive tools for de-risking chemical and therapeutic development. This whitepaper details the methodologies for constructing, interpreting, and applying toxicity profiles to enable early-stage risk assessment and the formulation of targeted mitigation strategies.

Core Components of a Quantitative Toxicity Profile

A comprehensive toxicity profile integrates data from multiple tiers of investigation. Key quantitative endpoints are summarized in Table 1.

Table 1: Core Quantitative Endpoints for Early-Stage Toxicity Profiling

| Endpoint Category | Specific Assays/Metrics | Typical Data Output | Primary Organ System/Risk Indicated |

|---|---|---|---|

| Cytotoxicity | ATP-based Viability (CellTiter-Glo), Membrane Integrity (LDH release), Colony Formation | IC50, TC50, NOAEL (µM) | General cellular health, therapeutic index |

| Genotoxicity | Ames Test, In Vitro Micronucleus, γH2AX Foci Detection | Revertant count, Micronucleus frequency, Foci count per cell | Mutagenic potential, carcinogenicity risk |

| Mitochondrial Toxicity | Seahorse XF Analyzer (OCR, ECAR), JC-1 Membrane Potential Assay | Basal OCR, ATP-linked OCR, MMP depolarization (µM) | Metabolic disruption, organ failure |

| hERG Channel Inhibition | Patch-clamp electrophysiology, FLIPR Membrane Potential Assay | IC50 (µM) | Cardiac arrhythmia (QT prolongation) |

| CYP450 Inhibition | Fluorescent or LC-MS/MS-based enzyme activity assays | IC50 (µM) for CYP3A4, 2D6, etc. | Drug-drug interaction potential |

| Hepatotoxicity | Albumin/Urea production, Transaminase leakage (ALT/AST), Hepatic transporter inhibition | IC50, Fold-change over control | Liver injury (DILI) |

Experimental Protocols for Key Assays

High-Content Screening (HCS) for Mitochondrial Health & Genotoxicity

Objective: To concurrently assess mitochondrial membrane potential (ΔΨm) and genotoxic stress in human hepatocytes (e.g., HepG2) in a 96-well format.

Protocol:

- Cell Seeding: Seed HepG2 cells at 10,000 cells/well in collagen-coated black-walled, clear-bottom 96-well plates. Culture for 24h.

- Compound Treatment: Treat cells with an 8-point, 1:3 serial dilution of test compound (e.g., 30 µM to 0.014 µM) and vehicle control. Include positive controls (10 µM Carbonyl Cyanide 3-chlorophenylhydrazone (CCCP) for ΔΨm, 100 µM Etoposide for genotoxicity). Incubate for 48h.

- Staining: Load cells with 100 nM Tetramethylrhodamine, Ethyl Ester (TMRE) for ΔΨm and 5 µg/mL Hoechst 33342 for nuclei. Incubate 30 min at 37°C.

- Fixation & Immunostaining: Fix with 4% PFA for 15 min, permeabilize with 0.2% Triton X-100, and block with 3% BSA. Incubate with anti-γH2AX (Ser139) primary antibody (1:1000) for 2h, followed by Alexa Fluor 488-conjugated secondary antibody (1:500) for 1h.

- Imaging & Analysis: Acquire 9 fields/well using a 20x objective on a high-content imager (e.g., ImageXpress Micro). Analyze using CellProfiler: segment nuclei (Hoechst), measure intensity of TMRE (Cy3 channel) per cell, and identify γH2AX foci (FITC channel) per nucleus.

- Data Calculation: Calculate ΔΨm loss as % of cells with TMRE intensity < vehicle control threshold. Genotoxicity is reported as mean γH2AX foci per nucleus. Generate dose-response curves for both endpoints.
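The two per-well readouts in the data calculation step reduce to simple summaries of per-cell measurements. The sketch below assumes the TMRE threshold has already been derived from vehicle control wells (the protocol does not specify how), and the intensities, foci counts, and function names are hypothetical.

```python
def delta_psi_loss_percent(tmre_intensities, threshold):
    """Percentage of cells whose TMRE intensity falls below the vehicle-derived threshold."""
    below = sum(1 for value in tmre_intensities if value < threshold)
    return 100.0 * below / len(tmre_intensities)

def mean_foci_per_nucleus(foci_counts):
    """Mean number of γH2AX foci per segmented nucleus."""
    return sum(foci_counts) / len(foci_counts)

# Hypothetical per-cell measurements from one treated well.
tmre = [820, 790, 310, 280, 805, 300, 760, 295]
foci = [0, 2, 5, 1]

loss = delta_psi_loss_percent(tmre, threshold=400)
genotox = mean_foci_per_nucleus(foci)
```

Repeating these calculations for each concentration gives the points for the two dose-response curves.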

In Vitro hERG Inhibition Using Patch-Clamp Electrophysiology

Objective: To quantitatively determine the inhibitory potency (IC50) of a test compound on the hERG potassium channel.

Protocol:

- Cell Preparation: Use a stable HEK293 cell line expressing the hERG channel. Maintain cells in standard culture. 24-48h pre-experiment, plate cells on poly-L-lysine coated coverslips at low density.

- Electrophysiology Setup: Use the whole-cell patch-clamp configuration at 37°C. Fill borosilicate glass pipettes (2-5 MΩ resistance) with internal solution (e.g., 130 mM KCl, 1 mM MgCl2, 10 mM HEPES, 5 mM EGTA, 5 mM MgATP, pH 7.2). Use external Tyrode’s solution (140 mM NaCl, 4 mM KCl, 1.8 mM CaCl2, 1 mM MgCl2, 10 mM HEPES, 10 mM Glucose, pH 7.4).

- Voltage Protocol & Baseline: Hold cells at -80 mV. Apply a +40 mV depolarizing pulse for 4 seconds, followed by a -50 mV repolarizing pulse for 5 seconds to elicit tail current (IhERG). Repeat every 15s. Establish stable baseline tail current amplitude.

- Compound Perfusion: Perfuse the external solution containing sequentially increasing concentrations of test compound (e.g., 0.1, 0.3, 1, 3, 10 µM). At each concentration, perfuse for ≥5 minutes until steady-state inhibition is reached.

- Data Acquisition & Analysis: Record tail current amplitude at each concentration. Normalize current to baseline. Fit normalized inhibition data (% remaining) to the Hill equation using nonlinear regression to derive IC50.
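For reference, one common form of the Hill equation used for this kind of fit is written out here. The symbols are chosen for illustration and are not taken from the source: $I/I_0$ is the fraction of baseline tail current remaining, $[C]$ is the compound concentration, and $n_H$ is the Hill coefficient.

$$
\frac{I}{I_0} = \frac{1}{1 + \left(\dfrac{[C]}{IC_{50}}\right)^{n_H}}
$$

Setting $[C] = IC_{50}$ gives $I/I_0 = 0.5$, which is the defining property of the IC50: half of the baseline current remains.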

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for Toxicity Profiling Assays

| Reagent/Kit | Supplier Examples | Primary Function in Toxicity Profiling |

|---|---|---|

| CellTiter-Glo Luminescent Viability Assay | Promega | Quantifies cellular ATP levels as a biomarker of metabolically active cells for cytotoxicity. |

| MultiTox-Fluor Multiplex Cytotoxicity Assay | Promega | Simultaneously measures live-cell protease activity (viability) and dead-cell protease activity (cytotoxicity). |

| Seahorse XF Cell Mito Stress Test Kit | Agilent | Profiles mitochondrial function in live cells by measuring Oxygen Consumption Rate (OCR) in real-time. |

| In Vitro Micronucleus Kit (Flow Cytometry-based) | MicroFlow (Litron Labs) | Automates scoring of micronuclei in cell lines or human blood lymphocytes for genotoxicity assessment. |

| hERG Fluorometric Imaging Plate Reader (FLIPR) Assay Kit | Molecular Devices | Measures hERG channel activity using a membrane-potential sensitive dye in a medium-throughput format. |

| P450-Glo CYP450 Inhibition Assays | Promega | Luciferin-derived substrates provide luminescent readouts for major CYP enzyme inhibition. |

| Human Hepatocytes (Cryopreserved) | BioIVT, Lonza | Gold-standard cell system for assessing hepatotoxicity, metabolism, and transporter effects. |

| Matrigel Matrix | Corning | Provides a basement membrane for enhanced differentiation and function in 3D hepatic co-culture models. |

Data Integration & Pathway Analysis for Mitigation

Integrating multi-endpoint data reveals mechanistic pathways, enabling targeted mitigation.

Diagram Title: Toxicity Data Integration & Mitigation Strategy Workflow

Diagram Title: Cardiac Toxicity Pathway from hERG Block to Arrhythmia

The systematic generation and CatTestHub-informed analysis of multi-parametric toxicity profiles provide an indispensable framework for early-stage risk assessment. By transitioning from singular endpoints to integrated mechanistic pathways, researchers can not only identify liabilities but also rationally design mitigation strategies—such as lead optimization to remove structural alerts or planning for targeted co-therapies—thereby accelerating the development of safer chemicals and therapeutics.

As part of the broader thesis on the CatTestHub database, this whitepaper addresses the need for standardized, data-driven approaches to benchmarking the safety profiles of novel candidate compounds against established reference drugs. CatTestHub serves as a centralized repository of curated in vitro, in silico, and in vivo toxicology data, enabling the comparative safety assessments needed to de-risk drug development pipelines.

Core Data Acquisition and Curation from CatTestHub

The foundational step involves querying the CatTestHub database for safety endpoints of both candidate compounds and established comparator drugs. Key data categories include:

- Pharmacokinetics (PK): ADME parameters (Absorption, Distribution, Metabolism, Excretion).

- Pharmacodynamics (PD): Target engagement and selectivity profiles.

- Toxicology: In vitro cytotoxicity (e.g., IC50 in hepatocytes), genotoxicity, and in vivo findings from preclinical species (e.g., NOAEL, organ-specific toxicities).

- Clinical Safety: Human tolerability data (therapeutic index, common adverse events) for approved drugs.

Table 1: Example Quantitative Safety Benchmarking Data

| Endpoint Category | Specific Metric | Established Drug (Control) | Candidate Compound A | Candidate Compound B | Benchmarking Outcome (vs. Control) |
|---|---|---|---|---|---|
| In Vitro Cytotoxicity | HepG2 IC50 (μM) | 125.0 ± 10.2 | 89.5 ± 8.7 | 15.2 ± 2.1 | A: Moderately more cytotoxic (about 1.4-fold lower IC50); B: Markedly more cytotoxic (about 8-fold lower IC50) |
| hERG Inhibition | Patch-Clamp IC50 (μM) | 35.0 ± 5.0 | 120.5 ± 15.3 | 28.5 ± 4.1 | A: Lower pro-arrhythmic risk; B: Comparable risk |
| Microsomal Stability | % Parent Remaining (30 min) | 45% | 80% | 20% | A: Higher metabolic stability; B: Lower metabolic stability |
| In Vivo (Rat) | 28-day NOAEL (mg/kg/day) | 50 | 75 | 10 | A: Higher NOAEL; B: Lower NOAEL |
| Clinical (If Applicable) | Therapeutic Index (TI) | 15 | To be determined | To be determined | N/A |
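The benchmarking outcomes in Table 1 come down to comparing each candidate value with the control value. The following is a minimal sketch of that comparison, using the mean values from Table 1 and ignoring the reported ± spread. The function names and the 1.25-fold "comparable" band are illustrative assumptions, not CatTestHub rules.

```python
# Mean values transcribed from Table 1. For every metric listed here a
# higher value is the more favorable direction (weaker cytotoxicity or hERG
# block, more parent compound remaining, higher NOAEL).
TABLE_1 = {
    "HepG2 IC50 (uM)": {"control": 125.0, "A": 89.5, "B": 15.2},
    "hERG IC50 (uM)": {"control": 35.0, "A": 120.5, "B": 28.5},
    "Microsomal % remaining": {"control": 45.0, "A": 80.0, "B": 20.0},
    "Rat 28-day NOAEL (mg/kg/day)": {"control": 50.0, "A": 75.0, "B": 10.0},
}


def fold_vs_control(candidate: float, control: float) -> float:
    """Return candidate/control; values above 1 favor the candidate."""
    return candidate / control


def classify(fold: float, band: float = 1.25) -> str:
    """Label a fold change. The 1.25-fold band is an illustrative assumption."""
    if fold >= band:
        return "more favorable"
    if fold <= 1.0 / band:
        return "less favorable"
    return "comparable"


for metric, row in TABLE_1.items():
    for name in ("A", "B"):
        fold = fold_vs_control(row[name], row["control"])
        print(f"{metric:30s} {name}: {fold:5.2f}x -> {classify(fold)}")
```

A real assessment would also account for assay variability before calling two values different; this sketch compares means only.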

Experimental Protocols for Key Benchmarking Assays

Protocol for In Vitro Cytotoxicity Benchmarking (MTT Assay)

Objective: To compare the cytotoxic potential of candidates against an established drug in hepatic cell lines.

- Cell Culture: Seed HepG2 cells in 96-well plates at 5 × 10^3 cells/well in complete DMEM. Incubate for 24 h (37°C, 5% CO2).

- Compound Treatment: Prepare serial dilutions of the established drug and candidate compounds in DMSO, keeping the final DMSO concentration in each well below 0.1%. Treat cells in triplicate across a concentration range (e.g., 0.1-100 μM, extended as needed so that it brackets the expected IC50). Include vehicle control and positive control (e.g., 1% Triton X-100) wells.

- Incubation: Incubate for 48 or 72 hours.

- MTT Assay: Add MTT reagent (0.5 mg/mL final) to each well. Incubate for 4 h. Carefully remove the medium and dissolve the formazan crystals in DMSO.

- Data Analysis: Measure absorbance at 570 nm. Calculate % viability relative to vehicle control. Determine IC50 values using 4-parameter logistic regression. Benchmark candidate IC50 against the established drug.
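The data analysis step above can be sketched in a few lines. This is an illustrative example only: it uses the standard 4-parameter logistic (4PL) model but fixes the top and bottom plateaus at 100% and 0% and finds the IC50 and Hill slope by a coarse grid search, which stands in for a proper nonlinear least-squares fit of all four parameters. The function names and the synthetic data are assumptions.

```python
import math


def percent_viability(absorbance, vehicle_mean, blank_mean=0.0):
    """Convert a 570 nm absorbance reading to % viability vs. vehicle control."""
    return 100.0 * (absorbance - blank_mean) / (vehicle_mean - blank_mean)


def four_pl(conc, bottom, top, ic50, hill):
    """4PL dose-response model (viability falls as concentration rises)."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def fit_ic50(concs, viabilities, bottom=0.0, top=100.0, steps=300):
    """Grid-search IC50 and Hill slope with the plateaus held fixed.

    Simplification: a full 4PL fit would also estimate bottom and top.
    The IC50 is only searched within the tested concentration range.
    """
    lo, hi = math.log10(min(concs)), math.log10(max(concs))
    best = None
    for i in range(steps + 1):
        ic50 = 10 ** (lo + (hi - lo) * i / steps)
        for j in range(1, 41):
            hill = 0.1 * j  # Hill slopes from 0.1 to 4.0
            sse = sum(
                (four_pl(c, bottom, top, ic50, hill) - v) ** 2
                for c, v in zip(concs, viabilities)
            )
            if best is None or sse < best[0]:
                best = (sse, ic50, hill)
    return best[1], best[2]


# Synthetic, noise-free data generated from the model itself (IC50 = 10 uM).
concs_um = [0.1, 0.3, 1.0, 3.0, 10.0, 30.0, 100.0]
viab = [four_pl(c, 0.0, 100.0, 10.0, 1.0) for c in concs_um]
ic50_um, hill_slope = fit_ic50(concs_um, viab)
print(f"Estimated IC50 = {ic50_um:.1f} uM, Hill slope = {hill_slope:.1f}")
```

Because the search is limited to the tested range, a compound whose IC50 lies above the highest tested concentration cannot be fitted reliably and should be reported as greater than that concentration.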

Protocol for In Silico Safety Pharmacophore Screening

Objective: To identify potential off-target interactions associated with adverse drug reactions.

- Pharmacophore Model Generation: Using CatTestHub's toolset, generate pharmacophore models for known adverse effects (e.g., hERG channel inhibition, phospholipidosis) based on ligand structures of drugs with known toxicity.

- Screening: Screen the 3D conformer libraries of candidate compounds and the established drug against the generated pharmacophore models.

- Scoring & Ranking: Compounds are scored based on fit value. A high fit score for a toxicity pharmacophore indicates a higher risk, enabling comparative ranking.
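The comparative ranking in the last step can be illustrated with a short sketch. The fit values below are hypothetical placeholders on a 0 to 1 scale, and the function name and data layout are assumptions; they do not represent the output format of any CatTestHub tool.

```python
# Hypothetical fit values (0 to 1) per toxicity pharmacophore model.
FIT_SCORES = {
    "established_drug": {"hERG": 0.42, "phospholipidosis": 0.30},
    "candidate_A": {"hERG": 0.25, "phospholipidosis": 0.35},
    "candidate_B": {"hERG": 0.81, "phospholipidosis": 0.55},
}


def rank_by_worst_fit(fit_scores):
    """Sort compounds by their highest fit to any toxicity pharmacophore."""
    worst = {
        compound: max(scores.items(), key=lambda kv: kv[1])
        for compound, scores in fit_scores.items()
    }
    # Highest fit first: a stronger match to a toxicity model ranks as riskier.
    return sorted(
        ((c, model, fit) for c, (model, fit) in worst.items()),
        key=lambda row: row[2],
        reverse=True,
    )


for compound, model, fit in rank_by_worst_fit(FIT_SCORES):
    print(f"{compound:18s} worst match: {model} (fit = {fit:.2f})")
```

Ranking on the single worst match keeps one strong liability from being averaged away by low scores on other models.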

Visualization of Workflows and Pathways

Diagram 1: Safety Benchmarking Workflow

Diagram 2: Key Hepatotoxicity Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Benchmarking Studies |
|---|---|
| Cryopreserved Primary Human Hepatocytes | Gold-standard cell model for assessing metabolism-mediated cytotoxicity and enzyme induction/inhibition. |
| hERG Expressing Cell Line (e.g., HEK293-hERG) | Essential for in vitro screening of pro-arrhythmic potential via patch-clamp or flux assays. |
| Metabolic Stability Kit (Human/Rat Liver Microsomes or S9 Fraction) | Contains cofactors and enzymes to measure intrinsic clearance and identify metabolites. |
| Multiplex Cytokine/Chemokine Panel (Luminex/MSD) | Quantifies biomarkers of immune activation and inflammation from in vivo samples or cell supernatants. |
| High-Content Screening (HCS) Reagent Kits (e.g., for mitochondrial membrane potential, ROS, DNA damage) | Enable multiparametric in vitro toxicology profiling in live cells. |
| Pan-Caspase Assay Kit (Fluorometric or Colorimetric) | Quantifies apoptosis induction, a key endpoint for cytotoxic compounds. |

This case study is presented as a component of a broader thesis examining the architecture and application of the CatTestHub database. CatTestHub is a comprehensive, curated knowledgebase that integrates preclinical assay data, compound profiling results, and associated biological metadata. The thesis posits that systematic interrogation of such integrated databases can significantly de-risk early-stage drug discovery by providing predictive insights into compound safety and efficacy. This document provides a technical guide on implementing CatTestHub analysis in a real-world preclinical de-risking workflow.

Our hypothetical program involves CAND-001, a novel small-molecule inhibitor targeting VEGFR2/KDR for anti-angiogenic oncology therapy. The primary objective is to use CatTestHub to predict and validate potential off-target toxicity and pharmacokinetic (PK) issues prior to initiating costly in vivo studies.

Data Mining and In Silico Profiling in CatTestHub

The initial de-risking phase involves querying the CatTestHub database for historical data on compounds with structural or target similarity to CAND-001.

Table 1: CatTestHub Query Results for Analog Compounds

| Analog ID | Similarity to CAND-001 | Primary Target | Key Off-Target Hit (from Broad Panel) | Reported In Vivo Issue |
|---|---|---|---|---|
| ANALOG-742 | 85% (Tanimoto) | VEGFR2 | hERG Channel (IC50 = 1.2 µM) | QT prolongation in canine model |
| ANALOG-919 | 78% (Tanimoto) | VEGFR2 | CYP2D6 Inhibition (IC50 = 0.8 µM) | High CLhepatic in mouse, poor PK |
| ANALOG-203 | 65% (Tanimoto) | VEGFR2/VEGFR1 | PDPK1 (Kd = 90 nM) | Pancreatic acinar cell toxicity in rat |

Based on these data, we hypothesize that CAND-001 may carry risks for: 1) cardiac toxicity via hERG interaction, 2) drug-drug interactions via CYP2D6 inhibition, possibly accompanied by the poor PK reported for ANALOG-919, and 3) organ toxicity through the off-target kinase PDPK1.
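The similarity column in Table 1 is reported as a Tanimoto coefficient. As a minimal sketch of how that value is defined, the example below computes it on toy fingerprints represented as sets of on-bit indices. The fingerprints, compound names, and the 0.65 cutoff (chosen to match the lowest similarity listed in Table 1) are illustrative assumptions; a real workflow would generate fingerprints from chemical structures with a cheminformatics toolkit.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient: shared on-bits divided by all on-bits."""
    union = fp_a | fp_b
    if not union:
        return 0.0
    return len(fp_a & fp_b) / len(union)


# Toy fingerprints: each integer is the index of a set bit (hypothetical).
query_fp = {1, 2, 3, 4, 5, 6, 7, 8, 9, 10}
library = {
    "toy_analog_1": {1, 2, 3, 4, 5, 6, 7, 8, 9, 11},  # 9 shared of 11 total
    "toy_analog_2": {1, 2, 3, 20, 21},                # 3 shared of 12 total
}

CUTOFF = 0.65  # assumed similarity threshold for retaining an analog
hits = sorted(
    name for name, fp in library.items() if tanimoto(query_fp, fp) >= CUTOFF
)
for name, fp in library.items():
    print(f"{name}: Tanimoto = {tanimoto(query_fp, fp):.2f}")
print("Retained analogs:", hits)
```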

Experimental Protocol for Hypothesis Validation

A targeted experimental plan is designed to validate the in silico predictions.

Protocol 4.1: Comprehensive In Vitro Safety Pharmacology Panel

- Objective: Quantitatively assess off-target binding of CAND-001.

- Method: Radioligand binding or functional assays are conducted against a standardized panel (e.g., Eurofins SafetyScreen44 or equivalent). CAND-001 is tested at 10 µM in singlicate, followed by IC50 determination for any target showing >50% inhibition.

- Key Reagents: CAND-001 (test article), reference controls (e.g., E-4031 for hERG), assay-ready recombinant membranes/cells, appropriate radioisotopic or fluorescent ligands.
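The triage rule in Protocol 4.1 (single-concentration screen, then IC50 determination for any target above 50% inhibition) can be sketched as a simple filter. The target names and percent-inhibition values below are hypothetical placeholders, not screening results.

```python
# Hypothetical single-concentration results: % inhibition at 10 uM.
PRIMARY_SCREEN = {
    "hERG": 78.0,
    "dopamine D2": 64.0,
    "muscarinic M1": 50.0,
    "adenosine A2A": 12.5,
}


def select_for_ic50(percent_inhibition, threshold=50.0):
    """Return targets strictly above the threshold, as the protocol states."""
    return sorted(
        target
        for target, value in percent_inhibition.items()
        if value > threshold
    )


followup = select_for_ic50(PRIMARY_SCREEN)
print("Targets advancing to IC50 determination:", followup)
```

A target sitting exactly at 50% is excluded here because the protocol specifies ">50%". With singlicate data, borderline values like this are good candidates for a repeat measurement.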

Protocol 4.2: Cytochrome P450 Inhibition Assay

- Objective: Determine the potential for drug-drug interactions.

- Method: Use human liver microsomes (HLM) with probe substrates for major CYP isoforms (1A2, 2C9, 2C19, 2D6, 3A4). Measure metabolite formation via LC-MS/MS in the presence of CAND-001 (0.1-100 µM).

- Key Reagents: Pooled HLM, CYP-specific probe substrates (e.g., Phenacetin for 1A2, Dextromethorphan for 2D6), NADPH regeneration system, LC-MS/MS instrumentation.

Protocol 4.3: Kinase Selectivity Profiling

- Objective: Confirm PDPK1 (and other kinase) off-target activity.

- Method: Employ a high-throughput kinase assay platform (e.g., KinomeScan or radiometric assay). Test CAND-001 at 1 µM against a panel of 400+ human kinases.

- Key Reagents: CAND-001, kinase assay kits, ATP, specific kinase substrates, detection reagents (e.g., streptavidin-coated beads for KinomeScan).

Results and Data Integration into CatTestHub

Experimental results are synthesized and compared to the initial database predictions.

Table 2: Validation Results vs. CatTestHub Prediction

| Risk Parameter | CatTestHub Prediction | Experimental Result for CAND-001 | Risk Level |
|---|---|---|---|
| hERG Activity | High Risk (from ANALOG-742) | IC50 = 3.1 µM | Medium-High |
| CYP2D6 Inhibition | High Risk (from ANALOG-919) | IC50 = 5.2 µM | Medium |
| PDPK1 Inhibition | Medium Risk (from ANALOG-203) | Kd = 220 nM | Confirmed Medium |
| New Finding: JAK2 Inhibition | Not Predicted | Kd = 150 nM | Low-Medium |
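One way to read Table 2 against the analog data in Table 1 is to express each CAND-001 result as a fold shift relative to the analog that motivated the prediction. The sketch below does this after normalizing units to nM; the helper names are assumptions, and the values are taken from the two tables.

```python
UNIT_TO_NM = {"nM": 1.0, "uM": 1000.0}

# (analog value, unit) and (CAND-001 value, unit), from Table 1 and Table 2.
PAIRS = {
    "hERG (IC50)": ((1.2, "uM"), (3.1, "uM")),
    "CYP2D6 (IC50)": ((0.8, "uM"), (5.2, "uM")),
    "PDPK1 (Kd)": ((90.0, "nM"), (220.0, "nM")),
}


def to_nm(value: float, unit: str) -> float:
    """Convert a potency value to nanomolar."""
    return value * UNIT_TO_NM[unit]


def potency_shift(analog, candidate) -> float:
    """Fold by which CAND-001 is weaker (>1) or stronger (<1) than the analog."""
    return to_nm(*candidate) / to_nm(*analog)


for liability, (analog, candidate) in PAIRS.items():
    shift = potency_shift(analog, candidate)
    print(f"{liability:14s} CAND-001 is {shift:.1f}-fold weaker than its analog")
```

In all three cases CAND-001 is less potent at the off-target than the corresponding analog, which is consistent with the risk levels in Table 2 being at or below the predicted levels.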

The workflow of the de-risking strategy is summarized below.

Diagram Title: CatTestHub-Powered Preclinical De-Risking Workflow

The mechanism of the primary target and identified off-target risks can be visualized.

Diagram Title: CAND-001 Target Mechanism vs. Off-Target Risk Pathways

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Preclinical De-Risking Assays

| Reagent / Material | Provider Examples | Function in De-Risking |
|---|---|---|
| Broad-Panel SafetyScreen Assays | Eurofins, Reaction Biology | Provides a standardized, high-throughput in vitro panel to assess activity against a wide range of GPCRs, ion channels, transporters, and enzymes. |
| hERG Channel Assay Kit | MilliporeSigma, Thermo Fisher | Specifically measures compound inhibition of the hERG potassium channel using patch-clamp or flux-based methods. Critical for cardiac risk assessment. |
| Pooled Human Liver Microsomes (HLM) | Corning, XenoTech, BioIVT | Essential for in vitro metabolism studies, including CYP inhibition, reaction phenotyping, and intrinsic clearance determination. |
| Kinome-Wide Profiling Service | DiscoverX (KinomeScan), Carna Biosciences | Determines kinase selectivity by testing compound binding or activity against hundreds of human kinases, identifying off-target liabilities. |
| Cryopreserved Hepatocytes | BioIVT, Lonza | Used for more advanced metabolic stability, metabolite identification, and transporter studies, providing a more physiologically relevant cell-based system. |
| LC-MS/MS System | Sciex, Waters, Agilent | The analytical backbone for quantifying drugs/metabolites in PK/PD and in vitro metabolism assays with high sensitivity and specificity. |

This case study demonstrates the practical application of CatTestHub to guide hypothesis-driven experimentation: the predicted liabilities (hERG, CYP2D6, PDPK1) were each confirmed experimentally, at weaker potency than in the corresponding analogs, and a new potential liability (JAK2) was identified. The integrated data support a decision to proceed with lead optimization focused on mitigating the hERG and CYP2D6 activities before advancing CAND-001. The results are uploaded back into CatTestHub, enriching the database for future queries. This outcome supports the core thesis that a systematically applied preclinical knowledgebase can help de-risk drug development programs through predictive analytics and iterative learning.

As part of the broader thesis on the CatTestHub database, this technical guide addresses the challenge of integrating high-throughput feline genomic and phenotypic data from CatTestHub with external, specialized bioinformatic pipelines. Effective export and integration allow researchers and drug development professionals to translate raw data into actionable biological insights, particularly in comparative genomics and model organism studies.

CatTestHub Data Architecture and Export Modules

CatTestHub is structured as a relational database with modules for genomic variants, phenotypic assays, clinical trial metadata, and proteomic profiles. Data export is facilitated through both a graphical user interface (GUI) for ad-hoc queries and an Application Programming Interface (API) for programmatic, high-volume access.
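Once variant data have been exported, a downstream pipeline typically begins by parsing the file. The sketch below reads records in the standard tab-delimited VCF layout (CHROM, POS, ID, REF, ALT, ...) using only the Python standard library. It does not call the CatTestHub API, whose endpoints are not described here, and the example records are hypothetical.

```python
from typing import Iterable, Iterator, NamedTuple


class Variant(NamedTuple):
    chrom: str
    pos: int
    ref: str
    alt: str
    kind: str


def parse_vcf(lines: Iterable[str]) -> Iterator[Variant]:
    """Yield one Variant per ALT allele, skipping header and blank lines."""
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue
        chrom, pos, _id, ref, alts = line.rstrip("\n").split("\t")[:5]
        for alt in alts.split(","):
            if alt.startswith("<"):
                kind = "SV"  # symbolic allele such as <DEL>
            elif len(ref) == 1 and len(alt) == 1:
                kind = "SNP"
            else:
                kind = "INDEL"
            yield Variant(chrom, int(pos), ref, alt, kind)


# Hypothetical records in VCF layout, for illustration only.
EXAMPLE = [
    "##fileformat=VCFv4.2",
    "#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO",
    "chrA1\t12345\t.\tG\tA\t50\tPASS\tDP=30",
    "chrB2\t67890\t.\tCT\tC\t42\tPASS\tDP=18",
]

for variant in parse_vcf(EXAMPLE):
    print(variant)
```

For multi-gigabyte exports, passing an open file handle to `parse_vcf` streams records one at a time instead of loading the whole file into memory.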

Table 1: CatTestHub Primary Data Tables and Export Formats

| Data Table | Primary Content | Supported Export Formats | Typical Volume per Export |

|---|---|---|---|

| Variant Calls | SNP, INDEL, structural variants | VCF, CSV, JSON | 1 GB - 50 GB |