Mastering Z-Score Analysis: A Complete Guide for Normal Distribution of Catalytic Data in Biomedical Research

This comprehensive guide explores the application of Z-score analysis for normally distributed catalytic data in biomedical research and drug development.

Abstract

This comprehensive guide explores the application of Z-score analysis for normally distributed catalytic data in biomedical research and drug development. We establish the foundational principles of the normal distribution and Z-scores, detail a step-by-step methodology for application to enzymatic and reaction data, address common challenges in real-world datasets, and validate the approach through comparison with alternative statistical methods. The article provides researchers and scientists with practical strategies for robust data standardization, outlier detection, and quality control in catalytic studies.

Understanding the Basics: Normal Distribution and Z-Scores in Catalytic Data Analysis

Why Normal Distribution Matters for Enzymatic and Catalytic Data

Enzymatic and catalytic data, derived from high-throughput screening (HTS), kinetic assays, and inhibition studies, are foundational to drug discovery and biochemical research. The inherent variability in these measurements—due to instrumental noise, biological heterogeneity, and experimental conditions—often conforms to a Normal (Gaussian) distribution. Within the broader thesis of employing Z-score normalization for catalytic data research, recognizing and leveraging this distribution is critical. It allows for robust statistical standardization, enabling accurate comparison of data across different plates, batches, and laboratories. This application note details the protocols and rationale for applying normal distribution-based Z-score methods to enzymatic data, enhancing reliability in hit identification and structure-activity relationship (SAR) analysis.

The Statistical Foundation: Z-Scores for Normalized Data

The Z-score transforms raw data into a standardized scale, describing how many standard deviations an observation lies from the mean. For a dataset assumed to be normally distributed:

Z = (X - μ) / σ

where X is the raw data point, μ is the population mean, and σ is the population standard deviation.

Table 1: Interpretation of Z-scores in Enzymatic Screening

| Z-score Range | Interpretation in Inhibition Assay | Action in Primary Screening |

|---|---|---|

| Z ≤ -3 | Strong inhibitory signal | Priority hit for validation |

| -3 < Z ≤ -2 | Moderate inhibitory signal | Secondary hit candidate |

| -2 < Z < 2 | Normal enzyme activity (noise) | No action |

| Z ≥ 3 | Strong activation or artifact | Investigate for errors/activation |

Core Experimental Protocols

Protocol 3.1: High-Throughput Enzymatic Assay for Z-score Normalization

Objective: To generate normally distributed catalytic activity data suitable for Z-score analysis from a target kinase. Materials: See "The Scientist's Toolkit" below. Procedure:

- Plate Setup: Dispense 10 µL of assay buffer into all wells of a 384-well plate. Include 32 control wells for high activity (no inhibitor, DMSO only) and 32 for low activity (saturating concentration of a known inhibitor).

- Compound Addition: Using a non-contact dispenser, add 100 nL of test compound in DMSO to appropriate wells. For controls, add DMSO only.

- Enzyme Addition: Add 10 µL of kinase solution (2 nM final concentration) to all wells. Incubate for 10 minutes at room temperature.

- Substrate/ATP Addition: Initiate the reaction by adding 10 µL of a substrate/ATP mix (ATP at Km concentration, substrate at optimal concentration for the detection method).

- Incubation: Incubate plate for 60 minutes at 25°C, protected from light.

- Detection: Add detection reagent per manufacturer's instructions (e.g., luminescent, fluorescent). Read signal on a plate reader.

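- Data Processing: Calculate % inhibition for each well: %Inh = (1 - (Signal_Low - Sample)/(Signal_Low - Signal_High)) * 100, where Signal_Low and Signal_High are the aggregate signals (typically the plate means) of the low-activity and high-activity control wells.

- Z-score Calculation: For each plate, calculate the mean (μ) and standard deviation (σ) of the % inhibition values for all test compound wells (excluding controls). Compute the Z-score for each well. Because these Z-scores are computed on % inhibition, inhibitors receive positive values; the negative thresholds in Table 1 apply when Z is computed on the enzyme activity signal itself.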
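The data-processing and Z-score steps of Protocol 3.1 can be sketched as follows. This is a minimal illustration using only the Python standard library; the function names and the raw signal values are hypothetical, and the control signals are assumed to be summarized by their plate means.

```python
from statistics import mean, stdev

def percent_inhibition(sample, signal_low, signal_high):
    """% inhibition from a raw signal.

    signal_low  = mean signal of the low-activity (fully inhibited) controls
    signal_high = mean signal of the high-activity (DMSO only) controls
    """
    return (1 - (signal_low - sample) / (signal_low - signal_high)) * 100

def plate_z_scores(values):
    """Z-score each value against the plate's own mean and sample SD."""
    mu = mean(values)
    sigma = stdev(values)  # sample SD (n - 1), used as an estimate of sigma
    return [(v - mu) / sigma for v in values]

# Hypothetical raw signals for one plate (arbitrary units).
low_controls = [100.0, 110.0, 90.0]        # saturating inhibitor
high_controls = [1000.0, 1020.0, 980.0]    # DMSO only
compound_signals = [990.0, 1010.0, 1000.0, 995.0, 1005.0, 550.0]

signal_low = mean(low_controls)
signal_high = mean(high_controls)
inhibition = [percent_inhibition(s, signal_low, signal_high)
              for s in compound_signals]
z_scores = plate_z_scores(inhibition)

for signal, inh, z in zip(compound_signals, inhibition, z_scores):
    print(f"signal={signal:7.1f}  %Inh={inh:6.1f}  Z={z:6.2f}")
```

With only a handful of wells the largest attainable Z-score is small, so thresholds such as |Z| ≥ 3 only become meaningful at realistic plate sizes.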

Protocol 3.2: Validating Data Normality for Catalytic Parameters (IC₅₀, kcat/Km)

Objective: To test if derived catalytic parameters from replicate experiments follow a normal distribution, justifying Z-score use for outlier identification. Procedure:

- Data Generation: Perform a full dose-response assay (e.g., 10-point, 3-fold serial dilution in triplicate) for a reference inhibitor across 20 independent experimental runs.

- Parameter Fitting: For each run, fit the dose-response data to a four-parameter logistic model to determine the IC₅₀ value. For kinetic parameters, perform Michaelis-Menten analysis per run.

- Normality Test: Collect all fitted IC₅₀ values (n=20) and convert them to log(IC₅₀), the scale on which Z-scores will be calculated. Perform the Shapiro-Wilk test using statistical software (α=0.05).

- Visualization: Create a Q-Q plot. If points approximate a straight line, normality is supported.

- Application: If normality holds, calculate μ and σ for the log(IC₅₀) values. The Z-score for any new experimental IC₅₀, computed on the same log scale, can identify statistically significant shifts in potency.
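As a rough sketch of the application step, the snippet below scores a new IC₅₀ against historical runs on the log₁₀ scale. The function name and the IC₅₀ values are hypothetical (ten illustrative runs rather than the twenty the protocol calls for), and normality of log(IC₅₀) is assumed to have been checked already.

```python
from math import log10
from statistics import mean, stdev

def log_ic50_z(new_ic50, historical_ic50s):
    """Z-score of a new IC50 relative to historical runs, on the log10 scale."""
    logs = [log10(v) for v in historical_ic50s]
    mu = mean(logs)
    sigma = stdev(logs)  # sample SD as an estimate of sigma
    return (log10(new_ic50) - mu) / sigma

# Hypothetical reference-inhibitor IC50 values (nM) from independent runs.
historical_nM = [10.0, 12.0, 9.0, 11.0, 10.5, 9.5, 13.0, 8.5, 10.0, 11.5]

z_typical = log_ic50_z(10.0, historical_nM)   # a run close to the historical centre
z_shifted = log_ic50_z(30.0, historical_nM)   # a threefold loss of potency

print(f"Z (10 nM) = {z_typical:.2f}")
print(f"Z (30 nM) = {z_shifted:.2f}")
```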

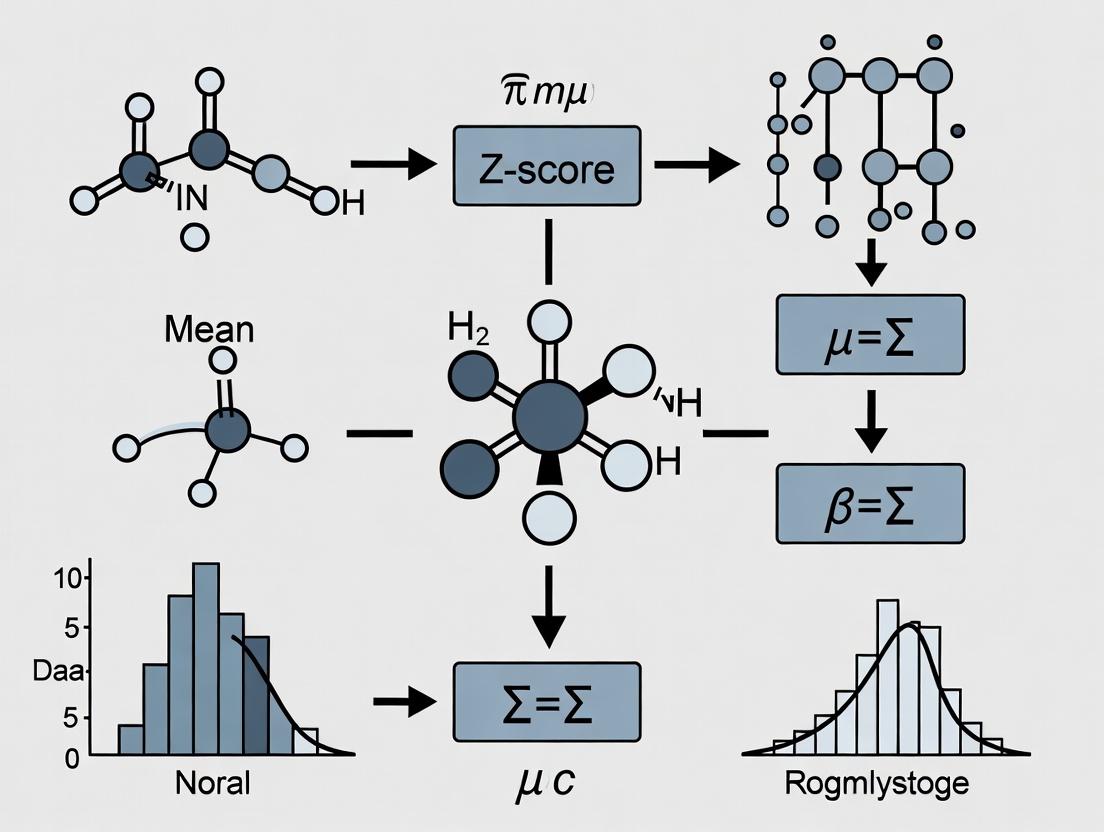

Visualization of Workflows and Relationships

Workflow for Z-score Based Hit Identification in HTS

Logical Relationship: Normal Distribution Enables Robust Analysis

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Enzymatic Z-score Studies

| Item | Function in Protocol | Example/Note |

|---|---|---|

| Recombinant Enzyme | Catalytic target of interest. | Human kinase, ≥90% purity, aliquoted at -80°C. |

| Fluorogenic/Luminescent Substrate | Generates signal proportional to activity. | Peptide substrate with FITC or Eu-chelate label. |

| ATP Solution | Essential co-substrate for kinases, etc. | Prepared fresh in assay buffer; used at Km. |

| Reference Inhibitor (Control) | Provides low-activity control for normalization. | Well-characterized, potent inhibitor (e.g., Staurosporine for kinases). |

| Assay Buffer | Maintains optimal pH and enzyme stability. | Typically contains Tris/Hepes, Mg²⁺, DTT, BSA, 0.01% Tween-20. |

| DMSO (100%) | Vehicle for compound libraries. | Use low-batch, high-purity; keep final concentration ≤1%. |

| Detection Reagent | Stops reaction and generates readout. | ADP-Glo, HTRF, or other commercial kits. |

| 384-Well Assay Plates | Reaction vessel for HTS. | Low-volume, white, solid-bottom plates for luminescence. |

| Liquid Handling System | Ensures precision and reproducibility. | Non-contact acoustic dispenser for compound addition. |

| Statistical Software | For normality testing and Z-score calculation. | R, Python (SciPy), GraphPad Prism, or ActivityBase. |

In the analysis of normally distributed catalytic data—such as enzyme reaction rates, inhibitor potencies (IC50/Ki), or protein expression levels—the Z-score is a fundamental statistical tool. It standardizes individual data points by quantifying their distance from the population mean in units of the standard deviation. This transformation enables direct comparison of measurements from different experimental scales, assays, or units, which is critical for robust data integration and meta-analysis in drug development.

Theoretical Foundation & Calculation

The Z-score for a data point x is defined as:

Z = (x - μ) / σ

where:

- x = the raw data point.

- μ = the mean of the population.

- σ = the standard deviation of the population.

For sample data, the sample mean (x̄) and sample standard deviation (s) are often used as estimates for μ and σ.

Table 1: Z-Score Interpretation for Normal Catalytic Data

| Z-Score Range | Interpretation (Relative to Population Mean) | Approx. % of Data (Normal Dist.) | Example in Catalytic Research |

|---|---|---|---|

| Z < -3 | Extreme Low Outlier | ~0.1% | Candidate inhibitor shows negligible activity. |

| -2 ≤ Z < -1 | Below Average | ~13.6% | Moderately less potent compound variant. |

| -1 ≤ Z < 0 | Slightly Below Average | ~34.1% | Wild-type enzyme activity under sub-optimal conditions. |

| Z = 0 | Exactly at Mean | - | Reference standard or control reaction rate. |

| 0 < Z ≤ 1 | Slightly Above Average | ~34.1% | Enhanced catalyst performance in a screening assay. |

| 1 < Z ≤ 2 | Above Average | ~13.6% | Highly promising lead compound. |

| Z > 2 | Exceptional High Value | ~2.3% | Potential "hit" with outstanding catalytic efficiency. |
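The approximate percentages in this table can be checked against the standard normal cumulative distribution function. The sketch below uses `statistics.NormalDist` from the Python standard library; the helper name `band_probability` is illustrative.

```python
from statistics import NormalDist

std_normal = NormalDist()  # mean 0, standard deviation 1

def band_probability(lower, upper):
    """Probability that a standard normal value falls between lower and upper."""
    return std_normal.cdf(upper) - std_normal.cdf(lower)

bands = {
    "Z < -3": std_normal.cdf(-3),
    "-2 <= Z < -1": band_probability(-2, -1),
    "-1 <= Z < 0": band_probability(-1, 0),
    "0 < Z <= 1": band_probability(0, 1),
    "1 < Z <= 2": band_probability(1, 2),
    "Z > 2": 1 - std_normal.cdf(2),
}

for label, p in bands.items():
    print(f"{label:>14}: {100 * p:.2f}%")
```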

Experimental Protocol: Z-Score Normalization of High-Throughput Screening (HTS) Data

This protocol details the application of Z-score standardization for hit identification from a primary HTS campaign measuring enzymatic inhibition.

Materials & Reagents

Table 2: Research Reagent Solutions for Catalytic HTS

| Item / Reagent | Function in Protocol |

|---|---|

| Target Enzyme (Recombinant) | Catalytic entity under investigation. Purified to homogeneity for consistent activity. |

| Fluorogenic/Chromogenic Substrate | Generates measurable signal (fluorescence/absorbance) proportional to enzymatic turnover. |

| Assay Buffer (Optimized pH/Ionic Strength) | Maintains enzymatic activity and compound solubility. |

| Positive Control (Known Potent Inhibitor) | Provides reference for 100% inhibition (defines lower bound of signal). |

| Negative Control (DMSO Vehicle) | Defines 0% inhibition (maximum enzyme activity, defines upper bound of signal). |

| Compound Library (in DMSO) | Small molecules or biologics screened for inhibitory activity. |

| 384- or 1536-Well Microplate | Platform for miniaturized, parallel reactions. |

| Plate Reader (Fluorescence/Absorbance) | Instrument for high-throughput signal detection. |

Step-by-Step Methodology

Assay Setup & Data Acquisition:

- Dispense buffer, enzyme, and substrate into microplate wells according to optimized kinetic assay conditions.

- Using an automated liquid handler, transfer nanoliter volumes of test compounds from the library.

- Include 32 wells each for positive control (100% inhibition) and negative control (0% inhibition), distributed across the plate to assess spatial variability.

- Incubate under defined conditions (time, temperature) and measure the signal endpoint or initial rate.

Raw Data Processing:

- Calculate percent inhibition (I) for each test well: I = [1 - (S_compound - S_positive) / (S_negative - S_positive)] * 100, where S is the measured signal.

- Compile all percent inhibition values from the screen (e.g., 100,000 data points).

Z-Score Calculation & Hit Identification:

- Calculate the mean (μ) and standard deviation (σ) of the percent inhibition values for all compound wells (excluding control wells).

- Compute the Z-score for each compound's inhibition value: Z = (I - μ) / σ.

- Hit Selection Criteria: Flag compounds with Z ≥ 3.0 (i.e., inhibition 3 standard deviations above the screen mean) as primary hits for confirmation. This corresponds to a statistically significant outlier in a normal distribution.
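To put the Z ≥ 3.0 cut-off in context, the sketch below estimates how many wells would exceed it by chance alone if every compound were inactive and the inhibition values were exactly normal. Both conditions are idealizations, and the function name is hypothetical.

```python
from statistics import NormalDist

def expected_chance_hits(n_compounds, z_threshold):
    """Expected number of inactive compounds exceeding z_threshold by chance,
    assuming perfectly normal, independent inhibition values."""
    upper_tail = 1 - NormalDist().cdf(z_threshold)
    return n_compounds * upper_tail

# Screen size taken from the 100,000-point example above.
expected = expected_chance_hits(100_000, 3.0)
print(f"Expected chance exceedances at Z >= 3: {expected:.0f}")
```

Under these assumptions roughly 135 of 100,000 wells would be flagged without any true activity, which is one reason primary hits are sent to confirmation rather than accepted directly.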

Advanced Application: Cross-Experiment Comparison

Z-scores are vital for combining data from multiple experimental runs (e.g., different days, plate batches). Normalizing each batch's data to its own mean and standard deviation (batch Z-scoring) removes inter-batch variation, allowing merged analysis.
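A minimal sketch of batch Z-scoring is shown below. The batch labels and values are hypothetical; the second batch is deliberately the first one with a doubled signal, so that the effect of per-batch standardization is easy to see.

```python
from statistics import mean, stdev

def batch_z_scores(batches):
    """Standardize each batch against its own mean and sample SD.

    batches: dict mapping a batch label to a list of measurements.
    """
    standardized = {}
    for label, values in batches.items():
        mu = mean(values)
        sigma = stdev(values)
        standardized[label] = [(v - mu) / sigma for v in values]
    return standardized

# Hypothetical activity readings from two days with different signal scales.
batches = {
    "day_1": [10.0, 12.0, 11.0, 13.0, 9.0],
    "day_2": [20.0, 24.0, 22.0, 26.0, 18.0],  # same pattern, doubled signal
}
z_by_batch = batch_z_scores(batches)

for label, z_values in z_by_batch.items():
    print(label, [round(z, 2) for z in z_values])
```

Note that this approach treats every batch as a sample of the same underlying population. If batches genuinely differ in composition, batch Z-scoring removes that real difference along with the technical one.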

Diagram Title: Z-Score Workflow for Cross-Batch Data Normalization

Limitations & Considerations

- Assumption of Normality: The Z-score's probabilistic interpretation is most accurate when the underlying data is normally distributed. Catalytic data (e.g., initial rates) often are, but should be tested (e.g., Shapiro-Wilk test).

- Sensitivity to Outliers: μ and σ are sensitive to extreme outliers, which can distort Z-scores for all points. Use robust measures (median, Median Absolute Deviation) for highly outlier-prone data.

- Sample vs. Population: Using sample statistics (x̄, s) is acceptable for large screening datasets but introduces slight bias in small sample sizes (n<30).
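The sensitivity to outliers noted above can be illustrated by comparing a classical Z-score with a robust version built on the median and the Median Absolute Deviation (MAD). This is a sketch with hypothetical data; the factor 1.4826 rescales the MAD so that it estimates σ for normally distributed data.

```python
from statistics import mean, median, stdev

MAD_TO_SD = 1.4826  # makes the MAD comparable to the SD under normality

def classical_z_scores(values):
    mu, sigma = mean(values), stdev(values)
    return [(v - mu) / sigma for v in values]

def robust_z_scores(values):
    """Z-like scores using the median and scaled MAD instead of mean and SD."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        raise ValueError("MAD is zero; robust Z-scores are undefined")
    return [(v - med) / (MAD_TO_SD * mad) for v in values]

# Hypothetical replicate rates with one gross outlier (50.0).
rates = [10.0, 11.0, 9.0, 10.5, 9.5, 10.0, 50.0]

z_classical = classical_z_scores(rates)
z_robust = robust_z_scores(rates)
print(f"classical Z of outlier: {z_classical[-1]:.2f}")
print(f"robust Z of outlier:    {z_robust[-1]:.2f}")
```

In this small example the outlier inflates the mean and standard deviation enough that its classical Z-score stays below 3, while the robust score flags it clearly.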

The Z-score method is a parametric statistical technique used to standardize data points, allowing for comparison across different normal distributions. Its valid application is strictly contingent upon specific core assumptions regarding the underlying data. Within catalytic data research (e.g., enzyme kinetics, catalyst screening), misapplication leads to erroneous conclusions regarding catalytic efficiency, inhibitor potency, and structure-activity relationships.

Formal Statement of Assumptions

The method requires three core assumptions to be reasonably met:

- Normality: The data from which the Z-score is calculated must be drawn from a population that follows a Gaussian (normal) distribution.

- Independence: Each data point must be independently and randomly sampled. The value of one observation must not influence another.

- Known Parameters (for Parametric Z-score): The population mean (μ) and standard deviation (σ) are known or can be accurately estimated from a large sample. For sample-based Z-scores (often called standard scores), the sample mean and standard deviation are used as estimates.

Quantitative Validation of Assumptions

Table 1: Quantitative Tests for Validating Normality Assumption

| Test Name | Test Statistic | Threshold for Normality (p-value > 0.05) | Applicable Sample Size | Notes for Catalytic Data |

|---|---|---|---|---|

| Shapiro-Wilk Test | W | p > 0.05 | n < 50 | Preferred for small-scale enzyme assay datasets (e.g., n=10 replicates). |

| Anderson-Darling Test | A² | p > 0.05 | n > 50 | More sensitive to tails; useful for high-throughput screening (HTS) data. |

| Kolmogorov-Smirnov Test | D | p > 0.05 | n > 50 | Less powerful than Shapiro-Wilk for normality specifically. |

| Q-Q Plot | Visual inspection | Points adhere closely to reference line | Any | Essential for graphical diagnosis of deviations (e.g., outliers in IC₅₀ values). |

Table 2: Common Data Transformations for Achieving Normality

| Data Type (Catalytic Context) | Recommended Transformation | Formula | When to Apply |

|---|---|---|---|

| Reaction Rate / Velocity | Logarithmic | z = log₁₀(y) | For rate data spanning several orders of magnitude. |

| Percent Inhibition/Activity | Arcsine Square Root | z = arcsin(√(p)) | For percentage data (0-100%) from initial activity screens. |

| Michaelis Constant (Kₘ) | Reciprocal or Logarithmic | z = 1/y or log(y) | When Kₘ estimates are skewed right. |

| Error (SD) depends on Mean | Square Root | z = √(y) | For count data (e.g., turnover number frequency). |
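The transformations in Table 2 can be written as small helper functions. The names below are illustrative. One detail worth making explicit: the arcsine square root formula expects a proportion between 0 and 1, so this sketch assumes percentage data are divided by 100 first.

```python
from math import asin, log10, pi, sqrt

def log_transform(y):
    """log10 transform for strictly positive rate data."""
    return log10(y)

def arcsine_sqrt_percent(percent):
    """Arcsine square root transform of a percentage (0-100), in radians."""
    proportion = percent / 100.0  # the formula is defined on proportions
    return asin(sqrt(proportion))

def reciprocal_transform(y):
    """Reciprocal transform for right-skewed, non-zero values such as Km."""
    return 1.0 / y

def sqrt_transform(y):
    """Square root transform for non-negative count-like data."""
    return sqrt(y)

# Hypothetical values for illustration.
print(log_transform(1000.0))          # rate spanning orders of magnitude
print(arcsine_sqrt_percent(50.0))     # 50% inhibition
print(reciprocal_transform(4.0))      # Km estimate
print(sqrt_transform(9.0))            # count
```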

Experimental Protocol for Validating Z-Score Application

Protocol 1: Pre-Analysis Workflow for Z-Score Applicability in Catalytic Datasets

Objective: To systematically assess if a given dataset from catalytic research meets the core assumptions for Z-score analysis.

Materials & Input Data: A single column of continuous numerical data (e.g., initial velocity V₀, IC₅₀, % yield, turnover frequency (TOF)) from n independent experimental replicates or conditions.

Procedure:

Data Audit & Independence Check:

- Review experimental design to confirm measurements are biologically/technically independent replicates.

- Plot Measurement Order vs. Value to visually check for autocorrelation (runs or trends).
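A numerical companion to the order-versus-value plot is the lag-1 autocorrelation, sketched below with hypothetical data. This is an informal screen rather than a formal independence test; as a common rule of thumb, values well outside about ±2/√n suggest that consecutive measurements are not independent.

```python
from statistics import mean

def lag1_autocorrelation(values):
    """Sample lag-1 autocorrelation of measurements in acquisition order."""
    mu = mean(values)
    numerator = sum((a - mu) * (b - mu) for a, b in zip(values, values[1:]))
    denominator = sum((v - mu) ** 2 for v in values)
    return numerator / denominator

# Hypothetical series: a steady upward drift and a strict alternation.
drifting = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
alternating = [1.0, -1.0] * 5

r_drift = lag1_autocorrelation(drifting)
r_alt = lag1_autocorrelation(alternating)
print(f"drifting series:    r1 = {r_drift:.2f}")
print(f"alternating series: r1 = {r_alt:.2f}")
```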

Outlier Identification (Grubbs' Test):

- Calculate the G statistic for the most extreme point: G = |suspect value - sample mean| / sample standard deviation.

- Compare G to the critical value for n and α=0.05. If G exceeds the threshold, flag the point as an outlier. Investigate the experimental cause; do not remove arbitrarily.

- Repeat until no outliers are detected.

Normality Testing:

- For n ≤ 50, perform the Shapiro-Wilk test.

  - Sort data in ascending order.

  - Calculate test statistic W using standard coefficients.

  - Obtain p-value from the W distribution.

- For n > 50, perform the Anderson-Darling test.

- Decision: If p-value < 0.05, reject the null hypothesis of normality and proceed to Step 4 (Data Transformation). Otherwise, proceed directly to Z-Score Calculation.

Data Transformation (If Non-Normal):

- Apply a suitable transformation from Table 2.

- Re-test the transformed data for normality (Step 3).

- Note: If normality cannot be achieved, consider non-parametric methods (e.g., using median and Median Absolute Deviation).

Z-Score Calculation:

- Once assumptions are met, calculate Z-scores.

- For population comparison: Z = (X - μ) / σ, where μ and σ are known reference values.

- For sample standardization: Z = (X - X̄) / s, where X̄ is the sample mean and s is the sample standard deviation.

Title: Workflow for Validating Z-Score Assumptions in Catalytic Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for Generating Z-Score Ready Data

| Item / Reagent | Function in Catalytic Research | Relevance to Z-Score Assumptions |

|---|---|---|

| High-Purity Enzyme / Catalyst | Minimizes lot-to-lot variability in kinetic parameters (Kₘ, k꜀ₐₜ). | Ensures data independence and reduces systemic error, supporting a stable μ and σ. |

| Fluorogenic/Kinetic Substrate | Provides continuous, precise measurement of reaction velocity. | Generates continuous, high-resolution data suitable for normality testing. |

| Automated Liquid Handler | Performs highly reproducible reagent dispensing for assay plates. | Critical for ensuring data point independence and reducing technical variance. |

| Real-Time PCR or Plate Reader | Captures continuous kinetic data or high-density endpoint readings. | Allows collection of large n for accurate estimation of population parameters. |

| Statistical Software (R, Python, GraphPad) | Performs Shapiro-Wilk test, generates Q-Q plots, calculates Z-scores. | Essential for the formal quantitative validation of core assumptions. |

| Reference Inhibitor/Control Compound | Provides a benchmark for inter-assay normalization and comparison. | Enables the use of a known population μ and σ for parametric Z-scores. |

This document forms part of a broader thesis applying the Z-score method to normally distributed catalytic data in biochemical and pharmacological research. The accurate determination of the population mean (μ) and standard deviation (σ) for catalytic parameters (e.g., reaction rate, enzyme activity, inhibitor potency) is fundamental. These parameters define the normal distribution (N(μ, σ²)) against which individual experimental results are compared using the Z-score (Z = (x - μ)/σ), enabling the standardization of data and identification of statistical outliers or significant deviations in high-throughput screening.

Application Notes: Defining μ and σ in Catalysis

In catalytic research, μ and σ are not merely statistical abstractions but describe the central tendency and variability of key performance metrics. Their precise calculation is critical for robust data interpretation.

Table 1: Core Catalytic Parameters and Their Statistical Descriptors

| Catalytic Parameter | Typical Symbol | Biological/Chemical Meaning | Statistical Mean (μ) Represents | Standard Deviation (σ) Quantifies |

|---|---|---|---|---|

| Michaelis Constant | Kₘ | Substrate concentration at half Vmax | Average binding affinity across replicates | Variability in affinity due to experimental conditions |

| Maximum Velocity | Vₘₐₓ | Maximum catalytic turnover rate | Average maximal enzyme activity | Experimental spread in rate measurements |

| Turnover Number | k_cat | Molecules converted per active site per second | Central catalytic efficiency | Precision of kinetic assays |

| Inhibition Constant | Kᵢ / IC₅₀ | Potency of an inhibitory molecule | Mean inhibitory concentration | Reproducibility of inhibition assays |

| Catalytic Efficiency | k_cat/Kₘ | Overall enzyme proficiency | Average substrate specificity & efficiency | Combined variability of k_cat and Kₘ |

| Variability Source | Impact on σ | Mitigation Strategy |

|---|---|---|

| Enzyme Purification Batch | High | Normalize activity per batch; use internal controls. |

| Substrate Quality/Stock | Medium | Use high-purity reagents; standardize preparation. |

| Assay Conditions (pH, T) | Medium | Rigorous buffer calibration; use thermostated equipment. |

| Detector Sensitivity Drift | Low | Regular calibration with standard curves. |

| Cellular Background (in-cell assays) | High | Use isogenic control cell lines; replicate extensively. |

Experimental Protocols for Determining μ and σ

Protocol 1: Determining μ and σ for Enzyme Kinetic Parameters (Vₘₐₓ, Kₘ)

Objective: To establish reliable population parameters for Michaelis-Menten constants from replicated initial velocity experiments.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Enzyme Preparation: Prepare a standardized aliquot of purified enzyme. Repeat this preparation for n independent batches (minimum n=5).

- Substrate Dilution Series: For each batch, prepare an identical series of substrate concentrations (typically 0.2-5 x estimated Kₘ).

- Initial Rate Assays: For each batch, measure initial velocity (v₀) at each substrate concentration [S] under identical, controlled conditions (temperature, pH, detection method).

- Non-Linear Regression: Fit the data (v₀ vs. [S]) for each individual batch to the Michaelis-Menten equation (v₀ = (Vₘₐₓ * [S]) / (Kₘ + [S])) to obtain batch-specific estimates of Vₘₐₓ and Kₘ.

- Parameter Population Calculation:

- Calculate the sample mean (x̄) of all batch-derived Vₘₐₓ values. For a sufficiently large, representative sample, x̄ ≈ μVmax.

- Calculate the sample standard deviation (s) of the Vₘₐₓ values. This estimates the population σVmax.

- Repeat steps for Kₘ values to obtain μKm and σKm.

- Z-score Application: For any future kinetic experiment with this enzyme under identical conditions, calculate Z-scores for its derived Vₘₐₓ (or Kₘ) using the established μ and σ. A |Z| > 2 may indicate a statistically significant deviation warranting investigation.
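The flow of Protocol 1 can be sketched end to end with the standard library. Two caveats apply. First, the protocol specifies non-linear regression; to stay dependency-free this sketch substitutes a Hanes-Woolf linearization ([S]/v₀ = [S]/Vₘₐₓ + Kₘ/Vₘₐₓ), which is adequate for the noise-free synthetic data used here but is not a replacement for a proper non-linear fit on real data. Second, all batch parameters and names are hypothetical.

```python
from statistics import mean, stdev

def michaelis_menten(s, vmax, km):
    """Initial velocity predicted by the Michaelis-Menten equation."""
    return vmax * s / (km + s)

def hanes_woolf_fit(substrate, velocity):
    """Estimate (Vmax, Km) by least squares on [S]/v versus [S]."""
    x = list(substrate)
    y = [s / v for s, v in zip(substrate, velocity)]
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx                  # equals 1 / Vmax
    intercept = y_bar - slope * x_bar  # equals Km / Vmax
    vmax = 1.0 / slope
    return vmax, intercept * vmax

# Substrate series spanning 0.2-5 x an assumed Km of 5 (arbitrary units).
substrate = [1.0, 2.5, 5.0, 10.0, 25.0]

# Hypothetical "true" (Vmax, Km) for five independent enzyme batches.
batch_truth = [(100.0, 5.0), (104.0, 5.2), (97.0, 4.8), (101.0, 5.1), (98.0, 4.9)]

fits = [
    hanes_woolf_fit(substrate, [michaelis_menten(s, vmax, km) for s in substrate])
    for vmax, km in batch_truth
]
vmax_values = [fit[0] for fit in fits]
mu_vmax, sigma_vmax = mean(vmax_values), stdev(vmax_values)

# Z-score for a later experiment that returned Vmax = 110 (hypothetical).
z_new = (110.0 - mu_vmax) / sigma_vmax
print(f"mu = {mu_vmax:.1f}, sigma = {sigma_vmax:.2f}, Z(new) = {z_new:.2f}")
```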

Protocol 2: Determining μ and σ for High-Throughput Screening (HTS) IC₅₀ Data

Objective: To establish a normalized distribution of compound potency in a target enzyme assay for outlier identification.

Procedure:

- Reference Compound Screening: A well-characterized, standard inhibitor is included on every assay plate in a 10-point dose-response format.

- Replicated Testing: This reference compound is tested across m independent assay plates and days (minimum m=20 plates).

- IC₅₀ Curve Fitting: For each plate, the reference compound's dose-response data is fit to a four-parameter logistic model to derive a plate-specific IC₅₀.

- Population Distribution: The collection of m IC₅₀ values for the reference compound forms a distribution. Calculate μIC50 and σIC50.

- Plate-Wise Normalization: All test compound IC₅₀ values from a given plate can be reported as Z-scores relative to the reference compound's population parameters, correcting for inter-plate variability.

Z_IC50(test) = (IC50(test) - μ_IC50(reference)) / σ_IC50(reference)

Visualizations

Diagram 1: Workflow for determining μ and σ for enzyme kinetics.

Diagram 2: Role of μ and σ within the Z-score thesis framework.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Determining μ and σ |

|---|---|

| High-Purity Recombinant Enzyme | Minimizes batch-to-batch variability (reduces σ) in kinetic studies. Source from reliable vendors or standardize in-house expression/purification. |

| Standardized Substrate Libraries | Pre-formulated, QC-tested substrate stocks ensure consistent [S] across replicates, critical for accurate Kₘ estimation. |

| Reference Inhibitor Compound | A well-characterized, potent inhibitor serves as a benchmark for determining μIC₅₀ and σIC₅₀ in inhibition assays. |

| Fluorogenic/Coupled Assay Kits | Provide optimized, uniform detection systems for activity measurements, reducing assay-derived variance. |

| QC-Plates for HTS | Microplates containing control enzymes/inhibitors at set positions to monitor per-plate performance and calculate plate-wise Z-scores. |

| Statistical Software (e.g., GraphPad Prism, R) | Essential for robust non-linear regression (to get individual parameters) and subsequent calculation of population means, standard deviations, and Z-scores. |

Within the broader thesis on applying the Z-score method to normally distributed catalytic data, verifying the assumption of normality is a critical preliminary step. This protocol details the use of histograms and Quantile-Quantile (Q-Q) plots to visually assess the normality of catalyst activity data, a common metric in heterogeneous catalysis and enzymatic drug development research.

Materials and Research Reagent Solutions

Table 1: Essential Toolkit for Normality Visualization

| Item/Category | Function in Analysis |

|---|---|

| Statistical Software (R, Python, GraphPad Prism) | Provides functions for generating histograms, Q-Q plots, and calculating descriptive statistics. |

| Catalyst Activity Dataset | Primary quantitative data, typically measured as turnover frequency (TOF), yield, or conversion rate. |

| Normality Test Algorithms | Shapiro-Wilk or Kolmogorov-Smirnov tests for formal, quantitative normality assessment alongside visual tools. |

| Data Visualization Libraries | ggplot2 (R), matplotlib/seaborn (Python) for creating publication-quality graphs. |

| Reference Normal Distribution | A theoretical normal distribution with the same mean and standard deviation as the sample data for overlay comparison. |

Experimental Protocol: Visual Normality Assessment

Data Preparation

Objective: Prepare catalyst activity data for analysis. Steps:

- Compile n independent measurements of the catalyst activity (e.g., from n experimental runs under identical conditions).

- Calculate sample mean (x̄) and sample standard deviation (s).

- Input data into chosen statistical software in a single column or vector.

Protocol A: Generating the Histogram with Normal Overlay

Objective: Create a histogram to visualize the empirical distribution of the data against a theoretical normal curve. Methodology:

- Determine the number of bins (k). Use a rule-of-thumb (e.g., Square-root: k = √n) or software default.

- Plot the frequency distribution of the binned data as bars.

- Overlay a line plot of the probability density function of a normal distribution with mean = x̄ and standard deviation = s.

- Visually assess the symmetry, modality, and how closely the bars follow the overlaid normal curve.
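Protocol A can be approximated without a plotting library by tabulating observed bin counts next to the counts expected under the fitted normal distribution. The sketch below uses the square-root rule for the number of bins; the function name and TOF values are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist, mean, stdev

def histogram_vs_normal(values):
    """Return (left, right, observed, expected) for each of ceil(sqrt(n)) bins."""
    n = len(values)
    k = ceil(sqrt(n))
    lo, hi = min(values), max(values)
    width = (hi - lo) / k
    fitted = NormalDist(mean(values), stdev(values))
    rows = []
    for b in range(k):
        left = lo + b * width
        last_bin = b == k - 1
        right = hi if last_bin else lo + (b + 1) * width
        if last_bin:
            observed = sum(1 for v in values if v >= left)  # include the maximum
        else:
            observed = sum(1 for v in values if left <= v < right)
        expected = n * (fitted.cdf(right) - fitted.cdf(left))
        rows.append((left, right, observed, expected))
    return rows

# Hypothetical TOF measurements (s^-1) from 16 runs.
tof = [12.5, 11.8, 13.1, 12.9, 10.9, 12.2, 14.0, 12.6,
       11.5, 13.4, 12.0, 12.8, 13.7, 11.2, 12.4, 12.7]

rows = histogram_vs_normal(tof)
for left, right, observed, expected in rows:
    print(f"[{left:5.2f}, {right:5.2f}]  observed={observed:2d}  expected={expected:4.1f}")
```

The expected counts do not sum to n because the fitted normal curve places some probability outside the observed range.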

Protocol B: Generating the Normal Q-Q Plot

Objective: Compare the quantiles of the sample data to the quantiles of a theoretical normal distribution. Methodology:

- Sort the n data points in ascending order (these are the sample quantiles).

- Calculate the corresponding theoretical normal quantiles (z-scores) for each percentile rank. Typically, for the i-th value, the z-score is calculated for the percentile (i - 0.5)/n.

- Create a scatter plot with theoretical quantiles on the x-axis and sample quantiles on the y-axis.

- Add a reference line (y = x̄ + s*x). A perfectly normal dataset will lie approximately along this line.

- Assess deviations: Bowed (convex or concave) curves indicate skewness, systematic "S-shaped" curves indicate tails heavier or lighter than normal (kurtosis), and outliers appear as points far from the reference line.
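The coordinates for Protocol B can be computed directly, as sketched below with the plotting position (i - 0.5)/n described above. The function names and data are hypothetical; the resulting pairs can be passed to any plotting tool.

```python
from statistics import NormalDist, mean, stdev

def normal_qq_points(values):
    """Return (theoretical quantile, sample quantile) pairs for a normal Q-Q plot."""
    ordered = sorted(values)
    n = len(ordered)
    std_normal = NormalDist()
    theoretical = [std_normal.inv_cdf((i - 0.5) / n) for i in range(1, n + 1)]
    return list(zip(theoretical, ordered))

def reference_line(values):
    """Intercept and slope of the reference line y = mean + sd * x."""
    return mean(values), stdev(values)

# Hypothetical TOF measurements (s^-1).
tof = [12.5, 11.8, 13.1, 12.9, 10.9]

points = normal_qq_points(tof)
intercept, slope = reference_line(tof)
for theoretical, sample in points:
    on_line = intercept + slope * theoretical
    print(f"theoretical={theoretical:6.3f}  sample={sample:5.2f}  line={on_line:5.2f}")
```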

Data Presentation: Simulated Catalyst Activity Analysis

Table 2: Descriptive Statistics for Simulated Catalyst Turnover Frequency (TOF, s⁻¹) Datasets

| Dataset | n | Mean (x̄) | Std. Dev. (s) | Skewness | Shapiro-Wilk p-value |

|---|---|---|---|---|---|

| Catalyst A (Simulated Normal) | 50 | 12.5 | 1.8 | 0.15 | 0.42 |

| Catalyst B (Simulated Right-Skewed) | 50 | 14.2 | 3.5 | 1.32 | <0.01 |

Workflow and Conceptual Diagrams

Diagram 1: Normality Check Workflow for Catalytic Data

Diagram 2: Role of Visual Checks in the Thesis Framework

Step-by-Step Guide: Calculating and Applying Z-Scores to Your Catalytic Dataset

Within the framework of a thesis advocating for the Z-score method as a standardization tool for normally distributed catalytic data, rigorous data preparation is paramount. Accurate comparison of catalytic performance metrics—such as Turnover Frequency (TOF) and Reaction Rate—across studies and laboratories hinges on meticulous cleaning and organization. This protocol details the systematic preparation of catalytic measurement datasets for subsequent statistical normalization and analysis.

Foundational Concepts and Data Standardization Table

Key catalytic metrics require clear definition and consistent units to enable valid Z-score transformation. The following table summarizes core quantitative parameters.

Table 1: Core Catalytic Measurement Parameters and Standard Units

| Parameter | Symbol | Standard Unit (IUPAC recommended) | Typical Data Range (Example) | Purpose in Analysis |

|---|---|---|---|---|

| Turnover Frequency | TOF | s⁻¹ (or h⁻¹) | 10⁻³ to 10³ s⁻¹ | Intrinsic activity per active site. |

| Reaction Rate | r | mol·g_cat⁻¹·s⁻¹ or mol·m⁻²·s⁻¹ | Variable | Bulk catalytic activity. |

| Conversion | X | % | 0-100% | Extent of reactant consumption. |

| Selectivity | S | % | 0-100% | Preference for desired product. |

| Activation Energy | Eₐ | kJ·mol⁻¹ | 20-250 kJ·mol⁻¹ | Energy barrier of the reaction. |

Protocol 1: Data Cleaning and Curation Workflow

This step-by-step protocol ensures a consistent, high-quality dataset ready for Z-score analysis.

Objective: To identify, rectify, and document inconsistencies, outliers, and missing values in raw catalytic data.

Materials & Software:

- Raw data files (e.g., .csv, .xlsx from lab instruments).

- Data processing software (e.g., Python/Pandas, R, or Microsoft Excel).

- Metadata sheets detailing experimental conditions.

Procedure:

- Data Assembly: Consolidate all raw data from individual experiments into a single, structured master table. Each row should represent a unique experiment (catalyst, condition), and each column a variable (TOF, Temperature, Pressure, etc.).

- Unit Harmonization: Convert all reported TOF and reaction rate values to a consistent unit system (e.g., SI). Document all conversion factors applied.

- Missing Value Annotation: Identify cells with missing data. Do not impute arbitrarily. Flag with "NA" and note the probable cause (e.g., instrument failure, sample lost) in a separate log.

- Outlier Detection via Grubbs' Test: For each key metric (e.g., TOF) under identical conditions, perform Grubbs' test (for normally distributed data) to identify statistical outliers.

- Calculate the G statistic: G = |suspect value - sample mean| / sample standard deviation.

- Compare G to the critical value for the sample size (N) and chosen significance level (α=0.05).

- If G > G_critical, flag the value as an outlier. Investigate the original lab notes for that experiment to determine if it is a legitimate anomaly or an error.

- Error Log Creation: Maintain a "Data Curation Log" that records every alteration: unit conversions, outlier removals (with justification), and notes on missing data.
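The Grubbs' test step of this procedure can be sketched as follows. The Python standard library has no Student t quantile function, so the critical value is passed in from a published table rather than computed; the 2.29 used below is assumed to be the two-sided critical value for N = 10 at α = 0.05 and should be confirmed against your own reference. Function names and TOF values are hypothetical.

```python
from statistics import mean, stdev

def grubbs_statistic(values):
    """Return (G, suspect) for the point furthest from the sample mean."""
    mu = mean(values)
    s = stdev(values)
    suspect = max(values, key=lambda v: abs(v - mu))
    return abs(suspect - mu) / s, suspect

def flag_outlier(values, g_critical):
    """Return the suspect value if G exceeds the tabulated critical value, else None."""
    g, suspect = grubbs_statistic(values)
    return suspect if g > g_critical else None

# Hypothetical TOF replicates (s^-1) measured under identical conditions.
tof = [12.1, 12.4, 11.9, 12.3, 12.0, 12.2, 12.5, 11.8, 12.1, 15.0]

G_CRITICAL_N10 = 2.29  # assumed tabulated value for N = 10, alpha = 0.05 (two-sided)

g, suspect = grubbs_statistic(tof)
flagged = flag_outlier(tof, G_CRITICAL_N10)
print(f"G = {g:.2f}, suspect = {suspect}, flagged = {flagged}")
```

A flagged value is a prompt to check the lab notes for that run, in line with the procedure above, and is not by itself grounds for deletion.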

Protocol 2: Data Organization for Z-Score Analysis

Objective: To structure the cleaned dataset to facilitate the grouping and calculation of Z-scores, which require data subsets that are presumed to be normally distributed.

Procedure:

- Subset Definition: Group the data into subsets where a normal distribution is theoretically expected (e.g., "TOF values for Pd-based catalysts in reaction A at 100°C"). These subsets form the basis for independent Z-score calculations.

- Feature Column Creation: Create new categorical columns in your master table to define these subsets (e.g., "CatalystClass", "ReactionType", "Temperature_Range").

- Z-Score Calculation: For each defined, normally distributed subset, calculate the Z-score for each TOF or rate value.

- Formula: Z = (xᵢ - μ) / σ

- Where xᵢ is an individual measurement, μ is the subset mean, and σ is the subset standard deviation.

- Normalized Table Creation: Generate a final analysis-ready table containing the original cleaned values, the defining categorical features, and the calculated Z-scores for each relevant metric.
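As a rough sketch of the subset-wise calculation described in this protocol, the snippet below groups records by their categorical features and computes Z-scores within each subset. The record layout, the helper name `zscores_by_subset`, and the use of the sample standard deviation are assumptions made for illustration.

```python
import statistics
from collections import defaultdict

# Hypothetical cleaned records: (CatalystClass, ReactionType, TOF in s^-1).
records = [
    ("Pd", "A", 12.0), ("Pd", "A", 14.0), ("Pd", "A", 16.0),
    ("Pt", "A", 30.0), ("Pt", "A", 34.0), ("Pt", "A", 38.0),
]

def zscores_by_subset(rows):
    """Append to each row a Z-score computed within its own subset."""
    groups = defaultdict(list)
    for cls, rxn, tof in rows:
        groups[(cls, rxn)].append(tof)
    # Mean and sample SD per subset (assumption: sample SD, n - 1 denominator).
    stats = {key: (statistics.fmean(v), statistics.stdev(v))
             for key, v in groups.items()}
    out = []
    for cls, rxn, tof in rows:
        mu, sigma = stats[(cls, rxn)]
        out.append((cls, rxn, tof, (tof - mu) / sigma))
    return out

table = zscores_by_subset(records)
for row in table:
    print(row)
```

Because each subset is standardized against its own mean and spread, a Pd catalyst and a Pt catalyst with very different raw TOF values can receive the same Z-score.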

Visualization of the Data Preparation Workflow

Diagram 1: Catalytic Data Preparation Workflow for Z-Score Analysis

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Materials for Catalytic Measurement Experiments

| Item | Function & Relevance to Data Quality |

|---|---|

| Internal Standard (e.g., deuterated analog, inert gas) | Added to reaction stream or analysis sample; its known response factor allows for precise quantification and correction for instrument drift, improving data accuracy. |

| Certified Calibration Gas Mixtures | Provides absolute reference points for gas chromatography (GC) or mass spectrometry (MS) detectors, ensuring reaction rate and TOF calculations are traceable to standards. |

| High-Purity Reactant Gases/Liquids (≥99.99%) | Minimizes side reactions and catalyst poisoning from impurities, leading to more reproducible TOF measurements. |

| Reference Catalyst (e.g., NIST Standard Reference Material) | A catalyst with well-characterized activity used to validate experimental setups and protocols, crucial for inter-laboratory data comparability. |

| Metal ICP-MS Standard Solutions | Used to quantify active metal loading via Inductively Coupled Plasma Mass Spectrometry, essential for accurate TOF calculation (moles product / moles active site / time). |

| Temperature Calibrator (e.g., certified thermocouple) | Verifies reactor temperature readings. Small temperature errors lead to large errors in rate and activation energy calculations. |

Within the broader thesis on applying the Z-score method to normally distributed catalytic data, this protocol details the practical calculation of Z-scores for the fundamental enzyme kinetic parameters, kcat (turnover number) and KM (Michaelis constant). The Z-score transformation standardizes raw kinetic data, enabling robust comparison of enzyme variants, conditions, or inhibitor effects across disparate experiments by expressing values in terms of standard deviations from the population mean.

Core Z-Score Formula & Application

For a given dataset of kinetic parameters (e.g., k_cat values from 50 enzyme mutants), the Z-score for an individual observation is calculated as:

Z = (X - μ) / σ

Where:

- X = Individual measured value of kcat or KM.

- μ = Population mean (mean of all kcat or KM values in the reference dataset).

- σ = Population standard deviation of the reference dataset.

This transformation centers the data at zero (mean) and scales it by the standard deviation.

Table 1: Z-Score Interpretation for Kinetic Parameters

| Z-Score Range | Statistical Percentile (approx.) | Interpretation for k_cat | Interpretation for K_M |

|---|---|---|---|

| Z ≥ +2.0 | ≥ 97.7th | Exceptional high activity variant. | Much weaker substrate binding (higher K_M). |

| +1.0 ≤ Z < +2.0 | 84.1st - 97.7th | Above-average activity variant. | Weaker substrate binding. |

| -1.0 < Z < +1.0 | 15.9th - 84.1st | Variant within the normal range. | Binding within the normal range. |

| -2.0 < Z ≤ -1.0 | 2.3rd - 15.9th | Below-average activity variant. | Stronger substrate binding (lower K_M). |

| Z ≤ -2.0 | ≤ 2.3rd | Severely impaired activity variant. | Exceptional, much stronger binding. |

Protocol: Calculating Z-Scores for Enzyme Kinetic Datasets

A. Prerequisites: Obtaining kcat and KM

Protocol 3.1: Standard Michaelis-Menten Kinetics Assay

Objective: Determine initial reaction velocities (v₀) at varying substrate concentrations ([S]) to calculate kcat and KM via nonlinear regression.

Materials (Research Reagent Solutions):

| Reagent/Material | Function |

|---|---|

| Purified Enzyme | The catalyst of interest; must be stable and active under assay conditions. |

| Substrate Solution(s) | Prepared at a stock concentration ≥10x the expected K_M, serially diluted. |

| Activity Assay Buffer | Maintains optimal pH, ionic strength, and includes necessary cofactors. |

| Detection System | Spectrophotometer, fluorimeter, or coupled enzyme system to quantify product formation. |

| Stop Solution (if needed) | Halts the reaction at precise time points (e.g., acid, base, denaturant). |

| Nonlinear Regression Software | Fits v₀ vs. [S] to the Michaelis-Menten equation (e.g., GraphPad Prism, Python SciPy). |

Procedure:

- Prepare eight substrate dilutions spanning 0.2 × K_M to 5 × K_M.

- Pre-incubate enzyme and substrate separately in assay buffer at the experimental temperature (e.g., 30°C) for 5 minutes.

- Initiate reactions by mixing enzyme with substrate. Final reaction volume is typically 50-200 µL.

- Measure the initial linear rate of product formation (v₀) for each [S]. Use a time course where <10% of substrate is consumed.

- Fit the data (v₀ vs. [S]) to the Michaelis-Menten equation: v₀ = (V_max [S]) / (K_M + [S]) using nonlinear regression.

- Calculate k_cat = V_max / [E]_total, where [E]_total is the molar concentration of active enzyme.
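The fitting and k_cat steps above can be illustrated with a small standard-library sketch. It is not the method used by Prism or SciPy: it exploits the fact that, for a fixed K_M, V_max has a closed-form least-squares solution, and then searches over K_M alone. The function name `fit_michaelis_menten`, the synthetic data, and the enzyme concentration are assumptions for illustration.

```python
import math

def fit_michaelis_menten(s, v):
    """Least-squares fit of v = Vmax*S/(Km+S); returns (Vmax, Km)."""
    def profile(km):
        # For a fixed Km the model is linear in Vmax, so Vmax is closed-form.
        f = [si / (km + si) for si in s]
        vmax = sum(vi * fi for vi, fi in zip(v, f)) / sum(fi * fi for fi in f)
        sse = sum((vi - vmax * fi) ** 2 for vi, fi in zip(v, f))
        return sse, vmax

    # Coarse log-spaced scan of Km, then golden-section refinement.
    lo, hi = math.log(min(s) / 100), math.log(max(s) * 100)
    grid = [lo + (hi - lo) * i / 400 for i in range(401)]
    best = min(range(401), key=lambda i: profile(math.exp(grid[i]))[0])
    a, b = grid[max(best - 1, 0)], grid[min(best + 1, 400)]
    phi = (math.sqrt(5) - 1) / 2
    for _ in range(80):
        c, d = b - phi * (b - a), a + phi * (b - a)
        if profile(math.exp(c))[0] < profile(math.exp(d))[0]:
            b = d
        else:
            a = c
    km = math.exp((a + b) / 2)
    return profile(km)[1], km

# Hypothetical noise-free data generated with Vmax = 100 and Km = 5,
# using eight substrate levels from 0.2*Km to 5*Km.
substrate = [1, 2, 4, 6, 10, 15, 20, 25]
velocity = [100 * x / (5 + x) for x in substrate]
vmax, km = fit_michaelis_menten(substrate, velocity)

e_total = 0.01  # assumed active enzyme concentration, same units as Vmax x time
kcat = vmax / e_total
print(f"Vmax={vmax:.2f}, Km={km:.3f}, kcat={kcat:.0f}")
```

With real, noisy data a dedicated nonlinear regression routine that also reports parameter uncertainties is preferable; the sketch only shows what the fit computes.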

B. Core Z-Score Calculation Protocol

Protocol 3.2: Z-Score Transformation for a Kinetic Parameter Set

Objective: Convert a set of experimentally determined kcat (or KM) values into Z-scores.

Procedure:

- Define Population: Assemble the reference dataset (e.g., k_cat values for all 50 single-point mutants of Enzyme X measured under identical conditions).

- Calculate Population Statistics:

- Mean (μ): μ = (Σ X_i) / N, where N is the total number of variants in the population.

- Standard Deviation (σ): σ = √[ Σ (X_i - μ)² / N ].

- Compute Individual Z-Scores: For each variant i, calculate Z_i = (X_i - μ) / σ.

- Analysis: Rank variants by Z-score. Identify outliers (|Z| > 2) for further investigation.
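A minimal sketch of Protocol 3.2, assuming a small hypothetical reference set of ten variants (the names and k_cat values are invented for illustration). It uses the population standard deviation (N denominator), matching the formula in the procedure.

```python
import statistics

# Hypothetical k_cat values (s^-1) for a small reference set of variants.
kcat = {"WT": 150.0, "M1": 165.0, "M2": 140.0, "M3": 155.0, "M4": 160.0,
        "M5": 145.0, "M6": 152.0, "M7": 158.0, "M8": 148.0, "M9": 60.0}

values = list(kcat.values())
mu = statistics.fmean(values)
sigma = statistics.pstdev(values)  # population SD: divides by N

# Z-score for every variant, then rank from highest to lowest.
z = {name: (x - mu) / sigma for name, x in kcat.items()}
ranked = sorted(z.items(), key=lambda item: item[1], reverse=True)

# Variants beyond |Z| > 2 are flagged for further investigation.
flagged = [name for name, score in ranked if abs(score) > 2]

for name, score in ranked:
    print(f"{name}: Z = {score:+.2f}")
print("flagged:", flagged)
```

Note that with the population standard deviation, |Z| can never exceed √(N − 1), so very small reference sets cannot produce large Z-scores regardless of how extreme a value is.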

Table 2: Example Z-Score Calculation for k_cat of Fictional Enzyme Variants

| Variant ID | k_cat (s⁻¹) | Deviation from Mean (X - μ) | Z-Score (k_cat) | Interpretation |

|---|---|---|---|---|

| Wild-Type | 150.0 | -3.1 | -0.07 | Within normal range. |

| Mutant A | 215.0 | +61.9 | +1.34 | Above-average activity. |

| Mutant B | 85.0 | -68.1 | -1.47 | Below-average activity. |

| Mutant C | 162.5 | +9.4 | +0.20 | Within normal range. |

| Population Stats | μ = 153.1, σ = 46.3 | | | (N = 4 for this example) |

Visualizing Data Relationships and Workflow

Visual Workflow: From Raw Data to Z-Score Integration

Z-Score Scale: Interpretation for Michaelis Constant (K_M)

Within the broader thesis on the Z-score method for normally distributed catalytic data research in drug development, interpreting individual Z-score values is fundamental. A Z-score, or standard score, quantifies how many standard deviations a raw data point is from the population mean. This Application Note provides detailed protocols and frameworks for interpreting Z-scores of +2, 0, and -2 in the context of high-throughput screening (HTS), enzyme kinetics, and assay validation.

Quantitative Interpretation of Key Z-Score Values

The following table summarizes the probabilistic and practical interpretation of the three key Z-scores for a normal distribution.

Table 1: Interpretation of Key Z-Score Values in Catalytic Data Research

| Z-Score Value | Location Relative to Mean | Approx. Percentile Rank | Probability (More Extreme Value) | Common Research Interpretation |

|---|---|---|---|---|

| +2 | 2 SD above the mean | ~97.7th | p ~ 0.0228 | Strong positive hit (e.g., enhanced catalytic rate, potent activation). May be a candidate for follow-up. |

| 0 | At the mean | 50th | p = 0.5 | Typical/expected activity. Represents the average population response (e.g., negative control, baseline activity). |

| -2 | 2 SD below the mean | ~2.3rd | p ~ 0.0228 | Strong negative hit (e.g., significant inhibition, loss-of-function). May indicate a potent inhibitor or inactivating mutation. |

SD = Standard Deviation; p = one-tailed probability.
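The percentile ranks and one-tailed probabilities in the table can be reproduced from the standard normal cumulative distribution function. The sketch below uses Python's `statistics.NormalDist`; the helper name `describe` is an assumption for illustration.

```python
from statistics import NormalDist

std_normal = NormalDist()  # mean 0, standard deviation 1

def describe(z):
    """Return (percentile rank, one-tailed probability of a more extreme value)."""
    cdf = std_normal.cdf(z)
    return 100 * cdf, min(cdf, 1 - cdf)

for z in (2, 0, -2):
    pct, p = describe(z)
    print(f"Z={z:+d}: percentile={pct:.1f}, one-tailed p={p:.4f}")
```

These values hold only to the extent that the underlying data are normally distributed; for skewed data the same Z-score corresponds to a different percentile.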

Experimental Protocols for Generating and Using Z-Scores

Protocol 3.1: Z-Score Normalization of High-Throughput Screening (HTS) Data

Objective: To identify potential drug candidates (hits) from a large compound library by normalizing assay readouts to plate-based controls.

Materials & Reagents:

- Compound library plated in 384-well format.

- Target enzyme/substrate in assay buffer.

- Positive Control (e.g., known potent inhibitor, 100% inhibition reference).

- Negative Control (e.g., DMSO vehicle, 0% inhibition reference).

- Fluorescent or luminescent detection reagent.

Procedure:

- Assay Execution: For each microplate, include 32 wells of negative control (n=32) and 16 wells of positive control (n=16). Run the catalytic assay according to standard kinetics protocols.

- Raw Data Collection: Measure the catalytic output (e.g., initial velocity, total product formed) for all sample (S) and control wells.

- Plate-based Normalization:

- Calculate the mean (µ) and standard deviation (σ) of the negative control wells for each plate independently.

- For each well i on the plate (both samples and controls), compute the Z-score: Z_i = (X_i - µ_negative) / σ_negative

- This transforms all data points into standard deviation units from the negative control mean.

- Hit Identification: Apply a threshold (commonly Z ≤ -2 or Z ≥ +2, depending on assay direction) to flag primary hits for confirmatory screening.
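The plate-based normalization and hit-calling steps might look like the following sketch. The function names, the readout values, and the `direction` argument are hypothetical; the real well counts (32 negative controls per plate) are shortened here to keep the example small.

```python
import statistics

def plate_zscores(sample_wells, negative_controls):
    """Z-score every sample well against the plate's negative-control wells."""
    mu_neg = statistics.fmean(negative_controls)
    sd_neg = statistics.stdev(negative_controls)
    return [(x - mu_neg) / sd_neg for x in sample_wells]

def call_hits(z_values, threshold=2.0, direction="inhibitor"):
    """Indices of wells beyond the threshold in the assay's hit direction."""
    if direction == "inhibitor":  # signal drops when the target is inhibited
        return [i for i, z in enumerate(z_values) if z <= -threshold]
    return [i for i, z in enumerate(z_values) if z >= threshold]

# Hypothetical readouts for one plate (arbitrary fluorescence units).
neg = [100, 104, 96, 102, 98, 101, 99, 100]
samples = [99, 103, 70, 97, 125]

z = plate_zscores(samples, neg)
hits = call_hits(z)
print([round(v, 2) for v in z], hits)
```

Computing the control statistics per plate, as the protocol specifies, keeps plate-to-plate drift in the baseline from shifting the hit threshold.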

Protocol 3.2: Assessing Replicate Agreement in Enzyme Kinetics

Objective: To evaluate the consistency of replicate measurements (e.g., kcat, Km) for a mutant enzyme compared to a wild-type dataset.

Procedure:

- Establish Reference Population: Determine the mean (µ) and standard deviation (σ) for a catalytic parameter (e.g., catalytic efficiency kcat/Km) from N≥20 independent wild-type enzyme preparations.

- Test Sample Measurement: Perform the same kinetic assay in m replicates (m≥3) for the new mutant enzyme. Calculate the mean value for the mutant.

- Z-Score Calculation: Compute the Z-score for the mutant's mean value relative to the wild-type population: Z_mutant = (µ_mutant - µ_wild-type) / σ_wild-type

- Interpretation: A Z_mutant of -2 indicates the mutant's efficiency is 2 SD below the wild-type mean, suggesting a significant deleterious effect on catalysis.

Visualization of Workflows and Conceptual Relationships

Title: HTS Hit Identification Workflow Using Z-Score Normalization

Title: Conceptual Map of Z-Score Values and Their Meanings

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Catalytic Assays in Z-Score Analysis

| Item | Function in Protocol | Example / Specification |

|---|---|---|

| Reference Enzyme (Wild-Type) | Serves as the biological standard for establishing the normative population mean (µ) and standard deviation (σ) for catalytic parameters. | Recombinant, purified protein with confirmed specific activity. |

| Positive & Negative Control Compounds | Provides assay response anchors on each plate for robust Z-score normalization and quality control (QC). | Potent inhibitor for positive control; vehicle (e.g., DMSO) for negative control. |

| Homogeneous Assay Detection Kit | Enables quantitative measurement of catalytic activity (e.g., fluorescence, luminescence) suitable for high-density microplate formats. | Luminescent ATP detection kit for kinase assays; fluorogenic substrate for protease assays. |

| Statistical Analysis Software | Performs bulk Z-score calculations, population statistics, and visualization for hit identification and data quality assessment. | Tools like Genedata Screener, Spotfire, or custom R/Python scripts. |

| QC Plate (Control Chart) | A dedicated plate with control samples run periodically to monitor assay stability (µ and σ over time) and validate Z-score thresholds. | Plate containing standardized controls at defined positions. |

Within the broader thesis on the Z-score method for normally distributed catalytic data, the identification of outliers in High-Throughput Screening (HTS) is a critical pre-processing step. HTS generates massive datasets to identify 'hits'—compounds or genes with significant biological activity. However, systematic errors, technical artifacts, and true biological extremes can produce outlying data points that skew analysis. The Z-score method, predicated on data following a normal distribution after robust normalization, provides a statistically grounded framework to flag these outliers, ensuring the integrity of downstream hit selection and structure-activity relationship studies.

Theoretical Basis and Data Presentation

The Z-score measures how many standard deviations an observation is from the mean. For a data point \( x \), the Z-score is calculated as: \[ Z = \frac{x - \mu}{\sigma} \] where \( \mu \) is the sample mean and \( \sigma \) is the sample standard deviation. In HTS, plates are normalized first (e.g., using median polish or B-score correction) to remove row/column biases, then the Z-score is calculated for each well within the normalized dataset. Common thresholds for outlier identification are |Z| > 3 or |Z| > 4.

Table 1: Z-score Thresholds and Interpretations in HTS

| Z-score Range | Interpretation | Recommended Action in HTS |

|---|---|---|

| -3 ≤ Z ≤ 3 | Data point within expected variation. | Include in primary hit analysis. |

| Z < -3 or Z > 3 | Statistical outlier. | Flag for further investigation; possible artifact or extreme hit. |

| Z < -4 or Z > 4 | Extreme statistical outlier. | High probability of being a technical artifact or a very potent hit; requires strict validation. |

Table 2: Impact of Outlier Removal on a Representative HTS Campaign (Simulated Data)

| Metric | Before Outlier Removal | After Outlier Removal (\|Z\| > 3) |

|---|---|---|

| Total Data Points | 100,000 | 98,650 |

| Identified Outliers | 0 | 1,350 (1.35%) |

| Assay Z' Factor | 0.45 | 0.68 |

| Hit Rate (Primary) | 3.2% | 2.1% |

| Coefficient of Variation (CV) | 25% | 15% |

Experimental Protocols

Protocol 1: HTS Plate Normalization and Z-score Calculation for Outlier Identification

Objective: To normalize raw HTS data and subsequently identify outliers using the Z-score method.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Acquisition: Load raw luminescence/absorbance/fluorescence data from the HTS instrument into statistical analysis software (e.g., R, Python, or specialized HTS software).

- Background Correction: Subtract the average signal of negative control wells (e.g., vehicle-only) from all wells on a per-plate basis.

- Plate Normalization (Median Polish Method):

- For each plate, calculate the plate median (M).

- Calculate the row medians and column medians.

- Subtract the row effect and column effect from each well value to yield residuals.

- Add the plate median (M) back to all residuals to obtain normalized values.

- Calculate Descriptive Statistics: For the entire normalized dataset (or per plate for large campaigns), compute the center \( \mu \) and spread \( \sigma \). The median and Median Absolute Deviation (MAD) are more robust to outliers than the mean and standard deviation: \( \mu_{robust} = \mathrm{median}(data) \), \( \sigma_{robust} = 1.4826 \times \mathrm{MAD}(data) \).

- Compute Z-scores: Apply the formula \( Z = (x - \mu_{robust}) / \sigma_{robust} \) to each normalized data point.

- Flag Outliers: Flag all data points where |Z| > 3.5 (or chosen threshold) as outliers.

- Visual Inspection: Generate a scatter plot of well position vs. Z-score to identify spatial patterns indicative of systematic errors.
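The normalization and robust Z-score steps of this protocol can be sketched with the standard library as below. The function names and the example values are assumptions; the median polish shown is the usual iterated row/column median sweep and returns residuals only, since adding the plate median back shifts every well equally and does not change the Z-scores.

```python
import statistics

def median_polish_residuals(plate, n_iter=10):
    """Remove row and column effects by iterated median subtraction."""
    resid = [row[:] for row in plate]
    for _ in range(n_iter):
        for row in resid:  # sweep out row medians
            m = statistics.median(row)
            for j in range(len(row)):
                row[j] -= m
        for j in range(len(resid[0])):  # sweep out column medians
            m = statistics.median(r[j] for r in resid)
            for r in resid:
                r[j] -= m
    return resid

def robust_z(values):
    """Z-scores from the median and 1.4826 * MAD."""
    med = statistics.median(values)
    mad = statistics.median(abs(v - med) for v in values)
    if mad == 0:
        raise ValueError("MAD is zero; robust Z-scores are undefined")
    return [(v - med) / (1.4826 * mad) for v in values]

# Hypothetical 4 x 5 plate with additive row/column gradients and one spiked well.
plate = [[100 + 2 * i + j for j in range(5)] for i in range(4)]
plate[1][2] += 30
resid = median_polish_residuals(plate)

# Hypothetical normalized well values containing one extreme well.
normalized = [-2, -1, 0, 1, 2, 12]
z = robust_z(normalized)
outliers = [i for i, score in enumerate(z) if abs(score) > 3.5]
print(resid[1], outliers)
```

In the plate example the gradients are removed entirely and only the spiked well keeps a non-zero residual, which is the behavior that makes median polish useful before Z-scoring.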

Protocol 2: Validation of Outliers as True Hits or Artifacts

Objective: To distinguish between technical artifacts and true biological outliers (hits).

Procedure:

- Retest Analysis: Re-test compounds flagged as outliers (both positive and negative) in a dose-response format, using the original assay conditions.

- Counter-Screen: Subject outliers to a counter-screen against an unrelated target or a viability assay to identify non-specific or cytotoxic agents.

- Liquid Handling Inspection: Review the original plate maps for outliers clustered around specific tips or wells, suggesting pipetting errors.

- Kinetic Analysis (if applicable): For time-course data, inspect the raw kinetic traces of outlier wells for irregularities (e.g., signal drift, bubbles).

Visualizations

Diagram 1: HTS Data Outlier ID Workflow

Diagram 2: Outlier Triage Decision Tree

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for HTS Outlier Analysis

| Item | Function in HTS/Outlier Analysis |

|---|---|

| 384 or 1536-well Assay Plates | Microplate format enabling high-density screening, minimizing reagent use and increasing throughput. |

| Positive Control Compound | Provides a known strong response to validate assay performance and signal window on every plate. |

| Negative Control (Vehicle) | Defines baseline activity; crucial for normalization and Z-score calculation. |

| Liquid Handling Robots | Ensure precision and reproducibility in reagent and compound dispensing, reducing one source of outlier-generating error. |

| Cell Viability Assay Kit (e.g., CellTiter-Glo) | Counterscreen to identify cytotoxic outliers that may cause signal interference rather than target-specific effects. |

| Statistical Software (R/Python with ggplot2/matplotlib) | Platforms for implementing robust normalization algorithms, calculating Z-scores, and generating diagnostic plots. |

| Plate Reader (Multimode) | Instrument for detecting fluorescence, luminescence, or absorbance signals from HTS assays with high sensitivity. |

| Laboratory Information Management System (LIMS) | Tracks plate layouts, compound structures, and raw data, allowing traceability of outliers back to source materials. |

1. Introduction and Thesis Context

Within the broader thesis on Z-score normalization for normally distributed catalytic data research, this document addresses a critical pre-processing challenge: the integration of data from multiple experimental batches. Batch effects (systematic non-biological variations introduced by different times, equipment, or personnel) can obscure true biological signals and compromise statistical analysis. This protocol details a standardized pipeline for batch effect correction, ensuring that Z-score transformed data from disparate catalytic activity assays (e.g., enzyme kinetics, high-throughput screening) are comparable and valid for meta-analysis.

2. Key Concepts and Data Presentation

Table 1: Common Sources of Batch Effects in Catalytic Data Generation

| Source Category | Specific Examples | Impact on Catalytic Data |

|---|---|---|

| Technical | Different reagent lots, plate readers, assay kit versions | Alters baseline absorbance/fluorescence, Vmax drift. |

| Procedural | Variation in incubation time, temperature, technician | Affects reaction rate constants, increases intra-group variance. |

| Biological | Cell passage number, primary tissue donor variability | Modifies enzyme expression levels, leading to shifted activity distributions. |

| Environmental | Room humidity, daily calibration drift | Introduces non-linear noise across experimental runs. |

Table 2: Comparison of Batch Effect Correction Methods

| Method | Principle | Best For | Key Assumption | Post-Correction Output |

|---|---|---|---|---|

| ComBat (Empirical Bayes) | Models batch as additive/multiplicative effect, shrinks batch parameters. | Small sample sizes, strong batch effects. | Batch effect is systematic and adjustable. | Batch-adjusted activity values. |

| Z-Score Standardization per Batch | Centers (μ=0) and scales (σ=1) data within each batch independently. | Pre-processing for downstream analysis, normally distributed data. | Each batch contains a similar biological distribution. | Unitless, comparable Z-scores across batches. |

| SVA (Surrogate Variable Analysis) | Estimates hidden factors (surrogate variables) for regression. | Complex designs where batch is unknown or confounded. | Batch effects are orthogonal to biological signal. | Residuals with removed unwanted variation. |

| Limma (removeBatchEffect) | Linear model to estimate and subtract batch means. | Microarray or RNA-seq derived catalytic expression data. | Additive batch effect. | Model residuals free of batch mean. |

3. Experimental Protocols

Protocol 3.1: Diagnostic Assessment of Batch Effects

Objective: Visually and statistically confirm the presence of batch effects prior to correction.

- Data Preparation: Compile raw catalytic data (e.g., initial velocity, IC50, % inhibition) from all batches, including metadata for Batch ID and experimental Group (e.g., control vs. treated).

- Principal Component Analysis (PCA):

- Perform PCA on the log-transformed or raw data matrix.

- Generate a 2D PCA plot (PC1 vs. PC2) colored by Batch ID. A clear separation by batch indicates a strong batch effect.

- Generate a second PCA plot colored by Experimental Group. If group separation is weak or absent before correction but emerges after correction, the correction is effective.

- Statistical Test: Perform a PERMANOVA (Adonis) test using the Batch ID as a factor on the distance matrix. A significant p-value (p < 0.05) confirms a statistically significant batch effect.

Protocol 3.2: Integrated Pipeline for Z-Score Standardization with Batch Correction

Objective: Generate batch-corrected, Z-score normalized data from multiple catalytic experiments.

- Pre-processing & Log Transformation: Apply a log2 transformation to the raw activity metrics to stabilize variance and improve normality.

- Batch Effect Correction (Using ComBat as an example):

- Input: A matrix of log-transformed data where rows are features (e.g., compounds, enzymes) and columns are samples.

- Define the `batch` vector (categorical) and optionally the `mod` matrix (containing biological groups of interest).

- Execute the Empirical Bayes adjustment (e.g., using `sva::ComBat` in R). This step estimates and removes additive and multiplicative batch effects.

- Z-Score Standardization:

- Using the batch-corrected data matrix, calculate the Z-score for each feature across all samples: `Z = (x - μ) / σ`, where `x` is the batch-corrected value for a feature, `μ` is the mean of that feature across all samples, and `σ` is the standard deviation.

- Validation:

- Re-run PCA on the final Z-score matrix. The samples should now cluster primarily by biological group, not by batch.

- Calculate the Average Silhouette Width for batch vs. group clustering before and after correction. Successful correction increases silhouette for groups and decreases it for batches.

4. Visualization

Diagram Title: Batch Effect Correction and Standardization Workflow

Diagram Title: Conceptual Model of Batch Effect Removal

5. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Cross-Experimental Catalytic Studies

| Item | Function & Rationale |

|---|---|

| Reference Standard Compound | A chemically stable compound with known catalytic effect, run in every batch to monitor and correct for inter-batch potency drift. |

| Internal Control Enzyme/Recombinant Protein | A standardized aliquot from a single large preparation, used to deconvolute assay performance variation from sample-specific variation. |

| Multi-Plate Calibration Dye (Fluorescence/Luminescence) | Provides a stable signal across plates and readers, enabling normalization of instrument gain and detector variability. |

| Standardized Assay Kit (Lyophilized) | Minimizes reagent preparation variability. Using kits from the same lot across batches is ideal for critical studies. |

| Automated Liquid Handlers | Reduces procedural variability in pipetting steps, a major source of technical noise in high-throughput catalytic screens. |

| Plate Reader with Temperature & CO2 Control | Ensures identical environmental conditions for kinetic reads over time, crucial for time-sensitive catalytic measurements. |

| Laboratory Information Management System (LIMS) | Tracks critical metadata (reagent lot numbers, instrument calibration logs, operator) essential for diagnosing batch effect sources. |

| Statistical Software (R/Python with key packages) | Essential for implementing correction algorithms (e.g., sva, limma in R; scikit-learn, pyComBat in Python) and generating diagnostic plots. |

Solving Real-World Problems: Pitfalls and Best Practices for Z-Score Analysis

Within the thesis framework advocating the Z-score method for normally distributed catalytic data analysis, the prevalent issue of non-normal, skewed data represents a primary methodological challenge. The Z-score’s assumption of normality is violated by skewed distributions, leading to inaccurate probability estimates and erroneous significance thresholds. This application note details protocols for diagnosing skewness and applying robust data transformation techniques to meet parametric test assumptions.

Diagnosis of Skewness and Distribution Assessment

Initial analysis must quantify the degree and direction of skewness prior to any statistical modeling.

Table 1: Quantitative Metrics for Skewness Assessment

| Metric | Formula | Interpretation for Catalytic Data (e.g., Reaction Rate, k_cat) | Threshold for Concern |

|---|---|---|---|

| Skewness Coefficient (g1) | \( g_1 = \frac{\mu_3}{\sigma^3} \), where \( \mu_3 \) is the third central moment | Positive g1: Right-skew (long tail of high activity outliers). Negative g1: Left-skew (tail of low activity). | \|g1\| > 0.5 suggests moderate skew; \|g1\| > 1.0 indicates substantial skew. |

| Shapiro-Wilk Test (p-value) | W statistic | Tests null hypothesis that data is from a normal distribution. | p < 0.05 rejects normality, indicating transformation is needed. |

| Q-Q Plot Visual Inspection | Data quantiles vs. theoretical normal quantiles | Systematic deviation from the reference line indicates non-normality. | Subjective but critical for pattern recognition. |

Experimental Protocol 1.1: Distribution Diagnostic Workflow

- Data Collection: Compile catalytic parameter dataset (e.g., initial velocities from 96-enzyme variant screen, measured in triplicate).

- Calculate Descriptive Statistics: Compute mean, median, standard deviation, and skewness coefficient (g1) using statistical software (e.g., Python `scipy.stats.skew`, R `moments::skewness`).

- Perform Normality Test: Execute Shapiro-Wilk test (e.g., `scipy.stats.shapiro` in Python, `shapiro.test()` in R) on the dataset.

- Visualization: Generate a histogram with a superimposed normal distribution curve and a Q-Q plot.

- Decision Point: If |g1| > 0.5 AND Shapiro-Wilk p < 0.05, proceed with data transformation protocols.

Title: Diagnostic Workflow for Identifying Skewed Catalytic Data

Data Transformation Protocols for Right-Skewed Data

Right-skewed data (common in activity screens) requires variance-stabilizing transformations.

Table 2: Common Transformations for Right-Skewed Catalytic Data

| Transformation | Function | Use Case | Effect on Distribution | Post-Transformation Z-score Formula |

|---|---|---|---|---|

| Logarithmic | \( x' = \log_{10}(x) \) or \( \ln(x) \) | Rates, concentrations, signal intensities. | Compresses large values, reduces positive skew. | \( Z = \frac{\log_{10}(x) - \mu_{\log}}{\sigma_{\log}} \) |

| Square Root | \( x' = \sqrt{x} \) | Count data (e.g., turnover numbers). | Milder compression than log. | \( Z = \frac{\sqrt{x} - \mu_{\sqrt{}}}{\sigma_{\sqrt{}}} \) |

| Box-Cox Power | \( x' = \frac{x^\lambda - 1}{\lambda} \) for λ ≠ 0; \( x' = \ln(x) \) for λ = 0 | Generalized, optimal transformation. | Finds optimal λ to maximize normality. | \( Z = \frac{(x^\lambda - 1)/\lambda - \mu_{BC}}{\sigma_{BC}} \) |

Experimental Protocol 2.1: Box-Cox Transformation for Optimal Normalization

- Precondition: Ensure all catalytic data points (x) are positive. If zeros are present, add the same small constant to every value (not only to the zeros) and document it.

- Parameter Estimation: Use maximum likelihood estimation (MLE) to find the optimal λ value that maximizes the log-likelihood function for normality. (Implementation: `scipy.stats.boxcox` in Python; `MASS::boxcox` in R.)

- Apply Transformation: Transform the entire dataset using the optimal λ.

- Validation: Re-calculate skewness coefficient and perform Shapiro-Wilk test on the transformed data. Generate a new Q-Q plot.

- Z-score Calculation: Compute the mean (μ_BC) and standard deviation (σ_BC) of the transformed data, then calculate the Z-score of each transformed value x' as Z = (x' - μ_BC) / σ_BC.
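To make the parameter-estimation step concrete, the sketch below finds λ by a simple grid search over the Box-Cox profile log-likelihood, then Z-scores the transformed values. This is a simplified stand-in for the optimizer-based routines named above, not a reproduction of them; the function names, the grid range of -2 to 2, and the synthetic data are assumptions.

```python
import math
import statistics
from statistics import NormalDist

def boxcox(x, lam):
    """Box-Cox transform of one positive value."""
    return math.log(x) if lam == 0 else (x ** lam - 1) / lam

def boxcox_loglik(data, lam):
    """Profile log-likelihood of lambda under a normal model."""
    y = [boxcox(x, lam) for x in data]
    n = len(data)
    log_sum = sum(math.log(x) for x in data)
    return -0.5 * n * math.log(statistics.pvariance(y)) + (lam - 1) * log_sum

def best_lambda(data):
    """Grid search over lambda in [-2, 2] in steps of 0.01."""
    grid = [k / 100 for k in range(-200, 201)]
    return max(grid, key=lambda lam: boxcox_loglik(data, lam))

def boxcox_zscores(data):
    """Return (lambda, Z-scores of the transformed values)."""
    lam = best_lambda(data)
    y = [boxcox(x, lam) for x in data]
    mu_bc, sigma_bc = statistics.fmean(y), statistics.stdev(y)
    return lam, [(v - mu_bc) / sigma_bc for v in y]

# Hypothetical right-skewed data: evenly spaced quantiles of a log-normal
# distribution, for which a log transform (lambda near 0) is the natural choice.
data = [math.exp(NormalDist().inv_cdf((i + 0.5) / 40)) for i in range(40)]
lam, z = boxcox_zscores(data)
print(f"lambda = {lam:.2f}")
```

The Z-scores are computed on the transformed scale, so they describe how unusual a value is after the skew has been removed rather than on the original activity scale.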

Title: Box-Cox Transformation Protocol for Catalytic Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Catalytic Data Generation & Transformation

| Item | Function & Relevance to Protocol |

|---|---|

| High-Throughput Microplate Reader (e.g., Tecan Spark, BMG CLARIOstar) | Enables rapid kinetic data collection (initial rates) across hundreds of enzyme variants or conditions, generating the large datasets where skewness is commonly identified. |

| Robust Statistical Software (R with tidyverse, moments, MASS; Python with SciPy, pandas, statsmodels) | Essential for calculating skewness metrics, performing Shapiro-Wilk tests and Box-Cox transformations, and generating diagnostic plots. |

| Validated Enzyme Activity Assay Kit (e.g., coupled spectrophotometric assays, fluorescent substrate kits) | Provides reproducible, quantitative catalytic data (absorbance/fluorescence per time) as the primary input for distribution analysis. Consistency is key. |

| Laboratory Information Management System (LIMS) | Tracks sample provenance and raw data, ensuring the integrity of the dataset from assay to statistical transformation, a critical step for reproducible Z-score analysis. |

| Reference Control Enzyme (Wild-Type) | Included on every assay plate to normalize for inter-assay variability, reducing systematic noise that can distort distribution shape before transformation. |

Within the broader thesis on the application of the Z-score method for analyzing normally distributed catalytic data in drug discovery, the issue of sample size is paramount. A Z-score, calculated as \( Z = \frac{X - \mu}{\sigma} \), where \( X \) is the observed value, \( \mu \) is the population mean, and \( \sigma \) is the population standard deviation, is a cornerstone for standardizing data and identifying outliers. However, its reliability is intrinsically linked to the accuracy of the estimates for \( \mu \) and \( \sigma \), which are severely compromised by small sample sizes (n < 30). Small samples lead to high variance in standard deviation estimation, inflated Z-score magnitudes, and increased rates of Type I (false positives) and Type II (false negatives) errors, ultimately jeopardizing decision-making in high-throughput screening (HTS) and lead optimization.

Quantitative Impact Analysis

The table below summarizes the effects of small sample sizes on key statistical parameters, based on Monte Carlo simulations from current literature.

Table 1: Impact of Sample Size on Z-Score and Statistical Reliability

| Sample Size (n) | Std Dev Estimation Error (% CV) | False Positive Rate (α=0.05) | False Negative Rate (1 − Power) | Minimum Detectable Effect (MDE) |

|---|---|---|---|---|

| 5 | 35-40% | 12-18% | >60% | >2.5σ |

| 10 | 22-25% | 8-10% | ~45% | ~1.8σ |

| 20 | 15-16% | 6-7% | ~30% | ~1.3σ |

| 30 | ~13% | ~5.5% | ~20% | ~1.0σ |

| 50 | ~10% | ~5.2% | <15% | <0.8σ |

| 100 | ~7% | ~5.05% | <10% | <0.6σ |

CV: Coefficient of Variation; MDE: For 80% power.
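The standard-deviation column of the table can be explored with a small Monte Carlo sketch like the one below. It is an illustrative simulation under assumed settings (standard normal data, 2000 trials, a fixed seed), not the simulation behind the table, and it covers only the spread of the SD estimate, not the error rates.

```python
import math
import random
import statistics

def sample_sd(xs):
    """Sample standard deviation with an n - 1 denominator."""
    m = sum(xs) / len(xs)
    return math.sqrt(sum((x - m) ** 2 for x in xs) / (len(xs) - 1))

def sd_estimation_cv(n, trials=2000, seed=0):
    """Coefficient of variation of the sample SD across repeated samples of size n."""
    rng = random.Random(seed)
    sds = [sample_sd([rng.gauss(0, 1) for _ in range(n)]) for _ in range(trials)]
    return statistics.stdev(sds) / statistics.fmean(sds)

for n in (5, 10, 30, 100):
    print(f"n = {n:3d}: CV of SD estimate = {100 * sd_estimation_cv(n):.1f}%")
```

For normal data the relative error of the SD estimate shrinks roughly as 1/√(2(n − 1)), which is why quadrupling the sample size only halves the uncertainty in σ.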

Experimental Protocols for Mitigation

Protocol 3.1: Bootstrap Resampling for Robust Z-Score Estimation

Objective: To generate a more reliable estimate of the population mean and standard deviation from a small dataset (n=5-15).

- Input: A small sample dataset ( D = \{x_1, x_2, ..., x_n\} ).

- Resampling: Generate B (e.g., 5000) bootstrap samples by randomly selecting n values from ( D ) with replacement.

- Parameter Calculation: For each bootstrap sample i, calculate the sample mean ( \hat{\mu}_i ) and sample standard deviation ( \hat{\sigma}_i ).

- Aggregation: Determine the bootstrap aggregated estimates:

  - ( \mu_{boot} ) = Median of all ( \hat{\mu}_i )

  - ( \sigma_{boot} ) = Median of all ( \hat{\sigma}_i )

- Z-score Calculation: Compute the adjusted Z-score for each original data point: ( Z_{boot} = \frac{(X - \mu_{boot})}{\sigma_{boot}} ).

- Validation: Compare the distribution of ( Z_{boot} ) to the standard normal distribution using a Q-Q plot.
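The resampling, aggregation, and Z-score steps above can be sketched with the Python standard library alone. This is a minimal illustration, not a validated implementation: the function name `bootstrap_z_scores`, the fixed seed, and the velocity values are hypothetical, and the Q-Q plot validation step is left out.

```python
import random
import statistics

def bootstrap_z_scores(data, n_boot=5000, seed=0):
    """Return (mu_boot, sigma_boot, z_boot) using medians of bootstrap estimates."""
    rng = random.Random(seed)
    n = len(data)
    boot_means, boot_sds = [], []
    for _ in range(n_boot):
        resample = rng.choices(data, k=n)  # draw n values with replacement
        boot_means.append(statistics.fmean(resample))
        boot_sds.append(statistics.stdev(resample))
    mu_boot = statistics.median(boot_means)
    sigma_boot = statistics.median(boot_sds)
    z_boot = [(x - mu_boot) / sigma_boot for x in data]
    return mu_boot, sigma_boot, z_boot

# Hypothetical initial velocities from a small assay (n = 8), made up for illustration.
velocities = [12.1, 11.8, 12.6, 13.0, 11.5, 12.3, 12.9, 15.4]
mu_boot, sigma_boot, z_boot = bootstrap_z_scores(velocities)
for x, z in zip(velocities, z_boot):
    print(f"{x:5.1f} -> Z_boot = {z:+.2f}")
```

Using the median rather than the mean of the bootstrap estimates, as the protocol specifies, keeps a few extreme resamples from dominating the aggregated values.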

Protocol 3.2: Application of the t-statistic and Corrected Critical Values

Objective: To control the false positive rate when screening for outliers with small n.

- Assumption Check: Confirm approximate normality of the underlying catalytic data using a Shapiro-Wilk test (if n > 7).

- t-statistic Calculation: For each observation ( X ), calculate the t-statistic: ( t = \frac{(X - \bar{X})}{s} ), where ( \bar{X} ) and ( s ) are the sample mean and standard deviation.

- Critical Value Adjustment: Do not use the standard Z critical value (e.g., ±1.96 for α=0.05). Instead, use the critical value from the Student's t-distribution with ( \nu = n-1 ) degrees of freedom and a Bonferroni-corrected α if performing multiple comparisons: ( \alpha_{adj} = \alpha / m ), where m is the number of tests.

- Decision Rule: Flag an observation as a significant outlier if ( |t| > t_{critical}(\alpha_{adj}, \nu) ).
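A minimal sketch of the decision rule, assuming NumPy and SciPy are available and a two-sided test (consistent with the ±1.96 example). The function name `t_outlier_flags` and the sample values are hypothetical; `n_tests` plays the role of m in the Bonferroni correction.

```python
import numpy as np
from scipy import stats

def t_outlier_flags(values, alpha=0.05, n_tests=1):
    """Return (t_stat, t_crit, flags) using a two-sided Student's t critical value."""
    values = np.asarray(values, dtype=float)
    n = values.size
    alpha_adj = alpha / n_tests                      # Bonferroni-corrected alpha
    t_stat = (values - values.mean()) / values.std(ddof=1)
    t_crit = stats.t.ppf(1.0 - alpha_adj / 2.0, df=n - 1)
    return t_stat, t_crit, np.abs(t_stat) > t_crit

# Hypothetical replicate readings (n = 10) with one suspicious value.
readings = [10.0, 10.2, 9.9, 10.1, 10.0, 9.8, 10.3, 10.1, 9.9, 14.0]
t_stat, t_crit, flags = t_outlier_flags(readings, alpha=0.05, n_tests=1)
print(f"critical value = {t_crit:.3f}; flagged = {np.flatnonzero(flags).tolist()}")
```

One caveat worth keeping in mind: because each observation contributes to its own mean and standard deviation, |t| can never exceed (n − 1)/√n. With n = 10 that bound is about 2.85, so a Bonferroni correction over all ten observations (critical value above 3.6) would flag nothing. For very small n, a test designed for this situation, such as Grubbs' test, may be a more suitable choice.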

Visualizations

Diagram: Small Sample Impact on Z-Score Reliability

Diagram: Bootstrap Resampling Protocol Workflow

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Catalytic Assay & Statistical Validation

| Item / Solution | Function in Context |

|---|---|

| High-Throughput Screening (HTS) Assay Kits (e.g., fluorescence-based) | Provide standardized, sensitive readouts of catalytic activity (e.g., enzyme velocity) for generating primary data. Essential for collecting the initial 'n' observations. |

| Statistical Software/Libraries (R boot package, Python SciPy/NumPy, GraphPad Prism) | Perform bootstrap resampling, calculate robust estimates, generate t-distribution critical values, and create validation plots (Q-Q plots). |

| Positive/Negative Control Inhibitors/Activators | Serve as known effectors to validate the assay's dynamic range and to test the outlier detection capability of the Z-score method under small n conditions. |

| Data Normalization Reagents (e.g., background quenchers, blank assay buffers) | Critical for pre-processing raw catalytic data (e.g., background subtraction) to ensure the underlying distribution approximates normality before Z-score analysis. |

| Sample Size Planning Tools (G*Power, R pwr package) | Used a priori to determine the minimum sample size (n) required to detect a biologically relevant effect size with sufficient power (e.g., 80%), mitigating the small-n issue from the outset. |

Within the broader thesis on utilizing the Z-score method for analyzing normally distributed catalytic data in drug development, achieving normality in dataset distributions is a critical prerequisite. Many biochemical assays, such as enzyme activity (e.g., nmol/min/mg), inhibitor IC₅₀ values (nM), or high-throughput screening readouts, generate positive, continuous data that are often right-skewed. Applying Z-score normalization ( Z = (X - \mu)/\sigma ) to such non-normal data can lead to erroneous statistical conclusions, because the usual tail probabilities (e.g., 0.27% of values beyond |Z| = 3) no longer hold, invalidating subsequent t-tests, ANOVA, or quality control limits. This protocol details the application of monotonic data transformations (specifically logarithmic and square root transformations) to reshape skewed catalytic data distributions towards normality, thereby enabling robust parametric analysis.

Application Notes: Transformation Selection & Impact

The choice of transformation depends on the severity of skewness. The table below summarizes the mathematical operation, ideal use case, and effect based on current statistical guidance.

Table 1: Comparative Analysis of Normality-Promoting Transformations

| Transformation | Formula (Y') | Primary Use Case | Effect on Distribution | Key Assumption/Limitation |

|---|---|---|---|---|

| Logarithmic (Natural) | ( Y' = \ln(Y) ) | Severe right-skewness; data spans several orders of magnitude (e.g., catalytic rates, concentration-response data). | Compresses large values more strongly than small ones, reducing positive skew. | Data must be strictly positive (Y > 0). For zeros, use ( \ln(Y+1) ). |

| Logarithmic (Base 10) | ( Y' = \log_{10}(Y) ) | Same as natural log, often preferred for interpretability (e.g., fold-change). | Same distribution shape as ln; values differ only by the constant factor ln(10). | Same as above. |

| Square Root | ( Y' = \sqrt{Y} ) | Moderate right-skewness; data are counts (e.g., number of catalytic events) or mild positive skew. | A milder compression than log, effective for variance stabilization. | Data must be non-negative (Y ≥ 0). |

| Box-Cox Power | ( Y' = \frac{Y^\lambda - 1}{\lambda} ) for ( \lambda \neq 0 ); ( Y' = \ln(Y) ) for ( \lambda = 0 ) | Unknown or complex skew; automated optimization of parameter λ. | Finds optimal power transformation for normality. | Requires estimating λ (typically by maximum likelihood); assumes strictly positive data. |
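To make the table concrete, the sketch below compares sample skewness before and after the square root and logarithmic transformations. The data are synthetic (a seeded lognormal draw standing in for right-skewed catalytic rates), and the `skewness` helper uses the simple moment-based definition; both are assumptions made for illustration only.

```python
import math
import random

def skewness(values):
    """Moment-based sample skewness: m3 / m2**1.5."""
    n = len(values)
    mean = math.fsum(values) / n
    m2 = math.fsum((v - mean) ** 2 for v in values) / n
    m3 = math.fsum((v - mean) ** 3 for v in values) / n
    return m3 / m2 ** 1.5

# Synthetic right-skewed "catalytic rates" (hypothetical, strictly positive).
rng = random.Random(42)
rates = [rng.lognormvariate(2.0, 0.8) for _ in range(300)]

transformed = {
    "raw": rates,
    "sqrt": [math.sqrt(v) for v in rates],
    "ln": [math.log(v) for v in rates],
    "log10": [math.log10(v) for v in rates],
}
skews = {name: skewness(vals) for name, vals in transformed.items()}
for name, value in skews.items():
    print(f"{name:>5}: skewness = {value:+.3f}")
```

The natural and base-10 logarithms give the same skewness because they differ only by a constant scale factor, so the choice between them is a matter of interpretability rather than distribution shape.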

Experimental Protocol: Assessing and Applying Transformations

This step-by-step protocol integrates transformation into a catalytic data analysis pipeline.

Protocol 3.1: Normality Assessment and Transformation Workflow

Objective: To diagnose non-normality in a dataset of enzyme inhibition percentages and apply an appropriate transformation to enable Z-score analysis.

Materials & Software: Raw catalytic dataset (e.g., .csv file), statistical software (R, Python, or GraphPad Prism), visualization tools.

Procedure:

Initial Data Preparation:

- Import the raw catalytic data. Clean the data by removing non-numeric entries and obvious outliers due to measurement error.

- Plot the raw data as a histogram with a superimposed density curve. Calculate descriptive statistics (mean, median, skewness).

Quantitative Normality Testing:

- Perform the Shapiro-Wilk test (preferred for n < 50) or the Kolmogorov-Smirnov test. Record the test statistic (W or D) and p-value.

- Decision Point: If p-value > 0.05, the data show no significant departure from normality; proceed to Z-score calculation. If p-value ≤ 0.05, proceed to transformation.

Application of Transformation:

- For Logarithmic Transformation: Create a new variable log_transformed = ln(original_value) or log10(original_value). If zeros are present, use ln(original_value + 1).

- For Square Root Transformation: Create a new variable sqrt_transformed = sqrt(original_value).

- Visual Assessment: Generate new histograms and Q-Q plots for each transformed variable.

Post-Transformation Validation:

- Re-run the chosen normality test on the transformed data.

- Compare skewness and kurtosis metrics before and after transformation.

- Decision Point: Select the transformation that yields the highest p-value for normality without over-correction (e.g., turning right skew into left skew).

Downstream Z-score Analysis:

- Using the validated transformed data, calculate the mean (μ) and standard deviation (σ).

- Compute the Z-score for each observation: ( Z_i = (Y'_i - \mu)/\sigma ).

- Use Z-scores for outlier detection (e.g., |Z| > 3), hit identification in screening, or comparative statistical tests.

Troubleshooting: If neither log nor square root achieves normality, consider the Box-Cox transformation or consult non-parametric statistical methods.
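The workflow above can be sketched as follows, assuming NumPy and SciPy are available. The helper names (`choose_transformation`, `z_scores`), the 0.05 cutoff as a default argument, the use of the sample standard deviation (ddof=1), and the synthetic data are all illustrative assumptions. Histograms and Q-Q plots are omitted for brevity.

```python
import numpy as np
from scipy import stats

def choose_transformation(values, alpha=0.05):
    """Pick 'none', 'log', or 'sqrt' by Shapiro-Wilk p-value, as in the protocol."""
    values = np.asarray(values, dtype=float)
    _, p_raw = stats.shapiro(values)
    if p_raw > alpha:                      # no significant departure from normality
        return "none", values, p_raw
    candidates = {}
    if np.all(values > 0):                 # log requires strictly positive data
        candidates["log"] = np.log(values)
    if np.all(values >= 0):                # sqrt requires non-negative data
        candidates["sqrt"] = np.sqrt(values)
    best_name, best_data, best_p = "none", values, p_raw
    for name, data in candidates.items():
        _, p = stats.shapiro(data)
        if p > best_p:
            best_name, best_data, best_p = name, data, p
    return best_name, best_data, best_p

def z_scores(values):
    """Z-scores using the sample mean and sample standard deviation."""
    values = np.asarray(values, dtype=float)
    return (values - values.mean()) / values.std(ddof=1)

# Synthetic right-skewed assay readout (hypothetical data).
rng = np.random.default_rng(0)
readout = rng.lognormal(mean=2.0, sigma=0.8, size=40)
chosen, transformed, p_value = choose_transformation(readout)
z = z_scores(transformed)
print(f"chosen transformation: {chosen} (Shapiro-Wilk p = {p_value:.3f})")
print(f"observations with |Z| > 3: {int(np.sum(np.abs(z) > 3))}")
```

If neither candidate passes, `scipy.stats.boxcox` offers the Box-Cox option mentioned in the troubleshooting note for strictly positive data.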

Visual Workflow: Data Transformation for Z-score Analysis

Diagram Title: Workflow for Data Transformation Prior to Z-score Analysis

The Scientist's Toolkit: Essential Reagents & Software

Table 2: Research Reagent Solutions for Catalytic Data Generation & Analysis

| Item | Function/Description |

|---|---|

| Recombinant Enzyme (e.g., Kinase, Protease) | Catalytic target for activity assays. Source (e.g., baculovirus expression) and purity are critical for reproducible data. |

| Fluorogenic/Luminescent Substrate | Provides a measurable signal upon enzymatic conversion (e.g., release of a fluorophore). Enables high-throughput kinetic readouts. |

| Reference Inhibitor/Control Compound | A well-characterized molecule (e.g., Staurosporine for kinases) for assay validation and normalization between experimental runs. |

| Statistical Software (R/Python with packages) | Essential for transformation (e.g., scipy.stats in Python, car package in R) and normality testing. Enables scripting for reproducibility. |

| Microplate Reader (with kinetic capability) | Instrument for measuring catalytic activity in real-time via absorbance, fluorescence, or luminescence in a 96- or 384-well format. |

| Data Visualization Tool (e.g., ggplot2, Matplotlib) | Generates histograms, Q-Q plots, and box plots for visual assessment of distribution shape before and after transformation. |

| Assay Buffer System (Optimized pH, Cofactors) | Maintains optimal enzymatic activity and compound solubility. Variability here is a major source of non-biological skew in data. |

This document provides application notes and protocols within the context of research on the Z-score method for analyzing normally distributed catalytic data, common in enzyme kinetics, high-throughput screening (HTS), and drug development. The central question is whether the traditional Z-score thresholds of ±3 (encompassing ~99.73% of data) are sufficiently rigorous for quality control and hit identification, or if tighter controls (e.g., ±2, ±2.5) are warranted to reduce false positives and negatives in critical assays.

Data Presentation: Threshold Comparison Table

Table 1: Statistical Outcomes of Different Z-Score Thresholds

| Z-Score Threshold | % of Data Within Threshold (Normal Dist.) | Expected Chance Flag Rate (% of Normal Data Outside Threshold) | Typical Application Context |

|---|---|---|---|

| ± 3.0 | 99.73% | 0.27% | Initial QC of robust, high-signal assays; General process control. |

| ± 2.5 | 98.76% | 1.24% | Intermediate control for moderately critical data streams. |

| ± 2.0 | 95.45% | 4.55% | Tighter control for primary HTS hit selection; Critical catalytic parameter validation. |

| ± 1.96 | 95.00% | 5.00% | Standard for statistical significance (p<0.05); Commonly used in comparative analyses. |
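The percentages in Table 1 follow directly from the standard normal cumulative distribution function. A minimal sketch using Python's standard library (the helper names are hypothetical) shows both directions: coverage for a given threshold, and the threshold for a chosen two-sided tail rate.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # mean 0, standard deviation 1

def coverage(threshold):
    """Fraction of a normal population with |Z| <= threshold."""
    return STD_NORMAL.cdf(threshold) - STD_NORMAL.cdf(-threshold)

def threshold_for_tail(two_sided_rate):
    """Threshold whose two-sided tail area equals the given rate."""
    return STD_NORMAL.inv_cdf(1.0 - two_sided_rate / 2.0)

for t in (3.0, 2.5, 2.0, 1.96):
    inside = coverage(t)
    print(f"|Z| <= {t:4.2f}: {inside:.2%} inside, {1.0 - inside:.2%} outside")

print(f"threshold for a 1% two-sided rate: {threshold_for_tail(0.01):.3f}")
```

Working from a target tail rate to a threshold, rather than choosing a round number such as 2 or 3, can make the trade-off between false positives and missed hits explicit for a given assay.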

Table 2: Impact on Hit Identification in a 100,000-Compound Screen (Assuming Normal Distribution of Control Data)

| Threshold | Compounds Beyond Threshold by Chance (If All Inactive) | Approx. False Positives (If 1% True Hits) | Primary Risk |

|---|---|---|---|

| ± 3.0 | ~ 270 | ~ 267 | Missing true hits (False Negatives). |

| ± 2.0 | ~ 4,550 | ~ 4,505 | Chasing false leads (False Positives). |
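Expected chance counts for a screen of this size follow from the two-sided normal tail area multiplied by the number of inactive compounds. The sketch below assumes inactive compounds are exactly normally distributed; the function name and its parameters are hypothetical.

```python
from statistics import NormalDist

def expected_chance_flags(n_compounds, threshold, true_hit_fraction=0.0):
    """Expected number of inactive compounds with |Z| > threshold."""
    tail = 2.0 * (1.0 - NormalDist().cdf(threshold))   # two-sided tail area
    n_inactive = n_compounds * (1.0 - true_hit_fraction)
    return n_inactive * tail

for threshold in (3.0, 2.0):
    all_null = expected_chance_flags(100_000, threshold)
    with_hits = expected_chance_flags(100_000, threshold, true_hit_fraction=0.01)
    print(f"|Z| > {threshold}: {all_null:,.0f} by chance if all inactive; "
          f"{with_hits:,.0f} false positives if 1% are true hits")
```

The number of true hits recovered at each threshold depends on the effect size of the actives, which this calculation does not model.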

Experimental Protocols

Protocol 1: Establishing Baseline and Threshold Determination for Catalytic Activity Assays

Objective: To define the normal distribution of catalytic rate data and determine an appropriate Z-score threshold for ongoing quality control and outlier detection.

Materials: (See Scientist's Toolkit)

Procedure:

- Control Experiment Execution: Perform the catalytic activity assay (e.g., enzyme kinetic readout) using a standardized positive control (e.g., substrate with known enzyme) and negative control (e.g., no enzyme, inactive mutant) across a minimum of N=30 independent replicates. Replicates should be performed over multiple days by different analysts to capture routine variance.

- Data Collection: Record the primary catalytic metric (e.g., initial velocity V₀, k_cat, % conversion) for each control replicate.

- Normality Assessment: Test the dataset for normal distribution using the Shapiro-Wilk test (for n<50) or the Anderson-Darling test. A p-value > 0.05 indicates no significant departure from normality.

- Baseline Calculation: Calculate the mean (μ) and standard deviation (σ) of the control dataset.

- Z-score Calculation for Future Data: For any new experimental observation (X), calculate its Z-score: Z = (X - μ) / σ.

- Threshold Application & Decision:

- For routine QC: Flag any control sample with |Z| > 3 for investigation.

- For primary hit identification in a screen: Apply a two-threshold system. Flag compounds with |Z| > 3 for confirmation. Use a secondary, narrower band (e.g., |Z| > 2) for prioritization only if the assay signal-to-noise is very high; because this band flags more inactive compounds by chance (about 4.55% versus 0.27% under normality), confirm that follow-up capacity can absorb the additional false positives.
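The baseline, Z-score, and threshold steps of this protocol can be sketched as below. The control values, the labels ("confirm", "prioritize", "pass"), and the function names are hypothetical; the thresholds of 3 and 2 are taken from the two-threshold system described above.

```python
import statistics

def build_baseline(control_values):
    """Return (mu, sigma) from the control replicates (sample SD, n - 1 denominator)."""
    return statistics.fmean(control_values), statistics.stdev(control_values)

def classify(x, mu, sigma, confirm_at=3.0, prioritize_at=2.0):
    """Return (Z, label) for a new observation under the two-threshold system."""
    z = (x - mu) / sigma
    if abs(z) > confirm_at:
        label = "confirm"
    elif abs(z) > prioritize_at:
        label = "prioritize"
    else:
        label = "pass"
    return z, label

# Hypothetical control readouts: 30 replicates, made up for illustration.
controls = [98.0, 100.0, 102.0] * 10
mu, sigma = build_baseline(controls)
for observation in (101.0, 104.0, 106.0):
    z, label = classify(observation, mu, sigma)
    print(f"X = {observation:6.1f}  Z = {z:+.2f}  -> {label}")
```

Keeping the baseline calculation separate from classification makes it straightforward to re-estimate μ and σ when new control replicates are added, without touching the decision logic.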