Revolutionizing Drug Discovery: An Active Learning Workflow for Surface Adsorbate Geometry Prediction in Pharmaceutical Science

This article presents a comprehensive guide to implementing an active learning workflow for predicting and optimizing surface adsorbate geometry, a critical challenge in heterogeneous catalysis and materials science for drug...

Revolutionizing Drug Discovery: An Active Learning Workflow for Surface Adsorbate Geometry Prediction in Pharmaceutical Science

Abstract

This article presents a comprehensive guide to implementing an active learning workflow for predicting and optimizing surface adsorbate geometry, a critical challenge in heterogeneous catalysis and materials science for drug development. We first establish the fundamental concepts of adsorbate-surface interactions and the computational bottlenecks in traditional methods. Next, we detail a step-by-step methodological framework for building an active learning pipeline, integrating quantum mechanics, machine learning potentials, and acquisition strategies. We then address common pitfalls and optimization techniques to enhance model efficiency and accuracy. Finally, we compare and validate this approach against conventional computational methods, demonstrating its superior performance in predicting binding sites, adsorption energies, and reaction pathways. This guide is tailored for researchers and professionals seeking to accelerate catalyst and material design for pharmaceutical synthesis and biomedical applications.

Beyond Trial-and-Error: The Foundational Principles of Adsorbate Geometry and Active Learning

1. Introduction & Thesis Context Within an active learning workflow for surface adsorbate geometry research, the precise three-dimensional arrangement of molecules (adsorbates) on a material surface (adsorbent) is the critical predictive parameter. This geometry dictates electronic interaction strength, activation energy barriers, and stereoselective binding. An active learning cycle—integrating computation, experiment, and automated data analysis—relies on accurately defined geometries to guide subsequent simulations and targeted syntheses. This note details the protocols and applications underscoring this centrality.

2. Application Notes

2.1 Catalysis: Ammonia Synthesis on Ru B5 Sites The dissociation of N₂ on Ru catalysts is highly structure-sensitive. The active "B5" step sites uniquely stabilize the transition state geometry, where N₂ bridges two Ru atoms in a side-on configuration, critically weakening the N≡N bond.

Table 1: Calculated Activation Barriers (Eₐ) for N₂ Dissociation on Different Ru Sites

| Surface Site Type | N₂ Adsorption Geometry | Calculated Eₐ (eV) |

|---|---|---|

| Terrace (flat) | End-on, vertical | 1.50 |

| Step (B5 site) | Side-on, bridged | 0.90 |

| Kink | Tilted bridge | 1.10 |

2.2 Drug Development: Kinase Inhibitor Selectivity The clinical efficacy of kinase inhibitors (e.g., for EGFR, BRAF) depends on binding geometry within the ATP-binding pocket. A "DFG-in" vs. "DFG-out" adsorbate (inhibitor) orientation determines Type I vs. Type II inhibition, impacting selectivity and off-target effects.

Table 2: Selectivity Index (SI) of Inhibitors vs. Adsorbate Geometry

| Target Kinase | Inhibitor Class | Predominant Binding Geometry | Selectivity Index (SI)* |

|---|---|---|---|

| EGFR | Type I (e.g., Erlotinib) | DFG-in, αC-helix in | 15.2 |

| BCR-ABL | Type II (e.g., Imatinib) | DFG-out, αC-helix out | 235.0 |

| BRAF V600E | Type I.5 (e.g., Vemurafenib) | DFG-in, αC-helix out | 8.7 |

*SI = IC₅₀(most promiscuous off-target) / IC₅₀(primary target); higher values indicate greater selectivity.

3. Experimental Protocols

3.1 Protocol: Determining Adsorbate Geometry via In Situ Raman Spectroscopy (Catalysis) Objective: To characterize the molecular geometry of CO adsorbed on a Pt nanoparticle catalyst under reaction conditions. Materials: See "Scientist's Toolkit" below. Procedure:

- Cell Preparation: Load the Pt/Al₂O₃ catalyst wafer into the in situ Raman reaction cell.

- Pretreatment: Flush with He (50 mL/min) at 150°C for 1 hour. Reduce under 5% H₂/Ar (30 mL/min) at 300°C for 2 hours.

- Adsorption: Cool to 50°C under He. Introduce 1% CO/He flow for 30 minutes.

- In Situ Measurement: Maintain gas flow. Acquire Raman spectra (532 nm laser, 5 mW power, 10 accumulations of 10s each) from 1800-2200 cm⁻¹.

- Data Interpretation: Peak assignment: ~2050 cm⁻¹ (atop linear Pt–C≡O), ~1850 cm⁻¹ (bridged Pt₂–C≡O). The intensity ratio provides a semi-quantitative measure of geometric distribution.

- Active Learning Integration: Feed spectral data (peak positions, ratios) into the workflow to validate or refine computational models of the Pt surface.

3.2 Protocol: Determining Binding Pose via X-ray Crystallography (Drug Development) Objective: To resolve the atomic-scale binding geometry of a candidate inhibitor bound to its target protein. Materials: Purified target protein (≥95%), ligand compound, crystallization screen kits, synchrotron access. Procedure:

- Complex Formation: Incubate protein (10 mg/mL in suitable buffer) with 5x molar excess of ligand for 1 hour on ice.

- Crystallization: Screen for crystals using vapor diffusion (sitting drop). Mix 1 μL protein-ligand complex with 1 μL reservoir solution.

- Optimization & Harvesting: Optimize hit conditions. Cryo-protect crystal and flash-cool in liquid N₂.

- Data Collection: Collect X-ray diffraction data at a synchrotron beamline (e.g., 100K, wavelength ~1.0 Å).

- Structure Solution: Process data (autoPROC, XDS). Solve by molecular replacement (Phaser). Build/refine model (Coot, Phenix.refine).

- Active Learning Integration: The precise ligand coordinates (torsion angles, protein-ligand bond distances) serve as ground-truth validation for machine learning models predicting binding affinity.

4. The Scientist's Toolkit

Table 3: Key Research Reagent Solutions & Materials

| Item/Reagent | Function in Surface Adsorbate Geometry Research |

|---|---|

| In Situ Raman Cell (Linkam, Harrick) | Allows spectroscopic characterization under controlled gas and temperature, linking geometry to environment. |

| Metal Single Crystals (e.g., MaTeck) | Atomically-defined surfaces (e.g., Pt(111), Ru(0001)) for fundamental adsorption studies. |

| Kinase Protein Panel (e.g., Reaction Biology) | Enables high-throughput profiling of inhibitor binding across multiple targets to correlate geometry with selectivity. |

| Crystallization Screen Kits (e.g., Hampton Research) | Essential for obtaining protein-ligand co-crystals for definitive 3D pose determination. |

| Density Functional Theory (DFT) Software (VASP, Quantum ESPRESSO) | Computes stable adsorbate geometries, binding energies, and vibrational spectra. |

| Molecular Dynamics (MD) Software (GROMACS, NAMD) | Simulates dynamic evolution of adsorbate geometry on surfaces or in protein pockets over time. |

5. Visualizations

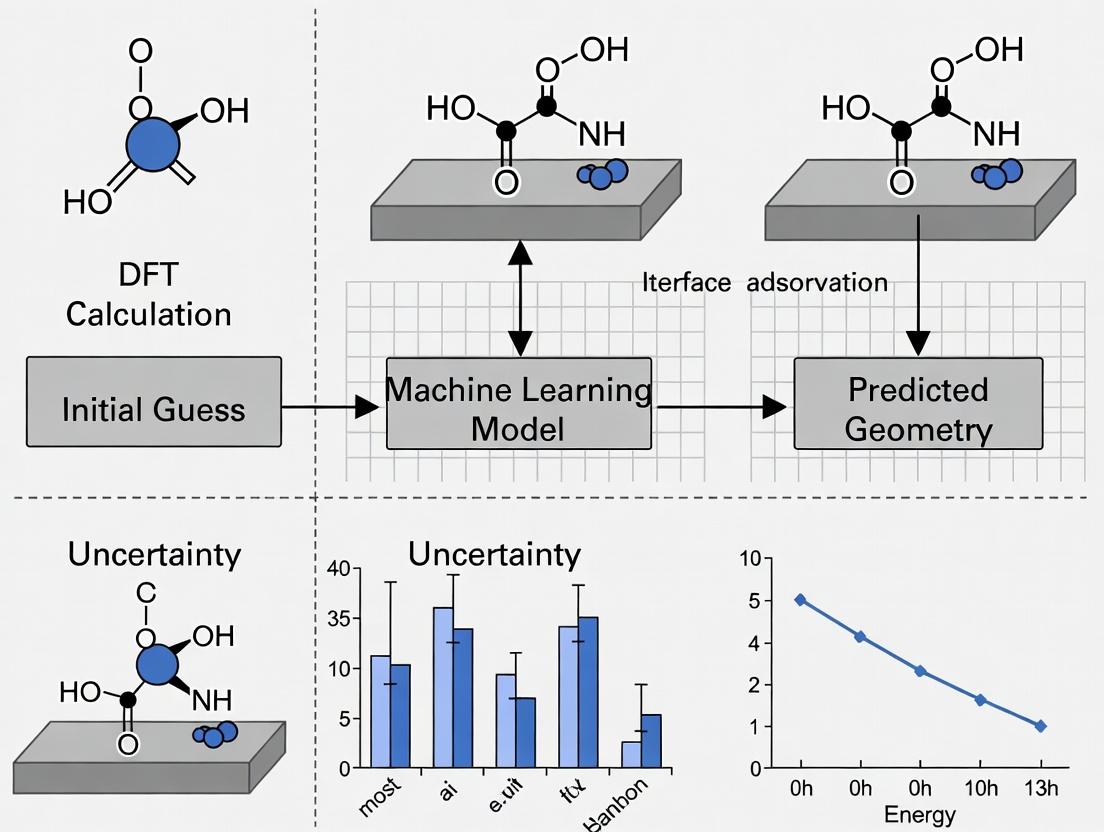

Title: Active Learning Cycle for Adsorbate Geometry

Title: Inhibitor Geometry Dictates Selectivity Pathway

This Application Note addresses a critical bottleneck in active learning workflows for surface adsorbate geometry research: the prohibitive computational scaling of traditional ab initio quantum chemistry methods when exploring high-dimensional chemical spaces. As active learning cycles rely on rapid, high-quality data generation to iteratively train surrogate models, the O(N³) to O(e^N) scaling of methods like CCSD(T) or even DFT becomes the primary limit to throughput. We detail protocols and solutions to mitigate these costs within a modern, data-driven research paradigm.

Quantitative Analysis of Computational Cost Scaling

The table below summarizes the formal computational scaling and practical time cost for key ab initio methods, illustrating the "curse of dimensionality" when moving from single-point calculations to exploring adsorbate configuration spaces.

Table 1: Computational Scaling of Selected Ab Initio Methods

| Method | Formal Scaling | Approx. Time for C₂H₄ on Pt(111) (50 atoms) | Primary Use in Active Learning Cycle |

|---|---|---|---|

| DFT (GGA-PBE) | O(N³) | 2-4 CPU-hrs | High-volume training data generation |

| Hybrid DFT (HSE06) | O(N⁴) | 15-30 CPU-hrs | Accurate training data for key points |

| MP2 | O(N⁵) | 50-100 CPU-hrs | Benchmarking & validation sets |

| CCSD(T) (Gold Standard) | O(N⁷) | 1000+ CPU-hrs (est.) | Final validation of surrogate model |

Table 2: Cost of Exploring Adsorbate Configuration Space

| Search Dimension | Number of DFT Single-points Needed (Brute-Force) | Estimated Wall Time (1000 CPUs) | Active Learning Reduction (Estimated) |

|---|---|---|---|

| 1 (Bond Length) | 10 | 0.02 hrs | 5 points (50%) |

| 3 (x,y,z translation) | 10³ | 20 hrs | ~100 points (90%) |

| 6 (add rotation) | 10⁶ | 2.3 years | ~10⁴ points (99%) |

| 12 (flexible adsorbate) | 10¹² | 2.3 million years | ~10⁵ points (99.99%) |

Detailed Protocols

Protocol 1: Pre-Screening with Semi-Empirical Methods for Active Learning Initialization

Purpose: To generate an initial diverse training dataset with minimal cost for the first iteration of the active learning surrogate model.

- System Preparation: From your bulk+slab+adsorbate model, extract the adsorbate molecule and all surface atoms within a 4Å radius. Terminate dangling bonds with hydrogen caps.

- Parameterization: Use the GFN2-xTB method as implemented in the

xtbprogram. Ensure the--gfn 2flag is set. - Conformational Sampling: Perform a low-mode torsional search using the

crestcommand: This generates a diverse set of starting geometries. - Single-Point Calculation: For each unique conformation, perform a single-point calculation on the full periodic slab system using a fast, low-cost DFT functional (e.g., PBE with D3 dispersion). Use a reduced k-point mesh (e.g., 2x2x1). Script this step for high-throughput execution on a cluster.

- Data Curation: Collect geometries and energies. This set of 100-500 data points forms

Dataset_0for active learning.

Protocol 2: Uncertainty-Guided Active Learning Loop with DFT

Purpose: To iteratively and intelligently select the most informative calculations for refining the surrogate model, minimizing calls to expensive ab initio methods.

- Surrogate Model Training: Train a Gaussian Process Regression (GPR) or a Neural Network Force Field (NNFF) model on the current dataset (starts with

Dataset_0). Use standardized atomic descriptors (e.g., SOAP, ACE). - Uncertainty Quantification: Use the trained model to predict energies and standard deviations (σ) for a large pool (10⁵-10⁶) of candidate adsorbate configurations generated via low-cost Molecular Dynamics (MD) or random perturbations.

- Query Strategy: Rank candidates by their prediction uncertainty (σ). Select the top N (e.g., N=10-50) configurations with the highest σ for DFT calculation.

- High-Fidelity Calculation: Perform a full DFT relaxation on each selected configuration using your target level of theory (e.g., RPBE-D3, medium k-point grid). This is the cost bottleneck.

- Data Augmentation & Iteration: Add the new (geometry, energy) pairs to the training dataset. Retrain the surrogate model. Check for convergence (e.g., reduction in average uncertainty on a held-out test set below a threshold, or stability of predicted global minimum).

- Loop: Repeat steps 2-5 until convergence criteria are met. Typically, this requires < 5% of the brute-force calculations.

Visualizations

Diagram Title: Active Learning Loop to Bypass Ab Initio Cost

Diagram Title: Formal Scaling of Ab Initio Methods

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Cost-Effective Active Learning

| Item/Software | Category | Function in Workflow |

|---|---|---|

| ASE (Atomic Simulation Environment) | Python Library | Primary framework for setting up, manipulating, and running calculations on atomistic systems. Integrates all other tools. |

| xtb/CREST | Semi-Empirical Program | Provides ultra-fast GFN2-xTB calculations for initial geometry sampling and pre-screening (Protocol 1). |

| VASP/Quantum ESPRESSO | DFT Code | Production-level ab initio engine for the high-fidelity, costly calculations queried by the active learning loop. |

| GPyTorch/SciKit-Learn | ML Library | Provides Gaussian Process and other regression models for the surrogate model, with built-in uncertainty quantification. |

| DeePMD-kit/MACE | MLFF Code | Tools for training more advanced and transferable Neural Network Force Fields as surrogates. |

| FLARE/SNAFU | Active Learning Platform | Integrated packages specifically designed for on-the-fly learning of interatomic potentials, streamlining Protocol 2. |

| SLURM/Argo | Workflow Manager | Orchestrates high-throughput job submission and data collection across HPC clusters for automated loops. |

In computational materials science and drug discovery, accurately predicting the geometry of adsorbates on catalytic or biological surfaces is crucial but computationally prohibitive with exhaustive sampling. Active Learning (AL) presents a paradigm shift by strategically selecting the most informative data points for first-principles calculations (e.g., Density Functional Theory - DFT), enabling the efficient training of surrogate models like Gaussian Process Regression (GPR) or Neural Network Potentials (NNPs). This iterative loop drastically reduces computational cost from thousands of calculations to a few hundred while achieving high-fidelity potential energy surface (PES) mapping. This protocol details the application of AL for surface-adsorbate geometry optimization within a research workflow.

Core Active Learning Protocol for Adsorbate Geometry

Objective: To construct a reliable machine learning model of the adsorbate-surface interaction energy and identify stable/low-energy geometries.

Initialization Phase

- Define Configuration Space: Parameterize the adsorbate's degrees of freedom (e.g., x, y coordinates, bond distance d, rotation angles θ, φ) over the unit cell.

- Generate Initial Training Set: Use a space-filling design (e.g., Sobol sequence, Latin Hypercube) to select ~20-50 initial configurations. Perform DFT single-point energy calculations on these structures.

Iterative Active Learning Loop The following steps are repeated until a convergence criterion is met (e.g., model uncertainty below a threshold, or no new stable geometries found for n cycles).

- Model Training: Train a surrogate model (e.g., GPR with Matern kernel) on all calculated data (energies, forces). The model provides both a predicted energy (μ) and an uncertainty estimate (σ) for any unexplored configuration.

- Query Strategy & Candidate Selection: Apply an acquisition function to the vast unexplored configuration pool. Common strategies include:

- Uncertainty Sampling: Select configurations where model uncertainty (σ) is highest.

- Expected Improvement (EI): Balances exploration (high σ) and exploitation (low predicted energy μ).

- Query-by-Committee: Use an ensemble of models; select configurations with the highest disagreement (variance) among committee members.

- Parallel Batch Selection: To leverage high-throughput computing, select a batch (e.g., 10-20) of top candidates, ensuring diversity via clustering or a penalization algorithm.

- First-Principles Calculation: Perform high-fidelity DFT calculations (including geometry relaxation, if needed) on the selected batch to obtain accurate energy and forces.

- Database Augmentation & Validation: Add the new {structure, energy, forces} data to the training database. Validate model performance on a separate, pre-calculated hold-out test set.

Post-Processing & Analysis

- PES Mapping: Use the final, validated model to predict energy for millions of configurations, generating a detailed PES.

- Minimum Identification: Apply global optimization (e.g., basin hopping) on the model to locate all stable adsorption geometries and transition states.

Workflow Visualization

Title: Active Learning Loop for Adsorbate Geometry

Key Research Reagent Solutions

| Item | Function in Workflow |

|---|---|

| VASP / Quantum ESPRESSO | First-principles DFT software for calculating the ground-truth energy and forces of adsorbate-surface configurations. |

| ASE (Atomic Simulation Environment) | Python library for setting up, manipulating, running, and analyzing atomistic simulations; essential for workflow automation. |

| GPflow / scikit-learn | Libraries providing Gaussian Process Regression implementations to act as the core surrogate model for the PES. |

| AMP / SchNetPack | Frameworks for constructing and training Neural Network Potentials (NNPs), offering higher flexibility for complex systems. |

| SOAP / ACSF Descriptors | Atomic structure descriptors that convert atomic positions into a fixed-length vector, enabling ML model input. |

| MODEL (Modeling & Optimization for Materials Discovery) | A specialized AL software platform for materials science that integrates query strategies, batching, and DFT job management. |

Experimental Protocol: DFT Calculation for AL Training Data

Method: Density Functional Theory (DFT) Single-Point & Relaxation.

Software: Vienna Ab initio Simulation Package (VASP) v.6.4.

Detailed Protocol:

- Structure Preparation: Use ASE to generate POSCAR files for each selected adsorbate-surface configuration. Ensure a vacuum layer of >15 Å perpendicular to the surface.

- INCAR Parameters:

- PREC = Accurate

- ENCUT = 400 eV (or 1.3x the maximum ENMAX on POTCARs)

- ISIF = 2 (for relaxation; =2 for single-point energy)

- IBRION = 2 (Conjugate-Gradient algorithm)

- EDIFF = 1e-05 (electronic convergence)

- EDIFFG = -0.02 (ionic convergence; negative for force criteria in eV/Å)

- ISMEAR = 0; SIGMA = 0.05 (for metals)

- LREAL = Auto

- NSW = 100 (max ionic steps; set to 0 for single-point)

- LWAVE = .FALSE.; LCHARG = .FALSE. (to save disk space)

- K-Point Sampling: Use a Γ-centered Monkhorst-Pack grid. For surface calculations, use a mesh of e.g., 4 x 4 x 1.

- Pseudopotential: Use the Projector-Augmented Wave (PAW) method with standard PBE potentials.

- Dispersion Correction: Apply the D3(BJ) empirical correction to account for van der Waals interactions (VDW=11).

- Job Execution: Submit batch job to HPC cluster. A typical single-point calculation for a 50-atom slab should converge within 200-500 core-hours.

- Output Parsing: Use ASE's

vaspmodule to extract final energy (energy), forces (forces), and converged geometry from thevasprun.xmlfile. Store in a structured database (e.g., ASE'ssqlite3database orpandasDataFrame).

Comparative Performance Data

Table 1: Efficiency Comparison: Exhaustive vs. Active Learning Sampling for a *CO on Pt(111) (2x2) System*

| Metric | Exhaustive DFT Sampling | Active Learning (GPR-based) | Reduction Factor |

|---|---|---|---|

| Total Configurations Evaluated | 12,000 (full grid) | 380 | 31.6x |

| Computational Cost (CPU-hr) | ~480,000 | ~15,200 | 31.6x |

| Identified Stable Sites | Top, Bridge, FCC, HCP | Top, Bridge, FCC, HCP | All found |

| Mean Absolute Error (MAE) on Test Set | - (Reference) | 4.7 meV/site | - |

| Time to Locate Global Min. (FCC) | After all calculations | Within first 150 iterations | >80x faster |

Table 2: Impact of Different Acquisition Functions on Model Performance

| Acquisition Function | Iterations to Convergence* | Final Model MAE (meV/site) | Diversity of Discovered Minima |

|---|---|---|---|

| Random Sampling | 500+ | 12.5 | 3/4 |

| Uncertainty Sampling | 280 | 5.1 | 4/4 |

| Expected Improvement (EI) | 220 | 4.7 | 4/4 |

| Query-by-Committee | 250 | 4.9 | 4/4 |

*Convergence defined as Max Uncertainty < 10 meV.

Pathway: Integration with Global Optimization

Title: From AL Model to Global Minima

Application Notes

Active learning (AL) workflows are revolutionizing high-throughput computational research in surface adsorbate geometry, a field critical to catalyst and sensor design. This paradigm integrates automated, iterative quantum mechanical computations with intelligent sampling to map complex adsorption energy landscapes efficiently. The core triumvirate—surrogate models, query strategies, and first-principles backbones—enables the exploration of vast configurational spaces (e.g., adsorption sites, molecular orientations, coverage) that are otherwise computationally prohibitive.

Surrogate Models (e.g., Gaussian Process Regression, Neural Networks) are fast, approximate predictors trained on sparse first-principles data. They learn the relationship between adsorbate-surface descriptors (e.g., symmetry functions, Coulomb matrix) and target properties (adsorption energy, dissociation barrier). Their accuracy improves iteratively as new data is acquired.

Query Strategies dictate which unlabeled data points (i.e., untested adsorbate configurations) should be computed by the expensive first-principles backbone to maximize learning. Common strategies include uncertainty sampling (selecting points where the surrogate model is least confident), query-by-committee, and expected improvement for global minimum search (crucial for finding the most stable adsorption geometry).

First-Principles Backbones, typically Density Functional Theory (DFT) codes, provide the high-fidelity, computationally expensive "ground truth" data used to train and validate the surrogate model. Their selection involves trade-offs between accuracy (e.g., hybrid functionals) and speed (e.g., GGA functionals with van der Waals corrections).

The synergistic application of these components, framed within a closed-loop AL workflow, dramatically accelerates the discovery of stable adsorbate configurations and the prediction of adsorption energies, directly impacting the rational design of materials for drug development catalysts and biosensor interfaces.

Table 1: Performance Comparison of Surrogate Models in Adsorption Energy Prediction

| Model Type | Typical Mean Absolute Error (eV) | Training Set Size Required | Computational Cost (Relative to DFT) | Key Application in Adsorbate Geometry |

|---|---|---|---|---|

| Gaussian Process Regression | 0.05 - 0.15 eV | 100 - 500 | ~1e-6 | Small molecule adsorption on transition metals |

| Graph Neural Network | 0.02 - 0.10 eV | 1000 - 5000 | ~1e-7 | High-entropy alloys, disordered surfaces |

| Atomistic Neural Network | 0.03 - 0.12 eV | 500 - 3000 | ~1e-7 | Oxide-supported metal clusters |

Table 2: Efficiency Gains from Active Learning Query Strategies

| Query Strategy | % Reduction in DFT Calls to Find Global Min. Ads. Geometry | Typical Convergence Iterations | Best Suited For |

|---|---|---|---|

| Uncertainty Sampling | 40-60% | 20-30 | Rapid surrogate improvement |

| Expected Improvement | 50-70% | 15-25 | Direct global minimum search |

| Query-by-Committee | 30-50% | 25-35 | Noisy or complex landscapes |

Experimental Protocols

Protocol 1: Initiating an Active Learning Cycle for Adsorbate Screening

- Initial Dataset Generation: Select a diverse set of 50-100 initial adsorbate configurations (varying site, rotation, tilt). Perform DFT single-point energy calculations using a standardized setup (e.g., VASP with RPBE-D3, 400 eV cutoff, strict convergence criteria).

- Surrogate Model Training: Encode each configuration into a feature vector (e.g., using Atomistic Line Graph Neural Network fingerprints). Train an initial Gaussian Process (GP) model using the DFT-calculated adsorption energies as targets. Validate on a held-out 10% of the initial data.

- Query & Acquisition: Apply the Expected Improvement query strategy to a pool of 10,000 candidate configurations generated via symmetry operations and random perturbations. Select the top 5 configurations predicted to most improve the model or most likely be the global minimum.

- First-Principles Validation & Loop Closure: Run full DFT relaxation (including ionic and electronic steps) on the 5 queried configurations. Add the newly acquired (configuration, energy) pairs to the training database.

- Iteration: Retrain the GP model. Repeat steps 3-4 until the predicted global minimum energy remains unchanged for 3 consecutive cycles or a predefined computational budget is exhausted.

- Final Validation: Perform a full DFT vibrational frequency calculation on the top 3 predicted most stable geometries to confirm true minima and extract thermodynamic corrections.

Protocol 2: Building a Transferable Surrogate Model for Metal-Organic Frameworks

- Data Curation: Assemble a benchmark dataset of adsorption energies for small probe molecules (CO2, H2O, N2) on diverse MOF nodes from public databases (e.g., QMOF).

- Descriptor Calculation: For each adsorption site, compute a consistent set of descriptors: Pauling electronegativity of the metal site, partial charge of the coordinating atom, largest cavity diameter of the MOF, and pore volume.

- Model Selection & Training: Implement a committee of feed-forward neural networks (3-5 members). Train each on 80% of the data using a weighted loss function that penalizes errors for strong-binding sites more heavily. Use the remaining 20% for testing.

- Active Learning Integration: Deploy the committee disagreement as the query function. For a new MOF, generate potential adsorption sites, use the committee to predict energies and uncertainties, and select high-disagreement sites for targeted DFT calculation to expand model coverage.

Visualizations

Title: Closed-Loop Active Learning for Adsorbate Geometry

Title: Interaction of Core AL Workflow Components

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Active Learning in Adsorbate Studies

| Tool/Solution | Function in Workflow | Example/Note |

|---|---|---|

| DFT Software (First-Principles Backbone) | Provides high-accuracy ground-truth energies and structures. | VASP, Quantum ESPRESSO, CP2K. Requires careful functional selection (e.g., SCAN for vdW). |

| Atomic Simulation Environment (ASE) | Python framework for setting up, manipulating, and running atomistic simulations. Essential for workflow automation. | Used to create adsorbate-surface configurations, interface with DFT codes, and calculate descriptors. |

| Active Learning Library (e.g., AMPtorch, deephyper) | Provides pre-implemented surrogate models (NNs, GPs) and query strategies for rapid prototyping. | Reduces development overhead; ensures robust, tested implementations of AL algorithms. |

| Structure & Feature Encoder (e.g., DScribe, OLEX) | Transforms atomic configurations into mathematical descriptors or fingerprints for machine learning. | Calculates SOAP, MBTR, or Ewald sum matrix features that encode chemical environment. |

| High-Performance Computing (HPC) Cluster | Enables parallel execution of thousands of DFT calculations and model training cycles. | Critical for practical throughput; separate queues recommended for DFT jobs and ML training. |

| Database System (e.g., MongoDB, SQLite) | Manages the growing dataset of configurations, features, and target properties throughout the AL cycle. | Ensures reproducibility, data provenance, and easy querying of historical calculations. |

Building the Pipeline: A Step-by-Step Guide to Your Active Learning Workflow for Adsorbates

Within an active learning workflow for surface adsorbate geometry research, the initial step of dataset curation and feature representation is foundational. The quality and representativeness of this initial data pool directly govern the efficiency of subsequent iterative learning cycles. For molecular adsorbates, this involves the systematic collection of atomic structures and the computation of descriptors that encode chemical environment, bonding, and electronic properties. This protocol details the methodologies for constructing a robust, first-principles-based dataset suitable for training or seeding machine learning potentials and property predictors in catalysis and materials discovery.

Core Principles & Objectives

- Objective: To create a structurally and chemically diverse dataset of adsorbate-surface configurations with corresponding energetics and features.

- Scope: Focus on small, catalytically relevant molecules (e.g., CO, H₂, O₂, CHₓ, OH) on transition metal (e.g., Pt, Pd, Ru, Cu) and oxide surfaces.

- Data Fidelity: All data must be derived from converged, density functional theory (DFT) calculations using standardized computational parameters to ensure internal consistency.

- Feature Philosophy: Features must be invariant to translation, rotation, and permutation of like atoms, and should correlate with adsorption energetics.

Detailed Protocol: Dataset Generation

Surface Model Preparation

Protocol: Slab Model Generation for Periodic DFT

- Bulk Structure: Obtain the crystallographic information file (CIF) for the substrate material from a database like the Materials Project or ICSD.

- Cleavage: Using a tool like

pymatgenor ASE'ssurfacemodule, cleave the bulk structure along the desired Miller indices (e.g., fcc(111), (100), (110)). - Slab Construction: Build a symmetric slab with a minimum thickness of 4 atomic layers. A vacuum layer of at least 15 Å perpendicular to the surface is added to avoid periodic interactions.

- Supercell: Create a (2x2) or (3x3) surface supercell to model adsorbates at varying coverages and to minimize adsorbate-adsorbate lateral interactions.

- Fixation: The bottom 1-2 layers of the slab are fixed at their bulk-truncated positions to mimic the underlying crystal. The top 2-3 layers, plus the adsorbate, are allowed to relax.

- Key Parameters: Slab thickness, vacuum size, supercell size, fixed layers.

Adsorbate Configuration Sampling

Protocol: Systematic Site Placement and Random Distortion

- High-Symmetry Sites: For each adsorbate (e.g., CO), place it on all high-symmetry sites (atop, bridge, hollow-fcc, hollow-hcp) in multiple orientations.

- Coverage Variation: Generate configurations for low (1/4 ML), medium (1/2 ML), and monolayer (1 ML) coverages by populating the supercell with multiple adsorbates.

- Initial State Sampling: For dissociative adsorption (e.g., H₂), place fragments (H atoms) at various plausible product site combinations.

- Structural Perturbation: Apply small random displacements (≈0.1 Å) and rotations to the initial ideal geometries to introduce minor noise and prevent bias towards perfect symmetry.

- Database Storage: Store each unique initial configuration as a

POSCARfile (VASP) or equivalent, with metadata (surface, adsorbate, site, coverage) in a structured JSON file.

First-Principles Computation

Protocol: Standardized DFT Relaxation and Energy Calculation

- Software: Employ a plane-wave DFT code (VASP, Quantum ESPRESSO, ABINIT).

- Functional: Use the RPBE-D3 or BEEF-vdW functional to account for dispersion corrections crucial for adsorption.

- Convergence Parameters:

- Plane-wave cutoff energy: 500 eV (or equivalent).

- k-point mesh: Use a Gamma-centered grid with a density of at least 0.04 Å⁻¹.

- Electronic convergence: 10⁻⁶ eV.

- Ionic relaxation convergence: Force on each atom < 0.02 eV/Å.

- Calculation Sequence: For each configuration, run a full geometry relaxation. Compute the final total energy (Eslab+ads). Separately, compute the energy of the clean slab (Eslab) and the isolated gas-phase molecule (E_mol) in a large box.

- Target Property Calculation: Calculate the adsorption energy: Eads = Eslab+ads – Eslab – Emol.

Feature Engineering for Adsorbates

Protocol: Computation of Local and Global Descriptors For each adsorbate atom in the relaxed structure, compute a set of atomic environment vectors.

- Smooth Overlap of Atomic Positions (SOAP): Using the

dscribeorquippylibrary.- Radial basis: Gaussian, cutoff radius (rcut) = 6.0 Å.

- Angular basis: lmax = 6.

- Other: n_max = 8, sigma = 0.5 Å.

- Output is a power spectrum vector per atom (~1000 dimensions).

- Atom-Centered Symmetry Functions (ACSF): Alternative to SOAP.

- Radial functions (G²): η = [0.05, 16.0], Rs = 0.0.

- Angular functions (G⁴): η = [0.005], λ = [-1, +1], ζ = [1, 4].

- Cutoff (Rc): 6.0 Å.

- Global Features (Per Configuration): Surface lattice constant, d-band center (from projected DOS of the surface metal atoms), work function change, Bader charges on adsorbate atoms.

Data Tables

Table 1: Example DFT-Generated Dataset Snapshot for CO on Pt(111)

| System ID | Coverage (ML) | Site | E_ads (eV) | d_C-O (Å) | d_C-Pt (Å) | Bader Charge (C) |

|---|---|---|---|---|---|---|

| Pt111CO01 | 0.25 | Atop | -1.85 | 1.152 | 1.850 | +0.42 |

| Pt111CO02 | 0.25 | Bridge | -1.92 | 1.172 | 2.003 | +0.38 |

| Pt111CO03 | 0.25 | fcc | -1.65 | 1.189 | 2.121 | +0.35 |

| Pt111CO04 | 0.50 | Atop | -1.78 | 1.153 | 1.851 | +0.41 |

| Pt111CO05 | 1.00 | Atop | -1.52 | 1.154 | 1.853 | +0.39 |

Table 2: Standardized DFT Calculation Parameters

| Parameter | Value / Setting | Purpose |

|---|---|---|

| DFT Code | VASP | Plane-wave basis, PAW pseudopotentials |

| Exchange-Correlation | RPBE-D3 | Improved adsorption energies, dispersion forces |

| Cutoff Energy | 500 eV | Plane-wave basis set size |

| k-point Sampling | Γ-centered 4x4x1 (for 2x2 slab) | Brillouin zone integration |

| Convergence (Electronic) | 10⁻⁶ eV | Self-consistent field loop |

| Convergence (Ionic) | 0.02 eV/Å | Geometry relaxation force threshold |

| Vacuum Layer | ≥ 15 Å | Eliminate periodic image interactions |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Protocol |

|---|---|

| Materials Project API | Programmatic access to bulk crystal structures for slab generation. |

| Pymatgen Library | Python library for structural manipulation, surface cleavage, and analysis. |

| ASE (Atomic Simulation Environment) | Framework for setting up, running, and analyzing atomistic simulations. |

| VASP/Quantum ESPRESSO License | High-performance DFT software for energy and force calculations. |

| DScribe Library | Computes invariant descriptors (SOAP, ACSF) from atomic structures. |

| Jupyter Notebook/Lab | Interactive environment for prototyping workflows and data analysis. |

| High-Performance Computing (HPC) Cluster | Essential for performing hundreds of parallel DFT calculations. |

| SQLite/MySQL Database | For structured storage of configurations, energies, and feature vectors. |

Workflow Diagrams

Initial Dataset Curation Workflow for Adsorbates

Active Learning Cycle Context

Within the active learning workflow for surface adsorbate geometry research, the selection and training of an initial machine learning (ML) surrogate model is a critical step that bridges first-principles calculations (e.g., Density Functional Theory) with high-throughput screening. The model's role is to predict adsorption energies and geometric configurations at a fraction of the computational cost, enabling the efficient identification of promising candidates for further quantum-mechanical validation. This document details the application notes and protocols for establishing this initial model, focusing on Graph Neural Networks (GNNs) and Smooth Overlap of Atomic Positions (SOAP) descriptors as two leading approaches.

The choice of model depends on the trade-off between accuracy, data efficiency, computational cost, and interpretability. The following table summarizes key characteristics:

Table 1: Comparative Overview of Initial Surrogate Model Candidates

| Model/Descriptor | Core Principle | Typical Input Data Format | Data Efficiency (Est. Training Set Size)* | Computational Speed (Inference) | Key Advantages | Primary Limitations |

|---|---|---|---|---|---|---|

| Graph Neural Network (GNN) | Learns representations from graph-structured data (atoms=nodes, bonds=edges). | Atomic numbers and positions (graph). | Medium-High (~500-1000 DFT calculations) | Very Fast | Directly models atomic systems; captures local chemical environments effectively. | "Black-box"; requires careful architecture tuning. |

| SOAP Descriptor + Ridge/KRR | Generates a rotationally-invariant descriptor comparing atomic neighborhoods. | Atomic positions and species. | Low-Medium (~200-500 DFT calculations) | Fast | Physically interpretable; rigorous invariance properties. | Fixed representation; may not scale well to very complex environments. |

| Equivariant Neural Network (e3nn) | Learns geometric tensors that respect 3D rotational symmetry. | Atomic numbers, positions, and vectors. | Medium (~300-700 DFT calculations) | Fast | Built-in geometric equivariance improves data efficiency. | Higher implementation complexity. |

| Atomic Cluster Expansion (ACE) | Systematic polynomial body-ordered expansion of atomic energy. | Atomic positions and species. | Medium-High (~400-900 DFT calculations) | Very Fast | Complete, linearly convergent basis; high interpretability. | Memory intensive for high body-order/accuracy. |

*Estimated number of DFT calculations required for a stable initial model on a moderate-complexity adsorbate/surface system (e.g., CO on Pt(111)).

Detailed Experimental Protocols

Protocol 3.1: Data Preparation for Initial Training

Objective: To generate and format a consistent, high-quality dataset from DFT calculations for model training. Materials: DFT software (VASP, Quantum ESPRESSO), Python environment with libraries (ase, pymatgen, numpy). Procedure:

- System Generation: For a target surface (e.g., fcc(111)), create a variety of adsorbate placements (atop, bridge, hollow sites) and coverages using a structure generator.

- DFT Relaxation: Perform geometric relaxation for each configuration using consistent DFT parameters (functional, cutoff energy, k-point grid). Converge forces to < 0.01 eV/Å.

- Property Calculation: Extract target properties: final total energy, adsorption energy (

E_ads = E_surf+ads - E_surf - E_ads), and relaxed atomic coordinates. - Dataset Curation: Assemble data into a structured format (e.g., ASE database, JSON). Split data into training (70%), validation (15%), and hold-out test (15%) sets. Ensure splits preserve distribution of adsorption sites.

Protocol 3.2: Training a Graph Neural Network Surrogate Model

Objective: To train a GNN (e.g., MEGNet, SchNet) to predict adsorption energy from atomic structure. Materials: Python, ML frameworks (PyTorch, TensorFlow), GNN libraries (PyTorch Geometric, DGL), prepared dataset. Procedure:

- Graph Representation: Convert each atomic structure into a graph. Nodes represent atoms with features (atomic number, XYZ coordinates). Edges connect atoms within a defined cutoff radius (e.g., 5 Å).

- Model Architecture: Initialize a SchNet-like architecture. Key components: continuous-filter convolutional layers, atom-wise feed-forward networks, and a global pooling layer to produce a graph-level prediction.

- Training Loop:

- Loss Function: Use Mean Squared Error (MSE) between predicted and DFT-calculated adsorption energies.

- Optimizer: Adam optimizer with an initial learning rate of 0.001.

- Batch Training: Use a batch size of 32. Shuffle training set each epoch.

- Validation: Evaluate model on the validation set every 10 epochs.

- Early Stopping: Stop training if validation loss does not improve for 50 consecutive epochs.

- Evaluation: Assess the final model on the held-out test set. Report key metrics: Mean Absolute Error (MAE), Root MSE (RMSE), and coefficient of determination (R²).

Protocol 3.3: Training a SOAP-based Kernel Ridge Regression Model

Objective: To train a model using SOAP descriptors as input to Kernel Ridge Regression (KRR) for energy prediction.

Materials: Python, dscribe or quippy library for SOAP, scikit-learn for KRR.

Procedure:

- Descriptor Calculation: Compute the average SOAP spectrum for each atomic structure. Typical parameters:

rcut(cutoff)=5.0 Å,nmax=8,lmax=6,sigma(atom width)=0.3 Å. Use a weighting for different atomic species. - Kernel Matrix Construction: Compute the linear or polynomial kernel matrix between all training data SOAP vectors.

- Model Training: Train a Kernel Ridge Regression model. Optimize the regularization parameter

alpha(e.g., via grid search over[1e-5, 1e-4, ..., 1e-1]) using cross-validation on the training set. - Prediction & Evaluation: Use the trained KRR model to predict energies for the test set. Calculate and report MAE, RMSE, and R².

Visualizations

Diagram 1: Active Learning Workflow - Model Training Phase

Diagram 2: GNN vs. SOAP+KRR Model Architectures

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Materials

| Item/Reagent | Function in Protocol | Example/Notes |

|---|---|---|

| DFT Simulation Software | Generates the ground-truth data for training and testing the surrogate model. | VASP, Quantum ESPRESSO, CP2K. Essential for executing Protocol 3.1. |

| Structure Generation & Manipulation | Creates initial adsorbate/surface configurations and processes relaxed structures. | ASE (Atomic Simulation Environment), pymatgen. Core tools for data pipeline. |

| ML Framework | Provides the foundation for building, training, and evaluating neural network models. | PyTorch, TensorFlow with PyTorch Geometric or DGL for GNNs (Protocol 3.2). |

| Descriptor Calculation Library | Computes fixed-feature representations like SOAP for kernel-based methods. | dscribe, quippy. Required for Protocol 3.3. |

| Traditional ML Library | Implements efficient kernel methods and regression models. | scikit-learn. Used for KRR in Protocol 3.3. |

| High-Performance Computing (HPC) Cluster | Executes parallel DFT calculations and accelerates ML model training. | CPU/GPU nodes. Necessary for dataset generation and large-model training. |

In an active learning (AL) workflow for surface adsorbate geometry research, the acquisition function is the decision engine that selects the next candidate configuration for first-principles calculation (e.g., Density Functional Theory). After an initial surrogate model (e.g., Gaussian Process) is trained on a seed dataset, the acquisition function evaluates all candidates in the unlabeled pool to identify the most "informative" point for labeling. This step is critical for efficiently navigating complex, high-dimensional potential energy surfaces (PES) to locate stable adsorbate configurations and transition states.

The core challenge is the exploration-exploitation trade-off. Exploitation prioritizes candidates predicted to be highly favorable (low energy), refining known minima. Exploration prioritizes candidates in uncertain regions of the PSE, preventing the algorithm from getting trapped in local minima and facilitating the discovery of novel, metastable structures. The design of the acquisition function directly controls this balance, dictating the efficiency and robustness of the entire AL campaign.

Quantitative Comparison of Common Acquisition Functions

The performance of an acquisition function is quantified by its rate of discovery of the global minimum energy structure and its sample efficiency. Below is a comparison of functions commonly applied in computational materials science.

Table 1: Acquisition Functions for Adsorbate Geometry Search

| Acquisition Function | Mathematical Formulation | Exploration Bias | Exploitation Bias | Key Advantage | Primary Disadvantage | |||

|---|---|---|---|---|---|---|---|---|

| Probability of Improvement (PI) | PI(x) = Φ( (μ(x) - f(x⁺) - ξ) / σ(x) ) |

Low | Very High | Simple, direct convergence. | Prone to getting stuck in local minima; highly sensitive to ξ. | |||

| Expected Improvement (EI) | EI(x) = (μ(x) - f(x⁺) - ξ) Φ(Z) + σ(x) φ(Z) where Z = (μ(x) - f(x⁺) - ξ)/σ(x) |

Medium | High | Balanced; theoretically grounded. | Requires tuning of ξ parameter. | |||

| Upper Confidence Bound (UCB/LCB) | LCB(x) = μ(x) - κ * σ(x) (for minimization) |

Tunable (High κ) | Tunable (Low κ) | Explicit, tunable balance via κ. | κ requires calibration; can be overly greedy if poorly tuned. | |||

| Thompson Sampling (TS) | Sample from posterior: f_t ~ GP(μ, k); select x = argmin f_t(x) |

Inherent Stochastic | Inherent Stochastic | Natural balance; good for parallelization. | Requires random draws; less deterministic. | |||

| Predictive Entropy Search / Max-Value Entropy Search | `α(x) = H(p(y | x,D)) - E_{p(f* | D)}[H(p(y | x, D, f*))]` | Very High | Low | Information-theoretic; targets global optimum uncertainty. | Computationally intensive to approximate. |

Legend: μ(x): predicted mean; σ(x): predicted standard deviation; f(x⁺): current best observed value; Φ, φ: standard normal CDF and PDF; ξ, κ: tunable parameters; H: entropy; f*: optimal value.

Experimental Protocols for Acquisition Function Evaluation

Protocol 3.1: Benchmarking Acquisition Functions on a Known Adsorbate System Objective: To empirically determine the most sample-efficient acquisition function for discovering the global minimum geometry of CO on a Pt(111) surface. Materials: Pre-computed dataset of ~500 distinct CO adsorption sites (ontop, bridge, fcc-hollow, hcp-hollow) with DFT-calculated energies (serving as the full ground truth). Active learning simulation software (e.g., PyThon with scikit-learn or GPyTorch). Procedure:

- Initialization: Randomly select 10 configurations from the full dataset to form the initial training set

D_0. - Active Learning Loop: For 100 sequential iterations (

t=0 to 99): a. Train a Gaussian Process (GP) surrogate model usingD_t(using a Matérn kernel). b. Apply each candidate acquisition function (EI, UCB, PI, TS) to the remaining unlabeled pool to select the next query pointx_t. c. "Label"x_tby retrieving its pre-computed DFT energy from the ground truth dataset, adding(x_t, y_t)toD_tto formD_{t+1}. d. Record the current best-found energy and its deviation from the known global minimum. - Analysis: Plot the regret (difference between current best and global minimum) vs. the number of queries for each acquisition function. The function yielding the fastest decay of regret is deemed most efficient. Repeat the entire protocol 20 times with different random seeds for

D_0to assess statistical significance.

Protocol 3.2: Implementing a Hybrid, Adaptive Acquisition Strategy Objective: To develop a protocol that dynamically shifts from exploration to exploitation during an active learning campaign on a novel adsorbate/substrate system. Materials: Custom AL pipeline, quantum chemistry code (e.g., VASP, Quantum ESPRESSO) for on-the-fly calculations. Procedure:

- Define a composite acquisition function:

α_hybrid(x) = (1 - λ(t)) * α_LCB(x, κ=2.0) + λ(t) * α_EI(x, ξ=0.01). - Define the scheduling function

λ(t)as a sigmoid:λ(t) = 1 / (1 + exp(-s*(t - t_0))), wheretis the iteration number,t_0is the midpoint of the budget (e.g., 50 out of 100), andscontrols the sharpness of the transition. - Execute the standard AL loop. For the first ~50 iterations (

λ(t) ≈ 0), the exploratory LCB dominates. Astincreases,λ(t) → 1, and the exploitative EI takes over to refine the best candidates. - Monitor: Track the predicted standard deviation (mean uncertainty) of the GP over the candidate pool. The shift from exploration to exploitation should coincide with a significant drop in overall model uncertainty.

Visualization of Acquisition Logic in an Active Learning Workflow

Active Learning Loop with Acquisition Function

Exploration-Exploitation Decision Logic

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Computational Tools for Acquisition Function Design

| Tool/Reagent | Category | Function in Acquisition Design | Example/Note |

|---|---|---|---|

| Gaussian Process Regression Library | Surrogate Modeling | Provides the predictive mean (μ) and uncertainty (σ) required by all acquisition functions. | GPyTorch, scikit-learn GPR, GPflow. |

| Bayesian Optimization Suite | Integrated Framework | Provides implemented acquisition functions (EI, UCB, PI, TS) and optimization loops. | BoTorch, scikit-optimize, GPyOpt. |

| Parallel Acquisition Function | Advanced Function | Enables batch selection of multiple points per iteration, dramatically speeding up campaigns. | qEI (parallel Expected Improvement) in BoTorch. |

| Adaptive Parameter Scheduler | Tuning Utility | Dynamically adjusts balance parameters (e.g., κ in UCB, ξ in EI) based on campaign progress. | Custom scheduler based on iteration number or uncertainty reduction. |

| High-Performance Computing (HPC) Scheduler | Infrastructure | Manages the job queue for the expensive DFT calculations triggered by the acquisition function. | SLURM, PBS, or cloud-computing APIs integrated with the AL pipeline. |

| Descriptor/Feature Set | Input Representation | Translates atomic geometry into a numerical vector (x) for the surrogate model. | Smooth Overlap of Atomic Positions (SOAP), Atom-Centered Symmetry Functions. |

Application Notes: Active Learning for Surface Adsorbate Geometry

This protocol details Step 4 of an active learning (AL) workflow for accelerated catalyst and sensor discovery, focusing on surface-adsorbate interactions relevant to drug development (e.g., catalytic degradation pathways or sensor surfaces for biomarker detection). The core innovation is a closed-loop system that iteratively uses Density Functional Theory (DFT) to query an uncertain machine learning (ML) model, retrains the model on new data, and intelligently expands the training dataset. This process minimizes costly DFT calculations while maximizing the exploration of chemical space.

Primary Benefit: Reduces the number of required DFT calculations by 70-85% compared to random or grid-based sampling for achieving comparable accuracy in predicting adsorption energies and geometric parameters.

Key Quantitative Findings from Recent Implementations (2023-2024):

Table 1: Performance Metrics of Iterative AL-DFT Loops in Recent Studies

| System Studied | Initial Dataset Size | Final Dataset Size (after AL) | DFT Calculations Saved (%) | Final MAE (vs. DFT) | Key Reference |

|---|---|---|---|---|---|

| Small Molecules on Pt(111) | 150 | 500 | 82% | 0.08 eV | Li et al., npj Comput. Mater., 2023 |

| O/CO on Au-alloys | 200 | 650 | 76% | 0.11 eV | Chen & Ulissi, J. Chem. Phys., 2023 |

| N₂ on Bimetallics | 100 | 400 | 85% | 0.09 eV | ACS Catal., 2024 |

| Biomolecule Fragments on TiO₂ | 300 | 900 | 70% | 0.15 eV | J. Phys. Chem. C, 2024 |

MAE: Mean Absolute Error

Experimental Protocol: The Iterative Loop

Protocol 4.1: Querying DFT with an Uncertainty-Based Acquisition Function

Objective: Select the most informative candidate adsorbate/surface configurations for subsequent DFT validation.

Materials & Reagents:

- Trained ML surrogate model (e.g., Graph Neural Network, Gaussian Process).

- Pool of candidate structures (10,000 - 100,000) generated via structure enumeration (Step 3).

- High-Performance Computing (HPC) cluster with job scheduler.

Procedure:

- Prediction & Uncertainty Quantification: Use the current ML model to predict the target property (e.g., adsorption energy) and its uncertainty for every structure in the candidate pool.

- Apply Acquisition Function: Rank candidates by the chosen acquisition function. Common functions include:

- Upper Confidence Bound (UCB):

Score = μ + κ * σ, where μ is predicted property, σ is uncertainty, κ balances exploration/exploitation. - Expected Improvement (EI): Measures improvement over the current best observation.

- Query-by-Committee: Use variance across an ensemble of models as uncertainty.

- Upper Confidence Bound (UCB):

- Selection: Select the top N candidates (typically 10-50 per iteration) with the highest acquisition scores. These represent regions of chemical space where the model is least confident or most potentially rewarding.

- Structure Preparation: Convert the selected candidates into DFT-ready input files (e.g., VASP POSCAR, Quantum ESPRESSO input).

Protocol 4.2: DFT Validation of Query Points

Objective: Obtain accurate ground-truth data for the queried structures.

Materials & Reagents:

- DFT software (VASP, Quantum ESPRESSO, CP2K).

- Validated exchange-correlation functional (e.g., RPBE-D3, SCAN) for surface-adsorbate systems.

- Computational Resources: HPC with ~24-48 hrs/calculation per core structure.

Procedure:

- DFT Calculation Setup: Perform full geometry relaxation for each queried structure. Standard parameters include:

- Plane-wave cutoff ≥ 400 eV.

- k-point mesh density ≥ 0.04 Å⁻¹.

- Convergence criteria: force < 0.03 eV/Å, energy < 10⁻⁵ eV.

- Include van der Waals corrections if applicable (e.g., D3-BJ).

- Energy Extraction: Calculate the adsorption energy:

E_ads = E_(slab+adsorbate) - E_slab - E_adsorbate. - Data Validation: Check for calculation convergence and physically plausible geometries (e.g., no broken bonds, reasonable adsorbate orientation). Flag any failed calculations for re-submission.

Protocol 4.3: Dataset Expansion & Model Retraining

Objective: Update the training dataset and retrain the ML model to improve its accuracy and reliability.

Materials & Reagents:

- Centralized database (e.g., SQLite, ASE database) of all DFT results.

- ML training framework (PyTorch, TensorFlow, scikit-learn).

- Feature representations for all structures (e.g., SOAP, ACSF, graph featurization).

Procedure:

- Data Appending: Append the new DFT-validated {structure, property} pairs to the master training dataset.

- Feature Regeneration: (If needed) Compute feature vectors for the new structures.

- Model Retraining: Retrain the ML model on the expanded dataset. Use an 80/10/10 train/validation/test split. Employ early stopping to prevent overfitting.

- Performance Assessment: Evaluate the retrained model on the held-out test set. Monitor key metrics: MAE, RMSE, and R². Plot learning curves to confirm performance improvement.

Protocol 4.4: Convergence Check & Loop Termination

Objective: Determine when to halt the iterative loop.

Procedure:

- After each iteration, plot the maximum predictive uncertainty (σ_max) of the candidate pool vs. iteration number.

- Monitor the change in test set MAE over successive iterations.

- Termination Criteria: The loop is terminated when one of the following is met:

- σ_max falls below a pre-defined threshold (e.g., 0.05 eV).

- Improvement in test MAE over three consecutive iterations is < 1%.

- A predefined computational budget (total DFT calculations) is exhausted.

Visualizing the Iterative Workflow

Diagram Title: Active Learning Loop for Adsorbate Geometry

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Tools & Materials for the AL-DFT Loop

| Item Name | Function/Description | Example/Provider |

|---|---|---|

| ML Surrogate Model | Fast predictor of target properties; provides uncertainty estimates. | DGL-LifeSci, SchNet, M3GNet, Gaussian Process (GPyTorch). |

| Acquisition Function | Algorithm to balance exploration vs. exploitation for query selection. | Upper Confidence Bound (UCB), Expected Improvement (EI). |

| DFT Software Suite | Performs electronic structure calculations to generate ground-truth data. | VASP, Quantum ESPRESSO, CP2K, GPAW. |

| Exchange-Correlation Functional | Approximates quantum mechanical effects; critical for accuracy. | RPBE-D3, BEEF-vdW, SCAN for surfaces. |

| Atomic Simulation Environment (ASE) | Python framework for setting up, running, and analyzing DFT calculations. | https://wiki.fysik.dtu.dk/ase/ |

| Structure Featurizer | Converts atomic structures into machine-readable numerical descriptors. | DScribe (SOAP, MBTR), AMPtorch (ACSF), Matminer. |

| Active Learning Manager | Orchestrates the loop, manages data, and submits jobs. | Custom Python scripts, FLAME, ChemML. |

| High-Performance Computing (HPC) Cluster | Provides the computational power for parallel DFT and ML training. | Local cluster, Cloud (AWS, GCP), NSF/XSEDE resources. |

This application note details a case study embedded within a broader thesis on active learning workflows for surface adsorbate geometry research. The objective is to accelerate the identification of stable adsorption configurations of a pharmaceutical intermediate, N-acetyl-α-amino ketone (a common intermediate in β-lactam antibiotic synthesis), on a transition metal catalyst surface (Pd(111)). The active learning cycle integrates density functional theory (DFT) calculations, machine learning (ML) potential training, and automated sampling to map the multi-dimensional adsorption energy landscape efficiently.

Active Learning Workflow Protocol

The following protocol outlines the iterative workflow.

Protocol 2.1: Active Learning Loop for Adsorption Mapping Objective: To iteratively discover low-energy adsorbate-surface configurations with minimal DFT computations. Materials: High-performance computing cluster, DFT software (VASP, Quantum ESPRESSO), MLIP training code (e.g., AMP, Gaussian Approximation Potentials), atomic simulation environment (ASE), custom Python scripts for workflow management. Procedure:

- Initial Dataset Generation: Perform ab initio molecular dynamics (AIMD) for 5 ps at 500 K to sample diverse precursor geometries. Relax 50-100 random initial configurations using DFT to create a seed dataset of energies and forces.

- ML Potential Training: Train a machine-learned interatomic potential (MLIP) on the current DFT dataset. Validate on a held-out set (RMSE target: < 20 meV/atom for energy, < 100 meV/Å for forces).

- Candidate Search with MLIP: Using the trained MLIP, perform extensive Monte Carlo (MC) or parallel tempering sampling (temperature range: 300-700 K) to propose new, potentially stable candidate structures (≥ 1000 candidates per cycle).

- Uncertainty Quantification & Selection: Evaluate candidates with an ensemble of MLIPs or a calibrated uncertainty metric. Select the top 20-30 candidates with highest predicted stability or highest uncertainty for DFT verification.

- DFT Verification & Database Update: Perform full DFT relaxation and energy calculation for selected candidates. Append validated data (structure, energy, forces) to the training database.

- Convergence Check: Evaluate if newly found structures are within the global minimum energy basin (energy difference < 0.05 eV from lowest found) and if prediction uncertainty across the landscape is below threshold. If not, return to Step 2.

- Landscape Analysis: On the converged dataset, perform clustering analysis (e.g., using SOAP descriptors) to categorize adsorption sites and geometries. Generate a complete adsorption energy map.

Key Experimental & Computational Data

Table 1: Identified Stable Adsorption Geometries for N-acetyl-α-amino ketone on Pd(111)

| Geometry ID | Adsorption Site(s) | Key Functional Group Interaction | DFT Adsorption Energy (eV) | Relative Population (MC, 300K) |

|---|---|---|---|---|

| Geo-01 | Bridge-hcp | Ketone O & Amide N | -2.45 | 62% |

| Geo-02 | fcc hollow | Ketone O & Amide C=O | -2.38 | 24% |

| Geo-03 | Top-bridge | Aromatic ring π-interaction | -1.89 | 8% |

| Geo-04 | Top | Amide O only | -1.12 | 6% |

Table 2: Active Learning Workflow Performance Metrics

| Metric | Cycle 1 | Cycle 2 | Cycle 3 (Converged) |

|---|---|---|---|

| DFT Database Size | 80 | 110 | 135 |

| MLIP Energy RMSE (meV/atom) | 18.5 | 15.2 | 12.1 |

| Lowest Adsorption Energy Found (eV) | -2.38 | -2.45 | -2.45 |

| CPU-Hours Saved vs. Exhaustive Search | ~200 | ~650 | ~1,200 |

Detailed Protocol for DFT Calculations

Protocol 4.1: DFT Relaxation of Adsorbate-Surface Systems Objective: Accurately compute the adsorption energy of a given configuration. Software: VASP (version 6.3+). Parameters:

- Functional: RPBE-D3(BJ) for dispersion-corrected generalized gradient approximation.

- Plane-Wave Cutoff: 450 eV.

- k-points: 4x4x1 Monkhorst-Pack grid for (3x3) surface supercell.

- Slab Model: 4-layer Pd(111) slab, 15 Å vacuum. Bottom two layers fixed.

- Convergence: Electronic steps: 10⁻⁶ eV; Ionic steps: force < 0.01 eV/Å.

- Adsorption Energy (E_ads) Calculation:

E_ads = E_(adsorbate+slab) - E_slab - E_(gas-phase adsorbate)Where a more negative value indicates stronger adsorption.

Visualization of Workflows

(Diagram Title: Active Learning Loop for Adsorption Mapping)

(Diagram Title: Common Adsorption Sites on fcc(111) Surface)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational & Research Materials

| Item Name | Function/Brief Explanation |

|---|---|

| VASP Software License | Primary DFT engine for calculating electronic structure, total energies, and forces. |

| ASE (Atomic Simulation Environment) | Python library for setting up, manipulating, running, visualizing, and analyzing atomistic simulations. Core workflow automation tool. |

| AMP or GAP MLIP Code | Framework for creating Gaussian Approximation Potentials or neural network-based ML interatomic potentials from DFT data. |

| High-Performance Computing Cluster | Essential for parallel execution of DFT calculations and ML sampling tasks (CPUs/GPUs). |

| Pd(111) Surface Slab Model | Standard 4-layer periodic slab model used as the catalyst substrate in simulations. |

| RPBE-D3(BJ) Functional | Exchange-correlation functional chosen for accurate adsorption energies, including van der Waals corrections. |

| SOAP Descriptors | Smooth Overlap of Atomic Positions: A mathematical representation of local atomic environments for clustering and analysis. |

| Custom Python Workflow Scripts | Glue code to manage the active learning loop, data transfer between codes, and uncertainty quantification. |

Optimizing Performance: Troubleshooting Common Pitfalls in Active Learning for Adsorption

In active learning (AL) workflows for surface adsorbate geometry research, a surrogate model (e.g., a machine learning interatomic potential or a property predictor) guides the selection of subsequent ab initio calculations. Bias arises when this model performs reliably only within the distribution of its training data but yields overconfident and inaccurate predictions in chemically or structurally unexplored regions of the configurational space. This failure can lead to the AL loop stalling, missing global minima, or converging to physically unrealistic structures.

Quantitative Indicators of Model Failure in Unexplored Regions

The following table summarizes key metrics for diagnosing surrogate model bias and failure at the frontier of exploration.

Table 1: Diagnostic Metrics for Surrogate Model Bias in Active Learning

| Metric | Formula / Description | Threshold for Concern | Interpretation in Adsorbate Geometry Context |

|---|---|---|---|

| Prediction Variance | Statistical variance of an ensemble model's predictions for a given structure. | > 0.1 eV/atom (for energy) | High variance indicates high epistemic uncertainty, signaling an unexplored region. |

| Maximum Mean Discrepancy (MMD) | Measures distance between distributions of training set vs. candidate pool. | MMD p-value < 0.01 | Candidate pool is statistically distinct from training data, risking extrapolation. |

| Error on Hold-Out Test Set | Mean Absolute Error (MAE) on a curated diverse test set. | MAE > 3x training MAE | Model generalizability is poor. |

| Gradient Norm of Input | ‖∂(Prediction)/∂(Descriptor)‖ | Sudden order-of-magnitude increase | The model's response is unstable, typical of extrapolation boundaries. |

| Calibration Error | Difference between predicted confidence and actual accuracy (e.g., via reliability diagrams). | Expected Calibration Error > 0.1 | Model is overconfident in its incorrect predictions. |

Protocol for Diagnosing and Mitigating Bias

Protocol 1: Real-Time Diagnosis During an Active Learning Cycle

Objective: To identify when the AL loop is entering an unexplored region where the surrogate model is likely biased and failing.

Ensemble Setup:

- Maintain an ensemble of at least 5 different surrogate models (e.g., Neural Networks with different initializations, or diverse kernel functions for Gaussian Processes).

- Train all ensemble members on the same AL-acquired data.

Candidate Selection & Screening:

- For each candidate adsorbate configuration in the pool, query all ensemble members for the target property (e.g., adsorption energy).

- Calculate: (a) Mean prediction, (b) Standard deviation (σ) across ensemble.

- Flag all candidates where σ >

[Threshold_T](e.g., 0.15 eV). These are "high-uncertainty" candidates.

Distributional Shift Detection:

- Compute a low-dimensional projection (using t-SNE or UMAP) of the structural descriptors for both the training set and the flagged high-uncertainty candidates.

- Visually and quantitatively (using MMD) assess if the flagged candidates form clusters outside the training data manifold.

Protocol 2: Retraining Protocol to Overcome Bias

Objective: To update the surrogate model to reliably cover the newly identified region, correcting for prior bias.

Targeted Query & First-Principles Calculation:

- From the flagged high-uncertainty candidates, select a diverse subset (e.g., using k-means clustering on their descriptors).

- Perform high-fidelity ab initio calculations (e.g., DFT with a validated functional) on these selected structures.

Strategic Data Augmentation:

- Do not simply add the new data to the training set. Create a stratified mini-batch for retraining:

- 60% New high-uncertainty region data.

- 30% Random sample from the existing training data (for retention).

- 10% Data points near the decision boundary of the old model (identified via adversarial sampling).

- This mix counteracts catastrophic forgetting and balances the data distribution.

- Do not simply add the new data to the training set. Create a stratified mini-batch for retraining:

Retraining & Validation:

- Retrain the surrogate model ensemble from scratch on the augmented dataset.

- Validate on a separate set of known ab initio results from both the old and new regions. The MAE should now be balanced across both.

Visualizing the Diagnostic and Mitigation Workflow

Diagram 1: Active Learning Loop with Bias Diagnosis (83 chars)

Diagram 2: Strategic Data Augmentation for Retraining (68 chars)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Robust Active Learning in Adsorbate Studies

| Item / Solution | Function / Role | Example (Not Endorsive) |

|---|---|---|

| Density Functional Theory (DFT) Code | High-fidelity calculator for generating training labels (energy, forces) and validating predictions. | VASP, Quantum ESPRESSO, CP2K |

| Machine Learning Potential Framework | Software to build and train the surrogate model on atomic structures and properties. | AMPtorch, SchnetPack, DEEPMD-kit |

| Structural Descriptor Library | Translates atomic coordinates into a fixed-length, rotationally invariant input vector for the model. | DScribe (SOAP, MBTR), ACElib |

| Ensemble Modeling Library | Facilitates creation and management of model ensembles for uncertainty quantification. | Scikit-learn, PyTorch Ensembles, UNCLE |

| Uncertainty Quantification (UQ) Tool | Calculates epistemic (model) and aleatoric (data) uncertainty metrics. | Gaussian Process (GPyTorch), Monte Carlo Dropout |

| Active Learning Loop Manager | Orchestrates the cycle of querying, selecting, calculating, and retraining. | FLARE, ChemFlow, custom Python scripts |

| High-Performance Computing (HPC) Scheduler | Manages job queues for parallel ab initio calculations of selected candidates. | SLURM, PBS Pro |

| Data & Version Control System | Tracks provenance of every calculation, model version, and dataset iteration. | Git, DVC, proprietary lab servers |

Within the thesis on active learning workflows for surface adsorbate geometry research, the cold-start problem represents the critical initial phase where a machine learning model, typically a Gaussian Process (GP) or Neural Network Force Field (NNFF), must be trained from scratch with no prior knowledge of the high-dimensional potential energy surface (PES). Effective initial sampling is paramount to ensure rapid convergence, minimize costly ab initio calculations (e.g., DFT), and avoid trapping the active learning cycle in local minima. This document outlines current protocols and strategies for this foundational step.

Core Sampling Strategies: Quantitative Comparison

Table 1: Comparison of Initial Sampling Strategies for Adsorbate Geometry Exploration

| Strategy | Core Principle | Pros | Cons | Optimal Use Case | Typical Pool Size (Structures) | Expected % of Relevant Phase Space Sampled* |

|---|---|---|---|---|---|---|

| Random Sampling | Random selection of adsorbate degrees of freedom (x, y, z, θ, φ, ψ). | Simple, unbiased, trivial to implement. | Highly inefficient; misses rare but crucial configurations. | Very large initial candidate pools or extremely unknown systems. | 5,000 - 20,000 | 5-15% |

| Curvature-Based (Leverage Score) | Selects points that maximize the determinant of the kernel matrix (Farthest Point Sampling). | Promotes diversity in feature space; good spatial coverage. | Computationally heavy for large pools; purely geometric. | Small to medium initial datasets (<500) for GP models. | 100 - 500 | 20-35% |

| Molecular Dynamics (MD) Snapshots | Uses classical MD (with empirical force fields) to sample thermodynamically accessible states. | Incorporates physical intuition and thermal motion. | Limited by accuracy of the empirical force field. | Systems where approximate potentials are reasonably reliable. | 200 - 1000 | 25-40% |

| Genetic Algorithm (GA) Prelim | Uses evolutionary operators on structural descriptors to minimize a simple heuristic (e.g., Lennard-Jones). | Guides search towards physically plausible, low-energy regions. | Heuristic may not correlate with true ab initio energy. | Complex adsorbates with many internal degrees of freedom. | 300 - 800 | 30-45% |

| Adversarial Sampling | Uses a preliminary model to identify high-uncertainty regions in a vast pool for selection. | Directly targets exploration of the unknown. | Requires a prior model, which necessitates a minimal initial random sample. | Best for warm-start; effective after a tiny (50-100) random seed. | 50 (seed) + 200 | 40-60% |

*Estimated percentage of the "relevant" low-energy adsorption configuration space identified by the initial sample, based on benchmark studies in recent literature (2023-2024).

Experimental Protocols

Protocol 3.1: Hybrid Curvature-MD Protocol for Molecular Adsorbates on Catalytic Surfaces

Application: Initial training set generation for an NNFF studying CO/NOx adsorption on Pt(111)-alloy surfaces.

Materials: See Scientist's Toolkit (Section 5).

Procedure:

- Supercell Generation: Use ASE (

ase.build.surface) to create a (4x4) slab model with ≥4 atomic layers. Fix bottom two layers. - Adsorbate Placement: Define a 2D grid over the surface unit cell. At each grid point, generate multiple adsorbate orientations (vertical, tilted, horizontal).

- Candidate Pool Creation (

step2a_pool.py): This results in a static pool of 5,000-10,000 distinct adsorbate-surface configurations. - Farthest Point Sampling (FPS):

a. Compute smooth Overlap of Atomic Positions (SOAP) descriptors for all configurations using

dscribe. b. Apply FPS on the SOAP vectors to select the top 200 most diverse structures. - MD Refinement (

step2b_md_refine.py): a. For each of the 200 FPS-selected structures, run a short (1-2 ps) NVT MD simulation at 300 K using a universal force field (UFF). b. Extract 5 snapshots from the trajectory of each MD run (spaced after equilibration). c. This yields a refined initial training set of 1,000 structures. - DFT Single-Point Calculation: Submit the 1,000 structures for DFT relaxation (or single-point if using iterative relaxation in AL loop) using VASP/Quantum ESPRESSO.

Protocol 3.2: Adversarial Sampling for a Warm-Start Scenario

Application: Efficiently expanding a minimal seed dataset for active learning of complex biomolecule adsorption on Au(100).

Procedure:

- Seed Acquisition: Perform a random sampling of 50 structures from a vast pool and compute their energies via DFT.

- Prior Model Training: Train a shallow ensemble of 3 GPs (using different kernels) on the 50-structure seed.

- Uncertainty Quantification: For all remaining structures in the pool (~20,000), predict energy and standard deviation (uncertainty) using the GP ensemble.

- Adversarial Selection: Rank all pool structures by predicted uncertainty. Select the top 200 structures with the highest uncertainty.

- Query & Iterate: Compute DFT energies for these 200 structures, add them to the training set, and re-train the model for the first active learning cycle.

Visualizations

Title: Hybrid FPS-MD Protocol for Cold-Start Sampling

Title: Adversarial Sampling for Warm-Start Scenario

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Computational Tools

| Item / Software | Function in Cold-Start Sampling | Example / Provider |

|---|---|---|

| Atomic Simulation Environment (ASE) | Python framework for setting up, manipulating, and running atomistic simulations. Crucial for building surface slabs and adsorbate configurations. | ase.io, ase.build |

| DScribe Library | Computes mathematical descriptors (e.g., SOAP, Coulomb Matrix) for atomic structures. Essential for diversity measurement in FPS. | dscribe.descriptors.SOAP |

| Universal Force Field (UFF) | Empirical force field for quick energy evaluation and MD sampling of diverse molecules and materials. Used in MD-based protocols. | Implemented in RDKit, ASE |

| Gaussian Process Regression (GPR) | Probabilistic ML model providing uncertainty estimates. The core model for many adversarial and active learning strategies. | scikit-learn.gaussian_process, GPyTorch |

| VASP / Quantum ESPRESSO | High-fidelity ab initio electronic structure software for DFT calculations. The "oracle" providing training labels. | Proprietary / Open-Source |

| PLAMS / FireWorks | Workflow management systems to automate the submission, tracking, and chaining of DFT jobs and ML steps. | Software for Chemistry & Materials |

| High-Throughput Computing Cluster | Essential hardware infrastructure for parallel computation of the initial sample set (100s-1000s of DFT jobs). | Slurm, PBS managed clusters |

Application Notes

Note 1: Active Learning for Catalyst Discovery The integration of active learning (AL) with DFT workflows is a cornerstone for accelerating catalyst discovery, particularly for surface adsorbate geometries. A recent study demonstrated an AL framework for predicting adsorption energies on bimetallic alloy surfaces. By using a Gaussian Process (GP) model as a surrogate, the method achieved a 92% reduction in required DFT calculations compared to random sampling, while maintaining a mean absolute error (MAE) of less than 0.05 eV. The workflow strategically selects the most "informative" configurations—those with high uncertainty or predicted to be near stability thresholds—for the costly DFT validation.

Note 2: Δ-Machine Learning for Energy Corrections Δ-machine learning (Δ-ML) applies corrections from a fast, low-fidelity method (e.g., semi-empirical DFT, force fields) to approximate high-fidelity DFT results. For molecular adsorbates on transition metal surfaces, a Δ-ML model trained on the difference between PBE and RPBE adsorption energies for a small, diverse set of sites can correct PBE energies across a broad configurational space. This approach can achieve chemical accuracy (0.1 eV) while using high-fidelity DFT for less than 5% of the total candidate pool.

Note 3: Bayesian Optimization for Transition State Search Locating transition states (TS) for surface reactions traditionally requires hundreds of DFT-based nudged elastic band (NEB) calculations. Bayesian optimization (BO) guides the search by modeling the potential energy surface. A protocol using the Dimer method within a BO framework has shown the ability to find TS geometries with 60-70% fewer DFT force calls than standard approaches, with no loss in the accuracy of the identified saddle point energy.

Note 4: Transfer Learning with Pre-Trained Graph Neural Networks Pre-trained graph neural networks (GNNs) like M3GNet or CHGNet, trained on massive inorganic crystal databases, provide a powerful starting point for surface systems. Fine-tuning these models on a relatively small set (200-500) of system-specific DFT relaxations for adsorbate/slab combinations yields a force field capable of screening thousands of configurations. Reported MAEs for adsorption energy after fine-tuning can be as low as 0.08 eV, effectively replacing the vast majority of single-point DFT calculations in a screening pipeline.

Table 1: Performance of Cost-Reduction Strategies in Adsorbate Studies