The CatTestHub Database: A Complete Guide to Structure, Design, and Implementation for High-Throughput Toxicology Research

Abstract

This comprehensive guide details the database structure and design of CatTestHub, an in silico platform for predictive toxicology. We explore its foundational principles, including data architecture and ontology, and guide users through data ingestion, querying, and analysis workflows. The article addresses common challenges like handling large-scale omics data and batch effects, and provides comparative analysis against resources like ToxCast and PubChem. Designed for researchers and drug development professionals, this resource empowers efficient utilization of computational toxicology data for enhanced safety assessment and regulatory submission.

Understanding CatTestHub's Core Architecture: The Blueprint for Computational Toxicology

This whitepaper delineates the core mission of CatTestHub, a specialized database initiative conceived to address critical data deficiencies in predictive toxicology. Framed within a broader thesis on integrated database structure and design, CatTestHub aims to create a unified, high-fidelity repository for in vitro and in silico toxicological data. The primary objective is to enhance the predictive power of New Approach Methodologies (NAMs) by aggregating, curating, and structuring disparate data sources, thereby accelerating drug development and improving chemical safety assessments.

The Data Gap Problem in Predictive Toxicology

The field relies on data from high-throughput screening (HTS) assays, omics technologies, and computational models. Key challenges include:

- Fragmentation: Data is siloed across publications, proprietary databases, and government repositories (e.g., PubChem, ToxCast, TG-GATEs).

- Heterogeneity: Inconsistent formats, experimental protocols, and reporting standards impede integration and meta-analysis.

- Insufficient Context: Lack of detailed mechanistic pathway annotation limits the utility of data for adverse outcome pathway (AOP) development.

The impact of these gaps can be estimated from data coverage and model performance metrics, as summarized in Table 1.

Table 1: Quantitative Analysis of Toxicological Data Gaps and Impact

| Data Dimension | Current State (Estimated) | Desired State (CatTestHub Target) | Impact Metric |
|---|---|---|---|
| Public HTS Compound Coverage | ~10,000 unique substances (aggregated from major sources) | >50,000 with standardized descriptors | Predictive model coverage increases from ~30% to >70% for novel chemicals. |
| Assay-Outcome Linkage to AOPs | <15% of HTS outcomes are mapped to standardized AOP key events. | >80% of entries linked to structured AOP frameworks. | Mechanistic interpretability for risk assessment improves significantly. |
| Inter-laboratory Protocol Variability | Coefficient of variation (CV) can exceed 25% for replicate assays across labs. | Target CV <15% through standardized protocols and SOPs. | Data reproducibility and cross-study comparison reliability are enhanced. |
| Temporal Data Latency | 12-24 months from experiment completion to publicly accessible, structured data. | Target latency of 3-6 months for curated data entry. | Enables more responsive safety monitoring and model updating. |

Core Architecture & Data Integration Methodology

CatTestHub's design is based on a multi-layered schema to ensure data integrity, interoperability, and rich annotation.

Experimental Protocol 1: Data Ingestion and Curation Pipeline

- Source Identification & Harvesting: Automated agents collect data from predefined APIs (e.g., NCBI, EBI, EPA CompTox) and through natural language processing (NLP) of selected literature.

- Standardization: Chemical structures are standardized using IUPAC rules and represented via SMILES and InChIKeys. Biological entities are mapped to standard ontologies (e.g., ChEBI, Gene Ontology, AOP-Wiki).

- Metadata Annotation: Each data point is tagged with a minimum required set of descriptors (inspired by MIAME/MIAME-Tox).

- Quality Control & Flagging: Automated checks for plausibility (e.g., cytotoxicity vs. efficacy ranges) and manual expert curation for conflicting entries.

- Versioned Entry: All data is stored with provenance, version history, and a confidence score based on source and curation level.

Diagram Title: CatTestHub Data Curation Workflow
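The Quality Control and Versioned Entry steps can be sketched as a small record type that never overwrites a value without archiving it and that derives a confidence score from source and curation level. This is a minimal illustration under stated assumptions: the tier names, weights, flag name, and per-flag penalty are hypothetical and are not defined by CatTestHub.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical scoring tables: tier names and weights are illustrative assumptions.
SOURCE_WEIGHT = {"regulatory": 1.0, "peer_reviewed": 0.8, "nlp_extracted": 0.5}
CURATION_WEIGHT = {"expert_reviewed": 1.0, "auto_qc_passed": 0.7, "raw": 0.4}
FLAG_PENALTY = 0.9  # hypothetical: each open QC flag multiplies confidence by this factor


def plausibility_flags(efficacy_ac50_um: float, cytotox_ac50_um: float) -> list[str]:
    """Flag activity that only appears at or above the cytotoxic concentration."""
    if efficacy_ac50_um >= cytotox_ac50_um:
        return ["activity_at_or_above_cytotoxicity"]
    return []


@dataclass
class CuratedRecord:
    inchikey: str
    assay_id: str
    value: float
    source_type: str        # key into SOURCE_WEIGHT
    curation_level: str     # key into CURATION_WEIGHT
    provenance: str         # e.g. the API endpoint or DOI the value came from
    version: int = 1
    flags: list[str] = field(default_factory=list)
    history: list[dict] = field(default_factory=list)

    @property
    def confidence(self) -> float:
        """Source weight x curation weight, reduced for every open QC flag."""
        score = SOURCE_WEIGHT[self.source_type] * CURATION_WEIGHT[self.curation_level]
        return round(score * FLAG_PENALTY ** len(self.flags), 3)

    def update(self, new_value: float, reason: str) -> None:
        """Archive the current state before changing the value, so no history is lost."""
        self.history.append({
            "version": self.version,
            "value": self.value,
            "archived_at": datetime.now(timezone.utc).isoformat(),
            "reason": reason,
        })
        self.value = new_value
        self.version += 1
```

A multiplicative score keeps confidence in [0, 1] and makes a weak source or an open QC flag visibly lower the result without discarding the data point.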

Bridging Gaps via Mechanistic Data Linkage

A cornerstone of CatTestHub is the explicit linkage of screening data to mechanistic pathways. This involves mapping assay endpoints to Key Events (KEs) within established Adverse Outcome Pathways (AOPs).

Experimental Protocol 2: AOP-Based Data Mapping

- KE Identification: For a given assay endpoint (e.g., "NRF2 activation"), a systematic review of AOP-Wiki identifies all relevant KEs (e.g., KE 1: Oxidative stress, KE 2: NRF2 pathway activation).

- Weighted Association: An association strength (e.g., Strong, Moderate, Weak) and evidence level are assigned based on the underlying data's quality and directness.

- Network Construction: These associations are used to build a directed graph linking chemical perturbations → molecular initiating events → key events → adverse outcomes.

- Predictive Enrichment: This network allows for read-across predictions; a chemical activating a specific KE is flagged for potential downstream adverse outcomes linked to that KE.

Diagram Title: Linking Assay Data to an Adverse Outcome Pathway (AOP)
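The Network Construction and Predictive Enrichment steps map naturally onto a directed graph. The sketch below uses NetworkX with a hypothetical toy network (node labels are illustrative, not AOP-Wiki identifiers) and flags every adverse outcome reachable from an activated node, optionally ignoring associations weaker than a chosen strength.

```python
import networkx as nx

STRENGTH_RANK = {"Weak": 1, "Moderate": 2, "Strong": 3}

# Hypothetical toy network: chemical -> MIE -> KEs -> AO, each edge carrying a strength.
aop = nx.DiGraph()
for upstream, downstream, strength in [
    ("chemical:X", "MIE:Covalent protein binding", "Strong"),
    ("MIE:Covalent protein binding", "KE:Oxidative stress", "Strong"),
    ("KE:Oxidative stress", "KE:NRF2 pathway activation", "Strong"),
    ("KE:Oxidative stress", "KE:Mitochondrial dysfunction", "Moderate"),
    ("KE:Mitochondrial dysfunction", "KE:Hepatocyte death", "Moderate"),
    ("KE:Hepatocyte death", "AO:Liver injury", "Strong"),
]:
    aop.add_edge(upstream, downstream, strength=strength)


def flag_adverse_outcomes(graph: nx.DiGraph, activated_node: str, min_strength: str = "Weak") -> set[str]:
    """Adverse outcomes reachable from activated_node using only edges at or above min_strength."""
    kept = [
        (u, v)
        for u, v, s in graph.edges(data="strength")
        if STRENGTH_RANK[s] >= STRENGTH_RANK[min_strength]
    ]
    filtered = nx.DiGraph(kept)
    if activated_node not in filtered:
        return set()
    return {n for n in nx.descendants(filtered, activated_node) if n.startswith("AO:")}
```

With the default threshold, a chemical that activates "KE:Oxidative stress" is flagged for "AO:Liver injury"; requiring "Strong" edges removes that flag because the path runs through two "Moderate" associations.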

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents & Materials for Predictive Toxicology Assays

| Item / Reagent | Function in Predictive Toxicology | Example/Catalog Note |
|---|---|---|
| HepG2 or HepaRG Cell Line | Human-derived hepatocyte model for hepatic toxicity screening, metabolism, and genotoxicity studies. | HepaRG cells differentiate into hepatocyte-like cells expressing major CYP enzymes. |
| Multi-parametric High Content Screening (HCS) Kits | Measure concurrent cellular endpoints (viability, oxidative stress, mitochondrial health) in a single assay well. | Kits often include dyes for nuclei, ROS, and mitochondrial membrane potential (ΔΨm). |
| Recombinant CYP450 Enzymes | For studying phase I metabolism and the generation of reactive metabolites in vitro. | Available as Supersomes (human CYP1A2, 2C9, 2D6, 3A4) for reaction phenotyping. |
| Phospho-Specific Antibody Panels | Enable pathway-centric analysis via immunofluorescence or western blot to detect activation of stress response pathways. | Panels for p53, p38 MAPK, JNK, NRF2, and histone γ-H2AX (DNA damage). |
| Caspase Activity Probe | Detects apoptosis induction, a key event in the toxicity pathways of many toxicants. | Fluorogenic caspase-3/7 substrates (e.g., DEVD-AMC) used in live-cell or lysate assays. |
| Liver Microsomes (Human & Rat) | Provide the membrane-bound Phase I enzymes (e.g., CYPs, FMOs) for intrinsic clearance and metabolite identification studies. | Pooled donors to account for population variability. |
| Toxicity Profiling Biomarker Panels | Multiplexed ELISA or Luminex-based assays to quantify secreted biomarkers of injury (e.g., ALT, albumin, cytokines). | Critical for bridging in vitro findings to in vivo relevant injury signatures. |
| Metabolite Standards (Reactive) | Authentic standards for reactive metabolites (e.g., quinones, epoxides) used as positive controls or for assay calibration. | Essential for validating reactive metabolite trapping assays (GSH adducts). |

CatTestHub is architected to be more than a static repository: it is an integrated knowledge system designed to actively bridge the data gaps that hinder predictive toxicology. By enforcing rigorous curation standards, linking data explicitly to mechanistic AOP frameworks, and providing context-rich data, it serves as a foundational resource. This enables researchers to develop more accurate QSAR and machine learning models, perform robust read-across, and make more confident safety decisions earlier in the drug and chemical development pipeline, in line with the global shift toward animal-free NAMs.

This technical whitepaper, framed within the broader thesis on CatTestHub database structure and design research, details the core schema for managing complex drug discovery data. The system is designed to support high-throughput screening, in vitro and in vivo experimental results, and multi-omics integration for researchers and scientists in preclinical development.

Core Entity-Relationship Model

The foundational schema revolves around several key entities: Compound, Assay, Experiment, Biological Target, and Subject (e.g., cell line, animal model). The central Results fact table links these entities, storing quantitative and qualitative outputs.

Diagram 1: Core ERD for Drug Discovery Data
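A minimal sketch of the core entity-relationship model as SQLite DDL, driven from Python's standard `sqlite3` module. Table names and indexed columns follow the Core Table Specifications table; `result_float` and `is_hit` come from the HTS protocol below; the remaining columns, all types, and the index name are assumptions. SQLite has no native partitioning, so the partition keys are not modelled.

```python
import sqlite3

SCHEMA = """
CREATE TABLE compound_library (
    compound_id      INTEGER PRIMARY KEY,
    smiles           TEXT NOT NULL,
    inchikey         TEXT NOT NULL UNIQUE,
    molecular_weight REAL,
    clogp            REAL
);
CREATE TABLE biological_targets (
    target_id     INTEGER PRIMARY KEY,
    uniprot_id    TEXT UNIQUE,
    gene_symbol   TEXT,
    target_family TEXT
);
CREATE TABLE subject_line (
    subject_id  INTEGER PRIMARY KEY,
    species     TEXT NOT NULL,
    tissue_type TEXT
);
CREATE TABLE assay_definitions (
    assay_id   INTEGER PRIMARY KEY,
    assay_type TEXT NOT NULL,
    target_id  INTEGER REFERENCES biological_targets(target_id),
    subject_id INTEGER REFERENCES subject_line(subject_id)
);
CREATE TABLE experimental_runs (
    run_id        INTEGER PRIMARY KEY,
    assay_id      INTEGER NOT NULL REFERENCES assay_definitions(assay_id),
    run_date      TEXT NOT NULL,
    researcher_id TEXT
);
-- Central fact table: one row per measured result.
CREATE TABLE results_fact (
    result_id    INTEGER PRIMARY KEY,
    compound_id  INTEGER NOT NULL REFERENCES compound_library(compound_id),
    assay_id     INTEGER NOT NULL REFERENCES assay_definitions(assay_id),
    run_id       INTEGER NOT NULL REFERENCES experimental_runs(run_id),
    result_type  TEXT NOT NULL,
    result_float REAL,
    is_hit       INTEGER NOT NULL DEFAULT 0
);
CREATE INDEX idx_results_compound_assay ON results_fact(compound_id, assay_id);
"""


def create_core_schema(conn: sqlite3.Connection) -> None:
    """Create the core tables; foreign-key enforcement is off by default in SQLite."""
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(SCHEMA)
```

Enabling `foreign_keys` matters here: without it SQLite accepts a `results_fact` row that points at a compound or run that does not exist.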

Key Table Structures & Quantitative Data

The following tables define the core data architecture. Quantitative metadata from a survey of 15 major pharmaceutical R&D databases is summarized for comparison.

Table 1: Core Table Specifications & Metrics

| Table Name | Primary Purpose | Avg. Row Count (Range) | Typical Indexes | Partition Key |
|---|---|---|---|---|
| compound_library | Stores chemical structures & properties | 2.5M (500K - 10M) | SMILES hash, molecular_weight, clogP | compound_class |
| assay_definitions | Experimental protocol metadata | 85K (10K - 200K) | assay_type, target_id, throughput | assay_type |
| experimental_runs | Instance of an assay execution | 12M (1M - 50M) | assay_id, date, researcher_id | run_date |
| results_fact | Primary quantitative/qualitative results | 950M (100M - 5B) | compound_id, assay_id, run_id, result_type | run_date |
| biological_targets | Gene, protein, pathway definitions | 45K (20K - 100K) | uniprot_id, gene_symbol, target_family | target_family |
| subject_line | Cell/animal model characteristics | 320K (50K - 2M) | species, tissue_type, genotype_key | species |

Table 2: Common Result Metrics & Data Types

| Metric Name | Data Type | Scale | Typical Units | Use Case |
|---|---|---|---|---|
| IC50/EC50 | DECIMAL(10,4) | 4 decimal places | nM, µM | Dose-response potency |
| % Inhibition | DECIMAL(6,3) | 3 decimal places | % | Single-concentration activity |
| Selectivity Index | DECIMAL(8,2) | 2 decimal places | Ratio (unitless) | Off-target profiling |
| Ki | DECIMAL(10,4) | 4 decimal places | nM | Binding affinity |
| Solubility | DECIMAL(8,2) | 2 decimal places | µM, mg/mL | Physicochemical property |
| Cytotoxicity (CC50) | DECIMAL(10,4) | 4 decimal places | nM | Safety assessment |
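Because potency values arrive in mixed units but are stored in fixed-scale DECIMAL columns, a conversion step is needed before insertion. The sketch below is a minimal illustration, assuming nM as the storage unit: it converts with Python's `decimal` module (avoiding binary floating-point noise), rounds to the column scale, and rejects values that would overflow DECIMAL(10,4). The unit table and function name are hypothetical.

```python
from decimal import Decimal, ROUND_HALF_EVEN

# Factors that convert each unit to nanomolar ("uM" is an ASCII alias for µM).
TO_NM = {
    "pM": Decimal("0.001"),
    "nM": Decimal("1"),
    "µM": Decimal("1000"),
    "uM": Decimal("1000"),
    "mM": Decimal("1000000"),
}


def to_nm_decimal(value: str, unit: str, precision: int = 10, scale: int = 4) -> Decimal:
    """Convert a concentration to nM and round it to fit a DECIMAL(precision, scale) column."""
    nm = Decimal(value) * TO_NM[unit]  # pass strings, not floats, to keep exact decimals
    quantized = nm.quantize(Decimal(1).scaleb(-scale), rounding=ROUND_HALF_EVEN)
    # DECIMAL(10,4) leaves 6 digits before the decimal point; reject anything larger.
    if quantized.adjusted() >= precision - scale:
        raise ValueError(f"{quantized} nM does not fit DECIMAL({precision},{scale})")
    return quantized
```

For example, `to_nm_decimal("1.5", "uM")` gives `Decimal("1500.0000")`, and a 2 mM value raises `ValueError` because 2,000,000 nM needs seven integer digits.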

Experimental Protocol Data Capture Methodology

A detailed protocol for capturing high-throughput screening (HTS) data within the schema is defined below.

Protocol Title: Integration of High-Throughput Screening (HTS) Data into CatTestHub Core Schema

Objective: To systematically capture raw data, normalized results, and metadata from a 384-well plate HTS campaign.

Materials:

- Plate Reader Raw Output File (CSV/TXT)

- Compound Master Plate Map (links well location to `compound_id`)

- Assay Protocol Document (linked to `assay_definitions.assay_protocol_id`)

Procedure:

- Plate Registration:
  - Create a new `experimental_runs` record, linking it to the parent `assay_id` and `researcher_id`.
  - For each physical plate, insert a record into `plate_registry` with barcode, timestamp, and instrument ID.
  - Associate the plate with the experimental run via `run_plate_bridge`.

- Raw Data Ingestion:
  - Load the plate reader's raw well-level measurements (e.g., luminescence, absorbance) into `raw_measurements`.
  - Key each measurement by `plate_id`, `well_row`, and `well_column`.

- Result Calculation & Normalization:
  - Execute the calculation stored in `assay_definitions.normalization_script`.
  - Standard calculations include `% Inhibition = ((Median_Ctrl - Sample) / (Median_Ctrl - Median_LowCtrl)) * 100`, where Median_Ctrl is the median signal of the neutral (vehicle, 0% inhibition) control wells and Median_LowCtrl is the median signal of the full-inhibition control wells.
  - Populate `results_fact` with the normalized values (`result_float`), set `result_type = '%Inhibition'`, and link each row to `compound_id` via the plate map.

- Hit Identification Flagging:
  - Update `results_fact.is_hit` to `TRUE` where `result_float` exceeds the threshold defined in `assay_definitions.hit_threshold`.
  - Commit all transactions.
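The normalization and hit-flagging steps can be sketched in plain Python. This is a minimal illustration, not the stored `normalization_script`: it assumes the signal falls as inhibition rises, that wells are keyed by hypothetical strings such as "A01", and that the plate map is a dict from well to `compound_id`.

```python
from statistics import median


def percent_inhibition(sample: float, median_ctrl: float, median_low_ctrl: float) -> float:
    """% Inhibition = (Median_Ctrl - Sample) / (Median_Ctrl - Median_LowCtrl) * 100."""
    window = median_ctrl - median_low_ctrl
    if window == 0:
        raise ValueError("control window is zero; the plate cannot be normalized")
    return (median_ctrl - sample) / window * 100.0


def normalize_plate(raw: dict[str, float], plate_map: dict[str, str],
                    neutral_wells: list[str], low_wells: list[str],
                    hit_threshold: float) -> list[dict]:
    """Build results_fact-style rows for every compound well on one plate."""
    median_ctrl = median(raw[w] for w in neutral_wells)  # vehicle wells: 0% inhibition
    median_low = median(raw[w] for w in low_wells)       # full-inhibition control wells
    rows = []
    for well, compound_id in plate_map.items():
        value = percent_inhibition(raw[well], median_ctrl, median_low)
        rows.append({
            "compound_id": compound_id,
            "well": well,
            "result_type": "%Inhibition",
            "result_float": round(value, 3),
            "is_hit": value > hit_threshold,
        })
    return rows
```

Using medians rather than means for the controls keeps a single edge-effect or dispensing outlier from shifting every normalized value on the plate.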

Validation:

- Cross-check the total number of wells ingested against the plate format (e.g., 384).

- Verify that each plate's Z' factor, computed from its control wells, exceeds 0.5 and that control values fall within historical ranges.
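Both validation checks are simple to automate. The sketch below uses the standard Z' definition, Z' = 1 - 3(SD_pos + SD_neg) / |mean_pos - mean_neg|, with sample standard deviations; the function names and the problem-list return convention are illustrative assumptions.

```python
from statistics import mean, stdev


def z_prime(pos_ctrls: list[float], neg_ctrls: list[float]) -> float:
    """Z' = 1 - 3 * (SD_pos + SD_neg) / |mean_pos - mean_neg|."""
    window = abs(mean(pos_ctrls) - mean(neg_ctrls))
    if window == 0:
        return float("-inf")  # no separation between controls: the plate fails
    return 1 - 3 * (stdev(pos_ctrls) + stdev(neg_ctrls)) / window


def validate_plate(n_wells_ingested: int, plate_format: int,
                   pos_ctrls: list[float], neg_ctrls: list[float],
                   min_z_prime: float = 0.5) -> list[str]:
    """Return a list of problems; an empty list means the plate passes both checks."""
    problems = []
    if n_wells_ingested != plate_format:
        problems.append(f"expected {plate_format} wells, ingested {n_wells_ingested}")
    z = z_prime(pos_ctrls, neg_ctrls)
    if not z > min_z_prime:
        problems.append(f"Z' = {z:.2f} does not exceed {min_z_prime}")
    return problems
```

Returning a list of problems rather than a boolean lets the ingestion job write the reasons into the audit log alongside the plate barcode.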

Data Integration & Relationship Workflow

The process of linking compound activity to biological targets and pathways is critical for mechanism-of-action analysis.

Diagram 2: Target-Pathway Relationship Mapping

The Scientist's Toolkit: Research Reagent Solutions

Essential materials and digital tools referenced in the CatTestHub research environment.

Table 3: Essential Research Reagent & Database Solutions

| Item/Catalog | Provider | Primary Function in Context |
|---|---|---|
| Compound Management System (e.g., Mosaic) | TTP Labtech/Titian | Tracks physical location of compounds in storage, links vial barcode to compound_library. |
| ELN Integration Layer | IDBS (SDM), Benchling | Captures experimental metadata and protocol parameters, auto-populates experimental_runs. |
| Cell Bank Repository (ATCC/ECACC) | ATCC, Sigma-Aldrich | Source of authenticated subject_line biological materials (cell lines). |
| Kinase Profiling Panel (SelectScreen) | Thermo Fisher Scientific | Standardized panel assay service; results map to biological_targets and results_fact. |
| CYP450 Inhibition Assay Kit | Promega, BD Biosciences | In vitro ADME-Tox assay reagent; results populate safety profiling tables. |
| PDB (Protein Data Bank) Snapshot | RCSB | Provides 3D target structures for docking studies, linked to biological_targets.uniprot_id. |
| KEGG/Reactome API Access | Kanehisa Lab, EMBL-EBI | For pathway enrichment analysis following target identification, feeds pathway_mapping. |

This technical guide details the four primary data categories essential for modern predictive toxicology and drug discovery, framed within the broader research thesis on CatTestHub database structure and design. CatTestHub is conceived as an integrated knowledgebase that unifies these disparate, high-dimensional data streams into a coherent, queryable, and analyzable system. The core architectural challenge, and the thesis's central proposition, is designing a schema that maintains data fidelity, enables cross-category linkage (e.g., linking a chemical structure to its bioassay responses and resultant omics perturbations), and supports advanced computational modeling for toxicity prediction and mechanism elucidation.

Chemical Libraries: Curated Collections for Screening

Chemical libraries are structured collections of annotated compounds, serving as the starting point for screening campaigns. In CatTestHub, library design emphasizes traceability, structural standardization, and computable descriptors.

Key Data Attributes

- Chemical Structure: Standardized representation (e.g., SMILES, InChIKey) and connection table.

- Annotation: Source, internal identifier, vendor catalog numbers, purity, solubility data.

- Computational Descriptors: Calculated physicochemical properties (LogP, molecular weight, topological polar surface area), structural fingerprints (ECFP4), and predicted ADMET properties.
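Stored fingerprints such as ECFP4 are mainly consumed through similarity search, for example to find structural neighbours for read-across. The sketch below computes Tanimoto similarity on fingerprints represented as sets of on-bit indices. It assumes the bits come from elsewhere (in RDKit, an ECFP4-like bit vector is a Morgan fingerprint of radius 2, whose `GetOnBits()` returns these indices); the helper names and the empty-fingerprint convention are illustrative.

```python
def tanimoto(bits_a: set[int], bits_b: set[int]) -> float:
    """Tanimoto (Jaccard) similarity of two binary fingerprints given as on-bit index sets."""
    union = len(bits_a | bits_b)
    if union == 0:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(bits_a & bits_b) / union


def nearest_neighbours(query: set[int], library: dict[str, set[int]],
                       k: int = 3) -> list[tuple[float, str]]:
    """Top-k library compounds ranked by Tanimoto similarity to the query fingerprint."""
    scored = [(tanimoto(query, fp), compound_id) for compound_id, fp in library.items()]
    return sorted(scored, reverse=True)[:k]
```

For example, `tanimoto({1, 2, 3}, {2, 3, 4})` is 2 shared bits over 4 distinct bits, i.e. 0.5.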

Experimental Protocol: Library Preparation for High-Throughput Screening (HTS)

Objective: To prepare a chemical library for a concentration-response bioassay. Methodology:

- Compound Stock Solution Preparation: Compounds are dissolved in dimethyl sulfoxide (DMSO) to a standard stock concentration (e.g., 10 mM); acoustic dispensing technology is then used for accurate nanoliter transfers of these stocks.

- Daughter Plate Reformatting: Using liquid handlers, compounds are transferred from master stock plates to assay-ready daughter plates, creating a serial dilution series (e.g., 1:3 dilution across 10 points).

- Controls Integration: Control wells (vehicle-only, positive/negative controls) are interspersed within the plate layout to monitor assay performance.

- Assay Transfer: Daughter plates are centrifuged to eliminate bubbles, sealed, and transferred to the robotic arm of the screening platform for integration with assay reagents.
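The plate-design arithmetic in the reformatting and control steps can be sketched as follows. The dilution series follows the 1:3, 10-point example above; the 384-well layout with controls in columns 1, 2, 23, and 24 is a hypothetical arrangement, not a layout the protocol prescribes.

```python
def dilution_series(top_conc: float, factor: float = 3.0, points: int = 10) -> list[float]:
    """Concentrations of a serial dilution, highest first, in the units of top_conc."""
    return [top_conc / factor ** i for i in range(points)]


def plate_layout_384(control_columns: tuple[int, ...] = (1, 2, 23, 24)) -> dict[str, str]:
    """Map wells 'A01'..'P24' to 'control' or 'compound' (control columns are an assumption)."""
    rows = "ABCDEFGHIJKLMNOP"  # 16 rows x 24 columns = 384 wells
    return {
        f"{r}{c:02d}": "control" if c in control_columns else "compound"
        for r in rows
        for c in range(1, 25)
    }
```

Starting from a 10 mM (10,000 µM) stock, the tenth point of a 1:3 series is 10,000 / 3^9 ≈ 0.51 µM, so the series spans roughly four orders of magnitude; this layout leaves 320 wells for compounds.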

Research Reagent Solutions & Essential Materials

| Item | Function |
|---|---|
| DMSO (Dimethyl Sulfoxide) | Universal solvent for preparing high-concentration compound stocks. |
| 384-Well Polypropylene Microplates | For compound storage; chemically inert and low-evaporation. |
| Acoustic Liquid Dispenser (e.g., Echo) | Contact-free, precise transfer of nanoliter compound volumes. |
| Automated Liquid Handler (e.g., Bravo) | For bulk reagent and compound dilution transfers. |
| Plate Sealer (Heat or Foil) | Prevents evaporation and cross-contamination during storage. |

Bioassay Results: Quantitative Biological Activity Data

Bioassay results quantify the biological effect of library compounds in target-based or phenotypic assays. CatTestHub stores dose-response data, potency metrics, and assay metadata to ensure reproducibility.

Table 1: Common Bioassay Dose-Response Metrics

| Metric | Abbreviation | Description | Typical Units |
|---|---|---|---|
| Half-Maximal Inhibitory Concentration | IC50 | Concentration that reduces response by 50%. | µM or nM |
| Half-Maximal Effective Concentration | EC50 | Concentration that elicits 50% of maximal effect. | µM or nM |
| Inhibition at Highest Concentration | %Inh @ [max] | Efficacy measure at the top tested dose. | % |
| Hill Slope | nH | Steepness of the dose-response curve. | Unitless |
| Area Under the Curve | AUC | Integrated activity across all doses. | Variable |

Experimental Protocol: Cell Viability Assay (ATP quantitation)

Objective: To measure compound cytotoxicity using luminescent detection of ATP. Methodology:

- Cell Seeding: Seed adherent cells (e.g., HepG2) in white-walled, clear-bottom 384-well plates at optimal density (e.g., 2000 cells/well) in growth medium. Incubate for 24h.

- Compound Treatment: Transfer compound dilutions from the assay-ready daughter plate (see the library preparation protocol above) to cell plates using pin transfer. Incubate for 48-72h.

- ATP Detection: Equilibrate CellTiter-Glo reagent to room temperature. Add equal volume of reagent to each well. Orbital shake for 2 minutes to induce cell lysis.

- Signal Measurement: Incubate plate for 10 minutes to stabilize luminescent signal. Read luminescence on a plate reader (integration time: 0.5-1 second/well).

- Data Analysis: Normalize raw luminescence to vehicle (100% viability) and media-only (0% viability) controls. Fit normalized dose-response data to a 4-parameter logistic model to derive IC50 values.

Title: Cell Viability Bioassay Workflow
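The Data Analysis step (normalization to controls, then a 4-parameter logistic fit) can be sketched with NumPy and SciPy's `curve_fit`. This is a minimal illustration: the parameter bounds and starting guesses are assumptions chosen to keep IC50 positive and the plateaus near 0-100%, not values specified by the protocol.

```python
import numpy as np
from scipy.optimize import curve_fit


def four_pl(conc, bottom, top, ic50, hill):
    """4-parameter logistic; with hill > 0 the response falls from top to bottom as conc rises."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def normalize_viability(raw, vehicle_mean: float, media_mean: float) -> np.ndarray:
    """Scale luminescence so vehicle wells = 100 % and media-only wells = 0 % viability."""
    return 100.0 * (np.asarray(raw, dtype=float) - media_mean) / (vehicle_mean - media_mean)


def fit_ic50(conc, viability) -> dict[str, float]:
    """Bounded least-squares 4PL fit (bounds are illustrative assumptions)."""
    conc = np.asarray(conc, dtype=float)
    viability = np.asarray(viability, dtype=float)
    lower = [-20.0, 50.0, conc.min() / 100, 0.1]
    upper = [50.0, 150.0, conc.max() * 100, 10.0]
    guess = [viability.min(), viability.max(), float(np.median(conc)), 1.0]
    guess = np.clip(guess, lower, upper)  # the starting point must lie within the bounds
    params, _ = curve_fit(four_pl, conc, viability, p0=guess, bounds=(lower, upper))
    return dict(zip(["bottom", "top", "ic50", "hill"], (float(p) for p in params)))
```

Bounding IC50 to within two log units of the tested range avoids reporting extrapolated potencies far outside the concentrations actually measured.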

Omics Profiles: Systems-Level Molecular Phenotypes

Omics profiles (transcriptomics, proteomics, metabolomics) provide a global, unbiased view of compound-induced molecular perturbations. CatTestHub's schema is designed to store processed data matrices, differential expression results, and pathway enrichment outputs.

Table 2: Core Omics Data Types and Outputs

| Omics Layer | Primary Measurement | Common Output Format | Key Metrics in CatTestHub |
|---|---|---|---|
| Transcriptomics | RNA Abundance (mRNA) | Gene Expression Matrix | Log2(Fold Change), p-value, FDR |
| Proteomics | Protein Abundance/Modification | Protein Intensity Matrix | Log2(Fold Change), p-value, AUC |
| Metabolomics | Metabolite Abundance | Peak Intensity Matrix | Log2(Fold Change), p-value, VIP Score |

Experimental Protocol: Bulk RNA-Sequencing

Objective: To profile genome-wide gene expression changes after compound treatment. Methodology:

- Treatment & Lysis: Treat cells (biological triplicates) with compound or vehicle. Lyse cells directly in culture plate with TRIzol reagent.

- RNA Isolation: Purify total RNA using magnetic bead-based kits (e.g., RNAClean XP). Assess RNA integrity number (RIN > 9.0) via bioanalyzer.

- Library Preparation: Use poly-A selection for mRNA enrichment. Perform cDNA synthesis, end repair, A-tailing, and adapter ligation (e.g., Illumina TruSeq kit). Amplify library via PCR.

- Sequencing: Pool libraries and sequence on a high-throughput platform (e.g., Illumina NovaSeq) to a depth of 25-40 million paired-end reads per sample.

- Bioinformatics: Align reads to a reference genome (e.g., STAR aligner). Quantify gene counts (featureCounts). Perform differential expression analysis (DESeq2) and pathway enrichment (GSEA).
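The Log2(Fold Change) and FDR values stored for transcriptomics come from the differential expression step. DESeq2 estimates fold changes from its fitted model and, by default, adjusts p-values with the Benjamini-Hochberg procedure; the sketch below only illustrates that arithmetic on summary values (a simple mean-ratio fold change with a hypothetical pseudocount) and is not a substitute for the DESeq2 pipeline.

```python
import math


def log2_fold_change(treated_mean: float, control_mean: float, pseudocount: float = 1.0) -> float:
    """Log2 ratio of mean normalized counts; the pseudocount avoids log(0)."""
    return math.log2((treated_mean + pseudocount) / (control_mean + pseudocount))


def benjamini_hochberg(pvalues: list[float]) -> list[float]:
    """Benjamini-Hochberg adjusted p-values (FDR), returned in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone and <= 1.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted
```

For p-values [0.01, 0.04, 0.03, 0.005], the adjusted values are [0.02, 0.04, 0.04, 0.02]: the two smallest both become 0.02 because adjustment enforces monotonicity across ranks.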

Toxicological Endpoints: In Vivo and Regulatory Outcomes

Toxicological endpoints represent apical outcomes from in vivo studies and standardized regulatory tests, providing the critical link between molecular perturbations and organism-level adverse effects.

Table 3: Representative In Vivo Toxicological Endpoints

| Endpoint Category | Specific Measurement | Typical Data Format | Relevance |
|---|---|---|---|
| Clinical Pathology | Serum ALT/AST (Liver) | Continuous Value (U/L) | Hepatotoxicity |
| Histopathology | Liver Necrosis | Categorical Score (0-5) | Organ Damage |
| Survival | Mortality | Binary (Alive/Dead) | Acute Toxicity |
| Organ Weight | Liver/Body Weight Ratio | Continuous Ratio | Organ Hypertrophy/Atrophy |

Key Signaling Pathways in Hepatotoxicity

CatTestHub links omics profiles to toxicological endpoints via mechanistic pathways.

Title: Key Hepatotoxicity Signaling Pathways

CatTestHub Integration Schema: A Logical View

The database design centers on the compound as the primary entity, linking it to its assay results, omics signatures, and associated toxicity endpoints.

Title: CatTestHub Data Category Relationships

Within the CatTestHub database architecture, the standardization of metadata is a foundational pillar enabling reproducible, interoperable, and machine-actionable research. This whitepaper provides an in-depth technical guide on implementing a robust metadata framework for chemical identifiers and experimental context, critical for modern computational toxicology and drug development.

Core Standardization Frameworks

Chemical Identifier Standards

Chemical structures require unambiguous representation. Two canonical standards are universally adopted.

Simplified Molecular-Input Line-Entry System (SMILES): A line notation encoding molecular structure as an ASCII string. Multiple valid SMILES can exist for a single molecule, necessitating canonicalization algorithms (e.g., those implemented in RDKit and Open Babel).

International Chemical Identifier (InChI): A non-proprietary identifier generated algorithmically from the structure, developed by IUPAC and the InChI Trust. The InChIKey is a fixed-length (27-character) hashed version of the full InChI, designed for database indexing and web searching.

Table 1: Comparison of Standard Chemical Identifiers

| Identifier | Type | Canonical? | Primary Use Case | Example |
|---|---|---|---|---|
| SMILES | ASCII String | No (requires canonicalization) | Structure depiction, rapid searching | CC(=O)O for acetic acid |
| InChI | Hierarchical String | Yes | Unambiguous structure representation | InChI=1S/C2H4O2/c1-2(3)4/h1H3,(H,3,4) |
| InChIKey | Hashed Key (27-char) | Yes | Database indexing, web lookup | QTBSBXVTEAMEQO-UHFFFAOYSA-N |

Protocol 1.1: Generating Canonical Identifiers for CatTestHub Ingestion

- Input: Chemical structure file (e.g., `.mol`, `.sdf`) or non-canonical SMILES.

- Processing:
  - a. Use the RDKit cheminformatics toolkit (`rdkit.Chem` module).
  - b. Parse the input into an RDKit molecule object: `mol = Chem.MolFromMolFile("input.mol")` or `mol = Chem.MolFromSmiles(non_canonical_smiles)`. Both return `None` when parsing fails; for multi-record `.sdf` files, iterate with `Chem.SDMolSupplier`.
  - c. Generate canonical SMILES: `canonical_smiles = Chem.MolToSmiles(mol, isomericSmiles=True)`.
  - d. Generate InChI and InChIKey using the InChI Trust's library bundled with RDKit: `inchi = Chem.MolToInchi(mol)`; `inchikey = Chem.MolToInchiKey(mol)`.

- Output: Store the triplet (`canonical_smiles`, `inchi`, `inchikey`) as core, immutable metadata in the CatTestHub compound registry.
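Protocol 1.1 can be wrapped into a small ingestion helper using the RDKit calls it names. The function names below are hypothetical; the sketch raises on unparseable SMILES rather than storing a partial triplet, and skips SD records that RDKit cannot sanitize.

```python
from rdkit import Chem


def _triplet(mol: Chem.Mol) -> tuple[str, str, str]:
    """(canonical_smiles, inchi, inchikey) for an already-parsed molecule."""
    return (
        Chem.MolToSmiles(mol, isomericSmiles=True),
        Chem.MolToInchi(mol),
        Chem.MolToInchiKey(mol),
    )


def canonical_identifiers(smiles: str) -> tuple[str, str, str]:
    """Return the identifier triplet for one input SMILES, failing loudly on bad input."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"RDKit could not parse SMILES: {smiles!r}")
    return _triplet(mol)


def identifiers_from_sdf(path: str):
    """Yield identifier triplets for every parseable record in an SD file."""
    for mol in Chem.SDMolSupplier(path):
        if mol is None:
            continue  # skip records RDKit cannot sanitize; log them in practice
        yield _triplet(mol)
```

Both "OC(C)=O" and "CC(=O)O" map to the same triplet, ("CC(=O)O", "InChI=1S/C2H4O2/c1-2(3)4/h1H3,(H,3,4)", "QTBSBXVTEAMEQO-UHFFFAOYSA-N"), which is what makes the triplet usable as a deduplication key.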

Standardizing Assay Protocols

Reproducibility in high-throughput screening (HTS) and in vitro toxicology depends on precise, structured assay descriptions.

Minimum Information (MI) Standards: Adherence to community-developed reporting guidelines is required. For bioactivity data, the Minimum Information About a Bioactive Entity (MIABE) standard provides a framework. For in vitro toxicology, structured reporting guidance such as the ToxTemp template and the OECD Guidance Document on Good In Vitro Method Practices (GIVIMP) is pertinent.

Table 2: Core Components of a Standardized Assay Protocol in CatTestHub

| Component | Description | Standard / Controlled Vocabulary |
|---|---|---|
| Assay Target | Molecular entity measured (e.g., protein, gene). | UniProt ID, Gene Symbol (HGNC) |
| Assay Type | Functional, binding, or phenotypic readout. | BioAssay Ontology (BAO:0000359) |
| Organism | Source of biological material. | NCBI Taxonomy ID |
| Measurement & Units | What is quantified (e.g., IC50, % inhibition) and its units. | BAO endpoint terms, UO (Unit Ontology) |
| Protocol DOI | Link to detailed, step-by-step methodology. | Persistent Identifier (DOI) |

Protocol 1.2: Implementing Structured Assay Metadata

- Assay Registration: For each new assay in CatTestHub, a curator completes a digital form with fields mapped to MIABE elements and the applicable toxicology reporting guidance.

- Vocabulary Mapping: Free-text entries (e.g., "cell viability") are mapped to ontology terms (e.g., BAO:0002179 'cell viability assay') via an integrated ontology service (e.g., OLS API).

- Protocol Linking: The detailed, stepwise SOP is deposited in a repository (e.g., protocols.io) and linked via its DOI to the assay record.

- Data Point Annotation: Each experimental result (e.g., an IC50 value) stored in CatTestHub is intrinsically linked to this standardized assay record.
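The registration and vocabulary-mapping steps can be sketched as a record type that refuses to register an assay whose free-text type has no ontology mapping. This is a minimal illustration under stated assumptions: the local mapping dictionary stands in for a live ontology service such as the OLS API, the ID and DOI patterns are simplified shape checks, and all class, field, and function names are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical local cache of curated free-text -> ontology mappings; in the workflow
# above these would be resolved through an ontology service. BAO ID as quoted in the text.
FREE_TEXT_TO_BAO = {"cell viability": "BAO:0002179"}

CURIE = re.compile(r"^[A-Za-z]+:\d+$")   # e.g. BAO:0002179
DOI = re.compile(r"^10\.\d{4,9}/\S+$")   # simplified DOI shape check


@dataclass
class AssayRecord:
    target_uniprot_id: str
    assay_type_text: str
    organism_tax_id: int
    endpoint: str
    unit: str
    protocol_doi: str

    def assay_type_id(self) -> Optional[str]:
        """Map the curator's free text to an ontology ID, or None if unmapped."""
        return FREE_TEXT_TO_BAO.get(self.assay_type_text.strip().lower())

    def validate(self) -> list[str]:
        """Return the problems that would block registration (empty list = OK)."""
        problems = []
        bao_id = self.assay_type_id()
        if bao_id is None or not CURIE.match(bao_id):
            problems.append(f"unmapped assay type: {self.assay_type_text!r}")
        if self.organism_tax_id <= 0:
            problems.append("organism_tax_id must be a positive NCBI Taxonomy ID")
        if not DOI.match(self.protocol_doi):
            problems.append(f"malformed protocol DOI: {self.protocol_doi!r}")
        return problems
```

Normalizing case and whitespace before lookup means "Cell viability " and "cell viability" resolve to the same term, which reduces spurious curator-review flags.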

Standardizing Study Designs

For complex in vivo or multi-omic studies, the design must be captured to contextualize results.

FAIR Principles: Study metadata must be Findable, Accessible, Interoperable, and Reusable. Key elements include study objectives, experimental groups, dosing regimens, and timepoints.

Table 3: Essential Study Design Metadata Components

| Component | CatTestHub Field | Example Entry |
|---|---|---|
| Study Objective | study.objective | "Determine sub-chronic hepatotoxicity of compound X." |
| Experimental Groups | study.groups (structured table) | Control (Vehicle), Low Dose (10 mg/kg), High Dose (30 mg/kg) |
| Subjects per Group | study.n_per_group | n=10 |
| Treatment Duration | study.duration with units | 28 days |
| Endpoints Measured | study.endpoints (linked to assays) | Serum ALT (Assay ID: A123), Liver Histopathology (Assay ID: A456) |

Implementation within CatTestHub Architecture

The integration of standardized metadata occurs across the data lifecycle.

Diagram 1: Metadata Standardization in CatTestHub Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents & Tools for Standardized Assay Development

| Item / Solution | Vendor Examples | Function in Standardization |
|---|---|---|
| RDKit Cheminformatics Toolkit | Open-Source | Core library for canonical SMILES generation, InChIKey calculation, and chemical descriptor calculation. |
| InChI Software | IUPAC/InChI Trust | Reference implementation for generating and parsing standard InChI and InChIKey strings. |
| Cell-Based Viability Assay Kit (e.g., MTS, CellTiter-Glo) | Promega, Abcam, Thermo Fisher | Provides a standardized, off-the-shelf protocol and reagent mix for a consistent viability readout. |
| Positive Control Compounds (e.g., Staurosporine, Doxorubicin) | Selleckchem, Tocris, MedChemExpress | Acts as an internal standard across assay runs, enabling inter-study data normalization and quality control. |
| Ontology Lookup Service (OLS) API | EMBL-EBI | Programmatic interface for mapping free-text assay descriptions to controlled ontology terms (BAO, ChEBI, UO). |
| Electronic Lab Notebook (ELN) with API | LabArchives, RSpace, Benchling | Captures experimental protocols in a structured digital format, enabling automated export of study design metadata to CatTestHub. |

Validation and Quality Control

Protocol 4.1: Metadata Quality Audit

- Completeness Check: Automated scripts scan new database entries for null values in mandatory fields (e.g., InChIKey, Assay Type Ontology ID).

- Consistency Validation: Cross-reference checks are performed (e.g., does the `target_uniprot_id` correspond to the stated `organism_tax_id`?).

- External Verification: For a subset of compounds, generated InChIKeys are queried against public databases (PubChem, ChEMBL) to confirm structural accuracy.

- Report Generation: An audit report is generated, flagging entries for curator review, ensuring the integrity of the CatTestHub knowledge base.
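The completeness and consistency checks of Protocol 4.1 can be sketched as a single pass over new entries. This minimal illustration assumes entries arrive as dicts; the mandatory-field list and the small UniProt-to-taxon lookup are hypothetical stand-ins (in practice the lookup would be cached from UniProt), and the InChIKey pattern checks only the standard 14-10-1 letter layout, not the hash itself.

```python
import re

INCHIKEY = re.compile(r"^[A-Z]{14}-[A-Z]{10}-[A-Z]$")  # standard InChIKey layout
MANDATORY = ("inchikey", "assay_type_ontology_id", "target_uniprot_id", "organism_tax_id")

# Hypothetical cached lookup: UniProt accession -> NCBI Taxonomy ID (P04637 = human p53).
UNIPROT_ORGANISM = {"P04637": 9606}


def audit(entries: list[dict]) -> list[dict]:
    """Return one report row per entry that needs curator review."""
    report = []
    for e in entries:
        flags = [f"missing {f}" for f in MANDATORY if e.get(f) in (None, "")]
        key = e.get("inchikey")
        if key and not INCHIKEY.match(key):
            flags.append("malformed InChIKey")
        expected_taxon = UNIPROT_ORGANISM.get(e.get("target_uniprot_id"))
        if expected_taxon is not None and e.get("organism_tax_id") != expected_taxon:
            flags.append("target organism mismatch")
        if flags:
            report.append({"id": e.get("id"), "flags": flags})
    return report
```

A syntactic InChIKey check is cheap enough to run on every entry, leaving the slower external lookups against PubChem or ChEMBL for the sampled subset.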

This whitepaper, framed within the broader CatTestHub database structure and design research thesis, details the technical integration of three pivotal biomedical ontologies: STITCH (Search Tool for Interactions of Chemicals), ChEBI (Chemical Entities of Biological Interest), and MeSH (Medical Subject Headings). The objective is to establish a robust semantic interoperability framework that enhances data integration, retrieval, and computational analysis for drug development research within CatTestHub.

Each ontology serves a distinct but complementary role in describing the chemical and biomedical knowledge space.

Table 1: Core Ontology Characteristics and Quantitative Metrics

| Feature | STITCH | ChEBI | MeSH |
|---|---|---|---|
| Primary Scope | Chemical-protein interactions | Chemical entities & roles | Biomedical subject headings |
| Entity Types | Chemicals, Proteins, Interactions | Small molecules, atoms, roles | Descriptors, Qualifiers, Supplementary Concept Records |
| Primary Use Case | Interaction network prediction & analysis | Standardized chemical nomenclature | Literature indexing & retrieval |
| Key Relationships | binds, catalyzes, inhibits | is_a, has_role, has_part | tree_number, see_related, pharmacological_action |
| Current Release | STITCH 5.0 | ChEBI Release 235 | 2024 MeSH |
| Entry Count | ~0.5M chemicals, ~9.6M proteins | ~212,000 fully annotated entities | ~30,000 Descriptors |
| Cross-References | PubChem, ChEBI, UniProt, Ensembl | PubChem, CAS, UMLS, STITCH (via PubChem) | |

Integration Methodology for CatTestHub

The integration protocol involves a multi-stage mapping and semantic enrichment process to create a unified knowledge graph.

Experimental Protocol: Cross-Reference Resolution and Mapping

Data Acquisition:

- Download the latest versions of all ontology files: STITCH chemical links (

chemicals.v5.0.tsv.gz), ChEBI ontology in OWL format, and the MeSH ASCII descriptor file (desc2024.xml). - Extract all external database identifiers (e.g., PubChem CID, CAS, InChIKey).

- Download the latest versions of all ontology files: STITCH chemical links (

Identity Resolution via PubChem:

- Use the PubChem Compound ID (CID) as the primary pivot. Create a mapping table by parsing STITCH's

chemicals.v5.0.tsv(columns:chemical,pubchem_id) and ChEBI's database links to PubChem. - For MeSH chemicals, utilize the

CAS Registry NumberorPharmacological Actionlinks to PubChem provided in the descriptor records.

- Use the PubChem Compound ID (CID) as the primary pivot. Create a mapping table by parsing STITCH's

Semantic Harmonization:

- For each unique chemical entity identified via PubChem CID, collate its associated terms:

  - From ChEBI: Preferred IUPAC name and has_role annotations (e.g., antagonist, cofactor).

  - From STITCH: Associated interaction partners (STRING/Ensembl protein IDs, mapped to UniProt accessions) and confidence scores.

  - From MeSH: Tree hierarchy (e.g., D03.633.100.075 for Alkaloids) and Pharmacological Action descriptors.

- Store this harmonized record in the CatTestHub core ChemicalEntity table.
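The identity-resolution and harmonization steps can be sketched in plain Python. This is a minimal illustration, not CatTestHub's pipeline. It rests on three assumptions:

- STITCH chemical IDs carry the PubChem CID after a CIDm/CIDs prefix.
- ChEBI and MeSH identifiers have already been resolved to CIDs.
- The function names are hypothetical.

```python
import re
from collections import defaultdict

# Assumed STITCH convention: 'CIDm' (stereo-merged) or 'CIDs' (stereo-specific)
# followed by the zero-padded PubChem CID, e.g. CIDm00002244 -> CID 2244.
STITCH_CHEMICAL_ID = re.compile(r"^CID[ms](\d+)$")


def stitch_to_cid(stitch_id: str) -> int:
    """Convert a STITCH chemical identifier into an integer PubChem CID."""
    match = STITCH_CHEMICAL_ID.match(stitch_id)
    if match is None:
        raise ValueError(f"not a STITCH chemical ID: {stitch_id!r}")
    return int(match.group(1))


def harmonize(stitch_rows, chebi_to_cid, mesh_to_cid, chebi_terms, mesh_terms):
    """Collate per-source annotations into one record per PubChem CID.

    stitch_rows:  iterable of (stitch_chemical_id, protein_id, combined_score)
    chebi_to_cid: {chebi_id: cid}, from ChEBI's database links to PubChem
    mesh_to_cid:  {mesh_descriptor_id: cid}, e.g. resolved via CAS Registry Numbers
    chebi_terms / mesh_terms: {source_id: {annotation_name: values}}
    """
    records = defaultdict(lambda: {"chebi": {}, "mesh": {}, "stitch_partners": []})
    for stitch_id, protein_id, score in stitch_rows:
        records[stitch_to_cid(stitch_id)]["stitch_partners"].append((protein_id, score))
    # Simplification: if two source IDs map to one CID, the later one wins.
    for chebi_id, cid in chebi_to_cid.items():
        records[cid]["chebi"] = chebi_terms.get(chebi_id, {})
    for mesh_id, cid in mesh_to_cid.items():
        records[cid]["mesh"] = mesh_terms.get(mesh_id, {})
    return dict(records)  # one harmonized row per CID for the ChemicalEntity table
```

For example, STITCH's CIDm00002244, ChEBI's CHEBI:15365 and MeSH's D001241 (all aspirin) collapse onto a single record for CID 2244. A production version would merge conflicting annotations rather than overwrite them.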

Relationship Inference:

- Generate inferred may_treat or may_target relationships by intersecting STITCH protein targets with diseases linked via MeSH Pharmacological Action descriptors and their associated proteins.
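One possible reading of the inference rule is sketched below. A chemical is proposed to `may_treat` a disease when its high-confidence STITCH targets overlap the disease's proteins, and each shared protein becomes a `may_target` edge. The assumptions are:

- The Pharmacological Action to disease mapping and the disease to protein sets come from curated tables not described here.
- The 700 cutoff reflects STITCH's conventional "high confidence" combined score (0.7).
- All identifiers are placeholders.

```python
def infer_relationships(chem_targets, chem_actions, action_diseases, disease_proteins,
                        min_score=700):
    """Return a set of (cid, predicate, object) triples.

    chem_targets:     {cid: [(protein_id, stitch_combined_score), ...]}
    chem_actions:     {cid: [MeSH Pharmacological Action descriptor, ...]}
    action_diseases:  {PA descriptor: [disease descriptor, ...]}  (assumed curated mapping)
    disease_proteins: {disease descriptor: {protein_id, ...}}      (assumed curated mapping)
    """
    edges = set()
    for cid, targets in chem_targets.items():
        # Keep only high-confidence STITCH interactions.
        confident = {protein for protein, score in targets if score >= min_score}
        for action in chem_actions.get(cid, ()):
            for disease in action_diseases.get(action, ()):
                shared = confident & disease_proteins.get(disease, set())
                if shared:
                    edges.add((cid, "may_treat", disease))
                    edges.update((cid, "may_target", protein) for protein in shared)
    return edges
```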

Workflow Diagram

Diagram Title: Ontology Integration Workflow for CatTestHub

Application: Signaling Pathway Analysis

The integrated ontology supports the reconstruction and annotation of signaling pathways. For example, analyzing a PI3K/AKT/mTOR inhibitor involves querying the unified graph for all chemicals annotated with ChEBI role protein kinase inhibitor (CHEBI:391979), mapped to STITCH interactions with PIK3CA, AKT1, or MTOR proteins, and further linked to MeSH diseases like Breast Neoplasms (D001943) via pharmacological action.
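The pathway query in this example is a three-way filter. In a Neo4j deployment it would be a Cypher MATCH; the dictionary version below only illustrates the logic.

- The role ID and the disease descriptor are taken from the text.
- Gene symbols stand in for protein identifiers.
- The function name is hypothetical.

```python
def pathway_candidates(chebi_roles, stitch_targets, mesh_diseases,
                       role="CHEBI:391979",
                       proteins=frozenset({"PIK3CA", "AKT1", "MTOR"}),
                       disease="D001943"):
    """Return (cid, matched_proteins) for chemicals that satisfy all three links.

    chebi_roles:    {cid: {ChEBI role IDs}}
    stitch_targets: {cid: {protein IDs}}    (gene symbols used as stand-ins)
    mesh_diseases:  {cid: {MeSH disease IDs reached via pharmacological action}}
    """
    hits = []
    for cid in sorted(chebi_roles):
        if role not in chebi_roles[cid]:
            continue  # not annotated with the kinase-inhibitor role
        matched = proteins & stitch_targets.get(cid, set())
        if matched and disease in mesh_diseases.get(cid, set()):
            hits.append((cid, sorted(matched)))
    return hits
```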

Signaling Pathway Annotation Diagram

Diagram Title: Ontology-Annotated Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Ontology Integration and Validation

| Item / Resource | Function in Integration Workflow | Source / Example |

|---|---|---|

| ChEBI OWL Files | Provides the authoritative source for chemical entity classes and roles for semantic annotation. | EMBL-EBI FTP |

| STITCH TSV Files | Supplies raw chemical-protein interaction data with confidence scores for network building. | STITCH Download |

| MeSH RDF/XML | Offers the disease and pharmacological action terminology for linking chemicals to clinical context. | NLM FTP Site |

| PubChem REST API | Serves as the critical pivot service for resolving chemical identifiers across databases. | NCBI PubChem |

| OWLAPI Library | Enables programmatic parsing, querying, and reasoning over OWL-based ontologies like ChEBI. | OWLAPI |

| NetworkX (Python) | Facilitates the construction and analysis of the integrated chemical-protein-disease network graph. | NetworkX |

| SPARQL Endpoint | Allows complex federated queries across linked semantic resources (e.g., ChEBI's endpoint). | SPARQL 1.1 |

| Cypher Query Language | Used to query and manipulate the integrated knowledge graph within a graph database like Neo4j. | Neo4j Cypher |

This whitepaper details the data provenance and versioning framework central to the broader CatTestHub database structure and design research thesis. CatTestHub is conceived as a specialized data repository for pre-clinical and clinical trial data in oncology drug development. The core thesis posits that without an immutable, granular, and queryable record of data lineage—from source acquisition through every transformation and analysis—the reproducibility of critical research findings is compromised. This technical guide outlines the methodologies and systems required to implement such provenance tracking, ensuring data integrity and auditability for researchers and regulatory professionals.

Core Concepts & Current State

Recent surveys and studies highlight the reproducibility crisis in life sciences. Implementation of structured provenance tracking remains inconsistent. The following table summarizes quantitative findings on data management practices relevant to CatTestHub's domain.

Table 1: Prevalence of Data Management & Provenance Practices in Life Sciences Research

| Practice or Metric | Prevalence / Statistic | Source / Study Year |

|---|---|---|

| Researchers who report difficulty reproducing their own experiments | 52% | Nature Survey, 2023 |

| Researchers who report difficulty reproducing others' work | 70+% | Nature Survey, 2023 |

| Labs using electronic lab notebooks (ELNs) | ~55% | Scientific Data Management Report, 2024 |

| Studies sharing raw data alongside publication | 43% | PLOS Biology Analysis, 2023 |

| Datasets with machine-readable provenance metadata (in public repositories) | <30% (estimated) | RDA Provenance Patterns WG, 2024 |

| Cited benefit of provenance: "Easier to track mistakes" | 89% of adopters | Research Information Network, 2023 |

Experimental Protocol: Provenance Capture in a Typical Assay Workflow

This protocol details the integration of provenance capture into a high-throughput screening assay, a core activity anticipated for CatTestHub.

Title: Protocol for Integrated Data Provenance Capture in a Cell Viability Assay.

Objective: To generate and record a complete provenance trace for a dose-response experiment, linking raw instrument files, processed data, and analytical results.

Materials: See "The Scientist's Toolkit" below.

Method:

- Sample Registration: Aliquot each test compound and cell line batch. Generate unique, persistent identifiers (e.g., UUIDs) for each aliquot and register them in the CatTestHub Lab Inventory Module. Record source vendor, LOT#, and storage location.

- Instrument Data Acquisition: Configure plate reader. Before assay start, the operator logs into the CatTestHub-Assay Interface, creating a new "assay run" record. The interface records operator ID, timestamp, and links to the registered sample IDs. The raw fluorescence/luminescence data file is automatically uploaded upon completion, with a cryptographic hash generated for integrity.

- Data Processing Script Execution: A researcher initiates a data normalization script (e.g., Python). The script is version-controlled in a Git repository linked to CatTestHub. The execution environment (Docker image digest) is logged. The script calls the CatTestHub API to fetch the raw data file using its hash. The script outputs a normalized data table.

- Provenance Bundle Creation: The script automatically generates a PROV-O (W3C Provenance Ontology) compliant JSON-LD file. This file records:

  - Entities: RawDataFile.csv, NormalizedDataTable.csv, Script v1.2.3, DockerImage_Alpine-Python3.11.

  - Agents: OperatorID, ResearcherID.

  - Activities: ScriptExecution_456, linked by the relations wasGeneratedBy(NormalizedDataTable.csv, ScriptExecution_456), used(ScriptExecution_456, RawDataFile.csv), and wasAssociatedWith(ScriptExecution_456, ResearcherID).

- Versioning on Update: If the researcher later re-analyses the data with a different normalization method (Script v1.2.4), CatTestHub creates a new version of the derived dataset. The provenance graph is extended to show that both v1 and v2 of NormalizedDataTable were derived from the same raw file but by different activities, preventing silent overwrites.
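Below is a minimal sketch of the provenance bundle and the version link, using only the Python standard library. The assumptions are:

- Entity IDs are content-addressed, so the SHA-256 of each file doubles as its integrity hash.
- The `ex:` namespace is a placeholder.
- The function names are hypothetical.

In practice a dedicated PROV library could serialize the same graph.

```python
import hashlib
import uuid
from datetime import datetime, timezone

# 'ex:' is a placeholder namespace, not a real CatTestHub IRI.
CONTEXT = {"prov": "http://www.w3.org/ns/prov#", "ex": "https://example.org/cattesthub/"}


def content_id(kind: str, data: bytes) -> str:
    """Content-addressed entity ID: the SHA-256 of the bytes is also the integrity hash."""
    return f"ex:{kind}/{hashlib.sha256(data).hexdigest()}"


def prov_bundle(raw: bytes, derived: bytes, script_version: str, image_digest: str,
                researcher_id: str, previous_version=None) -> dict:
    """Minimal PROV-O JSON-LD graph for one normalization run."""
    raw_id, derived_id = content_id("data", raw), content_id("data", derived)
    activity_id = f"ex:activity/ScriptExecution_{uuid.uuid4().hex[:8]}"
    agent_id = f"ex:agent/{researcher_id}"
    derived_node = {
        "@id": derived_id, "@type": "prov:Entity",
        "prov:wasGeneratedBy": {"@id": activity_id},
        "prov:wasDerivedFrom": {"@id": raw_id},
    }
    if previous_version is not None:
        # A re-analysis links back to the earlier version instead of overwriting it.
        derived_node["prov:wasRevisionOf"] = {"@id": previous_version}
    activity_node = {
        "@id": activity_id, "@type": "prov:Activity",
        "prov:used": [{"@id": raw_id}, {"@id": f"ex:script/{script_version}"},
                      {"@id": f"ex:image/{image_digest}"}],
        "prov:wasAssociatedWith": {"@id": agent_id},
        "prov:endedAtTime": datetime.now(timezone.utc).isoformat(),
    }
    return {"@context": CONTEXT, "@graph": [
        {"@id": raw_id, "@type": "prov:Entity"},
        derived_node,
        activity_node,
        {"@id": agent_id, "@type": "prov:Agent"},
    ]}
```

Because IDs are derived from content, re-uploading an identical raw file yields the same entity. Both derived versions then point at one raw node, which is the property the versioning step relies on.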

Visualization: Logical Workflow and System Architecture

Diagram Title: CatTestHub Provenance Capture and Versioning Workflow

Diagram Title: Core Data Object Versioning Model

The Scientist's Toolkit: Research Reagent Solutions for Provenance-Enabled Research

Table 2: Essential Tools for Implementing Robust Data Provenance

| Tool / Reagent Category | Specific Example(s) | Function in Provenance & Versioning Context |

|---|---|---|

| Electronic Lab Notebook (ELN) | RSpace, Benchling, LabArchives | Provides structured, timestamped entries that link experiments to researchers, samples, and protocols. Serves as a primary source of provenance "agent" and "activity" metadata. |

| Sample & Reagent Manager | Quartzy, BioSistemika, custom CatTestHub module | Generates unique IDs for physical materials (samples, compounds, cell lines), tracking their origin (LOT#, vendor) and usage lineage. Defines core "entities." |

| Instrument Data Hub | Titian Mosaic, ViewPoint, custom middleware | Automatically captures raw data files from instruments, stamps them with experiment metadata, and uploads them to a versioned storage system with hash generation. |

| Version Control System (VCS) | Git (GitHub, GitLab, Bitbucket) | Immutably tracks changes to analysis code (scripts, notebooks), enabling precise linking of a specific data output to a specific code version. |

| Containerization Platform | Docker, Singularity | Encapsulates the complete software environment (OS, libraries, tools) used for analysis. A container image hash provides a reproducible "computational reagent." |

| Provenance Metadata Standard | W3C PROV-O (PROV Ontology) | Provides a formal, interoperable schema for expressing entities, activities, and agents and their relationships. The lingua franca for provenance graphs. |

| Provenance Capture Library | noWorkflow, prov (for Python), rdt (for R) | Software libraries that generate standard provenance records, either by instrumenting code as it executes (noWorkflow, rdt) or through a programmatic API (prov). |

| Immutable Storage Backend | S3 Object Lock, Git LFS, Dataverse | Storage system that prevents deletion or alteration of stored data objects, ensuring the permanence of recorded provenance chains. |

From Data to Insights: Practical Workflows for Querying and Analyzing CatTestHub

Within the context of the CatTestHub database structure and design research, establishing a robust, automated data ingestion pipeline is paramount for integrating new toxicological datasets. This pipeline ensures data integrity, facilitates interoperability, and supports advanced computational toxicology and predictive modeling for researchers, scientists, and drug development professionals.

Pipeline Architecture & Core Components

A modern ingestion pipeline for toxicological data is multi-staged, encompassing data acquisition, validation, transformation, and loading.

Foundational Pipeline Stages

Table 1: Core Stages of the Toxicological Data Ingestion Pipeline

| Stage | Primary Function | Key Technologies/Tools | Output |

|---|---|---|---|

| Acquisition | Secure collection of raw data from diverse sources (lab instruments, CROs, public DBs). | SFTP/AS2, API clients (REST, GraphQL), Cloud Storage Triggers. | Raw data files (JSON, XML, CSV, .xlsx). |

| Validation | Structural, syntactic, and semantic checks against predefined schemas and rules. | JSON Schema, Great Expectations, Cerberus, custom Python validators. | Validation report, tagged data (Valid/Invalid/Quarantined). |

| Transformation | Normalization, terminology mapping, unit conversion, and data enrichment. | Apache Spark, Pandas, custom ETL scripts, ontology services (BioPortal). | Harmonized, analysis-ready data structures. |

| Loading & Indexing | Insertion into CatTestHub's core databases and search indices. | SQLAlchemy, Elasticsearch clients, Neo4j drivers. | Queryable records in relational, graph, and search systems. |

Detailed Validation Protocols & Methodologies

Validation is the critical defensive layer. It must be rigorous and multi-faceted.

Experimental Protocol: Multi-Tier Validation Suite

Objective: To ensure incoming toxicological datasets are structurally correct, scientifically plausible, and compliant with FAIR principles.

Materials & Software:

- Source dataset (e.g., high-throughput screening results, in vivo study data).

- Validation server (Python environment).

- Reference schemas (JSON Schema definitions).

- Controlled vocabularies (e.g., EDAM Ontology, ChEBI, Units of Measurement Ontology (UO)).

- Business rule engines (e.g., Drools, custom rule sets).

Procedure:

- Structural Validation: Check file format, encoding, and delimiter consistency. Confirm required columns/fields are present.

- Syntactic Validation: Ensure data types are correct (e.g., numeric values for IC50, datetime for experiment date). Validate against regular expressions for identifiers (e.g., CAS RN, SMILES).

- Semantic Validation:

  - Range & Plausibility: Flag biologically implausible values (e.g., negative concentration, mortality >100%).

  - Referential Integrity: Verify foreign keys (e.g., compound ID exists in master compound registry).

  - Ontological Mapping: Map free-text fields (e.g., "target," "species") to standard ontology terms using a curated dictionary or BioPortal API lookup. Log unmappable terms for curator review.

- Cross-Field Logic Validation: Enforce business rules (e.g., if "assay_type" is "cytotoxicity," then "endpoint" must be from a defined list like {"cell viability", "LDH release"}).

- Report Generation: Compile a machine- and human-readable report (JSON/PDF) listing all errors, warnings, and the validation outcome.
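The tiers above can be sketched as a single validator. It uses only the Python standard library, as a stand-in for a JSON Schema or Great Expectations suite. The following are illustrative assumptions:

- The field names and the allowed-endpoint rule.
- A three-entry species dictionary (with NCBI Taxonomy IDs) standing in for a BioPortal lookup.
- The status logic: errors give Invalid, and unmapped terms alone give Quarantined.

```python
import json
import re
from datetime import date

REQUIRED = ("compound_id", "cas_rn", "concentration_um", "experiment_date", "assay_type", "endpoint")
CAS_RN = re.compile(r"^\d{2,7}-\d{2}-\d$")
ALLOWED_ENDPOINTS = {"cytotoxicity": {"cell viability", "LDH release"}}  # example business rule
SPECIES_TERMS = {"human": "NCBITaxon:9606", "rat": "NCBITaxon:10116", "mouse": "NCBITaxon:10090"}


def cas_checksum_ok(cas: str) -> bool:
    """CAS check digit = sum(digit * position counted from the right) mod 10."""
    digits = cas.replace("-", "")
    body, check = digits[:-1], int(digits[-1])
    return sum(int(d) * i for i, d in enumerate(reversed(body), start=1)) % 10 == check


def validate(record: dict, compound_registry: set) -> dict:
    errors, warnings = [], []
    # Tier 1, structural: required fields present
    missing = [f for f in REQUIRED if f not in record]
    if missing:
        return {"status": "Invalid", "errors": [f"missing field: {f}" for f in missing], "warnings": []}
    # Tier 2, syntactic: types and identifier formats
    cas = str(record["cas_rn"])
    if not (CAS_RN.match(cas) and cas_checksum_ok(cas)):
        errors.append(f"malformed CAS RN: {cas}")
    try:
        conc = float(record["concentration_um"])
    except (TypeError, ValueError):
        conc = None
        errors.append("concentration_um is not numeric")
    try:
        date.fromisoformat(str(record["experiment_date"]))
    except ValueError:
        errors.append("experiment_date is not an ISO-8601 date")
    # Tier 3, semantic: plausibility, referential integrity, ontology mapping
    if conc is not None and conc < 0:
        errors.append("negative concentration")
    if "mortality_pct" in record and not 0 <= float(record["mortality_pct"]) <= 100:
        errors.append("mortality outside 0-100%")
    if record["compound_id"] not in compound_registry:
        errors.append("compound_id not in master registry")
    species = record.get("species")
    if species is not None and species.lower() not in SPECIES_TERMS:
        warnings.append(f"unmapped species term for curator review: {species!r}")
    # Tier 4, cross-field business rules
    allowed = ALLOWED_ENDPOINTS.get(record["assay_type"])
    if allowed is not None and record["endpoint"] not in allowed:
        errors.append(f"endpoint {record['endpoint']!r} not allowed for {record['assay_type']!r}")
    status = "Invalid" if errors else ("Quarantined" if warnings else "Valid")
    return {"status": status, "errors": errors, "warnings": warnings}


def validation_report(records, compound_registry) -> str:
    """Tier 5: machine-readable JSON report with per-status counts."""
    results = [validate(r, compound_registry) for r in records]
    summary = {s: sum(r["status"] == s for r in results) for s in ("Valid", "Quarantined", "Invalid")}
    return json.dumps({"summary": summary, "records": results}, indent=2)
```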

Data Quality Metrics & Quantitative Benchmarks

Establishing measurable quality metrics is essential for monitoring pipeline health.

Table 2: Key Data Quality Metrics for Pipeline Monitoring

| Metric | Formula / Description | Target Benchmark (Per Batch) |

|---|---|---|

| Ingestion Success Rate | (Number of successfully processed records / Total records) * 100 | > 99.5% |

| Schema Conformity Rate | (Records passing schema validation / Total records) * 100 | > 98% |

| Ontology Mapping Rate | (Fields successfully mapped to controlled terms / Mappable fields) * 100 | > 95% |

| Plausibility Error Rate | (Records flagged for implausible values / Total records) * 100 | < 1% |

| Pipeline Processing Time | Average time from acquisition to availability in CatTestHub (minutes). | Defined by SLA (e.g., < 30 mins for standard batches) |
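The Table 2 metrics reduce to simple ratios. The sketch below computes them for one batch and lists the benchmarks that are missed. The argument and function names are hypothetical, and the 30-minute SLA is the table's example value, not a fixed requirement.

```python
def pct(numerator, denominator):
    """Percentage, guarding against empty batches."""
    return 100.0 * numerator / denominator if denominator else 0.0


def batch_metrics(total, processed_ok, schema_pass, mapped_fields, mappable_fields,
                  implausible, minutes):
    """Compute the Table 2 metrics for one batch."""
    return {
        "ingestion_success_rate": pct(processed_ok, total),
        "schema_conformity_rate": pct(schema_pass, total),
        "ontology_mapping_rate": pct(mapped_fields, mappable_fields),
        "plausibility_error_rate": pct(implausible, total),
        "processing_minutes": minutes,
    }


# Per-batch targets from Table 2: (comparison, threshold).
TARGETS = {
    "ingestion_success_rate": (">", 99.5),
    "schema_conformity_rate": (">", 98.0),
    "ontology_mapping_rate": (">", 95.0),
    "plausibility_error_rate": ("<", 1.0),
    "processing_minutes": ("<", 30.0),
}


def missed_targets(metrics):
    """Names of metrics that fail their benchmark, in TARGETS order."""
    missed = []
    for name, (op, threshold) in TARGETS.items():
        value = metrics[name]
        passed = value > threshold if op == ">" else value < threshold
        if not passed:
            missed.append(name)
    return missed
```

These values could then be exported to the Prometheus/Grafana stack listed in Table 3 for alerting.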

Visualizing the Pipeline & Data Flow

Toxicological Data Ingestion Pipeline Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Services for Pipeline Implementation

| Item / Solution | Category | Primary Function in Pipeline |

|---|---|---|

| Great Expectations | Validation Framework | Defines, documents, and validates data expectations (e.g., column distributions, uniqueness). |

| Apache Airflow | Workflow Orchestration | Schedules, monitors, and manages the complex DAG (Directed Acyclic Graph) of pipeline tasks. |

| Docker / Kubernetes | Containerization & Orchestration | Ensures pipeline components run consistently across different environments (dev, staging, prod). |

| BioPortal REST API | Ontology Service | Provides programmatic access to biomedical ontologies for semantic standardization of terms. |

| Pandas / PySpark | Data Processing Libraries | Core engines for in-memory (Pandas) or distributed (Spark) data transformation and cleaning. |

| Elasticsearch | Search & Analytics Engine | Enables fast, full-text search and complex aggregations on ingested toxicological data. |

| SQLAlchemy | Python SQL Toolkit | Provides an ORM and SQL abstraction for safe and flexible loading into relational databases. |

| Prometheus / Grafana | Monitoring Stack | Collects and visualizes pipeline performance metrics (e.g., success rates, processing times). |

Security and Compliance Considerations

Toxicological data often involves proprietary compounds and pre-clinical results. The pipeline must implement encryption (at-rest and in-transit), strict access controls (RBAC), and comprehensive audit logging to meet internal data governance and external regulatory requirements (e.g., 21 CFR Part 11).

Implementing a well-architected data ingestion pipeline with rigorous validation is a cornerstone of the CatTestHub research initiative. It transforms raw, heterogeneous toxicological data into a trusted, high-quality knowledge asset, directly accelerating the pace of scientific discovery and safety assessment in drug development.

Within the broader thesis on the CatTestHub database structure and design research, a core challenge is the efficient, reproducible retrieval of integrated chemical, biological assay, and phenotypic response data. CatTestHub, a hypothetical but representative knowledge base for early-stage drug discovery, aggregates data from high-throughput screening (HTS), in vitro ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) assays, and in vivo model organism studies. This technical guide details strategies for querying this interconnected data landscape using both direct SQL queries on the underlying relational schema and programmatic API endpoints, enabling researchers to construct robust data pipelines for chemical biology and translational research.

The CatTestHub relational schema is designed around core entities and their relationships. Key tables include:

- compound: Stores chemical structures (SMILES, InChIKey), identifiers (PubChem CID, ChemSpider ID), and properties (molecular weight, logP).

- assay: Contains experimental protocols, including assay type (e.g., 'binding affinity', 'enzymatic inhibition'), target (e.g., 'EGFR kinase'), detection method, and the relevant protocol_id.

- experiment_result: Links compounds to assays, storing quantitative outcomes (IC50, Ki, % inhibition) and quality control flags.

- phenotype_observation: Records in vivo or cellular phenotype data (e.g., 'reduced tumor volume', 'increased lifespan') linked to treatment regimens.

- target: Details molecular targets (proteins, genes) with cross-references to UniProt and Gene Ontology.

SQL Query Strategies for Direct Database Access

Direct SQL allows for complex, multi-table joins and aggregations. Below are key query patterns.

Retrieving Potency Data for a Target Class

This query finds all kinase inhibitors with sub-micromolar potency.
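The SQL for this pattern is not reproduced in the text. The following is a hedged reconstruction against a minimal SQLite version of the schema above.

- Column names such as target_class, qc_flag and ic50_nm, and the 'PASS' flag value, are assumptions.
- "Sub-micromolar" is read as IC50 < 1000 nM.
- The demo rows reuse a placeholder CID from Table 1 and are not real measurements.

```python
import sqlite3

# Minimal, assumed subset of the CatTestHub schema (column names are illustrative).
SCHEMA = """
CREATE TABLE compound (compound_id INTEGER PRIMARY KEY, pubchem_cid INTEGER, inchikey TEXT);
CREATE TABLE target (target_id INTEGER PRIMARY KEY, name TEXT, target_class TEXT, uniprot_id TEXT);
CREATE TABLE assay (assay_id INTEGER PRIMARY KEY, assay_type TEXT,
                    target_id INTEGER REFERENCES target(target_id));
CREATE TABLE experiment_result (result_id INTEGER PRIMARY KEY,
                                compound_id INTEGER REFERENCES compound(compound_id),
                                assay_id INTEGER REFERENCES assay(assay_id),
                                ic50_nm REAL, qc_flag TEXT);
"""

# Best QC-passed IC50 per compound / target / assay type, below a potency cutoff.
POTENCY_SQL = """
SELECT c.pubchem_cid, t.name AS target_name, MIN(r.ic50_nm) AS best_ic50_nm, a.assay_type
FROM experiment_result AS r
JOIN compound AS c ON c.compound_id = r.compound_id
JOIN assay    AS a ON a.assay_id    = r.assay_id
JOIN target   AS t ON t.target_id   = a.target_id
WHERE t.target_class = :target_class
  AND r.ic50_nm < :max_ic50_nm
  AND r.qc_flag = 'PASS'
GROUP BY c.pubchem_cid, t.name, a.assay_type
ORDER BY best_ic50_nm;
"""


def potent_inhibitors(conn, target_class="kinase", max_ic50_nm=1000.0):
    params = {"target_class": target_class, "max_ic50_nm": max_ic50_nm}
    return conn.execute(POTENCY_SQL, params).fetchall()


def demo_connection():
    """In-memory database with placeholder rows (not real measurements)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO compound VALUES (?, ?, ?)",
                     [(1, 12345678, None), (2, 99999999, None)])
    conn.execute("INSERT INTO target VALUES (1, 'EGFR Kinase', 'kinase', 'P00533')")
    conn.execute("INSERT INTO assay VALUES (1, 'Enzymatic', 1)")
    conn.executemany("INSERT INTO experiment_result VALUES (?, ?, ?, ?, ?)",
                     [(1, 1, 1, 4.2, "PASS"), (2, 1, 1, 6.0, "PASS"), (3, 2, 1, 2500.0, "PASS")])
    return conn
```

Named parameters keep the cutoff and target class out of the SQL string. The same query can therefore serve other target classes without string formatting.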

Table 1: Summary of Top Kinase Inhibitors from Query

| PubChem CID | Target Name | IC50 (nM) | Assay Type |

|---|---|---|---|

| 12345678 | EGFR Kinase | 4.2 | Enzymatic |

| 23456789 | JAK2 Kinase | 12.8 | Cell-based |

| 34567890 | CDK4/6 | 8.5 | Biochemical |

Correlating In Vitro Assay with In Vivo Phenotype

A more complex join identifies compounds with both in vitro activity and a desired in vivo outcome.
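A hedged sketch of such a join in SQLite follows. It assumes, for brevity, that phenotype_observation carries a compound_id and a numeric tgi_pct column. The schema text says observations are linked to treatment regimens, so a real query would likely join through a regimen table. The thresholds and the placeholder CIDs are illustrative.

```python
import sqlite3

# Simplified tables: phenotype_observation is keyed directly by compound (an assumption).
SCHEMA = """
CREATE TABLE compound (compound_id INTEGER PRIMARY KEY, pubchem_cid INTEGER);
CREATE TABLE experiment_result (compound_id INTEGER REFERENCES compound(compound_id), ic50_nm REAL);
CREATE TABLE phenotype_observation (compound_id INTEGER REFERENCES compound(compound_id),
                                    phenotype TEXT, tgi_pct REAL);
"""

CORRELATION_SQL = """
SELECT c.pubchem_cid, MIN(r.ic50_nm) AS best_ic50_nm, p.phenotype, MAX(p.tgi_pct) AS best_tgi_pct
FROM compound AS c
JOIN experiment_result     AS r ON r.compound_id = c.compound_id
JOIN phenotype_observation AS p ON p.compound_id = c.compound_id
WHERE r.ic50_nm < :max_ic50_nm
  AND p.phenotype = :phenotype
  AND p.tgi_pct >= :min_tgi_pct
GROUP BY c.pubchem_cid, p.phenotype
ORDER BY best_tgi_pct DESC;
"""


def active_in_both(conn, phenotype="reduced tumor volume", max_ic50_nm=1000.0, min_tgi_pct=50.0):
    params = {"phenotype": phenotype, "max_ic50_nm": max_ic50_nm, "min_tgi_pct": min_tgi_pct}
    return conn.execute(CORRELATION_SQL, params).fetchall()


def demo_connection():
    """Placeholder CIDs and values, for illustration only."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO compound VALUES (?, ?)",
                     [(1, 12345678), (2, 23456789), (3, 34567890)])
    conn.executemany("INSERT INTO experiment_result VALUES (?, ?)",
                     [(1, 4.2), (2, 12.8), (3, 5000.0)])
    conn.executemany("INSERT INTO phenotype_observation VALUES (?, ?, ?)",
                     [(1, "reduced tumor volume", 72.0),
                      (2, "reduced tumor volume", 20.0),   # potent but weak in vivo
                      (3, "reduced tumor volume", 80.0)])  # active in vivo, weak in vitro
    return conn
```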

API Endpoint Strategies for Programmatic Access

The CatTestHub REST API provides a standardized, language-agnostic interface, ideal for pipeline integration. It uses JSON for data exchange.

Paginated Retrieval of Assay Results

A GET request to fetch experimental results for a specific target, handling large datasets via pagination.

Endpoint:

Sample Response Snippet:
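A minimal client-side pagination loop is sketched below. The endpoint itself is not reproduced above, so several details are assumptions:

- The base URL, the /results path, the target/offset/limit query parameters, and the response shape {"results": [...], "next": ...} are all hypothetical.
- The page-fetching function is injected, so the loop can be exercised without network access.

```python
import json
from typing import Callable, Iterator
from urllib.parse import urlencode
from urllib.request import urlopen

# Hypothetical base URL and path; the documented endpoint is not reproduced here.
BASE_URL = "https://api.cattesthub.org/v2"


def http_fetch_page(target: str, offset: int, limit: int) -> dict:
    """GET one page of results (assumed shape: {"results": [...], "next": url-or-null})."""
    query = urlencode({"target": target, "offset": offset, "limit": limit})
    with urlopen(f"{BASE_URL}/results?{query}", timeout=30) as resp:
        return json.load(resp)


def iter_results(fetch_page: Callable[[int, int], dict], page_size: int = 500) -> Iterator[dict]:
    """Yield every record, stopping at a short page or a null `next` link."""
    offset = 0
    while True:
        page = fetch_page(offset, page_size)
        items = page.get("results", [])
        yield from items
        if len(items) < page_size or page.get("next") is None:
            return
        offset += page_size
```

Against a live server one might call `list(iter_results(functools.partial(http_fetch_page, "EGFR")))`. Streaming with a generator keeps memory flat for large result sets.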

Batch Query for Compound Profiling

A POST request submits a list of compound identifiers to retrieve their multi-assay profiles in a single call, reducing network overhead.

Endpoint:
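Building the batch request can be sketched with the standard library alone. The following are assumptions:

- The /compounds/profiles path, the {"identifiers": [...]} payload and the batch size of 100.
- A server-side limit on request size, which is why identifiers are split into batches after de-duplication.

```python
import json
from typing import List
from urllib.request import Request

BASE_URL = "https://api.cattesthub.org/v2"  # hypothetical


def build_profile_requests(identifiers: List[str], batch_size: int = 100,
                           base_url: str = BASE_URL) -> List[Request]:
    """Build one POST request per batch of unique compound identifiers."""
    unique = list(dict.fromkeys(identifiers))  # drop duplicates, keep first-seen order
    requests = []
    for start in range(0, len(unique), batch_size):
        payload = {"identifiers": unique[start:start + batch_size]}
        requests.append(Request(
            f"{base_url}/compounds/profiles",
            data=json.dumps(payload).encode("utf-8"),
            headers={"Content-Type": "application/json", "Accept": "application/json"},
            method="POST",
        ))
    return requests
```

Each request can then be sent with `urllib.request.urlopen(req, timeout=60)` and its body parsed with `json.load`. Building requests separately from sending them makes the batching logic testable offline.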

Experimental Protocols for Cited Data

The data referenced in queries is generated through standardized protocols.

Protocol 1: In Vitro Kinase Inhibition Assay (IC50 Determination)

- Reaction Setup: In a 96-well plate, combine 10 µL of kinase (10 nM final), 10 µL of test compound (serial dilution in DMSO), and 20 µL of substrate/ATP mix in assay buffer.

- Incubation: Incubate at 25°C for 60 minutes.

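- Detection: Add ADP-Glo Reagent at a 1:1 ratio to the reaction volume (40 µL) and incubate for 40 minutes to stop the reaction and deplete unconsumed ATP. Then add Kinase Detection Reagent at twice the reaction volume (80 µL) and incubate for 30 to 60 minutes to convert ADP to ATP for the luciferase readout.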

- Readout: Measure luminescence on a plate reader.

- Analysis: Fit dose-response curves using a four-parameter logistic model to calculate IC50 values. Data is uploaded to CatTestHub via an automated assay_upload API endpoint.
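The four-parameter logistic (4PL) fit can be sketched without external libraries. For a fixed IC50 and Hill slope, the 4PL model is linear in its bottom and top plateaus. This sketch therefore grid-searches log10(IC50) and the Hill slope, and solves for bottom and top by ordinary least squares at each grid point.

- This is an illustrative substitute for the nonlinear least-squares routine (such as `scipy.optimize.curve_fit`) that a production pipeline would more typically use.
- The grid ranges are assumptions.

```python
import math


def four_pl(conc, bottom, top, ic50, hill):
    """4PL model: response falls from `top` toward `bottom` as concentration rises."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def fit_four_pl(concs, responses):
    """Grid search over log10(IC50) and Hill slope; bottom/top solved by least squares.

    For fixed IC50 and Hill slope the model is linear in bottom and top:
    y = bottom * (1 - s) + top * s, with s = 1 / (1 + (c / IC50) ** hill).
    Concentrations must be > 0 (vehicle controls are handled separately).
    """
    lo = math.floor(math.log10(min(concs))) - 1
    hi = math.ceil(math.log10(max(concs))) + 1
    log_grid = [lo + i / 100 for i in range((hi - lo) * 100 + 1)]  # 0.01-log10 steps
    hill_grid = [i / 20 for i in range(6, 61)]                      # 0.30 .. 3.00
    best = None
    for log_ic50 in log_grid:
        ic50 = 10 ** log_ic50
        for hill in hill_grid:
            s = [1.0 / (1.0 + (c / ic50) ** hill) for c in concs]
            # 2x2 normal equations for [bottom, top]
            a11 = sum((1 - si) ** 2 for si in s)
            a12 = sum((1 - si) * si for si in s)
            a22 = sum(si * si for si in s)
            b1 = sum((1 - si) * y for si, y in zip(s, responses))
            b2 = sum(si * y for si, y in zip(s, responses))
            det = a11 * a22 - a12 * a12
            if abs(det) < 1e-12:
                continue  # degenerate: curve is flat over the tested range
            bottom = (b1 * a22 - a12 * b2) / det
            top = (a11 * b2 - a12 * b1) / det
            sse = sum((bottom * (1 - si) + top * si - y) ** 2 for si, y in zip(s, responses))
            if best is None or sse < best["sse"]:
                best = {"bottom": bottom, "top": top, "ic50": ic50, "hill": hill, "sse": sse}
    return best
```

The returned IC50 is in the same units as the input concentrations (nM in Protocol 1).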

Protocol 2: In Vivo Efficacy Study (Mouse Xenograft)

- Model Generation: Subcutaneously implant cancer cells (e.g., HCC827) into immunodeficient mice.

- Dosing: Once tumors reach ~150 mm³, randomize animals into groups (n=8) and administer compound or vehicle daily via oral gavage for 21 days.

- Monitoring: Measure tumor volume twice weekly with calipers. Record body weight as a toxicity metric.

- Endpoint Analysis: Calculate %TGI (Tumor Growth Inhibition) and statistical significance (Student's t-test). Phenotypic observations are logged into CatTestHub's phenotype_observation table via a dedicated web form.
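The protocol does not state its formulas, so two common conventions are assumed here:

- Caliper volume uses the modified ellipsoid V = L × W² / 2.
- %TGI = (1 − ΔT/ΔC) × 100, where ΔT and ΔC are the changes in mean treated and control volume over the dosing period.

Other %TGI definitions exist, and the function names are hypothetical. The t-test itself would typically come from `scipy.stats.ttest_ind`.

```python
import statistics


def tumor_volume(length_mm, width_mm):
    """Modified ellipsoid: V = L * W**2 / 2 (mm^3); W is the shorter caliper axis."""
    return length_mm * width_mm ** 2 / 2.0


def group_mean_volume(calipers):
    """Mean volume of a group from (length, width) caliper pairs in mm."""
    return statistics.fmean(tumor_volume(length, width) for length, width in calipers)


def percent_tgi(treated_start, treated_end, control_start, control_end):
    """%TGI = (1 - dT / dC) * 100 from group-mean volumes at dosing start and end."""
    delta_c = control_end - control_start
    if delta_c <= 0:
        raise ValueError("control group did not grow; %TGI is undefined")
    return (1.0 - (treated_end - treated_start) / delta_c) * 100.0
```

For example, if both groups start near 150 mm³ and control tumors reach 1150 mm³ while treated tumors reach 450 mm³, %TGI is 70.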

Visualizing Data Retrieval Workflows

Diagram 1: Dual-path query workflow for CatTestHub.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Featured Experiments

| Item | Supplier/Example | Function in Protocol |

|---|---|---|

| Recombinant Kinase Protein | Sigma-Aldrich (e.g., EGFR Kinase) | The enzymatic target for in vitro inhibition assays. |

| ADP-Glo Kinase Assay Kit | Promega | A luminescent method for detecting ADP production, quantifying kinase activity. |

| Cell Line for Xenograft | ATCC (e.g., HCC827) | Provides the tumorigenic cells used to establish the in vivo mouse model. |

| Immunodeficient Mice | Jackson Laboratory (e.g., NSG mice) | In vivo model system that permits engraftment of human cancer cells. |

| Caliper Tool | Fine Science Tools | For precise, non-invasive measurement of subcutaneous tumor volume. |

| 96-Well Assay Plates | Corning, polystyrene | Standard microplate format for high-throughput in vitro screening. |

| DMSO (Cell Culture Grade) | Thermo Fisher Scientific | Universal solvent for dissolving and diluting small-molecule test compounds. |

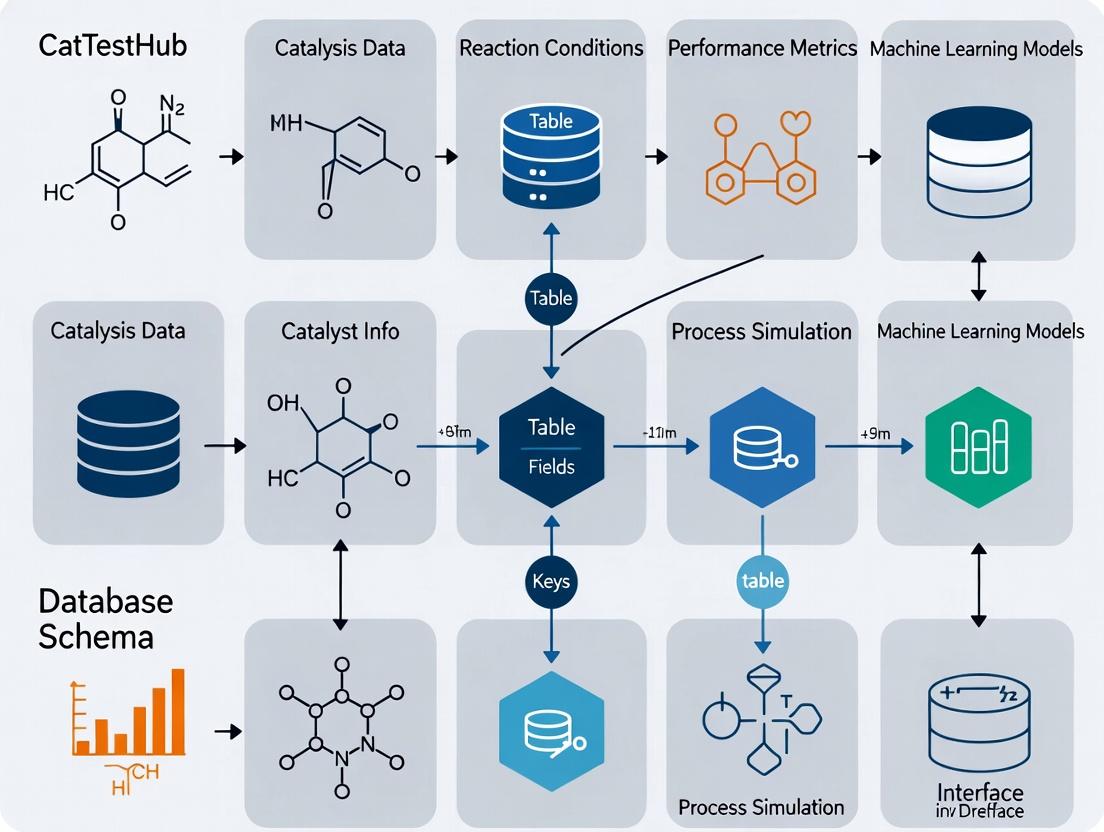

This whitepaper details the technical integration pathways for the CatTestHub database, a specialized repository for catalytic reaction test data. This work is a core component of a broader thesis on the design and structure of CatTestHub, which posits that a purpose-built, semantically rich schema—featuring normalized tables for Catalysts, Reaction_Conditions, Performance_Metrics, and Spectroscopic_Validation—enables seamless, high-fidelity connectivity to downstream statistical and machine learning (ML) environments. Effective integration is critical for accelerating catalyst discovery and optimization in pharmaceutical development.

Core Connection Methodologies

Integration is facilitated via a central REST API (v2.1) and direct SQL connections. The API returns JSON-LD, embedding semantic context within the data structure.

Table 1: Comparison of Primary Integration Pathways

| Tool/Platform | Connection Method | Primary Use Case | Key Advantage | Data Format Delivered |

|---|---|---|---|---|

| General REST API | HTTPS requests to api.cattesthub.org/v2 | Broad interoperability, custom apps | Language-agnostic, semantic JSON-LD | JSON-LD |

| Python (Pandas/Scikit-learn) | requests library + pandas.read_json() or custom SDK | Data munging, feature engineering, predictive ML | Direct conversion to DataFrame for analysis | pandas DataFrame |

| R | httr + jsonlite packages | Statistical modeling, advanced visualization | Integration with tidyverse for data wrangling | list, data.frame |

| KNIME | "GET Request" node + JSON/XML processors | Visual workflow automation, pre-modeling ETL | No-code workflow builder for researchers | KNIME Data Table |

Detailed Experimental Protocols for Integration

Protocol 3.1: Benchmarking Data Retrieval Performance

Objective: Quantify data transfer rates for full experimental datasets (~10,000 records).

- Initiate concurrent API calls to the /experiments endpoint with pagination parameters (limit=1000).

- For Python, use asyncio with aiohttp to manage asynchronous requests.

- For R, use the future and furrr packages for parallel processing of GET calls.

- Measure time-to-complete for full dataset ingestion. Repeat (n=5).

- Transform JSON responses to structured tables, recording memory usage.
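The concurrency pattern of Protocol 3.1 can be sketched with `asyncio` alone. Here `fetch_page` is a simulated stand-in for an aiohttp GET on /experiments; it sleeps instead of making a network call. The concurrency cap of 4 and all function names are assumptions. Peak memory is tracked with `tracemalloc` to cover the memory-recording step.

```python
import asyncio
import time
import tracemalloc


async def fetch_page(offset, limit, total):
    """Simulated stand-in for an aiohttp GET on /experiments?offset=...&limit=..."""
    await asyncio.sleep(0.01)  # simulated network latency
    return [{"id": i} for i in range(offset, min(offset + limit, total))]


async def fetch_all(total, limit=1000, max_concurrency=4):
    semaphore = asyncio.Semaphore(max_concurrency)  # cap simultaneous requests

    async def bounded(offset):
        async with semaphore:
            return await fetch_page(offset, limit, total)

    pages = await asyncio.gather(*(bounded(o) for o in range(0, total, limit)))
    return [record for page in pages for record in page]  # gather preserves page order


def benchmark(total=10_000, repeats=5):
    """Time full-dataset ingestion `repeats` times; also record peak traced memory."""
    timings, peaks, count = [], [], 0
    for _ in range(repeats):
        tracemalloc.start()
        start = time.perf_counter()
        count = len(asyncio.run(fetch_all(total)))
        timings.append(time.perf_counter() - start)
        peaks.append(tracemalloc.get_traced_memory()[1])  # peak bytes during the run
        tracemalloc.stop()
    return count, timings, peaks
```

With a real server, the body of `fetch_page` would issue the request through an `aiohttp.ClientSession` and decode the JSON page. The semaphore and `gather` structure stays the same.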

Protocol 3.2: Validating Data Fidelity for ML Readiness

Objective: Ensure data integrity post-transfer for feature matrix construction.

- Extract a dataset via API for a specific reaction class (e.g., cross-coupling).

- Flatten nested JSON structures (e.g., conditions.temperature, catalyst.ligand) into a 2D table.

- Apply schema validation rules (using jsonschema in Python or jsonvalidate in R) to check for mandatory fields.

- Calculate the percentage of missing values per critical feature column (e.g., yield, turnover_number).

- The output is a clean CSV/DataFrame for Scikit-learn pipelines.
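The flatten, check and measure steps can be sketched with the standard library. A hand-rolled mandatory-field check stands in for `jsonschema`. The field names follow the examples in the protocol, but the record layout and the choice of mandatory fields are assumptions.

```python
import csv
import io

MANDATORY = ("catalyst.metal", "catalyst.ligand", "conditions.temperature", "yield")


def flatten(record, parent=""):
    """Flatten nested dicts into dotted column names, e.g. conditions.temperature."""
    flat = {}
    for key, value in record.items():
        name = f"{parent}.{key}" if parent else key
        if isinstance(value, dict):
            flat.update(flatten(value, name))
        else:
            flat[name] = value
    return flat


def missing_mandatory(row):
    """Mandatory columns that are absent or null in a flattened row."""
    return [field for field in MANDATORY if row.get(field) is None]


def missingness(rows, columns):
    """Percentage of rows where each column is absent or null."""
    n = len(rows)
    return {col: 100.0 * sum(row.get(col) is None for row in rows) / n for col in columns}


def to_csv(rows):
    """Write rows to CSV; missing keys become empty cells (DictWriter restval='')."""
    columns = sorted({col for row in rows for col in row})
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

Typical use is to flatten every record, report `missingness` on the critical columns, keep only rows with no `missing_mandatory` fields, and export them with `to_csv`.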

Protocol 3.3: Building a Predictive Yield Model in Python

Objective: Create a benchmark ML model to predict reaction yield from catalyst and condition features.

- Data Acquisition: Use the CatTestHub SDK (cattesthub-client==0.4.2) to load data into a pandas DataFrame.

- Feature Engineering: Encode categorical variables (catalyst metal, ligand type) using one-hot encoding. Scale numerical features (temperature, concentration) with StandardScaler.

- Model Training: Split data (80/20 train/test). Train a Random Forest Regressor (scikit-learn). Optimize hyperparameters via grid search cross-validation.

- Validation: Compare predicted vs. actual yield on the test set. Report R² and Mean Absolute Error (MAE).

Visualization of Workflows and Data Structures

Diagram 1: CatTestHub Integration Architecture

Diagram 2: Data Flow from Query to Model

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Tools for CatTestHub Integration and Analysis

| Item/Resource | Function | Example/Tool Name |

|---|---|---|

| CatTestHub Python SDK | Official client library for simplified API queries and data conversion. | cattesthub-client (v0.4.2+) |

| Jupyter Notebook/Lab | Interactive computing environment for exploratory data analysis and prototyping. | Jupyter |

| KNIME Analytics Platform | Visual workflow tool for creating reproducible, no-code data pipelines. | KNIME (v4.7+) |

| R Tidyverse Meta-Package | Cohesive collection of R packages (dplyr, ggplot2) for data manipulation and visualization. | tidyverse |

| Scikit-learn | Core Python library for building, training, and validating machine learning models. | scikit-learn (v1.3+) |

| Chemical Descriptor Generator | Software to calculate molecular features (e.g., of ligands) from SMILES strings for ML. | RDKit |

| Data Validation Library | Ensures incoming API data conforms to the expected schema before analysis. | jsonschema (Python), jsonvalidate (R) |

1. Introduction: Data Access in the Context of CatTestHub Research

The development of robust predictive toxicology models, such as Quantitative Structure-Activity Relationship (QSAR) and read-across, is fundamentally dependent on the quality, structure, and accessibility of training data. This guide, framed within the broader thesis on the integrated database structure and design of CatTestHub, provides a technical roadmap for researchers to source, evaluate, and prepare data for model building. CatTestHub's architecture—emphasizing curated, well-annotated, and harmonized chemical, toxicological, and biological data—serves as an ideal paradigm for data accessibility in modern computational toxicology.

2. Core Data Types and Sources for Model Training

Training data for QSAR and read-across must encompass chemical identifiers, experimental endpoint data, and molecular descriptors or fingerprints. Key public and proprietary sources are summarized below.

Table 1: Primary Data Sources for QSAR and Read-Across Model Development

| Source Name | Data Type | Key Endpoints | Access Method | Notable Features |

|---|---|---|---|---|

| CatTestHub (Research Context) | Curated in vivo, in chemico, in vitro | Acute toxicity, mutagenicity, endocrine disruption | SQL Query, REST API | Integrated study design metadata, mechanistic assay data, structured protocols. |

| EPA CompTox Chemicals Dashboard | Experimental & predicted | Toxicity, physicochemical, exposure | Web Interface, API | ~900k chemicals, links to multiple ToxCast/Tox21 assay data. |

| ECHA | REACH registration dossiers | Hazard endpoints (REACH Annexes) | Web Interface (SCIP, IUCLID) | High-quality regulatory data; requires manual extraction. |

| PubChem | Bioassay results | Biochemical/cell-based screening | REST API | Massive repository of HTS data from NIH programs. |

| ChEMBL | Drug-like molecule bioactivity | ADMET, potency | Web Interface, API | ~2M compounds with curated bioactivity data from literature. |

Table 2: Essential Data Fields for a Standardized Training Set

| Field Category | Mandatory Fields | Description & Standard |

|---|---|---|

| Chemical Identity | SMILES, InChIKey, CAS RN (if valid) | Unique structure representation. Use IUPAC standards. |

| Experimental Data | Endpoint value, Units, Assay type (e.g., Ames, LD50), Species/System | Must include reliability/quality score (e.g., Klimisch score). |

| Protocol Metadata | OECD Test Guideline, Experimental design details | Critical for read-across justification and applicability domain. |

| Descriptors | Molecular weight, LogP, H-bond donors/acceptors, etc. | Calculated via tools like RDKit or PaDEL-Descriptor. |

3. Experimental Protocols: Data Extraction and Curation Methodology

Protocol 3.1: Systematic Data Extraction from CatTestHub for a QSAR Training Set

- Objective: To compile a high-quality dataset for a binary classification QSAR model (e.g., mutagenicity).

- Materials: CatTestHub database instance, SQL client (e.g., DBeaver), RDKit library, KNIME or Python scripting environment.

- Procedure:

- Query Design: Execute a structured SQL query joining the chemical_structures, experimental_studies, and assay_protocols tables.

- Filtering: Apply filters for assay_type = 'Ames Bacterial Reverse Mutation Test', protocol_guideline = 'OECD 471', and data_quality_score <= 2. On the Klimisch scale (1 = reliable without restriction, 2 = reliable with restrictions, 3 = not reliable, 4 = not assignable), this retains only Klimisch 1 and 2 studies.

- Data Retrieval: Extract the fields canonical_smiles, test_result (converted to binary: positive/negative), concentration_range, strain_used, and metabolic_activation (S9).

- Deduplication: Resolve multiple entries per chemical by applying a predefined rule (e.g., select the result from the most recent study, or a consensus outcome).

- Descriptor Calculation: Process the canonical SMILES list through RDKit to generate a standard set of 2D molecular descriptors (e.g., 200 descriptors) and Morgan fingerprints (radius=2, nBits=2048).

- Dataset Assembly: Merge the curated activity data with calculated descriptors into a single .csv file for modeling.

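The query, filter and "most recent study wins" deduplication steps of Protocol 3.1 can be sketched against SQLite. The table names follow the protocol. Several details are illustrative assumptions:

- The join columns (chemical_id, protocol_id) and the study_year column.
- The demo rows, which use placeholder SMILES and made-up outcomes.

```python
import sqlite3

# Table names from the protocol; column names beyond the filter fields are assumptions.
SCHEMA = """
CREATE TABLE chemical_structures (chemical_id INTEGER PRIMARY KEY, canonical_smiles TEXT);
CREATE TABLE assay_protocols (protocol_id INTEGER PRIMARY KEY, assay_type TEXT, protocol_guideline TEXT);
CREATE TABLE experimental_studies (study_id INTEGER PRIMARY KEY, chemical_id INTEGER, protocol_id INTEGER,
                                   test_result TEXT, study_year INTEGER, data_quality_score INTEGER);
"""

EXTRACT_SQL = """
SELECT cs.canonical_smiles, es.test_result
FROM experimental_studies AS es
JOIN chemical_structures AS cs ON cs.chemical_id = es.chemical_id
JOIN assay_protocols     AS ap ON ap.protocol_id = es.protocol_id
WHERE ap.assay_type = 'Ames Bacterial Reverse Mutation Test'
  AND ap.protocol_guideline = 'OECD 471'
  AND es.data_quality_score <= 2          -- Klimisch 1 or 2 only
ORDER BY cs.canonical_smiles, es.study_year DESC;
"""


def extract_ames_labels(conn):
    """Map SMILES -> 1 (positive) / 0 (negative), keeping the most recent qualifying study."""
    labels = {}
    for smiles, result in conn.execute(EXTRACT_SQL):
        if smiles not in labels:  # first row per SMILES is the newest (see ORDER BY)
            labels[smiles] = 1 if result.strip().lower() == "positive" else 0
    return labels


def demo_connection():
    """Placeholder structures and made-up outcomes, for illustration only."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO chemical_structures VALUES (?, ?)",
                     [(1, "SMILES_A"), (2, "SMILES_B"), (3, "SMILES_C")])
    conn.executemany("INSERT INTO assay_protocols VALUES (?, ?, ?)",
                     [(1, "Ames Bacterial Reverse Mutation Test", "OECD 471"),
                      (2, "In Vitro Mammalian Cell Micronucleus Test", "OECD 487")])
    conn.executemany("INSERT INTO experimental_studies VALUES (?, ?, ?, ?, ?, ?)", [
        (1, 1, 1, "positive", 2005, 2),
        (2, 1, 1, "negative", 2018, 1),   # newer study wins for SMILES_A
        (3, 2, 1, "positive", 2010, 1),
        (4, 3, 1, "positive", 2012, 3),   # Klimisch 3: excluded
        (5, 3, 2, "positive", 2015, 1),   # different assay: excluded
    ])
    return conn
```

A consensus rule (for example, majority vote across qualifying studies) could replace the newest-first rule by collecting all results per SMILES before labeling.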

Protocol 3.2: Executing a Read-Across Data Gap Filling Strategy

- Objective: To predict the aquatic toxicity (e.g., 96-h LC50 for fish) of a target substance using source analogues.

- Materials: ECHA IUCLID dataset, OECD QSAR Toolbox, AMBIT software, ToxRead or similar read-across justification tool.

- Procedure:

- Target Substance Characterization: Input the target chemical's SMILES. Identify its relevant structural features and potential modes of action (e.g., narcosis, electrophilicity).

- Source Chemical Selection: Query CatTestHub/ECHA for chemicals with:

- Similar core structure (e.g., same scaffold).

- Analogous functional groups.

- Similar physicochemical property range (LogP ±0.5, MW ±50 Da).

- Data Collection & Sufficiency Check: For each candidate source analogue, extract all available, reliable experimental 96-h fish LC50 data. Require a minimum of 3 source chemicals with high-quality data.

- Trend Analysis & Justification: Tabulate source chemical data alongside the target's predicted properties. Document the absence of "activity cliffs." Use a trend analysis diagram to justify the hypothesis that toxicity is consistent across the category.

- Prediction & Uncertainty Estimation: Calculate the predicted endpoint for the target (e.g., geometric mean of source values). Estimate uncertainty based on source data variability and similarity assessment.
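The selection, sufficiency check and prediction steps can be sketched in plain Python. The LogP/MW windows and the minimum of three sources come from the protocol, and the geometric mean is the example aggregation given there. Two further choices are assumptions:

- Uncertainty is expressed as a geometric standard deviation (a fold range), which is one simple option among several.
- "Same scaffold" is reduced to a string comparison.

```python
import math
import statistics


def select_analogues(target, candidates, logp_window=0.5, mw_window=50.0):
    """Same scaffold and within the LogP / MW windows given in the protocol."""
    return [
        c for c in candidates
        if c["scaffold"] == target["scaffold"]
        and abs(c["logp"] - target["logp"]) <= logp_window
        and abs(c["mw"] - target["mw"]) <= mw_window
    ]


def read_across_lc50(target, candidates, min_sources=3):
    """Geometric-mean 96-h LC50 of qualifying analogues plus a geometric-SD fold range."""
    sources = [c for c in select_analogues(target, candidates) if c.get("lc50_mg_l")]
    if len(sources) < min_sources:
        raise ValueError(f"{len(sources)} source chemicals with data; at least {min_sources} required")
    log_values = [math.log10(c["lc50_mg_l"]) for c in sources]
    return {
        "predicted_lc50_mg_l": 10 ** statistics.fmean(log_values),
        "fold_uncertainty": 10 ** statistics.stdev(log_values),
        "sources": [c["name"] for c in sources],
    }
```

A large fold range across sources is itself a warning sign of a possible activity cliff. That is worth documenting in the justification.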

4. Visualizing the Data Access and Modeling Workflow

Workflow for Accessing Data and Building Predictive Models

Read-Across Data Gathering and Justification Process

5. The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Tools and Resources for Data-Driven Predictive Modeling

| Tool/Reagent Category | Specific Example(s) | Function in Workflow |
|---|---|---|
| Database & Curation Platform | CatTestHub, OECD QSAR Toolbox, AMBIT | Centralized, curated data repository with advanced search and category building functions. |
| Chemical Descriptor Calculator | RDKit, PaDEL-Descriptor, Dragon | Generates numerical representations (descriptors, fingerprints) of chemical structures for QSAR. |
| Cheminformatics Scripting | Python (RDKit, Pandas), KNIME, R (ChemmineR) | Automates data processing, curation, descriptor calculation, and model prototyping. |
| Similarity & Category Building | ToxRead, OECD QSAR Toolbox, SAfingerprints | Identifies structural analogues and builds chemical categories for read-across. |
| Model Building & Validation | Scikit-learn, Orange Data Mining, WEKA | Provides algorithms for machine learning, cross-validation, and performance metric calculation. |
| Reporting & Justification | OECD QSAR Model Reporting Format (QMRF), Read-Across Assessment Framework (RAAF) | Standardized reporting formats and assessment frameworks for documenting and justifying predictions to meet regulatory requirements. |

This case study, framed within the broader thesis on the CatTestHub database structure and design research, details the construction of a computational workflow for the early prediction of drug-induced liver injury (DILI). The CatTestHub framework, which integrates heterogeneous toxicological data into a unified knowledge graph, provides the essential data infrastructure for model development and validation.

Hepatotoxicity remains a leading cause of drug attrition in clinical trials and post-market withdrawals. Virtual screening workflows offer a proactive strategy to identify hepatotoxic liabilities by leveraging in silico models and the structured toxicological data within repositories like CatTestHub. This guide outlines a robust, tiered workflow integrating quantitative structure-activity relationship (QSAR) models, molecular docking, and systems biology analysis.

Core Data Infrastructure: The CatTestHub Backbone

The CatTestHub database is designed with a schema that links chemical entities to biological endpoints via standardized ontologies. Key tables for hepatotoxicity prediction include:

- Compound_Catalog: Chemical structures, descriptors, and identifiers.
- Tox_Assay_Results: High-throughput screening (HTS) and in vitro assay data.
- Pathway_Mappings: Associations between compounds and biological pathways (e.g., via Gene Ontology, KEGG).
- Literature_Evidence: Curated findings from published studies.
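The four tables could be expressed as a relational schema along the following lines. The table names come from the list above. Every column name, type, and constraint is a hypothetical illustration. The sketch uses Python's built-in `sqlite3` module and does not represent CatTestHub's actual storage engine.

```python
import sqlite3

# Hypothetical column layout: the source names the four tables but not their columns.
SCHEMA = """
CREATE TABLE Compound_Catalog (
    compound_id INTEGER PRIMARY KEY,
    inchikey    TEXT UNIQUE NOT NULL,
    smiles      TEXT NOT NULL,
    logp        REAL,
    tpsa        REAL
);
CREATE TABLE Tox_Assay_Results (
    result_id   INTEGER PRIMARY KEY,
    compound_id INTEGER NOT NULL REFERENCES Compound_Catalog(compound_id),
    assay_name  TEXT NOT NULL,   -- e.g. an HTS assay identifier
    endpoint    TEXT NOT NULL,   -- e.g. 'BSEP inhibition'
    value       REAL,
    unit        TEXT
);
CREATE TABLE Pathway_Mappings (
    compound_id INTEGER NOT NULL REFERENCES Compound_Catalog(compound_id),
    pathway_id  TEXT NOT NULL,   -- KEGG, Reactome or GO identifier
    source      TEXT NOT NULL CHECK (source IN ('KEGG', 'Reactome', 'GO')),
    PRIMARY KEY (compound_id, pathway_id)
);
CREATE TABLE Literature_Evidence (
    evidence_id INTEGER PRIMARY KEY,
    compound_id INTEGER NOT NULL REFERENCES Compound_Catalog(compound_id),
    citation    TEXT NOT NULL,
    finding     TEXT NOT NULL
);
"""


def create_db() -> sqlite3.Connection:
    """Create an in-memory database with the hypothetical schema above."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces FKs only when enabled
    conn.executescript(SCHEMA)
    return conn
```

SQLite enables foreign-key enforcement per connection. With it on, assay results and pathway mappings cannot refer to compounds missing from `Compound_Catalog`.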

Table 1: Representative Hepatotoxicity Data Sourced for Model Training in CatTestHub

| Data Type | Source Database | Number of Records (Sample) | Key Endpoints Mapped |
|---|---|---|---|
| Chemical Structures | PubChem, ChEMBL | ~12,000 compounds | SMILES, InChIKey, molecular descriptors |
| In Vitro Toxicity | Tox21, LTKB | ~8,000 assay results | Mitochondrial dysfunction, bile salt export pump (BSEP) inhibition, cytotoxicity |
| In Vivo & Clinical DILI | DILIrank, FDA Labels | ~1,200 compounds | FDA DILIrank classification (Most-DILI-Concern, Less-DILI-Concern, No-DILI-Concern) |
| Pathway Information | KEGG, Reactome | ~150 pathways | Apoptosis, steatosis, cholestasis, oxidative stress |

Virtual Screening Workflow: A Tiered Methodology

The proposed workflow consists of three sequential tiers, increasing in computational cost and mechanistic detail.

Tier 1: Rapid QSAR-Based Filtering

Objective: High-throughput prioritization of compound libraries. Protocol:

- Descriptor Calculation: For each input compound (SMILES format), compute molecular descriptors using RDKit or PaDEL. These are primarily 2D descriptors (e.g., Morgan fingerprints, logP, topological polar surface area), optionally supplemented with 3D descriptors.

- Model Application: Apply a pre-trained ensemble QSAR model. The model is trained on CatTestHub data using endpoints from Table 1 (e.g., binary DILI classification).

- Prediction & Filter: Compounds predicted as "high risk" with a probability >0.7 are flagged for Tier 2 analysis. Others are deprioritized.
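The model application and filtering steps amount to soft-voting an ensemble and thresholding the mean probability. In this sketch, each ensemble member is assumed to be any callable that returns a DILI probability for a descriptor vector. The member models, the descriptor vectors, and the names `ensemble_probability` and `tier1_filter` are hypothetical.

```python
from statistics import mean
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical model interface: descriptor vector -> P(high DILI risk).
Model = Callable[[Sequence[float]], float]


def ensemble_probability(models: List[Model], descriptors: Sequence[float]) -> float:
    """Soft-voting ensemble: average the members' predicted probabilities."""
    return mean(m(descriptors) for m in models)


def tier1_filter(models: List[Model], library: Dict[str, Sequence[float]],
                 threshold: float = 0.7) -> Tuple[Dict[str, float], Dict[str, float]]:
    """Split a library into compounds flagged for Tier 2 (p > threshold) and the rest."""
    flagged, deprioritized = {}, {}
    for compound_id, desc in library.items():
        p = ensemble_probability(models, desc)
        (flagged if p > threshold else deprioritized)[compound_id] = p
    return flagged, deprioritized
```

Averaging probabilities is only one way to combine ensemble members. Majority voting or stacking would change which compounds cross the 0.7 threshold.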

Tier 2: Target-Centric Molecular Docking

Objective: Identify potential molecular initiating events (MIEs) for flagged compounds. Protocol:

- Target Selection: Prepare protein structures (PDB format) for key hepatotoxicity-related targets: BSEP (ABCB11), CYP450 isoforms (e.g., CYP3A4), and mitochondrial complex I.

- Ligand Preparation: Convert flagged compounds to 3D, optimize geometry, and assign charges.

- Docking Simulation: Perform molecular docking using AutoDock Vina or Glide. Use a grid box centered on the known binding site.

- Analysis: Evaluate the docking score (an estimate of binding affinity, ΔG, in kcal/mol) and pose consistency. Compounds scoring below -9.0 kcal/mol (i.e., stronger predicted binding) for adverse targets are advanced.
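The Tier 2 advancement rule can be written as a filter over docking output. The sketch rests on several assumptions:

- Docking has already been run in replicate for each target.
- Each run yields a score in kcal/mol and the coordinates of its top pose, with a consistent atom order.
- "Pose consistency" means every replicate's top pose lies within 2.0 Å RMSD of the best-scoring pose.
- A compound advances if at least one target is a hit.

The 2.0 Å cutoff and all function names are assumptions, not part of the protocol above.

```python
import math
from typing import Dict, List, Sequence, Tuple

Pose = Sequence[Tuple[float, float, float]]   # atom coordinates in Å, fixed atom order


def pose_rmsd(a: Pose, b: Pose) -> float:
    """RMSD between two poses of the same ligand docked into the same receptor.
    No superposition is applied: both poses already share the receptor frame."""
    if len(a) != len(b) or not a:
        raise ValueError("poses must be non-empty and have the same atom count")
    sq = sum((xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
             for (xa, ya, za), (xb, yb, zb) in zip(a, b))
    return math.sqrt(sq / len(a))


def tier2_hits(runs: Dict[str, List[Tuple[float, Pose]]],
               score_cutoff: float = -9.0, rmsd_cutoff: float = 2.0) -> List[str]:
    """Targets whose best replicate score is below score_cutoff and whose replicate
    top poses all lie within rmsd_cutoff of the best-scoring pose."""
    hits = []
    for target, replicates in runs.items():
        best_score, best_pose = min(replicates, key=lambda rep: rep[0])
        consistent = all(pose_rmsd(pose, best_pose) <= rmsd_cutoff
                         for _, pose in replicates)
        if best_score < score_cutoff and consistent:
            hits.append(target)
    return hits


def advance_to_tier3(runs: Dict[str, List[Tuple[float, Pose]]]) -> bool:
    """Assumed rule: advance if at least one adverse target is a hit."""
    return bool(tier2_hits(runs))
```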

Tier 3: Systems Biology & Pathway Analysis

Objective: Understand the downstream cellular consequences. Protocol:

- Gene Target Prediction: Use tools like SwissTargetPrediction to identify a broader set of potential protein targets for the compound.

- Pathway Enrichment: Map the predicted gene target set to the KEGG/Reactome pathways stored in CatTestHub and test each pathway for over-representation with a hypergeometric test. Identify significantly enriched pathways (FDR-adjusted p-value < 0.05).

- Network Construction: Generate a mechanistic network linking compound, primary targets, enriched pathways, and phenotypic outcomes.
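The enrichment step can be sketched with the standard library alone. Each pathway gets a one-sided hypergeometric test, followed by Benjamini-Hochberg adjustment. Benjamini-Hochberg is one common FDR procedure and is assumed here because the text does not name one. The gene universe (background set) and the pathway dictionary are hypothetical inputs. In practice, the choice of universe strongly affects the p-values.

```python
from math import comb
from typing import Dict, Set


def hypergeom_sf(k: int, N: int, K: int, n: int) -> float:
    """P(X >= k) for X ~ Hypergeometric(N universe genes, K pathway genes, n targets)."""
    top = min(K, n)
    favourable = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, top + 1))
    return favourable / comb(N, n)


def benjamini_hochberg(pvals: Dict[str, float]) -> Dict[str, float]:
    """Benjamini-Hochberg adjusted p-values (step-up, monotone)."""
    m = len(pvals)
    ranked = sorted(pvals.items(), key=lambda kv: kv[1])
    adjusted, running_min = {}, 1.0
    for rank in range(m, 0, -1):                  # walk from the largest p downwards
        key, p = ranked[rank - 1]
        running_min = min(running_min, p * m / rank)
        adjusted[key] = running_min
    return adjusted


def enrich(targets: Set[str], pathways: Dict[str, Set[str]], universe: Set[str],
           alpha: float = 0.05) -> Dict[str, float]:
    """Return pathways with FDR-adjusted p < alpha, mapped to their adjusted p."""
    N = len(universe)
    hits = targets & universe
    raw = {}
    for pathway_id, genes in pathways.items():
        members = genes & universe
        k = len(hits & members)
        raw[pathway_id] = hypergeom_sf(k, N, len(members), len(hits))
    adjusted = benjamini_hochberg(raw)
    return {pid: q for pid, q in adjusted.items() if q < alpha}
```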

Visualizing the Workflow and Pathways

Title: Three-Tier Virtual Screening Workflow for DILI

Title: Key Hepatotoxicity Pathways and Molecular Targets

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Reagent Solutions for Hepatotoxicity Assays

| Item | Function in Experimental Validation | Example Vendor/Product |
|---|---|---|
| HepaRG Cells | Differentiated human hepatoma cell line expressing major drug-metabolizing enzymes and transporters; used for in vitro hepatotoxicity testing. | Thermo Fisher Scientific |
| Primary Human Hepatocytes (PHHs) | Gold standard for in vitro liver models, maintaining native metabolic function and transporter expression. | Lonza, BioIVT |
| CYP450 Inhibition Assay Kit | Fluorescence- or luminescence-based kit to measure inhibition of specific Cytochrome P450 isoforms (CYP3A4, 2D6, etc.). | Promega (P450-Glo), Corning |
| BSEP Inhibition Assay | Membrane vesicle-based transport assay to quantify inhibition of the bile salt export pump, a key cholestasis target. | Solvo Biotechnology |
| CellTiter-Glo Viability Assay | Luminescent assay measuring ATP levels as an indicator of cell viability and mitochondrial function. | Promega |
| High-Content Screening (HCS) Kits | Multiparametric assays for imaging-based quantification of steatosis (lipid accumulation), ROS, or apoptosis. | Thermo Fisher (CellInsight) |
| Albumin & Urea Assay Kits | Colorimetric assays to measure hepatocyte-specific functional output (synthesis function). | Sigma-Aldrich, BioAssay Systems |
| Recombinant Human Protein Targets | Purified proteins (e.g., kinases, nuclear receptors) for in vitro binding or activity assays to confirm docking predictions. | R&D Systems, Sino Biological |

In the context of the CatTestHub database structure and design research, the generation of regulatory-ready reports presents a significant technical challenge. The ICH S1B (Testing for Carcinogenicity of Pharmaceuticals) and ICH S2(R1) (Guidance on Genotoxicity Testing and Data Interpretation for Pharmaceuticals Intended for Human Use) guidelines mandate specific, structured data outputs from carcinogenicity and genotoxicity studies. This whitepaper details a methodology for programmatically extracting, validating, and formatting this data from a structured toxicogenomics database to create compliance-ready submission documents.

Core Data Requirements: ICH S1B vs. ICH S2(R1)

A comparative analysis of the key data points required by each guideline is essential for designing an effective report-generation pipeline.

Table 1: Core Data Requirements for ICH S1B and S2(R1) Compliance

| Data Category | ICH S1B (Carcinogenicity) | ICH S2(R1) (Genotoxicity) Standard Battery |
|---|---|---|
| Primary Study Objective | Identify tumorigenic potential, dose-response, and human relevance. | Detect substances that may cause genetic damage via gene mutation or chromosomal damage. |
| Key Data Points | Individual animal tumor data (onset, type, multiplicity, location); survival curves; body weight/food consumption; dose justification. | Test system (bacteria, cells, species); metabolic activation condition (±S9); dose levels; positive/negative control data; metrics (e.g., revertant colonies, % cells with MN). |
| Statistical Analysis | Trend tests (e.g., Peto test), pairwise comparisons for tumor incidence; survival analysis (e.g., Kaplan-Meier). | Appropriate statistical tests for mutation frequency (e.g., Dunnett's), micronucleus frequency (e.g., Chi-square). |
| Negative/Positive Control Ranges | Historical control data for tumor incidence in rodent strains. | Laboratory-specific historical control ranges for each assay system. |
| Conclusion Criteria | Weight-of-evidence: statistical significance, tumor malignancy, rarity, dose-response, progression from pre-neoplastic lesions. | Positive result: a reproducible, statistically significant increase in genetic damage. Negative result: adequate study design with appropriate positive control response. |
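A dose-related trend in tumor incidence can be illustrated with the Cochran-Armitage test. This is a deliberately simpler stand-in for the Peto test named in the table. It ignores survival and the fatal/incidental context of each tumor, so it does not replace the survival-adjusted analysis. Equally spaced dose scores are an assumption, and actual dose values can be passed instead. The function name is hypothetical.

```python
import math
from typing import Optional, Sequence, Tuple


def cochran_armitage_trend(n: Sequence[int], r: Sequence[int],
                           scores: Optional[Sequence[float]] = None) -> Tuple[float, float]:
    """Unadjusted Cochran-Armitage test for an increasing trend in incidence.
    n[i]: animals examined in group i; r[i]: animals bearing the tumor type.
    Returns (Z statistic, one-sided p-value for an increasing trend)."""
    if scores is None:
        scores = list(range(len(n)))          # assumed equally spaced scores 0, 1, 2, ...
    N, R = sum(n), sum(r)
    p_bar = R / N                             # pooled incidence under the null
    t = sum(d * (ri - ni * p_bar) for d, ni, ri in zip(scores, n, r))
    s1 = sum(ni * d for ni, d in zip(n, scores))
    s2 = sum(ni * d * d for ni, d in zip(n, scores))
    var = p_bar * (1 - p_bar) * (s2 - s1 * s1 / N)
    if var <= 0:
        raise ValueError("trend test undefined: no variation in incidence or scores")
    z = t / math.sqrt(var)
    p_one_sided = 0.5 * math.erfc(z / math.sqrt(2))   # upper-tail normal probability
    return z, p_one_sided
```

With 50 animals per group and incidences of 1, 2, 5, and 10 across control to high dose, Z is about 3.3 (one-sided p well below 0.01). Identical incidence in every group gives Z = 0 and p = 0.5.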

Experimental Protocols & Data Extraction Workflow

The CatTestHub database is designed to store raw and normalized data from standard assays. The following protocols outline the primary studies whose data must be extracted.

Protocol 3.1: In Vivo Rodent Carcinogenicity Study (ICH S1B)

Objective: To evaluate the carcinogenic potential of a test compound in rodents over a major portion of their lifespan.

- Animals & Grouping: Sprague-Dawley rats or CD-1 mice are assigned to control, low-, mid-, and high-dose groups (typically 50-60 animals/sex/group). Dose selection is based on a prior 90-day study.

- Dosing & Duration: The test article is administered daily (via oral gavage, diet, or drinking water) for 24 months (rats) or 18 months (mice).

- Clinical Observations: Animals are monitored daily for mortality/moribundity. Detailed clinical observations and body weight/food consumption measurements are recorded weekly.

- Pathology: All animals undergo a complete necropsy. All organs and tissues are preserved, and a standard list of tissues from all control and high-dose animals is examined histopathologically. Any tissue with a suspected lesion from lower-dose groups is also examined.

- Data to Extract: Animal ID, dose group, survival time, terminal body and organ weights, detailed histopathology findings (coded using INHAND terminology), and tumor onset data.
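The extracted survival time and manner of death are enough to compute the Kaplan-Meier survival curves listed for ICH S1B in Table 1. The `AnimalRecord` fields and function names are hypothetical. The sketch treats terminal sacrifice as censoring. At tied times it follows the usual convention of counting deaths before removing censored animals from the risk set.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class AnimalRecord:
    """Hypothetical extraction record; field names are illustrative."""
    animal_id: str
    dose_group: str
    survival_days: int
    died: bool   # True = found dead or moribund; False = terminal sacrifice (censored)


def kaplan_meier(records: List[AnimalRecord]) -> List[Tuple[int, float]]:
    """Kaplan-Meier estimate as (day, S(day)) pairs at each day with a death."""
    deaths: Dict[int, int] = defaultdict(int)
    exits: Dict[int, int] = defaultdict(int)   # deaths plus censorings leaving the risk set
    for rec in records:
        exits[rec.survival_days] += 1
        if rec.died:
            deaths[rec.survival_days] += 1
    at_risk, surv, curve = len(records), 1.0, []
    for day in sorted(exits):
        if deaths[day]:
            surv *= 1 - deaths[day] / at_risk
            curve.append((day, surv))
        at_risk -= exits[day]
    return curve


def km_by_group(records: List[AnimalRecord]) -> Dict[str, List[Tuple[int, float]]]:
    """One Kaplan-Meier curve per dose group."""
    groups: Dict[str, List[AnimalRecord]] = defaultdict(list)
    for rec in records:
        groups[rec.dose_group].append(rec)
    return {group: kaplan_meier(recs) for group, recs in groups.items()}
```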

Protocol 3.2: Ames Test (Bacterial Reverse Mutation Assay) – ICH S2(R1)

Objective: To detect point mutations induced by test compounds in bacterial strains.

- Test System: Salmonella typhimurium strains (TA98, TA100, TA1535, TA1537) and Escherichia coli WP2 uvrA.

- Metabolic Activation: Tests are performed with and without a mammalian liver S9 homogenate fraction.

- Procedure (Plate Incorporation Method): The test compound (at multiple dose levels, up to toxicity or 5000 µg/plate), bacterial culture, and S9 mix (or buffer) are mixed with soft agar and poured onto minimal glucose agar plates. Each dose is tested in triplicate.

- Incubation & Analysis: Plates are incubated at 37°C for 48-72 hours. Revertant colonies are counted manually or automatically.

- Data to Extract: Strain, S9 condition, dose (µg/plate), mean revertant count per plate, standard deviation, positive control response, and evidence of precipitation or toxicity.
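Once plate counts are extracted, the per-dose summaries for the report can be derived as follows. The fold-increase thresholds are a widely used rule of thumb assumed here, not a criterion stated in the protocol above: 2-fold for TA98, TA100, and WP2 uvrA, and 3-fold for TA1535 and TA1537. A final call would also weigh dose-response, reproducibility, and historical control ranges. The `AmesDose` record and `summarize` function are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean, stdev
from typing import List

# Assumed rule-of-thumb fold-increase thresholds over the concurrent vehicle control.
FOLD_THRESHOLD = {"TA98": 2.0, "TA100": 2.0, "WP2 uvrA": 2.0, "TA1535": 3.0, "TA1537": 3.0}


@dataclass
class AmesDose:
    """Hypothetical extraction record for one strain / S9 / dose combination."""
    strain: str
    s9: bool                  # True = +S9, False = -S9
    dose_ug_plate: float
    plate_counts: List[int]   # revertant colonies on each replicate plate


def summarize(dose: AmesDose, vehicle: AmesDose) -> dict:
    """Mean, SD and fold increase of one dose relative to its matched vehicle control."""
    if (dose.strain, dose.s9) != (vehicle.strain, vehicle.s9):
        raise ValueError("dose and vehicle control must share strain and S9 condition")
    m = mean(dose.plate_counts)
    fold = m / mean(vehicle.plate_counts)
    return {
        "strain": dose.strain,
        "s9": "+S9" if dose.s9 else "-S9",
        "dose_ug_plate": dose.dose_ug_plate,
        "mean": m,
        "sd": stdev(dose.plate_counts),
        "fold": fold,
        "meets_fold_criterion": fold >= FOLD_THRESHOLD[dose.strain],
    }
```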

Protocol 3.3: In Vitro Mammalian Cell Micronucleus Test – ICH S2(R1)

Objective: To detect chromosomal damage (clastogenicity and aneugenicity) by scoring micronuclei in dividing cells.

- Test System: Human peripheral blood lymphocytes or established cell lines (e.g., CHO-K1, V79, L5178Y).

- Treatment: Cells are exposed to the test compound across a range of concentrations (guided by a cytotoxicity assay) for a short period (3-6 hours) with and without S9, followed by a recovery period. Alternatively, a continuous treatment (~1.5 normal cell cycles) without S9 is used.

- Cytokinesis Block: Cytochalasin B is added to block cytokinesis, so that cells completing nuclear division after treatment become binucleated. Only binucleated cells (BNCs) are scored.

- Slide Preparation & Scoring: Cells are harvested, placed on slides, stained (e.g., Giemsa or fluorescent DNA stains), and analyzed. The number of micronucleated BNCs among 1000-2000 BNCs scored per culture is recorded.

- Data to Extract: Dose, S9 condition, cytotoxicity (% cytostasis or relative population doubling), number of BNCs scored, micronucleated BNC frequency (%), positive/negative control values.
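The cytotoxicity and scoring endpoints can be computed directly from cell counts. This sketch takes the cytokinesis-block proliferation index (CBPI) route to % cytostasis described in OECD Test Guideline 487. It does not show the relative population doubling route used when cytochalasin B is omitted. The function names are hypothetical.

```python
def cbpi(mono: int, bi: int, multi: int) -> float:
    """Cytokinesis-block proliferation index: (mono + 2*bi + 3*multi) / total cells."""
    total = mono + bi + multi
    return (mono + 2 * bi + 3 * multi) / total


def percent_cytostasis(cbpi_treated: float, cbpi_control: float) -> float:
    """% cytostasis = 100 - 100 * (CBPI_T - 1) / (CBPI_C - 1)."""
    if cbpi_control <= 1:
        raise ValueError("control CBPI must exceed 1 (control cells must have divided)")
    return 100 - 100 * (cbpi_treated - 1) / (cbpi_control - 1)


def mn_frequency(micronucleated_bnc: int, bnc_scored: int) -> float:
    """Percentage of scored binucleated cells carrying one or more micronuclei."""
    return 100 * micronucleated_bnc / bnc_scored
```

For example, a control culture with 500 mono-, 400 bi-, and 100 multinucleated cells has a CBPI of 1.6. A treated culture with 700/250/50 has a CBPI of 1.35, which corresponds to about 41.7% cytostasis.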

The Data Extraction and Reporting Engine: A CatTestHub Design Perspective

The report generation is modeled as a multi-step workflow, which can be logically represented as follows:

Diagram Title: Workflow for Automated Regulatory Report Generation
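As a rough sketch, the extract, validate, and format steps introduced at the start of this section could be chained as follows for Ames data. The following are all assumptions chosen for illustration, not the CatTestHub implementation:

- the row layout;
- the validation rules (triplicate plates, a recognized S9 label, a positive control above the concurrent vehicle);
- the pipe-table output.

```python
from typing import Dict, List, Tuple

# Hypothetical extracted row: (strain, s9, dose_ug_plate, plate_counts, role),
# where role is "vehicle", "test" or "positive".
Row = Tuple[str, str, float, List[int], str]


def validate(rows: List[Row]) -> List[str]:
    """Return a list of problems; an empty list means the rows pass these checks."""
    problems = []
    means: Dict[Tuple[str, str, str], float] = {}
    for strain, s9, dose, counts, role in rows:
        if s9 not in ("+S9", "-S9"):
            problems.append(f"{strain} {dose}: unknown S9 condition {s9!r}")
        if len(counts) != 3:
            problems.append(f"{strain} {s9} {dose}: expected triplicate plates, got {len(counts)}")
        if role in ("vehicle", "positive") and counts:
            means[(strain, s9, role)] = sum(counts) / len(counts)
    for (strain, s9, role), m in means.items():
        if role == "positive":
            vehicle = means.get((strain, s9, "vehicle"))
            if vehicle is None or m <= vehicle:
                problems.append(f"{strain} {s9}: positive control not above vehicle")
    return problems


def format_table(rows: List[Row]) -> str:
    """Render validated rows as a simple pipe table."""
    lines = ["| Strain | S9 | Dose (µg/plate) | Mean revertants | Role |",
             "|---|---|---|---|---|"]
    for strain, s9, dose, counts, role in rows:
        lines.append(f"| {strain} | {s9} | {dose:g} | {sum(counts) / len(counts):.1f} | {role} |")
    return "\n".join(lines)


def build_report(rows: List[Row]) -> str:
    """Validate first; refuse to format data that fails any check."""
    problems = validate(rows)
    if problems:
        raise ValueError("validation failed:\n" + "\n".join(problems))
    return format_table(rows)
```

Failing loudly at the validation step, rather than formatting whatever was extracted, keeps incomplete studies out of the generated submission tables.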

Key Signaling Pathways in Genotoxicity Assessment

Understanding the cellular pathways triggered by genotoxicants is critical for data interpretation. The primary DNA damage response pathways are illustrated below.

Diagram Title: Core DNA Damage Response Signaling Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Materials for ICH-Compliant Genotoxicity Studies

| Reagent / Material | Function in Assay | Key Considerations for Data Reporting |
|---|---|---|